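A caveat up front: multithreading in Python (CPython) is "fake" multithreading. Because of the Global Interpreter Lock (GIL), only one thread executes Python bytecode at a time, so threads do not help CPU-bound programs and can even slow them down. For I/O-bound programs, though, threads still help, because the GIL is released while a thread waits on I/O.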
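
To make the I/O-bound case concrete, here is a minimal sketch that uses `time.sleep` as a stand-in for a network request. This is an assumption for illustration, and the names `fake_io`, `run_sequential`, and `run_threaded` are hypothetical. Sleeping releases the GIL much as waiting on a socket does, so the threaded version should finish in roughly the time of one call rather than the sum of all calls.

```python
import threading
import time


def fake_io(delay):
    """Stand-in for a network request: sleeping releases the GIL like blocking I/O."""
    time.sleep(delay)


def run_sequential(n, delay):
    """Run n simulated requests one after another; return elapsed seconds."""
    start = time.perf_counter()
    for _ in range(n):
        fake_io(delay)
    return time.perf_counter() - start


def run_threaded(n, delay):
    """Run n simulated requests in n threads; return elapsed seconds."""
    threads = [threading.Thread(target=fake_io, args=(delay,)) for _ in range(n)]
    start = time.perf_counter()
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return time.perf_counter() - start


if __name__ == "__main__":
    print("sequential: %.2fs" % run_sequential(5, 0.2))
    print("threaded:   %.2fs" % run_threaded(5, 0.2))
```

Replacing `fake_io` with a pure-Python computation loop would show no such speedup on a standard GIL-enabled CPython build, which is the CPU-bound case described above.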

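For the characteristics and inner workings of Python multithreading, see these posts:

① Why is multiprocessing recommended over multithreading in Python? https://bbs.51cto.com/thread-1349105-1.html

② Why do some people say Python's multithreading is of little use? https://www.zhihu.com/question/23474039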

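Below is a multithreaded Python crawler that downloads Ethereum smart contract source code. It performs noticeably better than the plain crawler that does not use threads.

The plain crawler without multithreading: https://www.cnblogs.com/blockchainchain/p/11915130.html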

    # -*- coding: utf8 -*-
    import os
    import queue
    import threading
    import time

    import requests
    from bs4 import BeautifulSoup

    # Pretend to be a browser so the server does not reject the request.
    HEADERS = {
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 '
                      '(KHTML, like Gecko) Chrome/78.0.3904.87 Safari/537.36'}

    # Shared work queue of contract URLs. It is unbounded so that filling it
    # before the worker threads start can never block.
    que = queue.Queue()


    def printtime():
        print(time.strftime("%Y-%m-%d %H:%M:%S:", time.localtime()), end=' ')
        return 0


    def printthread(ThreadID):
        print("Thread " + ThreadID, end=' ')
        return 0


    def getsccodecore(ThreadID):
        while True:
            # get_nowait() avoids blocking forever if another thread takes the
            # last item between an empty() check and get().
            try:
                eachLine = que.get_nowait()
            except queue.Empty:
                break
            # Characters 29..70 of the URL are the 42-character contract address.
            filename = eachLine[29:71]
            # Change this to the directory where you want the code saved.
            filepath = "C:\\Users\\15321\\Desktop\\SmartContract\\code\\"
            if os.path.exists(filepath + filename + '.sol'):
                printthread(ThreadID)
                printtime()
                print(filename + ' already exists!')
                continue
            failedTimes = 100
            response = None
            while True:  # keep retrying until success or the retry budget runs out
                if failedTimes <= 0:
                    printthread(ThreadID)
                    printtime()
                    print("Too many failures, please check your network!")
                    break
                failedTimes -= 1
                try:
                    # The except clauses below catch request errors and wait for
                    # the network to recover, so the program keeps running.
                    printthread(ThreadID)
                    printtime()
                    print('Connecting to ' + eachLine)
                    response = requests.get(eachLine, headers=HEADERS, timeout=5)
                    break
                except requests.exceptions.ConnectionError:
                    printthread(ThreadID)
                    printtime()
                    print('ConnectionError! Waiting 3 seconds.')
                    time.sleep(3)
                except requests.exceptions.ChunkedEncodingError:
                    printthread(ThreadID)
                    printtime()
                    print('ChunkedEncodingError! Waiting 3 seconds.')
                    time.sleep(3)
                except Exception:
                    printthread(ThreadID)
                    printtime()
                    print('Unfortunately, an unknown error occurred! Waiting 3 seconds.')
                    time.sleep(3)
            if response is None:
                # Every attempt failed, so skip this URL instead of crashing.
                continue
            response.encoding = response.apparent_encoding
            soup = BeautifulSoup(response.text, "html.parser")
            targetPRE = soup.find_all('pre', 'js-sourcecopyarea editor')
            if not targetPRE:
                printthread(ThreadID)
                printtime()
                print(filename + ' has no source code block, skipping!')
                continue
            with open(filepath + filename + '.sol', "w", encoding="utf-8") as fo:
                fo.write(targetPRE[0].text)
            printthread(ThreadID)
            printtime()
            print(filename + ' created!')
        return 0


    def getsccode(threadNum):
        try:
            SCAddress = open("C:\\Users\\15321\\Desktop\\SmartContract\\address\\address.txt", "r")
        except OSError:
            printtime()
            print('Failed to open the smart contract URL list! Check that the path is correct.')
            return 1
        with SCAddress:
            for eachLine in SCAddress:
                eachLine = eachLine.strip()  # drop the trailing newline
                if eachLine:
                    que.put(eachLine)
        threads = []
        # Create the requested number of threads.
        for i in range(threadNum):
            t = threading.Thread(target=getsccodecore, args=(str(i),))
            threads.append(t)
        # Start all the threads, then wait for every one of them to finish.
        for t in threads:
            t.start()
        for t in threads:
            t.join()
        printtime()
        print("All threads have finished!")
        return 0


    def getSCAddress(eachurl, filepath):
        # Maximum number of failed attempts before giving up on this page.
        failedTimes = 50
        response = None
        while True:  # keep retrying until success or the retry budget runs out
            if failedTimes <= 0:
                printtime()
                print("Too many failures, please check your network!")
                break
            failedTimes -= 1  # one attempt used
            try:
                # The except clauses below catch request errors and wait for
                # the network to recover, so the program keeps running.
                print('Connecting to ' + eachurl)
                response = requests.get(url=eachurl, headers=HEADERS, timeout=5)
                # Reaching this line means the request succeeded, so leave the loop.
                break
            except requests.exceptions.ConnectionError:
                printtime()
                print('ConnectionError! Waiting 3 seconds.')
                time.sleep(3)
            except requests.exceptions.ChunkedEncodingError:
                printtime()
                print('ChunkedEncodingError! Waiting 3 seconds.')
                time.sleep(3)
            except Exception:
                printtime()
                print('An unknown error occurred! Waiting 3 seconds.')
                time.sleep(3)
        if response is None:
            return 1
        # Decode the body using the encoding detected from its content.
        response.encoding = response.apparent_encoding
        soup = BeautifulSoup(response.text, "html.parser")
        # This div contains the table of links to the verified contracts.
        targetDiv = soup.find_all('div', 'table-responsive mb-2 mb-md-0')
        try:
            targetTBody = targetDiv[0].table.tbody
        except Exception:
            printtime()
            print("Failed to get targetTBody!")
            return 1
        # Open in append mode: the file is created if missing, and new
        # addresses are added at the end if it already exists.
        with open(filepath + "address.txt", "a") as fo:
            # Save every contract URL to the file.
            for targetTR in targetTBody:
                if targetTR.name == 'tr':
                    fo.write("https://etherscan.io"
                             + targetTR.td.find('a', 'hash-tag text-truncate').attrs['href']
                             + "\n")
        return 0


    def updatescurl():
        urlList = ["https://etherscan.io/contractsVerified/1?ps=100",
                   "https://etherscan.io/contractsVerified/2?ps=100",
                   "https://etherscan.io/contractsVerified/3?ps=100",
                   "https://etherscan.io/contractsVerified/4?ps=100",
                   "https://etherscan.io/contractsVerified/5?ps=100"]
        # filepath is the directory holding the file of contract URLs to crawl.
        # Change it to whatever path suits you.
        filepath = 'C:\\Users\\15321\\Desktop\\SmartContract\\address\\'
        # Remove the old address file.
        try:
            if os.path.exists(filepath + "address.txt"):
                os.remove(filepath + "address.txt")
                printtime()
                print('Removed the old address file from %s!' % filepath)
        except OSError:
            printtime()
            print("An unrecoverable error occurred, aborting: IOError!")
            # The function did not finish normally, so return 1.
            return 1
        # Read the contract addresses from every page in urlList,
        # retrying each page at most 10 times.
        for eachurl in urlList:
            retries = 0
            while 1 == getSCAddress(eachurl, filepath):
                retries += 1
                if retries == 10:
                    break
        # The function finished normally, so return 0.
        return 0


    def main(threadNum):
        # Refresh the list of smart contract URLs to crawl.
        updatescurl()
        # Download the source code of each contract in the list.
        getsccode(threadNum)


    if __name__ == '__main__':
        threadNum = 100
        main(threadNum)
        input()  # keep the console window open until Enter is pressed

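Stripped of the networking, the crawler follows a simple pattern: fill a `queue.Queue` with work items, start a fixed number of worker threads that drain it, then `join` them. The sketch below isolates that pattern. `process` is a hypothetical stand-in for downloading one contract, and `run_workers` is an illustrative name, not part of the script. It uses `get_nowait()` and catches `queue.Empty`, so a worker never blocks on a queue that another worker has just emptied.

```python
import queue
import threading


def process(item):
    """Hypothetical stand-in for fetching and saving one contract."""
    return item * 2


def run_workers(items, thread_num):
    """Process every item exactly once using thread_num worker threads."""
    que = queue.Queue()
    for item in items:
        que.put(item)

    results = []
    lock = threading.Lock()  # protects the shared results list

    def worker():
        while True:
            try:
                item = que.get_nowait()
            except queue.Empty:
                return  # nothing left to do
            value = process(item)
            with lock:
                results.append(value)

    threads = [threading.Thread(target=worker) for _ in range(thread_num)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results


if __name__ == "__main__":
    print(sorted(run_workers(range(10), thread_num=4)))
```
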
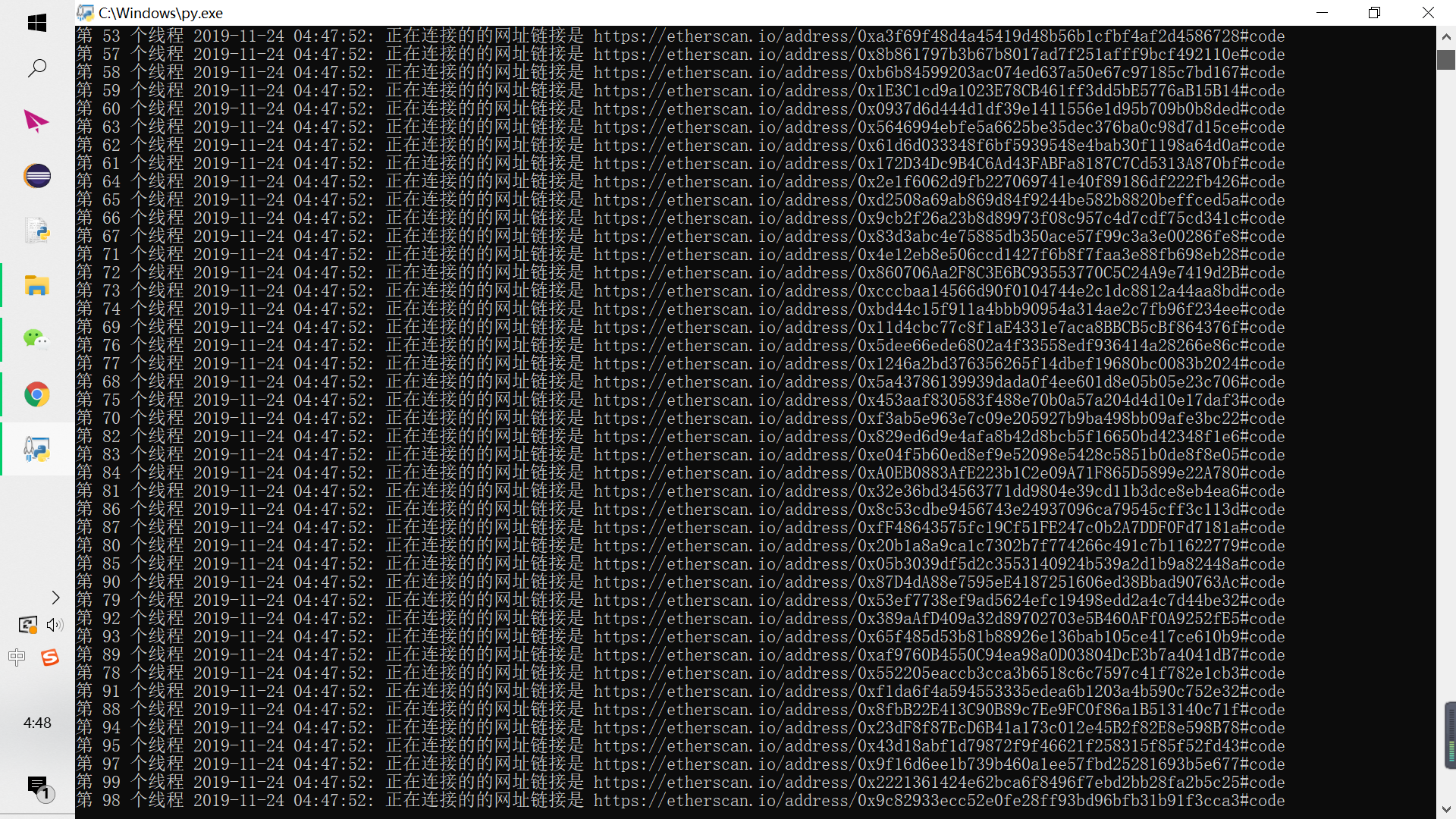

下面是开启100个线程运行的截图:

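In testing, with 100 threads this multithreaded crawler typically downloads 20 to 70 Solidity source files per minute, while the crawler without multithreading typically manages only 10 to 15 per minute, so the performance gain is quite noticeable.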
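
To reproduce this kind of comparison on your own machine, one option is to time the same batch of work at different thread counts and convert the result to items per minute. The sketch below does that with `concurrent.futures.ThreadPoolExecutor` from the standard library, which is a swapped-in convenience rather than what the script uses. `fake_fetch` is a hypothetical function that simulates a request with `time.sleep`, and its delay is an arbitrary assumption, so the numbers it prints say nothing about etherscan.io.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fake_fetch(url, delay=0.05):
    """Hypothetical stand-in for one HTTP request."""
    time.sleep(delay)
    return url


def items_per_minute(urls, thread_num, fetch=fake_fetch):
    """Fetch all urls with thread_num threads; return throughput per minute."""
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=thread_num) as pool:
        done = list(pool.map(fetch, urls))
    elapsed = time.perf_counter() - start
    return len(done) * 60.0 / elapsed


if __name__ == "__main__":
    # Placeholder URLs; fake_fetch never contacts them.
    urls = ["https://example.invalid/%d" % i for i in range(20)]
    for n in (1, 5, 20):
        print("%3d threads: %.0f items/min" % (n, items_per_minute(urls, n)))
```

Passing a real download function as `fetch` would measure the actual crawl, where the result also depends on network conditions and on how the server treats many simultaneous requests.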