
Outline

\(y\in{R^d}\)

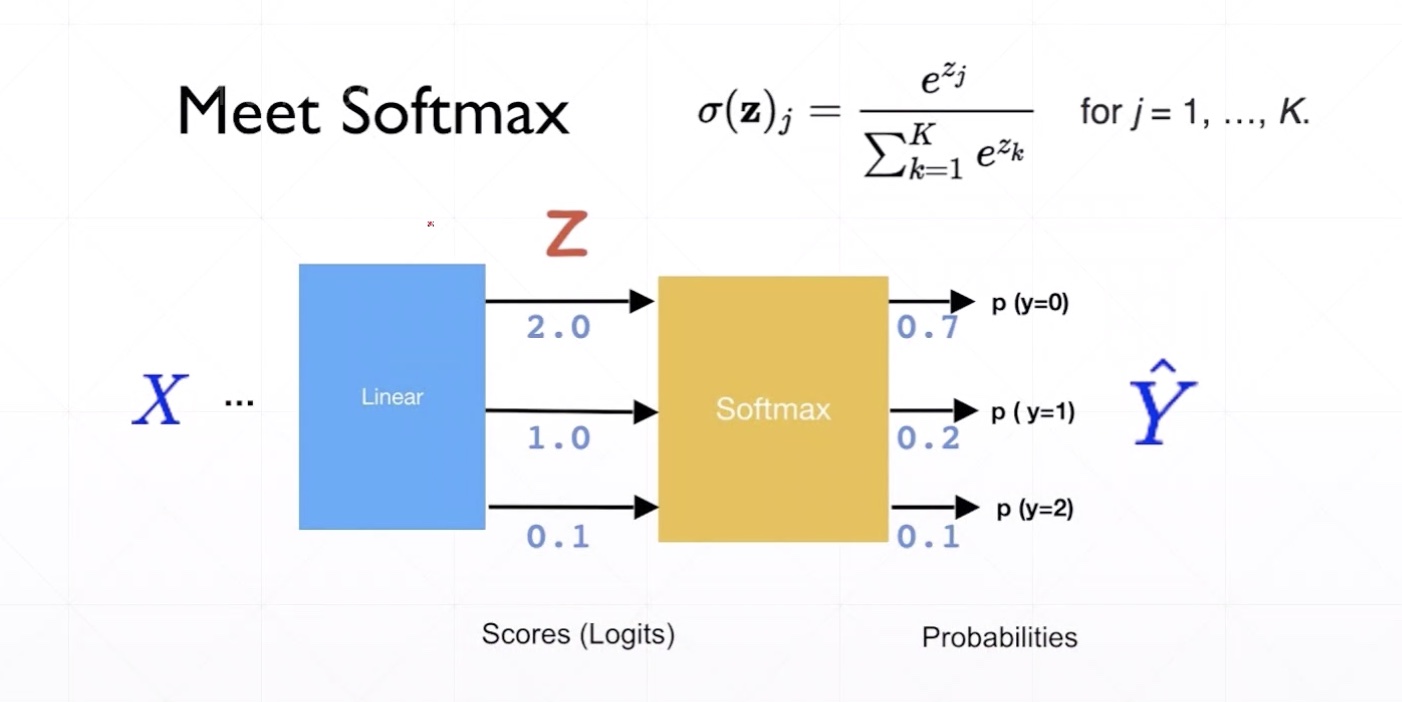

- In multi-class classification, each output is typically a probability

\(y_i\in{[0,1]},\,i=0,1,\cdots,y_d-1\)

- Multi-class classification usually also requires the class probabilities to sum to 1

\(y_i\in{[0,1]},\,\sum_{i=0}^{y_d-1}y_i=1,\,i=0,1,\cdots,y_d-1\)

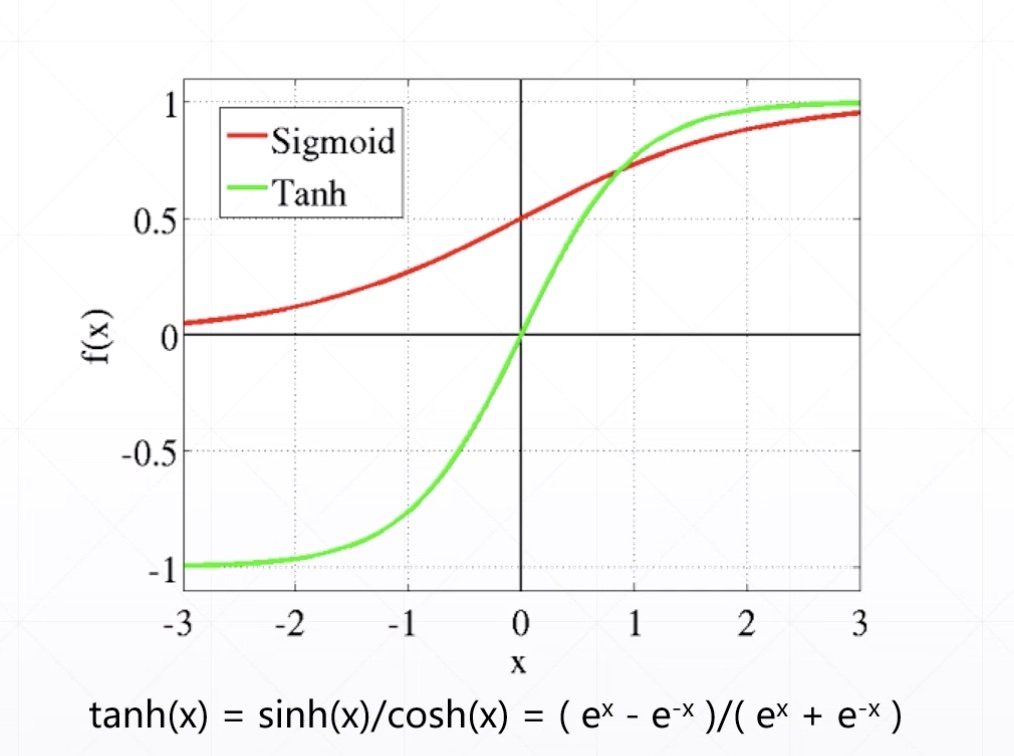

\(y_i\in{[-1,1]},\,i=0,1,\cdots,y_d-1\)

\(y\in{R^d}\)

- linear regression

- naive classification with MSE

- other general prediction

- out = relu(X@W + b)

- logits
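To make the \(y\in{R^d}\) case concrete, here is a minimal plain-Python sketch of out = relu(X@W + b) for a single sample. It is written without TensorFlow so it runs anywhere; the helper names `dense` and `relu` and all the numbers are hypothetical. It shows that the ReLU output is only bounded below by 0, so it is a raw score rather than a probability.

```python
# Plain-Python sketch of out = relu(X@W + b) for one input sample.
# All numbers below are made-up illustrative values.

def relu(v):
    """Element-wise ReLU: replaces every negative entry with 0."""
    return [max(0.0, t) for t in v]

def dense(x, W, b):
    """Return x @ W + b, with x of length n, W as n rows of d columns, b of length d."""
    n, d = len(x), len(b)
    return [sum(x[k] * W[k][j] for k in range(n)) + b[j] for j in range(d)]

x = [1.0, -2.0, 3.0]                        # hypothetical input (n = 3)
W = [[0.5, -1.0], [1.0, 0.0], [2.0, 0.0]]   # hypothetical weights (3 x 2)
b = [0.1, -0.2]                             # hypothetical bias (d = 2)

z = dense(x, W, b)  # raw linear output, can be any real value
out = relu(z)       # non-negative, but not bounded above
print(z)    # approximately [4.6, -1.2]
print(out)  # approximately [4.6, 0.0]
```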

\(y_i\in{[0,1]}\)

- binary classification

- y > 0.5 → 1

- y < 0.5 → 0

- Image Generation

- rgb

out = relu(X@W + b)

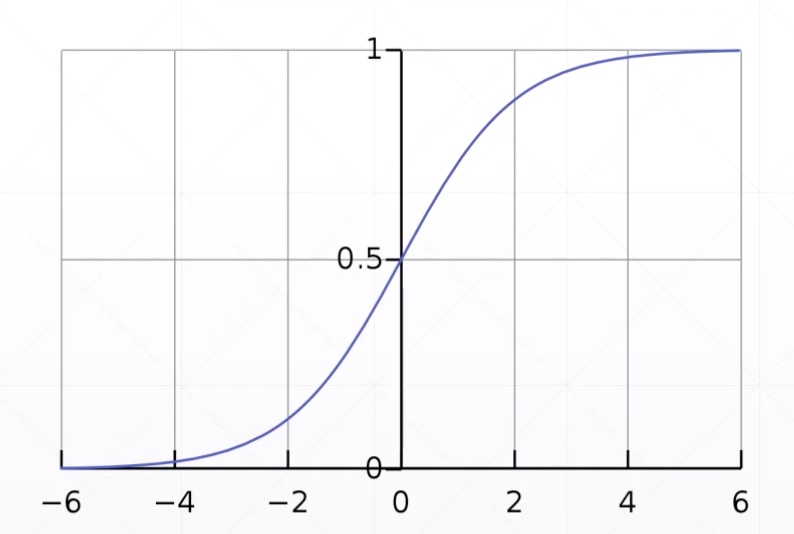

sigmoid

\[ f(x) = \frac{1}{1+e^{-x}} \]

- out' = sigmoid(out) # squashes the output into the range (0, 1)
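The sigmoid formula and the 0.5 threshold used for binary classification can be sketched in plain Python as follows. The helper names are hypothetical, and mapping an output of exactly 0.5 to class 0 is an assumption, since the notes only cover y > 0.5 and y < 0.5. The two-branch form computes the same function as \(f(x)=\frac{1}{1+e^{-x}}\) but avoids overflow in `math.exp` for very negative inputs.

```python
import math

def sigmoid(x):
    """f(x) = 1 / (1 + e^(-x)), squashing any real x into (0, 1)."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    # Algebraically equal form for x < 0; exp(x) cannot overflow here.
    e = math.exp(x)
    return e / (1.0 + e)

def to_label(p):
    """Binary decision: 1 if p > 0.5, else 0 (p == 0.5 -> 0 is an assumption)."""
    return 1 if p > 0.5 else 0

outs = [-6.0, -2.0, 0.0, 2.0, 6.0]     # hypothetical raw outputs
probs = [sigmoid(v) for v in outs]     # each strictly between 0 and 1
labels = [to_label(p) for p in probs]
print(probs)   # roughly [0.0025, 0.1192, 0.5, 0.8808, 0.9975]
print(labels)  # [0, 0, 0, 1, 1]
```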

```python
>>> import tensorflow as tf
>>> a = tf.linspace(-6., 6, 10)
>>> a
<tf.Tensor: id=9, shape=(10,), dtype=float32, numpy=
array([-6.       , -4.6666665, -3.3333333, -2.       , -0.6666665,
        0.666667 ,  2.       ,  3.333334 ,  4.666667 ,  6.       ],
      dtype=float32)>
>>> tf.sigmoid(a)
<tf.Tensor: id=21, shape=(10,), dtype=float32, numpy=
array([0.00247264, 0.00931591, 0.03444517, 0.11920291, 0.33924365,
       0.6607564 , 0.8807971 , 0.96555483, 0.99068403, 0.9975274 ],
      dtype=float32)>
>>> x = tf.random.normal([1, 28, 28]) * 5
>>> tf.reduce_min(x), tf.reduce_max(x)
(<tf.Tensor: id=49, shape=(), dtype=float32, numpy=-16.714912>,
 <tf.Tensor: id=51, shape=(), dtype=float32, numpy=16.983088>)
>>> x = tf.sigmoid(x)
>>> tf.reduce_min(x), tf.reduce_max(x)
(<tf.Tensor: id=56, shape=(), dtype=float32, numpy=8.940697e-08>,
 <tf.Tensor: id=58, shape=(), dtype=float32, numpy=1.0>)
```

\(y_i\in{[0,1]},\,\sum_{i=0}^{y_d-1}y_i=1\)

```python
>>> a = tf.linspace(-2., 2, 5)
>>> tf.sigmoid(a)  # the outputs do not sum to 1
<tf.Tensor: id=73, shape=(5,), dtype=float32, numpy=
array([0.11920292, 0.26894143, 0.5       , 0.7310586 , 0.880797  ],
      dtype=float32)>
```

- softmax

```python
>>> tf.nn.softmax(a)  # the outputs sum to 1
<tf.Tensor: id=67, shape=(5,), dtype=float32, numpy=
array([0.01165623, 0.03168492, 0.08612854, 0.23412165, 0.6364086 ],
      dtype=float32)>
```
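Softmax is defined as \(\mathrm{softmax}(z)_i=\frac{e^{z_i}}{\sum_j e^{z_j}}\), so every output is positive and the outputs sum to 1 by construction. The plain-Python sketch below (not TensorFlow's implementation; helper names are hypothetical) contrasts it with element-wise sigmoid on the same five values as `a`. Subtracting \(\max(z)\) before exponentiating is a common stability trick: the factor \(e^{-\max(z)}\) cancels between numerator and denominator, so the result is unchanged.

```python
import math

def softmax(z):
    """softmax(z)_i = exp(z_i) / sum_j exp(z_j), computed stably."""
    m = max(z)                          # shift so the largest exponent is 0
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)
    return [e / total for e in exps]

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

a = [-2.0, -1.0, 0.0, 1.0, 2.0]   # same values as tf.linspace(-2., 2, 5)
sig = [sigmoid(v) for v in a]
prob = softmax(a)

print(sum(sig))   # about 2.5: sigmoid outputs need not sum to 1
print(sum(prob))  # 1.0 up to rounding
print(prob)       # close to the tf.nn.softmax(a) output above
```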

```python
>>> logits = tf.random.uniform([1, 10], minval=-2, maxval=2)
>>> logits
<tf.Tensor: id=81, shape=(1, 10), dtype=float32, numpy=
array([[ 1.988893  , -0.0625844 , -0.77338314, -1.1655569 , -1.8847818 ,
         1.3335037 ,  1.8299117 ,  0.8497076 , -0.15004253, -0.6530676 ]],
      dtype=float32)>
>>> prob = tf.nn.softmax(logits, axis=1)
>>> prob
<tf.Tensor: id=87, shape=(1, 10), dtype=float32, numpy=
array([[0.31882977, 0.04098393, 0.02013342, 0.01360187, 0.00662587,
        0.16554914, 0.2719657 , 0.10205092, 0.03755182, 0.02270753]],
      dtype=float32)>
>>> tf.reduce_sum(prob, axis=1)
<tf.Tensor: id=85, shape=(1,), dtype=float32, numpy=array([1.], dtype=float32)>
```

\(y_i\in{[-1,1]}\)

```python
>>> a
<tf.Tensor: id=72, shape=(5,), dtype=float32, numpy=array([-2., -1.,  0.,  1.,  2.], dtype=float32)>
>>> tf.tanh(a)
<tf.Tensor: id=90, shape=(5,), dtype=float32, numpy=
array([-0.9640276, -0.7615942,  0.       ,  0.7615942,  0.9640276],
      dtype=float32)>
```
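tanh is closely related to sigmoid: \(\tanh(x)=\frac{e^{x}-e^{-x}}{e^{x}+e^{-x}}=2\,\sigma(2x)-1\), i.e. a sigmoid stretched from (0, 1) to (-1, 1). The plain-Python sketch below checks this identity against `math.tanh` on the same values as `a`; the helper `tanh_via_sigmoid` is a hypothetical name used only for illustration.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def tanh_via_sigmoid(x):
    """tanh(x) = 2 * sigmoid(2x) - 1: rescales (0, 1) to (-1, 1)."""
    return 2.0 * sigmoid(2.0 * x) - 1.0

a = [-2.0, -1.0, 0.0, 1.0, 2.0]
for v in a:
    # Both columns should agree up to floating-point rounding.
    print(v, math.tanh(v), tanh_via_sigmoid(v))
```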