Hadoop Serialization Types and Java Data Types

| Java data type | Hadoop serialization type |
|---|---|
| int | IntWritable |
| String | Text |
| long | LongWritable |
| boolean | BooleanWritable |
| byte | ByteWritable |
| float | FloatWritable |
| double | DoubleWritable |
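To make the mapping above more concrete, here is a minimal plain-Java sketch of what a serialization wrapper type does. Hadoop's `Writable` interface declares `write(DataOutput)` and `readFields(DataInput)`; the class `SimpleIntWritable` and its `roundTrip` helper below are hypothetical stand-ins written for illustration, not part of Hadoop, and they only mirror the shape of that contract.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInput;
import java.io.DataInputStream;
import java.io.DataOutput;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;

// Hypothetical stand-in for a type such as IntWritable; not a Hadoop class.
class SimpleIntWritable {
    private int value;

    // A no-argument constructor lets a framework create an empty
    // instance first and fill it in with readFields afterwards.
    SimpleIntWritable() {
    }

    SimpleIntWritable(int value) {
        this.value = value;
    }

    int get() {
        return value;
    }

    // Same shape as Writable.write(DataOutput): serialize the field.
    void write(DataOutput out) throws IOException {
        out.writeInt(value);
    }

    // Same shape as Writable.readFields(DataInput): restore the field.
    void readFields(DataInput in) throws IOException {
        value = in.readInt();
    }

    // Illustrative helper: serialize to bytes, then deserialize into a new object.
    static SimpleIntWritable roundTrip(SimpleIntWritable source) {
        try {
            ByteArrayOutputStream buffer = new ByteArrayOutputStream();
            source.write(new DataOutputStream(buffer));
            SimpleIntWritable copy = new SimpleIntWritable();
            copy.readFields(new DataInputStream(
                    new ByteArrayInputStream(buffer.toByteArray())));
            return copy;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        SimpleIntWritable copy = roundTrip(new SimpleIntWritable(42));
        System.out.println(copy.get()); // prints 42
    }
}
```

In real job code you would use the Hadoop types from the table directly, for example wrapping an `int` in an `IntWritable` and reading it back with `get()`.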

WordCount

WordCount is divided into three parts: Mapper, Reducer, and Driver

(1) Mapper: breaks the input data apart

(2) Reducer: aggregates the data

(3) Driver: submits the Job

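Mapper stage:

(1) Convert the input data to a String

(2) Split the data into words

(3) Pair each word with a count of 1, for example <hello,1>

(4) Output the pairs to the Reducer stage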
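The Mapper steps above can be sketched in plain Java without any Hadoop dependency. The class `MapPhaseSketch` and the method `mapLine` are hypothetical names used only for illustration; a real Mapper would emit `Text` and `IntWritable` pairs through its `Context` instead of returning a list.

```java
import java.util.AbstractMap.SimpleEntry;
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

// Hypothetical illustration of the map step; not a Hadoop class.
class MapPhaseSketch {

    // Turns one line of text into a list of <word,1> pairs.
    static List<Map.Entry<String, Integer>> mapLine(String line) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        // Split on runs of whitespace (an assumption about the input format)
        for (String word : line.trim().split("\\s+")) {
            // A blank line yields one empty token; skip it
            if (!word.isEmpty()) {
                pairs.add(new SimpleEntry<>(word, 1));
            }
        }
        return pairs;
    }

    public static void main(String[] args) {
        // prints [hello=1, world=1, hello=1]
        System.out.println(mapLine("hello world hello"));
    }
}
```

Note that the map step does no counting of its own: a repeated word simply produces repeated pairs, and the totals are computed later in the Reducer stage.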

Reducer stage:

(1) Aggregate the values by Key

(2) Output the total number of times each Key occurs
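As a rough plain-Java sketch of the Reducer steps above: the framework groups the Mapper output by key before the reduce step runs, so each word arrives together with all of its 1s. The class `ReducePhaseSketch` and the methods `reduce` and `reduceAll` are hypothetical names for illustration, and the pre-grouped map stands in for what the framework would deliver.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical illustration of the reduce step; not a Hadoop class.
class ReducePhaseSketch {

    // Sums all counts that were emitted for a single key.
    static int reduce(String key, Iterable<Integer> values) {
        int sum = 0;
        for (int count : values) {
            sum += count;
        }
        return sum;
    }

    // Applies reduce to every key of an already grouped input.
    static Map<String, Integer> reduceAll(Map<String, List<Integer>> grouped) {
        Map<String, Integer> totals = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> entry : grouped.entrySet()) {
            totals.put(entry.getKey(), reduce(entry.getKey(), entry.getValue()));
        }
        return totals;
    }

    public static void main(String[] args) {
        // Grouped Mapper output for the line "hello world hello"
        Map<String, List<Integer>> grouped = new TreeMap<>();
        grouped.put("hello", Arrays.asList(1, 1));
        grouped.put("world", Arrays.asList(1));

        // prints {hello=2, world=1}
        System.out.println(reduceAll(grouped));
    }
}
```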

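Driver stage:

(1) Create the job

(2) Associate the Driver class so that the jar can be located

(3) Associate the Mapper and Reducer classes to use

(4) Specify the Key-Value types of the Mapper output

(5) Specify the Key-Value types of the Reducer output

(6) Specify the input path and output path of the data

(7) Submit the job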

Writing the WordCount Code

The WordCountMapper class

package com.MapReduce.util;

import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

/*
 * @author Administrator
 * @version 1.0
 *
 * Input and output data are passed as key-value pairs.
 * keyIn: LongWritable, the starting offset of the line
 * valueIn: Text, the content of the line
 *
 * The Mapper passes pairs such as <hello,1> to the Reducer stage.
 * keyOut: Text, the word
 * valueOut: IntWritable, the number of occurrences
 */
public class WordCountMapper extends Mapper<LongWritable, Text, Text, IntWritable> {

    // Break each input line apart into <word,1> pairs
    @Override
    protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
        // Read the incoming line as a String
        String line = value.toString();

        // Split the line on runs of whitespace
        String[] words = line.trim().split("\\s+");

        // Emit every word in the form <hello,1>
        for (String word : words) {
            // A blank line produces a single empty token; skip it
            if (word.isEmpty()) {
                continue;
            }
            // Write the pair to the Reducer side
            context.write(new Text(word), new IntWritable(1));
        }
    }
}

The WordCountReducer class

package com.MapReduce.util;

import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

/*
 * @author Administrator
 * @version 1.0
 *
 * Input types, which match the Mapper output:
 * keyIn: Text, the type of the key output by the Mapper
 * valueIn: IntWritable, the type of the value output by the Mapper
 *
 * Output types of the Reducer:
 * keyOut: Text
 * valueOut: IntWritable
 */
public class WordCountReducer extends Reducer<Text, IntWritable, Text, IntWritable> {

    // key -> the word; values -> its counts, e.g. 1 1 1 1
    @Override
    protected void reduce(Text key, Iterable<IntWritable> values, Context context) throws IOException, InterruptedException {
        int sum = 0;

        // Count how many times the word occurs
        for (IntWritable count : values) {
            // Accumulate the sum
            sum += count.get();
        }

        // Output <word,total>
        context.write(key, new IntWritable(sum));
    }
}

The WordCountDriver class

package com.MapReduce.util;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

/*
 * @author Administrator
 * @version 1.0
 */
public class WordCountDriver {

    public static void main(String[] args) throws IOException, InterruptedException, ClassNotFoundException {
        // Load the configuration
        Configuration configuration = new Configuration();

        // Create the job
        Job job = Job.getInstance(configuration);

        // Tell Hadoop which jar to use by pointing at a class it contains
        job.setJarByClass(WordCountDriver.class);

        // Associate the Mapper class
        job.setMapperClass(WordCountMapper.class);

        // Associate the Reducer class
        job.setReducerClass(WordCountReducer.class);

        // Set the Mapper output types
        job.setMapOutputKeyClass(Text.class);
        job.setMapOutputValueClass(IntWritable.class);

        // Set the Reducer output types
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(IntWritable.class);

        // Set the input path (first command-line argument)
        FileInputFormat.setInputPaths(job, new Path(args[0]));

        // Set the output path (second command-line argument)
        FileOutputFormat.setOutputPath(job, new Path(args[1]));

        // Submit the job and wait for it to finish
        boolean success = job.waitForCompletion(true);

        System.exit(success ? 0 : 1);
    }
}

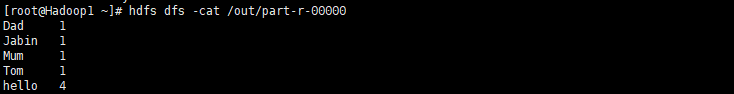

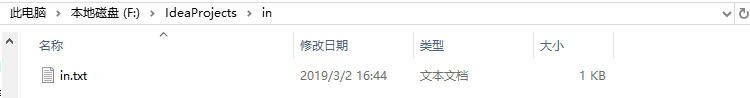

Testing WordCount

(1) Local mode

(2) Cluster mode