Copyright notice: this is an original article by the blog author and may not be reposted without the author's permission. https://blog.csdn.net/u012292754/article/details/86570479

1 Integrating HBase with MapReduce

https://hbase.apache.org/book.html#mapreduce

export HBASE_HOME=/home/hadoop/apps/hbase-1.2.0-cdh5.7.0

export HADOOP_HOME=/home/hadoop/apps/hadoop-2.6.0-cdh5.7.0

HADOOP_CLASSPATH=`${HBASE_HOME}/bin/hbase mapredcp` $HADOOP_HOME/bin/yarn jar $HBASE_HOME/lib/hbase-server-1.2.0-cdh5.7.0.jar

[hadoop@node1 ~]$ export HBASE_HOME=/home/hadoop/apps/hbase-1.2.0-cdh5.7.0

[hadoop@node1 ~]$ export HADOOP_HOME=/home/hadoop/apps/hadoop-2.6.0-cdh5.7.0

[hadoop@node1 ~]$ HADOOP_CLASSPATH=`${HBASE_HOME}/bin/hbase mapredcp` $HADOOP_HOME/bin/yarn jar $HBASE_HOME/lib/hbase-server-1.2.0-cdh5.7.0.jar

2019-01-21 11:04:47,875 WARN [main] mapreduce.TableMapReduceUtil: The hbase-prefix-tree module jar containing PrefixTreeCodec is not present. Continuing without it.

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/home/hadoop/apps/hbase-1.2.0-cdh5.7.0/lib/slf4j-log4j12-1.7.5.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/home/hadoop/apps/hadoop-2.6.0-cdh5.7.0/share/hadoop/common/lib/slf4j-log4j12-1.7.5.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]

An example program must be given as the first argument.

Valid program names are:

CellCounter: Count cells in HBase table.

WALPlayer: Replay WAL files.

completebulkload: Complete a bulk data load.

copytable: Export a table from local cluster to peer cluster.

export: Write table data to HDFS.

exportsnapshot: Export the specific snapshot to a given FileSystem.

import: Import data written by Export.

importtsv: Import data in TSV format.

rowcounter: Count rows in HBase table.

verifyrep: Compare the data from tables in two different clusters. WARNING: It doesn't work for incrementColumnValues'd cells since the timestamp is changed after being appended to the log.

[hadoop@node1 ~]$
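The launch command works because `hbase mapredcp` prints a colon-separated classpath of the HBase jars a MapReduce job needs, and that string is handed to Hadoop through `HADOOP_CLASSPATH`. To see what actually ends up on the classpath, the output can be split into one entry per line. This is only a sketch; `split_classpath` is a hypothetical helper name, not an HBase command.

```shell
# Hypothetical helper (not part of HBase): split a colon-separated
# classpath string into one entry per line.
split_classpath() {
  printf '%s\n' "$1" | tr ':' '\n'
}

# On a node with HBase installed (assumes HBASE_HOME is exported as above):
#   split_classpath "$("${HBASE_HOME}/bin/hbase" mapredcp)"
```

Each printed line is one jar (or directory) that the `yarn jar` process will be able to load.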

1.1 Counting the rows in the user table

HADOOP_CLASSPATH=`${HBASE_HOME}/bin/hbase mapredcp` $HADOOP_HOME/bin/yarn jar $HBASE_HOME/lib/hbase-server-1.2.0-cdh5.7.0.jar rowcounter user
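The same launch pattern applies to every bundled program in the list printed earlier, so it can be wrapped once and reused. A minimal sketch, assuming `HBASE_HOME` and `HADOOP_HOME` are exported as shown at the top; `hbase_mr_cmd` and `hbase_mr` are hypothetical helper names, not HBase commands.

```shell
# Hypothetical helpers (not part of HBase).
# Both assume HBASE_HOME and HADOOP_HOME are already exported.

# Print the yarn command line for a bundled program and its arguments,
# without running anything (handy for checking paths first).
hbase_mr_cmd() {
  echo "${HADOOP_HOME}/bin/yarn jar ${HBASE_HOME}/lib/hbase-server-1.2.0-cdh5.7.0.jar $*"
}

# Run a bundled program with the HBase jars on Hadoop's classpath.
hbase_mr() {
  HADOOP_CLASSPATH="$("${HBASE_HOME}/bin/hbase" mapredcp)" \
    "${HADOOP_HOME}/bin/yarn" jar \
    "${HBASE_HOME}/lib/hbase-server-1.2.0-cdh5.7.0.jar" "$@"
}
```

With these defined, `hbase_mr rowcounter user` should be equivalent to the command above, and `hbase_mr_cmd rowcounter user` only prints what would be run.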

2 Reading from and writing to HBase tables in a MapReduce job

package hbase;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.conf.Configured;

import org.apache.hadoop.hbase.Cell;

import org.apache.hadoop.hbase.CellUtil;

import org.apache.hadoop.hbase.HBaseConfiguration;

import org.apache.hadoop.hbase.client.Put;

import org.apache.hadoop.hbase.client.Result;

import org.apache.hadoop.hbase.client.Scan;

import org.apache.hadoop.hbase.io.ImmutableBytesWritable;

import org.apache.hadoop.hbase.mapreduce.TableMapReduceUtil;

import org.apache.hadoop.hbase.mapreduce.TableMapper;

import org.apache.hadoop.hbase.mapreduce.TableReducer;

import org.apache.hadoop.hbase.util.Bytes;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.util.Tool;

import org.apache.hadoop.util.ToolRunner;

import java.io.IOException;

public class User2BasicMapReduce extends Configured implements Tool {

public static class ReadUserMapper extends TableMapper<Text, Put> {

private Text mapOutputKey = new Text();

@Override

public void map(ImmutableBytesWritable key, Result value, Context context) throws IOException, InterruptedException {

//get rowkey

String rowKey = Bytes.toString(key.get());

mapOutputKey.set(rowKey);

Put put = new Put(key.get());

for (Cell cell : value.rawCells()) {

if ("info".equals(Bytes.toString(CellUtil.cloneFamily(cell)))) {

if ("name".equals(Bytes.toString(CellUtil.cloneQualifier(cell)))) {

put.add(cell);

}

if ("age".equals(Bytes.toString(CellUtil.cloneQualifier(cell)))) {

put.add(cell);

}

}

}

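// Skip rows that have neither info:name nor info:age; an empty Put is rejected on write

if (!put.isEmpty()) {

context.write(mapOutputKey, put);

}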

}

}

public static class WriteBasicReducer extends TableReducer<Text, Put, ImmutableBytesWritable> {

@Override

public void reduce(Text key, Iterable<Put> values, Context context) throws IOException, InterruptedException {

for (Put put : values) {

context.write(null, put);

}

}

}

//Driver

@Override

public int run(String[] args) throws Exception {

Job job = Job.getInstance(this.getConf(),this.getClass().getSimpleName());

job.setJarByClass(this.getClass());

Scan scan = new Scan();

scan.setCaching(500); // 1 is the default in Scan, which will be bad for MapReduce jobs

scan.setCacheBlocks(false); // don't set to true for MR jobs

// set other scan attrs

TableMapReduceUtil.initTableMapperJob(

"user", // input table

scan, // Scan instance to control CF and attribute selection

ReadUserMapper.class, // mapper class

Text.class, // mapper output key

Put.class, // mapper output value

job);

TableMapReduceUtil.initTableReducerJob(

"basic", // output table

WriteBasicReducer.class, // reducer class

job);

job.setNumReduceTasks(1);

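// Submit the job and wait for it to finish; without this call the job never runs

boolean success = job.waitForCompletion(true);

return success ? 0 : 1;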

}

public static void main(String[] args) throws Exception {

Configuration conf = HBaseConfiguration.create();

int status = ToolRunner.run(conf, new User2BasicMapReduce(), args);

System.exit(status);

}

}
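The job writes into the `basic` table but does not create it, so the table has to exist before the job runs. A minimal sketch for creating it, assuming it does not exist yet and that a single column family named `info` is enough (the mapper only forwards `info` cells). `create_table_stmt` is a hypothetical helper name.

```shell
# Hypothetical helper (not part of HBase): print the HBase shell
# statement that creates a table with one column family.
create_table_stmt() {
  printf "create '%s', '%s'\n" "$1" "$2"
}

# On a node with HBase installed (assumes HBASE_HOME is exported):
#   create_table_stmt basic info | "${HBASE_HOME}/bin/hbase" shell
```

Printing the statement first makes it easy to check the table and family names before piping them into the HBase shell.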

- Package with Maven

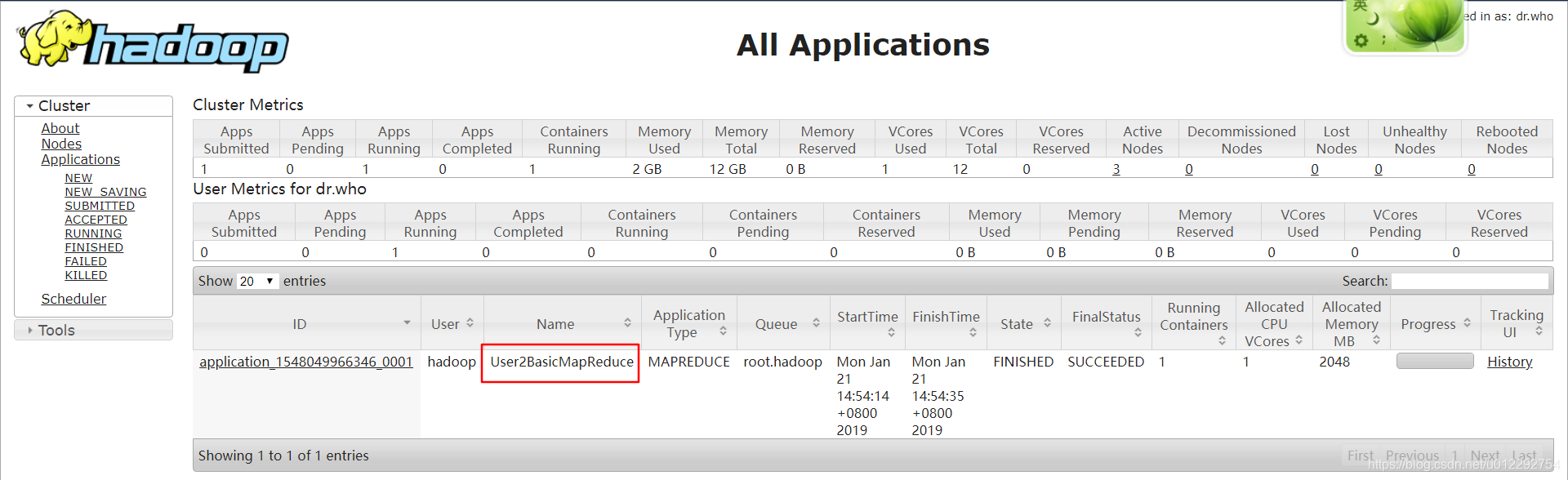

- Run

export HBASE_HOME=/home/hadoop/apps/hbase-1.2.0-cdh5.7.0

export HADOOP_HOME=/home/hadoop/apps/hadoop-2.6.0-cdh5.7.0

HADOOP_CLASSPATH=`${HBASE_HOME}/bin/hbase mapredcp` $HADOOP_HOME/bin/yarn jar /home/hadoop/testhadoop-1.0.jar hbase.User2BasicMapReduce

- The data is imported into the basic table successfully
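One way to check the result is to compare row counts of the two tables and look at a few copied rows in the HBase shell. A minimal sketch; `verify_stmts` is a hypothetical helper name, and the limit of 5 rows is an arbitrary choice for illustration.

```shell
# Hypothetical helper (not part of HBase): print HBase shell statements
# that count the source and target tables and show a few target rows.
verify_stmts() {
  printf "count '%s'\n" "$1"
  printf "count '%s'\n" "$2"
  printf "scan '%s', {LIMIT => 5}\n" "$2"
}

# On a node with HBase installed (assumes HBASE_HOME is exported):
#   verify_stmts user basic | "${HBASE_HOME}/bin/hbase" shell
```

If every row of `user` has an `info:name` or `info:age` cell, the two counts would be expected to match, and the scanned rows should contain only those two columns.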