scrapy.FormRequest 主要用于提交表单数据

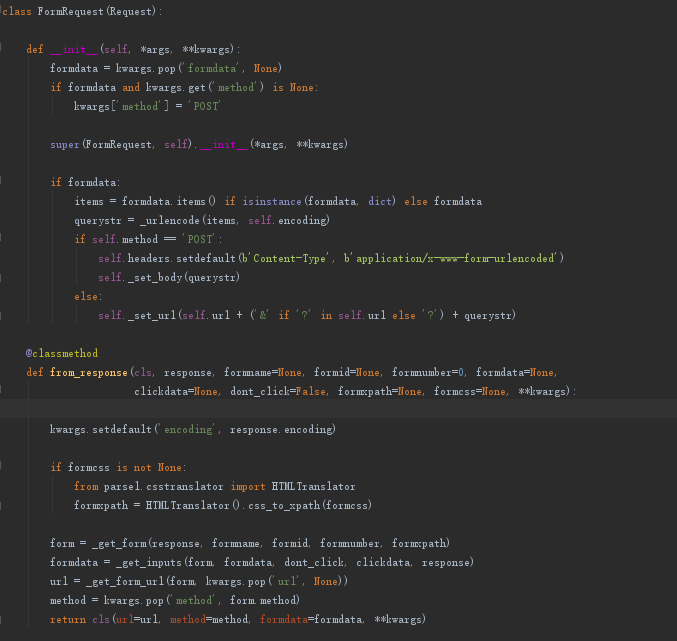

先来看一下源码

参数:

formdata (dict or iterable of tuples) – is a dictionary (or iterable of (key, value) tuples) containing HTML Form data which will be url-encoded and assigned to the body of the request.

从官方文档中可以看到默认是 post 请求

怎么用:

官方例子:

FormRequest(url="http://www.example.com/post/action", formdata={'name': 'John Doe', 'age': '27'}, callback=self.after_post

就是这么简单就发送了一个 post 表单请求, formdata 就是要提交的表单数据。 callback 是指定回调函数,该参数继承于 Request

github登录例子:

class GithubSpider(scrapy.Spider): name = 'github' allowed_domains = ['github.com'] start_urls = ['https://github.com/login'] def parse(self, response): authenticity_token = response.xpath("//input[@name='authenticity_token']/@value").extract_first() utf8 = response.xpath("//input[@name='utf8']/@value").extract_first() commit = response.xpath("//input[@name='commit']/@value").extract_first() post_data = dict( login="your_username", password="your_password", authenticity_token=authenticity_token, utf8=utf8, commit=commit ) yield scrapy.FormRequest( "https://github.com/session", formdata=post_data, callback=self.after_login ) def after_login(self,response): print(re.findall("your_username",response.body.decode()))

scrapy.FormRequest.from_response

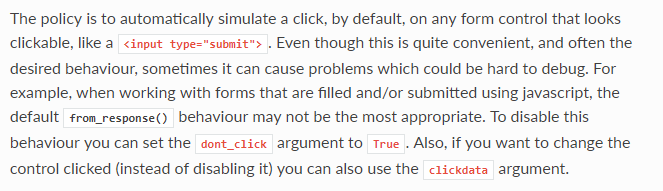

作用:自动的从 response 中寻找form表单(表单action,表单name),并且可以预填充表单认证令牌等(例如Django框架的csrf_token)

定义说明:

怎么用:

官方例子:

通常网站通过 <input type="hidden"> 实现对某些表单字段(如数据或是登录界面中的认证令牌等)的预填充。 使用Scrapy抓取网页时,如果想要预填充或重写像用户名、用户密码这些表单字段,

可以使用 FormRequest.from_response() 方法实现。下面是使用这种方法的爬虫例子

import scrapy class LoginSpider(scrapy.Spider): name = 'example.com' start_urls = ['http://www.example.com/users/login.php'] def parse(self, response): return scrapy.FormRequest.from_response( response, formdata={'username': 'john', 'password': 'secret'}, callback=self.after_login ) def after_login(self, response): # check login succeed before going on if "authentication failed" in response.body: self.log("Login failed", level=scrapy.log.ERROR) return # continue scraping with authenticated session...

github登录例子:

class Github2Spider(scrapy.Spider): name = 'github2' allowed_domains = ['github.com'] start_urls = ['https://github.com/login'] def parse(self, response): yield scrapy.FormRequest.from_response( response, #自动的从response中寻找from表单 formdata={"login":"your_username","password":"your_password"}, callback = self.after_login ) def after_login(self,response): print(re.findall("your_username",response.body.decode()))

对比两次github的模拟登录例子来看,使用from_response方法可以帮助我们寻找到表单提交的地址,以及预填充认证令牌。