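A FastDFS system has three roles: the tracker server, the storage server and the client.

1. Tracker Server: mainly does scheduling and load balancing. It manages all storage servers and groups; each storage server connects to the tracker after it starts, reports which group it belongs to along with other details, and then keeps sending periodic heartbeats.

2. Storage Server: provides storage capacity and backup. Storage is organized by group; a group can contain several storage servers, and the servers in a group back up each other's data.

3. Client: the server that uploads and downloads data, that is, the server our own project is deployed on.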
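The registration and heartbeat behaviour described for the tracker can be pictured with a toy in-memory model. This is only a sketch of the idea, not how FastDFS implements its tracker: the class `ToyTracker`, its methods, the timeout value and the second storage address are all made up for illustration.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Toy model of a tracker's view of groups and storage servers (illustrative only). */
final class ToyTracker {
    private final long timeoutMillis;
    // group name -> (storage address -> time of the last heartbeat in milliseconds)
    private final Map<String, Map<String, Long>> groups = new LinkedHashMap<>();

    ToyTracker(long timeoutMillis) {
        this.timeoutMillis = timeoutMillis;
    }

    /** Called when a storage server joins its group or sends a periodic heartbeat. */
    void heartbeat(String group, String storageAddr, long nowMillis) {
        groups.computeIfAbsent(group, g -> new LinkedHashMap<>()).put(storageAddr, nowMillis);
    }

    /** Storage servers of the group whose last heartbeat is not older than the timeout. */
    List<String> activeStorages(String group, long nowMillis) {
        List<String> active = new ArrayList<>();
        Map<String, Long> members = groups.getOrDefault(group, new LinkedHashMap<>());
        for (Map.Entry<String, Long> e : members.entrySet()) {
            if (nowMillis - e.getValue() <= timeoutMillis) {
                active.add(e.getKey());
            }
        }
        return active;
    }

    public static void main(String[] args) {
        ToyTracker tracker = new ToyTracker(1000);
        tracker.heartbeat("group1", "192.168.198.129:23000", 0);
        tracker.heartbeat("group1", "192.168.198.130:23000", 500); // hypothetical second server
        // At t = 1200 ms only servers heard from within the last 1000 ms count as active.
        System.out.println(tracker.activeStorages("group1", 1200));
    }
}
```

A real tracker would also use this view to pick a storage server for each upload; the model only shows why a server that stops sending heartbeats drops out of scheduling.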

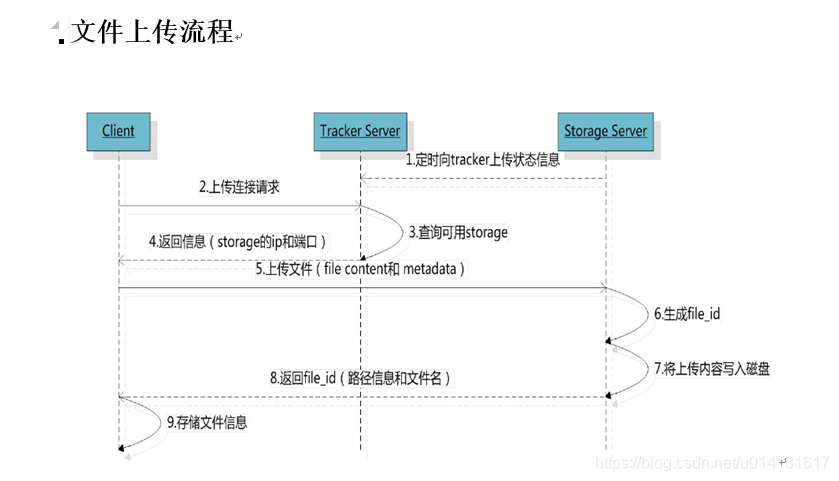

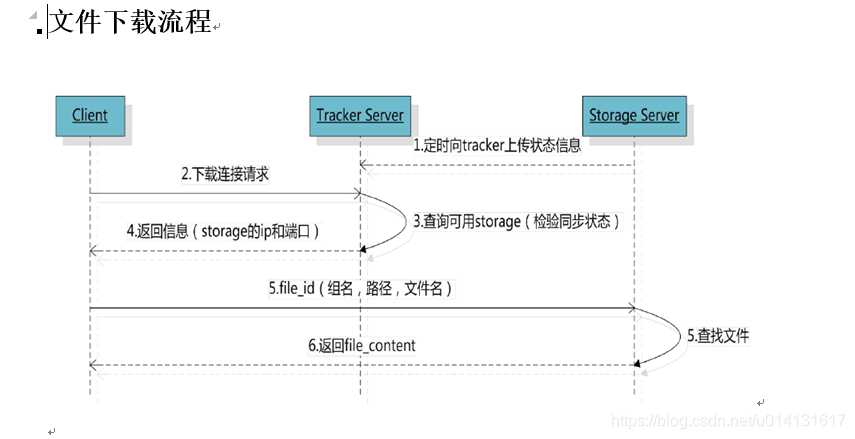

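So how do uploading, downloading and file synchronization actually work? I won't walk through every flow in detail here. Roughly, an upload goes like this: the client sends an upload request to the tracker server, and the tracker server then assigns a group and a storage server according to certain rules. Once a storage server has been chosen, it picks a storage directory (again by rule) and generates a file_id for the file. The file_id encodes the storage server IP, the file creation time, the file size, the file's CRC32 checksum and a random number. Each storage directory contains two levels of 256 * 256 subdirectories (as you will see later, a storage server's storage directory holds a great many folders). The storage server hashes the file_id twice to route the file to one of those subdirectories and stores the file there. Finally it produces the file path: the group name, the virtual disk path, the two-level data directory, the file_id and the file extension together form the complete file address.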
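To make the shape of a file address concrete, here is a minimal sketch that splits one into the parts listed above. It assumes the five-part layout `group/Mxx/xx/xx/name` seen in the file names used later in this article, and it assumes the files behind a virtual disk path live in a `data` directory under the store path. The class `FileAddress` and its methods are hypothetical helpers, not part of any FastDFS client library.

```java
/** Splits a FastDFS-style file address into its parts (illustrative helper). */
final class FileAddress {
    final String group;      // group name, e.g. "group1"
    final String storePath;  // virtual disk path, e.g. "M00"
    final String dir1;       // first-level data directory, e.g. "00"
    final String dir2;       // second-level data directory, e.g. "00"
    final String fileName;   // generated file name plus extension

    private FileAddress(String group, String storePath, String dir1, String dir2, String fileName) {
        this.group = group;
        this.storePath = storePath;
        this.dir1 = dir1;
        this.dir2 = dir2;
        this.fileName = fileName;
    }

    /** Parses an address of the form group/Mxx/xx/xx/name. */
    static FileAddress parse(String address) {
        String[] p = address.split("/");
        if (p.length != 5) {
            throw new IllegalArgumentException("expected group/Mxx/xx/xx/name but got: " + address);
        }
        return new FileAddress(p[0], p[1], p[2], p[3], p[4]);
    }

    /** Location of the file relative to the store path (assumes a "data" directory). */
    String relativeDiskPath() {
        return "data/" + dir1 + "/" + dir2 + "/" + fileName;
    }

    public static void main(String[] args) {
        FileAddress a = parse("group1/M00/00/00/wKhlBVVZvU6AV3MyAAE1Bar7bBg889.jpg");
        System.out.println(a.group + " | " + a.storePath + " | " + a.relativeDiskPath());
    }
}
```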

1. Install FastDFS

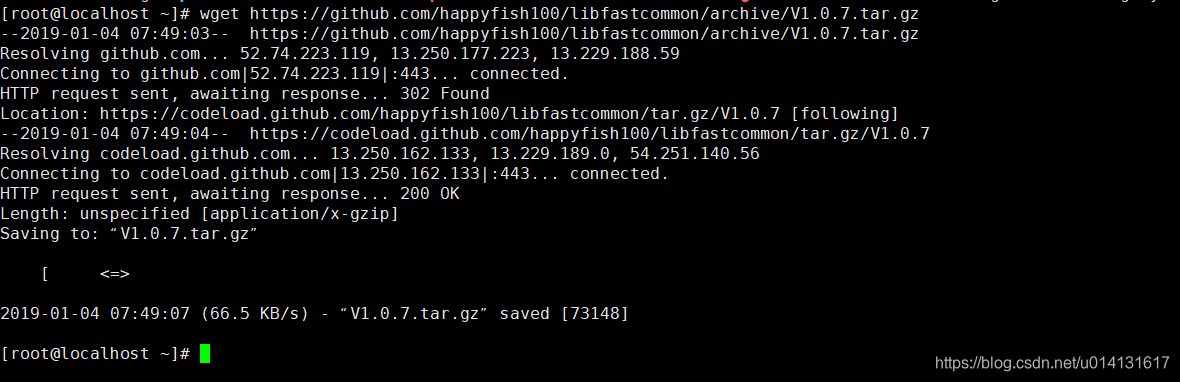

1. Download libfastcommon

Run the following command:

wget https://github.com/happyfish100/libfastcommon/archive/V1.0.7.tar.gz

2. Extract libfastcommon

tar -zxvf V1.0.7.tar.gz

3. Compile it

cd libfastcommon-1.0.7

./make.sh

4. Install it

./make.sh install

5. Download FastDFS

wget https://github.com/happyfish100/fastdfs/archive/V5.05.tar.gz

6. Extract FastDFS

tar -zxvf V5.05.tar.gz

7. Compile

cd fastdfs-5.05

./make.sh

8. Install

./make.sh install

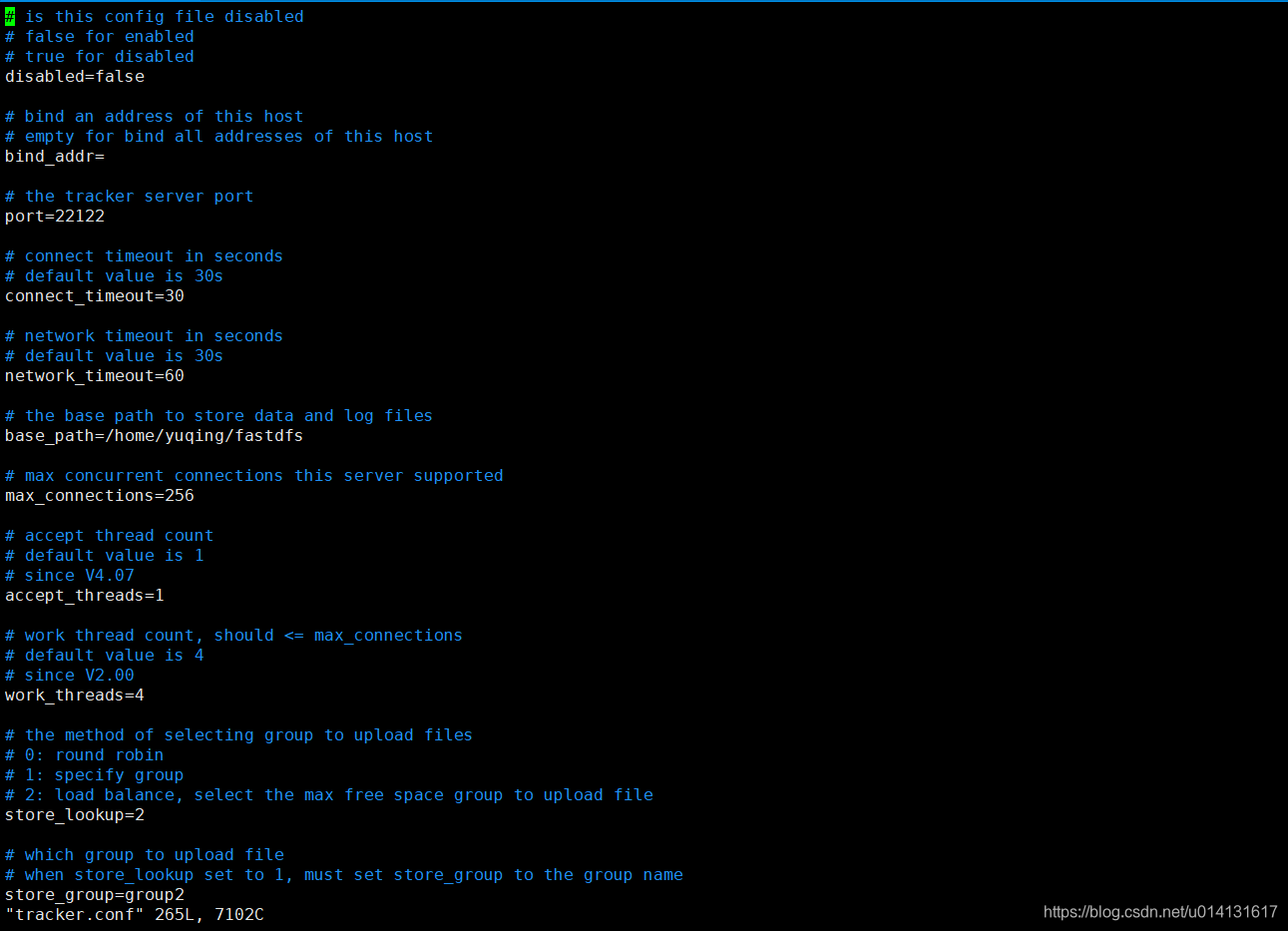

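2. Configure the Tracker service

Once the installation above succeeds, there will be an fdfs directory under /etc/. Go into it and you will see three files with the .sample suffix; these are the sample files provided by the author. We need to copy tracker.conf.sample to tracker.conf and edit it. The commands:

cp tracker.conf.sample tracker.conf

vim tracker.conf

Open tracker.conf; you only need to find and change these two parameters: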

# the base path to store data and log files

base_path=/data/fastdfs

# HTTP port on this tracker server

http.server_port=80

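This assumes, of course, that the /data/fastdfs directory already exists or that you create it first. It is best not to change the port=22122 parameter unless that port is already in use.

After making the changes, save and quit vim. At this point we could start the Tracker service with /usr/bin/fdfs_trackerd /etc/fdfs/tracker.conf start, but that command is not very elegant. What can we do instead? Create symbolic links with ln -s: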

ln -s /usr/bin/fdfs_trackerd /usr/local/bin

ln -s /usr/bin/stop.sh /usr/local/bin

ln -s /usr/bin/restart.sh /usr/local/bin

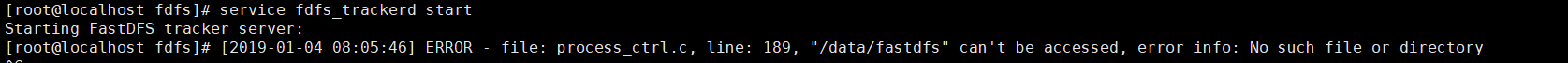

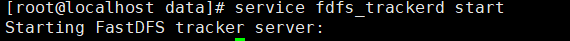

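Now we can start the Tracker service with service fdfs_trackerd start, which is much easier to remember than the command with full paths (laziness drives productivity). After starting the service you can also check that its port is listening:

Start the service: service fdfs_trackerd start

Check the listening ports: netstat -unltp|grep fdfs

If startup reports that a directory is missing, create that directory.

The service started successfully!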

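3. Configure the Storage service

Now let's configure the Storage service. I am testing on a single machine, but you can equally run the Storage service on several servers: it has the concept of a group, and servers in the same group back each other up and stay in sync. I won't demonstrate that here. As before, we work in the /etc/fdfs directory, so go into it first. You will see the three .sample files; copy storage.conf.sample to storage.conf and edit it. The commands again:

cp storage.conf.sample storage.conf

vim storage.conf

After opening storage.conf, find and change these parameters: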

# the base path to store data and log files

base_path=/data/fastdfs/storage

# store_path#, based 0, if store_path0 not exists, it's value is base_path

# the paths must be exist

store_path0=/data/fastdfs/storage

#store_path1=/home/yuqing/fastdfs2

# tracker_server can ocur more than once, and tracker_server format is

# "host:port", host can be hostname or ip address

# IP address and port of your tracker server

tracker_server=192.168.198.129:22122

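Of course, there must be a storage folder under /data/fastdfs; create one if it does not exist, otherwise startup will fail. Logs and stored files all live under it, and many folders are generated there automatically at startup. It is also best to leave the storage port=23000 parameter at its default unless that port is already in use.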
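Since a missing directory makes startup fail, it can help to check the configured paths up front. The following is a small sketch of such a check; the class `PathPreflight` and its method are hypothetical, and the two paths in `main` are simply the base_path and store_path0 values used in this article.

```java
import java.nio.file.Files;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.List;

/** Reports which of the given paths are not existing directories (illustrative helper). */
final class PathPreflight {
    static List<String> missingDirectories(List<String> paths) {
        List<String> missing = new ArrayList<>();
        for (String p : paths) {
            if (!Files.isDirectory(Paths.get(p))) {
                missing.add(p);
            }
        }
        return missing;
    }

    public static void main(String[] args) {
        List<String> required = new ArrayList<>();
        required.add("/data/fastdfs");          // tracker base_path in this article
        required.add("/data/fastdfs/storage");  // storage base_path and store_path0
        System.out.println("missing: " + missingDirectories(required));
    }
}
```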

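After making the changes, save and quit vim. We still want to start the Storage service gracefully, and the command with full paths is not elegant, so again create a symbolic link with ln -s: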

ln -s /usr/bin/fdfs_storaged /usr/local/bin

Start the service:

service fdfs_storaged start

Check the listening ports:

netstat -unltp|grep fdfs

When you see two listening ports, the installation succeeded!
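The same check can be done from the client machine, which is useful when you want to confirm that the ports are reachable over the network and not just listening locally. This is a minimal sketch; the class `PortCheck` is hypothetical, and the ports are the defaults mentioned above (22122 for the tracker, 23000 for storage).

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

/** Checks whether a TCP port accepts connections (illustrative helper). */
final class PortCheck {
    static boolean isListening(String host, int port, int timeoutMillis) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMillis);
            return true;
        } catch (IOException e) {
            // Connection refused, timed out, or host unreachable.
            return false;
        }
    }

    public static void main(String[] args) {
        String host = args.length > 0 ? args[0] : "127.0.0.1";
        System.out.println("tracker (22122): " + isListening(host, 22122, 1000));
        System.out.println("storage (23000): " + isListening(host, 23000, 1000));
    }
}
```

Pass the server's IP address as the first argument to test from another machine; a `false` there while netstat shows the port listening usually points at a firewall rule.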

4. Install Nginx and fastdfs-nginx-module

1. Download Nginx and fastdfs-nginx-module, here using wget:

wget -c https://nginx.org/download/nginx-1.10.1.tar.gz

wget https://github.com/happyfish100/fastdfs-nginx-module/archive/master.zip

2. Extract both archives. Remember not to use tar for fastdfs-nginx-module, because it is a .zip file. The correct commands:

tar -zxvf nginx.....

unzip master.zip

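3. Configure the Nginx build and add the fastdfs-nginx-module module. This is where it differs from a normal Nginx installation, because an extra module is compiled in.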

./configure --add-module=../fastdfs-nginx-module-master/src/

Install the dependencies (if ./configure fails because they are missing, install them and run it again):

yum install gcc-c++

yum install -y pcre pcre-devel

yum install -y zlib zlib-devel

yum install -y openssl openssl-devel

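Go into the nginx directory, compile with make, then install with make install.

Run the following command; if it prints the Nginx version information, the installation succeeded: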

/usr/local/nginx/sbin/nginx -V

5. Configure fastdfs-nginx-module and Nginx

1. Edit mod_fastdfs.conf and copy it to the /etc/fdfs directory.

cd /software/fastdfs-nginx-module-master/src/

vim mod_fastdfs.conf

cp mod_fastdfs.conf /etc/fdfs

In mod_fastdfs.conf you only need to change the three places I have marked; nothing else needs changing, and I don't recommend touching it.

# FastDFS tracker_server can ocur more than once, and tracker_server format is

# "host:port", host can be hostname or ip address

# valid only when load_fdfs_parameters_from_tracker is true

tracker_server=192.168.198.129:22122

# if the url / uri including the group name

# set to false when uri like /M00/00/00/xxx

# set to true when uri like ${group_name}/M00/00/00/xxx, such as group1/M00/xxx

# default value is false

url_have_group_name = true

# store_path#, based 0, if store_path0 not exists, it's value is base_path

# the paths must be exist

# must same as storage.conf

store_path0=/data/fastdfs/storage

#store_path1=/home/yuqing/fastdfs1

Next, copy the configuration files under fastdfs-5.05 that do not yet exist in /etc/fdfs:

cd /software/fastdfs-5.05/conf

cp anti-steal.jpg http.conf mime.types /etc/fdfs/

2. Configure Nginx. Edit the nginx.conf file:

cd /usr/local/nginx/conf

vi nginx.conf

Add the following inside the server block of the configuration file:

location /group1/M00 {

root /data/fastdfs/storage/;

ngx_fastdfs_module;

}

Because we configured access under group1/M00, we need to create a group1 folder and a symbolic link from M00 to data.

mkdir /data/fastdfs/storage/data/group1

ln -s /data/fastdfs/storage/data /data/fastdfs/storage/data/group1/M00

Start Nginx. It prints the pid of the fastdfs module; check the log for errors, though normally there won't be any.

/usr/local/nginx/sbin/nginx

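Open access to port 80. Add the rule below to iptables and restart it. You could also just turn the firewall off, which is acceptable in a local test environment, but never turn it off in production.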

vi /etc/sysconfig/iptables

-A INPUT -m state --state NEW -m tcp -p tcp --dport 80 -j ACCEPT

Restart the firewall so the setting takes effect:

service iptables restart

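Upload test

With the steps above done, all the installation and configuration work is complete, so what remains is testing. Since the executables are all under /usr/bin, we switch to that directory and create a test.txt file with some arbitrary content; I wrote the sentence This is a test file. by:mafly in it. Then test the upload: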

cd /usr/bin

vim test.txt

fdfs_test /etc/fdfs/client.conf upload test.txt

Unfortunately it did not succeed; it reported an error.

ERROR - file: shared_func.c, line: 960, open file /etc/fdfs/client.conf fail, errno: 2, error info: No such file or directory

ERROR - file: ../client/client_func.c, line: 402, load conf file "/etc/fdfs/client.conf" fail, ret code: 2

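Things rarely go smoothly the first time, and that is normal. From the error message it looks like the client.conf file was not found, and now that I think of it we never configured that file. So let's configure it now and set the two parameters shown in the figure: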

cd /etc/fdfs

cp client.conf.sample client.conf

vim client.conf

Why is it still failing?

upload file fail, error no: 2, error info: No such file or directory

Ha, perhaps the path to test.txt in the upload command is wrong. Let me change the command:

/usr/bin/fdfs_test /etc/fdfs/client.conf upload /usr/bin/test.txt

It returns the file information and the HTTP address of the uploaded file. Open that address in a browser to try it.
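If you need to build that HTTP address yourself, for example from the group name and path returned by an upload, the sketch below shows one way. It assumes Nginx serves the files on the given host and port, and that the `urlHaveGroupName` argument matches the url_have_group_name setting in mod_fastdfs.conf. The class `FileUrl` is a hypothetical helper, not part of the FastDFS client.

```java
/** Builds the HTTP address of an uploaded file (illustrative helper). */
final class FileUrl {
    static String build(String host, int port, String group, String remoteFileName,
                        boolean urlHaveGroupName) {
        StringBuilder url = new StringBuilder("http://").append(host);
        if (port != 80) {
            url.append(':').append(port); // port 80 is implied by http://
        }
        url.append('/');
        if (urlHaveGroupName) {
            url.append(group).append('/'); // e.g. group1/M00/00/00/xxx
        }
        return url.append(remoteFileName).toString();
    }

    public static void main(String[] args) {
        System.out.println(build("192.168.198.129", 80, "group1",
                "M00/00/00/wKhlBVVZvU6AV3MyAAE1Bar7bBg889.jpg", true));
    }
}
```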

6. Using the Java API

1. pom file

<dependency>

<groupId>fastdfs_client</groupId>

<artifactId>fastdfs_client</artifactId>

<version>1.25</version>

</dependency>

2.fdfs_client.conf

# connect timeout in seconds

# default value is 30s

connect_timeout=30

# network timeout in seconds

# default value is 30s

network_timeout=60

# the base path to store log files

base_path=/home/fastdfs

# tracker_server can ocur more than once, and tracker_server format is

# "host:port", host can be hostname or ip address

tracker_server=192.168.101.3:22122

tracker_server=192.168.101.4:22122

#standard log level as syslog, case insensitive, value list:

### emerg for emergency

### alert

### crit for critical

### error

### warn for warning

### notice

### info

### debug

log_level=info

# if use connection pool

# default value is false

# since V4.05

use_connection_pool = false

# connections whose the idle time exceeds this time will be closed

# unit: second

# default value is 3600

# since V4.05

connection_pool_max_idle_time = 3600

# if load FastDFS parameters from tracker server

# since V4.05

# default value is false

load_fdfs_parameters_from_tracker=false

# if use storage ID instead of IP address

# same as tracker.conf

# valid only when load_fdfs_parameters_from_tracker is false

# default value is false

# since V4.05

use_storage_id = false

# specify storage ids filename, can use relative or absolute path

# same as tracker.conf

# valid only when load_fdfs_parameters_from_tracker is false

# since V4.05

storage_ids_filename = storage_ids.conf

#HTTP settings

http.tracker_server_port=80

#use "#include" directive to include HTTP other settiongs

##include http.conf
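Note that tracker_server appears twice in this file, so the format is not a plain one-value-per-key properties file. The sketch below shows how such a file can be read while keeping repeated keys. It is only an illustration of the format, not the parser the FastDFS Java client uses; the class `ConfReader` is hypothetical.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Reads key=value lines, keeping every value of a repeated key (illustrative helper). */
final class ConfReader {
    static Map<String, List<String>> parse(String text) {
        Map<String, List<String>> values = new LinkedHashMap<>();
        for (String raw : text.split("\\R")) {
            String line = raw.trim();
            if (line.isEmpty() || line.startsWith("#")) {
                continue; // skip blank lines and comment lines
            }
            int eq = line.indexOf('=');
            if (eq < 0) {
                continue; // not a key=value line
            }
            String key = line.substring(0, eq).trim();
            String value = line.substring(eq + 1).trim();
            values.computeIfAbsent(key, k -> new ArrayList<>()).add(value);
        }
        return values;
    }

    public static void main(String[] args) {
        String conf = String.join("\n",
                "# connect timeout in seconds",
                "connect_timeout=30",
                "tracker_server=192.168.101.3:22122",
                "tracker_server=192.168.101.4:22122",
                "use_connection_pool = false");
        System.out.println(parse(conf).get("tracker_server"));
    }
}
```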

FastdfsClientTest.java

import java.io.File;

import java.io.FileNotFoundException;

import java.io.FileOutputStream;

import java.io.IOException;

import java.util.UUID;

import org.csource.common.NameValuePair;

import org.csource.fastdfs.ClientGlobal;

import org.csource.fastdfs.FileInfo;

import org.csource.fastdfs.StorageClient;

import org.csource.fastdfs.StorageClient1;

import org.csource.fastdfs.StorageServer;

import org.csource.fastdfs.TrackerClient;

import org.csource.fastdfs.TrackerServer;

import org.junit.Test;

public class FastdfsClientTest {

// client configuration file

public String conf_filename = "F:\\workspace_indigo\\fastdfsClient\\src\\cn\\itcast\\fastdfs\\cliennt\\fdfs_client.conf";

// local file to upload

public String local_filename = "F:\\develop\\upload\\linshiyaopinxinxi_20140423193847.xlsx";

// write the byte array to a file on disk

private void saveFile(byte[] b, String path, String fileName) {

File file = new File(path+fileName);

FileOutputStream fileOutputStream = null;

try {

fileOutputStream= new FileOutputStream(file);

fileOutputStream.write(b);

} catch (FileNotFoundException e) {

// TODO Auto-generated catch block

e.printStackTrace();

} catch (IOException e) {

// TODO Auto-generated catch block

e.printStackTrace();

}finally{

if(fileOutputStream!=null){

try {

fileOutputStream.close();

} catch (IOException e) {

// TODO Auto-generated catch block

e.printStackTrace();

}

}

}

}

// upload a file

@Test

public void testUpload() {

for(int i=0;i<100;i++){

try {

ClientGlobal.init(conf_filename);

TrackerClient tracker = new TrackerClient();

TrackerServer trackerServer = tracker.getConnection();

StorageServer storageServer = null;

StorageClient storageClient = new StorageClient(trackerServer,

storageServer);

NameValuePair nvp [] = new NameValuePair[]{

new NameValuePair("item_id", "100010"),

new NameValuePair("width", "80"),

new NameValuePair("height", "90")

};

String fileIds[] = storageClient.upload_file(local_filename, null,

nvp);

System.out.println(fileIds.length);

System.out.println("Group name: " + fileIds[0]);

System.out.println("Path: " + fileIds[1]);

} catch (FileNotFoundException e) {

e.printStackTrace();

} catch (IOException e) {

e.printStackTrace();

} catch (Exception e) {

e.printStackTrace();

}

}

}

// download a file

@Test

public void testDownload() {

try {

ClientGlobal.init(conf_filename);

TrackerClient tracker = new TrackerClient();

TrackerServer trackerServer = tracker.getConnection();

StorageServer storageServer = null;

// StorageClient storageClient = new StorageClient(trackerServer,

// storageServer);

StorageClient1 storageClient = new StorageClient1(trackerServer, storageServer);

byte[] b = storageClient.download_file("group1",

"M00/00/00/wKhlBVVZvU6AV3MyAAE1Bar7bBg889.jpg");

if(b !=null){

System.out.println(b.length);

saveFile(b, "F:\\develop\\upload\\temp\\", UUID.randomUUID().toString()+".jpg");

}

} catch (Exception e) {

e.printStackTrace();

}

}

// get file information

@Test

public void testGetFileInfo(){

try {

ClientGlobal.init(conf_filename);

TrackerClient tracker = new TrackerClient();

TrackerServer trackerServer = tracker.getConnection();

StorageServer storageServer = null;

StorageClient storageClient = new StorageClient(trackerServer,

storageServer);

FileInfo fi = storageClient.get_file_info("group1", "M00/00/00/wKhlBVVZvU6AV3MyAAE1Bar7bBg889.jpg");

System.out.println(fi.getSourceIpAddr());

System.out.println(fi.getFileSize());

System.out.println(fi.getCreateTimestamp());

System.out.println(fi.getCrc32());

} catch (Exception e) {

e.printStackTrace();

}

}

// get the file's custom metadata (key/value pairs)

@Test

public void testGetFileMate(){

try {

ClientGlobal.init(conf_filename);

TrackerClient tracker = new TrackerClient();

TrackerServer trackerServer = tracker.getConnection();

StorageServer storageServer = null;

StorageClient storageClient = new StorageClient(trackerServer,

storageServer);

NameValuePair nvps [] = storageClient.get_metadata("group1", "M00/00/00/wKhlBVVZvU6AV3MyAAE1Bar7bBg889.jpg");

if(nvps!=null){

for(NameValuePair nvp : nvps){

System.out.println(nvp.getName() + ":" + nvp.getValue());

}

}

} catch (Exception e) {

e.printStackTrace();

}

}

// delete a file

@Test

public void testDelete(){

try {

ClientGlobal.init(conf_filename);

TrackerClient tracker = new TrackerClient();

TrackerServer trackerServer = tracker.getConnection();

StorageServer storageServer = null;

StorageClient storageClient = new StorageClient(trackerServer,

storageServer);

int i = storageClient.delete_file("group1", "M00/00/00/wKhlBVVZvU6AV3MyAAE1Bar7bBg889.jpg");

System.out.println( i==0 ? "Deleted successfully" : "Delete failed: "+i);

} catch (Exception e) {

e.printStackTrace();

}

}

}
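The saveFile helper in the test class manages the output stream by hand. On Java 7 or later the same job can be done more compactly with java.nio.file, as in the sketch below. It is an alternative under that assumption, not the original author's code; the class `SaveBytes` is hypothetical, and unlike saveFile it also creates the target directory and lets IOException propagate to the caller.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;

/** Writes downloaded bytes to dir/fileName, creating the directory if needed. */
final class SaveBytes {
    static Path save(byte[] data, String dir, String fileName) throws IOException {
        Path target = Paths.get(dir, fileName);
        Path parent = target.getParent();
        if (parent != null) {
            Files.createDirectories(parent); // no-op when the directory already exists
        }
        // Creates the file, or truncates it if it already exists.
        return Files.write(target, data);
    }

    public static void main(String[] args) throws IOException {
        Path tmp = Files.createTempDirectory("fdfs-download");
        Path written = save("This is a test file.".getBytes("UTF-8"), tmp.toString(), "test.txt");
        System.out.println("wrote " + Files.size(written) + " bytes to " + written);
    }
}
```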