MapReduce Serialization and Partitioning Lab

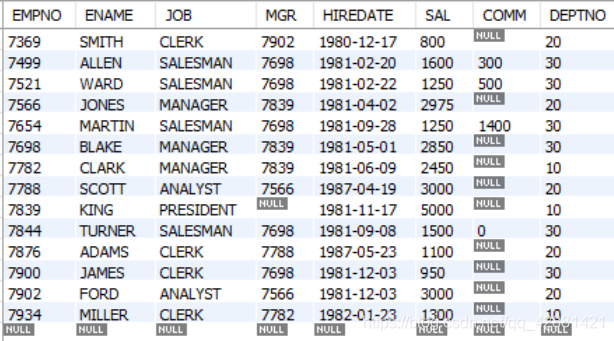

There is an employee table, emp.csv, with the contents shown below.

SAL (the sixth column) is the employee's salary.

7369,SMITH,CLERK,7902,1980/12/17,800,,20

7499,ALLEN,SALESMAN,7698,1981/2/20,1600,300,30

7521,WARD,SALESMAN,7698,1981/2/22,1250,500,30

7566,JONES,MANAGER,7839,1981/4/2,2975,,20

7654,MARTIN,SALESMAN,7698,1981/9/28,1250,1400,30

7698,BLAKE,MANAGER,7839,1981/5/1,2850,,30

7782,CLARK,MANAGER,7839,1981/6/9,2450,,10

7788,SCOTT,ANALYST,7566,1987/4/19,3000,,20

7839,KING,PRESIDENT,,1981/11/17,5000,,10

7844,TURNER,SALESMAN,7698,1981/9/8,1500,0,30

7876,ADAMS,CLERK,7788,1987/5/23,1100,,20

7900,JAMES,CLERK,7698,1981/12/3,950,,30

7902,FORD,ANALYST,7566,1981/12/3,3000,,20

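7934,MILLER,CLERK,7782,1982/1/23,1300,,10

Based on the emp.csv table above, assume:

Salary < 1500 is low salary,

Salary >= 1500 and salary < 3000 is medium salary,

Salary >= 3000 is high salary.

Problem: write a program that partitions the employee records into low, medium, and high salary and writes them to three output files.

Requirement: store each employee's information in a separate class that implements Hadoop serialization.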
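The tier rule can be checked against the sample data before any MapReduce code is written. The following is a minimal sketch in plain Java, not part of the lab's reference code; the class name `SalaryTierDemo` and the helpers `tierOf` and `countTiers` are hypothetical names chosen for illustration. The salary array is the SAL column of the 14 rows listed above.

```java
import java.util.LinkedHashMap;
import java.util.Map;

public class SalaryTierDemo {

    // Tier boundaries taken from the assumptions stated in the lab.
    static String tierOf(int sal) {
        if (sal < 1500) {
            return "low";
        } else if (sal < 3000) {
            return "mid";
        } else {
            return "high";
        }
    }

    // Counts how many salaries fall into each tier, in low/mid/high order.
    static Map<String, Integer> countTiers(int[] salaries) {
        Map<String, Integer> counts = new LinkedHashMap<>();
        counts.put("low", 0);
        counts.put("mid", 0);
        counts.put("high", 0);
        for (int sal : salaries) {
            counts.merge(tierOf(sal), 1, Integer::sum);
        }
        return counts;
    }

    public static void main(String[] args) {
        // SAL column of emp.csv, in file order (SMITH ... MILLER).
        int[] salaries = {800, 1600, 1250, 2975, 1250, 2850, 2450,
                          3000, 5000, 1500, 1100, 950, 3000, 1300};
        System.out.println(countTiers(salaries)); // prints {low=6, mid=5, high=3}
    }
}
```

These counts give a simple sanity check for the job: the three output files together should hold 14 records, split 6, 5, and 3.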

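This lab builds on the analysis in Case 4:

Based on that analysis, five classes are needed in total.

Create a new Maven project, configure pom.xml (see Case 2), and create the five classes.

Reference code: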

Employee.java

package com.myPatition2;

import java.io.DataInput;

import java.io.DataOutput;

import java.io.IOException;

import org.apache.hadoop.io.Writable;

//Define the Employee class, which implements Hadoop's serialization interface (Writable)

public class Employee implements Writable{

//Field names: EMPNO, ENAME, JOB, MGR, HIREDATE, SAL, COMM, DEPTNO

//Data types: Int, Char, Char, Int, Date, Int, Int, Int

//Sample row: 7654, MARTIN, SALESMAN, 7698, 1981/9/28, 1250, 1400, 30

//The member variables below follow from this layout (HIREDATE is kept as a String)

private int empno;

private String ename;

private String job;

private int mgr;

private String hiredate;

private int sal;

private int comm;//commission (bonus)

private int deptno;

@Override

public String toString() {

// return "Employee [empno=" + empno + ", ename=" + ename + ", sal=" + sal + ", deptno=" + deptno + "]";

return empno+","+ename+","+job+","+mgr+","+hiredate+","+sal+","+comm+","+deptno;

}

//Serialization: converts a Java object into a byte stream that can be sent across machines

public void write(DataOutput out) throws IOException {

out.writeInt(this.empno);

out.writeUTF(this.ename);

out.writeUTF(this.job);

out.writeInt(this.mgr);

out.writeUTF(this.hiredate);

out.writeInt(this.sal);

out.writeInt(this.comm);

out.writeInt(this.deptno);

}

//Deserialization: rebuilds the Java object from the byte stream; fields must be read in the same order write() wrote them

public void readFields(DataInput in) throws IOException {

this.empno = in.readInt();

this.ename = in.readUTF();

this.job = in.readUTF();

this.mgr = in.readInt();

this.hiredate = in.readUTF();

this.sal = in.readInt();

this.comm = in.readInt();

this.deptno = in.readInt();

}

//Other classes access the fields through getters and setters (in Eclipse: Source --> Generate Getters and Setters)

public int getEmpno() {

return empno;

}

public void setEmpno(int empno) {

this.empno = empno;

}

public String getEname() {

return ename;

}

public void setEname(String ename) {

this.ename = ename;

}

public String getJob() {

return job;

}

public void setJob(String job) {

this.job = job;

}

public int getMgr() {

return mgr;

}

public void setMgr(int mgr) {

this.mgr = mgr;

}

public String getHiredate() {

return hiredate;

}

public void setHiredate(String hiredate) {

this.hiredate = hiredate;

}

public int getSal() {

return sal;

}

public void setSal(int sal) {

this.sal = sal;

}

public int getComm() {

return comm;

}

public void setComm(int comm) {

this.comm = comm;

}

public int getDeptno() {

return deptno;

}

public void setDeptno(int deptno) {

this.deptno = deptno;

}

}

Note: the Employee class must override toString() so that it produces the output line the Reducer writes.
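The write()/readFields() pair above only works if both methods handle the fields in the same order. The following is a minimal sketch in plain Java that illustrates the round trip without a Hadoop dependency. `MiniEmployee` is a hypothetical three-field stand-in for Employee, and `roundTrip` is a hypothetical helper; it relies only on the fact that `java.io.DataOutputStream` and `java.io.DataInputStream` implement the same `DataOutput` and `DataInput` interfaces that the Writable methods receive.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInput;
import java.io.DataInputStream;
import java.io.DataOutput;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;

public class WritableRoundTripDemo {

    // Hypothetical stand-in for Employee, reduced to three fields.
    static class MiniEmployee {
        int empno;
        String ename;
        int sal;

        // Same shape as Writable.write: fields go out in a fixed order.
        void write(DataOutput out) throws IOException {
            out.writeInt(empno);
            out.writeUTF(ename);
            out.writeInt(sal);
        }

        // Same shape as Writable.readFields: fields come back in that order.
        void readFields(DataInput in) throws IOException {
            empno = in.readInt();
            ename = in.readUTF();
            sal = in.readInt();
        }

        @Override
        public String toString() {
            return empno + "," + ename + "," + sal;
        }
    }

    // Serializes src to a byte array and deserializes it into a fresh object.
    static MiniEmployee roundTrip(MiniEmployee src) {
        try {
            ByteArrayOutputStream bytes = new ByteArrayOutputStream();
            try (DataOutputStream out = new DataOutputStream(bytes)) {
                src.write(out);
            }
            MiniEmployee copy = new MiniEmployee();
            try (DataInputStream in = new DataInputStream(
                    new ByteArrayInputStream(bytes.toByteArray()))) {
                copy.readFields(in);
            }
            return copy;
        } catch (IOException ex) {
            throw new UncheckedIOException(ex);
        }
    }

    public static void main(String[] args) {
        MiniEmployee e = new MiniEmployee();
        e.empno = 7654;
        e.ename = "MARTIN";
        e.sal = 1250;
        System.out.println(roundTrip(e)); // prints 7654,MARTIN,1250
    }
}
```

One detail worth keeping in mind for the real Employee class: `writeUTF` throws a `NullPointerException` when given a null String, so every String field should be set before the object is written.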

SalaryTotalMapper.java

package com.myPatition2;

import java.io.IOException;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

public class SalaryTotalMapper extends Mapper< LongWritable, Text, NullWritable, Employee> {

@Override

protected void map(LongWritable k1, Text v1,

Context context)

throws IOException, InterruptedException {

//Sample input line: 7499,ALLEN,SALESMAN,7698,1981/2/20,1600,300,30

String data = v1.toString();

String[] words = data.split(",");

//Create the employee object

Employee emp = new Employee();

//Set the employee's fields

emp.setEmpno(Integer.parseInt(words[0]));

emp.setEname(words[1]);

emp.setJob(words[2]);

try {

emp.setMgr(Integer.parseInt(words[3]));//MGR may be empty, so wrap the parse in try...catch; mgr stays 0 in that case

} catch (NumberFormatException ex) {

ex.printStackTrace();

}

emp.setHiredate(words[4]);

emp.setSal(Integer.parseInt(words[5]));

try {

emp.setComm(Integer.parseInt(words[6]));//COMM may be empty; comm stays 0 in that case

} catch (NumberFormatException ex) {

ex.printStackTrace();

}

emp.setDeptno(Integer.parseInt(words[7]));

//k2 is NullWritable: no key is needed, because the partitioner decides by the salary stored in v2

NullWritable k2 = NullWritable.get();

//v2 is the whole Employee object

Employee v2 = emp;

//Emit k2, v2

context.write(k2, v2);

}

}
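The mapper above handles the empty MGR and COMM fields by catching NumberFormatException and printing a stack trace for every such row. One possible alternative is to parse with an explicit default, which keeps the task logs quiet. The following is a minimal sketch in plain Java; `parseIntOrDefault` is a hypothetical helper, not something the reference code or Hadoop provides.

```java
public class OptionalIntFieldDemo {

    // Hypothetical helper: returns fallback when the field is empty or not a valid integer.
    static int parseIntOrDefault(String field, int fallback) {
        if (field == null || field.trim().isEmpty()) {
            return fallback;
        }
        try {
            return Integer.parseInt(field.trim());
        } catch (NumberFormatException ex) {
            return fallback;
        }
    }

    public static void main(String[] args) {
        // KING's row from emp.csv: both MGR (index 3) and COMM (index 6) are empty.
        String line = "7839,KING,PRESIDENT,,1981/11/17,5000,,10";
        String[] words = line.split(",");

        System.out.println(words.length);                    // prints 8
        System.out.println(parseIntOrDefault(words[3], 0));  // prints 0 (empty MGR)
        System.out.println(parseIntOrDefault(words[5], 0));  // prints 5000
        System.out.println(parseIntOrDefault(words[6], 0));  // prints 0 (empty COMM)
    }
}
```

In the mapper this would look like `emp.setMgr(parseIntOrDefault(words[3], 0))`. Note that `String.split(",")` drops trailing empty strings; that is harmless here because DEPTNO, the last column, is always filled in, but a file whose last column can be empty would need `split(",", -1)` instead.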

SalaryTotalReducer.java

package com.myPatition2;

import java.io.IOException;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

public class SalaryTotalReducer extends Reducer<NullWritable,Employee,NullWritable,Text> {

@Override

protected void reduce(NullWritable k3, Iterable<Employee> v3,

Context context) throws IOException, InterruptedException {

String line=null;

for (Employee v : v3) {

line = v.toString();

context.write(k3, new Text(line));

}

}

}

SalaryTotalMain.java

package com.myPatition2;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class SalaryTotalMain {

public static void main(String[] args) throws Exception {

//1. Create the job and set its entry point (the main class)

Job job = Job.getInstance();

job.setJarByClass(SalaryTotalMain.class);

//2. Set the job's mapper and its output types <k2, v2>

job.setMapperClass(SalaryTotalMapper.class);

job.setMapOutputKeyClass(NullWritable.class);

job.setMapOutputValueClass(Employee.class);

//This part is new compared with the earlier case:

//Set the class that implements the job's partitioning rule

job.setPartitionerClass(SalaryTotalPartitioner.class);

//Set the number of partitions (one reduce task per partition)

job.setNumReduceTasks(3);

//3. Set the job's reducer and its output types <k4, v4>

job.setReducerClass(SalaryTotalReducer.class);

job.setOutputKeyClass(NullWritable.class);

job.setOutputValueClass(Text.class);

//4. Set the job's input and output paths

FileInputFormat.setInputPaths(job, new Path(args[0]));

FileOutputFormat.setOutputPath(job, new Path(args[1]));

//5. Run the job

job.waitForCompletion(true);

}

}

SalaryTotalPartitioner.java

package com.myPatition2;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.mapreduce.Partitioner;

// map-outputs:k2,v2-->NullWritable, Employee

public class SalaryTotalPartitioner extends Partitioner<NullWritable, Employee>{

@Override

public int getPartition(NullWritable k2, Employee v2, int numPatition) {

//Partitioning rule: each salary tier (low, medium, high) goes to its own partition

if(v2.getSal() < 1500) {

//Low salary: goes to partition 1

return 1%numPatition;// 1%3=1

}else if(v2.getSal() >=1500 && v2.getSal() < 3000){

//Medium salary: goes to partition 2

return 2%numPatition;// 2%3=2

}else {

//High salary: 3 % numPatition, which is partition 0 when there are 3 reduce tasks

return 3%numPatition;// 3%3=0

}

}

}
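Because getPartition takes each tier number modulo the number of reduce tasks, the tier-to-file mapping depends on the value passed to setNumReduceTasks. The following is a minimal sketch in plain Java that reproduces the same arithmetic without Hadoop types. `partitionFor` and `partFileName` are hypothetical helpers, and the file names assume the default `part-r-NNNNN` naming of reduce output files.

```java
public class PartitionIndexDemo {

    // Same arithmetic as SalaryTotalPartitioner.getPartition, using a plain int salary.
    static int partitionFor(int sal, int numPartitions) {
        if (sal < 1500) {
            return 1 % numPartitions;   // low salary
        } else if (sal < 3000) {
            return 2 % numPartitions;   // medium salary
        } else {
            return 3 % numPartitions;   // high salary
        }
    }

    // Default name of the reduce output file for a given partition index (assumed naming).
    static String partFileName(int partition) {
        return String.format("part-r-%05d", partition);
    }

    public static void main(String[] args) {
        int[] sampleSalaries = {800, 1600, 5000}; // SMITH, ALLEN, KING
        for (int reducers : new int[] {3, 2, 1}) {
            for (int sal : sampleSalaries) {
                int p = partitionFor(sal, reducers);
                System.out.println(reducers + " reducers, sal=" + sal
                        + " -> " + partFileName(p));
            }
        }
    }
}
```

With three reduce tasks, low salaries land in part-r-00001, medium salaries in part-r-00002, and high salaries in part-r-00000. With two reduce tasks the low and high tiers would share part-r-00001, which is why the driver sets the count to exactly 3.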

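After writing the code, package it as a jar and submit it to Hadoop for execution.

Inspect the output directory: besides _SUCCESS, there are three output files.

The contents of these files confirm that the employee records are stored by salary tier, which shows the program works correctly.

Done! Enjoy it!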