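The name "inverted index" is easy to misread as taking an A-Z ordering and flipping it to Z-A, but that is not what it means.

Normally we start from a file and look up its contents. An inverted index works in the opposite direction: it starts from the contents and leads back to the documents. In other words, given some words, it tells us which files they appear in.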
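To make the idea concrete before bringing in Hadoop, here is a minimal in-memory sketch in plain Java. The class name `InvertedIndexSketch`, the `build` method, and the sample file names are all made up for illustration and are not part of the MapReduce code in this post.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.Set;
import java.util.TreeMap;
import java.util.TreeSet;

// Hypothetical helper, for illustration only.
class InvertedIndexSketch {

    // Maps each word to the sorted set of file names that contain it.
    static Map<String, Set<String>> build(Map<String, String> docs) {
        Map<String, Set<String>> index = new TreeMap<>();
        for (Map.Entry<String, String> doc : docs.entrySet()) {
            for (String word : doc.getValue().split("\\s+")) {
                if (word.isEmpty()) {
                    continue; // leading whitespace yields an empty token
                }
                index.computeIfAbsent(word, w -> new TreeSet<>()).add(doc.getKey());
            }
        }
        return index;
    }

    public static void main(String[] args) {
        Map<String, String> docs = new LinkedHashMap<>();
        docs.put("a.txt", "hello world"); // file name -> contents (sample data)
        docs.put("b.txt", "hello hadoop");
        // prints {hadoop=[b.txt], hello=[a.txt, b.txt], world=[a.txt]}
        System.out.println(build(docs));
    }
}
```

The forward direction is the `docs` map (file to contents); the inverted direction is the returned map (word to files).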

With that in mind, the rest is straightforward:

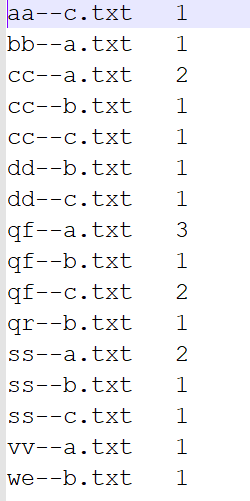

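Prepare some input data.

Next we process the data with two MapReduce jobs: the first counts how often each word occurs in each file, and the second groups those counts by word.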
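The data flow of the two passes can be sketched without Hadoop. The following plain-Java simulation is only an approximation under stated assumptions: `TwoPassSketch`, `passOne`, and `passTwo` are hypothetical names, the sample files are invented, and sorted maps stand in for the shuffle and sort that MapReduce performs between map and reduce. It does reuse the `word--file` key and the `file-->count` output format from the jobs in this post.

```java
import java.util.Map;
import java.util.TreeMap;

// Hypothetical single-process simulation of the two jobs, for illustration only.
class TwoPassSketch {

    // Pass 1: count occurrences under a composite "word--file" key.
    static Map<String, Integer> passOne(Map<String, String> files) {
        Map<String, Integer> counts = new TreeMap<>();
        for (Map.Entry<String, String> file : files.entrySet()) {
            for (String word : file.getValue().split("\\s+")) {
                if (!word.isEmpty()) {
                    counts.merge(word + "--" + file.getKey(), 1, Integer::sum);
                }
            }
        }
        return counts;
    }

    // Pass 2: split the composite key and regroup by word,
    // collecting one "file-->count" entry per file.
    static Map<String, String> passTwo(Map<String, Integer> counts) {
        Map<String, String> index = new TreeMap<>();
        for (Map.Entry<String, Integer> e : counts.entrySet()) {
            String[] parts = e.getKey().split("--", 2);
            String posting = parts[1] + "-->" + e.getValue();
            index.merge(parts[0], posting, (a, b) -> a + " " + b);
        }
        return index;
    }

    public static void main(String[] args) {
        Map<String, String> files = new TreeMap<>();
        files.put("a.txt", "hello world hello"); // sample data
        files.put("b.txt", "hello hadoop");
        // prints {hadoop=b.txt-->1, hello=a.txt-->2 b.txt-->1, world=a.txt-->1}
        System.out.println(passTwo(passOne(files)));
    }
}
```

Two passes are needed because the first must aggregate by (word, file) pair, while the second must aggregate by word alone. A single reduce step can only group on one key.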

First pass:

```java
package com.invalid;

import java.io.IOException;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.input.FileSplit;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class InvalidDriver {

    public static void main(String[] args) throws Exception {
        // Create the configuration and the job
        Configuration conf = new Configuration();
        Job job = Job.getInstance(conf);

        // Set the jar to run
        job.setJarByClass(InvalidDriver.class);

        // Set the mapper and reducer
        job.setMapperClass(InvalidMapper.class);
        job.setReducerClass(InvalidReducer.class);

        // Set the map output types
        job.setMapOutputKeyClass(Text.class);
        job.setMapOutputValueClass(IntWritable.class);

        // Set the reduce output types
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(IntWritable.class);

        // Set the input and output paths
        FileInputFormat.setInputPaths(job, new Path(args[0]));
        FileOutputFormat.setOutputPath(job, new Path(args[1]));

        // Run the job and print whether it succeeded
        boolean result = job.waitForCompletion(true);
        System.out.println(result);
    }
}

class InvalidMapper extends Mapper<LongWritable, Text, Text, IntWritable> {

    String name;
    // Reused for every record to avoid allocating new objects per word
    Text k = new Text();
    IntWritable v = new IntWritable(1);

    // setup() runs once per map task; read the file name of the current split here
    @Override
    protected void setup(Context context) throws IOException, InterruptedException {
        FileSplit split = (FileSplit) context.getInputSplit();
        name = split.getPath().getName();
    }

    @Override
    protected void map(LongWritable key, Text value, Context context)
            throws IOException, InterruptedException {
        String[] split = value.toString().split(" ");
        for (String word : split) {
            if (word.isEmpty()) {
                continue; // consecutive spaces produce empty tokens
            }
            // Emit ("word--fileName", 1)
            k.set(word + "--" + name);
            context.write(k, v);
        }
    }
}

class InvalidReducer extends Reducer<Text, IntWritable, Text, IntWritable> {

    @Override
    protected void reduce(Text key, Iterable<IntWritable> values, Context context)
            throws IOException, InterruptedException {
        // Sum the occurrences of one word in one file
        int count = 0;
        for (IntWritable value : values) {
            count += value.get();
        }
        context.write(key, new IntWritable(count));
    }
}
```

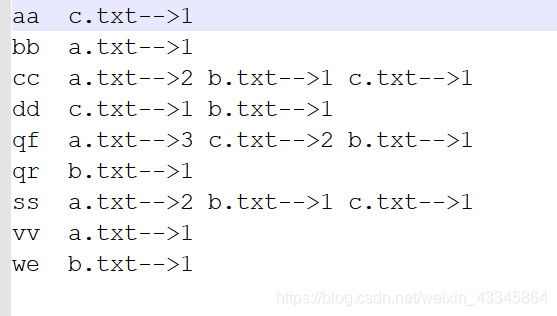

Result of the first pass.

Second pass:

```java
package com.invalid;

import java.io.IOException;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class InvalidDriver2 {

    public static void main(String[] args) throws Exception {
        Configuration conf = new Configuration();
        Job job = Job.getInstance(conf);

        job.setJarByClass(InvalidDriver2.class);

        job.setMapperClass(InvalidMapper2.class);
        job.setReducerClass(InvalidReducer2.class);

        job.setMapOutputKeyClass(Text.class);
        job.setMapOutputValueClass(Text.class);

        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(Text.class);

        // args[0] is the output directory of the first job
        FileInputFormat.setInputPaths(job, new Path(args[0]));
        FileOutputFormat.setOutputPath(job, new Path(args[1]));

        boolean result = job.waitForCompletion(true);
        System.out.println(result);
    }
}

class InvalidMapper2 extends Mapper<LongWritable, Text, Text, Text> {

    @Override
    protected void map(LongWritable key, Text value, Context context)
            throws IOException, InterruptedException {
        // Each input line looks like "word--fileName<TAB>count"
        String[] split = value.toString().split("--", 2);
        if (split.length < 2) {
            return; // skip lines that do not have the expected format
        }
        String keys = split[0];   // the word
        String values = split[1]; // "fileName<TAB>count"
        context.write(new Text(keys), new Text(values));
    }
}

class InvalidReducer2 extends Reducer<Text, Text, Text, Text> {

    @Override
    protected void reduce(Text key, Iterable<Text> values, Context context)
            throws IOException, InterruptedException {
        // Join all "fileName-->count" entries for one word, separated by spaces
        StringBuilder sb = new StringBuilder();
        for (Text string : values) {
            sb.append(string.toString().replace("\t", "-->")).append(" ");
        }
        context.write(key, new Text(sb.toString()));
    }
}
```

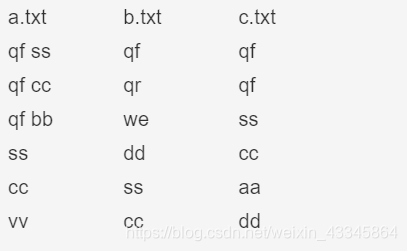

Result of the second pass.
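Once the index exists, a consumer has to read it back. As a rough sketch, the snippet below parses one line of the second job's output into a map from file name to count. It assumes the default text output layout (key and value separated by a tab) and the `file-->count` entries produced by the reducer above. `PostingLineSketch` and `parsePostings` are hypothetical names, and the sample line is invented.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical reader for one line of the final index, for illustration only.
class PostingLineSketch {

    // Parses "word<TAB>fileA-->2 fileB-->1 " into {fileA=2, fileB=1}.
    static Map<String, Integer> parsePostings(String line) {
        Map<String, Integer> postings = new LinkedHashMap<>();
        String[] keyAndValue = line.split("\t", 2);
        if (keyAndValue.length < 2) {
            return postings; // no value part on this line
        }
        // The reducer leaves a trailing space, so trim before splitting.
        for (String entry : keyAndValue[1].trim().split(" +")) {
            int arrow = entry.lastIndexOf("-->");
            if (arrow < 0) {
                continue; // not a "file-->count" entry
            }
            String file = entry.substring(0, arrow);
            int count = Integer.parseInt(entry.substring(arrow + 3));
            postings.put(file, count);
        }
        return postings;
    }

    public static void main(String[] args) {
        // prints {a.txt=2, b.txt=1}
        System.out.println(parsePostings("hello\ta.txt-->2 b.txt-->1 "));
    }
}
```

This parser assumes file names contain no spaces, since the reducer uses a space to separate entries.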