Copyright notice: I am 南七小僧 (WeChat: to_my_love). I am looking for work related to artificial intelligence and welcome any exchange of ideas. https://blog.csdn.net/qq_25439417/article/details/84061836

# -*- coding: utf-8 -*-
"""
Created on Tue Nov 13 22:53:33 2018

@author: Lenovo
"""

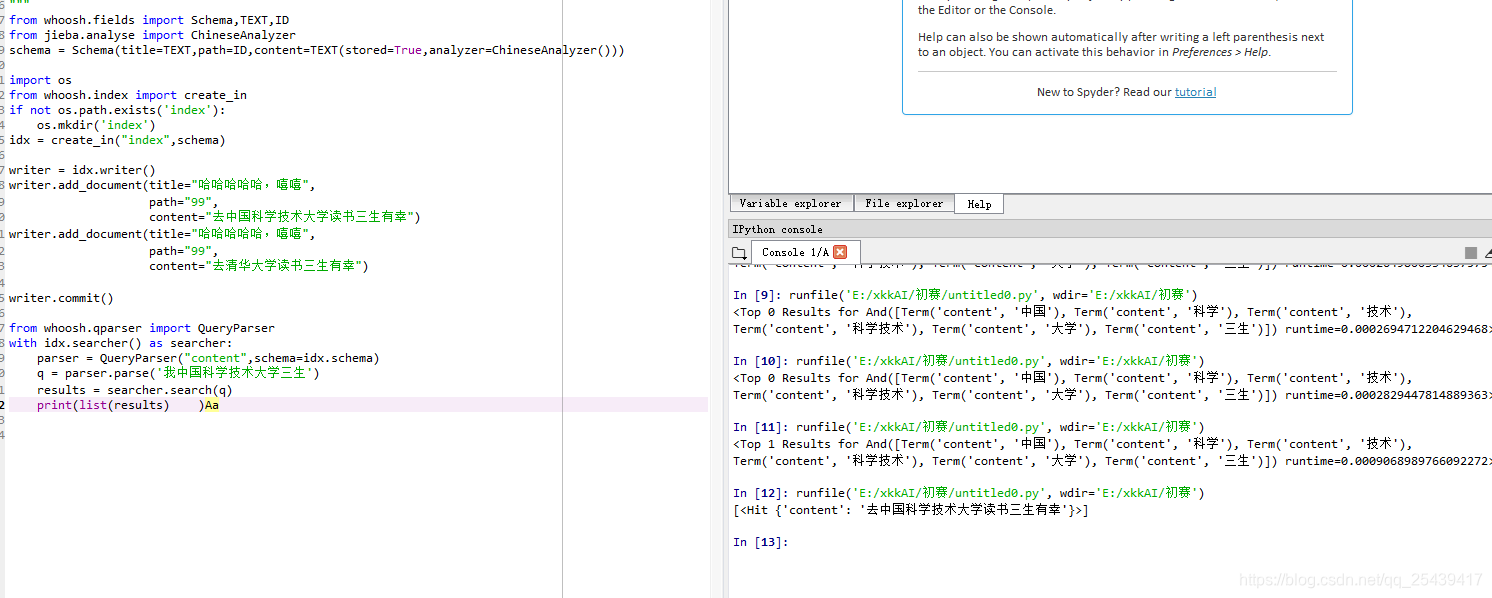

import os

from jieba.analyse import ChineseAnalyzer
from whoosh.fields import Schema, TEXT, ID
from whoosh.index import create_in
from whoosh.qparser import QueryParser

# Use jieba's ChineseAnalyzer so the content field is segmented into Chinese words
schema = Schema(title=TEXT, path=ID, content=TEXT(stored=True, analyzer=ChineseAnalyzer()))

# Create the index directory on the first run
if not os.path.exists('index'):
    os.mkdir('index')

idx = create_in("index", schema)

# Add a single document and commit it to the index
writer = idx.writer()
writer.add_document(title="哈哈哈哈哈,嘻嘻",
                    path="99",
                    content="少时诵诗书大撒所三生三世十里桃花")
writer.commit()

# Parse a query against the content field and run the search
with idx.searcher() as searcher:
    parser = QueryParser("content", schema=idx.schema)
    q = parser.parse('我是来自中国科学技术大学')
    results = searcher.search(q)
    print(results)

Next, let's take a look at the source code of ChineseAnalyzer:

# encoding=utf-8
from __future__ import unicode_literals
from whoosh.analysis import RegexAnalyzer, LowercaseFilter, StopFilter, StemFilter
from whoosh.analysis import Tokenizer, Token
from whoosh.lang.porter import stem

import jieba
import re

STOP_WORDS = frozenset(('a', 'an', 'and', 'are', 'as', 'at', 'be', 'by', 'can',
                        'for', 'from', 'have', 'if', 'in', 'is', 'it', 'may',
                        'not', 'of', 'on', 'or', 'tbd', 'that', 'the', 'this',
                        'to', 'us', 'we', 'when', 'will', 'with', 'yet',
                        'you', 'your', '的', '了', '和', '我'))

accepted_chars = re.compile(r"[\u4E00-\u9FD5]+")


class ChineseTokenizer(Tokenizer):

    def __call__(self, text, **kargs):
        words = jieba.tokenize(text, mode="search")
        token = Token()
        for (w, start_pos, stop_pos) in words:
            if not accepted_chars.match(w) and len(w) <= 1:
                continue
            token.original = token.text = w
            # print(token)
            token.pos = start_pos
            token.startchar = start_pos
            token.endchar = stop_pos
            yield token


def ChineseAnalyzer(stoplist=STOP_WORDS, minsize=1, stemfn=stem, cachesize=50000):
    return (ChineseTokenizer() | LowercaseFilter() |
            StopFilter(stoplist=stoplist, minsize=minsize) |
            StemFilter(stemfn=stemfn, ignore=None, cachesize=cachesize))
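The condition inside `ChineseTokenizer.__call__` is easy to misread: a word is skipped only when it both fails the CJK match and is at most one character long. The standalone sketch below reuses the same regular expression to show which words survive; the helper name `is_kept` is hypothetical and does not exist in jieba.

```python
import re

# Same pattern as in the analyzer source: one or more CJK unified ideographs
accepted_chars = re.compile(r"[\u4E00-\u9FD5]+")


def is_kept(word):
    """Return True when the tokenizer's filter would let this word through."""
    skipped = not accepted_chars.match(word) and len(word) <= 1
    return not skipped


if __name__ == "__main__":
    for sample in ["大学", "我", "ab", "a", ","]:
        print(repr(sample), is_kept(sample))
```

Single Chinese characters and multi-character ASCII words are kept, while single ASCII characters and single punctuation marks are dropped. A single character such as 我 is removed only later, by `StopFilter`.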

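We can see that custom stop words can be added through STOP_WORDS, but there does not seem to be a way to specify a custom dictionary. Let's modify the source to add one.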

Tracing through the code layer by layer, we find that jieba's Tokenizer accepts a dictionary when it is initialized.

# encoding=utf-8
from __future__ import unicode_literals
from whoosh.analysis import RegexAnalyzer, LowercaseFilter, StopFilter, StemFilter
from whoosh.analysis import Tokenizer, Token
from whoosh.lang.porter import stem

import jieba
import re

# Module-level jieba tokenizer. Pass the path of a custom dictionary file here;
# dictionary=None falls back to jieba's default dictionary.
tk = jieba.Tokenizer(dictionary=None)

STOP_WORDS = frozenset(('a', 'an', 'and', 'are', 'as', 'at', 'be', 'by', 'can',
                        'for', 'from', 'have', 'if', 'in', 'is', 'it', 'may',
                        'not', 'of', 'on', 'or', 'tbd', 'that', 'the', 'this',
                        'to', 'us', 'we', 'when', 'will', 'with', 'yet',
                        'you', 'your', '的', '了', '和', '我'))

accepted_chars = re.compile(r"[\u4E00-\u9FD5]+")


class ChineseTokenizer(Tokenizer):

    def __call__(self, text, **kargs):
        # Use the module-level tokenizer instead of jieba's global one
        words = tk.tokenize(text, mode="search")
        token = Token()
        for (w, start_pos, stop_pos) in words:
            if not accepted_chars.match(w) and len(w) <= 1:
                continue
            token.original = token.text = w
            # print(token)
            token.pos = start_pos
            token.startchar = start_pos
            token.endchar = stop_pos
            yield token


def ChineseAnalyzer(stoplist=STOP_WORDS, minsize=1, stemfn=stem, cachesize=50000):
    return (ChineseTokenizer() | LowercaseFilter() |
            StopFilter(stoplist=stoplist, minsize=minsize) |
            StemFilter(stemfn=stemfn, ignore=None, cachesize=cachesize))

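The Tokenizer is initialized once as a module-level global so that it stays resident in memory, which avoids the cost of loading the dictionary again on every call.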