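Hadoop 1.2 and Java 1.6 were already installed earlier.

Now I am going to install Hadoop 2.6 as well. I won't repeat the installation steps here.

This post mainly records a few important configuration parameters.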

1. .bashrc

export JAVA_HOME=/usr/local/src/jdk1.6.0_45

export SQOOP_HOME=/usr/local/src/sqoop-1.99.4-bin-hadoop200

export CLASSPATH=.:$CLASSPATH:$JAVA_HOME/lib

export HADOOP_HOME=/usr/local/src/hadoop-2.6.1

export ZOOKEEPER_HOME=/usr/local/zookeeper-3.4.5

export PATH=$PATH:$JAVA_HOME/bin:$HADOOP_HOME/bin:$ZOOKEEPER_HOME/bin:$SQOOP_HOME/bin

export CATALINA_HOME=$SQOOP_HOME/server

export LOGDIR=$SQOOP_HOME/logs

export SQOOP_SERVER_EXTRA_LIB=$SQOOP_HOME/extra

2. core-site.xml

<configuration>

<property>

<name>hadoop.tmp.dir</name>

<value>/usr/local/src/hadoop-2.6.1/temp</value>

</property>

<property>

<name>fs.default.name</name>

<value>hdfs://192.168.116.10:9000</value>

</property>

<property>

<name>io.file.buffer.size</name>

<value>131072</value>

</property>

<property>

<name>ha.zookeeper.quorum</name>

<value>master:2181,slave1:2181,slave2:2181</value>

</property>

</configuration>

3. mapred-site.xml

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<name>mapreduce.jobhistory.address</name>

<value>master:10020</value>

</property>

<property>

<name>mapreduce.jobhistory.webapp.address</name>

<value>master:19888</value>

</property>

</configuration>

4. yarn-site.xml

<configuration>

<!-- Site specific YARN configuration properties -->

<property>

<name>yarn.resourcemanager.hostname</name>

<value>master</value>

</property>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.resourcemanager.address</name>

<value>master:8032</value>

</property>

<property>

<name>yarn.resourcemanager.scheduler.address</name>

<value>master:8030</value>

</property>

<property>

<name>yarn.resourcemanager.resource-tracker.address</name>

<value>master:8031</value>

</property>

<property>

<name>yarn.resourcemanager.admin.address</name>

<value>master:8033</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.address</name>

<value>master:8088</value>

</property>

</configuration>

5. hdfs-site.xml

<configuration>

<property>

<name>dfs.namenode.secondary.http-address</name>

<value>master:9001</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>file:/usr/local/src/hadoop-2.6.1/name</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>file:/usr/local/src/hadoop-2.6.1/data</value>

</property>

<property>

<name>dfs.replication</name>

<value>2</value>

</property>

<property>

<name>dfs.webhdfs.enabled</name>

<value>true</value>

</property>

<property>

<name>dfs.permissions</name>

<value>false</value>

</property>

</configuration>

JAVA_HOME also has to be set in yarn-env.sh and hadoop-env.sh; that part is not written out here.
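With the site files in place, it can help to read a few values back before starting anything, since a misspelled property name is silently ignored. The following is a minimal sketch, not part of the original setup: `get_site_prop` is a hypothetical helper name, and it assumes each `<name>` and `<value>` tag sits on its own line, as in the listings above. It is a line-oriented lookup, not a real XML parser. The `etc/hadoop` directory is the usual location of the config files in Hadoop 2.x.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the <value> that follows a given <name>
# in a Hadoop-style *-site.xml file.
# Assumption: <name> and <value> are each on a line of their own.
get_site_prop() {
    local file="$1" key="$2"
    awk -v key="$key" '
        # index() does a literal match, so dots in the key are not wildcards
        index($0, "<name>" key "</name>") { found = 1; next }
        found && /<value>/ {
            sub(/.*<value>/, "")     # drop everything up to the opening tag
            sub(/<\/value>.*/, "")   # drop the closing tag and what follows
            print
            exit
        }
    ' "$file"
}

# Only runs when the config file is actually present on this machine.
conf_dir="${HADOOP_HOME:-/usr/local/src/hadoop-2.6.1}/etc/hadoop"
if [ -f "$conf_dir/core-site.xml" ]; then
    get_site_prop "$conf_dir/core-site.xml" fs.default.name
fi
```

On a machine where Hadoop is installed, `hdfs getconf -confKey <name>` reports the value Hadoop itself resolves, which is the more reliable check.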

II. Test HDFS by running the simplest example, wordcount:

Run vim wc.input and type in a few words:

hadoop mapreduce hive

hbase spark storm

sqoop hadoop hive

spark hadoop

live spart

Save and exit.
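Before uploading, the expected counts can be worked out locally so there is something to compare the job output against. This is a rough sketch using standard Unix tools, assuming words are separated by whitespace only; `local_wordcount` is a made-up name and has nothing to do with Hadoop itself.

```shell
#!/usr/bin/env bash
# Hypothetical helper: count words read from standard input.
# Prints "word<TAB>count", one word per line, sorted by word.
local_wordcount() {
    tr -s '[:space:]' '\n' |     # one word per line
        grep -v '^$' |           # drop empty lines
        LC_ALL=C sort |          # byte-order sort, independent of locale
        uniq -c |                # count adjacent identical words
        awk '{ print $2 "\t" $1 }'
}

# Same five lines as in wc.input above.
local_wordcount <<'EOF'
hadoop mapreduce hive
hbase spark storm
sqoop hadoop hive
spark hadoop
live spart
EOF
```

With the real file the call would be `local_wordcount < wc.input`.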

Create a directory: hdfs dfs -mkdir /test

Upload the file to HDFS: hdfs dfs -put ${HADOOP_HOME}/data/wc.input /test

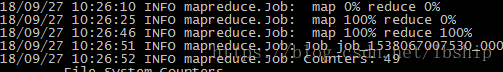

yarn jar /usr/local/src/hadoop-2.6.1/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.6.1.jar wordcount /test/wc.input /test/output
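One thing to watch when repeating this step: MapReduce refuses to start a job whose output directory already exists, so a second run fails until /test/output is removed. A small wrapper along these lines could take care of that. It is only a sketch: `rerun_wordcount` is a hypothetical name, and it deletes the old output without asking, so it is only suitable for throwaway test data.

```shell
#!/usr/bin/env bash
# Hypothetical helper: remove a previous output directory (if any),
# then run the wordcount example again.
# WARNING: the old output is deleted without confirmation.
rerun_wordcount() {
    local jar="$1" input="$2" output="$3"
    # -test -d returns 0 only when the path exists and is a directory
    if hdfs dfs -test -d "$output"; then
        hdfs dfs -rm -r "$output"
    fi
    yarn jar "$jar" wordcount "$input" "$output"
}

# Usage with the paths from this post:
# rerun_wordcount \
#     /usr/local/src/hadoop-2.6.1/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.6.1.jar \
#     /test/wc.input /test/output
```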

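List the output: hdfs dfs -ls /test/output

Two files are listed. _SUCCESS is an empty file whose presence means the job completed successfully, and each reduce task produces one file whose name starts with part-r-.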
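The same check can be scripted instead of read off the listing. The sketch below tests for the _SUCCESS marker with `hdfs dfs -test -e`; the function name `job_succeeded` is an assumption of mine, not something Hadoop provides.

```shell
#!/usr/bin/env bash
# Hypothetical helper: return 0 if the job output directory contains
# the _SUCCESS marker, non-zero otherwise.
job_succeeded() {
    local out_dir="$1"
    if ! command -v hdfs >/dev/null 2>&1; then
        echo "hdfs command not found on PATH" >&2
        return 2
    fi
    hdfs dfs -test -e "$out_dir/_SUCCESS"
}

# Usage: print all reducer outputs only when the job finished cleanly.
# The glob is quoted so that HDFS, not the local shell, expands it.
# if job_succeeded /test/output; then
#     hdfs dfs -cat '/test/output/part-r-*'
# fi
```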

View the result of the job: hdfs dfs -cat /test/output/part-r-00000

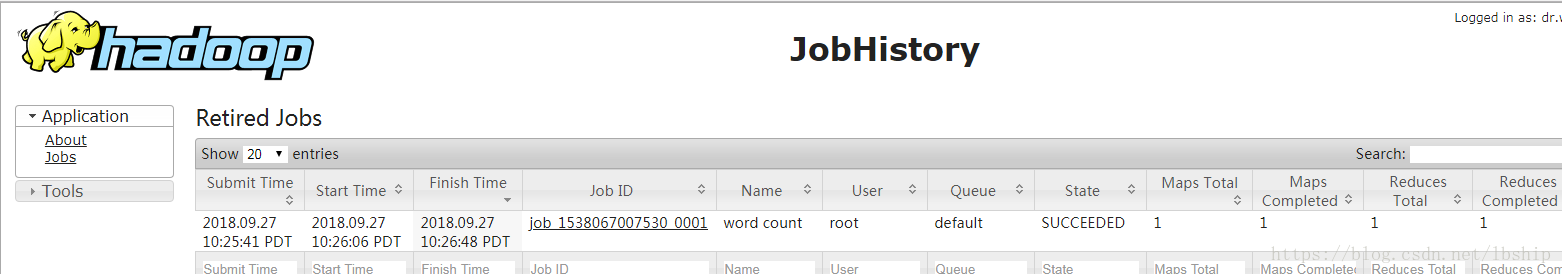

To look at the job history, open http://192.168.116.10:19888/jobhistory in a browser.

The job that was just run is listed there.