The principles section is adapted from: http://blog.51cto.com/breezey/1339402

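MFS features:

Free (GPL)

General-purpose file system; upper-layer applications can use it without modification

Capacity can be expanded online, and the architecture is highly scalable.

Simple to deploy.

Highly available; any level of file redundancy can be configured

Files deleted within a configurable period can be recovered

Provides snapshots, a feature of commercial storage from NetApp, EMC, and IBM

An implementation of the Google File System design, written in C.

Provides a web GUI monitoring interface.

Improves the efficiency of random reads and writes

Improves read/write efficiency for large numbers of small files

How it works and design architecture: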

MFS read process:

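When a client needs data, it first sends a lookup request to the master server;

The master server searches its metadata and finds the location of an available chunk server that holds the data (ip|port|chunkid);

The master server sends the chunk server's address to the client;

The client sends a data request to that chunk server;

The chunk server sends the data to the client.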
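The read steps above can be sketched as a small shell simulation. This is only an illustration under stated assumptions: `master_lookup` and `read_file` are hypothetical names, the lookup table is hard-coded from addresses that appear later in this walkthrough, and no real MooseFS protocol messages are exchanged.

```shell
#!/bin/sh
# Toy model of the MFS read path; not the real wire protocol.

# master_lookup: hypothetical stand-in for the master's metadata search.
# Prints "ip|port|chunkid" for a known path and fails for an unknown one.
master_lookup() {
    case "$1" in
        /dir1/passwd) echo "172.25.254.13|9422|9" ;;
        /dir2/fstab)  echo "172.25.254.12|9422|8" ;;
        *) return 1 ;;
    esac
}

# read_file: hypothetical client side.
# Steps 1-3: ask the master where the data lives.
# Steps 4-5: contact that chunk server (only printed here).
read_file() {
    location=$(master_lookup "$1") || { echo "no such file: $1"; return 1; }
    ip=${location%%|*}       # text before the first "|"
    rest=${location#*|}      # text after the first "|"
    port=${rest%%|*}
    chunkid=${rest#*|}
    echo "fetch chunk $chunkid from $ip:$port"
}

read_file /dir1/passwd
```

The point of the sketch is the division of labour: the master only answers "where", and the data itself travels between the client and the chunk server.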

MFS write process:

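When a client needs to write data, it first sends the file's metadata to the master server and requests a storage location (metadata such as file name|size|number of copies);

Based on that metadata, the master server has the chunk servers create new chunks;

The chunk servers report that the chunks were created;

The master server returns the chunk server address to the client (chunkIP|port|chunkid);

The client writes the data to the chunk server;

The chunk server confirms the write to the client;

The client sends a write-complete signal and related information to the master server, which updates the file's length and last-modified time.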

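MFS delete process:

When a client deletes a file, it first sends the delete request to the master;

The master locates the corresponding metadata and deletes it, then adds the chunk removal on the chunk servers to a queue for asynchronous cleanup;

The master tells the client that the delete succeeded.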
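The delete steps above separate a fast metadata update from a slow, deferred chunk cleanup. A minimal sketch of that ordering, assuming one chunk per file and a made-up "path chunkid" record format; `master_delete`, `cleanup_chunks`, and the scratch files are hypothetical and do not reflect how the master stores metadata.

```shell
#!/bin/sh
# Toy model of MFS deletion: metadata goes first, chunk cleanup is deferred.
workdir=$(mktemp -d)
meta="$workdir/metadata"        # one "path chunkid" record per line
queue="$workdir/delete_queue"   # chunk ids waiting for asynchronous cleanup
printf '%s\n' "/dir1/passwd 9" "/dir2/fstab 8" > "$meta"
: > "$queue"

# master_delete: drop the metadata record and enqueue its chunk id.
master_delete() {
    chunkid=$(awk -v p="$1" '$1 == p { print $2 }' "$meta")
    [ -n "$chunkid" ] || { echo "no such file: $1"; return 1; }
    echo "$chunkid" >> "$queue"
    awk -v p="$1" '$1 != p' "$meta" > "$meta.new" && mv "$meta.new" "$meta"
    echo "deleted $1"
}

# cleanup_chunks: what a later background pass would do on the chunk servers.
cleanup_chunks() {
    while read -r id; do
        echo "chunk $id removed from chunk server"
    done < "$queue"
    : > "$queue"
}

master_delete /dir1/passwd   # the client gets its answer at this point
cleanup_chunks               # the chunk data is only removed here
```

Because the client is answered before `cleanup_chunks` runs, a delete returns quickly even when the file occupies many chunks.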

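MFS file modification process:

When a client modifies a file's contents, it first sends the operation to the master;

The master allocates new chunks for the .swp file;

After the client closes the file, it sends a close message to the master;

The master checks whether the contents changed. If they did, it allocates new chunks for the modified file and deletes the original chunks and the .swp file's chunks;

If they did not, it simply deletes the .swp file's chunks.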

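MFS rename process:

When a client renames a file, it sends the operation to the master;

The master changes the file name directly in the metadata and returns a rename-complete message.

Lab environment (every node must be able to resolve the other nodes' hostnames):

Red Hat 7.3

westos1 172.25.254.11 mfsmaster node

westos2 172.25.254.12 chunk server node, which actually stores the data

westos3 172.25.254.13 same role as westos2

Physical host: 172.25.254.84 client

SELinux is disabled; the firewall is stopped and disabled at boot; NetworkManager is stopped and disabled at boot.

On westos1 (server1), which acts as the master node: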

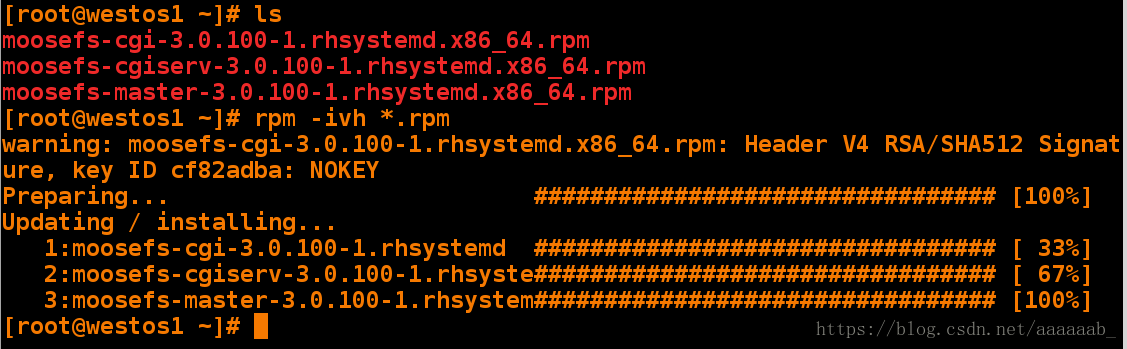

[root@westos1 ~]# ls

moosefs-cgi-3.0.100-1.rhsystemd.x86_64.rpm

moosefs-cgiserv-3.0.100-1.rhsystemd.x86_64.rpm

moosefs-master-3.0.100-1.rhsystemd.x86_64.rpm

[root@westos1 ~]# rpm -ivh *.rpm

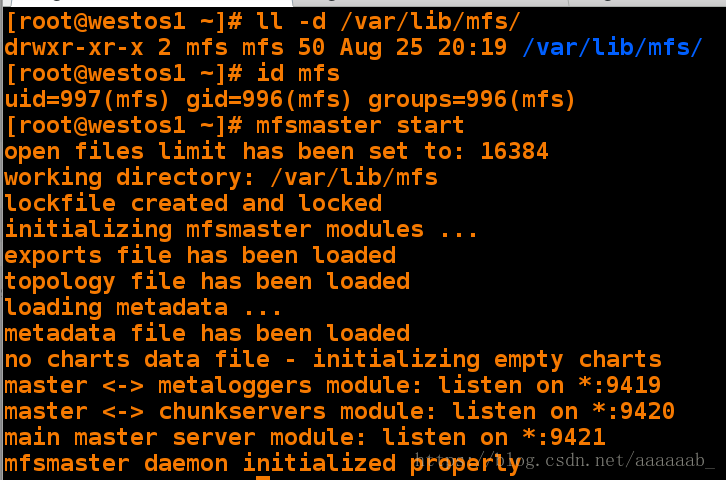

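Start the service:

As the figure below shows, the master starts without errors and opens three ports: 9419, which metaloggers connect to (default 9419); 9420, which chunk servers connect to (default 9420); and 9421, which clients connect to when mounting (default 9421).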

[root@westos1 ~]# ll -d /var/lib/mfs/

drwxr-xr-x 2 mfs mfs 50 Aug 25 20:19 /var/lib/mfs/

[root@westos1 ~]# id mfs

uid=997(mfs) gid=996(mfs) groups=996(mfs)

[root@westos1 ~]# mfsmaster start 开启服务

9420通信节点,9421 客户端对外连接端口,9419 和源数据日志结合。定期和master端同步数据

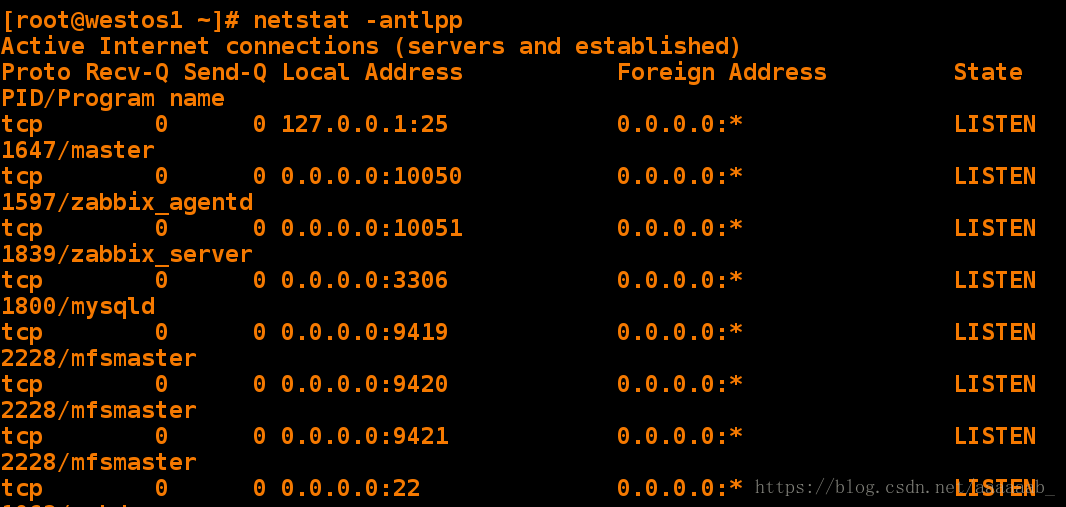

[root@westos1 ~]# netstat -antlpp 可以看到9419,9420,9421端口

Active Internet connections (servers and established)

Proto Recv-Q Send-Q Local Address Foreign Address State PID/Program name

tcp 0 0 127.0.0.1:25 0.0.0.0:* LISTEN 1647/master

tcp 0 0 0.0.0.0:10050 0.0.0.0:* LISTEN 1597/zabbix_agentd

tcp 0 0 0.0.0.0:10051 0.0.0.0:* LISTEN 1839/zabbix_server

tcp 0 0 0.0.0.0:3306 0.0.0.0:* LISTEN 1800/mysqld

tcp 0 0 0.0.0.0:9419 0.0.0.0:* LISTEN 2228/mfsmaster

tcp 0 0 0.0.0.0:9420 0.0.0.0:* LISTEN 2228/mfsmaster

tcp 0 0 0.0.0.0:9421 0.0.0.0:* LISTEN 2228/mfsmaster

tcp 0 0 0.0.0.0:22 0.0.0.0:* LISTEN 1063/sshd

tcp6 0 0 :::80 :::* LISTEN 1059/httpd

tcp6 0 0 :::22 :::* LISTEN 1063/sshd
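To check the three master ports without scanning the whole listing by eye, the output can be filtered. A minimal sketch, assuming `netstat -antlp`-style text in which the fourth column is the local address and the sixth is the state; `mfs_ports` is a hypothetical helper name and the sample lines are copied from the listing above.

```shell
#!/bin/sh
# mfs_ports: hypothetical helper that reads netstat-style text on stdin and
# prints which of the MooseFS master ports (9419, 9420, 9421) are listening.
mfs_ports() {
    awk '$6 == "LISTEN" {
             n = split($4, parts, ":")   # local address looks like "ip:port"
             port = parts[n]
             if (port == 9419 || port == 9420 || port == 9421) print port
         }' | sort -n
}

# Sample input taken from the listing above. On a live master one would pipe
# the real command instead:  netstat -antlp | mfs_ports
sample='tcp 0 0 0.0.0.0:3306 0.0.0.0:* LISTEN 1800/mysqld
tcp 0 0 0.0.0.0:9419 0.0.0.0:* LISTEN 2228/mfsmaster
tcp 0 0 0.0.0.0:9420 0.0.0.0:* LISTEN 2228/mfsmaster
tcp 0 0 0.0.0.0:9421 0.0.0.0:* LISTEN 2228/mfsmaster'

printf '%s\n' "$sample" | mfs_ports
```

If any of the three numbers is missing from the result, the master did not start fully or another process holds that port.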

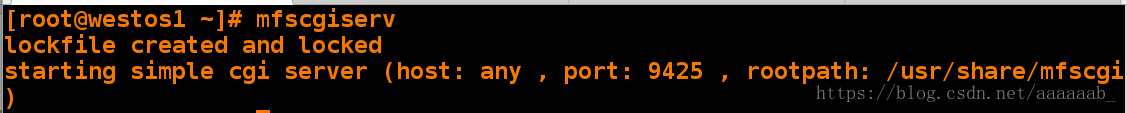

Start the CGI service:

[root@westos1 ~]# mfscgiserv

lockfile created and locked

starting simple cgi server (host: any , port: 9425 , rootpath: /usr/share/mfscgi)

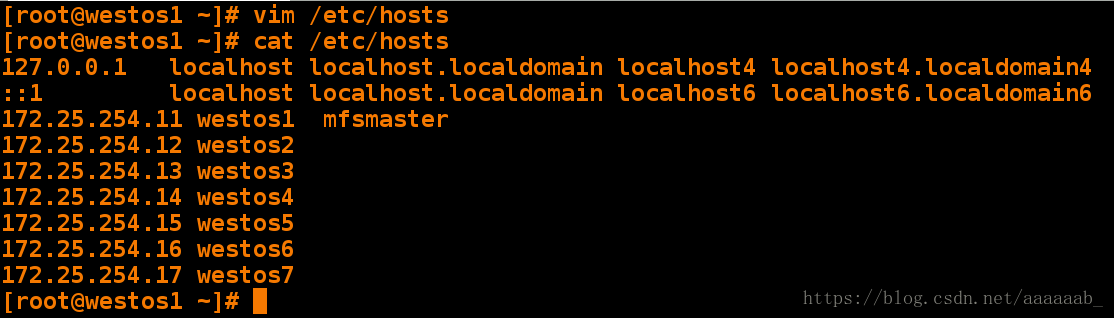

Add name resolution on westos1 (server1):

[root@westos1 ~]# vim /etc/hosts

[root@westos1 ~]# cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

172.25.254.11 westos1 mfsmaster

172.25.254.12 westos2

172.25.254.13 westos3

172.25.254.14 westos4

172.25.254.15 westos5

172.25.254.16 westos6

172.25.254.17 westos7

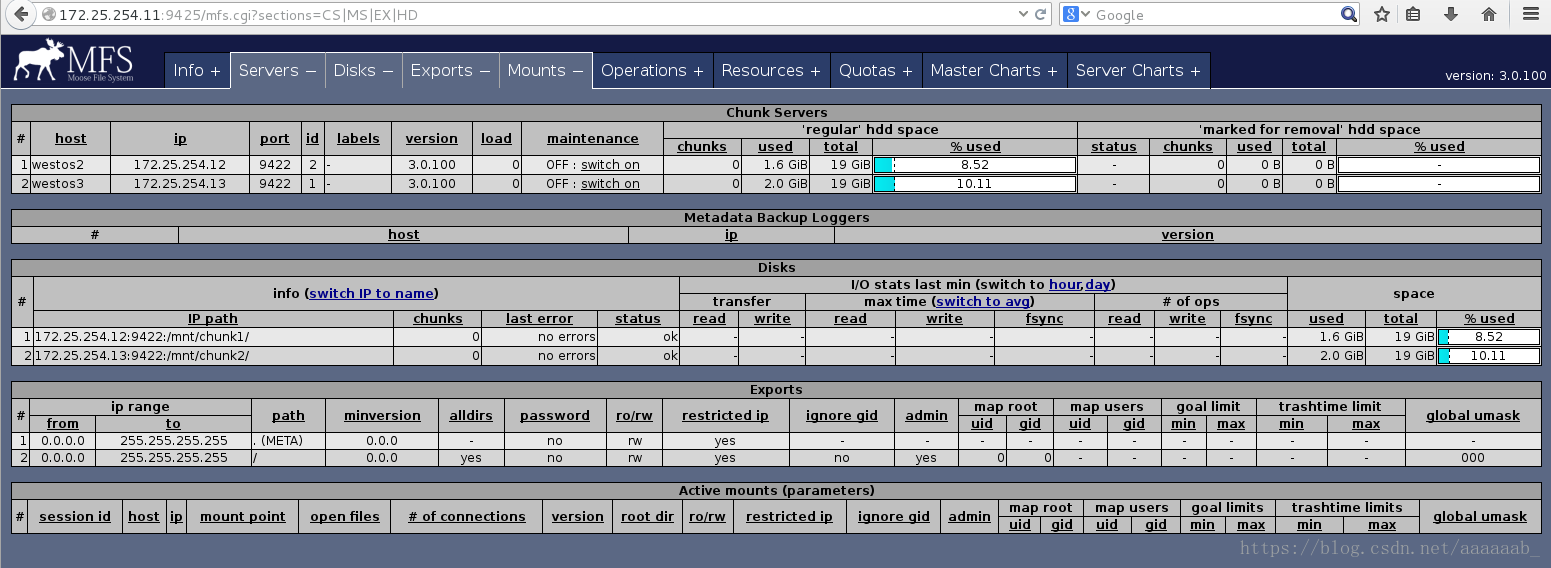

Open http://172.25.254.11:9425/mfs.cgi in a browser to see the node information.

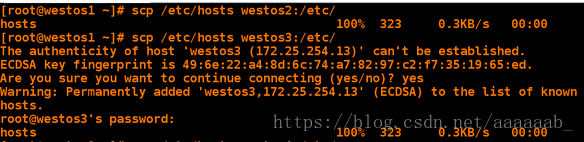

Make sure the master and the chunk server nodes all have the same name resolution:

[root@westos1 ~]# scp /etc/hosts westos2:/etc/

[root@westos1 ~]# scp /etc/hosts westos3:/etc/

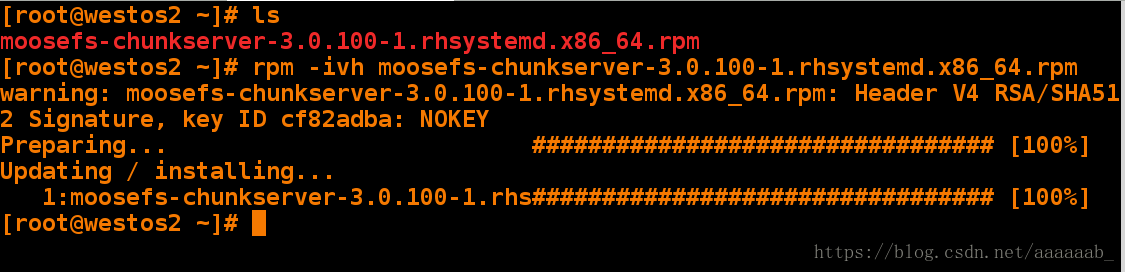

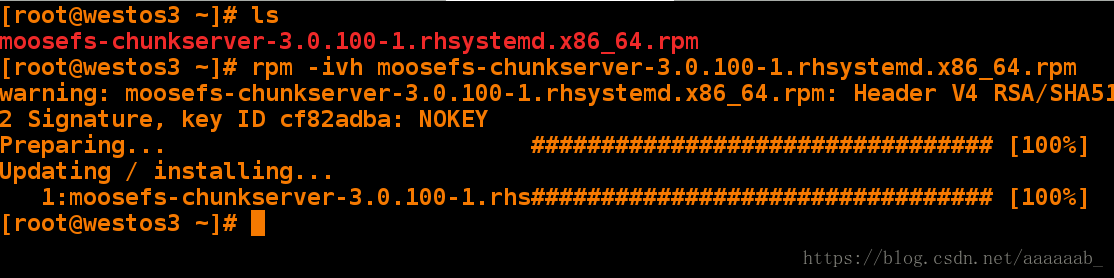
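Before moving on, it may be worth confirming that each node's hosts file maps `mfsmaster` to the master's address. A minimal sketch, assuming a hosts-format file; `hosts_lookup` is a hypothetical helper, and the sample file is a temporary copy of a few entries shown earlier rather than the real `/etc/hosts`.

```shell
#!/bin/sh
# hosts_lookup: hypothetical helper. Prints the address that a hosts-format
# file assigns to a name (first match wins) and fails if the name is absent.
hosts_lookup() {
    awk -v name="$2" '
        /^[ \t]*#/ { next }    # skip comment lines
        { for (i = 2; i <= NF; i++)
              if ($i == name) { print $1; found = 1; exit } }
        END { exit !found }' "$1"
}

# Temporary sample file mirroring entries used in this walkthrough.
sample_hosts=$(mktemp)
cat > "$sample_hosts" <<'EOF'
127.0.0.1 localhost localhost.localdomain
172.25.254.11 westos1 mfsmaster
172.25.254.12 westos2
172.25.254.13 westos3
EOF

hosts_lookup "$sample_hosts" mfsmaster
```

On a real node the same question can be asked of the system resolver with `getent hosts mfsmaster`, which also consults `/etc/hosts`.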

Install the package on the chunk server nodes:

[root@westos2 ~]# ls

moosefs-chunkserver-3.0.100-1.rhsystemd.x86_64.rpm

[root@westos2 ~]# rpm -ivh moosefs-chunkserver-3.0.100-1.rhsystemd.x86_64.rpm

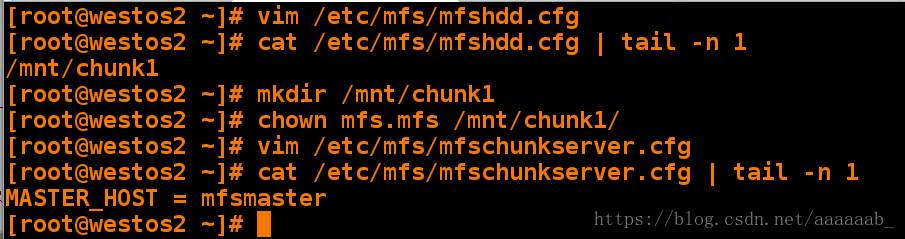

Configure the chunk server nodes:

[root@westos2 ~]# vim /etc/mfs/mfshdd.cfg

[root@westos2 ~]# cat /etc/mfs/mfshdd.cfg | tail -n 1    # line appended at the end of the file

/mnt/chunk1

[root@westos2 ~]# mkdir /mnt/chunk1

[root@westos2 ~]# chown mfs.mfs /mnt/chunk1/

[root@westos2 ~]# vim /etc/mfs/mfschunkserver.cfg 最后一行添加

[root@westos2 ~]# cat /etc/mfs/mfschunkserver.cfg | tail -n 1

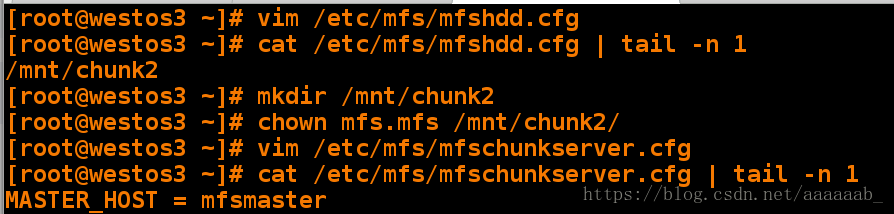

MASTER_HOST = mfsmasterroot@westos3 ~]# vim /etc/mfs/mfshdd.cfg

[root@westos3 ~]# cat /etc/mfs/mfshdd.cfg | tail -n 1

/mnt/chunk2

[root@westos3 ~]# mkdir /mnt/chunk2

[root@westos3 ~]# chown mfs.mfs /mnt/chunk2/

[root@westos3 ~]# vim /etc/mfs/mfschunkserver.cfg

[root@westos3 ~]# cat /etc/mfs/mfschunkserver.cfg | tail -n 1

MASTER_HOST = mfsmaster

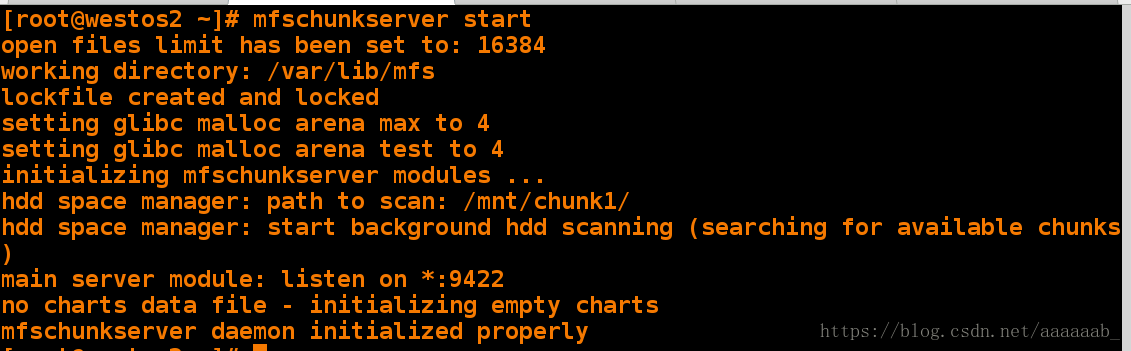
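The same two edits are made on every chunk server, so they lend themselves to a script. A minimal sketch, assuming the settings are simply appended as the last line as done above; `append_once` is a hypothetical helper, and the demo writes to a scratch directory rather than `/etc/mfs`.

```shell
#!/bin/sh
# append_once: hypothetical helper that appends a line to a file only if
# that exact line is not already present, so reruns do not duplicate it.
append_once() {
    grep -qxF -- "$2" "$1" 2>/dev/null || printf '%s\n' "$2" >> "$1"
}

# Demo against a scratch directory standing in for /etc/mfs.
cfgdir=$(mktemp -d)
append_once "$cfgdir/mfshdd.cfg" "/mnt/chunk1"
append_once "$cfgdir/mfschunkserver.cfg" "MASTER_HOST = mfsmaster"
append_once "$cfgdir/mfshdd.cfg" "/mnt/chunk1"   # second call is a no-op

cat "$cfgdir/mfshdd.cfg"
```

The storage directory itself still has to exist and be owned by the `mfs` user, as the `mkdir` and `chown` steps above show.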

Start the service on westos2 and westos3:

[root@westos2 ~]# mfschunkserver start

open files limit has been set to: 16384

working directory: /var/lib/mfs

lockfile created and locked

setting glibc malloc arena max to 4

setting glibc malloc arena test to 4

initializing mfschunkserver modules ...

hdd space manager: path to scan: /mnt/chunk1/

hdd space manager: start background hdd scanning (searching for available chunks)

main server module: listen on *:9422

no charts data file - initializing empty charts

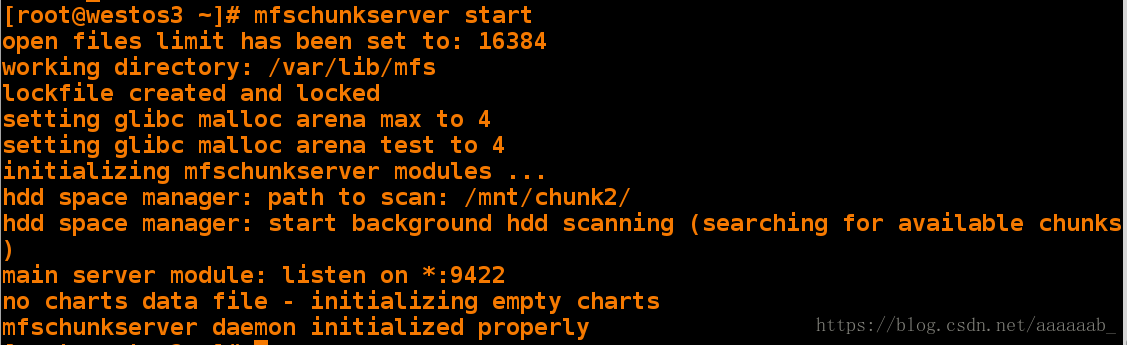

mfschunkserver daemon initialized properly[root@westos3 ~]# mfschunkserver start

open files limit has been set to: 16384

working directory: /var/lib/mfs

lockfile created and locked

setting glibc malloc arena max to 4

setting glibc malloc arena test to 4

initializing mfschunkserver modules ...

hdd space manager: path to scan: /mnt/chunk2/

hdd space manager: start background hdd scanning (searching for available chunks)

main server module: listen on *:9422

no charts data file - initializing empty charts

mfschunkserver daemon initialized properly

The chunk server nodes now show up in the browser:

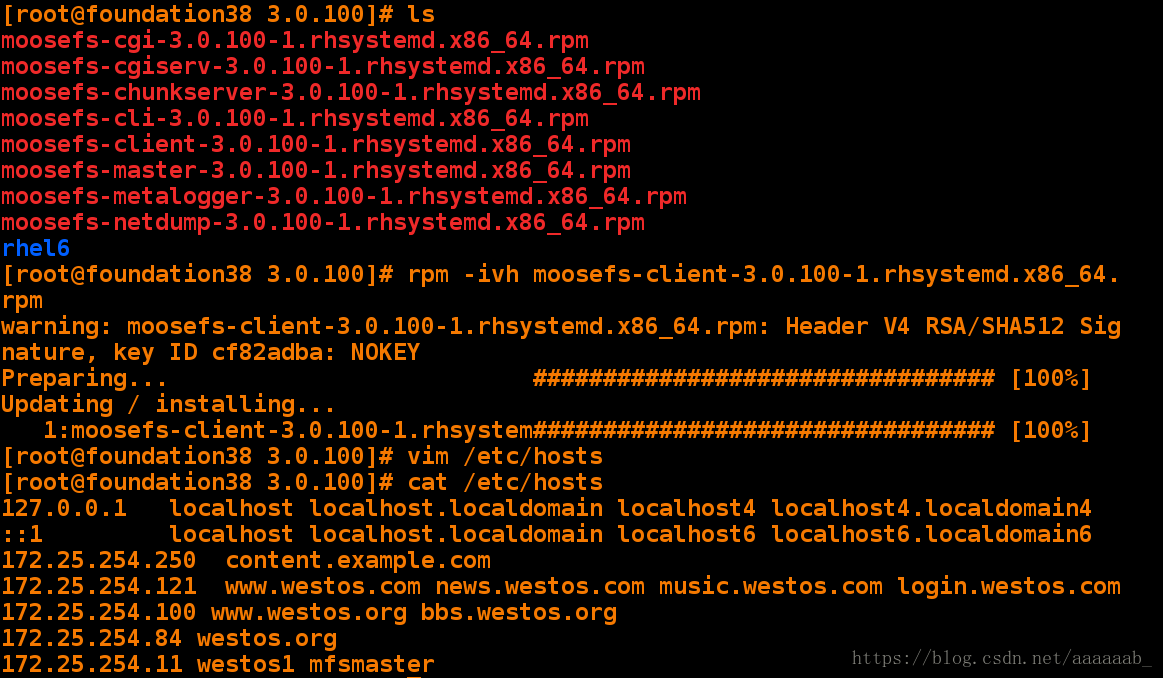

Install the client on the physical host and configure name resolution:

[root@foundation38 3.0.100]# ls

moosefs-cgi-3.0.100-1.rhsystemd.x86_64.rpm

moosefs-cgiserv-3.0.100-1.rhsystemd.x86_64.rpm

moosefs-chunkserver-3.0.100-1.rhsystemd.x86_64.rpm

moosefs-cli-3.0.100-1.rhsystemd.x86_64.rpm

moosefs-client-3.0.100-1.rhsystemd.x86_64.rpm

moosefs-master-3.0.100-1.rhsystemd.x86_64.rpm

moosefs-metalogger-3.0.100-1.rhsystemd.x86_64.rpm

moosefs-netdump-3.0.100-1.rhsystemd.x86_64.rpm

rhel6

[root@foundation38 3.0.100]# rpm -ivh moosefs-client-3.0.100-1.rhsystemd.x86_64.rpm

[root@foundation38 3.0.100]# vim /etc/hosts

[root@foundation38 3.0.100]# cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

172.25.254.250 content.example.com

172.25.254.121 www.westos.com news.westos.com music.westos.com login.westos.com

172.25.254.100 www.westos.org bbs.westos.org

172.25.254.84 westos.org

172.25.254.11 westos1 mfsmaster

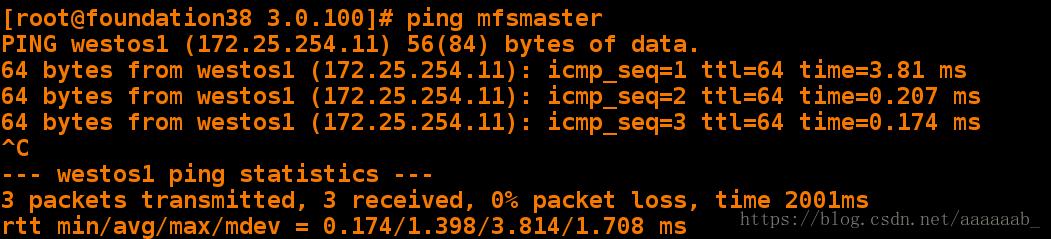

Test whether mfsmaster can be pinged:

[root@foundation38 3.0.100]# ping mfsmaster

PING westos1 (172.25.254.11) 56(84) bytes of data.

64 bytes from westos1 (172.25.254.11): icmp_seq=1 ttl=64 time=3.81 ms

64 bytes from westos1 (172.25.254.11): icmp_seq=2 ttl=64 time=0.207 ms

64 bytes from westos1 (172.25.254.11): icmp_seq=3 ttl=64 time=0.174 ms

^C

--- westos1 ping statistics ---

3 packets transmitted, 3 received, 0% packet loss, time 2001ms

rtt min/avg/max/mdev = 0.174/1.398/3.814/1.708 ms

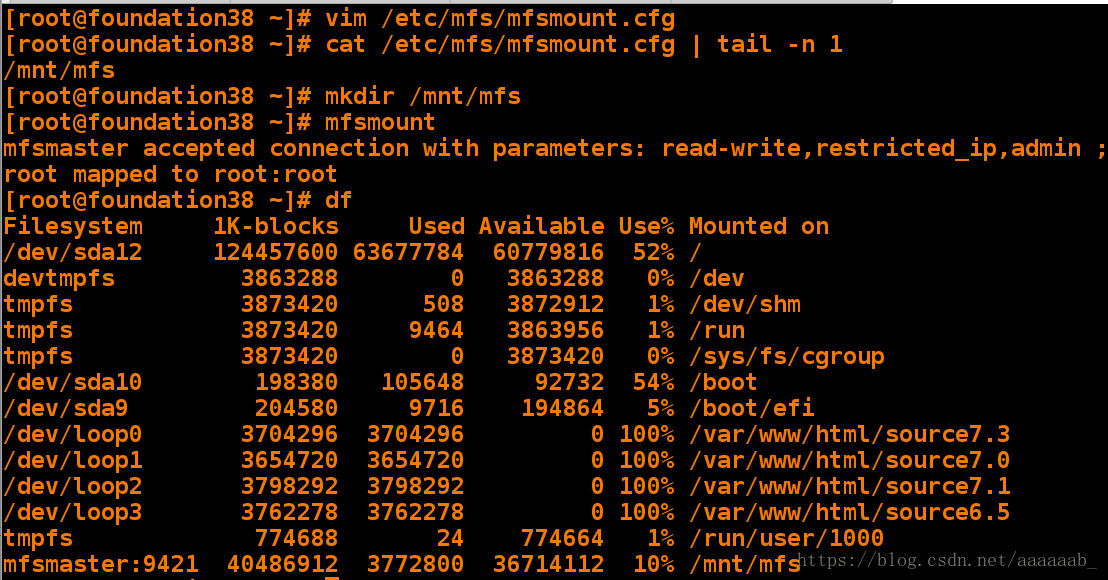

Mount MFS on the physical host:

[root@foundation38 ~]# vim /etc/mfs/mfsmount.cfg

[root@foundation38 ~]# cat /etc/mfs/mfsmount.cfg | tail -n 1

/mnt/mfs

[root@foundation38 ~]# mkdir /mnt/mfs

[root@foundation38 ~]# mfsmount

mfsmaster accepted connection with parameters: read-write,restricted_ip,admin ; root mapped to root:root

[root@foundation38 ~]# df

Filesystem 1K-blocks Used Available Use% Mounted on

/dev/sda12 124457600 63677784 60779816 52% /

devtmpfs 3863288 0 3863288 0% /dev

tmpfs 3873420 508 3872912 1% /dev/shm

tmpfs 3873420 9464 3863956 1% /run

tmpfs 3873420 0 3873420 0% /sys/fs/cgroup

/dev/sda10 198380 105648 92732 54% /boot

/dev/sda9 204580 9716 194864 5% /boot/efi

/dev/loop0 3704296 3704296 0 100% /var/www/html/source7.3

/dev/loop1 3654720 3654720 0 100% /var/www/html/source7.0

/dev/loop2 3798292 3798292 0 100% /var/www/html/source7.1

/dev/loop3 3762278 3762278 0 100% /var/www/html/source6.5

tmpfs 774688 24 774664 1% /run/user/1000

mfsmaster:9421 40486912 3772800 36714112 10% /mnt/mfs

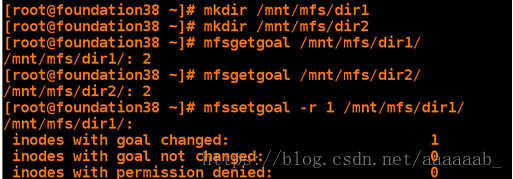

Set up storage directories and the number of copies kept for each:

[root@foundation38 ~]# mkdir /mnt/mfs/dir1

[root@foundation38 ~]# mkdir /mnt/mfs/dir2

[root@foundation38 ~]# mfsgetgoal /mnt/mfs/dir1/

/mnt/mfs/dir1/: 2

[root@foundation38 ~]# mfsgetgoal /mnt/mfs/dir2/

/mnt/mfs/dir2/: 2

[root@foundation38 ~]# mfssetgoal -r 1 /mnt/mfs/dir1/    # the default goal is 2 copies; change it to 1

/mnt/mfs/dir1/:

inodes with goal changed: 1

inodes with goal not changed: 0

inodes with permission denied: 0

[root@foundation38 ~]# mfsgetgoal /mnt/mfs/dir1/

/mnt/mfs/dir1/: 1

Copy files in for distributed storage:

[root@foundation38 ~]# cp /etc/fstab /mnt/mfs/dir2/    # fstab is stored as two copies

[root@foundation38 ~]# cp /etc/passwd /mnt/mfs/dir1/    # passwd is stored as one copy

[root@foundation38 ~]# mfsfileinfo /mnt/mfs/dir1/passwd    # view file information

/mnt/mfs/dir1/passwd:

chunk 0: 0000000000000009_00000001 / (id:9 ver:1)

copy 1: 172.25.254.13:9422 (status:VALID)    # passwd is stored on westos3

[root@foundation38 ~]# mfsfileinfo /mnt/mfs/dir2/fstab    # view file information

/mnt/mfs/dir2/fstab:

chunk 0: 0000000000000008_00000001 / (id:8 ver:1)

copy 1: 172.25.254.12:9422 (status:VALID)

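copy 2: 172.25.254.13:9422 (status:VALID)

Stop the westos2 node. The fstab file is unaffected because two copies were stored, and passwd is stored on westos3, so the information for both files can still be queried: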

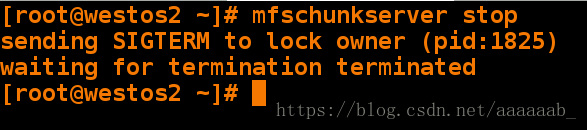

[root@westos2 ~]# mfschunkserver stop

sending SIGTERM to lock owner (pid:1825)

waiting for termination terminated

Because fstab has two copies, stopping westos2 does not affect it:

[root@foundation38 ~]# mfsfileinfo /mnt/mfs/dir1/passwd    # view file information

/mnt/mfs/dir1/passwd:

chunk 0: 0000000000000009_00000001 / (id:9 ver:1)

copy 1: 172.25.254.13:9422 (status:VALID)

[root@foundation38 ~]# mfsfileinfo /mnt/mfs/dir2/fstab    # view file information

/mnt/mfs/dir2/fstab:

chunk 0: 0000000000000008_00000001 / (id:8 ver:1)

copy 1: 172.25.254.13:9422 (status:VALID)

Restore the service on westos2:

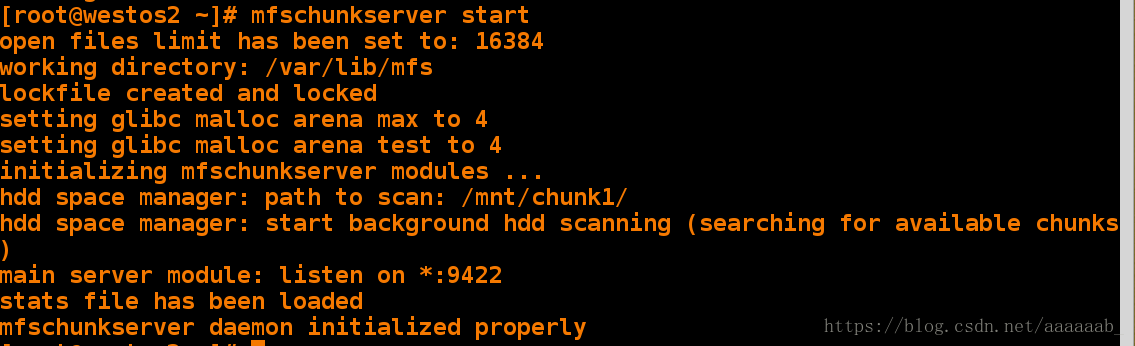

[root@westos2 ~]# mfschunkserver start

open files limit has been set to: 16384

working directory: /var/lib/mfs

lockfile created and locked

setting glibc malloc arena max to 4

setting glibc malloc arena test to 4

initializing mfschunkserver modules ...

hdd space manager: path to scan: /mnt/chunk1/

hdd space manager: start background hdd scanning (searching for available chunks)

main server module: listen on *:9422

stats file has been loaded

mfschunkserver daemon initialized properly

The client view returns to normal:

[root@foundation38 ~]# mfsfileinfo /mnt/mfs/dir1/passwd    # view file information

/mnt/mfs/dir1/passwd:

chunk 0: 0000000000000009_00000001 / (id:9 ver:1)

copy 1: 172.25.254.13:9422 (status:VALID)

[root@foundation38 ~]# mfsfileinfo /mnt/mfs/dir2/fstab    # view file information

/mnt/mfs/dir2/fstab:

chunk 0: 0000000000000008_00000001 / (id:8 ver:1)

copy 1: 172.25.254.12:9422 (status:VALID)

copy 2: 172.25.254.13:9422 (status:VALID)

Large files are split into chunks that are spread across the chunk servers:

[root@foundation38 ~]# cd /mnt/mfs/

[root@foundation38 mfs]# cd dir1/

[root@foundation38 dir1]# ls

passwd

[root@foundation38 dir1]# dd if=/dev/zero of=file1 bs=1M count=100

100+0 records in

100+0 records out

104857600 bytes (105 MB) copied, 0.268454 s, 391 MB/s

[root@foundation38 dir1]# mfsfileinfo file1

file1:

chunk 0: 0000000000000003_00000001 / (id:3 ver:1)

copy 1: 172.25.254.12:9422 (status:VALID)

chunk 1: 0000000000000004_00000001 / (id:4 ver:1)

copy 1: 172.25.254.13:9422 (status:VALID)
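The two chunks reported for this file follow from MooseFS splitting files into chunks of at most 64 MiB: the 104857600 bytes written by `dd` (100 MiB) do not fit in one chunk. A minimal sketch of that arithmetic; `chunk_count` is a hypothetical helper, not an MFS tool.

```shell
#!/bin/sh
# chunk_count: hypothetical helper. Number of chunks needed for a file of
# the given size in bytes, assuming a maximum chunk size of 64 MiB.
chunk_count() {
    chunk_size=$((64 * 1024 * 1024))
    echo $(( ($1 + chunk_size - 1) / chunk_size ))   # ceiling division
}

chunk_count 104857600   # the file written with dd above
```

With a goal of 1, each of those chunks has a single copy, and the master is free to place the two chunks on different chunk servers, which is what the output above shows.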

[root@foundation38 dir1]# cd ../dir2/

[root@foundation38 dir2]# dd if=/dev/zero of=file1 bs=1M count=100

100+0 records in

100+0 records out

104857600 bytes (105 MB) copied, 0.568865 s, 184 MB/s

[root@foundation38 dir2]# mfsfileinfo file1

file1:

chunk 0: 0000000000000005_00000001 / (id:5 ver:1)

copy 1: 172.25.254.12:9422 (status:VALID)

copy 2: 172.25.254.13:9422 (status:VALID)

chunk 1: 0000000000000006_00000001 / (id:6 ver:1)

copy 1: 172.25.254.12:9422 (status:VALID)

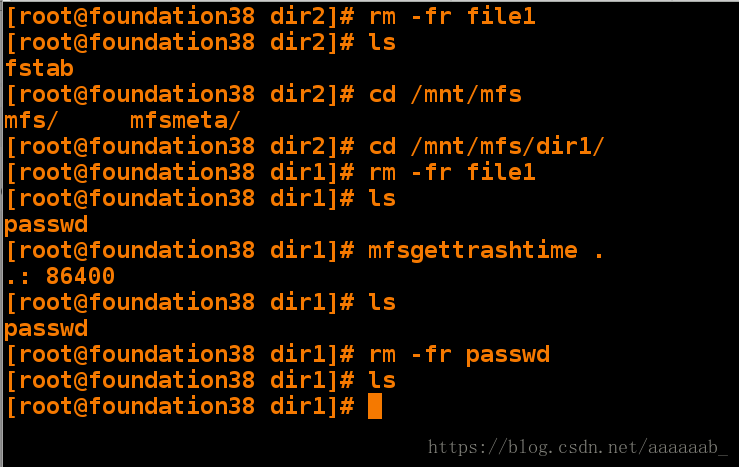

copy 2: 172.25.254.13:9422 (status:VALID)删除数据进行恢复测试:

[root@foundation38 dir2]# rm -fr file1

[root@foundation38 dir2]# ls

fstab

[root@foundation38 dir2]# cd /mnt/mfs/dir1/

[root@foundation38 dir1]# rm -fr file1

[root@foundation38 dir1]# ls

passwd

[root@foundation38 dir1]# mfsgettrashtime .

.: 86400

[root@foundation38 dir1]# ls

passwd

[root@foundation38 dir1]# rm -fr passwd

[root@foundation38 dir1]# ls

[root@foundation38 dir1]#

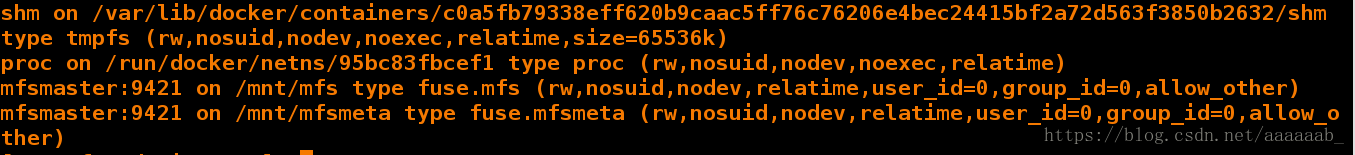

Mount the meta filesystem to inspect the trash:

[root@foundation38 dir1]# mkdir /mnt/mfsmeta

[root@foundation38 dir1]# mfsmount -m /mnt/mfsmeta/

mfsmaster accepted connection with parameters: read-write,restricted_ip

[root@foundation38 dir1]# df    # the meta mount does not show up in df

Filesystem 1K-blocks Used Available Use% Mounted on

/dev/sda12 124457600 64210636 60246964 52% /

devtmpfs 3863288 0 3863288 0% /dev

tmpfs 3873420 508 3872912 1% /dev/shm

tmpfs 3873420 9464 3863956 1% /run

tmpfs 3873420 0 3873420 0% /sys/fs/cgroup

/dev/sda10 198380 105648 92732 54% /boot

/dev/sda9 204580 9716 194864 5% /boot/efi

/dev/loop0 3704296 3704296 0 100% /var/www/html/source7.3

/dev/loop1 3654720 3654720 0 100% /var/www/html/source7.0

/dev/loop2 3798292 3798292 0 100% /var/www/html/source7.1

/dev/loop3 3762278 3762278 0 100% /var/www/html/source6.5

tmpfs 774688 20 774668 1% /run/user/1000

mfsmaster:9421 40486912 3780352 36706560 10% /mnt/mfs

Check with the mount command instead:

[root@foundation38 ~]# mount

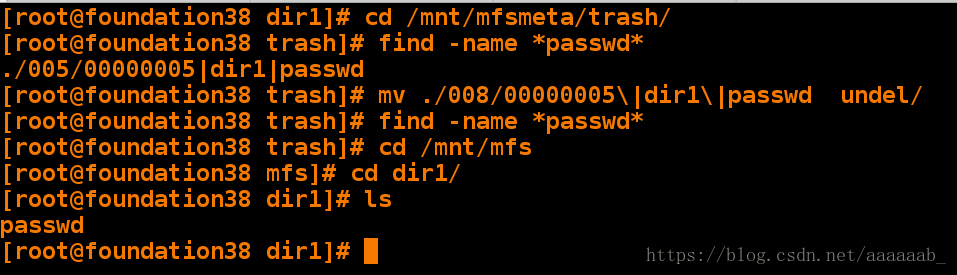

The file can be restored directly by moving its trash entry into undel:

[root@foundation38 dir1]# cd /mnt/mfsmeta/trash/

[root@foundation38 trash]# find -name '*passwd*'

./005/00000005|dir1|passwd

[root@foundation38 trash]# mv ./005/00000005\|dir1\|passwd undel/

[root@foundation38 trash]# find -name '*passwd*'

[root@foundation38 trash]# cd /mnt/mfs

[root@foundation38 mfs]# cd dir1/

[root@foundation38 dir1]# ls    # the file has been recovered

passwd

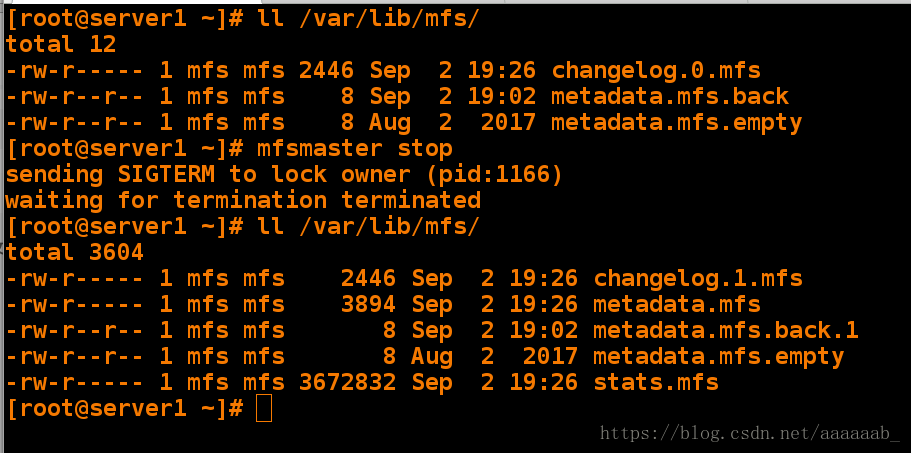
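The trash entry seen in the transcript has the form `inode|dir|file`, with the inode in hexadecimal and `|` standing in for `/`. A minimal sketch that decodes such a name, assuming exactly the layout observed above; `trash_entry_path` and `trash_entry_inode` are hypothetical helpers, and a file whose own name contains `|` would not decode unambiguously.

```shell
#!/bin/sh
# trash_entry_path: print the original path (relative to the MFS root)
# encoded in a trash entry name such as "00000005|dir1|passwd".
trash_entry_path() {
    printf '%s\n' "${1#*|}" | tr '|' '/'
}

# trash_entry_inode: print the inode number of the entry in decimal.
trash_entry_inode() {
    printf '%d\n' "0x${1%%|*}"
}

trash_entry_path  '00000005|dir1|passwd'
trash_entry_inode '00000005|dir1|passwd'
```

The entry name must be quoted or escaped on the command line, as in the `mv` above, because the shell would otherwise treat `|` as a pipe.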

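Note: while mfsmaster is running, /var/lib/mfs/ contains metadata.mfs.back. After the master is stopped, that file is no longer there; the second listing below shows metadata.mfs and metadata.mfs.back.1 in its place.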

[root@server1 ~]# ll /var/lib/mfs/

total 12

-rw-r----- 1 mfs mfs 2446 Sep 2 19:26 changelog.0.mfs

-rw-r--r-- 1 mfs mfs 8 Sep 2 19:02 metadata.mfs.back

-rw-r--r-- 1 mfs mfs 8 Aug 2 2017 metadata.mfs.empty

[root@server1 ~]# mfsmaster stop

sending SIGTERM to lock owner (pid:1166)

waiting for termination terminated

[root@server1 ~]# ll /var/lib/mfs/

total 3604

-rw-r----- 1 mfs mfs 2446 Sep 2 19:26 changelog.1.mfs

-rw-r----- 1 mfs mfs 3894 Sep 2 19:26 metadata.mfs

-rw-r--r-- 1 mfs mfs 8 Sep 2 19:02 metadata.mfs.back.1

-rw-r--r-- 1 mfs mfs 8 Aug 2 2017 metadata.mfs.empty

-rw-r----- 1 mfs mfs 3672832 Sep 2 19:26 stats.mfs
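Going by the two listings above, which metadata file is present hints at the master's state. A minimal sketch; `master_state` is a hypothetical helper, the demo uses scratch directories instead of `/var/lib/mfs`, and reading a leftover `metadata.mfs.back` as "possibly not stopped cleanly" is my assumption rather than something the listings show.

```shell
#!/bin/sh
# master_state: hypothetical helper. Guess the master's state from the files
# in its data directory, following the two listings above.
master_state() {
    if [ -e "$1/metadata.mfs.back" ]; then
        echo "running or not stopped cleanly"   # file is present while the master is up
    elif [ -e "$1/metadata.mfs" ]; then
        echo "stopped cleanly"                  # metadata.mfs appears after a normal stop
    else
        echo "unknown"
    fi
}

# Scratch directories standing in for /var/lib/mfs in both situations.
up=$(mktemp -d)
touch "$up/metadata.mfs.back" "$up/changelog.0.mfs"
down=$(mktemp -d)
touch "$down/metadata.mfs" "$down/metadata.mfs.back.1"

master_state "$up"
master_state "$down"
```

A process check such as `pgrep mfsmaster` would be needed to tell a running master apart from one that exited without writing `metadata.mfs`.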