1. Basic syntax

bin/hadoop fs <command>

2. Complete command list

[luomk@hadoop102 hadoop-2.7.2]$ bin/hadoop fs

[-appendToFile <localsrc> ... <dst>]

[-cat [-ignoreCrc] <src> ...]

[-checksum <src> ...]

[-chgrp [-R] GROUP PATH...]

[-chmod [-R] <MODE[,MODE]... | OCTALMODE> PATH...]

[-chown [-R] [OWNER][:[GROUP]] PATH...]

[-copyFromLocal [-f] [-p] [-l] <localsrc> ... <dst>]

[-copyToLocal [-p] [-ignoreCrc] [-crc] <src> ... <localdst>]

[-count [-q] [-h] <path> ...]

[-cp [-f] [-p | -p[topax]] <src> ... <dst>]

[-createSnapshot <snapshotDir> [<snapshotName>]]

[-deleteSnapshot <snapshotDir> <snapshotName>]

[-df [-h] [<path> ...]]

[-du [-s] [-h] <path> ...]

[-expunge]

[-find <path> ... <expression> ...]

[-get [-p] [-ignoreCrc] [-crc] <src> ... <localdst>]

[-getfacl [-R] <path>]

[-getfattr [-R] {-n name | -d} [-e en] <path>]

[-getmerge [-nl] <src> <localdst>]

[-help [cmd ...]]

[-ls [-d] [-h] [-R] [<path> ...]]

[-mkdir [-p] <path> ...]

[-moveFromLocal <localsrc> ... <dst>]

[-moveToLocal <src> <localdst>]

[-mv <src> ... <dst>]

[-put [-f] [-p] [-l] <localsrc> ... <dst>]

[-renameSnapshot <snapshotDir> <oldName> <newName>]

[-rm [-f] [-r|-R] [-skipTrash] <src> ...]

[-rmdir [--ignore-fail-on-non-empty] <dir> ...]

[-setfacl [-R] [{-b|-k} {-m|-x <acl_spec>} <path>]|[--set <acl_spec> <path>]]

[-setfattr {-n name [-v value] | -x name} <path>]

[-setrep [-R] [-w] <rep> <path> ...]

[-stat [format] <path> ...]

[-tail [-f] <file>]

[-test -[defsz] <path>]

[-text [-ignoreCrc] <src> ...]

[-touchz <path> ...]

[-truncate [-w] <length> <path> ...]

[-usage [cmd ...]]

3. Common commands in practice

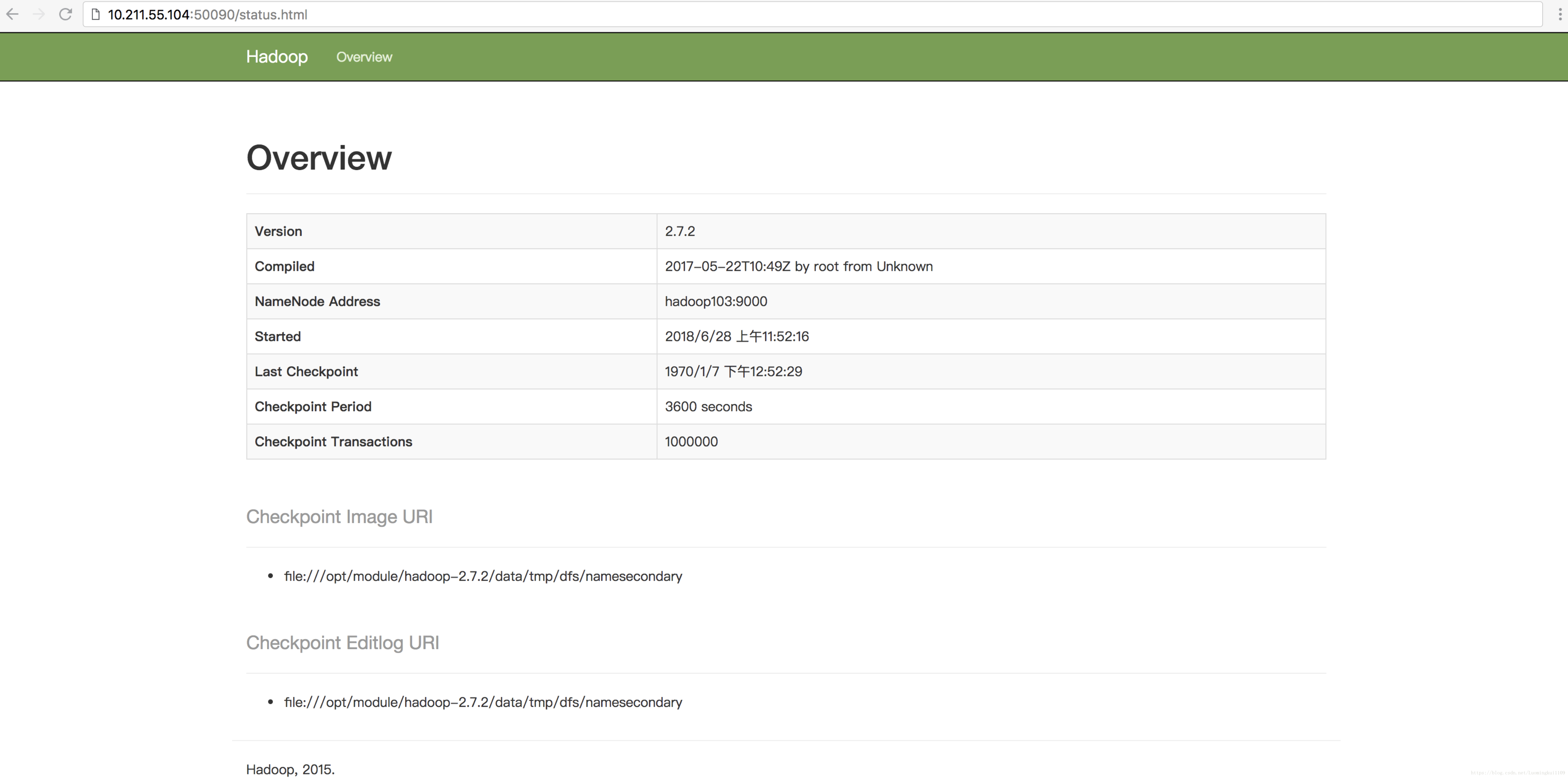

(0) Start the Hadoop cluster (needed for the tests that follow)

[luomk@hadoop102 hadoop-2.7.2]$ sbin/start-dfs.sh

[luomk@hadoop103 hadoop-2.7.2]$ sbin/start-yarn.sh

(1) -help: print the usage and options of a command

[luomk@hadoop102 hadoop-2.7.2]$ hadoop fs -help rm

(2) -ls: list directory contents

[luomk@hadoop102 hadoop-2.7.2]$ hadoop fs -ls /

(3) -mkdir: create a directory on HDFS

[luomk@hadoop102 hadoop-2.7.2]$ hadoop fs -mkdir -p /sanguo/shuguo

(4) -moveFromLocal: cut and paste a local file into HDFS (the local file is deleted after the upload)

[luomk@hadoop102 hadoop-2.7.2]$ touch kongming.txt

[luomk@hadoop102 hadoop-2.7.2]$ hadoop fs -moveFromLocal ./kongming.txt /sanguo/shuguo

(5) -appendToFile: append a local file to the end of a file that already exists in HDFS

[luomk@hadoop102 hadoop-2.7.2]$ touch liubei.txt

[luomk@hadoop102 hadoop-2.7.2]$ vi liubei.txt

Enter:

san gu mao lu

[luomk@hadoop102 hadoop-2.7.2]$ hadoop fs -appendToFile liubei.txt /sanguo/shuguo/kongming.txt
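HDFS does not support editing a file in place; `-appendToFile` can only add bytes after the current end of the target file. On an ordinary Linux file system the same effect on the file contents is `cat src >> dst`. The sketch below mimics this step with plain local files in a temporary directory (no HDFS involved; the file contents are made up for illustration):

```shell
#!/bin/sh
# Local-filesystem analogue of:
#   hadoop fs -appendToFile liubei.txt /sanguo/shuguo/kongming.txt
# A temporary directory stands in for HDFS; file contents are hypothetical.
workdir=$(mktemp -d)
printf 'existing line\n' > "$workdir/kongming.txt"   # target that already exists
printf 'appended line\n' > "$workdir/liubei.txt"     # local source file

# Append the source after the end of the target; earlier bytes are not modified.
cat "$workdir/liubei.txt" >> "$workdir/kongming.txt"

result=$(cat "$workdir/kongming.txt")
printf '%s\n' "$result"   # existing line, then appended line
rm -rf "$workdir"
```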

(6) -cat: display the contents of a file

[luomk@hadoop102 hadoop-2.7.2]$ hadoop fs -cat /sanguo/shuguo/kongming.txt

(7) -tail: display the end of a file (its last kilobyte)

[luomk@hadoop102 hadoop-2.7.2]$ hadoop fs -tail /sanguo/shuguo/kongming.txt

(8) -chgrp, -chmod, -chown: used the same way as in the Linux file system; they change a file's group, permissions, and owner respectively

[luomk@hadoop102 hadoop-2.7.2]$ hadoop fs -chmod 666 /sanguo/shuguo/kongming.txt

[luomk@hadoop102 hadoop-2.7.2]$ hadoop fs -chown luomk:luomk /sanguo/shuguo/kongming.txt
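The octal mode works as on Linux: the three digits cover owner, group, and others, and each digit is the sum of r = 4, w = 2, x = 1, so `666` grants read and write to everyone. A small decoder makes this concrete; `octal_to_symbolic` is a hypothetical helper written for illustration, not part of Hadoop:

```shell
#!/bin/sh
# Decode a three-digit octal mode (e.g. 666) into the rwx string shown in the
# permission column of `hadoop fs -ls` (after the leading type character).
# octal_to_symbolic is a hypothetical helper, not a Hadoop command.
octal_to_symbolic() {
  mode=$1
  out=""
  for pos in 1 2 3; do                       # owner, group, others
    digit=$(printf '%s' "$mode" | cut -c"$pos")
    # Each octal digit is the sum of r=4, w=2, x=1.
    if [ $((digit & 4)) -ne 0 ]; then out="${out}r"; else out="${out}-"; fi
    if [ $((digit & 2)) -ne 0 ]; then out="${out}w"; else out="${out}-"; fi
    if [ $((digit & 1)) -ne 0 ]; then out="${out}x"; else out="${out}-"; fi
  done
  printf '%s\n' "$out"
}

octal_to_symbolic 666   # prints rw-rw-rw-
octal_to_symbolic 755   # prints rwxr-xr-x
```

HDFS has no notion of executable files, so in practice the x bit only matters on directories, where it is needed to access their children.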

(9) -copyFromLocal: copy files from the local file system to an HDFS path

[luomk@hadoop102 hadoop-2.7.2]$ hadoop fs -copyFromLocal README.txt /

(10) -copyToLocal: copy from HDFS to the local file system

[luomk@hadoop102 hadoop-2.7.2]$ hadoop fs -copyToLocal /sanguo/shuguo/kongming.txt ./

(11) -cp: copy from one HDFS path to another HDFS path

[luomk@hadoop102 hadoop-2.7.2]$ hadoop fs -cp /sanguo/shuguo/kongming.txt /zhuge.txt

(12) -mv: move files within HDFS

[luomk@hadoop102 hadoop-2.7.2]$ hadoop fs -mv /zhuge.txt /sanguo/shuguo/

(13) -get: same as copyToLocal, i.e. download a file from HDFS to the local file system

[luomk@hadoop102 hadoop-2.7.2]$ hadoop fs -get /sanguo/shuguo/kongming.txt ./

(14) -getmerge: download several files merged into a single local file, e.g. when the HDFS directory /aaa/ contains many files: log.1, log.2, log.3, ...

[luomk@hadoop102 hadoop-2.7.2]$ hadoop fs -getmerge /user/luomk/test/* ./zaiyiqi.txt
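Conceptually, `-getmerge` concatenates the source files into one local file, and with `-nl` it adds a newline after each file. The local sketch below reproduces that behaviour with `cat`, assuming the files are taken in the sorted order a shell glob lists them in. `merge_dir` is a hypothetical helper and the log.1 to log.3 contents are made up; no HDFS is involved:

```shell
#!/bin/sh
# Local analogue of: hadoop fs -getmerge [-nl] <src dir> <local file>
# merge_dir (hypothetical) concatenates every regular file in $1, in glob
# (sorted) order, into $2; pass "nl" as $3 to add a newline after each file.
merge_dir() {
  src=$1 dst=$2 add_nl=${3:-no}
  : > "$dst"                          # start from an empty destination file
  for f in "$src"/*; do
    [ -f "$f" ] || continue           # skip subdirectories
    cat "$f" >> "$dst"
    if [ "$add_nl" = "nl" ]; then printf '\n' >> "$dst"; fi
  done
}

# Hypothetical log.1 .. log.3, as in the /aaa/ example.
logs=$(mktemp -d)
printf 'a' > "$logs/log.1"
printf 'b' > "$logs/log.2"
printf 'c' > "$logs/log.3"

merge_dir "$logs" "$logs.merged"          # contents: abc
merge_dir "$logs" "$logs.merged-nl" nl    # contents: a, b, c on separate lines
```

The `-nl` variant is useful when the source files do not end with a newline, since otherwise the last line of one file runs into the first line of the next.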

(15) -put: same as copyFromLocal

[luomk@hadoop102 hadoop-2.7.2]$ hadoop fs -put ./zaiyiqi.txt /user/luomk/
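Without `-f`, `-put` fails if the destination file already exists. In scripts that may be re-run, one common pattern is to check first with `-test -e`, which exits with status 0 when the path exists. A minimal sketch assuming a POSIX shell; `put_if_absent` and the `HADOOP` variable are hypothetical, the latter only so the logic can be tried against a stub without a cluster:

```shell
#!/bin/sh
# put_if_absent (hypothetical): upload $1 to HDFS path $2 unless $2 exists.
# HADOOP defaults to the real CLI; point it at a stub for dry runs.
HADOOP=${HADOOP:-hadoop}

put_if_absent() {
  src=$1 dst=$2
  # `fs -test -e` exits 0 if dst exists, non-zero otherwise.
  if "$HADOOP" fs -test -e "$dst"; then
    echo "skip: $dst already exists"
  else
    "$HADOOP" fs -put "$src" "$dst"
  fi
}

# On a running cluster, for example:
# put_if_absent ./zaiyiqi.txt /user/luomk/zaiyiqi.txt
```

Pass a full file path as the destination: if it is an existing directory such as /user/luomk/, `-test -e` succeeds and the upload is skipped.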

(16) -rm: delete a file or directory (add -r to delete a directory and everything in it recursively)

[luomk@hadoop102 hadoop-2.7.2]$ hadoop fs -rm /user/luomk/test/jinlian2.txt

(17) -rmdir: delete an empty directory

[luomk@hadoop102 hadoop-2.7.2]$ hadoop fs -mkdir /test

[luomk@hadoop102 hadoop-2.7.2]$ hadoop fs -rmdir /test

(18) -du: show size information for a directory

[luomk@hadoop102 hadoop-2.7.2]$ hadoop fs -du -s -h /user/luomk/test

2.7 K /user/luomk/test

[luomk@hadoop102 hadoop-2.7.2]$ hadoop fs -du -h /user/luomk/test

1.3 K /user/luomk/test/README.txt

15 /user/luomk/test/jinlian.txt

1.4 K /user/luomk/test/zaiyiqi.txt
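With `-s`, `-du` reports the sum of the per-entry sizes, which is why 1.3 K + 15 + 1.4 K shows up as roughly 2.7 K above. When a script needs the total as a plain byte count, it can be computed from the output without `-h`. A sketch assuming the size in bytes is the first column (as in the 2.7.2 output shown here); `sum_du` is a hypothetical helper:

```shell
#!/bin/sh
# sum_du (hypothetical): read `hadoop fs -du <dir>` output (no -h, so sizes are
# plain bytes) from stdin and print the sum of the first column.
sum_du() {
  # printf "%.0f" avoids exponent notation for large totals in some awks.
  awk '{ total += $1 } END { printf "%.0f\n", total }'
}

# On a running cluster:
# hadoop fs -du /user/luomk/test | sum_du
```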

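(19) -setrep: set the number of replicas of a file in HDFS

[luomk@hadoop102 hadoop-2.7.2]$ hadoop fs -setrep 10 /sanguo/shuguo/kongming.txt

The replication factor set here is only recorded in the NameNode's metadata; whether that many replicas actually exist depends on the number of DataNodes. With only 3 machines at present there can be at most 3 replicas; the count can reach 10 only once the cluster grows to 10 nodes.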
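The cap follows from block placement: the NameNode never puts two replicas of the same block on one DataNode, so the number of replicas actually created is at most the number of DataNodes. As a quick sketch (`effective_replicas` is a hypothetical helper, and it ignores dead or decommissioning nodes):

```shell
#!/bin/sh
# effective_replicas (hypothetical): replicas HDFS can actually place is
# min(requested replication factor, number of live DataNodes).
effective_replicas() {
  requested=$1 datanodes=$2
  if [ "$requested" -lt "$datanodes" ]; then
    echo "$requested"
  else
    echo "$datanodes"
  fi
}

effective_replicas 10 3    # prints 3  (the 3-node cluster in this walkthrough)
effective_replicas 10 10   # prints 10 (after growing to 10 DataNodes)
```

The replication column of `hadoop fs -ls` still reports the requested value of 10, since it reflects the NameNode metadata rather than the replicas physically present.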