Step 1: Download the RPM packages

Download location: http://archive.cloudera.com/cdh5/redhat/6/x86_64/cdh/5.14.0/RPMS/x86_64/

The following RPM packages are needed:

impala-2.11.0+cdh5.14.0+0-1.cdh5.14.0.p0.50.el6.x86_64.rpm

impala-catalog-2.11.0+cdh5.14.0+0-1.cdh5.14.0.p0.50.el6.x86_64.rpm

impala-debuginfo-2.11.0+cdh5.14.0+0-1.cdh5.14.0.p0.50.el6.x86_64.rpm

impala-server-2.11.0+cdh5.14.0+0-1.cdh5.14.0.p0.50.el6.x86_64.rpm

impala-shell-2.11.0+cdh5.14.0+0-1.cdh5.14.0.p0.50.el6.x86_64.rpm

impala-state-store-2.11.0+cdh5.14.0+0-1.cdh5.14.0.p0.50.el6.x86_64.rpm

impala-udf-devel-2.11.0+cdh5.14.0+0-1.cdh5.14.0.p0.50.el6.x86_64.rpm
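The seven downloads can be scripted. The sketch below is a convenience, not part of the original procedure. It builds each URL from the download location and version string given above and fetches it with wget, which is assumed to be installed. The function names are hypothetical.

```bash
# Sketch: fetch the Impala 2.11.0 / CDH 5.14.0 RPMs listed above.
# Base URL and version string come from this article; function names are hypothetical.
IMPALA_BASE_URL="http://archive.cloudera.com/cdh5/redhat/6/x86_64/cdh/5.14.0/RPMS/x86_64"
IMPALA_RPM_VERSION="2.11.0+cdh5.14.0+0-1.cdh5.14.0.p0.50.el6.x86_64"
IMPALA_PACKAGES=(impala impala-catalog impala-debuginfo impala-server
                 impala-shell impala-state-store impala-udf-devel)

# Print the download URL of every package, one per line.
impala_rpm_urls() {
  local pkg
  for pkg in "${IMPALA_PACKAGES[@]}"; do
    printf '%s/%s-%s.rpm\n' "$IMPALA_BASE_URL" "$pkg" "$IMPALA_RPM_VERSION"
  done
}

# Download every package into DEST (default: current directory).
# wget -c resumes partial downloads, so the function can be re-run.
download_impala_rpms() {
  local dest="${1:-.}" url
  mkdir -p "$dest" || return 1
  for url in $(impala_rpm_urls); do
    wget -c -P "$dest" "$url" || return 1
  done
}
```

Usage: source the file, then call `download_impala_rpms ./rpms`.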

Step 2: Install the RPM packages

`--nodeps` skips rpm's dependency check. The packages declare dependencies on CDH components that are not installed on this cluster. Step 3 replaces those components with links to the locally installed Hadoop, Hive and HBase.

```bash
rpm -ivh --nodeps impala-2.11.0+cdh5.14.0+0-1.cdh5.14.0.p0.50.el6.x86_64.rpm
rpm -ivh --nodeps impala-catalog-2.11.0+cdh5.14.0+0-1.cdh5.14.0.p0.50.el6.x86_64.rpm
rpm -ivh --nodeps impala-debuginfo-2.11.0+cdh5.14.0+0-1.cdh5.14.0.p0.50.el6.x86_64.rpm
rpm -ivh --nodeps impala-server-2.11.0+cdh5.14.0+0-1.cdh5.14.0.p0.50.el6.x86_64.rpm
rpm -ivh --nodeps impala-shell-2.11.0+cdh5.14.0+0-1.cdh5.14.0.p0.50.el6.x86_64.rpm
rpm -ivh --nodeps impala-state-store-2.11.0+cdh5.14.0+0-1.cdh5.14.0.p0.50.el6.x86_64.rpm
rpm -ivh --nodeps impala-udf-devel-2.11.0+cdh5.14.0+0-1.cdh5.14.0.p0.50.el6.x86_64.rpm
```
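You may prefer a single rpm transaction that first checks all seven files are present. A sketch of that approach follows. It assumes the RPMs sit in one directory under the exact file names above. The function names are hypothetical, and `--nodeps` is kept as in the article.

```bash
# Sketch: install all seven Impala RPMs from one directory in a single rpm call.
IMPALA_RPM_VERSION="2.11.0+cdh5.14.0+0-1.cdh5.14.0.p0.50.el6.x86_64"
IMPALA_PACKAGES=(impala impala-catalog impala-debuginfo impala-server
                 impala-shell impala-state-store impala-udf-devel)

# Print the file name of every expected RPM that is missing from DIR.
missing_impala_rpms() {
  local dir="$1" pkg file
  for pkg in "${IMPALA_PACKAGES[@]}"; do
    file="$pkg-$IMPALA_RPM_VERSION.rpm"
    [ -f "$dir/$file" ] || echo "$file"
  done
  return 0
}

# Refuse to start if any RPM is missing; otherwise install them all at once.
install_impala_rpms() {
  local dir="$1" pkg missing
  local -a files=()
  missing=$(missing_impala_rpms "$dir")
  if [ -n "$missing" ]; then
    printf 'missing in %s:\n%s\n' "$dir" "$missing" >&2
    return 1
  fi
  for pkg in "${IMPALA_PACKAGES[@]}"; do
    files+=("$dir/$pkg-$IMPALA_RPM_VERSION.rpm")
  done
  # --nodeps as in the article: the CDH dependencies are not installed here.
  sudo rpm -ivh --nodeps "${files[@]}"
}
```

Usage: `install_impala_rpms ./rpms`. If the check fails, nothing is installed, so no half-installed set of packages is left behind.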

Step 3: Recreate the library symlinks

After the RPM install, /usr/lib/impala/lib contains many broken symlinks. They point to CDH Hadoop/Hive/HBase package locations that do not exist on this cluster. Delete them all:

```bash
sudo rm -f /usr/lib/impala/lib/avro*.jar
sudo rm -f /usr/lib/impala/lib/hadoop-*.jar
sudo rm -f /usr/lib/impala/lib/hive-*.jar
sudo rm -f /usr/lib/impala/lib/hbase-*.jar
sudo rm -f /usr/lib/impala/lib/parquet-hadoop-bundle.jar
sudo rm -f /usr/lib/impala/lib/sentry-*.jar
sudo rm -f /usr/lib/impala/lib/zookeeper.jar
sudo rm -f /usr/lib/impala/lib/libhadoop.so
sudo rm -f /usr/lib/impala/lib/libhadoop.so.1.0.0
sudo rm -f /usr/lib/impala/lib/libhdfs.so
sudo rm -f /usr/lib/impala/lib/libhdfs.so.0.0.0
```
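Instead of deleting by name, you could remove exactly the dangling links. This sketch uses a hypothetical function name. It deletes only those symlinks in the given directory whose target does not exist. Unlike the name-based rm commands, it leaves regular files and links that still resolve in place.

```bash
# remove_broken_links DIR: delete every dangling symlink directly under DIR.
remove_broken_links() {
  local dir="$1" f
  for f in "$dir"/*; do
    # -L: f is a symlink; ! -e: following it leads nowhere (dangling)
    if [ -L "$f" ] && [ ! -e "$f" ]; then
      echo "removing broken link: $f"
      rm -f -- "$f"
    fi
  done
}
```

Usage: as root, `remove_broken_links /usr/lib/impala/lib`.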

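Then recreate the symlinks so they point at the jars and native libraries of the locally installed Hadoop, HBase, Hive, ZooKeeper and Sentry. I wrote the following script:

Note: the jar versions in the script are the ones from my own environment. Do not run it unmodified. Adjust every path and version to match your installation, otherwise the links will still be broken.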

```bash
#!/bin/bash
# Recreate the symlinks in /usr/lib/impala/lib so they point at the locally
# installed Hadoop, HBase, Hive, ZooKeeper and Sentry.
# All paths and version numbers below are from my environment: change them.
HBASE_HOME=/soft/hbase/hbase-1.3.1
HADOOP_HOME=/soft/hadoop/hadoop-2.7.1
HIVE_HOME=/soft/hive/apache-hive-1.2.0-bin
ZK_HOME=/soft/zookeeper/zookeeper-3.4.7
SENTRY_HOME=/usr/lib/sentry
IMPALA_LIB=/usr/lib/impala/lib

# Hadoop jars (ln -sf replaces an existing link, so the script can be re-run)
sudo ln -sf "$HADOOP_HOME/share/hadoop/common/lib/hadoop-annotations-2.7.1.jar" "$IMPALA_LIB/hadoop-annotations.jar"
sudo ln -sf "$HADOOP_HOME/share/hadoop/common/lib/hadoop-auth-2.7.1.jar" "$IMPALA_LIB/hadoop-auth.jar"
sudo ln -sf "$HADOOP_HOME/share/hadoop/tools/lib/hadoop-aws-2.7.1.jar" "$IMPALA_LIB/hadoop-aws.jar"
sudo ln -sf "$HADOOP_HOME/share/hadoop/common/hadoop-common-2.7.1.jar" "$IMPALA_LIB/hadoop-common.jar"
sudo ln -sf "$HADOOP_HOME/share/hadoop/hdfs/hadoop-hdfs-2.7.1.jar" "$IMPALA_LIB/hadoop-hdfs.jar"
sudo ln -sf "$HADOOP_HOME/share/hadoop/mapreduce/hadoop-mapreduce-client-common-2.7.1.jar" "$IMPALA_LIB/hadoop-mapreduce-client-common.jar"
sudo ln -sf "$HADOOP_HOME/share/hadoop/mapreduce/hadoop-mapreduce-client-core-2.7.1.jar" "$IMPALA_LIB/hadoop-mapreduce-client-core.jar"
sudo ln -sf "$HADOOP_HOME/share/hadoop/mapreduce/hadoop-mapreduce-client-jobclient-2.7.1.jar" "$IMPALA_LIB/hadoop-mapreduce-client-jobclient.jar"
sudo ln -sf "$HADOOP_HOME/share/hadoop/mapreduce/hadoop-mapreduce-client-shuffle-2.7.1.jar" "$IMPALA_LIB/hadoop-mapreduce-client-shuffle.jar"
sudo ln -sf "$HADOOP_HOME/share/hadoop/yarn/hadoop-yarn-api-2.7.1.jar" "$IMPALA_LIB/hadoop-yarn-api.jar"
sudo ln -sf "$HADOOP_HOME/share/hadoop/yarn/hadoop-yarn-client-2.7.1.jar" "$IMPALA_LIB/hadoop-yarn-client.jar"
sudo ln -sf "$HADOOP_HOME/share/hadoop/yarn/hadoop-yarn-common-2.7.1.jar" "$IMPALA_LIB/hadoop-yarn-common.jar"
sudo ln -sf "$HADOOP_HOME/share/hadoop/yarn/hadoop-yarn-server-applicationhistoryservice-2.7.1.jar" "$IMPALA_LIB/hadoop-yarn-server-applicationhistoryservice.jar"
sudo ln -sf "$HADOOP_HOME/share/hadoop/yarn/hadoop-yarn-server-common-2.7.1.jar" "$IMPALA_LIB/hadoop-yarn-server-common.jar"
sudo ln -sf "$HADOOP_HOME/share/hadoop/yarn/hadoop-yarn-server-nodemanager-2.7.1.jar" "$IMPALA_LIB/hadoop-yarn-server-nodemanager.jar"
sudo ln -sf "$HADOOP_HOME/share/hadoop/yarn/hadoop-yarn-server-resourcemanager-2.7.1.jar" "$IMPALA_LIB/hadoop-yarn-server-resourcemanager.jar"
sudo ln -sf "$HADOOP_HOME/share/hadoop/yarn/hadoop-yarn-server-web-proxy-2.7.1.jar" "$IMPALA_LIB/hadoop-yarn-server-web-proxy.jar"

# Avro and HBase jars (taken from the HBase lib directory)
sudo ln -sf "$HBASE_HOME/lib/avro-1.7.4.jar" "$IMPALA_LIB/avro.jar"
sudo ln -sf "$HBASE_HOME/lib/hbase-annotations-1.3.1.jar" "$IMPALA_LIB/hbase-annotations.jar"
sudo ln -sf "$HBASE_HOME/lib/hbase-client-1.3.1.jar" "$IMPALA_LIB/hbase-client.jar"
sudo ln -sf "$HBASE_HOME/lib/hbase-common-1.3.1.jar" "$IMPALA_LIB/hbase-common.jar"
sudo ln -sf "$HBASE_HOME/lib/hbase-protocol-1.3.1.jar" "$IMPALA_LIB/hbase-protocol.jar"

# Hive jars
sudo ln -sf "$HIVE_HOME/lib/hive-ant-1.2.0.jar" "$IMPALA_LIB/hive-ant.jar"
sudo ln -sf "$HIVE_HOME/lib/hive-beeline-1.2.0.jar" "$IMPALA_LIB/hive-beeline.jar"
sudo ln -sf "$HIVE_HOME/lib/hive-common-1.2.0.jar" "$IMPALA_LIB/hive-common.jar"
sudo ln -sf "$HIVE_HOME/lib/hive-exec-1.2.0.jar" "$IMPALA_LIB/hive-exec.jar"
sudo ln -sf "$HIVE_HOME/lib/hive-hbase-handler-1.2.0.jar" "$IMPALA_LIB/hive-hbase-handler.jar"
sudo ln -sf "$HIVE_HOME/lib/hive-metastore-1.2.0.jar" "$IMPALA_LIB/hive-metastore.jar"
sudo ln -sf "$HIVE_HOME/lib/hive-serde-1.2.0.jar" "$IMPALA_LIB/hive-serde.jar"
sudo ln -sf "$HIVE_HOME/lib/hive-service-1.2.0.jar" "$IMPALA_LIB/hive-service.jar"
sudo ln -sf "$HIVE_HOME/lib/hive-shims-common-1.2.0.jar" "$IMPALA_LIB/hive-shims-common.jar"
sudo ln -sf "$HIVE_HOME/lib/hive-shims-1.2.0.jar" "$IMPALA_LIB/hive-shims.jar"
sudo ln -sf "$HIVE_HOME/lib/hive-shims-scheduler-1.2.0.jar" "$IMPALA_LIB/hive-shims-scheduler.jar"

# Hadoop native libraries
sudo ln -sf "$HADOOP_HOME/lib/native/libhadoop.so" "$IMPALA_LIB/libhadoop.so"
sudo ln -sf "$HADOOP_HOME/lib/native/libhadoop.so.1.0.0" "$IMPALA_LIB/libhadoop.so.1.0.0"
sudo ln -sf "$HADOOP_HOME/lib/native/libhdfs.so" "$IMPALA_LIB/libhdfs.so"
sudo ln -sf "$HADOOP_HOME/lib/native/libhdfs.so.0.0.0" "$IMPALA_LIB/libhdfs.so.0.0.0"

# Parquet bundle shipped with Hive
sudo ln -sf "$HIVE_HOME/lib/parquet-hadoop-bundle-1.6.0.jar" "$IMPALA_LIB/parquet-hadoop-bundle.jar"

# Sentry jars
sudo ln -sf "$SENTRY_HOME/lib/sentry-binding-hive-1.4.0-cdh5.3.3.jar" "$IMPALA_LIB/sentry-binding-hive.jar"
sudo ln -sf "$SENTRY_HOME/lib/sentry-core-common-1.4.0-cdh5.3.3.jar" "$IMPALA_LIB/sentry-core-common.jar"
sudo ln -sf "$SENTRY_HOME/lib/sentry-core-model-db-1.4.0-cdh5.3.3.jar" "$IMPALA_LIB/sentry-core-model-db.jar"
sudo ln -sf "$SENTRY_HOME/lib/sentry-policy-common-1.4.0-cdh5.3.3.jar" "$IMPALA_LIB/sentry-policy-common.jar"
sudo ln -sf "$SENTRY_HOME/lib/sentry-policy-db-1.4.0-cdh5.3.3.jar" "$IMPALA_LIB/sentry-policy-db.jar"
sudo ln -sf "$SENTRY_HOME/lib/sentry-provider-cache-1.4.0-cdh5.3.3.jar" "$IMPALA_LIB/sentry-provider-cache.jar"
sudo ln -sf "$SENTRY_HOME/lib/sentry-provider-common-1.4.0-cdh5.3.3.jar" "$IMPALA_LIB/sentry-provider-common.jar"
sudo ln -sf "$SENTRY_HOME/lib/sentry-provider-db-1.4.0-cdh5.3.3.jar" "$IMPALA_LIB/sentry-provider-db.jar"
sudo ln -sf "$SENTRY_HOME/lib/sentry-provider-file-1.4.0-cdh5.3.3.jar" "$IMPALA_LIB/sentry-provider-file.jar"

# ZooKeeper
sudo ln -sf "$ZK_HOME/zookeeper-3.4.7.jar" "$IMPALA_LIB/zookeeper.jar"
```
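Every version number in the script has to be edited by hand. One alternative is to let the shell locate the versioned jar. The sketch below is a hypothetical helper, not the author's script. It links `DIR/NAME-<version>.jar` to `LINK_DIR/NAME.jar` only when exactly one candidate exists, ignoring -tests, -sources and -javadoc jars, and fails otherwise. Names that are ambiguous in your lib directory must still be linked explicitly. An example is hive-shims, if a `hive-shims-0.23-<version>.jar` sits next to it.

```bash
# link_versioned_jar DIR NAME LINK_DIR
# Finds exactly one DIR/NAME-<version>.jar and links it as LINK_DIR/NAME.jar.
# Hypothetical helper; test, sources and javadoc jars are ignored.
link_versioned_jar() {
  local dir="$1" name="$2" link_dir="$3" f
  local -a matches=()
  for f in "$dir/$name"-[0-9]*.jar; do
    [ -e "$f" ] || continue            # an unmatched glob stays literal; skip it
    case "$f" in
      *-tests.jar|*-sources.jar|*-javadoc.jar) continue ;;
    esac
    matches+=("$f")
  done
  if [ "${#matches[@]}" -ne 1 ]; then
    echo "need exactly one $name-<version>.jar in $dir, found ${#matches[@]}: ${matches[*]}" >&2
    return 1
  fi
  # -f replaces an existing link, so the helper can be re-run
  ln -sf "${matches[0]}" "$link_dir/$name.jar"
}
```

Usage, as root: `link_versioned_jar "$HADOOP_HOME/share/hadoop/common" hadoop-common /usr/lib/impala/lib`.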

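Step 4: Download bigtop-utils

Download location: http://archive-primary.cloudera.com/cdh5/redhat/6/x86_64/cdh/5.3.3/RPMS/noarch/

Step 5: Install bigtop-utils

Use the file name of the RPM you actually downloaded. The one below is a CDH 5.13.0 build, while the download location above is the CDH 5.3.3 directory.

```bash
rpm -ivh --nodeps bigtop-utils-0.7.0+cdh5.13.0+0-1.cdh5.13.0.p0.34.el6.noarch.rpm
```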

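Step 6: Configure bigtop-utils

```bash
cd /etc/default/
vi bigtop-utils
```

Add the following line, replacing the path with your own JAVA_HOME:

```bash
export JAVA_HOME=/soft/java/jdk1.8.0_65
```

Then load the file into the current shell:

```bash
source /etc/default/bigtop-utils
```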
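Editing the file by hand works. To make the change repeatable, you could use a sketch like the following, which uses a hypothetical function name. It first checks that `bin/java` exists under the given JDK path. It then replaces any existing `export JAVA_HOME=` line in the file with that path. It is meant to be run as root against /etc/default/bigtop-utils.

```bash
# set_bigtop_java_home FILE JAVA_HOME
# Removes earlier "export JAVA_HOME=" lines from FILE and appends one for JAVA_HOME.
set_bigtop_java_home() {
  local file="$1" java_home="$2" tmp rc
  if [ ! -x "$java_home/bin/java" ]; then
    echo "no executable $java_home/bin/java" >&2
    return 1
  fi
  tmp=$(mktemp) || return 1
  # Keep every other line; grep -v exits 1 when it prints nothing, which is fine here.
  if [ -f "$file" ]; then
    grep -v '^[[:space:]]*export JAVA_HOME=' "$file" > "$tmp"
  fi
  printf 'export JAVA_HOME=%s\n' "$java_home" >> "$tmp"
  # Write back through cat so the file keeps its owner and permissions.
  cat "$tmp" > "$file"
  rc=$?
  rm -f "$tmp"
  return "$rc"
}
```

Usage: `set_bigtop_java_home /etc/default/bigtop-utils /soft/java/jdk1.8.0_65`. Running it twice leaves a single JAVA_HOME line.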

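Step 7: Copy the Hadoop XML configuration files to the Impala configuration directory

```bash
cp $HADOOP_HOME/etc/hadoop/hdfs-site.xml /etc/impala/conf
```

Edit the copied /etc/impala/conf/hdfs-site.xml and add the following properties inside `<configuration>`. Also add the same properties to the hdfs-site.xml used by the DataNodes, then restart the DataNodes. The DataNode creates the domain socket for short-circuit reads and serves the block location metadata.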

```xml
<property>
  <name>dfs.client.read.shortcircuit</name>
  <value>true</value>
</property>
<property>
  <name>dfs.domain.socket.path</name>
  <value>/var/run/hadoop-hdfs/dn</value>
</property>
<property>
  <name>dfs.datanode.hdfs-blocks-metadata.enabled</name>
  <value>true</value>
</property>
<property>
  <name>dfs.client.use.legacy.blockreader.local</name>
  <value>false</value>
</property>
<property>
  <name>dfs.datanode.data.dir.perm</name>
  <value>750</value>
</property>
<property>
  <name>dfs.block.local-path-access.user</name>
  <value>hadoop</value>
</property>
<property>
  <name>dfs.client.file-block-storage-locations.timeout</name>
  <value>3000</value>
</property>
```
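`dfs.domain.socket.path` names a socket file that the DataNode creates. Its parent directory (/var/run/hadoop-hdfs here) therefore has to exist on every DataNode. It should be writable only by the user running the DataNode, or by root. The sketch below prepares that directory. It assumes the DataNode user is `hadoop`, the value used for dfs.block.local-path-access.user above. The helper name is hypothetical.

```bash
# prepare_domain_socket_dir SOCKET_PATH OWNER
# Creates the parent directory of the domain socket, owned by OWNER with mode 755
# (writable only by its owner). The socket file itself is created by the DataNode.
prepare_domain_socket_dir() {
  local socket_path="$1" owner="$2" dir
  dir=$(dirname "$socket_path")
  mkdir -p "$dir" || return 1
  chown "$owner" "$dir" || return 1
  chmod 755 "$dir"
}
```

Usage, as root on each DataNode: `prepare_domain_socket_dir /var/run/hadoop-hdfs/dn hadoop`.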

Then copy core-site.xml as well:

```bash
cp $HADOOP_HOME/etc/hadoop/core-site.xml /etc/impala/conf
```
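The copies can be done with one helper that never overwrites a file already in place, so an hdfs-site.xml you have edited stays as it is. Impala typically also reads hive-site.xml from this directory to locate the metastore (hive.metastore.uris). The article does not copy that file, so adding it is an assumption about your setup. The function name and the `$HIVE_HOME/conf` location in the usage are also assumptions.

```bash
# copy_impala_site_files DEST SRC_FILE...
# Copies each SRC_FILE into DEST unless a file of that name already exists there.
copy_impala_site_files() {
  local dest="$1" src name
  shift
  mkdir -p "$dest" || return 1
  for src in "$@"; do
    name=$(basename "$src")
    if [ ! -f "$src" ]; then
      echo "not found: $src" >&2
      return 1
    fi
    if [ -e "$dest/$name" ]; then
      echo "keeping existing $dest/$name"
    else
      cp "$src" "$dest/" || return 1
    fi
  done
}
```

Usage: `copy_impala_site_files /etc/impala/conf "$HADOOP_HOME/etc/hadoop/core-site.xml" "$HADOOP_HOME/etc/hadoop/hdfs-site.xml" "$HIVE_HOME/conf/hive-site.xml"`.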

Step 8: Copy the MySQL Connector/J jar to /usr/share/java and rename it

```bash
cp mysql-connector-*.jar /usr/share/java/mysql-connector-java.jar
```
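The glob in that cp command fails if more than one file matches, for example when the directory holds two connector versions. cp refuses to copy several files onto a single file name. A hedged sketch that insists on exactly one match follows. The helper name is hypothetical.

```bash
# install_mysql_connector SRC_DIR [DEST]
# Copies the single mysql-connector-*.jar in SRC_DIR to DEST
# (default /usr/share/java/mysql-connector-java.jar); fails on zero or several matches.
install_mysql_connector() {
  local src_dir="$1" dest="${2:-/usr/share/java/mysql-connector-java.jar}" f
  local -a jars=()
  for f in "$src_dir"/mysql-connector-*.jar; do
    [ -f "$f" ] && jars+=("$f")
  done
  if [ "${#jars[@]}" -ne 1 ]; then
    echo "expected one mysql-connector-*.jar in $src_dir, found ${#jars[@]}" >&2
    return 1
  fi
  mkdir -p "$(dirname "$dest")" || return 1
  cp "${jars[0]}" "$dest"
}
```

Usage: `install_mysql_connector ~/downloads`, run as root.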

Step 9: Edit the configuration file

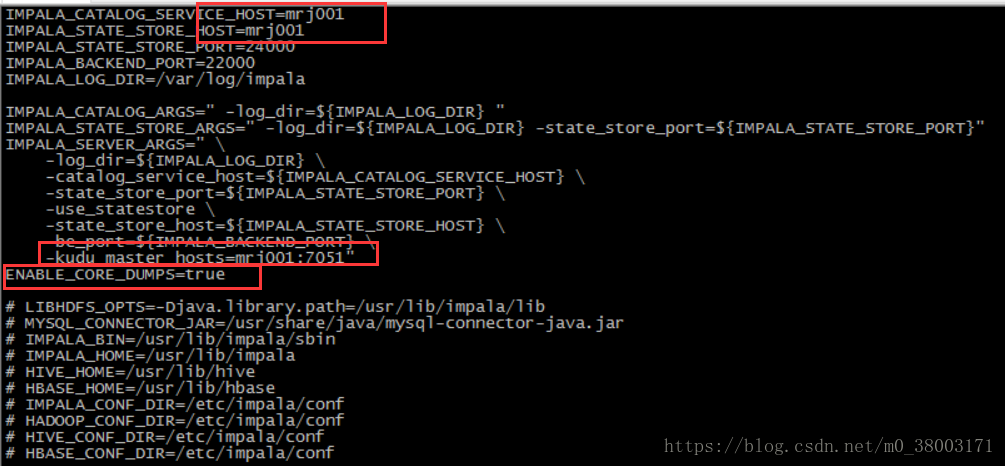

```bash
vi /etc/default/impala
```

The parts marked in red in the screenshot are the lines to change or add.
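The exact changes are only visible in the screenshot. As an assumption, the variables usually edited in the stock CDH /etc/default/impala are IMPALA_CATALOG_SERVICE_HOST and IMPALA_STATE_STORE_HOST. Both are set to the host that runs catalogd and the statestore. The hypothetical helper below sets KEY=VALUE in such a file. It replaces an existing or commented-out KEY line, or appends one if none exists. `node01` in the usage is a placeholder hostname.

```bash
# set_impala_default FILE KEY VALUE
# Replaces the first "KEY=..." or "# KEY=..." line in FILE with KEY=VALUE,
# drops any further KEY lines, and appends KEY=VALUE if none existed.
# KEY must be a plain shell variable name; VALUE must not contain backslashes
# (awk -v would interpret them as escapes).
set_impala_default() {
  local file="$1" key="$2" value="$3" tmp rc
  tmp=$(mktemp) || return 1
  awk -v k="$key" -v v="$value" '
    $0 ~ ("^[ \t]*#?[ \t]*" k "=") {
      if (!done) { print k "=" v; done = 1 }
      next
    }
    { print }
    END { if (!done) print k "=" v }
  ' "$file" > "$tmp" && cat "$tmp" > "$file"
  rc=$?
  rm -f "$tmp"
  return "$rc"
}
```

Usage, as root: `set_impala_default /etc/default/impala IMPALA_STATE_STORE_HOST node01`. Lines such as `-state_store_host=${IMPALA_STATE_STORE_HOST}` inside the argument strings do not match the pattern and are left untouched.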

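Step 10: Start the services

First start the Hive metastore, which Impala reads table metadata from. Then start the Impala daemons, statestore first:

```bash
hive --service metastore &
hive --service hiveserver2 &
service impala-state-store start
service impala-catalog start
service impala-server start
```

HiveServer1 (`hive --service hiveserver`) was removed in Hive 1.0, so on Hive 1.2.0 start HiveServer2 instead. Impala itself only needs the metastore, so the HiveServer2 line is optional.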
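catalogd loads metadata from the metastore, and both catalogd and impalad register with the statestore. It can therefore help to wait until each port is actually listening before starting the next service. The sketch below uses bash's /dev/tcp redirection. The function name is hypothetical. The port numbers in the usage are the usual defaults and are assumptions about your setup: 9083 for the Hive metastore, 24000 for the statestore and 26000 for the catalog service.

```bash
# wait_for_port HOST PORT [TRIES]
# Returns 0 as soon as a TCP connection to HOST:PORT succeeds, or 1 after
# TRIES attempts (default 30) spaced one second apart.
# Relies on bash's /dev/tcp pseudo-device, so run it with bash, not sh.
wait_for_port() {
  local host="$1" port="$2" tries="${3:-30}" i
  for ((i = 1; i <= tries; i++)); do
    # The subshell opens fd 3 to the port; it is closed when the subshell exits.
    if (exec 3<>"/dev/tcp/$host/$port") 2>/dev/null; then
      return 0
    fi
    (( i < tries )) && sleep 1
  done
  echo "timed out waiting for $host:$port" >&2
  return 1
}
```

Usage: `wait_for_port localhost 9083 && service impala-state-store start`, then `wait_for_port localhost 24000 && service impala-catalog start`, then `wait_for_port localhost 26000 && service impala-server start`.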

Step 11: Start impala-shell

Run `impala-shell`, then execute the following statement to verify the installation:

```sql
show databases;
```