【Pedestrian Detection】Detecting Pedestrians in an Image

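OpenCV 3.4.0 ships with a pedestrian detection example (for video), found under the installation directory at

..\opencv3_4\opencv\sources\samples\cpp\peopledetect.cpp

This post makes small changes to that sample so that it runs on a single image. The appendix explains the parameters of the key functions used in the program.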

【Environment】VS2017 + OpenCV 3.4.0 + Windows

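Main steps:

1. Declare a HOG descriptor (HOGDescriptor hog).

2. Set the SVM detector.

3. Run multi-scale detection.

4. Draw the detections (rectangles) on the image.

Complete program: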

// Hog_SVM_Pedestrian.cpp: 定义控制台应用程序的入口点。

//图片中的行人检测

#include "stdafx.h"

#include<opencv2/core/core.hpp>

#include<opencv2/highgui/highgui.hpp>

#include<opencv2/imgproc/imgproc.hpp>

#include<opencv2/objdetect.hpp> // include hog

#include<iostream>

using namespace std;

using namespace cv;

void detectAndDraw(HOGDescriptor &hog,Mat &img)

{

vector<Rect> found, found_filtered;

double t = (double)getTickCount();

hog.detectMultiScale(img, found, 0, Size(8, 8), Size(32, 32), 1.05, 2);//多尺度检测目标,返回的矩形从大到小排列

t = (double)getTickCount() - t;

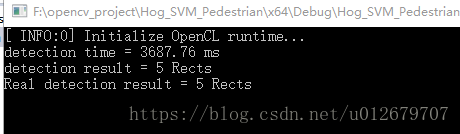

cout << "detection time = " << (t*1000. / cv::getTickFrequency()) << " ms" << endl;

cout << "detection result = " << found.size() << " Rects" << endl;

for (size_t i = 0; i < found.size(); i++)

{

Rect r = found[i];

size_t j;

// Do not add small detections inside a bigger detection. 如果有嵌套的话,则取外面最大的那个矩形框放入found_filtered中

for (j = 0; j < found.size(); j++)

if (j != i && (r & found[j]) == r)

break;

if (j == found.size())

found_filtered.push_back(r);

}

cout << "Real detection result = " << found_filtered.size() << " Rects" << endl;

for (size_t i = 0; i < found_filtered.size(); i++)

{

Rect r = found_filtered[i];

// The HOG detector returns slightly larger rectangles than the real objects,

// hog检测结果返回的矩形比实际的要大一些

// so we slightly shrink the rectangles to get a nicer output.

// r.x += cvRound(r.width*0.1);

// r.width = cvRound(r.width*0.8);

// r.y += cvRound(r.height*0.07);

// r.height = cvRound(r.height*0.8);

rectangle(img, r.tl(), r.br(), cv::Scalar(0, 255, 0), 3);

}

}

int main()

{

Mat img = imread("pedestrian.jpg");

HOGDescriptor hog;

hog.setSVMDetector(HOGDescriptor::getDefaultPeopleDetector() ); //getDefaultPeopleDetector():

//Returns coefficients of the classifier trained for people detection (for 64x128 windows). //Returns coefficients of the classifier trained for people detection (for 64x128 windows).

detectAndDraw(hog, img);

namedWindow("frame");

imshow("frame", img);

while( waitKey(10) != 27) ;

destroyWindow("show");

return 0;

}

运行结果:

------------------------------------------- Appendix ----------------------------------------------

setSVMDetector():

```cpp
/** @brief Sets coefficients for the linear SVM classifier.
@param _svmdetector coefficients for the linear SVM classifier.
*/
CV_WRAP virtual void setSVMDetector(InputArray _svmdetector);
```

getDefaultPeopleDetector():

```cpp
/** @brief Returns coefficients of the classifier trained for people detection (for 64x128 windows).
*/
CV_WRAP static std::vector<float> getDefaultPeopleDetector();
```
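The phrase "for 64x128 windows" matters because the coefficient vector holds one weight per HOG feature of a 64x128 window plus one bias. With the default HOGDescriptor layout, the count works out as follows:

- blocks are 16x16 and move in 8x8 steps;
- cells are 8x8;
- each cell has 9 orientation bins.

That gives 7 × 15 blocks × 4 cells × 9 bins = 3780 features, hence 3781 coefficients. The helper below only does this arithmetic. Its name is hypothetical, and it assumes the window size minus the block size is a multiple of the block stride, as in the default layout.

```cpp
#include <cstddef>

// Hypothetical helper: number of HOG features for one detection window.
// Blocks slide over the window by the block stride; each block holds
// (blockW/cellW)*(blockH/cellH) cells; each cell contributes nbins values.
std::size_t hogDescriptorLength(int winW, int winH, int blockW, int blockH,
                                int strideX, int strideY,
                                int cellW, int cellH, int nbins)
{
    const std::size_t blocksX = static_cast<std::size_t>((winW - blockW) / strideX + 1);
    const std::size_t blocksY = static_cast<std::size_t>((winH - blockH) / strideY + 1);
    const std::size_t cellsPerBlock = static_cast<std::size_t>((blockW / cellW) * (blockH / cellH));
    return blocksX * blocksY * cellsPerBlock * static_cast<std::size_t>(nbins);
}
```

For example, `hogDescriptorLength(64, 128, 16, 16, 8, 8, 8, 8, 9)` gives 3780. A trained linear SVM for that layout therefore has 3780 weights, plus 1 if the bias is stored as the last coefficient.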

detectMultiScale() in detail:

```cpp
/** @brief Detects objects of different sizes in the input image. The detected objects are returned as a list
of rectangles.
@param img Matrix of the type CV_8U (single-channel) or CV_8UC3 (three-channel) containing an image where objects are detected.
@param foundLocations Vector of rectangles where each rectangle contains the detected object.
@param hitThreshold Threshold for the distance between features and SVM classifying plane.
Usually it is 0 and should be specified in the detector coefficients (as the last free coefficient).
But if the free coefficient is omitted (which is allowed), you can specify it manually here.
@param winStride Window stride (how far the detection window slides each step). It must be a multiple of block stride.
The program above uses 8x8, which equals the default cell size and block stride.
@param padding Padding
@param scale Coefficient of the detection window increase (step between pyramid levels).
@param finalThreshold Final threshold, applied when overlapping detections are grouped.
@param useMeanshiftGrouping indicates grouping algorithm (true: mean-shift grouping).
*/
virtual void detectMultiScale(InputArray img, CV_OUT std::vector<Rect>& foundLocations,
                              double hitThreshold = 0, Size winStride = Size(),
                              Size padding = Size(), double scale = 1.05,
                              double finalThreshold = 2.0, bool useMeanshiftGrouping = false) const;
```
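The `scale` and `winStride` arguments largely determine how much work detectMultiScale does. Below is a rough model, assumed here rather than copied from OpenCV's source:

- the image is shrunk by `scale` at each pyramid level until it no longer fits the 64x128 window;
- on each level, the window slides by `winStride`.

Padding is ignored, and the 64-level cap mirrors HOGDescriptor's default `nlevels`. Both helper names are hypothetical.

```cpp
#include <cmath>

// Hypothetical helper: pyramid levels scanned under the simplified model
// (level k shrinks the image by scale^k; stop once the window no longer fits).
int pyramidLevels(int imgW, int imgH, int winW, int winH,
                  double scale, int maxLevels = 64)
{
    int levels = 0;
    double s = 1.0;   // current downscaling factor
    while (levels < maxLevels &&
           std::lround(imgW / s) >= winW &&
           std::lround(imgH / s) >= winH) {
        ++levels;
        if (scale <= 1.0)   // no growth: only the original size is scanned
            break;
        s *= scale;
    }
    return levels;
}

// Hypothetical helper: window positions on one level, ignoring padding.
long windowsPerLevel(int imgW, int imgH, int winW, int winH,
                     int strideX, int strideY)
{
    if (imgW < winW || imgH < winH)
        return 0;
    const long nx = (imgW - winW) / strideX + 1;
    const long ny = (imgH - winH) / strideY + 1;
    return nx * ny;
}
```

Take a 640x480 image with the program's settings (scale 1.05, 8x8 stride). This model gives 28 levels and 3285 window positions on the first level alone. Halving the stride to 4x4 raises the first-level count to 12905, roughly four times as many. Raising `scale` (for example to 1.1) cuts the number of levels, trading some accuracy for speed.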

------------------------------------------- END -------------------------------------

References:

https://blog.csdn.net/masibuaa/article/details/16003847