Parsing Web Pages

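1. The BeautifulSoup parsing library

1. BeautifulSoup is a class that turns raw, hard-to-read HTML into a readable form, much like the page source shown in a browser. It also lets us locate tags and walk the whole HTML tree, and so reach the information in every node.

2. Installation: at the command prompt, run pip install beautifulsoup4

3. Test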

import requests

from bs4 import BeautifulSoup

url = 'http://python123.io/ws/demo.html'

r = requests.get(url)

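print("Raw HTML as fetched\n", r.text)

demo = r.text

soup = BeautifulSoup(demo, "html.parser")  # Parse demo into a "soup" that BeautifulSoup can work with; the second argument selects the standard-library parser

print("After parsing\n", soup.prettify())  # prettify() re-indents the markup, putting each tag and string on its own line; commonly used.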

4. BeautifulSoup converts every input document to Unicode and, by default, encodes its output as UTF-8.
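The encoding behaviour can be checked with a small sketch. The GBK-encoded input below is made up for illustration, and passing from_encoding is just one way to tell the parser how to decode the bytes:

```python
from bs4 import BeautifulSoup

# Hypothetical input: a tiny page stored as GBK bytes rather than UTF-8.
raw = "<html><head><title>中文标题</title></head></html>".encode("gbk")

# from_encoding tells BeautifulSoup how to decode the incoming bytes.
soup = BeautifulSoup(raw, "html.parser", from_encoding="gbk")

title = soup.title.string          # held internally as Unicode text
utf8_bytes = soup.encode("utf-8")  # the document re-encoded as UTF-8 bytes

print(title)
print(type(utf8_bytes))
```

Whatever encoding the bytes arrived in, the text inside the soup is ordinary Unicode, and the encoded output is UTF-8.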

Basic elements of the bs4 library: Tag, Name, Attributes, NavigableString, Comment
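A minimal sketch of the five elements, using a one-line HTML fragment invented for this example rather than the demo page:

```python
from bs4 import BeautifulSoup, Comment, NavigableString

# Hypothetical fragment, chosen so that every element type appears once.
html = '<p class="title" id="intro"><b>Hello</b><!-- a note --></p>'
soup = BeautifulSoup(html, "html.parser")

tag = soup.p               # Tag: the <p> element itself
name = tag.name            # Name: the tag's name as a string
attrs = tag.attrs          # Attributes: a dict of the tag's attributes
text = tag.b.string        # NavigableString: the text inside <b>
comment = tag.contents[1]  # Comment: the <!-- ... --> node after <b>

print(name, attrs)
print(type(text).__name__, type(comment).__name__)
```

Note that class is a multi-valued attribute, so its value comes back as a list, while id comes back as a plain string.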

Traversal in the bs4 library:

Downward: .contents .children .descendants

Upward: .parent .parents

Sideways (among siblings): .next_sibling .previous_sibling .next_siblings .previous_siblings
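The three directions can be sketched on a small fragment. The HTML here is an assumption made up for the example; it is written without whitespace between tags so that no '\n' strings appear among the siblings:

```python
from bs4 import BeautifulSoup
from bs4.element import Tag

html = "<body><p>One <b>bold</b></p><p>Two</p></body>"
soup = BeautifulSoup(html, "html.parser")
body = soup.body

# Downward: .children yields direct children only,
# .descendants yields every nested node in document order.
child_names = [c.name for c in body.children if isinstance(c, Tag)]
descendant_names = [d.name for d in body.descendants if isinstance(d, Tag)]

# Upward: .parent is the enclosing tag.
first_p = body.p
parent_name = first_p.parent.name

# Sideways: .next_sibling / .previous_sibling move within the same parent.
second_p = first_p.next_sibling

print(child_names, descendant_names, parent_name, second_p.get_text())
```

On a real page the siblings usually include whitespace strings between tags, so it is safer to check the node type before using .name or other tag attributes.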

5. A look at a typical HTML document:

<html>

<head>

<title>

This is a python demo page

</title>

</head>

<body>

<p class="title">

<b>

The demo python introduces several python courses.

</b>

</p>

<p class="course">

Python is a wonderful general-purpose programming language. You can learn Python from novice to professional by tracking the following courses:

<a class="py1" href="http://www.icourse163.org/course/BIT-268001" id="link1">

Basic Python

</a>

and

<a class="py2" href="http://www.icourse163.org/course/BIT-1001870001" id="link2">

Advanced Python

</a>

.

</p>

</body>

</html>

Now look at its abstract model:

6. Organizing information

Code for the traversal methods:

>>> import requests

>>> from bs4 import BeautifulSoup

>>> r = requests.get("http://python123.io/ws/demo.html")

>>> soup = BeautifulSoup(r.text,"html.parser")

>>> soup.head  # the head tag of soup

<head><title>This is a python demo page</title></head>

>>> soup.head.contents  # list all direct children of the head tag

[<title>This is a python demo page</title>]

>>> soup.body.contents

['\n', <p class="title"><b>The demo python introduces several python courses.</b></p>, '\n', <p class="course">Python is a wonderful general-purpose programming language. You can learn Python from novice to professional by tracking the following courses:

<a class="py1" href="http://www.icourse163.org/course/BIT-268001" id="link1">Basic Python</a> and <a class="py2" href="http://www.icourse163.org/course/BIT-1001870001" id="link2">Advanced Python</a>.</p>, '\n']

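>>> # The list above holds all direct children of body: tags and the strings at the same level as those tags. Only children are included, never grandchildren.

>>> # .parents and .descendants, by contrast, cover every level: all ancestors above a node, or all nodes nested below it

>>> for parent in soup.a.parents:
...     if parent is None:
...         print(parent)
...     else:
...         print(parent.name)
...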

p

body

html

[document]
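The same ancestor walk can be wrapped in a small helper. ancestor_path is a hypothetical name introduced for this sketch, and the HTML is a trimmed-down stand-in for the demo page:

```python
from bs4 import BeautifulSoup

html = ('<html><body><p class="course">'
        '<a id="link1" href="#">Basic Python</a></p></body></html>')
soup = BeautifulSoup(html, "html.parser")


def ancestor_path(tag):
    """Return the chain from the document root down to tag, joined with ' > '."""
    # .parents yields the nearest ancestor first, so reverse it to start at the root.
    names = [parent.name for parent in tag.parents]
    return " > ".join(list(reversed(names)) + [tag.name])


path = ancestor_path(soup.a)
print(path)
```

The last ancestor yielded by .parents is the BeautifulSoup object itself, whose name is [document], which is why that line appears at the end of the loop output above.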

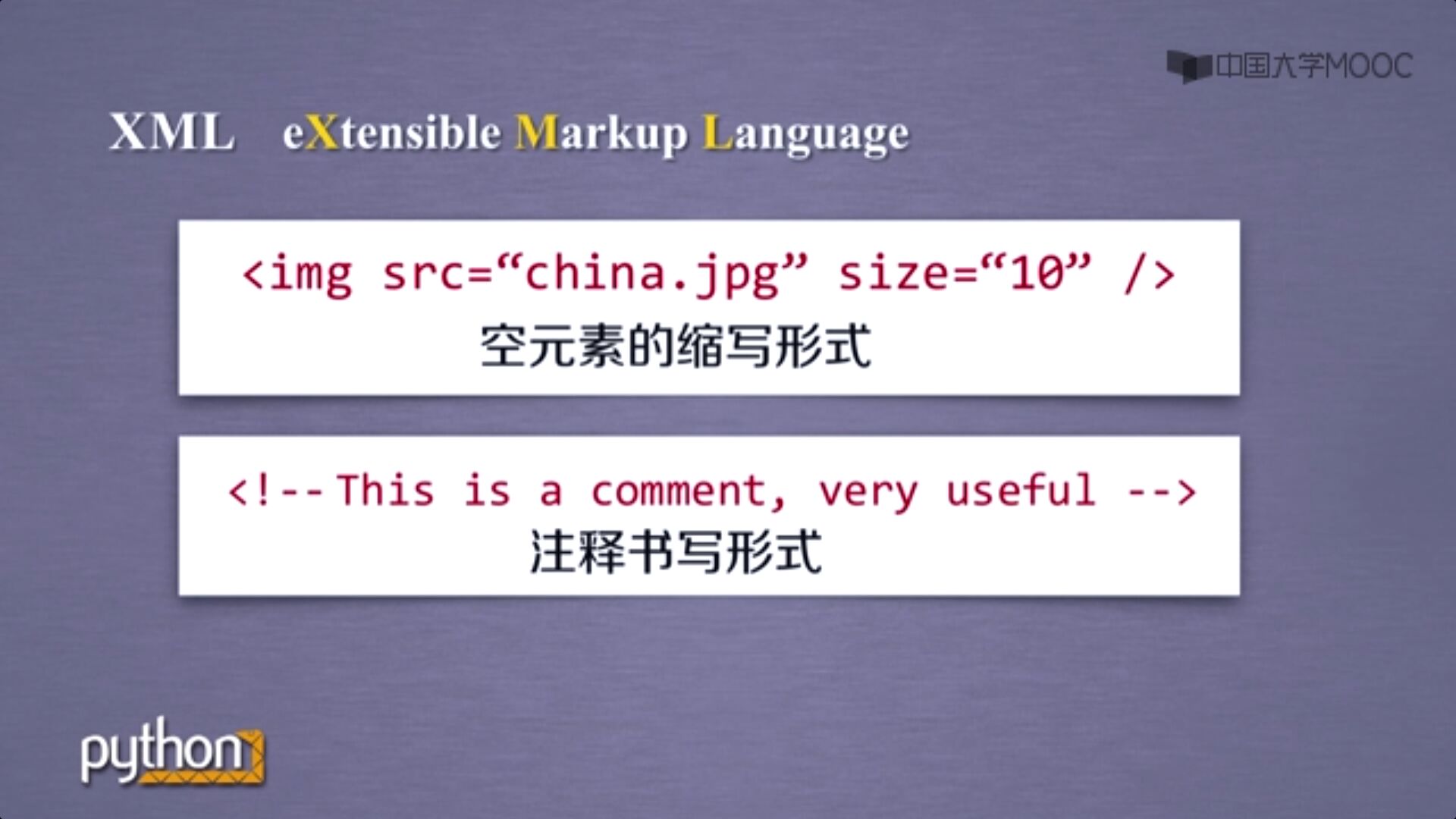

Three ways of marking up information (marked-up information is easier to understand)

XML (HTML), JSON, YAML

HTML, for example, is one form of marked-up information.
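To compare the three formats, here is a sketch that writes the same record in each of them. The course dictionary and its field names are assumptions made for illustration. The YAML text is written by hand, because the Python standard library has no YAML module:

```python
import json
import xml.etree.ElementTree as ET

# Hypothetical record, reusing a course link from the demo page.
course = {
    "name": "Basic Python",
    "url": "http://www.icourse163.org/course/BIT-268001",
}

# JSON: typed key/value pairs.
json_text = json.dumps(course)

# XML: information wrapped in named tags, as HTML does.
root = ET.Element("course")
for key, value in course.items():
    ET.SubElement(root, key).text = value
xml_text = ET.tostring(root, encoding="unicode")

# YAML: untyped key/value pairs, with nesting expressed by indentation.
yaml_text = "name: Basic Python\nurl: http://www.icourse163.org/course/BIT-268001\n"

print(json_text)
print(xml_text)
print(yaml_text)
```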

Methods for extracting information:

>>> import requests

>>> from bs4 import BeautifulSoup

>>> r = requests.get("http://python123.io/ws/demo.html")

>>> soup = BeautifulSoup(r.text,"html.parser")

>>> # Extract information: print the href of every <a> tag

>>> for link in soup.find_all('a'):
...     print(link.get('href'))
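find_all can also filter by attribute. The sketch below works offline on a fragment copied from the demo page; the choice of filters (class_ and id) is only one possible way to narrow the search:

```python
from bs4 import BeautifulSoup

html = """<p class="course">
<a class="py1" href="http://www.icourse163.org/course/BIT-268001" id="link1">Basic Python</a> and
<a class="py2" href="http://www.icourse163.org/course/BIT-1001870001" id="link2">Advanced Python</a>.</p>"""
soup = BeautifulSoup(html, "html.parser")

# Every link target on the page.
hrefs = [a.get("href") for a in soup.find_all("a")]

# Only the <a> tags whose class is "py1" (class_ avoids the Python keyword).
py1_links = soup.find_all("a", class_="py1")

# find() returns the first match, or None if nothing matches.
link2 = soup.find(id="link2")

print(hrefs)
print(py1_links[0].get_text(), link2["href"])
```

link.get('href') returns None when the attribute is missing, whereas link['href'] raises KeyError, so get is the safer choice when looping over arbitrary pages.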