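I. Introduction to LVS

LVS is short for Linux Virtual Server, a virtual server cluster system. The project was founded in May 1998 by Dr. Wensong Zhang and is one of the earliest free software projects in China.

- LVS components: one or more VIPs (virtual IPs) plus multiple real servers.

- How LVS works, in brief: a client sends a request to the LVS VIP; LVS forwards the request to a backend server according to the configured forwarding mode and scheduling algorithm; the backend server receives the request and returns the response to the client. The backend web applications are invisible to the client.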

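II. LVS load-balancing modes

- LVS supports three forwarding modes: NAT, DR (direct routing), and TUN (IP tunneling). Its scheduling algorithms include RR (round-robin), LC (least-connection), WRR (weighted round-robin), and WLC (weighted least-connection), among others.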
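To make the difference between RR and WRR concrete, here is a minimal bash sketch. It only mimics the selection order; it does not talk to the kernel, and the function names `rr_pick` and `wrr_expand` are made up for illustration. The real IPVS wrr scheduler interleaves servers rather than repeating them back to back, so treat the weighted part as a naive approximation.

```bash
#!/usr/bin/env bash
# Illustrative only: sample addresses and weights, not read from ipvsadm.

# rr_pick INDEX SERVER...: print the server that plain round-robin
# would choose for the INDEX-th request (0-based).
rr_pick() {
  local n=$1; shift
  local servers=("$@")
  echo "${servers[n % ${#servers[@]}]}"
}

# wrr_expand SERVER=WEIGHT...: naive weighted order, each server is
# listed WEIGHT times per cycle.
wrr_expand() {
  local pair server weight i
  for pair in "$@"; do
    server=${pair%%=*}
    weight=${pair##*=}
    for ((i = 0; i < weight; i++)); do
      echo "$server"
    done
  done
}

# Four requests under rr alternate between the two backends.
for i in 0 1 2 3; do
  rr_pick "$i" 192.168.10.135 192.168.10.136
done
```

With equal weights the two schedulers behave the same; the weight only matters once the backends differ in capacity.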

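(1) How LVS NAT works:

A client request to the LVS VIP arrives at the director (the LVS server, i.e. the load balancer, LB). The director rewrites the packet's destination IP address to the IP of the selected real server and the destination port to that real server's port, then sends the packet to the real server. The real server returns the response to the director, which forwards it to the client. Because both the request and the response pass through the director, the director becomes a bottleneck under heavy traffic.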
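Since NAT mode relies on the director forwarding packets in both directions, it helps to confirm that IPv4 forwarding is enabled there. The following is a small sketch under that assumption; the function name `ip_forward_state` and its optional file argument are illustrative, and the gateway address in the comment is a placeholder.

```bash
#!/usr/bin/env bash
# ip_forward_state [FILE]: print "enabled" or "disabled" depending on the
# kernel's IPv4 forwarding flag. FILE defaults to the real /proc entry.
ip_forward_state() {
  local file=${1:-/proc/sys/net/ipv4/ip_forward}
  if [ "$(cat "$file" 2>/dev/null)" = "1" ]; then
    echo enabled
  else
    echo disabled
  fi
}

ip_forward_state

# To enable forwarding on the director (as root):
#   sysctl -w net.ipv4.ip_forward=1
# On each real server, replies must be routed back through the director, e.g.:
#   ip route replace default via <director-internal-ip>
```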

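(2) How LVS DR works:

A client request to the LVS VIP arrives at the director (the load balancer). The director rewrites the frame's destination MAC address to the MAC of the selected real server; the destination IP stays the VIP and the source IP stays the client IP. The director then sends the frame to the real server. The real server sees that the destination is a VIP configured locally, processes the request, and returns the response to the client without going back through the director: directly if the client is on the same network segment, otherwise through the gateway.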

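III. LVS load balancing in practice

1. NAT mode with ipvsadm:

LVS is implemented by the Linux kernel module IP_VS and, like iptables, does its work inside the kernel. Mainstream Linux distributions ship the ipvs module by default, so only the management tool ipvsadm needs to be installed: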

yum install ipvsadm -y

- Configuration:

ipvsadm -A -t 192.168.10.16:80 -s rr

ipvsadm -a -t 192.168.10.16:80 -r 192.168.10.135 -m -w 2

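ipvsadm -a -t 192.168.10.16:80 -r 192.168.10.136 -m -w 2

- Parameter reference:

-A adds a virtual server (VIP address);

-t the virtual server provides a TCP service;

-s the scheduling algorithm to use;

-a adds a backend real server to a virtual server;

-r the address of the real server;

-w the weight of the real server (only honored by weighted schedulers such as wrr and wlc);

-m sets the forwarding mode to NAT; -g selects direct routing (DR); -i selects tunneling (TUN).

This configures a virtual server cluster with VIP 192.168.10.16 on port 80 and real servers 192.168.10.135 and 192.168.10.136 on port 80. Requests to 192.168.10.16:80 are forwarded in round-robin (rr) order to port 80 on hosts 135 and 136.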

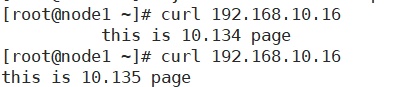

Test:

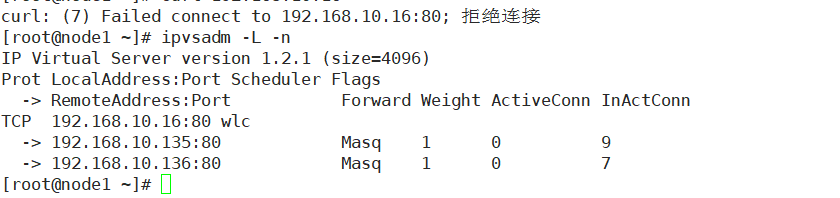

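This approach has a drawback, however: when the service on a backend server (135 or 136) goes down, for example when nginx on 136 stops, requests to the VIP fail:

In other words, ipvsadm does not remove a faulty server automatically:

This is where keepalived comes in: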

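- It checks the state of the servers according to configured rules and removes or re-adds them automatically, so users never notice that a backend server is down. Once a failed web server is working again, keepalived adds it back to the pool. All of this happens without manual intervention; the only manual task is repairing the faulty web server.

- keepalived also provides HA failover: with a standby LVS in place, the LVS VIP moves to the standby automatically when the primary LVS goes down. LVS + keepalived therefore delivers both load balancing and high availability, keeping a site running stably around the clock.

Keepalived performs health checks at three layers (the IP layer, the TCP transport layer, and the application layer), mainly to monitor the state of the web servers. If a web server crashes or malfunctions, keepalived detects it and removes the faulty server from the pool.

Note that when keepalived.conf is used, there is no need to run ipvsadm -A or ipvsadm -a to add the virtual server and its real servers; everything is configured in keepalived.conf.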

2. NAT mode with keepalived

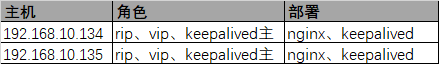

- Requirement: build a server cluster with VIP 192.168.10.16 and RIPs 192.168.10.134, 192.168.10.135, and 192.168.10.136.

2.1 Environment

2.2 keepalived master configuration

192.168.10.134

Clear the existing ipvsadm configuration:

Edit /etc/keepalived/keepalived.conf:
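The command used for this step is not shown in the original. A reasonable way to do it is `ipvsadm -C`, which clears the whole virtual server table. The sketch below wraps it in a hypothetical `run` helper that only prints the commands unless `DRY_RUN=0` is set, so it can be tried safely on a machine without ipvsadm.

```bash
#!/usr/bin/env bash
# run CMD...: print the command by default; execute it only when DRY_RUN=0.
run() {
  if [ "${DRY_RUN:-1}" = "0" ]; then
    "$@"
  else
    echo "+ $*"
  fi
}

# reset_ipvs: clear all virtual servers, then list the (now empty) table.
reset_ipvs() {
  run ipvsadm -C
  run ipvsadm -L -n
}

reset_ipvs
```

Run it as root with `DRY_RUN=0` to actually clear the table before handing control to keepalived.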

[root@node1 ~]# cat /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id node1

}

vrrp_script chk_nginx {

script "/data/sh/check_nginx.sh"

interval 2

weight -20

}

vrrp_instance VI_1 {

state MASTER

interface ens33

virtual_router_id 51

priority 100

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

192.168.10.16

}

}

virtual_server 192.168.10.16 80 {

delay_loop 1

lb_algo rr

lb_kind NAT

persistence_timeout 0

protocol TCP

real_server 192.168.10.135 80 {

weight 10

TCP_CHECK {

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

connect_port 80

}

}

real_server 192.168.10.134 80 {

weight 10

TCP_CHECK {

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

connect_port 80

}

}

}
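The configuration refers to /data/sh/check_nginx.sh, but the script itself is not shown. What follows is a hypothetical stand-in, not the author's script: it reports whether a process named nginx exists by scanning /proc, and the helper name `process_running` is invented for this sketch. Note also that a `vrrp_script` block only takes effect when the `vrrp_instance` references it in a `track_script { chk_nginx }` block, which the listing above does not include.

```bash
#!/usr/bin/env bash
# Hypothetical replacement for /data/sh/check_nginx.sh (the original is not shown).

# process_running NAME: succeed if any process has exactly this command name.
# Reads /proc/<pid>/comm, so it works on Linux without pgrep or pidof.
process_running() {
  local name=$1
  grep -qsx -- "$name" /proc/[0-9]*/comm
}

if process_running nginx; then
  echo "nginx is running"
else
  echo "nginx is not running"
fi

# When used as a vrrp_script, the file would instead end with:
#   process_running nginx || exit 1
# because keepalived treats a non-zero exit status as a failed check.
```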

2.3 keepalived backup configuration

192.168.10.135: edit /etc/keepalived/keepalived.conf:

[root@node2 keepalived]# cat /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id node2

}

vrrp_script chk_nginx {

script "/data/sh/check_nginx.sh"

interval 2

weight -20

}

vrrp_instance VI_1 {

state BACKUP

interface ens33

virtual_router_id 51

priority 90

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

192.168.10.16

}

}

virtual_server 192.168.10.16 80 {

delay_loop 1

lb_algo rr

lb_kind NAT

persistence_timeout 0

protocol TCP

real_server 192.168.10.135 80 {

weight 10

TCP_CHECK {

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

connect_port 80

}

}

real_server 192.168.10.134 80 {

weight 10

TCP_CHECK {

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

connect_port 80

}

}

}
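The priorities (100 on the master, 90 on the backup) and the script weight of -20 are chosen so that a failing check can hand the VIP to the backup. The arithmetic can be sketched as below, assuming the check script is actually tracked by the instance through `track_script`; the function name `effective_priority` is illustrative. With a negative weight keepalived lowers the priority while the script fails, and with a positive weight it raises the priority while the script succeeds.

```bash
#!/usr/bin/env bash
# effective_priority BASE WEIGHT SCRIPT_OK
# SCRIPT_OK is 1 when the tracked script succeeds, 0 when it fails.
effective_priority() {
  local base=$1 weight=$2 ok=$3
  if (( weight < 0 && ok == 0 )); then
    echo $(( base + weight ))   # failing check lowers the priority
  elif (( weight > 0 && ok == 1 )); then
    echo $(( base + weight ))   # passing check raises the priority
  else
    echo "$base"
  fi
}

master=$(effective_priority 100 -20 0)  # nginx check failing on the master
backup=$(effective_priority 90 -20 1)   # nginx check passing on the backup
echo "master=$master backup=$backup"
```

Here the master drops to 80, below the backup's 90, so the backup would win the VRRP election and take over the VIP.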

2.4 Testing

Start keepalived on 192.168.10.134 and 192.168.10.135 so that master and backup run at the same time:

(1) Start nginx on the three RIPs 192.168.10.134, 192.168.10.135, and 192.168.10.136:

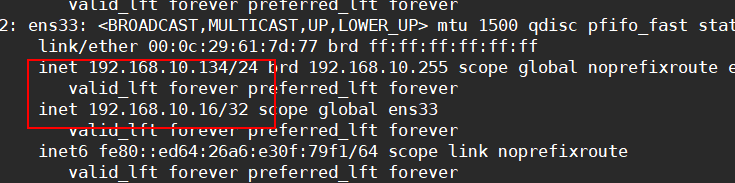

The VIP is bound on the MASTER, 192.168.10.134:

List the ipvs table:

[root@node1 ~]# ipvsadm -L -n

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 192.168.10.16:80 rr

-> 192.168.10.134:80 Masq 10 0 0

-> 192.168.10.135:80 Masq 10 0 0

-> 192.168.10.136:80 Masq 10 0 0

The log shows gratuitous ARP packets being sent:

Apr 13 16:47:24 test_kubeadm_web Keepalived_vrrp[103964]: Sending gratuitous ARP on ens33 for 192.168.10.16

Apr 13 16:47:24 test_kubeadm_web Keepalived_vrrp[103964]: VRRP_Instance(VI_1) Sending/queueing gratuitous ARPs on ens33 for 192.168.10.16

Apr 13 16:47:24 test_kubeadm_web Keepalived_vrrp[103964]: Sending gratuitous ARP on ens33 for 192.168.10.16

Apr 13 16:47:24 test_kubeadm_web Keepalived_vrrp[103964]: Sending gratuitous ARP on ens33 for 192.168.10.16

Apr 13 16:47:24 test_kubeadm_web Keepalived_vrrp[103964]: Sending gratuitous ARP on ens33 for 192.168.10.16

The keepalived log on the BACKUP host 192.168.10.135 shows it entering BACKUP state:

[root@node2 keepalived]# tail /var/log/keepalived.log

Apr 13 06:47:18 node2 Keepalived_vrrp[70905]: VRRP_Instance(VI_1) Sending/queueing gratuitous ARPs on ens33 for 192.168.10.16

Apr 13 06:47:18 node2 Keepalived_vrrp[70905]: Sending gratuitous ARP on ens33 for 192.168.10.16

Apr 13 06:47:18 node2 Keepalived_vrrp[70905]: Sending gratuitous ARP on ens33 for 192.168.10.16

Apr 13 06:47:18 node2 Keepalived_vrrp[70905]: Sending gratuitous ARP on ens33 for 192.168.10.16

Apr 13 06:47:18 node2 Keepalived_vrrp[70905]: Sending gratuitous ARP on ens33 for 192.168.10.16

Apr 13 06:47:18 node2 Keepalived_vrrp[70905]: VRRP_Instance(VI_1) Received advert with higher priority 100, ours 90

Apr 13 06:47:18 node2 Keepalived_vrrp[70905]: VRRP_Instance(VI_1) Entering BACKUP STATE


(2) Stop nginx on one RIP, 192.168.10.136

[root@node3 ~]# systemctl stop nginx

[root@node3 ~]#

Log on 134:

Apr 13 16:53:51 test_kubeadm_web Keepalived_healthcheckers[103963]: TCP connection to [192.168.10.136]:80 failed.

Apr 13 16:53:54 test_kubeadm_web Keepalived_healthcheckers[103963]: TCP connection to [192.168.10.136]:80 failed.

Apr 13 16:53:54 test_kubeadm_web Keepalived_healthcheckers[103963]: Check on service [192.168.10.136]:80 failed after 1 retry.

Apr 13 16:53:54 test_kubeadm_web Keepalived_healthcheckers[103963]: Removing service [192.168.10.136]:80 from VS [192.168.10.16]:80

Log on 135, consistent with the MASTER:

Apr 13 06:53:52 node2 Keepalived_healthcheckers[70904]: TCP connection to [192.168.10.136]:80 failed.

Apr 13 06:53:55 node2 Keepalived_healthcheckers[70904]: TCP connection to [192.168.10.136]:80 failed.

Apr 13 06:53:55 node2 Keepalived_healthcheckers[70904]: Check on service [192.168.10.136]:80 failed after 1 retry.

Apr 13 06:53:55 node2 Keepalived_healthcheckers[70904]: Removing service [192.168.10.136]:80 from VS [192.168.10.16]:80

The ipvs table shows that host 136 has been removed from the RIP pool automatically:

[root@node1 ~]# ipvsadm -L -n

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 192.168.10.16:80 rr

-> 192.168.10.134:80 Masq 10 0 0

-> 192.168.10.135:80 Masq 10 0 0

Requests are still forwarded correctly:

[root@node1 ~]# curl 192.168.10.16

this is 10.134 page

[root@node1 ~]# curl 192.168.10.16

this is 10.135 page

[root@node1 ~]# curl 192.168.10.16

this is 10.134 page

[root@node1 ~]# curl 192.168.10.16

this is 10.135 page

[root@node1 ~]#

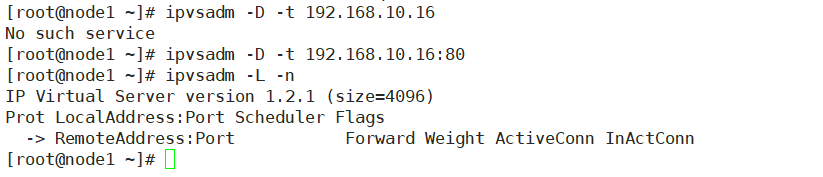

(3) Stop keepalived on host 192.168.10.134

[root@node1 ~]# systemctl stop keepalived.service

[root@node1 ~]# tail /var/log/keepalived.log

Apr 13 16:53:54 test_kubeadm_web Keepalived_healthcheckers[103963]: Check on service [192.168.10.136]:80 failed after 1 retry.

Apr 13 16:53:54 test_kubeadm_web Keepalived_healthcheckers[103963]: Removing service [192.168.10.136]:80 from VS [192.168.10.16]:80

Apr 13 16:56:41 test_kubeadm_web Keepalived[103962]: Stopping

Apr 13 16:56:41 test_kubeadm_web Keepalived_vrrp[103964]: VRRP_Instance(VI_1) sent 0 priority

Apr 13 16:56:41 test_kubeadm_web Keepalived_vrrp[103964]: VRRP_Instance(VI_1) removing protocol VIPs.

Apr 13 16:56:41 test_kubeadm_web Keepalived_healthcheckers[103963]: Removing service [192.168.10.135]:80 from VS [192.168.10.16]:80

Apr 13 16:56:41 test_kubeadm_web Keepalived_healthcheckers[103963]: Removing service [192.168.10.134]:80 from VS [192.168.10.16]:80

Apr 13 16:56:41 test_kubeadm_web Keepalived_healthcheckers[103963]: Stopped

Apr 13 16:56:42 test_kubeadm_web Keepalived_vrrp[103964]: Stopped

Apr 13 16:56:42 test_kubeadm_web Keepalived[103962]: Stopped Keepalived v1.3.5 (03/19,2017), git commit v1.3.5-6-g6fa32f2

Host 134 no longer has an ipvs virtual server:

[root@node1 ~]# ipvsadm -L -n

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

Host 135 shows that it has switched to MASTER:

Apr 13 06:56:42 node2 Keepalived_vrrp[70905]: VRRP_Instance(VI_1) Transition to MASTER STATE

Apr 13 06:56:43 node2 Keepalived_vrrp[70905]: VRRP_Instance(VI_1) Entering MASTER STATE

Apr 13 06:56:43 node2 Keepalived_vrrp[70905]: VRRP_Instance(VI_1) setting protocol VIPs.

Apr 13 06:56:43 node2 Keepalived_vrrp[70905]: Sending gratuitous ARP on ens33 for 192.168.10.16

Apr 13 06:56:43 node2 Keepalived_vrrp[70905]: VRRP_Instance(VI_1) Sending/queueing gratuitous ARPs on ens33 for 192.168.10.16

Apr 13 06:56:43 node2 Keepalived_vrrp[70905]: Sending gratuitous ARP on ens33 for 192.168.10.16

ipvs table on 135:

[root@node2 keepalived]# ipvsadm -L -n

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 192.168.10.16:80 rr

-> 192.168.10.134:80 Masq 10 0 0

-> 192.168.10.135:80 Masq 10 0 0

[root@node2 keepalived]#

3. DR mode with keepalived

- Environment:

3.1 Forwarding flow overview:

- Forwarding process:

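1) The client, with address CIP, sends a request to the VIP. There are routers in between, which are omitted here for simplicity.

2) The VIP has to be configured on the director and on every Real Server. When a request reaches the front-end router of the cluster network, the packet has source address CIP and destination address VIP, and the router broadcasts an ARP request asking who owns the VIP. Because every node in the cluster has the VIP configured, whichever node answers first would receive the request, and scheduling would be pointless. To avoid this, the Real Servers are configured not to answer ARP requests for the VIP, so client packets only ever reach the VIP on the director.

3) When the director receives the request, it picks a Real Server according to the configured scheduling algorithm. Suppose the algorithm selects Real Server 1: the director rewrites the destination MAC address of the frame to the MAC address of Real Server 1 and sends the frame out.

4) Real Server 1 receives a packet with source address CIP and destination address VIP. It finds that the VIP is one of its own addresses, so it accepts and processes the packet. When it has finished, it sends out a packet with source address VIP and destination address CIP, which goes to the client without passing back through the director.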

3.2 keepalived configuration:

1) Configuration on 192.168.10.134:

[root@node1 keepalived]# cat /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id node1

}

vrrp_script chk_nginx {

script "/data/sh/check_nginx.sh"

interval 2

weight -20

}

vrrp_instance VI_1 {

state MASTER

interface ens33

virtual_router_id 51

priority 100

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

192.168.10.16/24

}

}

virtual_server 192.168.10.16 80 {

delay_loop 1

lb_algo rr

lb_kind DR

persistence_timeout 0

protocol TCP

real_server 192.168.10.134 80 {

weight 10

TCP_CHECK {

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

connect_port 80

}

}

real_server 192.168.10.135 80 {

weight 10

TCP_CHECK {

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

connect_port 80

}

}

}

2) Configuration on 192.168.10.135:

[root@node2 ~]# cat /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id node2

}

vrrp_script chk_nginx {

script "/data/sh/check_nginx.sh"

interval 2

weight -20

}

vrrp_instance VI_1 {

state BACKUP

interface ens33

virtual_router_id 51

priority 90

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

192.168.10.16

}

}

virtual_server 192.168.10.16 80 {

delay_loop 1

lb_algo rr

lb_kind DR

persistence_timeout 0

protocol TCP

real_server 192.168.10.135 80 {

weight 10

TCP_CHECK {

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

connect_port 80

}

}

real_server 192.168.10.134 80 {

weight 10

TCP_CHECK {

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

connect_port 80

}

}

}

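3.3 RIP configuration

1) Bind the VIP to the lo:1 interface, so that when a RIP receives a request whose destination IP is the VIP, it finds the VIP on a local interface and processes the request.

2) Configure ARP suppression

# ARP suppression:

# arp_ignore defaults to 0: reply to an ARP request as long as the requested IP is configured on any interface of this machine. With 1, reply only if the requested IP is configured on the interface that received the request.

# arp_announce defaults to 0: any local address on any interface may be used as the source address in ARP announcements. With 2, always use the best local address for the target network, i.e. one on the outgoing interface that matches the target's subnet.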

echo 1 > /proc/sys/net/ipv4/conf/all/arp_ignore

echo 2 > /proc/sys/net/ipv4/conf/all/arp_announce
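After writing these two values it is worth reading them back, since a real server that still answers ARP for the VIP silently breaks DR scheduling. The check below is a minimal sketch; the function names `arp_setting` and `arp_suppressed` and the optional directory argument are assumptions made for this example.

```bash
#!/usr/bin/env bash
# arp_setting NAME [BASE_DIR]: print the current value of one ARP sysctl.
arp_setting() {
  local name=$1 base=${2:-/proc/sys/net/ipv4/conf/all}
  cat "$base/$name" 2>/dev/null
}

# arp_suppressed [BASE_DIR]: succeed only if arp_ignore=1 and arp_announce=2.
arp_suppressed() {
  [ "$(arp_setting arp_ignore "$1")" = "1" ] &&
    [ "$(arp_setting arp_announce "$1")" = "2" ]
}

if arp_suppressed; then
  echo "ARP suppression is active"
else
  echo "ARP suppression is NOT active"
fi

# The echo commands do not survive a reboot. To persist the values, add
# these lines to /etc/sysctl.conf and apply them with: sysctl -p
#   net.ipv4.conf.all.arp_ignore = 1
#   net.ipv4.conf.all.arp_announce = 2
```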

The RIP configuration can be wrapped in a one-shot script like this:

[root@node2 ~]# cat /data/sh/keepalived2.sh

#!/bin/bash

#by cuicj

#############################

VIP=192.168.10.16

RIP=192.168.10.135

INTERFACE=lo:1

GATEWAY=192.168.10.134

case "$1" in

start)

cd /etc/sysconfig/network-scripts/

cat > ifcfg-$INTERFACE <<-EOF

DEVICE=$INTERFACE

IPADDR=$VIP

NETMASK=255.255.255.255

ONBOOT=yes

NAME=loopback

EOF

ifdown "$INTERFACE" &>/dev/null; ifup "$INTERFACE"

echo 1 >/proc/sys/net/ipv4/conf/all/arp_ignore

echo 2 >/proc/sys/net/ipv4/conf/all/arp_announce

route add default gw $GATEWAY &>/dev/null

;;

stop)

ifdown $INTERFACE

cd /etc/sysconfig/network-scripts/

rm -rf ifcfg-$INTERFACE

route del default gw $GATEWAY &>/dev/null

;;

status)

echo "VIP: `ip a | awk '/lo:1/{print $2}'`"

echo "GATEWAY: `route -n | awk 'NR==3{print $2}'`"

;;

*)

echo "$0: Usage: $0 {start|status|stop}"

exit 1

;;

esac

Run the script on 192.168.10.134 and 192.168.10.135, then start keepalived. Afterwards each host shows the following:

134:

135:

3.4 DR mode testing:

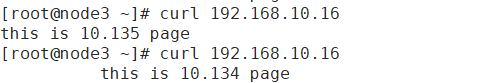

1) Round-robin:

From the client (CIP 192.168.10.136), access the VIP 192.168.10.16
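One way to check the distribution from the client is to request the VIP several times and count the distinct responses. This is a rough sketch: `poll_vip` and `tally` are hypothetical helper names, and it assumes each backend serves a page that identifies it, like the "this is 10.134 page" text used in the NAT test.

```bash
#!/usr/bin/env bash
# tally: count identical response lines read from stdin, sorted for stable output.
tally() {
  awk '{ count[$0]++ } END { for (line in count) print count[line], line }' | sort
}

# poll_vip URL N: request URL N times and print each response body.
poll_vip() {
  local url=$1 n=$2 i
  for ((i = 0; i < n; i++)); do
    curl -s --max-time 2 "$url"
  done
}

# Usage on the client:
#   poll_vip http://192.168.10.16/ 10 | tally
```

Under rr with two healthy backends, the two counts should come out roughly equal.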

2) Automatic removal and recovery of a failed host:

Stop nginx on host 134:

[root@node1 keepalived]# systemctl stop nginx

[root@node1 keepalived]# ipvsadm -L -n

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 192.168.10.16:80 rr

-> 192.168.10.135:80 Route 10 0 2

[root@node1 keepalived]#

136 only gets responses from host 135:

Restore nginx on host 134:

[root@node1 keepalived]# systemctl start nginx

[root@node1 keepalived]# ipvsadm -L -n

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 192.168.10.16:80 rr

-> 192.168.10.134:80 Route 10 0 0

-> 192.168.10.135:80 Route 10 0 5

[root@node1 keepalived]#

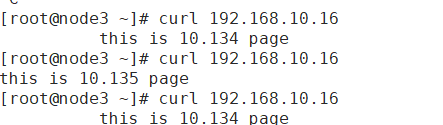

136 gets round-robin responses again:

3) HA (high availability) test:

Stop keepalived on host 134:

[root@node1 keepalived]# systemctl stop keepalived

[root@node1 keepalived]# ipvsadm -L -n

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

[root@node1 keepalived]#

135 takes over and becomes MASTER:

[root@node2 ~]# ipvsadm -L -n

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 192.168.10.16:80 rr

-> 192.168.10.134:80 Route 10 0 0

-> 192.168.10.135:80 Route 10 0 0

[root@node2 ~]#

Access the VIP from 136 to verify round-robin: