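The Linux kernel interval tree is a data structure for storing intervals and querying them efficiently. It is used across many kernel subsystems, such as filesystems, memory management, and virtualization. Each node in the tree represents one interval and stores a key, which is normally the interval's left endpoint.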
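To make "querying intervals" concrete, here is a minimal user-space sketch (not kernel code) of the overlap test that such a query answers, together with a brute-force O(n) reference query. The names `closed_interval`, `intervals_overlap` and `count_overlaps` are hypothetical and exist only for this illustration; the sketch assumes both endpoints are inclusive.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical illustration type; this is not a kernel structure. */
struct closed_interval {
	uint64_t start; /* first value inside the interval */
	uint64_t last;  /* last value inside the interval (inclusive) */
};

/* Two closed intervals overlap iff each one starts no later than the other ends. */
static bool intervals_overlap(struct closed_interval a, struct closed_interval b)
{
	return a.start <= b.last && b.start <= a.last;
}

/* Brute-force reference query: count the stored intervals that overlap the query. */
static size_t count_overlaps(const struct closed_interval *set, size_t n,
			     struct closed_interval query)
{
	size_t i, hits = 0;

	for (i = 0; i < n; i++)
		if (intervals_overlap(set[i], query))
			hits++;
	return hits;
}
```

An interval tree answers the same question without visiting every stored interval. Applied to the ten intervals that `interval_tree_test()` inserts in the listing further down, a query for [103, 189] matches four of them: [100, 111], [180, 211], [9, 1000] and [105, 190].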

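Concretely, the stock implementation (struct interval_tree_node in <linux/interval_tree.h>) uses unsigned long keys. Each node holds two unsigned long fields, start and last, which are the left and right endpoints of the interval the node represents; both endpoints are inclusive. The generic template in <linux/interval_tree_generic.h>, used in the code below, lets the caller pick the node type and key type, which is why the example uses uint64_t. A node can also be associated with other data, for example the inode structure that describes a file, so a query returns the matching intervals and leads directly to the data attached to them.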
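The role of the extra `__subtree_last` field that appears in the listing below can be sketched in user space. This is a simplified illustration under stated assumptions, not the kernel's implementation: it uses a plain unbalanced binary search tree instead of a red-black tree, and the names `it_node`, `it_insert` and `it_first_overlap` are hypothetical. Each node caches the largest `last` found in its subtree, which lets a query skip any subtree whose intervals all end before the query starts.

```c
#include <stddef.h>
#include <stdint.h>

/* Hypothetical user-space node mirroring the start/last/__subtree_last idea. */
struct it_node {
	uint64_t start, last;   /* closed interval [start, last] */
	uint64_t subtree_last;  /* largest 'last' in this node's subtree */
	struct it_node *left, *right;
};

/* Insert keyed by start. Unbalanced on purpose; the kernel rebalances. */
static struct it_node *it_insert(struct it_node *root, struct it_node *n)
{
	struct it_node **link = &root;

	n->left = n->right = NULL;
	n->subtree_last = n->last;
	while (*link) {
		struct it_node *cur = *link;

		/* n will end up below cur, so cur's cached maximum may grow. */
		if (cur->subtree_last < n->last)
			cur->subtree_last = n->last;
		link = (n->start < cur->start) ? &cur->left : &cur->right;
	}
	*link = n;
	return root;
}

/* Return the overlapping node with the smallest start, or NULL if none. */
static struct it_node *it_first_overlap(struct it_node *n,
					uint64_t start, uint64_t last)
{
	struct it_node *hit;

	/* Prune: every interval in this subtree ends before the query starts. */
	if (!n || n->subtree_last < start)
		return NULL;
	hit = it_first_overlap(n->left, start, last);
	if (hit)
		return hit;
	/* This node and everything to its right start after the query ends. */
	if (n->start > last)
		return NULL;
	if (n->last >= start)
		return n;
	return it_first_overlap(n->right, start, last);
}
```

Inserting the ten intervals from the listing below in the same order and calling `it_first_overlap(root, 103, 189)` returns the node [9, 1000], since it is the overlapping interval with the smallest start. The kernel macro generates a comparable search on top of its red-black tree and keeps the cached maximum up to date through rotations.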

#include <linux/init.h>

#include <linux/module.h>

#include <linux/moduleparam.h>

#include <linux/stat.h>

#include <linux/fs.h>

#include <linux/namei.h>

#include <linux/kdev_t.h>

#include <linux/cdev.h>

#include <linux/slab.h>

#include <linux/device.h>

#include <linux/seq_file.h>

#include <linux/sched/signal.h>

#include <linux/proc_fs.h>

#include <linux/pid.h>

#include <linux/pci.h>

#include <linux/usb.h>

#include <linux/kobject.h>

#include <linux/sched/mm.h>

#include <linux/platform_device.h>

#include <linux/netdevice.h>

#include <net/net_namespace.h>

#include <linux/sched/debug.h>

#include <linux/topology.h>

#include <linux/version.h>

#include <linux/fs_struct.h>

#include <linux/mount.h>

#include <linux/i2c.h>

#include <linux/backing-dev-defs.h>

#include <linux/dmi.h>

#include <linux/delay.h>

#include <linux/interval_tree_generic.h>

int seqfile_debug_mode = 0;

EXPORT_SYMBOL(seqfile_debug_mode);

module_param(seqfile_debug_mode, int, 0664);

int pid_number = -1;

EXPORT_SYMBOL(pid_number);

module_param(pid_number, int, 0664);

static int kobj_created = 0;

static struct kset *class_zilong;

static struct kobject kobj;

static void kill_processes(int pid_nr);

//========================================================================================================================================

struct va_mapping {

void *cookie;

struct rb_node rb;

uint64_t start;

uint64_t last;

uint64_t __subtree_last;

};

#define START(node) ((node)->start)

#define LAST(node) ((node)->last)

//bool zlcao_vm_it_augment_compute_max(struct va_mapping *node, bool exit);

//void zlcao_vm_it_augment_propagate(struct rb_node *rb, struct rb_node *stop);

//void zlcao_vm_it_augment_copy(struct rb_node *rb_old, struct rb_node *rb_new);

//void zlcao_vm_it_augment_rotate(struct rb_node *rb_old, struct rb_node *rb_new);

//void zlcao_vm_it_insert(struct va_mapping *node, struct rb_root_cached *root);

//void zlcao_vm_it_remove(struct va_mapping *node, struct rb_root_cached *root);

//struct va_mapping *zlcao_vm_it_subtree_search(struct va_mapping *node, uint64_t start, uint64_t last);

//struct va_mapping *zlcao_vm_it_iter_first(struct rb_root_cached *root, uint64_t start, uint64_t last);

//struct va_mapping *zlcao_vm_it_iter_next(struct va_mapping *node, uint64_t start, uint64_t last);

//static const struct rb_augment_callbacks zlcao_vm_it_augment = { .propagate = zlcao_vm_it_augment_propagate, .copy = zlcao_vm_it_augment_copy, .rotate = zlcao_vm_it_augment_rotate };

INTERVAL_TREE_DEFINE(struct va_mapping, rb, uint64_t, __subtree_last,START, LAST, static, zlcao_vm_it)

static struct rb_root_cached root;

void interval_tree_test(struct seq_file *m)

{

struct va_mapping *va, *vb, *vc, *vtmp;

struct rb_node *tmp;

va = kmalloc_array(10, sizeof(*va), GFP_KERNEL);

if(va == NULL) {

printk("%s line %d, failure.\n", __func__, __LINE__);

return;

}

va[0].start = 3;

va[0].last = 4;

zlcao_vm_it_insert(&va[0], &root);

va[1].start = 5;

va[1].last = 7;

zlcao_vm_it_insert(&va[1], &root);

va[2].start = 9;

va[2].last = 11;

zlcao_vm_it_insert(&va[2], &root);

va[3].start = 100;

va[3].last = 111;

zlcao_vm_it_insert(&va[3], &root);

va[4].start = 180;

va[4].last = 211;

zlcao_vm_it_insert(&va[4], &root);

va[5].start = 280;

va[5].last = 311;

zlcao_vm_it_insert(&va[5], &root);

va[6].start = 380;

va[6].last = 411;

zlcao_vm_it_insert(&va[6], &root);

va[7].start = 580;

va[7].last = 611;

zlcao_vm_it_insert(&va[7], &root);

va[8].start = 9;

va[8].last = 1000;

zlcao_vm_it_insert(&va[8], &root);

va[9].start = 105;

va[9].last = 190;

zlcao_vm_it_insert(&va[9], &root);

seq_printf(m, "interval tree test fine!\n");

// Walk every node in ascending order of start and flag the node that is currently the tree root.

for (tmp = rb_first(&root.rb_root); tmp; tmp = rb_next(tmp)) {

vb = rb_entry(tmp, struct va_mapping, rb);

seq_printf(m, "node start %llu, last %llu%s\n", vb->start, vb->last,

tmp == root.rb_root.rb_node ? " (tree root)" : "");

}

for (vc = zlcao_vm_it_iter_first(&root, 0, U64_MAX),vtmp = vc ? zlcao_vm_it_iter_next(vc, 0, U64_MAX) : NULL;

vc;

vc = vtmp, vtmp = vtmp ? zlcao_vm_it_iter_next(vtmp, 0, U64_MAX) : NULL) {

seq_printf(m, "visit start %llu, last %llu!\n", vc->start, vc->last);

}

vb = container_of(root.rb_root.rb_node, struct va_mapping, rb);

vc = zlcao_vm_it_subtree_search(vb, 103, 189);

if(vc == NULL) {

seq_printf(m, "%s line %d, vc is null.\n", __func__, __LINE__);

} else {

seq_printf(m, "find ok %llu, last %llu!\n", vc->start, vc->last);

}

vc = zlcao_vm_it_subtree_search(vb, 1, 10);

if(vc == NULL) {

seq_printf(m, "%s line %d, vc is null.\n", __func__, __LINE__);

} else {

seq_printf(m, "find ok %llu, last %llu!\n", vc->start, vc->last);

}

while(vc)

{

vc = zlcao_vm_it_iter_next(vc, 1, 10);

if(vc == NULL) {

seq_printf(m, "%s line %d, vc is null.\n", __func__, __LINE__);

} else {

seq_printf(m, "find ok %llu, last %llu!\n", vc->start, vc->last);

}

}

zlcao_vm_it_remove(&va[0], &root);

zlcao_vm_it_remove(&va[1], &root);

zlcao_vm_it_remove(&va[2], &root);

zlcao_vm_it_remove(&va[3], &root);

zlcao_vm_it_remove(&va[4], &root);

zlcao_vm_it_remove(&va[5], &root);

zlcao_vm_it_remove(&va[6], &root);

zlcao_vm_it_remove(&va[7], &root);

zlcao_vm_it_remove(&va[8], &root);

zlcao_vm_it_remove(&va[9], &root);

memset(&root, 0x00, sizeof(root));

kfree(va);

return;

}

#undef START

#undef LAST

//========================================================================================================================================

// Start emitting the task list.

// The return value of my_seq_ops_start() is passed to my_seq_ops_next() as its v argument.

static void *my_seq_ops_start(struct seq_file *m, loff_t *pos)

{

loff_t index = *pos;

struct task_struct *task;

printk("%s line %d, index %lld, count %zu, size %zu here.\n", __func__, __LINE__, index, m->count, m->size);

if(seqfile_debug_mode == 0) {

// If the buffer is too small, seq_file may call start() again

// and pass in the position that was already reached,

// so the starting task has to be recomputed from pos here.

for_each_process(task) {

if (index-- == 0) {

return task;

}

}

} else {

return *pos == 0 ? SEQ_START_TOKEN : NULL;

}

return NULL;

}

// Keep iterating until my_seq_ops_next() returns NULL or an error.

static void *my_seq_ops_next(struct seq_file *m, void *v, loff_t *pos)

{

struct task_struct *task = NULL;

if(seqfile_debug_mode == 0) {

task = next_task((struct task_struct *)v);

// next() must advance *pos: seq_file uses it to resume from the right place when start() is called again.

++ *pos;

// Returning NULL ends the iteration.

if(task == &init_task) {

return NULL;

}

} else {

++ *pos;

}

return task;

}

// seq_file calls stop() when the traversal finishes or fails.

static void my_seq_ops_stop(struct seq_file *m, void *v)

{

}

static void pci_reset_sbr(struct pci_dev *dev)

{

uint16_t ctrl;

pci_read_config_word(dev, PCI_BRIDGE_CONTROL, &ctrl);

ctrl |= PCI_BRIDGE_CTL_BUS_RESET;

pci_write_config_word(dev, PCI_BRIDGE_CONTROL, ctrl);

msleep(2);

ctrl &= ~PCI_BRIDGE_CTL_BUS_RESET;

pci_write_config_word(dev, PCI_BRIDGE_CONTROL, ctrl);

ssleep(1);

}

static struct pci_dev *g_pci_dev = NULL;

static int lookup_pci_devices_reset(struct device *dev, void *data)

{

struct seq_file *m = (struct seq_file *)data;

struct pci_dev *pdev = to_pci_dev(dev);

seq_printf(m, "%s line %d vendor id 0x%x, device id 0x%x, devname %s.\n", __func__, __LINE__, pdev->vendor, pdev->device, dev_name(&pdev->dev));

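// Match the target device by vendor/device ID (here 0x168c:0x0036, the wireless device reset below); IDs tried earlier are kept commented out.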

//if ((pdev->vendor == 0x1ee0) && (pdev->device == 0xf)) {

if ((pdev->vendor == 0x168c) && (pdev->device == 0x36)) {

//if ((pdev->vendor == 0x8086) && (pdev->device == 0xa12f)) {

seq_printf(m, "%s line %d, do the reset of wireless device, domain 0x%04x, device PCI:%d:%d:%d.\n", \

__func__, __LINE__, pci_domain_nr(pdev->bus), pdev->bus->number, PCI_SLOT(pdev->devfn), PCI_FUNC(pdev->devfn));

g_pci_dev = pdev;

}

return 0;

}

static unsigned int pci_rescan_bus_bridge_resize_priv(struct pci_dev *bridge)

{

unsigned int max;

struct pci_bus *bus = bridge->subordinate;

max = pci_scan_child_bus(bus);

pci_assign_unassigned_bridge_resources(bridge);

pci_bus_add_devices(bus);

return max;

}

static struct pci_bus *find_pci_root_bus(struct pci_bus *bus)

{

while (bus->parent) bus = bus->parent;

return bus;

}

static int lookup_pci_devices(struct device *dev, void *data)

{

struct seq_file *m = (struct seq_file *)data;

struct pci_dev *pdev = to_pci_dev(dev);

struct pci_bus *rootbus = NULL;

rootbus = find_pci_root_bus(pdev->bus);

seq_printf(m, "vendor id 0x%x, device id 0x%x, devname %s.domain: 0x%04x, BDF: %x:%x:%x. sub 0x%p. rootbus number %d, rootbusname %s, bridgename %s. bridge %s.\n", \

pdev->vendor, pdev->device, dev_name(&pdev->dev),pci_domain_nr(pdev->bus), pdev->bus->number, \

PCI_SLOT(pdev->devfn), PCI_FUNC(pdev->devfn), pdev->subordinate, rootbus->number, rootbus->name, dev_name(pdev->bus->bridge), dev_name(pdev->bus->bridge));

if(pdev->bus->self) {

seq_printf(m,"bridge pdev->bus->self->dev = 0x%px, %s.\n", &pdev->bus->self->dev, dev_name(&pdev->bus->self->dev));

}

seq_printf(m, "sr-iov is %d %d.\n", pci_num_vf(pdev), pdev->is_physfn);

if(dev_is_pf(&pdev->dev)) {

seq_printf(m, "is pf.\n");

} else {

seq_printf(m, "is vf.\n");

}

if(pdev->dev.bus->iommu_ops) {

seq_printf(m, "%s line %d iommu ops is 0x%lx.\n", __func__, __LINE__, (unsigned long)pdev->dev.bus->iommu_ops);

} else {

seq_printf(m, "no iommuops.\n");

}

if(pdev->dev.dma_ops) {

seq_printf(m, "%s line %d dma ops is 0x%lx.\n", __func__, __LINE__, (unsigned long)pdev->dev.dma_ops);

} else {

seq_printf(m, "no dma ops.\n");

}

if(pdev->dev.iommu_group) {

seq_printf(m, "%s line %d iommu group id is 0x%px.\n", __func__, __LINE__, pdev->dev.iommu_group);

} else {

seq_printf(m, "%s line %d no iommu group exist.\n", __func__, __LINE__);

}

return 0;

}

static int lookup_pci_drivers(struct device_driver *drv, void *data)

{

struct seq_file *m = (struct seq_file *)data;

seq_printf(m, "driver name %s.\n", drv->name);

return 0;

}

static int lookup_platform_devices(struct device *dev, void *data)

{

struct seq_file *m = (struct seq_file *)data;

struct platform_device *platdev = to_platform_device(dev);

seq_printf(m, "devpath %s.\n", platdev->name);

return 0;

}

static int lookup_platform_drivers(struct device_driver *drv, void *data)

{

struct seq_file *m = (struct seq_file *)data;

seq_printf(m, "driver name %s.\n", drv->name);

return 0;

}

static int list_device_belongs_todriver_pci(struct device *dev, void *p)

{

struct seq_file *m = (struct seq_file *)p;

struct pci_dev *pdev = to_pci_dev(dev);

seq_printf(m, "vendor id 0x%x, device id 0x%x, devname %s.\n", pdev->vendor, pdev->device, dev_name(&pdev->dev));

return 0;

}

static int list_device_belongs_todriver_platform(struct device *dev, void *p)

{

struct seq_file *m = (struct seq_file *)p;

struct platform_device *platdev = to_platform_device(dev);

seq_printf(m, "platdevname %s.\n", platdev->name);

return 0;

}

static int pcie_device_info_find_bridge(struct pci_dev *pdev, void *data)

{

struct seq_file *m = (struct seq_file *)data;

struct pci_bus *rootbus = NULL;

rootbus = pci_find_bus(0, 0);

if(pci_is_bridge(pdev) && pdev->subordinate && rootbus){

seq_printf(m, "bridge find.vendor id 0x%x, device id 0x%x, devname %s. domain:0x%04x, BDF: %x:%x:%x, subordinate 0x%p, busid %d, rootbus %d rootself 0x%p.\n", \

pdev->vendor, pdev->device, dev_name(&pdev->dev), pci_domain_nr(pdev->bus), pdev->bus->number, PCI_SLOT(pdev->devfn), PCI_FUNC(pdev->devfn), pdev->subordinate,

pdev->subordinate->number, rootbus->number, rootbus->self);

}

return 0;

}

static int pcie_device_info(struct pci_dev *pdev, void *data)

{

struct seq_file *m = (struct seq_file *)data;

seq_printf(m, "vendor id 0x%04x, device id 0x%04x, devname %s, belongs to bus %16s, parent bus name %6s subordinate 0x%p.\n", \

pdev->vendor, pdev->device, dev_name(&pdev->dev), pdev->bus->name, pdev->bus->parent? pdev->bus->parent->name : "null", pdev->subordinate);

if(pdev->subordinate) {

seq_printf(m, " subordinate have bus name %s.\n", pdev->subordinate->name);

if(pdev->subordinate->self) {

seq_printf(m, " subordinate have dev name %s.\n", dev_name(&pdev->subordinate->self->dev));

if(pdev->subordinate->self != pdev) {

seq_printf(m, " can't happen!\n");

} else {

seq_printf(m, " surely!\n");

}

}

} else {

seq_printf(m, " subordinate not have.\n");

}

if(pdev->bus->self) {

seq_printf(m, " device belongs to child pci bus %s.\n", dev_name(&pdev->bus->self->dev));

} else {

seq_printf(m, " device belongs to top lvl pci bus.\n");

}

seq_printf(m, "\n");

return 0;

}

struct zilong_attribute {

struct attribute attr;

ssize_t (*show)(struct kobject *kobj, struct zilong_attribute *attr, char *buf);

ssize_t (*store)(struct kobject *dev, struct zilong_attribute *attr,const char *buf, size_t count);

};

#define to_zilong_attr(_attr) container_of(_attr, struct zilong_attribute, attr)

static ssize_t zilong_attr_show(struct kobject *kobj, struct attribute *attr, char *buf)

{

struct zilong_attribute *zlattr = to_zilong_attr(attr);

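if (!zlattr->show)

return -EIO;

// Return the number of bytes written by the attribute's show() so the sysfs read is not empty.

return zlattr->show(kobj, zlattr, buf);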

}

static ssize_t zilong_attr_store(struct kobject *kobj, struct attribute *attr, const char *buf, size_t count)

{

ssize_t size;

struct zilong_attribute *zlattr = to_zilong_attr(attr);

size = zlattr->store(kobj, zlattr, buf, count);

return size;

}

static const struct sysfs_ops zilong_sysfs_ops = {

.show = zilong_attr_show,

.store = zilong_attr_store,

};

static struct kobj_type zilong_ktype = {

.release = NULL,

.sysfs_ops = &zilong_sysfs_ops,

.namespace = NULL,

.get_ownership = NULL,

};

ssize_t height_show(struct kobject *kobj, struct zilong_attribute *attr, char *buf)

{

char *p = buf;

p += sprintf(p, "I am 180 cm tall.\n");

printk("%s line %d output %td.\n", __func__, __LINE__, p - buf);

return p - buf;

}

ssize_t height_store(struct kobject *dev, struct zilong_attribute *attr,const char *buf, size_t count)

{

unsigned long height = 0;

sscanf(buf, "%lx", &height);

if (printk_ratelimit())

printk("%s line %d, height %ld.\n", __func__, __LINE__, height);

return count;

}

struct zilong_attribute height = {

.attr = {

.name = "height",

.mode = 0644,

},

.show = height_show,

.store = height_store,

};

static void f(struct super_block *sb, void *data)

{

struct seq_file *m = (struct seq_file*)data;

// for the sb->s_root dentry, d_parent is itself.

seq_dentry(m, sb->s_root, " \t\n\\");

seq_printf(m, "device %s.\n", sb->s_bdi->dev_name);

}

struct vfsmount *get_path_mount(char *path)

{

struct path fspath;

struct vfsmount *mnt;

int err = kern_path(path, LOOKUP_FOLLOW, &fspath);

if (err){

printk(KERN_ERR "failed to get path.\n");

return NULL;

}

mnt = fspath.mnt;

return mnt;

}

#if 1

struct dentry *get_mount_entry(struct vfsmount *mnt, void **n, struct vfsmount **parent)

{

void *p = NULL;

p = mnt;

mnt = p;

return NULL;

}

#endif

struct dentry *get_mount_entry(struct vfsmount *mnt, void**, struct vfsmount **parent);

static void hack_mount(struct seq_file *m, struct vfsmount *mnt)

{

struct path path;

char buf[64];

char *name;

void *ptr = NULL;

struct vfsmount *mt = NULL;

struct dentry *dent = (struct dentry*) (*(unsigned long*)((unsigned long)mnt - sizeof(void*)));

seq_printf(m, "mnt parent dentry name %s, %s.\n", dent->d_parent->d_name.name, dent->d_name.name);

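dent = get_mount_entry(mnt, &ptr, &mt);

// The get_mount_entry() stub above returns NULL, so only dereference dent when a real implementation supplied it.

if (dent)

seq_printf(m, "mnt parent dentry name %s, %s, offset %ld.\n", dent->d_parent->d_name.name, dent->d_name.name, (unsigned long)ptr - (unsigned long)mnt);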

path.mnt = mnt;

path.dentry=mnt->mnt_root;

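name = d_path(&path, buf, sizeof(buf));

// d_path() returns an ERR_PTR when the path does not fit in buf.

if (IS_ERR(name))

seq_printf(m, "mount dir unavailable, d_path error %ld.\n", PTR_ERR(name));

else

seq_printf(m, "mount dir is %s.\n", name);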

if(mt){

struct vfsmount *cd = NULL;

seq_printf(m, "parent fs is %s,mt=0x%px.\n", mt->mnt_sb->s_type->name,mt);

get_mount_entry(mt, &ptr, &cd);

if(cd) {

seq_printf(m, "parent parent fs is %s cd=0x%px.\n", cd->mnt_sb->s_type->name, cd);

} else {

seq_printf(m, "parent parent fs is null.\n");

}

}

}

static void check_fs_mount(struct seq_file *m, char *path)

{

struct vfsmount *mnt, *root;

struct dentry* dentry = NULL;

root = current->fs->root.mnt;

mnt = get_path_mount(path);

if(mnt){

seq_printf(m, "fstype %s, info %s, mntroot %s, mntroot 0x%px, superroot %px, root %px.\n", \

mnt->mnt_sb->s_type->name, mnt->mnt_sb->s_id, mnt->mnt_root->d_name.name, mnt->mnt_root, mnt->mnt_sb->s_root, root);

seq_printf(m, "fstype %s, info %s, mntroot %s, mntroot 0x%px, superroot %px, root %px,mnt %px.\n", \

root->mnt_sb->s_type->name, root->mnt_sb->s_id, root->mnt_root->d_name.name, root->mnt_root, root->mnt_sb->s_root, root, mnt);

if(mnt->mnt_sb->s_root == mnt->mnt_root){

seq_printf(m, "root identical.\n");

} else {

seq_printf(m, "root non-identical.\n");

}

dentry = mnt->mnt_root;

while (dentry->d_parent != dentry) {

dentry = dentry->d_parent;

seq_printf(m, "dentry name %s.\n", dentry->d_name.name);

}

hack_mount(m, mnt);

}

}

void list_all_mount_points(struct seq_file *m)

{

//struct task_struct *task = get_current();

check_fs_mount(m, "/");

//check_fs_mount(m, "/home/zlcao/Workspace/linux/ramfs/mountdir");

check_fs_mount(m, "/dev");

check_fs_mount(m, "/tmp");

check_fs_mount(m, "/var");

check_fs_mount(m, "/dev/pts");

check_fs_mount(m, "/home/zlcao/Workspace/zlramfs");

return;

}

static int __process_all_devices(struct device *dev, void *data)

{

struct seq_file *m = (struct seq_file*)data;

if(dev->type == &i2c_adapter_type){

struct i2c_adapter *adap = to_i2c_adapter(dev);

seq_printf(m, "this is adaptor adaptor name %s dev name %s.\n", adap->name, dev_name(&adap->dev));

}

else if(dev->type == &i2c_client_type){

struct i2c_client *clie = to_i2c_client(dev);

seq_printf(m, "this is device %s, dev %s.\n", clie->name, dev_name(&clie->dev));

}

else

seq_printf(m, "can't happen.\n");

return 0;

}

static void probe_i2c_adaptor(struct seq_file *m, int id)

{

struct i2c_adapter *adap;

adap = i2c_get_adapter(id);

if(!adap){

seq_printf(m, "can't find i2c adapter %d.\n", id);

return;

}

//i2c_transfer(adap, msg, ...);

seq_printf(m, "i2c adaptor name %s, i2c dev name %s.\n", adap->name, dev_name(&adap->dev));

i2c_put_adapter(adap);

i2c_for_each_dev(m, __process_all_devices);

}

void list_all_i2c_devices(struct seq_file *m)

{

probe_i2c_adaptor(m, 0);

probe_i2c_adaptor(m, 1);

probe_i2c_adaptor(m, 2);

probe_i2c_adaptor(m, 3);

probe_i2c_adaptor(m, 4);

probe_i2c_adaptor(m, 5);

probe_i2c_adaptor(m, 6);

probe_i2c_adaptor(m, 7);

return;

}

typedef struct dmi_info_entry

{

int id;

char *name;

}dmi_info_entry_t;

#define DMI_ENTRY(idx) {.id = idx, .name = #idx}

dmi_info_entry_t dmi_tbl[] = {

DMI_ENTRY(DMI_NONE),

DMI_ENTRY(DMI_BIOS_VENDOR),

DMI_ENTRY(DMI_BIOS_VERSION),

DMI_ENTRY(DMI_BIOS_DATE),

//DMI_ENTRY(DMI_BIOS_RELEASE),

//DMI_ENTRY(DMI_EC_FIRMWARE_RELEASE),

DMI_ENTRY(DMI_SYS_VENDOR),

DMI_ENTRY(DMI_PRODUCT_NAME),

DMI_ENTRY(DMI_PRODUCT_VERSION),

DMI_ENTRY(DMI_PRODUCT_SERIAL),

DMI_ENTRY(DMI_PRODUCT_UUID),

DMI_ENTRY(DMI_PRODUCT_SKU),

DMI_ENTRY(DMI_PRODUCT_FAMILY),

DMI_ENTRY(DMI_BOARD_VENDOR),

DMI_ENTRY(DMI_BOARD_NAME),

DMI_ENTRY(DMI_BOARD_VERSION),

DMI_ENTRY(DMI_BOARD_SERIAL),

DMI_ENTRY(DMI_BOARD_ASSET_TAG),

DMI_ENTRY(DMI_CHASSIS_VENDOR),

DMI_ENTRY(DMI_CHASSIS_TYPE),

DMI_ENTRY(DMI_CHASSIS_VERSION),

DMI_ENTRY(DMI_CHASSIS_SERIAL),

DMI_ENTRY(DMI_CHASSIS_ASSET_TAG),

//DMI_ENTRY(DMI_STRING_MAX),

// DMI_OEM_STRING is a special case that is not stored in dmi_ident[], so it must not be passed to dmi_get_system_info().

};

void dmi_info_verbose(struct seq_file *m)

{

int i;

for (i = 0; i < ARRAY_SIZE(dmi_tbl); i++) {

const char *s = dmi_get_system_info(dmi_tbl[i].id);

if(s)

seq_printf(m, "DMI %s INFO: %s.\n", dmi_tbl[i].name, s);

else

seq_printf(m, "DMI %s INFO is null.\n", dmi_tbl[i].name);

}

return;

}

unsigned long get_mm_rss_counter(struct mm_struct *mm, int idx)

{

unsigned long val = atomic_long_read(&mm->rss_stat.count[idx]);

return val;

}

void list_bus_iommu(struct seq_file *m)

{

if(pci_bus_type.iommu_ops)

seq_printf(m, "pci_bus type iommu %px.\n", pci_bus_type.iommu_ops);

else

seq_printf(m, "pci bus iommuops is null.\n");

if(i2c_bus_type.iommu_ops)

seq_printf(m, "i2c_bus type iommu %px.\n", i2c_bus_type.iommu_ops);

else

seq_printf(m, "i2c bus iommuops is null.\n");

if(platform_bus_type.iommu_ops)

seq_printf(m, "platform_bus type iommu %px.\n", platform_bus_type.iommu_ops);

else

seq_printf(m, "platform bus iommuops is null.\n");

}

void list_all_process_vma(struct seq_file *m)

{

int i;

struct mm_struct *mm;

struct rb_node *nd;

struct rb_root *root;

struct vm_area_struct *vma;

char buf[256];

mm = current->mm;

root = &mm->mm_rb;

seq_printf(m, "mm->mm_count %d, mm->mm_users %d mm->total_vm(total pages mapped) %ld, mm->pinned_vm 0x%llx.\n", \

atomic_read(&mm->mm_count), atomic_read(&mm->mm_users), mm->total_vm, atomic64_read(&mm->pinned_vm));

seq_printf(m, "pagetable bytes 0x%lx.\n", mm_pgtables_bytes(mm));

for(i = 0; i < NR_MM_COUNTERS; i ++) {

seq_printf(m, "rss idx %d: 0x%lx.\n", i, get_mm_rss_counter(mm, i));

}

i = 0;

seq_printf(m, "iterate VMAs with the rbtree in mm_struct.\n");

for (nd = rb_first(root); nd; nd = rb_next(nd)) {

char *nm = "?";

vma = rb_entry(nd, struct vm_area_struct, vm_rb);

memset(buf, 0x00, 256);

if(vma->vm_file) {

nm = file_path(vma->vm_file, buf, 255);

// file_path() returns an ERR_PTR when the path does not fit in buf.

if (IS_ERR(nm))

nm = "?";

}

seq_printf(m, "%d: %s line %d, vma->start: 0x%lx, vma->end: 0x%lx, file %s.\n", \

i++, __func__, __LINE__, vma->vm_start, vma->vm_end, nm);

}

i = 0;

seq_printf(m, "iterate VMAs with the vma list in mm_struct.\n");

vma = mm->mmap;

while(vma != NULL) {

seq_printf(m, "%d: %s line %d, vma->start: 0x%lx, vma->end: 0x%lx.\n", \

i++, __func__, __LINE__, vma->vm_start, vma->vm_end);

vma = vma->vm_next;

}

return;

}

void list_all_mount_device(struct seq_file *m)

{

#if 0

struct nsproxy *nsp;

struct task_struct *task = current;

struct mnt_namespace *ns = NULL;

struct list_head *p;

struct mount *mnt, *ret = NULL;

task_lock(task);

nsp = task->nsproxy;

if (!nsp || !nsp->mnt_ns) {

printk("%s line %d. return error.\n", __func__, __LINE__);

task_unlock(task);

put_task_struct(task);

return;

}

ns = nsp->mnt_ns;

if (!task->fs) {

printk("%s line %d. return error.\n", __func__, __LINE__);

task_unlock(task);

put_task_struct(task);

return;

}

task_unlock(task);

put_task_struct(task);

list_for_each_continue(p, &ns->list) {

mnt = list_entry(p, typeof(*mnt), mnt_list);

}

return;

#else

struct file_system_type *fs_type;

fs_type = get_fs_type("ext4");

if(fs_type == NULL){

printk("%s line %d. return error.\n", __func__, __LINE__);

return;

}

iterate_supers_type(fs_type, f, m);

#endif

}

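// This function writes data into the internal buffer of `seq_file`.

// `seq_file` copies the buffered data to user space at an appropriate time.

// The parameter v is the return value of start()/next().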

static int my_seq_ops_show(struct seq_file *m, void *v)

{

struct task_struct *task = NULL;

struct task_struct *tsk = NULL;

struct task_struct *p = NULL;

struct file *file = m->private;

struct pid *session = NULL;

if(seqfile_debug_mode == 0) {

seq_puts(m, " file=");

seq_file_path(m, file, "\n");

seq_putc(m, ' ');

task = (struct task_struct *)v;

session = task_session(task);

tsk = pid_task(session, PIDTYPE_PID);

if(task->flags & PF_KTHREAD) {

seq_printf(m, "Kernel thread output: PID=%u, task: %s, index=%lld, read_pos=%lld, %s.\n", task_tgid_nr(task),/* task->tgid,*/

task->comm, m->index, m->read_pos, tsk? "has session" : "no session");

} else {

seq_printf(m, "User thread: PID=%u, task: %s, index=%lld, read_pos=%lld %s.\n", task_tgid_nr(task), /* task->tgid,*/

task->comm, m->index, m->read_pos, tsk? "has session" : "no session");

}

seq_printf(m, "==================\n");

sched_show_task(task);

} else if(seqfile_debug_mode == 1) {

struct task_struct *g, *p;

static int oldcount = 0;

static int entercount = 0;

char *str;

printk("%s line %d here enter %d times.\n", __func__, __LINE__, ++ entercount);

seq_printf(m, "%s line %d here enter %d times.\n", __func__, __LINE__, entercount);

rcu_read_lock();

for_each_process_thread(g, p) {

struct task_struct *session = pid_task(task_session(g), PIDTYPE_PID);

struct task_struct *thread = pid_task(task_session(p), PIDTYPE_PID);

struct task_struct *ggroup = pid_task(task_pgrp(g), PIDTYPE_PID);

struct task_struct *pgroup = pid_task(task_pgrp(p), PIDTYPE_PID);

struct pid * pid = task_session(g);

if(list_empty(&p->tasks)) {

str = "empty";

} else {

str = "not empty";

}

seq_printf(m, "process %s(pid %d tgid %d,cpu%d) thread %s(pid %d tgid %d,cpu%d),threadnum %d, %d. tasks->prev = %p, "

"tasks->next = %p, p->tasks=%p, %s, process parent %s(pid %d tgid %d), thread parent %s(pid %d, tgid %d, files %p). ",

g->comm, task_pid_nr(g), task_tgid_nr(g), task_cpu(g), \

p->comm, task_pid_nr(p), task_tgid_nr(p), task_cpu(p), \

get_nr_threads(g), get_nr_threads(p), p->tasks.prev, p->tasks.next, &p->tasks, str, g->real_parent->comm, \

task_pid_nr(g->real_parent),task_tgid_nr(g->real_parent), p->real_parent->comm, task_pid_nr(p->real_parent), task_tgid_nr(p->real_parent), p->files);

if(ggroup) {

seq_printf(m, "ggroup(pid %d tgid %d).", task_pid_nr(ggroup),task_tgid_nr(ggroup));

}

if(pgroup) {

seq_printf(m, "pgroup(pid %d tgid %d).", task_pid_nr(pgroup),task_tgid_nr(pgroup));

}

seq_printf(m, "current smp processor id %d.", smp_processor_id());

if(thread) {

seq_printf(m, "thread session %s(%d).", thread->comm, task_pid_nr(thread));

}

if(session) {

seq_printf(m, "process session %s(%d).", session->comm, task_pid_nr(session));

}

if(oldcount == 0 || oldcount != m->size) {

printk("%s line %d, m->count %zu, m->size %zu.\n", __func__, __LINE__, m->count, m->size);

oldcount = m->size;

}

if(pid){

seq_printf(m, "pid task %p,pgid task %p, psid_task %p", pid_task(pid, PIDTYPE_PID), pid_task(pid, PIDTYPE_PGID), pid_task(pid, PIDTYPE_SID));

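{

struct task_struct *tp = pid_task(pid, PIDTYPE_PID);

struct task_struct *tg = pid_task(pid, PIDTYPE_PGID);

struct task_struct *ts = pid_task(pid, PIDTYPE_SID);

// pid_task() returns NULL when no task is attached in that role, so guard each dereference.

seq_printf(m, "pid task %s,pgid task %s, psid_task %s", tp ? tp->comm : "none", tg ? tg->comm : "none", ts ? ts->comm : "none");

}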

}

seq_printf(m, "\n");

}

rcu_read_unlock();

} else if(seqfile_debug_mode == 2) {

for_each_process(task) {

struct pid *pgrp = task_pgrp(task);

seq_printf(m, "Group Header %s(%d,cpu%d):\n", task->comm, task_pid_nr(task), task_cpu(task));

do_each_pid_task(pgrp, PIDTYPE_PGID, p) {

seq_printf(m, " process %s(%d,cpu%d) thread %s(%d,cpu%d),threadnum %d, %d.\n",

task->comm, task_pid_nr(task), task_cpu(task), \

p->comm, task_pid_nr(p), task_cpu(p), \

get_nr_threads(task), get_nr_threads(p));

} while_each_pid_task(pgrp, PIDTYPE_PGID, p);

}

} else if (seqfile_debug_mode == 3) {

for_each_process(task) {

struct pid *session = task_session(task);

struct task_struct *tsk = pid_task(session, PIDTYPE_PID);

if(tsk) {

seq_printf(m, "session task %s(%d,cpu%d):", tsk->comm, task_pid_nr(tsk), task_cpu(tsk));

} else {

seq_printf(m, "process %s(%d,cpu%d) has no session task.", task->comm, task_pid_nr(task), task_cpu(task));

}

seq_printf(m, "session header %s(%d,cpu%d):\n", task->comm, task_pid_nr(task), task_cpu(task));

do_each_pid_task(session, PIDTYPE_SID, p) {

seq_printf(m, " process %s(%d,cpu%d) thread %s(%d,cpu%d),threadnum %d, %d, spidtask %s(%d,%d).\n",

task->comm, task_pid_nr(task), task_cpu(task), \

p->comm, task_pid_nr(p), task_cpu(p), \

get_nr_threads(task), get_nr_threads(p), pid_task(session, PIDTYPE_SID)->comm, pid_task(session, PIDTYPE_SID)->tgid, pid_task(session, PIDTYPE_SID)->pid);

if(pid_task(session, PIDTYPE_PID)) {

seq_printf(m, "pidtask %s(%d,%d).\n", pid_task(session, PIDTYPE_PID)->comm, pid_task(session, PIDTYPE_PID)->tgid, pid_task(session, PIDTYPE_PID)->pid);

}

} while_each_pid_task(session, PIDTYPE_SID, p);

}

} else if(seqfile_debug_mode == 4) {

struct task_struct *thread, *child;

for_each_process(task) {

seq_printf(m, "process %s(%d,cpu%d):\n", task->comm, task_pid_nr(task), task_cpu(task));

for_each_thread(task, thread) {

list_for_each_entry(child, &thread->children, sibling) {

seq_printf(m, " thread %s(%d,cpu%d) child %s(%d,cpu%d),threadnum %d, %d.\n",

thread->comm, task_pid_nr(thread), task_cpu(thread), \

child->comm, task_pid_nr(child), task_cpu(child), \

get_nr_threads(thread), get_nr_threads(child));

}

}

}

} else if(seqfile_debug_mode == 5) {

struct task_struct *g, *t;

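// for_each_process_thread() replaces the older do_each_thread()/while_each_thread() pair; the walk is done under RCU.

rcu_read_lock();

for_each_process_thread(g, t) {

seq_printf(m, "Process %s(%d cpu%d), thread %s(%d cpu%d), threadnum %d.\n", g->comm, task_pid_nr(g), task_cpu(g), t->comm, task_pid_nr(t), task_cpu(t), get_nr_threads(g));

}

rcu_read_unlock();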

} else if(seqfile_debug_mode == 6) {

for_each_process(task) {

struct pid *pid = task_pid(task);

seq_printf(m, "Process %s(%d,cpu%d) pid %d, tgid %d:\n", task->comm, task_pid_nr(task), task_cpu(task), task_pid_vnr(task), task_tgid_vnr(task));

do_each_pid_task(pid, PIDTYPE_TGID, p) {

seq_printf(m, " process %s(%d,cpu%d) thread %s(%d,cpu%d),threadnum %d, %d. pid %d, tgid %d\n",

task->comm, task_pid_nr(task), task_cpu(task), \

p->comm, task_pid_nr(p), task_cpu(p), \

get_nr_threads(task), get_nr_threads(p), task_pid_vnr(p), task_tgid_vnr(p));

} while_each_pid_task(pid, PIDTYPE_TGID, p);

}

} else if(seqfile_debug_mode == 7) {

for_each_process(task) {

struct pid *pid = task_pid(task);

seq_printf(m, "Process %s(%d,cpu%d) pid %d, tgid %d:\n", task->comm, task_pid_nr(task), task_cpu(task), task_pid_vnr(task), task_tgid_vnr(task));

do_each_pid_task(pid, PIDTYPE_PID, p) {

seq_printf(m, " process %s(%d,cpu%d) thread %s(%d,cpu%d),threadnum %d, %d. pid %d, tgid %d\n",

task->comm, task_pid_nr(task), task_cpu(task), \

p->comm, task_pid_nr(p), task_cpu(p), \

get_nr_threads(task), get_nr_threads(p), task_pid_vnr(p), task_tgid_vnr(p));

} while_each_pid_task(pid, PIDTYPE_PID, p);

}

} else if(seqfile_debug_mode == 8) {

bus_for_each_dev(&pci_bus_type, NULL, (void*)m, lookup_pci_devices);

bus_for_each_drv(&pci_bus_type, NULL, (void*)m, lookup_pci_drivers);

// class_find_device.

// class_find_device_by_name.

// class_for_each_device.

} else if(seqfile_debug_mode == 9) {

struct device_driver *drv;

int ret;

drv = driver_find("pcieport", &pci_bus_type);

ret = driver_for_each_device(drv, NULL, (void*)m, list_device_belongs_todriver_pci);

} else if(seqfile_debug_mode == 10) {

for_each_process(task) {

#if LINUX_VERSION_CODE >= KERNEL_VERSION(5, 14, 0)

seq_printf(m, "Process %s(%d),state 0x%08x, exit_state 0x%08x, refcount %d, usage %d rcucount %d.", \

task->comm, task->tgid, task->__state, task->exit_state, refcount_read(&task->stack_refcount), refcount_read(&task->usage), refcount_read(&task->rcu_users));

#else

seq_printf(m, "Process %s(%d),state 0x%lx, exit_state 0x%08x, refcount %d, usage %d rcucount %d.", \

task->comm, task->tgid, task->state, task->exit_state, refcount_read(&task->stack_refcount), refcount_read(&task->usage), refcount_read(&task->rcu_users));

#endif

if(task->parent) {

seq_printf(m, "parent name %s pid %d.\n", task->parent->comm, task->parent->tgid);

} else {

seq_printf(m, "no parent.\n");

}

}

} else if(seqfile_debug_mode == 11) {

struct pci_bus *bus;

list_for_each_entry(bus, &pci_root_buses, node) {

seq_printf(m, "pcibus name %s.\n", bus->name);

pci_walk_bus(bus, pcie_device_info, (void*)m);

}

} else if(seqfile_debug_mode == 12) {

struct device_driver *drv;

int ret;

// EXPORT_SYMBOL(usb_bus_type);

// bus_for_each_dev(&usb_bus_type, NULL, (void*)m, lookup_usb_devices);

// bus_for_each_drv(&usb_bus_type, NULL, (void*)m, lookup_usb_drivers);

bus_for_each_dev(&platform_bus_type, NULL, (void*)m, lookup_platform_devices);

bus_for_each_drv(&platform_bus_type, NULL, (void*)m, lookup_platform_drivers);

drv = driver_find("demo_platform", &platform_bus_type);

ret = driver_for_each_device(drv, NULL, (void*)m, list_device_belongs_todriver_platform);

} else if(seqfile_debug_mode == 13) {

int ret;

class_zilong = kset_create_and_add("zilong_class", NULL, NULL);

if (!class_zilong) {

printk("%s line %d, fatal error, create class failure.\n", __func__, __LINE__);

return -ENOMEM;

}

ret = kobject_init_and_add(&kobj, &zilong_ktype, &class_zilong->kobj, "%s-%d", "zilong", 1);

if(ret < 0) {

printk("%s line %d, fatal error, create kobject failure.\n", __func__, __LINE__);

return -ENOMEM;

}

kobj_created = 1;

ret = sysfs_create_file(&kobj, &height.attr);

if(ret != 0){

printk("%s line %d, fatal error, create sysfs attribute failure.\n", __func__, __LINE__);

return -ENOMEM;

}

kobj_created = 1;

} else if(seqfile_debug_mode == 14) {

// cad pid is process 1 pid.

int ret = kill_cad_pid(SIGINT, 1);

printk("%s line %d ret %d.\n", __func__, __LINE__, ret);

} else if(seqfile_debug_mode == 15) {

kill_processes(pid_number);

} else if(seqfile_debug_mode == 16) {

struct pci_dev *pdev = NULL;

struct pci_dev *pparent = NULL;

struct pci_bus *bus = NULL;

struct pci_bus *rootbus = NULL;

struct pci_bus *findbus = NULL;

findbus = pci_find_bus(0, 0);

list_for_each_entry(rootbus, &pci_root_buses, node) {

seq_printf(m, "pcibus name %s bus %p, findbus %p.\n", rootbus->name, rootbus, findbus);

break;

}

bus_for_each_dev(&pci_bus_type, NULL, (void*)m, lookup_pci_devices_reset);

pdev = g_pci_dev;

if(pdev == NULL) {

printk("%s line %d, return null.\n", __func__, __LINE__);

return -1;

}

/* Save the parent bus first: pdev must not be dereferenced once it has been removed. */

bus = pdev->bus;

pci_reset_sbr(pdev);

pci_lock_rescan_remove();

pci_stop_and_remove_bus_device(pdev);

pci_unlock_rescan_remove();

//if (!pci_is_root_bus(bus) && list_empty(&bus->devices))

if (0)

{

printk("%s line %d, lightweight rescan.\n", __func__, __LINE__);

pci_lock_rescan_remove();

pci_rescan_bus_bridge_resize_priv(bus->self);

pci_unlock_rescan_remove();

} else {

printk("%s line %d, rescan.\n", __func__, __LINE__);

if(bus->self) {

bus = bus->self->bus;

pparent = bus->self;

}

seq_printf(m, "%s line %d, do the reset of wireless device, device PCI BUS No.:%d. busname %s, bus %p.\n", \

__func__, __LINE__, bus->number, bus->name, bus);

if(pparent && pparent->bus) {

seq_printf(m, "%s line %d, do the reset of wireless device, device PCI:0x%04x:%d:%d.%d\n", \

__func__, __LINE__, pci_domain_nr(pparent->bus), pparent->bus->number, PCI_SLOT(pparent->devfn), PCI_FUNC(pparent->devfn));

}

if(bus == rootbus) {

seq_printf(m, "warnings: reset root bus.\n");

}

pci_lock_rescan_remove();

pci_rescan_bus(bus);

pci_unlock_rescan_remove();

}

} else if(seqfile_debug_mode == 17) {

struct pci_bus *pbus = NULL;

while ((pbus = pci_find_next_bus(pbus)) != NULL) {

seq_printf(m, "find bus %s.\n", pbus->name);

}

list_for_each_entry(pbus, &pci_root_buses, node) {

seq_printf(m, "pcibus name %s.\n", pbus->name);

pci_walk_bus(pbus, pcie_device_info_find_bridge, (void*)m);

}

bus_for_each_dev(&pci_bus_type, NULL, (void*)m, lookup_pci_devices);

} else if(seqfile_debug_mode == 18) {

struct net *net;

struct net_device *ndev;

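/* The netns and netdev lists can change concurrently, so walk them under RCU. */

rcu_read_lock();

for_each_net_rcu(net)

for_each_netdev_rcu(net, ndev) {

seq_printf(m, "%s line %d, ndev->name %s. net 0x%p.\n", __func__, __LINE__, ndev->name, net);

}

rcu_read_unlock();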

} else if(seqfile_debug_mode == 19) {

unsigned long *p;

const struct cpumask *mask = cpu_cpu_mask(0);

p = (unsigned long*)mask;

seq_printf(m, "mask 0x%lx.\n", p[0]);

} else if(seqfile_debug_mode == 20) {

struct task_struct *process, *thread;

rcu_read_lock();

for_each_process_thread(process, thread) {

if (unlikely(thread->flags & PF_VCPU)) {

#if LINUX_VERSION_CODE >= KERNEL_VERSION(5, 14, 0)

seq_printf(m, "%s line %d, comm %s, pid %d, is %s thread state 0x%x, proces %s(%d).\n",

__func__, __LINE__, thread->comm, thread->pid, thread->mm? "user" : "kernel",thread->__state, process->comm, process->pid);

#else

seq_printf(m, "%s line %d, comm %s, pid %d, is %s thread state 0x%lx, proces %s(%d).\n",

__func__, __LINE__, thread->comm, thread->pid, thread->mm? "user" : "kernel",thread->state, process->comm, process->pid);

#endif

}

}

rcu_read_unlock();

} else if(seqfile_debug_mode == 21) {

list_all_mount_device(m);

} else if(seqfile_debug_mode == 22) {

list_all_mount_points(m);

} else if(seqfile_debug_mode == 23) {

list_all_i2c_devices(m);

} else if(seqfile_debug_mode == 24) {

dmi_info_verbose(m);

} else if(seqfile_debug_mode == 25) {

list_all_process_vma(m);

} else if(seqfile_debug_mode == 26) {

list_bus_iommu(m);

} else if(seqfile_debug_mode == 27) {

struct pci_dev *tmp = NULL;

for_each_pci_dev(tmp) {

lookup_pci_devices(&tmp->dev, m);

}

} else if(seqfile_debug_mode == 28) {

interval_tree_test(m);

} else {

printk("%s line %d, can't be here, seqfile_debug_mode = %d.\n", __func__, __LINE__, seqfile_debug_mode);

}

return 0;

}

static struct task_struct *find_lock_task_mm(struct task_struct *p)

{

struct task_struct *t;

rcu_read_lock();

for_each_thread(p, t) {

task_lock(t);

if (likely(t->mm))

goto found;

task_unlock(t);

}

t = NULL;

found:

rcu_read_unlock();

return t;

}

static bool process_shares_task_mm(struct task_struct *p, struct mm_struct *mm)

{

struct task_struct *t;

for_each_thread(p, t) {

struct mm_struct *t_mm = READ_ONCE(t->mm);

if (t_mm)

return t_mm == mm;

}

return false;

}

static void kill_processes(int pid_nr)

{

struct task_struct *victim;

struct task_struct *p;

struct mm_struct *mm;

int old_cnt,new_cnt;

victim = get_pid_task(find_vpid(pid_nr), PIDTYPE_PID);

if(victim == NULL) {

printk("%s line %d,return.\n", __func__, __LINE__);

return;

}

printk("%s line %d, task has live %d threads total.\n", __func__, __LINE__, atomic_read(&victim->signal->live));

p = find_lock_task_mm(victim);

if (!p) {

put_task_struct(victim);

return;

} else {

get_task_struct(p);

put_task_struct(victim);

victim = p;

}

mm = victim->mm;

mmgrab(mm);

kill_pid(find_vpid(pid_nr), SIGKILL, 1);

task_unlock(victim);

rcu_read_lock();

for_each_process(p) {

if (!process_shares_task_mm(p, mm))

continue;

if (same_thread_group(p, victim))

continue;

if (unlikely(p->flags & PF_KTHREAD))

continue;

/* task_pid() is stable under rcu_read_lock(); an extra get_pid() here would leak a reference. */

kill_pid(task_pid(p), SIGKILL, 1);

}

rcu_read_unlock();

mmdrop(mm);

old_cnt = atomic_read(&victim->signal->live);

while((new_cnt=atomic_read(&victim->signal->live))) {

if(new_cnt != old_cnt) {

printk("%s line %d, live %d.\n", __func__, __LINE__, atomic_read(&victim->signal->live));

old_cnt = new_cnt;

}

cond_resched(); /* yield while polling so the loop does not monopolize the CPU */

}

put_task_struct(victim);

}

static const struct seq_operations my_seq_ops = {

.start = my_seq_ops_start,

.next = my_seq_ops_next,

.stop = my_seq_ops_stop,

.show = my_seq_ops_show,

};

static int proc_seq_open(struct inode *inode, struct file *file)

{

int ret;

struct seq_file *m;

ret = seq_open(file, &my_seq_ops);

if(!ret) {

m = file->private_data;

m->private = file;

}

return ret;

}

static ssize_t proc_seq_write(struct file *file, const char __user *buffer, size_t count, loff_t *pos)

{

char debug_string[16];

int debug_no;

memset(debug_string, 0x00, sizeof(debug_string));

if (count >= sizeof(debug_string)) {

printk("%s line %d, fatal error, write count exceed max buffer size.\n", __func__, __LINE__);

return -EINVAL;

}

if (copy_from_user(debug_string, buffer, count)) {

printk("%s line %d, fatal error, copy from user failure.\n", __func__, __LINE__);

return -EFAULT;

}

if (sscanf(debug_string, "%d", &debug_no) <= 0) {

printk("%s line %d, fatal error, read debugno failure.\n", __func__, __LINE__);

return -EINVAL;

}

seqfile_debug_mode = debug_no;

//printk("%s line %d, debug_no %d.\n", __func__, __LINE__, debug_no);

return count;

}

static ssize_t proc_seq_read(struct file *file, char __user *buf, size_t size, loff_t *ppos)

{

ssize_t ret;

printk("%s line %d enter, pos %lld size %zu.\n", __func__, __LINE__, *ppos, size);

ret = seq_read(file, buf, size, ppos);

printk("%s line %d exit, pos %lld size %zu, ret = %zd.\n", __func__, __LINE__, *ppos, size, ret);

return ret;

}

#if LINUX_VERSION_CODE >= KERNEL_VERSION(5, 7, 0)

static const struct proc_ops seq_proc_ops_new = {

.proc_open = proc_seq_open,

.proc_read = proc_seq_read,

.proc_lseek = seq_lseek,

.proc_release = seq_release,

.proc_write = proc_seq_write,

};

#else

static struct file_operations seq_proc_ops = {

.owner = THIS_MODULE,

.open = proc_seq_open,

.release = seq_release,

.read = proc_seq_read,

.write = proc_seq_write,

.llseek = seq_lseek,

.unlocked_ioctl = NULL,

};

#endif

static struct proc_dir_entry * entry;

static int proc_hook_init(void)

{

printk("%s line %d, init. seqfile_debug_mode = %d.\n", __func__, __LINE__, seqfile_debug_mode);

#if 1

#if LINUX_VERSION_CODE >= KERNEL_VERSION(5, 7, 0)

entry = proc_create("dumptask", 0644, NULL, &seq_proc_ops_new);

#else

entry = proc_create("dumptask", 0644, NULL, &seq_proc_ops);

#endif

#else

entry = proc_create_seq("dumptask", 0644, NULL, &my_seq_ops);

#endif

if (!entry)

return -ENOMEM;

return 0;

}

static void proc_hook_exit(void)

{

if(kobj_created) {

kobject_del(&kobj);

kset_unregister(class_zilong);

}

proc_remove(entry);

printk("%s line %d, exit.\n", __func__, __LINE__);

return;

}

module_init(proc_hook_init);

module_exit(proc_hook_exit);

MODULE_AUTHOR("zlcao");

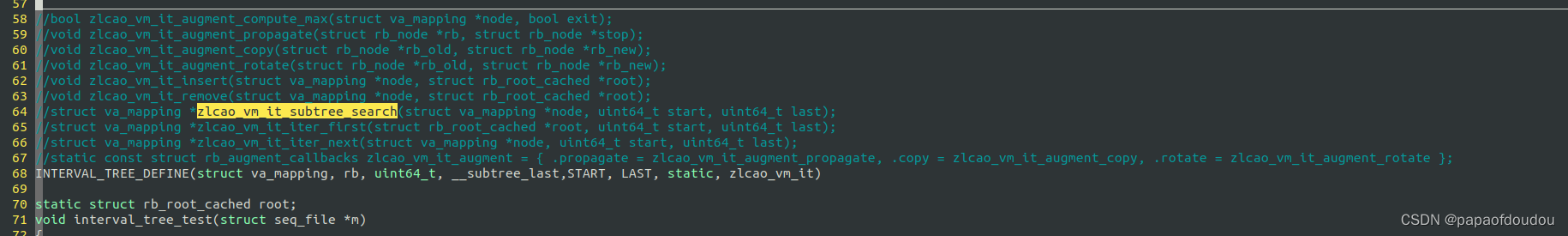

MODULE_LICENSE("GPL");区间树使用了宏模板方式定义,用户只需要在源码中调用INTERVAL_TREE_DEFINE宏,传入必要的参数,编译器便会自动展开,生成对应的区间树接口函数:

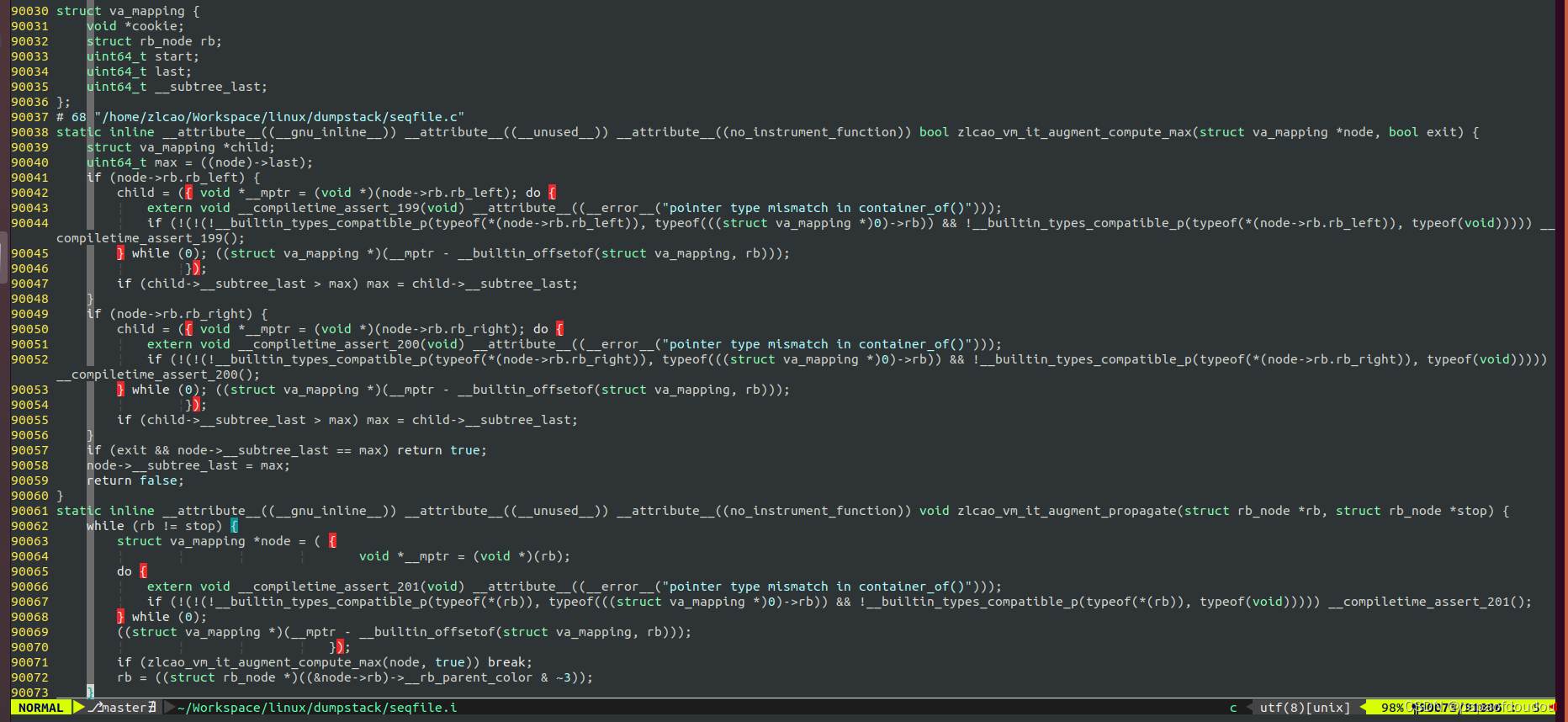
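The macro-template idea can be tried in user space on a much smaller scale. The sketch below is not the kernel macro: RANGE_HELPERS_DEFINE, struct va_range, VA_START and VA_LAST are hypothetical names made up for illustration. It only shows the mechanism that INTERVAL_TREE_DEFINE relies on, where accessor macros and a name prefix are passed in and token pasting (##) stamps out typed functions.

```c
#include <assert.h>

/*
 * Hypothetical miniature of a macro template: given a struct type, two
 * accessor macros and a prefix, generate PREFIX_overlaps() and
 * PREFIX_length(). Intervals are closed: [start, last].
 */
#define RANGE_HELPERS_DEFINE(TYPE, START, LAST, PREFIX)                  \
static inline int PREFIX##_overlaps(const TYPE *n,                       \
				    unsigned long start,                 \
				    unsigned long last)                  \
{                                                                        \
	return START(n) <= last && start <= LAST(n);                     \
}                                                                        \
static inline unsigned long PREFIX##_length(const TYPE *n)               \
{                                                                        \
	assert(START(n) <= LAST(n));                                     \
	return LAST(n) - START(n) + 1;                                   \
}

/* Illustrative node type; only the two endpoints matter here. */
struct va_range {
	unsigned long start;
	unsigned long last;
};

#define VA_START(n) ((n)->start)
#define VA_LAST(n)  ((n)->last)

/* Expands into va_range_overlaps() and va_range_length(). */
RANGE_HELPERS_DEFINE(struct va_range, VA_START, VA_LAST, va_range)
```

After the expansion, va_range_overlaps(&r, 10, 20) is an ordinary function call. The kernel macro works the same way, except that it takes more parameters (the rb_node member, the endpoint type, the subtree field, the storage class) and generates the insert, remove, iter_first and iter_next functions for the chosen prefix.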

To inspect the generated code, run the preprocessor to expand the macro template and format the output with astyle.

make -C /lib/modules/5.4.0-148-generic/build M=/home/zlcao/Workspace/linux/dumpstack seqfile.i

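rb_first returns the leftmost node of the red-black tree, that is, the first node in sorted order. Its start is 3, the smallest of all nodes, which confirms that the interval tree uses START as its key. The __subtree_last field points to the same conclusion: it records the largest last found in a node's subtree. If the right endpoint were the key, that value could be obtained simply by walking to the rightmost node of the subtree, so why keep a separate __subtree_last field for it? Linux is generally frugal with memory and does not spend space on redundant fields.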
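The argument above can be checked with a small user-space model. This is a minimal sketch under stated assumptions, not the kernel implementation: it uses a plain unbalanced binary search tree instead of a red-black tree, and struct it_node, it_insert, it_first, it_find_overlap and it_free are hypothetical names. What it keeps from the kernel design is the part under discussion: nodes are ordered by start, and each node caches the largest last of its subtree so that an overlap query can prune whole subtrees.

```c
#include <assert.h>
#include <stdint.h>
#include <stdlib.h>

struct it_node {
	uint64_t start, last;         /* closed interval [start, last] */
	uint64_t subtree_last;        /* largest last in this subtree */
	struct it_node *left, *right;
};

/* Insert keyed on start; raise subtree_last on every ancestor passed. */
static struct it_node *it_insert(struct it_node *root,
				 uint64_t start, uint64_t last)
{
	struct it_node **link = &root;
	struct it_node *n = calloc(1, sizeof(*n));

	assert(start <= last);
	if (!n)
		return root;
	n->start = start;
	n->last = last;
	n->subtree_last = last;

	while (*link) {
		struct it_node *parent = *link;

		if (parent->subtree_last < last)
			parent->subtree_last = last;
		link = (start < parent->start) ? &parent->left : &parent->right;
	}
	*link = n;
	return root;
}

/* Leftmost node, i.e. the smallest start (the role rb_first() plays). */
static struct it_node *it_first(struct it_node *root)
{
	while (root && root->left)
		root = root->left;
	return root;
}

/* Return the overlapping node with the smallest start, or NULL. */
static struct it_node *it_find_overlap(struct it_node *n,
				       uint64_t start, uint64_t last)
{
	struct it_node *hit;

	if (!n || n->subtree_last < start)
		return NULL;          /* whole subtree ends before the query */
	hit = it_find_overlap(n->left, start, last);
	if (hit)
		return hit;
	if (n->start > last)
		return NULL;          /* n and its right subtree start too late */
	if (n->last >= start)
		return n;
	return it_find_overlap(n->right, start, last);
}

static void it_free(struct it_node *n)
{
	if (!n)
		return;
	it_free(n->left);
	it_free(n->right);
	free(n);
}
```

Inserting [10,20], [3,5], [15,40] and [30,35] in that order leaves [10,20] at the root with subtree_last equal to 40, while it_first() returns the node starting at 3. The largest last lives in [15,40], which is not the rightmost node, so the cached field carries information that the start ordering alone cannot provide.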