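Preface

Earlier posts in this series covered FFmpeg in detail: an introduction, compilation, stream reading, and decoding. This post continues directly from the previous one: once the stream has been read and decoded, the audio is played with SDL.

For an introduction to SDL and how to build it, see the previous post.

Copyright notice: 山河君. Reproduction without the author's permission is prohibited.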

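I. SDL API overview

1. Initializing subsystems

int SDL_Init(Uint32 flags);

flags can be any one of the following values, or several of them combined with bitwise OR:

- SDL_INIT_TIMER: timer subsystem
- SDL_INIT_AUDIO: audio subsystem
- SDL_INIT_VIDEO: video subsystem; automatically initializes the events subsystem
- SDL_INIT_JOYSTICK: joystick subsystem; automatically initializes the events subsystem
- SDL_INIT_HAPTIC: haptic (force feedback) subsystem
- SDL_INIT_GAMECONTROLLER: game controller subsystem; automatically initializes the joystick subsystem
- SDL_INIT_EVENTS: events subsystem
- SDL_INIT_EVERYTHING: all of the above subsystems
- SDL_INIT_NOPARACHUTE: kept for compatibility; this flag is ignored

Return value: 0 on success, or a negative error code on failure; call SDL_GetError() for more information.

Note: once the subsystems are no longer needed, call SDL_Quit() to shut them down.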

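2. Opening the audio device

int SDL_OpenAudio(SDL_AudioSpec * desired, SDL_AudioSpec * obtained);

Parameters:

- desired: an SDL_AudioSpec structure describing the desired output format. See the SDL_OpenAudioDevice documentation for details on how to prepare this structure.
- obtained: an SDL_AudioSpec structure that is filled in with the actual parameters, or NULL.

Return value: 0 on success, with the actual hardware parameters placed in the structure pointed to by obtained; a negative value on failure.

Note: SDL_OpenAudioDevice() is the more capable alternative, because it lets you choose which device to open.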

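3. The SDL_AudioSpec structure

A structure that describes the audio output format. It also holds the callback that is invoked when the audio device needs more data.

| Field | Description |
|---|---|
| int freq | Samples per second |
| SDL_AudioFormat format | Audio data format |
| Uint8 channels | Number of separate sound channels |
| Uint8 silence | Audio buffer silence value |
| Uint16 samples | Audio buffer size in samples |
| Uint32 size | Audio buffer size in bytes |
| SDL_AudioCallback callback | Function called when the audio device needs more data |
| void *userdata | Pointer passed to the callback |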

其中

-

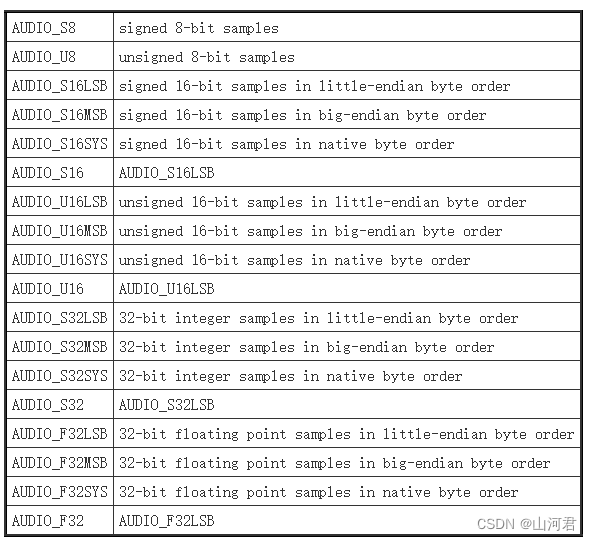

音频数据格式参数值如下:

-

支持的通道数如下

SDL2.0以后可以支持的值为 1(单声道)、2(立体声)、4(四声道)和 6 (5.1) -

回调函数模型如下

void SDL_AudioCallback(void* userdata, Uint8* stream, int len) -

样本中的音频缓冲区大小

由于本例子中播放的是MP3,MP3一帧数据大小为1152采样,所以该值为1152
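The relationship between the `samples` and `size` fields can be worked out by hand. The sketch below is only an illustration: `AudioBufferBytes` and `AudioBufferMillis` are hypothetical helper names, not SDL functions, and the numbers assume the 48 kHz, stereo, 16-bit output used later in this post.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical helper (not part of SDL): number of bytes in one callback buffer.
// bytesPerSample is 2 for 16-bit formats such as AUDIO_S16SYS.
inline std::uint32_t AudioBufferBytes(std::uint16_t samples, std::uint8_t channels,
                                      std::uint32_t bytesPerSample)
{
    return static_cast<std::uint32_t>(samples) * channels * bytesPerSample;
}

// Hypothetical helper: playback time covered by one buffer, in milliseconds.
inline double AudioBufferMillis(std::uint16_t samples, int freq)
{
    assert(freq > 0);
    return 1000.0 * samples / freq;
}
```

With samples = 1152, two channels, and two bytes per sample, one buffer holds 4608 bytes and covers 24 ms at 48 kHz, so under these assumptions the callback fires roughly 42 times per second.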

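4. Pausing the audio device

void SDL_PauseAudio(int pause_on);

Parameter: a non-zero value pauses playback; 0 resumes it.

Note: the usual convention is to pass 1 to pause. A device opened with SDL_OpenAudio() plays silence until SDL_PauseAudio(0) is called.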

二、使用实例

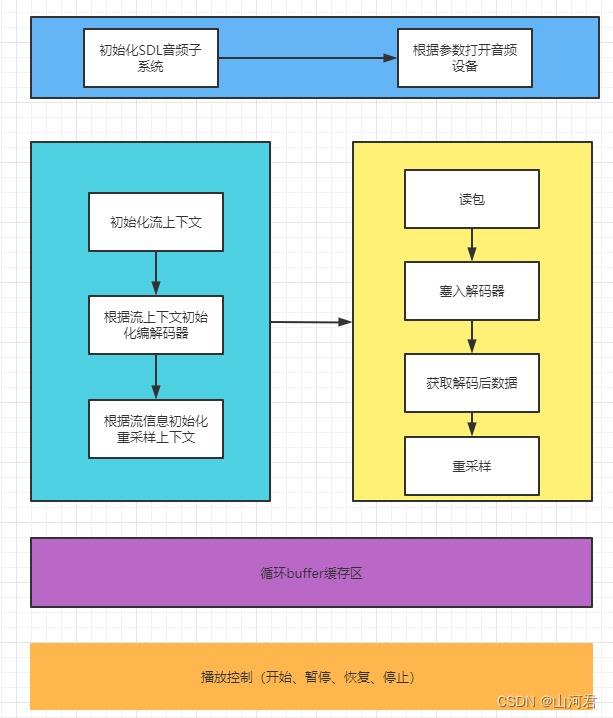

1.粗浅设计

播放的基础是建立在正确拉流、并进行编解码的基础之上,所以下面的代码是对之前的例子的修改和补充。关于拉流、解码的API就不进行过多的介绍了。

整体的设计结构如下图:

整体对外接口

bool StartPlay(const char* pAudioFilePath);

bool StopPlay();

bool PausePlay();

bool ResumePlay();

分别对应开始播放、暂停播放、恢复播放、停止播放。
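The four calls only make sense in certain states: pausing requires active playback, and resuming requires a paused player. A minimal sketch of those transitions follows. `PlayStateMachine` and `PlayState` are hypothetical names used only for illustration, and no audio is involved.

```cpp
#include <cassert>

// Hypothetical stand-in for the player's state handling; no audio is involved.
enum class PlayState { Stopped, Playing, Paused };

class PlayStateMachine
{
public:
    // Each request returns false when it is not valid in the current state.
    bool Start()
    {
        if (state_ == PlayState::Playing) return false;
        state_ = PlayState::Playing;
        return true;
    }
    bool Stop()
    {
        if (state_ == PlayState::Stopped) return false;
        state_ = PlayState::Stopped;
        return true;
    }
    bool Pause()
    {
        if (state_ != PlayState::Playing) return false;
        state_ = PlayState::Paused;
        return true;
    }
    bool Resume()
    {
        if (state_ != PlayState::Paused) return false;
        state_ = PlayState::Playing;
        return true;
    }
    PlayState State() const { return state_; }

private:
    PlayState state_ = PlayState::Stopped;
};

// Usage: walk through one start/pause/resume/stop cycle.
inline void DemoPlayStateMachine()
{
    PlayStateMachine player;
    player.Start();
    player.Pause();
    assert(player.State() == PlayState::Paused);
    player.Resume();
    player.Stop();
    assert(player.State() == PlayState::Stopped);
}
```

Keeping the state update next to the validity check makes it harder to forget either one when the real SDL calls are added around them.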

2. Header file

The code is as follows:

#pragma once

extern "C" {

#include "include/libavformat/avformat.h"

#include "include/libavcodec/avcodec.h"

#include "include/libavutil/avutil.h"

#include "include/libswresample/swresample.h"

}

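#include "SDL.h"

#include <cstdarg>
#include <cstdint>
#include <cstdio>
#include <iostream>
#include <memory>
#include <mutex>
#include <string>
#include <thread>
#include <vector>

#include <Windows.h>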

namespace AudioReadFrame

{

#define MAX_AUDIO_FRAME_SIZE 192000 // 1 second of 48 kHz, 16-bit, 2-channel audio

#define MAX_CACHE_BUFFER_SIZE (50*1024*1024) // 50 MB

// Custom struct that stores stream information

struct FileAudioInst

{

long long duration; ///< total duration, milliseconds

long long curl_time; ///< current playback position, milliseconds

int sample_rate; ///< samples per second

int channels; ///< number of audio channels

FileAudioInst()

{

duration = 0;

curl_time = 0;

sample_rate = 0;

channels = 0;

}

};

// State of the stream-reading thread

enum ThreadState

{

eRun = 1,

eExit,

};

enum PlaySate

{

ePlay = 1,

ePause,

eStop

};

struct CircleBuffer

{

CircleBuffer() : buffLen_(0), dataBegin(0), dataEnd(0) { // same order as the member declarations

}

uint32_t buffLen_;

uint32_t dataBegin;

uint32_t dataEnd;

std::vector<uint8_t> decodeBuffer_;

};

class CAudioReadFrame

{

public:

CAudioReadFrame();

~CAudioReadFrame();

public:

bool StartPlay(const char* pAudioFilePath);

bool StopPlay();

bool PausePlay();

bool ResumePlay();

private:

// Load the stream file

bool LoadAudioFile(const char* pAudioFilePath);

// Start reading the stream

bool StartReadFile();

// Stop reading the stream

bool StopReadFile();

// Release resources

bool FreeResources();

// Change the state of the stream-reading thread

void ChangeThreadState(ThreadState eThreadState);

// Stream-reading thread

void ReadFrameThreadProc();

// Convert UTF-8 to GBK

std::string UTF8ToGBK(const std::string& strUTF8);

private:

uint32_t GetBufferFromCache(uint8_t* buffer, int bufferLen);

void PushBufferCache(uint8_t* buffer, int bufferLen);

void ClearCache();

void AudioCallback(Uint8* stream, int len);

static void SDL_AudioCallback(void* userdata, Uint8* stream, int len);

private:

typedef std::unique_ptr<std::thread> ThreadPtr;

// Redirects the FFmpeg log output to a local file (enabled by defining FFMPEG_LOG_OUT)

#ifdef FFMPEG_LOG_OUT

private:

static FILE* m_pLogFile;

static void LogCallback(void* ptr, int level, const char* fmt, va_list vl);

#endif

// Dumps the decoded and the played audio data to files

#define DUMP_AUDIO 1

#if DUMP_AUDIO

FILE* decode_file = nullptr;

FILE* play_file = nullptr;

#endif // DUMP_AUDIO

private:

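// Default values matter here: FreeResources() checks these members before anything is loaded

bool m_bIsReadyForRead = false;

int m_nStreamIndex = -1;

uint8_t* m_pSwrBuffer = nullptr;

std::mutex m_lockResources;

std::mutex m_lockThread;

FileAudioInst* m_pFileAudioInst = nullptr;

ThreadPtr m_pReadFrameThread;

ThreadState m_eThreadState = ThreadState::eExit;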

private:

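SwrContext* m_pSwrContext = nullptr; // resampling context

AVFrame* m_pAVFrame = nullptr; // decoded audio frame

AVCodec* m_pAVCodec = nullptr; // decoder

AVPacket* m_pAVPack = nullptr; // packet read from the stream

AVCodecParameters* m_pAVCodecParameters = nullptr; // codec parameters

AVCodecContext* m_pAVCodecContext = nullptr; // decoding context

AVFormatContext* m_pAVFormatContext = nullptr; // I/O (demuxing) context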

private:

PlaySate m_ePlayState;

std::mutex cacheBufferLock;

CircleBuffer decodeBuffer_;

};

}
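The CircleBuffer declared in the header is a byte ring buffer: the decoding thread appends data at one end and the audio callback consumes it from the other. As a point of comparison, here is a simplified sketch of the same idea. `ByteRing` is a hypothetical class, not the one used in this post; it tracks the stored size explicitly so that a full buffer and an empty buffer cannot be confused, and it has no locking.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <vector>

// Simplified byte ring buffer; a sketch, not the CircleBuffer from the header above.
// It is not thread-safe: callers would need their own mutex.
class ByteRing
{
public:
    explicit ByteRing(std::size_t capacity) : data_(capacity), begin_(0), size_(0)
    {
        assert(capacity > 0);
    }

    // Appends up to len bytes; returns how many were stored (fewer when the buffer is full).
    std::size_t Push(const std::uint8_t* src, std::size_t len)
    {
        len = std::min(len, data_.size() - size_);
        const std::size_t end = (begin_ + size_) % data_.size();
        const std::size_t first = std::min(len, data_.size() - end);
        std::memcpy(data_.data() + end, src, first);          // part before the wrap
        std::memcpy(data_.data(), src + first, len - first);  // part after the wrap
        size_ += len;
        return len;
    }

    // Removes up to len bytes into dst; returns how many were copied.
    std::size_t Pop(std::uint8_t* dst, std::size_t len)
    {
        len = std::min(len, size_);
        const std::size_t first = std::min(len, data_.size() - begin_);
        std::memcpy(dst, data_.data() + begin_, first);
        std::memcpy(dst + first, data_.data(), len - first);
        begin_ = (begin_ + len) % data_.size();
        size_ -= len;
        return len;
    }

    std::size_t Size() const { return size_; }

private:
    std::vector<std::uint8_t> data_;
    std::size_t begin_;  // index of the oldest stored byte
    std::size_t size_;   // number of bytes currently stored
};
```

Unlike the implementation in this post, which discards the cached data when a write would overrun the read position, this sketch reports a short write and leaves the decision to the caller.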

3. Implementation file

The code is as follows:

#include "CAudioReadFrame.h"

#include <sstream>

namespace AudioReadFrame

{

#ifdef FFMPEG_LOG_OUT

FILE* CAudioReadFrame::m_pLogFile = nullptr;

void CAudioReadFrame::LogCallback(void* ptr, int level, const char* fmt, va_list vl)

{

if (m_pLogFile == nullptr)

{

m_pLogFile = fopen("E:\\log\\log.txt", "w+");

}

if (m_pLogFile)

{

vfprintf(m_pLogFile, fmt, vl);

fflush(m_pLogFile);

}

}

#endif

CAudioReadFrame::CAudioReadFrame() :

m_ePlayState(PlaySate::eStop)

{

std::cout << av_version_info() << std::endl;

#if DUMP_AUDIO

decode_file = fopen("E:\\log\\decode_file.pcm", "wb+");

play_file = fopen("E:\\log\\play_file.pcm", "wb+");

#endif

#ifdef FFMPEG_LOG_OUT

if (m_pLogFile != nullptr)

{

fclose(m_pLogFile);

m_pLogFile = nullptr;

}

time_t t = time(nullptr);

struct tm* now = localtime(&t);

std::stringstream time;

time << now->tm_year + 1900 << "/";

time << now->tm_mon + 1 << "/";

time << now->tm_mday << "/";

time << now->tm_hour << ":";

time << now->tm_min << ":";

time << now->tm_sec << std::endl;

std::cout << time.str();

av_log_set_level(AV_LOG_TRACE); // set the log level

av_log_set_callback(LogCallback);

// Pass the text as an argument, never as the format string

av_log(NULL, AV_LOG_INFO, "%s", time.str().c_str());

#endif

if (SDL_Init(SDL_INIT_AUDIO) < 0) {

av_log(NULL, AV_LOG_ERROR, "SDL_Init fail:%s .\n", SDL_GetError());

}

ClearCache();

}

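CAudioReadFrame::~CAudioReadFrame()
{
    // Stop the audio callback and the reader thread before closing the dump files they write to
    SDL_CloseAudio();
    StopReadFile();

#if DUMP_AUDIO
    if (decode_file) {
        fclose(decode_file);
        decode_file = nullptr;
    }
    if (play_file) {
        fclose(play_file);
        play_file = nullptr;
    }
#endif

    // Shut down the subsystems initialized by SDL_Init in the constructor
    SDL_Quit();
}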

bool CAudioReadFrame::StartPlay(const char* pAudioFilePath)

{

if (m_ePlayState == PlaySate::ePlay)

return false;

if (!LoadAudioFile(pAudioFilePath))

{

return false;

}

StartReadFile();

SDL_PauseAudio(0);

m_ePlayState = PlaySate::ePlay;

return true;

}

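bool CAudioReadFrame::StopPlay()
{
    if (m_ePlayState == PlaySate::eStop)
        return false;

    // Close the audio device first so the callback no longer runs while resources are freed
    SDL_CloseAudio();
    StopReadFile();
    ClearCache();
    m_ePlayState = PlaySate::eStop;
    return true;
}

bool CAudioReadFrame::PausePlay()
{
    // Pausing is only valid while playing
    if (m_ePlayState != PlaySate::ePlay)
        return false;

    SDL_PauseAudio(1);
    m_ePlayState = PlaySate::ePause;
    return true;
}

bool CAudioReadFrame::ResumePlay()
{
    // Resuming is only valid while paused
    if (m_ePlayState != PlaySate::ePause)
        return false;

    SDL_PauseAudio(0);
    m_ePlayState = PlaySate::ePlay;
    return true;
}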

bool CAudioReadFrame::LoadAudioFile(const char* pAudioFilePath)

{

ChangeThreadState(ThreadState::eExit);

FreeResources();

av_log_set_level(AV_LOG_TRACE); // set the log level

m_nStreamIndex = -1;

m_pAVFormatContext = avformat_alloc_context();

m_pAVFrame = av_frame_alloc();

m_pSwrContext = swr_alloc();

m_pFileAudioInst = new FileAudioInst;

m_pSwrBuffer = (uint8_t *)av_malloc(MAX_AUDIO_FRAME_SIZE);

m_pAVPack = av_packet_alloc();

//Open an input stream and read the header

if (avformat_open_input(&m_pAVFormatContext, pAudioFilePath, NULL, NULL) != 0) {

av_log(NULL, AV_LOG_ERROR, "Couldn't open input stream.\n");

return false;

}

//Read packets of a media file to get stream information

if (avformat_find_stream_info(m_pAVFormatContext, NULL) < 0) {

av_log(NULL, AV_LOG_ERROR, "Couldn't find stream information.\n");

return false;

}

for (unsigned int i = 0; i < m_pAVFormatContext->nb_streams; i++)

{

// A URL can carry several streams; only MP3 is read here, so look for the audio stream only

if (m_pAVFormatContext->streams[i]->codecpar->codec_type == AVMEDIA_TYPE_AUDIO) {

m_nStreamIndex = i;

break;

}

}

if (m_nStreamIndex == -1) {

av_log(NULL, AV_LOG_ERROR, "Didn't find a audio stream.\n");

return false;

}

m_pAVCodecParameters = m_pAVFormatContext->streams[m_nStreamIndex]->codecpar;

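m_pAVCodec = (AVCodec *)avcodec_find_decoder(m_pAVCodecParameters->codec_id);

if (m_pAVCodec == nullptr) {

av_log(NULL, AV_LOG_ERROR, "Could not find decoder.\n");

return false;

}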

// Open codec

m_pAVCodecContext = avcodec_alloc_context3(m_pAVCodec);

avcodec_parameters_to_context(m_pAVCodecContext, m_pAVCodecParameters);

if (avcodec_open2(m_pAVCodecContext, m_pAVCodec, NULL) < 0) {

av_log(NULL, AV_LOG_ERROR, "Could not open codec.\n");

return false;

}

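// Initialize resampling: the output is stereo, signed 16-bit, 48 kHz

AVChannelLayout outChannelLayout;

AVChannelLayout inChannelLayout;

// Fill in complete default layouts; setting nb_channels alone leaves the other fields uninitialized

av_channel_layout_default(&outChannelLayout, 2);

av_channel_layout_default(&inChannelLayout, m_pAVCodecContext->ch_layout.nb_channels);

if (swr_alloc_set_opts2(&m_pSwrContext, &outChannelLayout, AV_SAMPLE_FMT_S16, 48000,

&inChannelLayout, m_pAVCodecContext->sample_fmt, m_pAVCodecContext->sample_rate, 0, NULL)

!= 0)

{

av_log(NULL, AV_LOG_ERROR, "swr_alloc_set_opts2 fail.\n");

return false;

}

if (swr_init(m_pSwrContext) < 0)

{

av_log(NULL, AV_LOG_ERROR, "swr_init fail.\n");

return false;

}

// Save the stream information

m_pFileAudioInst->duration = m_pAVFormatContext->duration / 1000; // microseconds -> milliseconds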

m_pFileAudioInst->channels = m_pAVCodecParameters->ch_layout.nb_channels;

m_pFileAudioInst->sample_rate = m_pAVCodecParameters->sample_rate;

SDL_AudioSpec want;

SDL_zero(want); // clear the fields that are not set explicitly below

want.freq = 48000;

want.channels = 2;

want.format = AUDIO_S16SYS;

want.samples = 1152;

want.userdata = this;

want.callback = SDL_AudioCallback;

if (SDL_OpenAudio(&want, NULL) < 0) {

av_log(NULL, AV_LOG_ERROR, "SDL_OpenAudio fail:%s .\n", SDL_GetError());

return false;

}

m_bIsReadyForRead = true;

return true;

}

bool CAudioReadFrame::StartReadFile()

{

if (!m_bIsReadyForRead)

{

av_log(NULL, AV_LOG_ERROR, "File not ready");

return false;

}

if (m_pReadFrameThread != nullptr)

{

if (m_pReadFrameThread->joinable())

{

m_pReadFrameThread->join();

m_pReadFrameThread.reset(nullptr);

}

}

ChangeThreadState(ThreadState::eRun);

m_pReadFrameThread.reset(new std::thread(&CAudioReadFrame::ReadFrameThreadProc, this));

return true;

}

bool CAudioReadFrame::StopReadFile()

{

ChangeThreadState(ThreadState::eExit);

if (m_pReadFrameThread != nullptr)

{

if (m_pReadFrameThread->joinable())

{

m_pReadFrameThread->join();

m_pReadFrameThread.reset(nullptr);

}

}

FreeResources();

return true;

}

void CAudioReadFrame::ReadFrameThreadProc()

{

while (true)

{

if (m_eThreadState == ThreadState::eExit)

{

break;

}

// Read one packet
int nRet = av_read_frame(m_pAVFormatContext, m_pAVPack);
if (nRet != 0)
{
    // A non-zero result means end of file or a read error; stop the thread either way
    av_log(NULL, AV_LOG_ERROR, "read frame no data error:%d\n", nRet);
    ChangeThreadState(ThreadState::eExit);
    continue;
}

// Skip packets that belong to other streams
if (m_pAVPack->stream_index != m_nStreamIndex)
{
    av_packet_unref(m_pAVPack);
    continue;
}

// Send the packet to the decoder; the decoder keeps its own reference, so release ours right away
nRet = avcodec_send_packet(m_pAVCodecContext, m_pAVPack);
av_packet_unref(m_pAVPack);
if (nRet < 0) {
    av_log(NULL, AV_LOG_ERROR, "avcodec_send_packet error:%d\n", nRet);
    continue;
}

// Receive the decoded frame from the decoder
nRet = avcodec_receive_frame(m_pAVCodecContext, m_pAVFrame);
if (nRet != 0) {
    av_log(NULL, AV_LOG_ERROR, "avcodec_receive_frame error:%d\n", nRet);
    continue;
}

// Resample to 48 kHz, stereo, 16-bit; the output capacity is given in samples per channel, not bytes
memset(m_pSwrBuffer, 0, MAX_AUDIO_FRAME_SIZE);
const int maxOutSamples = MAX_AUDIO_FRAME_SIZE / 4; // 2 channels x 2 bytes per sample
nRet = swr_convert(m_pSwrContext, &m_pSwrBuffer, maxOutSamples, (const uint8_t **)m_pAVFrame->data, m_pAVFrame->nb_samples);
if (nRet < 0)
{
    av_log(NULL, AV_LOG_ERROR, "swr_convert error:%d\n", nRet);
    continue;
}

// Size in bytes of the resampled data
int buffSize = av_samples_get_buffer_size(NULL, 2, nRet, AV_SAMPLE_FMT_S16, 1);
if (buffSize <= 0)
{
    continue;
}

#if DUMP_AUDIO
if (decode_file)
{
    fwrite((char*)m_pSwrBuffer, 1, buffSize, decode_file);
}
#endif

PushBufferCache((uint8_t*)m_pSwrBuffer, buffSize);

}

}

bool CAudioReadFrame::FreeResources()

{

std::lock_guard<std::mutex> locker(m_lockResources);

if (m_pSwrBuffer)

{

av_free(m_pSwrBuffer);

m_pSwrBuffer = nullptr;

}

if (m_pFileAudioInst)

{

delete m_pFileAudioInst;

m_pFileAudioInst = nullptr;

}

if (m_pSwrContext)

{

swr_free(&m_pSwrContext);

m_pSwrContext = nullptr;

}

if (m_pAVFrame)

{

av_frame_free(&m_pAVFrame);

m_pAVFrame = nullptr;

}

if (m_pAVPack)

{

av_packet_free(&m_pAVPack);

m_pAVPack = nullptr;

}

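if (m_pAVFormatContext)

{

// A context opened with avformat_open_input is closed with avformat_close_input, which also resets the pointer

avformat_close_input(&m_pAVFormatContext);

m_pAVFormatContext = nullptr;

}

if (m_pAVCodecContext)

{

// avcodec_free_context closes the codec and frees the context itself

avcodec_free_context(&m_pAVCodecContext);

m_pAVCodecContext = nullptr;

}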

m_bIsReadyForRead = false;

return true;

}

void CAudioReadFrame::ChangeThreadState(ThreadState eThreadState)

{

std::lock_guard<std::mutex> locker(m_lockThread);

if (m_eThreadState != eThreadState)

{

m_eThreadState = eThreadState;

}

}

std::string CAudioReadFrame::UTF8ToGBK(const std::string& strUTF8)

{

int len = MultiByteToWideChar(CP_UTF8, 0, strUTF8.c_str(), -1, NULL, 0);

wchar_t* wszGBK = new wchar_t[len + 1];

memset(wszGBK, 0, len * 2 + 2);

MultiByteToWideChar(CP_UTF8, 0, strUTF8.c_str(), -1, wszGBK, len);

len = WideCharToMultiByte(CP_ACP, 0, wszGBK, -1, NULL, 0, NULL, NULL);

char *szGBK = new char[len + 1];

memset(szGBK, 0, len + 1);

WideCharToMultiByte(CP_ACP, 0, wszGBK, -1, szGBK, len, NULL, NULL);

//strUTF8 = szGBK;

std::string strTemp(szGBK);

delete[]szGBK;

delete[]wszGBK;

return strTemp;

}

void CAudioReadFrame::PushBufferCache(uint8_t* buffer, int bufferLen)

{

std::lock_guard<std::mutex> locker(cacheBufferLock);

if (decodeBuffer_.dataBegin == decodeBuffer_.dataEnd)

{

// Begin and end only coincide when the cache is empty, so restart from the beginning

decodeBuffer_.dataBegin = 0;

decodeBuffer_.dataEnd = 0;

decodeBuffer_.buffLen_ = 0;

}

else if (decodeBuffer_.dataBegin < decodeBuffer_.dataEnd)

{

if ((bufferLen + decodeBuffer_.dataEnd) > MAX_CACHE_BUFFER_SIZE)

{

if ((bufferLen + decodeBuffer_.dataEnd - MAX_CACHE_BUFFER_SIZE) >= decodeBuffer_.dataBegin)

{

av_log(NULL, AV_LOG_ERROR, "Cache overflow: dropping cached audio and restarting from the beginning\n");

decodeBuffer_.dataBegin = 0;

decodeBuffer_.dataEnd = 0;

decodeBuffer_.buffLen_ = 0;

}

else

{

memcpy(decodeBuffer_.decodeBuffer_.data() + decodeBuffer_.dataEnd, buffer, MAX_CACHE_BUFFER_SIZE - decodeBuffer_.dataEnd);

memcpy(decodeBuffer_.decodeBuffer_.data(), (uint8_t*)buffer + (MAX_CACHE_BUFFER_SIZE - decodeBuffer_.dataEnd),

bufferLen + decodeBuffer_.dataEnd - MAX_CACHE_BUFFER_SIZE);

decodeBuffer_.dataEnd = bufferLen + decodeBuffer_.dataEnd - MAX_CACHE_BUFFER_SIZE;

decodeBuffer_.buffLen_ += bufferLen;

return;

}

}

}

else if (decodeBuffer_.dataBegin > decodeBuffer_.dataEnd)

{

if (bufferLen + decodeBuffer_.dataEnd >= decodeBuffer_.dataBegin)

{

av_log(NULL, AV_LOG_ERROR, "Cache overflow: dropping cached audio and restarting from the beginning\n");

decodeBuffer_.dataBegin = 0;

decodeBuffer_.dataEnd = 0;

decodeBuffer_.buffLen_ = 0;

}

}

memcpy(decodeBuffer_.decodeBuffer_.data() + decodeBuffer_.dataEnd, buffer, bufferLen);

decodeBuffer_.dataEnd += bufferLen;

decodeBuffer_.buffLen_ += bufferLen;

}

uint32_t CAudioReadFrame::GetBufferFromCache(uint8_t* buffer, int bufferLen)

{

std::lock_guard<std::mutex> locker(cacheBufferLock);

uint32_t iReadLen = 0;

if (decodeBuffer_.dataBegin < decodeBuffer_.dataEnd)

{

iReadLen = (decodeBuffer_.dataBegin + bufferLen) > decodeBuffer_.dataEnd ? decodeBuffer_.dataEnd - decodeBuffer_.dataBegin : bufferLen;

memcpy(buffer, decodeBuffer_.decodeBuffer_.data() + decodeBuffer_.dataBegin, iReadLen);

decodeBuffer_.dataBegin += iReadLen;

}

else if (decodeBuffer_.dataBegin > decodeBuffer_.dataEnd)

{

if (decodeBuffer_.dataBegin + bufferLen > MAX_CACHE_BUFFER_SIZE)

{

uint32_t iLen1 = MAX_CACHE_BUFFER_SIZE - decodeBuffer_.dataBegin;

memcpy(buffer, decodeBuffer_.decodeBuffer_.data() + decodeBuffer_.dataBegin, iLen1);

decodeBuffer_.dataBegin = 0;

uint32_t len2 = bufferLen - iLen1;

len2 = decodeBuffer_.dataBegin + len2 > decodeBuffer_.dataEnd ? decodeBuffer_.dataEnd - decodeBuffer_.dataBegin : len2;

memcpy((uint8_t*)(buffer)+iLen1, decodeBuffer_.decodeBuffer_.data() + decodeBuffer_.dataBegin, len2);

decodeBuffer_.dataBegin += len2;

iReadLen = iLen1 + len2;

}

else

{

iReadLen = bufferLen;

memcpy(buffer, decodeBuffer_.decodeBuffer_.data() + decodeBuffer_.dataBegin, iReadLen);

decodeBuffer_.dataBegin += iReadLen;

}

}

if (iReadLen < bufferLen)

{

memset((uint8_t*)buffer + iReadLen, 0, bufferLen - iReadLen);

}

decodeBuffer_.buffLen_ -= iReadLen;

return iReadLen;

}

void CAudioReadFrame::ClearCache()

{

std::lock_guard<std::mutex> locker(cacheBufferLock);

decodeBuffer_.decodeBuffer_.clear();

decodeBuffer_.decodeBuffer_.resize(MAX_CACHE_BUFFER_SIZE);

decodeBuffer_.dataBegin = 0;

decodeBuffer_.dataEnd = 0;

decodeBuffer_.buffLen_ = 0;

}

void CAudioReadFrame::SDL_AudioCallback(void* userdata, Uint8* stream, int len) {

CAudioReadFrame* pThis = static_cast<CAudioReadFrame*>(userdata);

if (pThis) {

pThis->AudioCallback(stream, len);

}

}

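void CAudioReadFrame::AudioCallback(Uint8* stream, int len) {
    SDL_memset(stream, 0, len);
    if (len <= 0 || m_pFileAudioInst == nullptr)
    {
        return;
    }

    // Size the scratch buffer from len: SDL may request more than a fixed-size array can hold
    std::vector<uint8_t> playAudioData(len);
    uint32_t playLen = GetBufferFromCache(playAudioData.data(), len);
    if (playLen == 0)
    {
        return;
    }

    // The cached data is 48 kHz, stereo, 16-bit, so convert bytes to milliseconds with the output format
    m_pFileAudioInst->curl_time += 1000LL * playLen / 2 / 2 / 48000;
    SDL_MixAudio(stream, playAudioData.data(), playLen, SDL_MIX_MAXVOLUME);

#if DUMP_AUDIO
    if (play_file)
    {
        fwrite(playAudioData.data(), len, 1, play_file);
    }
#endif
}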

}
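The callback above adds a rounded-down millisecond value on every call, so the position can drift when a buffer does not cover a whole number of milliseconds. One possible alternative, sketched here under the assumption of 48 kHz, stereo, 16-bit output, is to accumulate bytes and convert only when the position is read. `PlaybackClock` is a hypothetical helper and is not part of the code in this post.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical helper: tracks the playback position from the bytes handed to the audio device.
class PlaybackClock
{
public:
    PlaybackClock(int sampleRate, int channels, int bytesPerSample)
        : bytesPerSecond_(static_cast<std::int64_t>(sampleRate) * channels * bytesPerSample)
    {
        assert(bytesPerSecond_ > 0);
    }

    // Call once per audio callback with the number of bytes actually played.
    void AddBytes(std::uint32_t bytes) { totalBytes_ += bytes; }

    // Converts the running total in one step, so rounding happens only once.
    std::int64_t Milliseconds() const { return totalBytes_ * 1000 / bytesPerSecond_; }

private:
    std::int64_t bytesPerSecond_;
    std::int64_t totalBytes_ = 0;
};
```

For example, 1000 callbacks of 4096 bytes add up to 21333 ms with this approach, whereas adding a truncated 21 ms per callback would report 21000 ms.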

4. Usage example

#include "CAudioReadFrame.h"

int main()

{

AudioReadFrame::CAudioReadFrame cTest;

cTest.StartPlay("E:\\原音_女声.mp3");

system("pause");

return 0;

}

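Summary

That concludes the example of playing audio data once the stream has been read, decoded, and resampled. How to choose a playback device, how to handle large files, and how to play real-time audio streams will be covered in later posts.

If this was helpful, please give it a like!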