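I. Canal Overview

canal is an open-source project from Alibaba, written in pure Java. It parses a database's incremental logs to provide incremental data subscription and consumption, and its main supported source at present is MySQL.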

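Use cases

canal synchronizes data incrementally, not in full. By subscribing to and consuming binary log increments, canal can be used for:

- Database mirroring

- Real-time database backup

- Index building and real-time maintenance

- Business cache refresh

Concrete business scenarios

- Data synchronization, for example synchronizing data between online and offline databases;

- Data consumption, for example updating a search index incrementally based on changes to the database tables of interest;

- Data masking, for example importing live online data into another location and masking it there.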

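How MySQL master-slave replication works

The MySQL master writes data changes to its binary log (the events can be viewed with show binlog events).

The MySQL slave copies the master's binary log events to its relay log.

The MySQL slave replays the events in the relay log, applying the data changes to its own database.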

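How canal works

canal poses as a MySQL slave and sends a dump protocol request to the MySQL master.

When the MySQL master receives the dump request, it starts pushing its binary log to the slave, which in this case is canal.

canal parses the binary log objects (originally a byte stream).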
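
The position-based, incremental nature of this flow can be illustrated with a small Python sketch. It is a toy model only, not canal's code and not the MySQL replication protocol: `ToyMaster`, `ToyReplica`, and the event tuples are hypothetical names invented for illustration.

```python
class ToyMaster:
    """Stands in for a MySQL master: an append-only list plays the binary log."""

    def __init__(self):
        self.binlog = []

    def write(self, key, value):
        # Every data change is appended to the log as an event.
        self.binlog.append((key, value))

    def dump(self, position):
        # Like a dump request: return only the events from `position` onward.
        return self.binlog[position:]


class ToyReplica:
    """Stands in for a slave (or canal): tracks a position and replays new events."""

    def __init__(self):
        self.position = 0
        self.data = {}

    def sync(self, master):
        events = master.dump(self.position)
        for key, value in events:
            self.data[key] = value  # replay the change locally
        self.position += len(events)
        return len(events)


master = ToyMaster()
replica = ToyReplica()
master.write("id=1", "row A")
master.write("id=2", "row B")
first = replica.sync(master)   # pulls both events
master.write("id=2", "row B2")
second = replica.sync(master)  # pulls only the single new event
print(first, second, replica.data)
```

Because the replica remembers how far it has read, the second call transfers one event rather than the whole data set, which is the sense in which the synchronization is incremental rather than full.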

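This document describes how to synchronize data to an rdb (relational database) target using a single canal server.

Synchronization path: src_mysql (172.16.134.24) -> canal-deployer -> client-adapter -> dest_mysql (172.16.134.25)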

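II. Canal Components and Their Roles

2.1 Component overview

canal-deployer: can be regarded as the canal server. It poses as a MySQL slave, receives and parses the binlog, and delivers it unprocessed to the configured target (RDS, MQ, or canal-adapter).

canal-adapter: the canal client adapter, which can be regarded as the canal client. It writes the data synchronized by canal directly into the target store (hbase, rdb, es).

Here rdb means a relational database such as MySQL, Oracle, PostgreSQL, or SQL Server, which makes this a quick and convenient option.

canal-admin: provides operations-oriented functions for canal, such as centralized configuration management and node operations, with a fairly friendly web UI so that more users can operate canal quickly and safely.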

III. Installing and Configuring the Canal Components

3.1 Installing canal-admin

1. Create the database user

| create user canal identified with mysql_native_password by 'canal'; |
| grant all privileges on *.* to 'canal'@'%'; |
| flush privileges; |

2. Download and extract

| mkdir -p /u01/canal-admin |
| cd /u01/canal-admin |
| wget https://github.com/alibaba/canal/releases/download/canal-1.1.5/canal.admin-1.1.5.tar.gz |
| tar -zxvf canal.admin-1.1.5.tar.gz |

3. Initialize the metadata

| mysql -uroot -proot -S /u01/mysql3310/data/mysql.sock |
| mysql> source /u01/canal-admin/conf/canal_manager.sql |

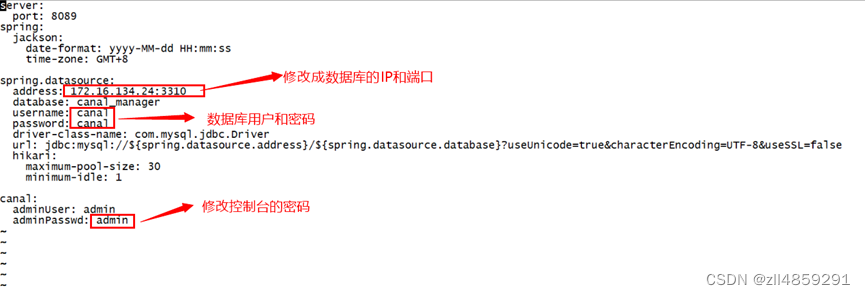

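4. Edit the configuration file

vi /u01/canal-admin/conf/application.yml

5. Start canal-admin

| cd /u01/canal-admin/bin |
| sh startup.sh |
| # for troubleshooting, see /u01/canal-admin/logs/admin.log |

6. Log in to canal-admin

Note: the default canal-admin credentials are admin/123456. The first login must use this password; afterwards, change it to the password set in the configuration file.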

3.2 Installing and deploying canal-deployer

1. Download and extract

| mkdir -p /u01/canal-deployer |
| cd /u01/canal-deployer |
| wget https://github.com/alibaba/canal/releases/download/canal-1.1.5/canal.deployer-1.1.5.tar.gz |
| tar -zxvf canal.deployer-1.1.5.tar.gz |

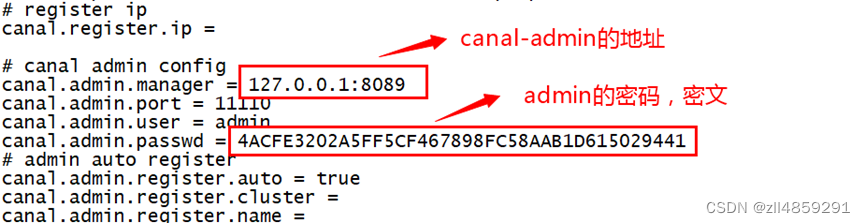

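2. Edit the configuration file

| cd /u01/canal-deployer/conf |
| cp canal.properties canal.properties_bk |
| cp canal_local.properties canal.properties |
| # Note: canal_local.properties is the template for use with canal-admin, but the file actually loaded at startup is canal.properties |
| vi /u01/canal-deployer/conf/canal.properties |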

| mysql> select * from canal_user \G |
| *************************** 1. row *************************** |
| id: 1 |
| username: admin |
| password: 6BB4837EB74329105EE4568DDA7DC67ED2CA2AD9 |
| name: Canal Manager |
| roles: admin |
| introduction: NULL |
| avatar: NULL |
| creation_date: 2019-07-14 00:05:28 |
| 1 row in set (0.00 sec) |
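
The `password` value in this output has the shape of MySQL's legacy `PASSWORD()` hash with the leading `*` removed, that is, the uppercase hex of SHA1(SHA1(plaintext)). Assuming that format, the Python sketch below recomputes the hash for the default password `123456` mentioned in the canal-admin login step. `mysql_native_hash` is a hypothetical helper name, not part of canal.

```python
import hashlib


def mysql_native_hash(plaintext: str) -> str:
    """Return the uppercase hex of SHA1(SHA1(plaintext)), without a leading '*'."""
    first_pass = hashlib.sha1(plaintext.encode("utf-8")).digest()
    return hashlib.sha1(first_pass).hexdigest().upper()


print(mysql_native_hash("123456"))
```

A helper like this may be useful when a properties file expects the hashed form of the admin password rather than the plaintext; whether a given setting expects the hash should be checked against the canal documentation.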

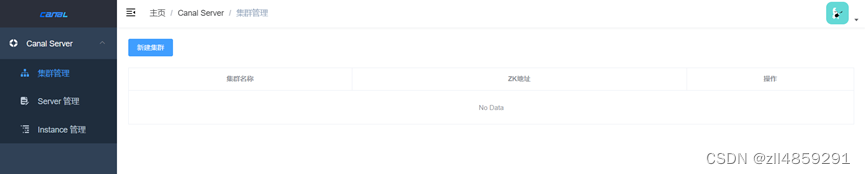

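3. Create a cluster (optional)

If a cluster is required, create it under cluster management and configure the cluster information. Here we skip the cluster setup and use a single node.

4. Create a server (optional)

If no cluster is required, select standalone.

The following three fields are required: the server name is user-defined, Server IP is the IP of the current server, and the admin port defaults to 11110.

5. Start canal-deployer

| cd /u01/canal-deployer/bin |
| sh startup.sh |

Once startup completes, the canal server registers itself with canal-admin automatically and the server entry is created.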

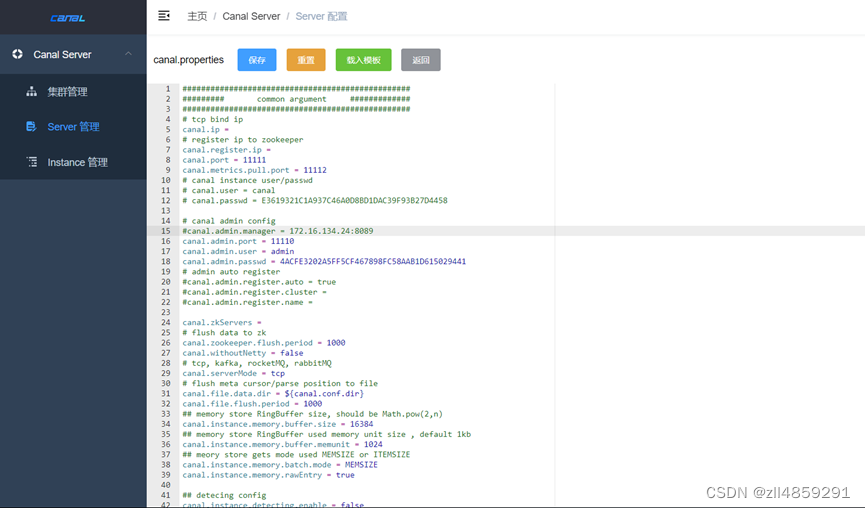

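6. Modify the server configuration

Click "Server Management" (Server管理) ➡️ "Actions" (操作) ➡️ "Configure/Modify" (配置/修改), then modify the canal server's basic information and its canal.properties settings.

| Configuration: mainly the ZK cluster settings, service mode, and target settings (MQ, RDS); change as needed |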

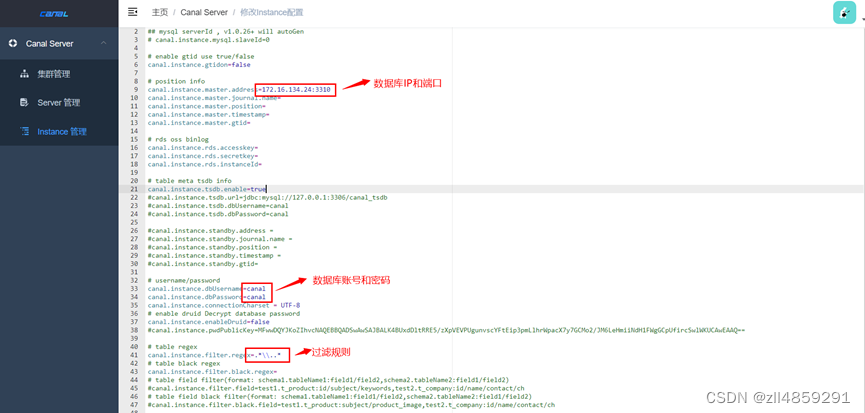

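7. Create the instance configuration file

Click "Instance Management" (Instance管理) ➡️ "New Instance" (新建instance) ➡️ enter the instance name ➡️ select the cluster/host ➡️ "Load Template" (载入模板), then modify the instance.properties settings.

Instance operations:

Modify: maintains the instance.properties configuration. Saving a change triggers a dynamic reload of the instance on the corresponding standalone or cluster server.

Delete: equivalent to stopping the instance and then deleting its configuration.

Start/Stop: changes the instance state. The change triggers a start or stop of the instance on the corresponding standalone or cluster server.

Logs: for a running instance, fetches the last 100 lines of its log, for example example/example.log.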

3.3 Installing canal-adapter

1. Download and extract

| mkdir -p /u01/canal-adapter |
| cd /u01/canal-adapter |
| wget https://github.com/alibaba/canal/releases/download/canal-1.1.5/canal.adapter-1.1.5.tar.gz |
| tar -zxvf canal.adapter-1.1.5.tar.gz |

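2. Edit the configuration file

Goal: capture all changes in the source database test and apply them to the target database test.

| cd /u01/canal-adapter/conf |
| cp application.yml application.yml_bk |
| vi application.yml |

Items to change

1. The canal server address and port

2. The database addresses, accounts, and passwords (source and target)

3. The canalAdapters settings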

| server: |
|   port: 8081 |
| spring: |
|   jackson: |
|     date-format: yyyy-MM-dd HH:mm:ss |
|     time-zone: GMT+8 |
|     default-property-inclusion: non_null |
| canal.conf: |
|   mode: tcp # canal client mode: tcp, kafka, rocketMQ, or rabbitMQ |
|   flatMessage: true |
|   zookeeperHosts: |
|   syncBatchSize: 1000 |
|   retries: 0 |
|   timeout: |
|   accessKey: |
|   secretKey: |
|   consumerProperties: |
|     # canal tcp consumer |
|     canal.tcp.server.host: 172.16.134.24:11111 # ip:port of the canal server in standalone mode |
|     canal.tcp.zookeeper.hosts: # ZooKeeper address in cluster mode; if canalServerHost is configured, canalServerHost takes precedence |
|     canal.tcp.batch.size: 500 |
|     canal.tcp.username: |
|     canal.tcp.password: |
|     # kafka consumer |
|     kafka.bootstrap.servers: 127.0.0.1:9092 |
|     kafka.enable.auto.commit: false |
|     kafka.auto.commit.interval.ms: 1000 |
|     kafka.auto.offset.reset: latest |
|     kafka.request.timeout.ms: 40000 |
|     kafka.session.timeout.ms: 30000 |
|     kafka.isolation.level: read_committed |
|     kafka.max.poll.records: 1000 |
|     # rocketMQ consumer |
|     rocketmq.namespace: |
|     rocketmq.namesrv.addr: 127.0.0.1:9876 |
|     rocketmq.batch.size: 1000 |
|     rocketmq.enable.message.trace: false |
|     rocketmq.customized.trace.topic: |
|     rocketmq.access.channel: |
|     rocketmq.subscribe.filter: |
|     # rabbitMQ consumer |
|     rabbitmq.host: |
|     rabbitmq.virtual.host: |
|     rabbitmq.username: |
|     rabbitmq.password: |
|     rabbitmq.resource.ownerId: |
|   srcDataSources: # source database definitions |
|     defaultDS: |
|       url: jdbc:mysql://172.16.134.24:3310/test?useUnicode=true |
|       username: canal |
|       password: canal |
|   canalAdapters: # adapter list |
|   - instance: canal_1221 # canal instance name or MQ topic name; the instance defined on the canal server |
|     groups: # group list; adapters within one group run serially |
|     - groupId: g1 # group id, used in MQ mode |
|       outerAdapters: # adapters in this group |
|       - name: logger # log-printing adapter |
|       - name: rdb # synchronize to a relational database |
|         key: mysql1 # unique key of this adapter; must match outerAdapterKey in the table mapping file |
|         properties: |
|           jdbc.driverClassName: com.mysql.jdbc.Driver |
|           jdbc.url: jdbc:mysql://172.16.134.25:3310/test?useUnicode=true |
|           jdbc.username: canal |
|           jdbc.password: canal |
| #        properties: # more than one can be defined |
| #          jdbc.driverClassName: com.mysql.jdbc.Driver |
| #          jdbc.url: jdbc:mysql://172.16.134.25:3310/test01?useUnicode=true |
| #          jdbc.username: canal |
| #          jdbc.password: canal |
| #      - name: rdb |
| #        key: oracle1 |
| #        properties: |
| #          jdbc.driverClassName: oracle.jdbc.OracleDriver |
| #          jdbc.url: jdbc:oracle:thin:@localhost:49161:XE |
| #          jdbc.username: mytest |
| #          jdbc.password: m121212 |
| #      - name: rdb |
| #        key: postgres1 |
| #        properties: |
| #          jdbc.driverClassName: org.postgresql.Driver |
| #          jdbc.url: jdbc:postgresql://localhost:5432/postgres |
| #          jdbc.username: postgres |
| #          jdbc.password: 121212 |
| #          threads: 1 |
| #          commitSize: 3000 |
| #      - name: hbase |
| #        properties: |
| #          hbase.zookeeper.quorum: 127.0.0.1 |
| #          hbase.zookeeper.property.clientPort: 2181 |
| #          zookeeper.znode.parent: /hbase |
| #      - name: es |
| #        hosts: 127.0.0.1:9300 # 127.0.0.1:9200 for rest mode |
| #        properties: |
| #          mode: transport # or rest |
| #          # security.auth: test:123456 # only used for rest mode |
| #          cluster.name: elasticsearch |
| #      - name: kudu |
| #        key: kudu |
| #        properties: |
| #          kudu.master.address: 127.0.0.1 # ',' split multi address |

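Notes:

- In the outerAdapters configuration, name is always rdb; key is the unique identifier of the target data source and must match outerAdapterKey in the table mapping file below; properties holds the JDBC parameters of the target database.

- The adapter automatically loads every table mapping file ending in .yml under conf/rdb.

Edit the rdb configuration file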

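| cd /u01/canal-adapter/conf/rdb |
| vi mytest_user.yml |
| #dataSourceKey: defaultDS # key of the source data source; matches an entry under srcDataSources above |
| #destination: example # canal instance name or MQ topic |
| #groupId: # groupId in MQ mode; only data for this groupId is synchronized |
| #outerAdapterKey: mysql1 # adapter key; matches the key under outerAdapters above |
| #concurrent: true # synchronize in parallel by primary-key hash; the primary keys of such tables must never change and must not be foreign keys of other synchronized tables |
| #dbMapping: |
| #  database: test # source database/schema |
| #  table: user # source table name |
| #  targetTable: tb_user # target table name; do not write database.table here, because the target database can only be the one named in the corresponding outerAdapter |
| #  targetPk: # primary-key mapping |
| #    id: id # for a composite primary key, map each column on its own line |
| #  mapAll: true # map the whole table; source and target column names must be identical (if targetColumns is also configured, targetColumns takes precedence) |
| #  # "targetColumns takes precedence" does not mean that only the columns listed in targetColumns are synchronized; all source columns are still synchronized. |
| #  # Where target column names differ from the source, map them in targetColumns; columns without a mapping are assumed to have the same name. |
| #  # To synchronize only some of the source columns, set mapAll to false. |
| #  targetColumns: # column mapping, written as "target column: source column"; the source column may be omitted when the names are the same |
| #    id: id |
| #    abbr_name_01: abbr_name |
| #    full_name_01: full_name |
| #    contact_person_01: contact_person |
| #  etlCondition: "where c_time>={}" # simple filter |
| #  commitBatch: 3000 # batch commit size |
| # Mirror schema synchronize config |
| dataSourceKey: defaultDS |
| destination: canal_1221 |
| groupId: g1 |
| outerAdapterKey: mysql1 |
| concurrent: true |
| dbMapping: |
|   mirrorDb: true # mirror replication of DDL and DML |
|   database: test # the schemas of the two databases must be identical |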
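
The interaction between mapAll and targetColumns described in the comments above can be sketched in Python. This models the behaviour as the comments describe it and is not the adapter's implementation; `map_row` and the sample row are hypothetical.

```python
def map_row(source_row, map_all, target_columns=None):
    """Return the target-side row for one source row (hypothetical helper)."""
    target_columns = target_columns or {}
    # targetColumns is written as {target column: source column};
    # an empty source name means "same name as the target column".
    explicit = {tgt: (src or tgt) for tgt, src in target_columns.items()}
    row = {}
    if map_all:
        renamed_sources = set(explicit.values())
        # Copy every source column that has no explicit mapping under its own name.
        for col, value in source_row.items():
            if col not in renamed_sources:
                row[col] = value
    # Explicit mappings apply in both modes.
    for tgt, src in explicit.items():
        row[tgt] = source_row[src]
    return row


src = {"id": 3, "abbr_name": "KK", "full_name": "KK Ltd"}
whole = map_row(src, map_all=True, target_columns={"abbr_name_01": "abbr_name"})
partial = map_row(src, map_all=False, target_columns={"id": None, "full_name_01": "full_name"})
print(whole)
print(partial)
```

With mapAll enabled, all three source columns reach the target and only abbr_name is renamed; with mapAll disabled, only the two listed columns are carried over.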

3. Start canal-adapter

| cd /u01/canal-adapter/bin |
| sh startup.sh |

3.4 Verifying synchronization

Insert data into the source database

mysql>use test;

mysql> insert into tt3 values(3,'IN','辽宁KK服务有限公司','尚XX','22247');

Query OK, 1 row affected (0.03 sec)

mysql> insert into tt9 values(3,'OUT','京CC有限公司','KK科技有限','21362');

Query OK, 1 row affected (0.00 sec)

Check the adapter log

| 2023-04-04 16:20:46.440 [pool-7-thread-1] INFO c.a.o.canal.client.adapter.logger.LoggerAdapterExample - DML: {"data":null,"database":"","destination":"canal_1221","es":1680596446000,"groupId":"g1","isDdl":false,"old":null,"pkNames":[],"sql":"insert into tt3 values(3,'IN','辽宁KK服务有限公司','尚XX','22247')","table":"tt3","ts":1680596446440,"type":"QUERY"} |
| 2023-04-04 16:20:46.440 [pool-7-thread-1] INFO c.a.o.canal.client.adapter.logger.LoggerAdapterExample - DML: {"data":[{"id":3,"id_tye":"IN","zt_name":"辽宁KK服务有限公司","zdr_name":"尚XX","zdr_id":"22247"}],"database":"test","destination":"canal_1221","es":1680596446000,"groupId":"g1","isDdl":false,"old":null,"pkNames":[],"sql":"","table":"tt3","ts":1680596446440,"type":"INSERT"} |
| 2023-04-04 16:20:46.491 [pool-4-thread-1] DEBUG c.a.o.canal.client.adapter.rdb.service.RdbSyncService - DML: {"data":{"id":3,"id_tye":"IN","zt_name":"辽宁KK服务有限公司","zdr_name":"尚XX","zdr_id":"22247"},"database":"test","destination":"canal_1221","old":null,"table":"tt3","type":"INSERT"} |
| 2023-04-04 16:24:28.285 [pool-7-thread-1] INFO c.a.o.canal.client.adapter.logger.LoggerAdapterExample - DML: {"data":null,"database":"","destination":"canal_1221","es":1680596668000,"groupId":"g1","isDdl":false,"old":null,"pkNames":[],"sql":"insert into tt9 values(3,'OUT','京CC有限公司','KK科技有限','21362')","table":"tt9","ts":1680596668285,"type":"QUERY"} |
| 2023-04-04 16:24:28.285 [pool-7-thread-1] INFO c.a.o.canal.client.adapter.logger.LoggerAdapterExample - DML: {"data":[{"id":3,"id_tye":"OUT","zt_name":"京CC有限公司","zdr_name":"KK科技有限","zdr_id":"21362"}],"database":"test","destination":"canal_1221","es":1680596668000,"groupId":"g1","isDdl":false,"old":null,"pkNames":[],"sql":"","table":"tt9","ts":1680596668285,"type":"INSERT"} |
| 2023-04-04 16:24:28.291 [pool-4-thread-1] DEBUG c.a.o.canal.client.adapter.rdb.service.RdbSyncService - DML: {"data":{"id":3,"id_tye":"OUT","zt_name":"京CC有限公司","zdr_name":"KK科技有限","zdr_id":"21362"},"database":"test","destination":"canal_1221","old":null,"table":"tt9","type":"INSERT"} |
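
Each of these log entries is a text prefix followed by a JSON payload after the `- DML:` marker, so the entries can be inspected programmatically. The Python sketch below is one way to do that; `parse_adapter_log_line` is a hypothetical helper, the sample line is abridged from the log above, and treating `ts - es` as a lag in milliseconds is an assumption about what the two epoch-millisecond fields mean.

```python
import json


def parse_adapter_log_line(line):
    """Split one adapter log line into summary fields (hypothetical helper)."""
    prefix, _, payload = line.partition(" - DML: ")
    if not payload:
        return None  # not a DML log line
    event = json.loads(payload)
    # LoggerAdapterExample entries carry `es` and `ts`; RdbSyncService entries do not.
    has_times = "ts" in event and "es" in event
    return {
        "logged_at": prefix[:23],  # "YYYY-MM-DD HH:MM:SS.mmm"
        "type": event["type"],
        "table": event["table"],
        "data": event["data"],
        "lag_ms": event["ts"] - event["es"] if has_times else None,
    }


line = (
    "2023-04-04 16:20:46.440 [pool-7-thread-1] INFO "
    "c.a.o.canal.client.adapter.logger.LoggerAdapterExample - DML: "
    '{"data":[{"id":3,"id_tye":"IN","zdr_id":"22247"}],"database":"test",'
    '"destination":"canal_1221","es":1680596446000,"groupId":"g1","isDdl":false,'
    '"old":null,"pkNames":[],"sql":"","table":"tt3","ts":1680596446440,"type":"INSERT"}'
)
result = parse_adapter_log_line(line)
print(result["type"], result["table"], result["lag_ms"])
```

Filtering on `type` separates the QUERY entries, which only echo the SQL statement, from the INSERT entries that carry the row data.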

Check whether the data has been synchronized

Target side:

mysql> select * from tt3;
+------+--------+-----------------------------+----------+--------+
| id | id_tye | zt_name | zdr_name | zdr_id |
+------+--------+-----------------------------+----------+--------+
| 1 | IN | 北京#KK科技有限公司 | 郭三 | 22245 |
| 2 | OUT | 北京#KK科技有限公司 | 郭四 | 22246 |
| 3 | IN | 辽宁KK服务有限公司 | 尚XX | 22247 |
+------+--------+-----------------------------+----------+--------+

mysql> select * from tt9;
+----+--------+----------------------------+-----------------------+----------------------------+
| id | id_tye | zt_name | zdr_name | zdr_id |
+----+--------+----------------------------+-----------------------+----------------------------+
| 1 | "IN" | "北京 | KK科技有限公司" | "郭三" |
| 2 | "IN" | "北京 | KK科技有限公司" | "郭四" |
| 3 | OUT | 京CC有限公司 | KK科技有限 | 21362 |
+----+--------+----------------------------+-----------------------+----------------------------+
8 rows in set (0.00 sec)

The data has been synchronized.
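
For larger tables, comparing the two sides by eye becomes impractical. The Python sketch below compares two row sets by primary key; it assumes the rows have already been fetched from the source and target as lists of tuples (for example from a DB-API cursor), and `diff_rows` is a hypothetical helper. The sample rows are taken from the tt3 output above.

```python
def diff_rows(source_rows, target_rows, pk_index=0):
    """Compare two lists of row tuples by primary key (hypothetical helper)."""
    src = {row[pk_index]: row for row in source_rows}
    dst = {row[pk_index]: row for row in target_rows}
    missing = sorted(k for k in src if k not in dst)   # not yet on the target
    extra = sorted(k for k in dst if k not in src)     # only on the target
    changed = sorted(k for k in src if k in dst and src[k] != dst[k])
    return missing, extra, changed


source = [
    (1, "IN", "北京#KK科技有限公司", "郭三", "22245"),
    (2, "OUT", "北京#KK科技有限公司", "郭四", "22246"),
    (3, "IN", "辽宁KK服务有限公司", "尚XX", "22247"),
]
in_sync = diff_rows(source, list(source))
lagging = diff_rows(source, source[:2])
print(in_sync)
print(lagging)
```

Three empty lists mean the two sides agree; in the second call, the row with id 3 is reported as missing on the target.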

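IV. Synchronization Experiments

4.1 Experiment 1: MySQL -> MySQL synchronization of a specified database (same database name)

The configuration for this case was already completed above.

4.2 Experiment 2: MySQL -> MySQL synchronization of specified tables (database and table names may differ)

4.2.1 Configure application.yml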

|   srcDataSources: # source database definitions |
|     defaultDS: |
|       url: jdbc:mysql://172.16.134.24:3310/test?useUnicode=true |
|       username: canal |
|       password: canal |
|   canalAdapters: # adapter list |
|   - instance: canal_1221 # canal instance name or MQ topic name; the instance defined on the canal server |
|     groups: # group list; adapters within one group run serially |
|     - groupId: g1 # group id, used in MQ mode |
|       outerAdapters: # adapters in this group |
|       - name: logger # log-printing adapter |
|       - name: rdb # synchronize to a relational database |
|         key: mysql1 # unique key of this adapter; must match outerAdapterKey in the table mapping file |
|         properties: |
|           jdbc.driverClassName: com.mysql.jdbc.Driver |
|           jdbc.url: jdbc:mysql://172.16.134.25:3310/test001?useUnicode=true |
|           jdbc.username: canal |
|           jdbc.password: canal |

Configure mytest_user1.yml (when several tables are synchronized, create one yml file per table)

| dataSourceKey: defaultDS |
| destination: canal_1221 |
| groupId: g1 |
| outerAdapterKey: mysql1 |
| concurrent: true |
| dbMapping: |
|   database: test |
|   table: tt9 |
|   targetTable: tt8 |
|   targetPk: |
|     id: id |
|   mapAll: true |
| #  targetColumns: |
| #    id: |
| #    name: |
| #    role_id: |
| #    c_time: |
| #    test1: |
| #  etlCondition: "where c_time>={}" |
| #  commitBatch: 3000 # batch commit size |
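
Since each table needs its own mapping file, writing them by hand becomes repetitive as the number of tables grows. The Python sketch below generates one file per table from a template that mirrors the mapping above. The helper name `write_mappings`, the `database_table.yml` file naming, and the second table entry (tt3 to tt3_copy) are assumptions for illustration; the demo writes to a temporary directory rather than to conf/rdb.

```python
import tempfile
from pathlib import Path

# Template mirroring the mapping file above; only the table-specific parts vary.
TEMPLATE = """dataSourceKey: defaultDS
destination: canal_1221
groupId: g1
outerAdapterKey: mysql1
concurrent: true
dbMapping:
  database: {database}
  table: {table}
  targetTable: {target_table}
  targetPk:
    {pk}: {pk}
  mapAll: true
"""


def write_mappings(out_dir, database, tables):
    """Write one mapping file per (source table, target table, primary key) triple."""
    out_dir = Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    written = []
    for table, target_table, pk in tables:
        path = out_dir / f"{database}_{table}.yml"
        text = TEMPLATE.format(
            database=database, table=table, target_table=target_table, pk=pk
        )
        path.write_text(text, encoding="utf-8")
        written.append(path.name)
    return written


demo_dir = Path(tempfile.mkdtemp())
written = write_mappings(
    demo_dir, "test", [("tt9", "tt8", "id"), ("tt3", "tt3_copy", "id")]
)
print(written)
print((demo_dir / "test_tt9.yml").read_text(encoding="utf-8"))
```

The generated files would then be copied into conf/rdb, where the adapter picks up every file ending in .yml.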

4.2.2 Insert data into the source database

mysql>use test;

mysql> insert into tt9 values(3,'OUT','辽宁KK服务有限公司','尚ke','21362');

Query OK, 1 row affected (0.00 sec)

Check the adapter log

| 2023-04-10 11:46:57.998 [pool-48-thread-1] INFO c.a.o.canal.client.adapter.logger.LoggerAdapterExample - DML: {"data":null,"database":"","destination":"canal_1221","es":1681098417000,"groupId":"g1","isDdl":false,"old":null,"pkNames":[],"sql":"insert into tt9 values(3,'OUT','辽宁KK服务有限公司','尚ke','21362')","table":"tt9","ts":1681098417998,"type":"QUERY"} |
| 2023-04-10 11:46:57.998 [pool-48-thread-1] INFO c.a.o.canal.client.adapter.logger.LoggerAdapterExample - DML: {"data":[{"xx_id":3,"id_tye":"OUT","zt_name":"辽宁KK服务有限公司","zdr_name":"尚ke","zdr_id":"21362"}],"database":"test","destination":"canal_1221","es":1681098417000,"groupId":"g1","isDdl":false,"old":null,"pkNames":["xx_id"],"sql":"","table":"tt9","ts":1681098417998,"type":"INSERT"} |
| 2023-04-10 11:46:58.002 [pool-42-thread-1] DEBUG c.a.o.canal.client.adapter.rdb.service.RdbSyncService - DML: {"data":{"xx_id":3,"id_tye":"OUT","zt_name":"辽宁KK服务有限公司","zdr_name":"尚ke","zdr_id":"21362"},"database":"test","destination":"canal_1221","old":null,"table":"tt9","type":"INSERT"} |

4.2.3 Check whether the data has been synchronized

Target side:

mysql> select * from tt9;
+-------+--------+----------------------------+----------+--------+
| xx_id | id_tye | zt_name | zdr_name | zdr_id |
+-------+--------+----------------------------+----------+--------+
| 3 | OUT | 辽宁KK服务有限公司 | 尚ke | 21362 |
+-------+--------+----------------------------+----------+--------+
1 row in set (0.00 sec)

The data has been synchronized.