上一话

复现Object Detection,会复现的网络架构有:

1.SSD: Single Shot MultiBox Detector(√)

2.RetinaNet(√)

3.Faster RCNN

4.YOLO系列

....

代码:

2.复现RetinaNet

之前已经讲过RetinaNet,链接如下:

也不想做过多的讲解了,就讲讲在RetinaNet中是如何更换Backbones(将以前的ResNet更换为DarkNet)

之前ResNet骨干网络的代码

我懒得写了直接调用Pytorch包的,但是值得注意的是输出的feature map的channels可能需要修改(这里我在RetinaNet.py中进行了修改),与之后Neck(FPN)网络中输入channles匹配。

import torch

from torch import nn

from torchvision.models import resnet18, resnet34, resnet50, \

resnet101, resnet152

class ResNet(nn.Module):

def __init__(self, resnet_type="resnet50", pretrained=False):

super(ResNet, self).__init__()

if resnet_type == "resnet18":

self.model = resnet18(pretrained=pretrained)

elif resnet_type == "resnet34":

self.model = resnet34(pretrained=pretrained)

elif resnet_type == "resnet50":

self.model = resnet50(pretrained=pretrained)

elif resnet_type == "resnet101":

self.model = resnet101(pretrained=pretrained)

elif resnet_type == "resnet152":

self.model = resnet152(pretrained=pretrained)

del self.model.fc

del self.model.avgpool

def forward(self, x):

x = self.model.conv1(x)

x = self.model.bn1(x)

x = self.model.relu(x)

x = self.model.maxpool(x)

x = self.model.layer1(x)

C3 = self.model.layer2(x)

C4 = self.model.layer3(C3)

C5 = self.model.layer4(C4)

del x

return [C3, C4, C5]

if __name__ == "__main__":

backbone = ResNet(resnet_type='resnet18', pretrained=True)

x = torch.randn([16, 3, 512, 512])

C3, C4, C5 = backbone(x)

print(C3.shape) # torch.Size([16, 512, 64, 64])

print(C4.shape) # torch.Size([16, 1024, 32, 32])

print(C5.shape) # torch.Size([16, 2048, 16, 16])DarkNet骨干网络的代码

这里更换的backbones是DarkNetTiny,DarkNet19和DarkNet53,DarkNet系列是出自YOLO系列,其中DarkNet19是来自于YOLO9000(也就是我们通常意义上的YOLOv2[1],DarkNet53是来自于最经典的YOLOv3[2],而DarkNetTiny是来自YOLOv3-Tiny[2]。

import torch

import torch.nn as nn

__all__ = [

'darknettiny',

'darknet19',

'darknet53',

]

class DarkNet(nn.Module):

def __init__(self, darknet_type='darknet19'):

super(DarkNet, self).__init__()

self.darknet_type = darknet_type

if darknet_type == 'darknettiny':

self.model = darknettiny()

elif darknet_type == 'darknet19':

self.model = darknet19()

elif darknet_type == 'darknet53':

self.model = darknet53()

def forward(self, x):

out = self.model(x)

return out

class ActBlock(nn.Module):

def __init__(self, act_type='leakyrelu', inplace=True):

super(ActBlock, self).__init__()

assert act_type in ['silu', 'relu', 'leakyrelu'], \

"Unsupported activation function!"

if act_type == 'silu':

self.act = nn.SiLU(inplace=inplace)

elif act_type == 'relu':

self.act = nn.ReLU(inplace=inplace)

elif act_type == 'leakyrelu':

self.act = nn.LeakyReLU(0.1, inplace=inplace)

def forward(self, x):

x = self.act(x)

return x

class ConvBlock(nn.Module):

def __init__(self, inplanes, planes, kernel_size, stride, padding, groups=1, has_bn=True, has_act=True,

act_type='leakyrelu'):

super(ConvBlock, self).__init__()

bias = False if has_bn else True

self.layer = nn.Sequential(

nn.Conv2d(in_channels=inplanes, out_channels=planes, kernel_size=kernel_size, stride=stride,

padding=padding, groups=groups, bias=bias),

nn.BatchNorm2d(planes) if has_bn else nn.Sequential(),

ActBlock(act_type=act_type, inplace=True) if has_act else nn.Sequential()

)

def forward(self, x):

x = self.layer(x)

return x

class DarkNetTiny(nn.Module):

def __init__(self, act_type='leakyrelu'):

super(DarkNetTiny, self).__init__()

self.conv1 = ConvBlock(inplanes=3, planes=16, kernel_size=3, stride=1, padding=1, groups=1, has_bn=True,

has_act=True, act_type=act_type)

self.conv2 = ConvBlock(inplanes=16, planes=32, kernel_size=3, stride=1, padding=1, groups=1, has_bn=True,

has_act=True, act_type=act_type)

self.conv3 = ConvBlock(inplanes=32, planes=64, kernel_size=3, stride=1, padding=1, groups=1, has_bn=True,

has_act=True, act_type=act_type)

self.conv4 = ConvBlock(inplanes=64, planes=128, kernel_size=3, stride=1, padding=1, groups=1, has_bn=True,

has_act=True, act_type=act_type)

self.conv5 = ConvBlock(inplanes=128, planes=256, kernel_size=3, stride=1, padding=1, groups=1, has_bn=True,

has_act=True, act_type=act_type)

self.conv6 = ConvBlock(inplanes=256, planes=512, kernel_size=3, stride=1, padding=1, groups=1, has_bn=True,

has_act=True, act_type=act_type)

self.maxpool = nn.MaxPool2d(kernel_size=2, stride=2)

self.zeropad = nn.ZeroPad2d((0, 1, 0, 1))

self.last_maxpool = nn.MaxPool2d(kernel_size=2, stride=1)

self.out_channels = [64, 128, 256]

for m in self.modules():

if isinstance(m, nn.Conv2d):

nn.init.kaiming_normal_(m.weight, mode='fan_out', nonlinearity='relu')

elif isinstance(m, nn.BatchNorm2d):

nn.init.constant_(m.weight, 1)

nn.init.constant_(m.bias, 0)

def forward(self, x):

x = self.conv1(x)

x = self.maxpool(x)

x = self.conv2(x)

x = self.maxpool(x)

C3 = self.conv3(x)

C3 = self.maxpool(C3)

C4 = self.conv4(C3)

C4 = self.maxpool(C4) # 128

C5 = self.conv5(C4)

C5 = self.maxpool(C5) # 256

del x

return [C3, C4, C5]

class D19Block(nn.Module):

def __init__(self, inplanes, planes, layer_num, use_maxpool=False, act_type='leakyrelu'):

super(D19Block, self).__init__()

self.use_maxpool = use_maxpool

layers = []

for i in range(0, layer_num):

if i % 2 == 0:

layers.append(

ConvBlock(inplanes=inplanes, planes=planes, kernel_size=3, stride=1, padding=1, groups=1,

has_bn=True, has_act=True, act_type=act_type))

else:

layers.append(

ConvBlock(inplanes=planes, planes=inplanes, kernel_size=1, stride=1, padding=0, groups=1,

has_bn=True, has_act=True, act_type=act_type))

self.D19Block = nn.Sequential(*layers)

if self.use_maxpool:

self.maxpool = nn.MaxPool2d(kernel_size=2, stride=2)

def forward(self, x):

x = self.D19Block(x)

if self.use_maxpool:

x = self.maxpool(x)

return x

class DarkNet19(nn.Module):

def __init__(self, act_type='leakyrelu'):

super(DarkNet19, self).__init__()

self.layer1 = ConvBlock(inplanes=3, planes=32, kernel_size=3, stride=1, padding=1, groups=1, has_bn=True,

has_act=True, act_type=act_type)

self.layer2 = D19Block(inplanes=32, planes=64, layer_num=1, use_maxpool=True, act_type=act_type)

self.layer3 = D19Block(inplanes=64, planes=128, layer_num=3, use_maxpool=True, act_type=act_type)

self.layer4 = D19Block(inplanes=128, planes=256, layer_num=3, use_maxpool=True, act_type=act_type)

self.layer5 = D19Block(inplanes=256, planes=512, layer_num=5, use_maxpool=True, act_type=act_type)

self.layer6 = D19Block(inplanes=512, planes=1024, layer_num=5, use_maxpool=False, act_type=act_type)

self.maxpool = nn.MaxPool2d(kernel_size=2, stride=2)

self.out_channels = [128, 256, 512]

for m in self.modules():

if isinstance(m, nn.Conv2d):

nn.init.kaiming_normal_(m.weight, mode='fan_out', nonlinearity='relu')

elif isinstance(m, nn.BatchNorm2d):

nn.init.constant_(m.weight, 1)

nn.init.constant_(m.bias, 0)

def forward(self, x):

x = self.layer1(x)

x = self.maxpool(x)

x = self.layer2(x)

C3 = self.layer3(x)

C4 = self.layer4(C3)

C5 = self.layer5(C4)

del x

return [C3, C4, C5]

# conv*2+residual

class BasicBlock(nn.Module):

def __init__(self, inplanes, planes):

super(BasicBlock, self).__init__()

self.conv1 = ConvBlock(inplanes=inplanes, planes=planes, kernel_size=1, stride=1, padding=0)

self.conv2 = ConvBlock(inplanes=planes, planes=planes * 2, kernel_size=3, stride=1, padding=1)

def forward(self, x):

out = self.conv1(x)

out = self.conv2(out)

out += x

del x

return out

class DarkNet53(nn.Module):

def __init__(self):

super(DarkNet53, self).__init__()

self.conv1 = ConvBlock(inplanes=3, planes=32, kernel_size=3, stride=1, padding=1)

self.conv2 = ConvBlock(inplanes=32, planes=64, kernel_size=3, stride=2, padding=1)

self.block1 = nn.Sequential(

BasicBlock(inplanes=64, planes=32),

ConvBlock(inplanes=64, planes=128, kernel_size=3, stride=2, padding=1)

) # 128

self.block2 = nn.Sequential(

BasicBlock(inplanes=128, planes=64),

BasicBlock(inplanes=128, planes=64),

ConvBlock(inplanes=128, planes=256, kernel_size=3, stride=2, padding=1)

) # 256

self.block3 = nn.Sequential(

BasicBlock(inplanes=256, planes=128),

BasicBlock(inplanes=256, planes=128),

BasicBlock(inplanes=256, planes=128),

BasicBlock(inplanes=256, planes=128),

BasicBlock(inplanes=256, planes=128),

BasicBlock(inplanes=256, planes=128),

BasicBlock(inplanes=256, planes=128),

BasicBlock(inplanes=256, planes=128),

ConvBlock(inplanes=256, planes=512, kernel_size=3, stride=2, padding=1)

) # 512

self.block4 = nn.Sequential(

BasicBlock(inplanes=512, planes=256),

BasicBlock(inplanes=512, planes=256),

BasicBlock(inplanes=512, planes=256),

BasicBlock(inplanes=512, planes=256),

BasicBlock(inplanes=512, planes=256),

BasicBlock(inplanes=512, planes=256),

BasicBlock(inplanes=512, planes=256),

BasicBlock(inplanes=512, planes=256),

ConvBlock(inplanes=512, planes=1024, kernel_size=3, stride=2, padding=1)

) # 1024

self.block5 = nn.Sequential(

BasicBlock(inplanes=1024, planes=512),

BasicBlock(inplanes=1024, planes=512),

BasicBlock(inplanes=1024, planes=512),

BasicBlock(inplanes=1024, planes=512)

)

self.out_channels = [256, 512, 1024]

for m in self.modules():

if isinstance(m, nn.Conv2d):

nn.init.kaiming_normal_(m.weight, mode='fan_out', nonlinearity='relu')

elif isinstance(m, nn.BatchNorm2d):

nn.init.constant_(m.weight, 1)

nn.init.constant_(m.bias, 0)

def forward(self, x):

x = self.conv1(x)

x = self.conv2(x)

x = self.block1(x)

C3 = self.block2(x)

C4 = self.block3(C3)

C5 = self.block4(C4)

del x

return [C3, C4, C5]

def darknettiny(**kwargs):

model = DarkNetTiny(**kwargs)

return model

def darknet19(**kwargs):

model = DarkNet19(**kwargs)

return model

def darknet53(**kwargs):

model = DarkNet53(**kwargs)

return model

if __name__ == '__main__':

x = torch.randn([8, 3, 512, 512])

darknet = DarkNet(darknet_type='darknet53')

[C3, C4, C5] = darknet(x)

print("C3.shape:{}".format(C3.shape))

print("C4.shape:{}".format(C4.shape))

print("C5.shape:{}".format(C5.shape))

# DarkNet53

# C3.shape: torch.Size([8, 256, 64, 64])

# C4.shape: torch.Size([8, 512, 32, 32])

# C5.shape: torch.Size([8, 1024, 16, 16])

# DarkNet19

# C3.shape: torch.Size([8, 128, 64, 64])

# C4.shape: torch.Size([8, 256, 32, 32])

# C5.shape: torch.Size([8, 512, 16, 16])

# DarkNetTiny

# C3.shape: torch.Size([8, 64, 64, 64])

# C4.shape: torch.Size([8, 128, 32, 32])

# C5.shape: torch.Size([8, 256, 16, 16])如何在RetinaNet网络中使用呢?我设置了个Backbones_type,修改这个就行。

RetinaNet.py代码

import os

import sys

BASE_DIR = os.path.dirname(

os.path.dirname(

os.path.abspath(__file__)))

sys.path.append(BASE_DIR)

import torch

import torch.nn as nn

from torchvision.ops import nms

from models.detection.RetinaNet.neck import FPN

from models.detection.RetinaNet.loss import FocalLoss

from models.detection.RetinaNet.anchor import Anchors

from models.detection.RetinaNet.head import clsHead, regHead

from models.detection.RetinaNet.backbones.ResNet import ResNet

from models.detection.RetinaNet.utils.ClipBoxes import ClipBoxes

from models.detection.RetinaNet.backbones.DarkNet import DarkNet

from models.detection.RetinaNet.utils.BBoxTransform import BBoxTransform

# assert input annotations are [x_min, y_min, x_max, y_max]

class RetinaNet(nn.Module):

def __init__(self,

backbones_type="resnet50",

num_classes=80,

planes=256,

pretrained=False,

training=False):

super(RetinaNet, self).__init__()

self.backbones_type = backbones_type

# coco 80, voc 20

self.num_classes = num_classes

self.planes = planes

self.training = training

if backbones_type[:6] == 'resnet':

self.backbone = ResNet(resnet_type=self.backbones_type,

pretrained=pretrained)

elif backbones_type[:7] == 'darknet':

self.backbone = DarkNet(darknet_type=self.backbones_type)

expand_ratio = {

"resnet18": 1,

"resnet34": 1,

"resnet50": 4,

"resnet101": 4,

"resnet152": 4,

"darknettiny": 0.5,

"darknet19": 1,

"darknet53": 2

}

C3_inplanes, C4_inplanes, C5_inplanes = \

int(128 * expand_ratio[self.backbones_type]), \

int(256 * expand_ratio[self.backbones_type]), \

int(512 * expand_ratio[self.backbones_type])

self.fpn = FPN(C3_inplanes=C3_inplanes,

C4_inplanes=C4_inplanes,

C5_inplanes=C5_inplanes,

planes=self.planes)

self.cls_head = clsHead(inplanes=self.planes,

num_classes=self.num_classes)

self.reg_head = regHead(inplanes=self.planes)

self.anchors = Anchors()

self.regressBoxes = BBoxTransform()

self.clipBoxes = ClipBoxes()

self.loss = FocalLoss()

self.freeze_bn()

def freeze_bn(self):

'''Freeze BatchNorm layers.'''

for layer in self.modules():

if isinstance(layer, nn.BatchNorm2d):

layer.eval()

def forward(self, inputs):

if self.training:

img_batch, annots = inputs

# inference

else:

img_batch = inputs

[C3, C4, C5] = self.backbone(img_batch)

del inputs

features = self.fpn([C3, C4, C5])

del C3, C4, C5

# (batch_size, total_anchors_nums, num_classes)

cls_heads = torch.cat([self.cls_head(feature) for feature in features], dim=1)

# (batch_size, total_anchors_nums, 4)

reg_heads = torch.cat([self.reg_head(feature) for feature in features], dim=1)

del features

anchors = self.anchors(img_batch)

if self.training:

return self.loss(cls_heads, reg_heads, anchors, annots)

# inference

else:

transformed_anchors = self.regressBoxes(anchors, reg_heads)

transformed_anchors = self.clipBoxes(transformed_anchors, img_batch)

# scores

finalScores = torch.Tensor([])

# anchor id:0~79

finalAnchorBoxesIndexes = torch.Tensor([]).long()

# coordinates size:[...,4]

finalAnchorBoxesCoordinates = torch.Tensor([])

if torch.cuda.is_available():

finalScores = finalScores.cuda()

finalAnchorBoxesIndexes = finalAnchorBoxesIndexes.cuda()

finalAnchorBoxesCoordinates = finalAnchorBoxesCoordinates.cuda()

# num_classes

for i in range(cls_heads.shape[2]):

scores = torch.squeeze(cls_heads[:, :, i])

scores_over_thresh = (scores > 0.05)

if scores_over_thresh.sum() == 0:

# no boxes to NMS, just continue

continue

scores = scores[scores_over_thresh]

anchorBoxes = torch.squeeze(transformed_anchors)

anchorBoxes = anchorBoxes[scores_over_thresh]

anchors_nms_idx = nms(anchorBoxes, scores, 0.5)

# use idx to find the scores of anchor

finalScores = torch.cat((finalScores, scores[anchors_nms_idx]))

# [0,0,0,...,1,1,1,...,79,79]

finalAnchorBoxesIndexesValue = torch.tensor([i] * anchors_nms_idx.shape[0])

if torch.cuda.is_available():

finalAnchorBoxesIndexesValue = finalAnchorBoxesIndexesValue.cuda()

finalAnchorBoxesIndexes = torch.cat((finalAnchorBoxesIndexes, finalAnchorBoxesIndexesValue))

# [...,4]

finalAnchorBoxesCoordinates = torch.cat((finalAnchorBoxesCoordinates, anchorBoxes[anchors_nms_idx]))

return finalScores, finalAnchorBoxesIndexes, finalAnchorBoxesCoordinates

if __name__ == "__main__":

C = torch.randn([8, 3, 512, 512])

annot = torch.randn([8, 15, 5])

model = RetinaNet(backbones_type="darknet19", num_classes=80, pretrained=True, training=True)

model = model.cuda()

C = C.cuda()

annot = annot.cuda()

model = torch.nn.DataParallel(model).cuda()

model.training = True

out = model([C, annot])

# if model.training == True out==loss

# out = model([C, annot])

# if model.training == False out== scores

# out = model(C)

for i in range(len(out)):

print(out[i])

# Scores: torch.Size([486449])

# tensor([4.1057, 4.0902, 4.0597, ..., 0.0509, 0.0507, 0.0507], device='cuda:0')

# Id: torch.Size([486449])

# tensor([ 0, 0, 0, ..., 79, 79, 79], device='cuda:0')

# loc: torch.Size([486449, 4])

# tensor([[ 45.1607, 249.4807, 170.5788, 322.8085],

# [ 85.9825, 324.4150, 122.9968, 382.6297],

# [148.1854, 274.0474, 179.0922, 343.4529],

# ...,

# [222.5421, 0.0000, 256.3059, 15.5591],

# [143.3349, 204.4784, 170.2395, 228.6654],

# [208.4509, 140.1983, 288.0962, 165.8708]], device='cuda:0')使用此模型的评估结果(并非以上的DarkNet骨干网络,而是自带的ResNet),未精细调节参数以及精度

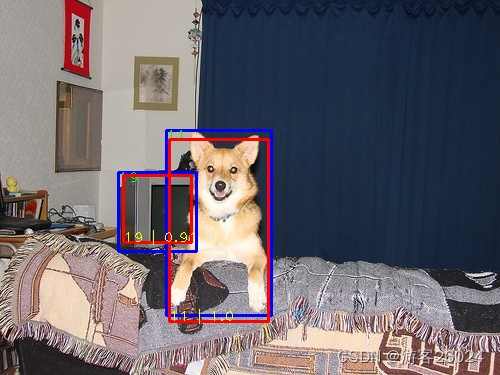

模型评估VOC结果

Network: RetinaNet

backbone: ResNet50

neck: FPN

loss: Focal Loss

dataset: voc

batch_size: 4

optim: Adam

lr: 0.0001

scheduler: WarmupCosineSchedule

epoch: 80| epochs | AP(%) | Download Baidu yun | Key |

|---|---|---|---|

| 80 | 70.1 | https://pan.baidu.com/s/1Bv9IodSnNszbpsxGdzJn0g | dww8 |

模型voc可视化结果

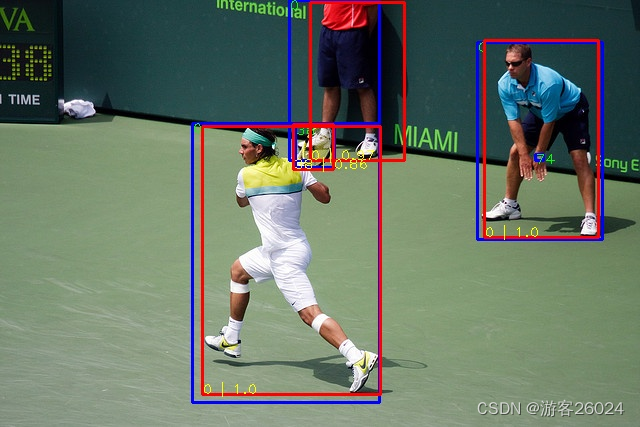

模型评估COCO结果

Network: RetinaNet

backbone: ResNet50

neck: FPN

loss: Focal Loss

dataset: coco

batch_size: 4

optim: Adam

lr: 0.0001

scheduler: ReduceLROnPlateau

patience: 3

epoch: 30

pretrained: True| epochs | AP(%) | Download Baidu yun | Key |

|---|---|---|---|

| 30 | 29.3 | https://pan.baidu.com/s/1eosb5gi9HowC5B-fFncT2g | 5vak |

模型COCO可视化结果

若想知道更多代码详情,请翻看我的gitHub!!

未完...