#-----------------------------------------------------

import matplotlib.pyplot as plt

import numpy as np

from sklearn import datasets

digits=datasets.load_digits()

x=digits.data

y=digits.target

#-------------------------------------------------------------

from sklearn.model_selection import train_test_split

x_train,x_test,y_train,y_test=train_test_split(x,y,test_size=0.2)

print(x_train.shape)

from sklearn.neighbors import KNeighborsClassifier

knn_clf=KNeighborsClassifier()

knn_clf.fit(x_train,y_train)

print("normal:",knn_clf.score(x_test,y_test))

#-------------------------------------------------------------

from sklearn.decomposition import PCA

pca=PCA(n_components=2)

pca.fit(x_train)

x_train_reduction=pca.transform(x_train)

x_test_reduction =pca.transform(x_test)

#------------------------------------------------

knn_clf=KNeighborsClassifier()

knn_clf.fit(x_train_reduction,y_train)

print("reduction:",knn_clf.score(x_test_reduction,y_test))

#---------------------------------------------------------------

pca=PCA()

pca.fit(x_train)

plt.figure()

# Cumulative explained variance ratio as more principal components are kept

plt.plot(np.arange(x_train.shape[1]), np.cumsum(pca.explained_variance_ratio_))

plt.show()

#---------------------------------------------------------------

# A float in (0, 1) tells PCA to keep just enough components to explain that share of the variance

pca=PCA(0.95)

pca.fit(x_train)

print(pca.n_components_)
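
# A possible follow-up, sketched as an assumption rather than part of the original script:
# the 95%-variance PCA above is fitted but never used for classification.
# The helper below (hypothetical name: knn_score_with_pca) would fit PCA on the training
# split only, project both splits, and report the kNN test accuracy, mirroring the
# "normal" and "reduction" runs earlier in this file.

from sklearn.decomposition import PCA
from sklearn.neighbors import KNeighborsClassifier

def knn_score_with_pca(x_train, x_test, y_train, y_test, variance=0.95):
    # Keep as many components as needed to explain `variance` of the training data
    pca_var = PCA(variance)
    pca_var.fit(x_train)
    # Train and score kNN in the reduced space
    clf = KNeighborsClassifier()
    clf.fit(pca_var.transform(x_train), y_train)
    return pca_var.n_components_, clf.score(pca_var.transform(x_test), y_test)

# Example call, assuming the splits defined above:
# print("reduction 95%:", knn_score_with_pca(x_train, x_test, y_train, y_test))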

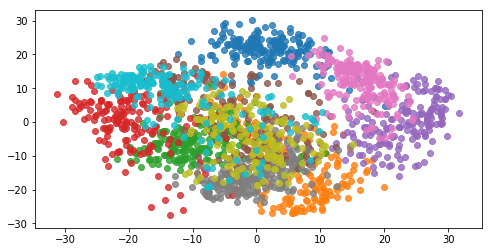

pca=PCA(n_components=2)

pca.fit(x)

x_reduction=pca.transform(x)

print(pca.explained_variance_ratio_)

plt.figure()

for i in range(10):

    # print(y==i)

    # print(x_reduction[y==i,0])

    plt.scatter(x_reduction[y==i,0],x_reduction[y==i,1],alpha=0.8)

#plt.plot([i for i in range(x_train.shape[1])],

# [np.sum(pca.explained_variance_ratio_[:i+1]) for i in range(x_train.shape[1])])

plt.show()