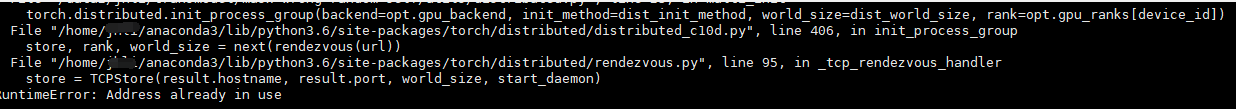

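When training on multiple GPUs with PyTorch, you may hit an "address already in use" error. The real cause is a port conflict: the port used for distributed initialization is already taken by another process.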

So the fix is either to kill the process that is still holding the port, or to switch to a different port.
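Before choosing between the two fixes, it can help to confirm that the port really is taken. The following is a minimal sketch using only Python's standard `socket` module; the helper name `is_port_free` is hypothetical and not part of PyTorch.

```python
import socket


def is_port_free(port, host="127.0.0.1"):
    """Return True if a TCP socket can currently bind to (host, port)."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as probe:
        try:
            # bind() raises OSError (address already in use) if the port is taken.
            probe.bind((host, port))
        except OSError:
            return False
        return True
```

For example, if `is_port_free(10000)` returns `False`, some process (possibly a leftover training run) is probably still bound to port 10000.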

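To reconfigure the port in code, change the `init_method` passed to `torch.distributed.init_process_group()`:

`dist_init_method = 'tcp://{master_ip}:{master_port}'.format(master_ip='127.0.0.1', master_port='10000')`

`dist_world_size = opt.world_size  # total number of distributed processes`

`torch.distributed.init_process_group(backend="nccl", init_method=dist_init_method, world_size=dist_world_size, rank=rank)`

Here `rank` must be the single integer rank of the current process (from 0 to `world_size - 1`), not a list such as `[0,1]`. Whenever the error shows up again, you only need to change `master_port` to a port that is not in use.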
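Instead of editing `master_port` by hand each time, one option is to let the operating system pick an unused port. The sketch below uses only the standard library; `find_free_port` and `build_init_method` are hypothetical helper names, and the resulting string is meant to be passed as `init_method`.

```python
import socket


def find_free_port(host="127.0.0.1"):
    """Ask the OS for a currently unused TCP port by binding to port 0."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as s:
        s.bind((host, 0))
        return s.getsockname()[1]


def build_init_method(master_ip, master_port):
    """Build the tcp:// URL in the same form as dist_init_method above."""
    return "tcp://{}:{}".format(master_ip, master_port)


if __name__ == "__main__":
    # Pick the port once, in the launcher, and reuse it for every rank.
    print(build_init_method("127.0.0.1", find_free_port()))
```

Two caveats: every process in the group has to use the same port, so it should be chosen once and handed to all workers rather than chosen separately in each one; and another program could in principle grab the port between the check and the actual `init_process_group` call.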