Create a directory named celery_pro and create the following files inside it

celery.py

# celery.py
# -*- coding:utf8 -*-
from __future__ import absolute_import, unicode_literals
# 1. absolute_import makes "import celery" resolve to the installed celery package
#    rather than to the celery.py file we are creating here
# 2. unicode_literals makes string literals unicode on Python 2, for Python 2/3 compatibility
from celery import Celery
# from .celery import Celery  # this form would import from celery.py in the current directory

# Fill in your project name
app = Celery('project',
             broker='redis://localhost',
             backend='redis://localhost',
             include=['celery_pro.tasks',
                      'celery_pro.tasks2',
                      ])
# celery_pro is the name of the directory (package) that holds the Celery files

# Extra settings can be added after the app is instantiated
app.conf.update(
    result_expires=3600,  # results are stored in Redis and discarded if nobody fetches them within one hour
)

# Periodic task: every 5 seconds, call the add function in celery_pro/tasks.py
app.conf.beat_schedule = {
    'add-every-5-seconds': {
        'task': 'celery_pro.tasks.add',  # the add function in tasks.py
        'schedule': 5.0,
        'args': (16, 16)
    },
}
app.conf.timezone = 'UTC'  # time zone used for scheduling

if __name__ == '__main__':
    app.start()

tasks.py

# tasks.py
# -*- coding:utf8 -*-
from __future__ import absolute_import, unicode_literals
from .celery import app  # import app from the current package

# Define an add task
@app.task
def add(x, y):
    return x + y

tasks2.py

# tasks2.py
# -*- coding:utf8 -*-
from __future__ import absolute_import, unicode_literals
from .celery import app
import random
import time

@app.task
def randnum(start, end):
    time.sleep(3)
    return random.randint(start, end)

touch __init__.py

Create an __init__.py file in the celery_pro directory; otherwise the commands below fail with an error

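Run the following two commands to have Celery execute the task on schedule

- Start a worker: run this from the parent directory of celery_pro

celery -A celery_pro worker -l info

- Start the task scheduler, celery beat

celery -A celery_pro beat -l info

Result

The worker log shows the result of the add function being returned every 5 seconds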

Starting Celery workers: one machine can run several workers (for example, 8)

- From the pythondir directory, start a worker for /pythondir/celery_pro/

celery -A celery_pro worker -l info

- Start workers in the background: run from /pythondir/ (the parent of celery_pro)

celery multi start w1 -A celery_pro -l info # start worker w1 in the background

celery multi start w1 w2 -A celery_pro -l info # start workers w1 and w2 in one go

celery -A celery_pro status # list the workers that are currently running

celery multi stop w1 w2 -A celery_pro # stop workers w1 and w2

celery multi restart w1 w2 -A celery_pro # restart workers w1 and w2

Tasks can also be dispatched to Celery by hand: run the following from /pythondir/

python3

from celery_pro import tasks, tasks2
t1 = tasks.add.delay(34, 3)
t2 = tasks2.randnum.delay(1, 10000)
t1.get()
t2.get()
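The delay()/get() pair follows the usual submit-now, collect-later pattern: delay() returns a handle immediately, and get() blocks until a worker has produced the result. The sketch below mimics that flow with the standard library's concurrent.futures so that it runs without a broker. It is an analogy, not Celery itself, and the local add and randnum functions are stand-ins for the tasks above.

```python
import random
import time
from concurrent.futures import ThreadPoolExecutor


def add(x, y):
    # Stand-in for celery_pro.tasks.add
    return x + y


def randnum(start, end):
    # Stand-in for celery_pro.tasks2.randnum (shorter sleep than the original 3 s)
    time.sleep(0.1)
    return random.randint(start, end)


# The executor plays the role of the worker pool.
with ThreadPoolExecutor(max_workers=2) as pool:
    t1 = pool.submit(add, 34, 3)          # like tasks.add.delay(34, 3)
    t2 = pool.submit(randnum, 1, 10000)   # like tasks2.randnum.delay(1, 10000)

    r1 = t1.result(timeout=5)             # like t1.get(timeout=5)
    r2 = t2.result(timeout=5)             # like t2.get(timeout=5)

print(r1, r2)
```

With Celery, passing a timeout to get() in the same way avoids blocking forever when no worker is running.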


Best practices for Celery with Django projects

pip3 install Django==2.0.4
pip3 install celery==4.3.0
pip3 install redis==3.2.1
pip3 install ipython==7.6.1

find ./ -type f | xargs sed -i 's/\r$//g' # convert every file under the current directory to Unix line endings

celery multi start celery_test -A celery_test -l debug --autoscale=50,5 # autoscale the worker pool: at most 50 processes, at least 5

http://docs.celeryproject.org/en/latest/reference/celery.bin.worker.html#cmdoption-celery-worker-autoscale

ps auxww|grep "celery worker"|grep -v grep|awk '{print $2}'|xargs kill -9 # kill all Celery worker processes

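Using Celery in Django (Celery 4.x does not officially support Windows)

- When using Celery in Django, the task module in each app must be named tasks.py

- Celery's autodiscover_tasks() then looks for a tasks.py file in each installed app

Create a Django project named celery_test with an app named app01

Create celery.py in the directory that has the same name as the project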

# celery.py
# -*- coding: utf-8 -*-
from __future__ import absolute_import
import os
from celery import Celery

# Any standalone script that needs Django's database or settings must set this environment variable first
os.environ.setdefault('DJANGO_SETTINGS_MODULE', 'celery_test.settings')

# App name
app = Celery('celery_test')

# Celery configuration
class Config:
    BROKER_URL = 'redis://192.168.56.11:6379'
    CELERY_RESULT_BACKEND = 'redis://192.168.56.11:6379'

app.config_from_object(Config)

# Auto-discover the tasks.py file in each app
app.autodiscover_tasks()

Add the following to the __init__.py file in the directory that has the same name as the project

# __init__.py
# -*- coding:utf8 -*-
from __future__ import absolute_import, unicode_literals
# Make sure the Celery app is loaded whenever Django starts
from .celery import app as celery_app

__all__ = ['celery_app']

Create the file app01/tasks.py

# tasks.py
# -*- coding:utf8 -*-
from __future__ import absolute_import, unicode_literals
from celery import shared_task

# @shared_task is used here instead of @app.task so that the task is not tied to one app instance and can be called from other apps
@shared_task
def add(x, y):
    return x + y

Set the Redis server configuration in settings.py

# settings.py
CELERY_BROKER_URL = 'redis://localhost'
CELERY_RESULT_BACKEND = 'redis://localhost'
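Settings with the CELERY_ prefix in settings.py are only picked up if celery.py tells the app to read Django's settings; the Config class shown earlier does not do that. A minimal sketch of that wiring, assuming the celery_test project layout used here, might look like this:

```python
# celery_test/celery.py (sketch)
import os

from celery import Celery

os.environ.setdefault('DJANGO_SETTINGS_MODULE', 'celery_test.settings')

app = Celery('celery_test')

# Read every Django setting that starts with CELERY_, so that
# CELERY_BROKER_URL maps to broker_url and CELERY_RESULT_BACKEND to result_backend.
app.config_from_object('django.conf:settings', namespace='CELERY')

app.autodiscover_tasks()
```

With this in place the broker address lives in one spot (settings.py) instead of being repeated inside celery.py.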

将celery_test这个Django项目拷贝到centos7.3的django_test文件夹中

保证启动了redis-server

启动一个celery的worker

celery -A celery_test worker -l info

在Linux中启动 Django项目

python3 manage.py runserver 0.0.0.0:9000

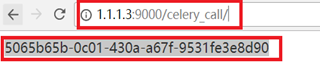

访问http://1.1.1.3:9000/celery_call/ 获取任务id

根据11中的任务id获取对应的值

http://1.1.1.3:9000/celery_result/?id=5065b65b-0c01-430a-a67f-9531fe3e8d90
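The views behind these two URLs are not listed in the text. The sketch below shows one way they might be written, under the assumption that they live in app01/views.py; the helper name result_payload and the JSON shape are hypothetical. The response-building logic is kept in a plain function so it can be tried without Django or a broker.

```python
from types import SimpleNamespace


def result_payload(task_id, result):
    """Build the JSON body for a celery_result-style view.

    `result` is expected to behave like celery.result.AsyncResult:
    it exposes .status, .successful() and .result.
    """
    payload = {'id': task_id, 'status': result.status}
    if result.successful():
        payload['result'] = result.result
    return payload


# In the Django project the two views would look roughly like this:
#
#   def celery_call(request):
#       task = add.delay(2, 3)                 # queue the task, do not wait
#       return JsonResponse({'id': task.id})
#
#   def celery_result(request):
#       task_id = request.GET.get('id')
#       return JsonResponse(result_payload(task_id, AsyncResult(task_id)))

# Stand-in for a finished AsyncResult, so the helper runs without a broker.
fake = SimpleNamespace(status='SUCCESS', successful=lambda: True, result=5)
payload = result_payload('5065b65b-0c01-430a-a67f-9531fe3e8d90', fake)
print(payload)
```

The first view returns immediately with the id, and the second can be polled until the status becomes SUCCESS.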

基于步骤↑:在django中使用计划任务功能

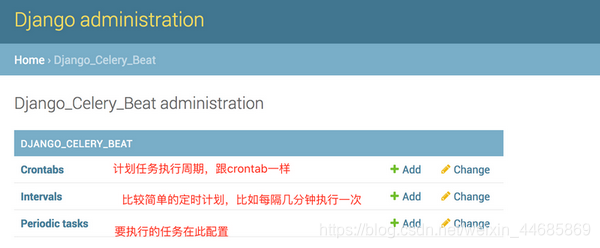

在Django中使用celery的定时任务需要安装django-celery-beat

pip3 install django-celery-beat

在Django的settings中注册django_celery_beat

INSTALLED_APPS = (

...,

'django_celery_beat',

)

执行创建表命令

python3 manage.py makemigrations

python3 manage.py migrate

python3 manage.py startsuperuser

运行Django项目

celery -A celery_test worker -l info

python3 manage.py runserver 0.0.0.0:9000

登录 http://1.1.1.3:9000/admin/ 可以看到多了三张表

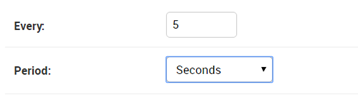

在intervals表中添加一条每5秒钟执行一次的任务的时钟

在Periodic tasks表中创建任务
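The same two admin steps can also be done from code, which is convenient for scripted setups. The following is a sketch meant to be pasted into python3 manage.py shell, assuming django-celery-beat is installed and migrated; the task name 'add-every-5-seconds' and the arguments [2, 3] are illustrative choices.

```python
import json

from django_celery_beat.models import IntervalSchedule, PeriodicTask

# Step 1: the "every 5 seconds" interval (reused if it already exists).
schedule, _ = IntervalSchedule.objects.get_or_create(
    every=5,
    period=IntervalSchedule.SECONDS,
)

# Step 2: the periodic task that runs app01.tasks.add(2, 3) on that interval.
PeriodicTask.objects.get_or_create(
    name='add-every-5-seconds',
    defaults={
        'interval': schedule,
        'task': 'app01.tasks.add',
        'args': json.dumps([2, 3]),
    },
)
```

Using get_or_create keeps the snippet safe to run more than once.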

Run the following commands in /django_test/celery_test/

celery -A celery_test worker -l info # start a worker
python manage.py runserver 0.0.0.0:9000 # run the Django project
celery -A celery_test beat -l info -S django # start beat with the django-celery-beat scheduler

Note:

Once these are running, the window running celery -A celery_test worker -l info shows app01.tasks.add executing every 5 seconds: 2+3=5

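On having to restart beat after adding a task

- Each time a task is added through the Django tables, beat has to be restarted

- The djcelery plugin for Django can remove the need to restart

django+celery+redis for asynchronous periodic tasks

Note: this uses version 4.2.0 of the Python celery package. Version 4.1.1 was installed at first, but the periodic tasks did not run with it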

Configure Celery in settings.py

# settings.py
# 1. To run periodic tasks from Django, register the django_celery_beat app here
INSTALLED_APPS = [
    # ... existing apps ...
    'django_celery_beat',
    # ...
]
TIME_ZONE = 'Asia/Shanghai'  # change the default UTC time zone to China's time zone

# 2. Celery configuration
BROKER_URL = 'redis://localhost:6379'
CELERY_RESULT_BACKEND = 'redis://localhost:6379'
CELERY_ACCEPT_CONTENT = ['application/json']
CELERY_TASK_SERIALIZER = 'json'
CELERY_RESULT_SERIALIZER = 'json'
CELERY_TASK_RESULT_EXPIRES = 60 * 60
CELERY_TIMEZONE = 'Asia/Shanghai'
CELERY_ENABLE_UTC = False
CELERY_ANNOTATIONS = {'*': {'rate_limit': '500/s'}}
# This scheduler path requires the djcelery package; with django_celery_beat
# the equivalent is 'django_celery_beat.schedulers:DatabaseScheduler'
CELERYBEAT_SCHEDULER = 'djcelery.schedulers.DatabaseScheduler'

Create celery.py in the directory that has the same name as the project

For more scheduling options, see the official docs: http://docs.celeryproject.org/en/latest/userguide/periodic-tasks.html#crontab-schedules

# -*- coding: utf-8 -*-
from __future__ import absolute_import
import os
from celery import Celery
from celery.schedules import crontab
from datetime import timedelta
from kombu import Queue

# set the default Django settings module for the 'celery' program.
os.environ.setdefault('DJANGO_SETTINGS_MODULE', 'celery_test.settings')
from django.conf import settings

app = Celery('celery_test')

# Celery configuration, using the old-style uppercase setting names
class Config:
    BROKER_URL = 'redis://1.1.1.3:6379'
    CELERY_RESULT_BACKEND = 'redis://1.1.1.3:6379'
    CELERY_ACCEPT_CONTENT = ['application/json']
    CELERY_TASK_SERIALIZER = 'json'
    CELERY_RESULT_SERIALIZER = 'json'
    CELERY_TIMEZONE = 'Asia/Shanghai'
    CELERY_ENABLE_UTC = False
    CELERY_TASK_RESULT_EXPIRES = 60 * 60
    CELERY_ANNOTATIONS = {'*': {'rate_limit': '500/s'}}
    # Number of tasks prefetched at a time
    # CELERYD_PREFETCH_MULTIPLIER = 10
    # Recycle each worker process after it has run this many tasks, to guard
    # against memory leaks; equivalent to the --maxtasksperchild option
    CELERYD_MAX_TASKS_PER_CHILD = 16
    # Prevent deadlocks
    # CELERYD_FORCE_EXECV = True
    # Disable all task rate limits
    # CELERY_DISABLE_RATE_LIMITS = True
    # CELERYBEAT_SCHEDULER = 'djcelery.schedulers.DatabaseScheduler'

app.config_from_object(Config)
app.autodiscover_tasks()

# crontab config
app.conf.update(
    CELERYBEAT_SCHEDULE = {
        # Run add every three minutes
        'every-3-min-add': {
            'task': 'app01.tasks.add',
            'schedule': timedelta(seconds=180)
        },
        # Run minus every day at 15:20
        'minus-every-day@15:20': {
            'task': 'app01.tasks.minus',
            'schedule': crontab(hour=15, minute=20, day_of_week='*/1'),
        },
    },
)

# Note: this Queue object is created but never registered with the app
Queue('transient', routing_key='transient', delivery_mode=1)
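To make the two schedule types concrete: timedelta(seconds=180) fires at a fixed interval, while crontab(hour=15, minute=20) fires at a wall-clock time each day. The helper below is a standard-library sketch of that second rule, not Celery's own implementation, and next_daily_run is a hypothetical name.

```python
from datetime import datetime, timedelta


def next_daily_run(now, hour, minute):
    """Return the next datetime strictly after `now` that falls on hour:minute."""
    candidate = now.replace(hour=hour, minute=minute, second=0, microsecond=0)
    if candidate <= now:
        # Today's slot has already passed, so the next run is tomorrow.
        candidate += timedelta(days=1)
    return candidate


before = next_daily_run(datetime(2019, 7, 1, 9, 0), 15, 20)
after = next_daily_run(datetime(2019, 7, 1, 16, 0), 15, 20)
print(before)  # 2019-07-01 15:20:00
print(after)   # 2019-07-02 15:20:00
```

Because the rule depends on wall-clock time, the CELERY_TIMEZONE setting decides which 15:20 is meant.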


Create tasks.py in any app (Celery's autodiscover_tasks() finds this file in each app)

# tasks.py
# -*- coding:utf8 -*-
from __future__ import absolute_import, unicode_literals
from celery import shared_task

# @shared_task is used here instead of @app.task so that the task is not tied to one app instance and can be called from other apps
@shared_task
def add():
    print('app01.tasks.add')
    return 222 + 333

@shared_task
def minus():
    print('app01.tasks.minus')
    return 222 - 333

Add the following to the __init__.py file in the directory that has the same name as the project

# __init__.py
# -*- coding:utf8 -*-
from __future__ import absolute_import, unicode_literals
# Make sure the Celery app is loaded whenever Django starts
from .celery import app as celery_app

__all__ = ['celery_app']

Startup scripts (remember to start the Celery services as well)

Start the Django application

python manage.py runserver 0.0.0.0:8000

# service.sh
#!/usr/bin/env bash
source ../env/bin/activate
export DJANGO_SETTINGS_MODULE=celery_test.settings
base_dir=`pwd`

mup_pid() {
    echo `ps -ef | grep -E "(manage.py)(.*):8000" | grep -v grep| awk '{print $2}'`
}

start() {
    python $base_dir/manage.py runserver 0.0.0.0:8000 &>> $base_dir/django.log 2>&1 &
    pid=$(mup_pid)
    echo -e "\e[00;31mmup is running (pid: $pid)\e[00m"
}

stop() {
    pid=$(mup_pid)
    echo -e "\e[00;31mmup is stop (pid: $pid)\e[00m"
    ps -ef | grep -E "(manage.py)(.*):8000" | grep -v grep| awk '{print $2}' | xargs kill -9 &> /dev/null
}

restart(){
    stop
    start
}

# See how we were called.
case "$1" in
    start)
        start
        ;;
    stop)
        stop
        ;;
    restart)
        restart
        ;;
    *)
        echo $"Usage: $0 {start|stop|restart}"
        exit 2
esac

Start the Celery worker: one machine can run several workers (for example, 8)

celery -A celery_test worker -l info

# start-celery.sh
#!/bin/bash
source ../env/bin/activate
export C_FORCE_ROOT="true"
base_dir=`pwd`

celery_pid() {
    echo `ps -ef | grep -E "celery -A celery_test worker" | grep -v grep| awk '{print $2}'`
}

start() {
    celery multi start celery_test -A celery_test -l debug --autoscale=50,5 --logfile=$base_dir/var/celery-%I.log --pidfile=celery_test.pid
}

restart() {
    celery multi restart celery_test -A celery_test -l debug
}

stop() {
    celery multi stop celery_test -A celery_test -l debug
}

#restart(){
#    stop
#    start
#}

# See how we were called.
case "$1" in
    start)
        start
        ;;
    restart)
        restart
        ;;
    stop)
        stop
        ;;
    *)
        echo $"Usage: $0 {start|stop|restart}"
        exit 2
esac

#nohup celery -A celery_test worker -l debug --concurrency=10 --autoreload & >>celery.log

Start the Celery periodic task scheduler (beat)

celery -A celery_test beat -l debug

# celery-crond.sh
#!/bin/bash
# Runs the Celery periodic task scheduler (beat)
source ../env/bin/activate
export C_FORCE_ROOT="true"
base_dir=`pwd`

celery_pid() {
    echo `ps -ef | grep -E "celery -A celery_test beat" | grep -v grep| awk '{print $2}'`
}

start() {
    # Variant that schedules from the Django database tables:
    #celery -A celery_test beat -l info -S django >> $base_dir/var/celery-cron.log 2>&1 &
    celery -A celery_test beat -l debug >> $base_dir/var/Scheduler.log 2>&1 &
    sleep 3
    pid=$(celery_pid)
    echo -e "\e[00;31mcelery is start (pid: $pid)\e[00m"
}

restart() {
    pid=$(celery_pid)
    echo -e "\e[00;31mcelery is restart (pid: $pid)\e[00m"
    ps auxf | grep -E "celery -A celery_test beat" | grep -v grep| awk '{print $2}' | xargs kill -HUP &> /dev/null
}

stop() {
    pid=$(celery_pid)
    echo -e "\e[00;31mcelery is stop (pid: $pid)\e[00m"
    ps -ef | grep -E "celery -A celery_test beat" | grep -v grep| awk '{print $2}' | xargs kill -TERM &> /dev/null
}

case "$1" in
    start)
        start
        ;;
    restart)
        restart
        ;;
    stop)
        stop
        ;;
    *)
        echo $"Usage: $0 {start|stop|restart}"
        exit 2
esac

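Script files written on Windows are not recognized on Linux because of their line endings

Running such a .sh script on Linux fails with: /bin/sh^M: bad interpreter: No such file or directory

:set ff=unix # inside vim, then save the file

dos2unix start-celery.sh
dos2unix celery-crond.sh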
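Where dos2unix is not installed, the same conversion can be done in a few lines of Python. This is a minimal sketch; crlf_to_lf and convert_file are hypothetical helper names.

```python
from pathlib import Path


def crlf_to_lf(data: bytes) -> bytes:
    """Replace Windows line endings (CRLF) with Unix line endings (LF)."""
    return data.replace(b'\r\n', b'\n')


def convert_file(path):
    """Rewrite a file in place with Unix line endings; return True if it changed."""
    p = Path(path)
    original = p.read_bytes()
    converted = crlf_to_lf(original)
    if converted != original:
        p.write_bytes(converted)
        return True
    return False


sample = b'#!/bin/bash\r\necho hello\r\n'
print(crlf_to_lf(sample))
```

Calling convert_file('start-celery.sh') would then have the same effect on that script as the dos2unix command above.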

Common errors

Received unregistered task of type 'XXX': Celery raises this when the tasks.py module behind a scheduled task cannot be found

app = Celery('opwf', include=['api_workflow.tasks']) # the tasks module of the api_workflow app
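This error usually means the worker never imported the module that defines the task. Before restarting the worker, it can help to check from the project root that the dotted path really resolves. The following is a standard-library sketch; can_resolve is a hypothetical helper, and it assumes the default naming in which a task's name is its module path plus the function name.

```python
import importlib


def can_resolve(dotted_name):
    """Return True if 'package.module.attr' can be imported and attr exists."""
    module_path, _, attr = dotted_name.rpartition('.')
    if not module_path:
        return False
    try:
        module = importlib.import_module(module_path)
    except ImportError:
        return False
    return hasattr(module, attr)


# Demonstrated with standard-library names; in the project one would pass
# the task name from the error message instead.
print(can_resolve('os.path.join'))
print(can_resolve('os.path.no_such_function'))
print(can_resolve('no_such_package.tasks.add'))
```

If the check fails for a task that does exist, the usual causes are a missing entry in include, an app missing from INSTALLED_APPS, or running the worker from the wrong directory.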