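At work, camera-related requirements come in all shapes. Some people want to add a virtual camera: when an app opens the camera device, it should run code they specify instead of the system's default camera HAL, and feed the app with video data prepared in advance. Others want to add an external USB camera on top of the system's default app framework and have it blend into the camera framework, so that an app only needs to specify the USB camera's id to open it just like an ordinary camera. Still others want the existing framework to keep supporting the old pre-Android-8.0 HAL1 + API1 camera flow while also adding a camera module that follows the Android 8.0 HIDL interface specification.

All of these requirements boil down to one core question: how to add a new camera HAL module. This post walks through adding a USB camera HAL module on MTK Android 8.0. Adding a virtual camera follows exactly the same flow.

Since the goal is to add a HIDL-compliant camera flow on Android 8.0, we first need to understand the stock Android camera flow under HIDL, so let's start there.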

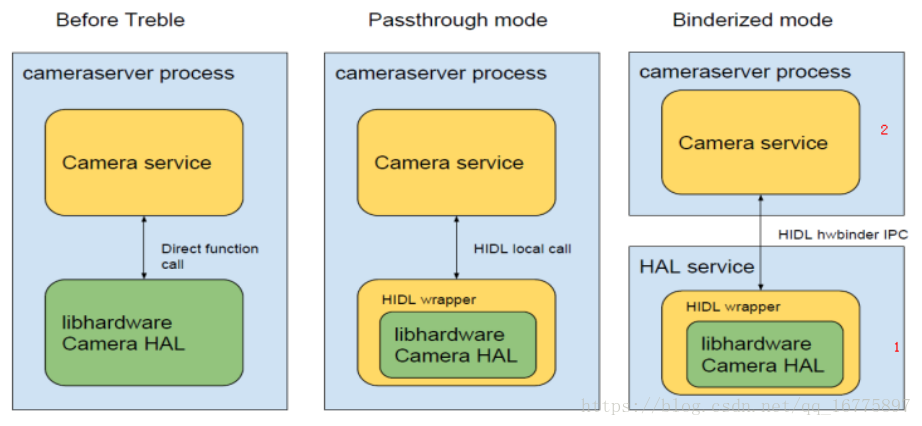

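Before Android 8.0, the framework-layer cameraservice and the HAL-layer camera code ran in the same process, which made it hard to upgrade the HAL part independently. To address this, Android 8.0 introduced HIDL, which splits the framework layer and the HAL layer into two processes and thereby decouples them. A figure borrowed from the web roughly shows the difference: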

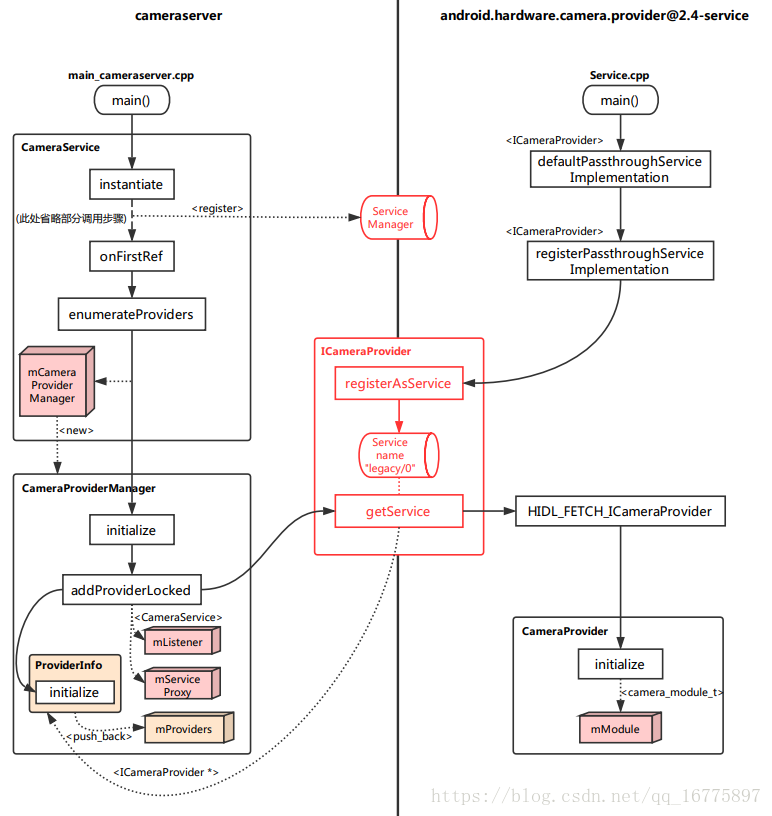

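This looks like it makes the calls more complicated, but the end result is the same, and the HAL-layer code is basically unchanged. For the camera specifically, a cameraProvider is inserted between the framework layer and the HAL layer as the bridge between the two. The current call flow is shown in another figure borrowed from the web:

Using that figure, the call flow is roughly:

1.) At boot, register the cameraProvider (the cameraProvider is tied to a concrete camera HAL)

2.) At boot, instantiate cameraService

3.) Find and list all cameraProviders (this step loads the camera HAL code through hw_get_module)

That is basically the path from the framework down to the HAL once the device boots. Let's go through it step by step.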

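Registering the cameraProvider at boot

The hardware\interfaces\camera\provider\2.4\default directory contains a file named android.hardware.camera.provider@2.4-service.rc:

service camera-provider-2-4 /vendor/bin/hw/android.hardware.camera.provider@2.4-service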

class hal

user cameraserver

group audio camera input drmrpc

ioprio rt 4

capabilities SYS_NICE

writepid /dev/cpuset/camera-daemon/tasks /dev/stune/top-app/tasks

At boot, init parses this file and starts the camera-provider-2-4 service, which runs the /vendor/bin/hw/android.hardware.camera.provider@2.4-service binary. Its entry point is in hardware\interfaces\camera\provider\2.4\default\service.cpp:

int main()

{

ALOGI("Camera provider Service is starting.");

// The camera HAL may communicate to other vendor components via

// /dev/vndbinder

android::ProcessState::initWithDriver("/dev/vndbinder");

return defaultPassthroughServiceImplementation<ICameraProvider>("legacy/0", /*maxThreads*/ 6);

}

This uses the standard HIDL helper to register our "legacy/0" instance as a service.

system\libhidl\transport\include\hidl\LegacySupport.h

template<class Interface>

__attribute__((warn_unused_result))

status_t defaultPassthroughServiceImplementation(std::string name,

size_t maxThreads = 1) {

configureRpcThreadpool(maxThreads, true);

status_t result = registerPassthroughServiceImplementation<Interface>(name);

if (result != OK) {

return result;

}

joinRpcThreadpool();

return 0;

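}

One thing to note here: "legacy/0" must first be declared in device/***/<chip name>/manifest.xml under the source tree root. Otherwise, even though it is registered here, later lookups will fail because the instance cannot be found.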

<hal format="hidl">

<name>android.hardware.camera.provider</name>

<transport>hwbinder</transport>

<version>2.4</version>

<interface>

<name>ICameraProvider</name>

<instance>legacy/0</instance>

</interface>

</hal>

Instantiating cameraService at boot

frameworks\av\camera\cameraserver contains cameraserver.rc, which is also started automatically at boot.

service cameraserver /system/bin/cameraserver

class main

user cameraserver

group audio camera input drmrpc

ioprio rt 4

writepid /dev/cpuset/camera-daemon/tasks /dev/stune/top-app/tasks

It then runs frameworks\av\camera\cameraserver\main_cameraserver.cpp:

int main(int argc __unused, char** argv __unused)

{

signal(SIGPIPE, SIG_IGN);

//!++

#if 0

// Set 3 threads for HIDL calls

hardware::configureRpcThreadpool(3, /*willjoin*/ false);

#else

// Set 6 threads for HIDL calls, features need more threads to handle preview+capture

hardware::configureRpcThreadpool(6, /*willjoin*/ false);

#endif

//!--

sp<ProcessState> proc(ProcessState::self());

sp<IServiceManager> sm = defaultServiceManager();

ALOGI("ServiceManager: %p", sm.get());

CameraService::instantiate();

ProcessState::self()->startThreadPool();

IPCThreadState::self()->joinThreadPool();

}

In this main function we only care about the line CameraService::instantiate();. CameraService inherits from BinderService (declared in BinderService.h), so instantiate() ends up in the code in BinderService.h:

frameworks\native\libs\binder\include\binder\BinderService.h

template<typename SERVICE>

class BinderService

{

public:

static status_t publish(bool allowIsolated = false) {

sp<IServiceManager> sm(defaultServiceManager());

return sm->addService(

String16(SERVICE::getServiceName()),

new SERVICE(), allowIsolated);

}

static void publishAndJoinThreadPool(bool allowIsolated = false) {

publish(allowIsolated);

joinThreadPool();

}

static void instantiate() { publish(); }

static status_t shutdown() { return NO_ERROR; }

private:

static void joinThreadPool() {

sp<ProcessState> ps(ProcessState::self());

ps->startThreadPool();

ps->giveThreadPoolName();

IPCThreadState::self()->joinThreadPool();

}

};

}; // namespace

As this file shows, instantiate() ultimately calls addService on IServiceManager, which registers our CameraService with the system service manager.

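Because addService takes the newly created CameraService through a strong pointer (sp), the object receives its first strong reference at this point, and that triggers CameraService::onFirstRef(). This hook is inherited from RefBase, the base class of CameraService. It runs once, when the first sp takes a strong reference to the object, and does not run again for later references. Let's look at CameraService::onFirstRef():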

frameworks\av\services\camera\libcameraservice\CameraService.cpp

void CameraService::onFirstRef()

{

ALOGI("CameraService process starting");

BnCameraService::onFirstRef();

// Update battery life tracking if service is restarting

BatteryNotifier& notifier(BatteryNotifier::getInstance());

notifier.noteResetCamera();

notifier.noteResetFlashlight();

status_t res = INVALID_OPERATION;

res = enumerateProviders();

if (res == OK) {

mInitialized = true;

}

CameraService::pingCameraServiceProxy();

}

In this function we only care about enumerateProviders(), which is where all cameraProviders get listed.
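The onFirstRef() behaviour described above can be illustrated with a small standalone model. This is only a sketch: MiniRefBase, MiniSp and ToyCameraService are hypothetical names invented for the example and are much simpler than android::RefBase and android::sp. The point it shows is that the hook fires on the first strong reference only.

```cpp
#include <cassert>

// Hypothetical, heavily simplified stand-in for android::RefBase.
class MiniRefBase {
public:
    virtual ~MiniRefBase() = default;

    void incStrong() {
        // The hook fires only on the 0 -> 1 transition of the strong count.
        if (strongCount_++ == 0) {
            onFirstRef();
        }
    }

    void decStrong() {
        assert(strongCount_ > 0);
        if (--strongCount_ == 0) {
            delete this;  // last strong reference gone
        }
    }

    int strongCount() const { return strongCount_; }

protected:
    virtual void onFirstRef() {}

private:
    int strongCount_ = 0;
};

// Hypothetical minimal strong pointer, standing in for android::sp<T>.
template <typename T>
class MiniSp {
public:
    explicit MiniSp(T* p) : ptr_(p) { if (ptr_) ptr_->incStrong(); }
    MiniSp(const MiniSp& other) : ptr_(other.ptr_) { if (ptr_) ptr_->incStrong(); }
    MiniSp& operator=(const MiniSp&) = delete;
    ~MiniSp() { if (ptr_) ptr_->decStrong(); }

    T* operator->() const { return ptr_; }

private:
    T* ptr_;
};

// Toy service: counts how many times the hook ran. The real
// CameraService calls enumerateProviders() at this point instead.
class ToyCameraService : public MiniRefBase {
public:
    int enumerateCalls = 0;

protected:
    void onFirstRef() override { ++enumerateCalls; }
};
```

Creating a second MiniSp to the same object raises the count to 2 but leaves enumerateCalls at 1, which is why the provider enumeration happens once per CameraService instance.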

Finding and listing all cameraProviders

status_t CameraService::enumerateProviders() {

status_t res;

Mutex::Autolock l(mServiceLock);

if (nullptr == mCameraProviderManager.get()) {

mCameraProviderManager = new CameraProviderManager();

res = mCameraProviderManager->initialize(this);

......

}

......

}

This function creates a CameraProviderManager object and initializes it.

frameworks\av\services\camera\libcameraservice\common\CameraProviderManager.cpp

status_t CameraProviderManager::initialize(wp<CameraProviderManager::StatusListener> listener,

ServiceInteractionProxy* proxy) {

std::lock_guard<std::mutex> lock(mInterfaceMutex);

if (proxy == nullptr) {

ALOGE("%s: No valid service interaction proxy provided", __FUNCTION__);

return BAD_VALUE;

}

mListener = listener;

mServiceProxy = proxy;

// Registering will trigger notifications for all already-known providers

bool success = mServiceProxy->registerForNotifications(

/* instance name, empty means no filter */ "",

this);

if (!success) {

ALOGE("%s: Unable to register with hardware service manager for notifications "

"about camera providers", __FUNCTION__);

return INVALID_OPERATION;

}

// See if there's a passthrough HAL, but let's not complain if there's not

addProviderLocked(kLegacyProviderName, /*expected*/ false);

return OK;

}

In this initialization function we only care about addProviderLocked. It is passed kLegacyProviderName, which is defined near the top of the same file:

const std::string kLegacyProviderName("legacy/0");

Notice that this value matches both the name registered in hardware\interfaces\camera\provider\2.4\default\service.cpp, return defaultPassthroughServiceImplementation<ICameraProvider>("legacy/0", /*maxThreads*/ 6);, and the <instance>legacy/0</instance> entry in manifest.xml. If these values do not match, the call fails.
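The consistency rule above is easy to get wrong when several files are edited by hand, so here is a minimal sketch of it as a check. ProviderNaming and providerNamesConsistent are hypothetical names made up for this example; nothing like them exists in AOSP. The sketch only encodes the rule stated in the text: the name registered by the service must be declared in the manifest and must equal the name the framework asks for.

```cpp
#include <algorithm>
#include <cassert>
#include <string>
#include <vector>

// Hypothetical helper type, not part of AOSP.
struct ProviderNaming {
    std::string serviceRegistered;               // argument used in service.cpp
    std::vector<std::string> manifestInstances;  // <instance> entries in manifest.xml
    std::string frameworkRequested;              // name passed to addProviderLocked()
};

// Returns true when the three places agree on the provider instance name.
bool providerNamesConsistent(const ProviderNaming& n) {
    const bool declaredInManifest =
        std::find(n.manifestInstances.begin(), n.manifestInstances.end(),
                  n.serviceRegistered) != n.manifestInstances.end();
    return declaredInManifest && n.serviceRegistered == n.frameworkRequested;
}
```

For example, registering "legacy/1" in the service while the framework still asks for "legacy/0" makes the check return false, which corresponds to the failed lookup described above.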

Back to addProviderLocked. Its job is to load the HAL code for the camera and store its information in a ProviderInfo, through which we can later perform operations such as addDevice and getCameraInfo.

status_t CameraProviderManager::addProviderLocked(const std::string& newProvider, bool expected) {

for (const auto& providerInfo : mProviders) {

if (providerInfo->mProviderName == newProvider) {

ALOGW("%s: Camera provider HAL with name '%s' already registered", __FUNCTION__,

newProvider.c_str());

return ALREADY_EXISTS;

}

}

sp<provider::V2_4::ICameraProvider> interface;

interface = mServiceProxy->getService(newProvider);

if (interface == nullptr) {

if (expected) {

ALOGE("%s: Camera provider HAL '%s' is not actually available", __FUNCTION__,

newProvider.c_str());

return BAD_VALUE;

} else {

return OK;

}

}

sp<ProviderInfo> providerInfo =

new ProviderInfo(newProvider, interface, this);

status_t res = providerInfo->initialize();

if (res != OK) {

return res;

}

mProviders.push_back(providerInfo);

return OK;

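}

The function first checks whether newProvider has already been added. If it has, nothing more is done and the function returns ALREADY_EXISTS right away. Otherwise it continues. The call interface = mServiceProxy->getService(newProvider); ends up in the HIDL_FETCH_ICameraProvider function in hardware\interfaces\camera\provider\2.4\default\CameraProvider.cpp.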

ICameraProvider* HIDL_FETCH_ICameraProvider(const char* name) {

if (strcmp(name, kLegacyProviderName) != 0) {

return nullptr;

}

CameraProvider* provider = new CameraProvider();

if (provider == nullptr) {

ALOGE("%s: cannot allocate camera provider!", __FUNCTION__);

return nullptr;

}

if (provider->isInitFailed()) {

ALOGE("%s: camera provider init failed!", __FUNCTION__);

delete provider;

return nullptr;

}

return provider;

}

This function creates a CameraProvider object.

CameraProvider::CameraProvider() :

camera_module_callbacks_t({sCameraDeviceStatusChange,

sTorchModeStatusChange}) {

mInitFailed = initialize();

}

bool CameraProvider::initialize() {

camera_module_t *rawModule;

int err = hw_get_module("camera", (const hw_module_t **)&rawModule);

if (err < 0) {

ALOGE("Could not load camera HAL module: %d (%s)", err, strerror(-err));

return true;

}

mModule = new CameraModule(rawModule);

err = mModule->init();

......

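}

In this initialization function we see a very familiar call, hw_get_module. From here on the flow is exactly the same as before Android 8.0: we talk to the HAL directly.

One spot in CameraProvider deserves attention: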

std::string cameraId, int deviceVersion) {

// Maybe consider create a version check method and SortedVec to speed up?

if (deviceVersion != CAMERA_DEVICE_API_VERSION_1_0 &&

deviceVersion != CAMERA_DEVICE_API_VERSION_3_2 &&

deviceVersion != CAMERA_DEVICE_API_VERSION_3_3 &&

deviceVersion != CAMERA_DEVICE_API_VERSION_3_4 ) {

return hidl_string("");

}

bool isV1 = deviceVersion == CAMERA_DEVICE_API_VERSION_1_0;

int versionMajor = isV1 ? 1 : 3;

int versionMinor = isV1 ? 0 : mPreferredHal3MinorVersion;

char deviceName[kMaxCameraDeviceNameLen];

snprintf(deviceName, sizeof(deviceName), "device@%d.%d/legacy/%s",

versionMajor, versionMinor, cameraId.c_str());

return deviceName;

}

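So if our camera goes through HIDL to talk to the HAL, the device version reported by the HAL must be 1.0, 3.2, 3.3, or 3.4. A HAL that reports 3.0 or 3.1 gets an empty device name here and will not load correctly.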
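To make the version rule concrete, here is a standalone sketch of the same naming logic that can be compiled outside the Android tree. The helper names deviceApiVersion and hidlDeviceName are hypothetical. The version encoding is an assumption that mirrors how HARDWARE_DEVICE_API_VERSION packs major and minor numbers, and preferredHal3Minor stands in for mPreferredHal3MinorVersion from the snippet above.

```cpp
#include <cassert>
#include <cstdint>
#include <cstdio>
#include <string>

// Assumption: packs (major, minor) the way HARDWARE_DEVICE_API_VERSION does.
constexpr uint32_t deviceApiVersion(uint32_t major, uint32_t minor) {
    return ((major & 0xff) << 8) | (minor & 0xff);
}

constexpr uint32_t kVersion1_0 = deviceApiVersion(1, 0);
constexpr uint32_t kVersion3_2 = deviceApiVersion(3, 2);
constexpr uint32_t kVersion3_3 = deviceApiVersion(3, 3);
constexpr uint32_t kVersion3_4 = deviceApiVersion(3, 4);

// Hypothetical standalone version of the naming logic shown above.
// Returns an empty string for device versions the provider rejects.
std::string hidlDeviceName(const std::string& cameraId,
                           uint32_t deviceVersion,
                           int preferredHal3Minor) {
    const bool supported = deviceVersion == kVersion1_0 ||
                           deviceVersion == kVersion3_2 ||
                           deviceVersion == kVersion3_3 ||
                           deviceVersion == kVersion3_4;
    if (!supported) {
        return "";
    }
    const bool isV1 = deviceVersion == kVersion1_0;
    const int major = isV1 ? 1 : 3;
    // For HAL3 devices the minor number comes from the provider's
    // preference, not from the version the device itself reported.
    const int minor = isV1 ? 0 : preferredHal3Minor;
    char name[64];
    std::snprintf(name, sizeof(name), "device@%d.%d/legacy/%s",
                  major, minor, cameraId.c_str());
    return name;
}
```

With these definitions, a device reporting 3.0 or 3.1 yields an empty name, while a 3.2 device with a preferred minor version of 4 is published as device@3.4/legacy/0.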

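The figures above are taken from the blog post at https://blog.csdn.net/qq_16775897/article/details/81240600, which is also worth reading. The startup flow it describes is the same, and its author explains it in considerable detail.

That covers the loading flow of the camera HAL code on Android 8.0. With that foundation, let's go step by step through how to add a new camera HAL.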

###############################################################################################################################################################################################################################################################################################################

Porting a camera HAL involves the following steps:

1.) In device\mediatek\mt6580\manifest.xml, add an instance for the new camera

2.) In frameworks\av\services\camera\libcameraservice\common\CameraProviderManager.cpp, add the new camera provider through addProviderLocked

3.) In hardware\interfaces\camera\provider\2.4\default\CameraProvider.cpp, load the new camera through hw_get_module

4.) Prepare the corresponding camera HAL code

Configuring manifest.xml

<hal format="hidl">

<name>android.hardware.camera.provider</name>

<transport>hwbinder</transport>

<version>2.4</version>

<interface>

<name>ICameraProvider</name>

<instance>internal/0</instance>

<instance>legacy/1</instance>

</interface>

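</hal>

The MTK camera already has a camera HAL1 named internal/0, so the name for our USB camera simply follows it. Note that the "legacy" prefix of "legacy/1" is arbitrary: it could just as well be "abc/1", as long as every other place that uses the name agrees. The trailing "1", however, must be right. 0 is already taken, so this one has to be 1, and a further camera would have to be 2. The reason is that ProviderInfo::parseDeviceName in CameraProviderManager.cpp uses this trailing number to derive the corresponding id. If we also gave the USB camera id 0, that is, named it "legacy/0", the id extracted in ProviderInfo::parseDeviceName would collide with the existing main camera.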
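The split of an instance name into an arbitrary prefix and a numeric id can be sketched as follows. parseProviderInstance and ProviderName are hypothetical names written for this post and are not the AOSP parsing code; the sketch only demonstrates the "type/id" convention described above.

```cpp
#include <cassert>
#include <cerrno>
#include <cstdint>
#include <cstdlib>
#include <string>

// Hypothetical result type: the free-form prefix and the numeric id.
struct ProviderName {
    std::string type;
    uint32_t id;
};

// Parses names of the form "<type>/<id>", e.g. "legacy/1".
// Returns false if the name does not follow that shape.
bool parseProviderInstance(const std::string& name, ProviderName* out) {
    const std::string::size_type slash = name.find('/');
    if (slash == std::string::npos || slash == 0 || slash + 1 >= name.size()) {
        return false;
    }
    const std::string idPart = name.substr(slash + 1);
    // Accept decimal digits only, so "legacy/x" or "legacy/-1" are rejected.
    for (char c : idPart) {
        if (c < '0' || c > '9') {
            return false;
        }
    }
    errno = 0;
    char* end = nullptr;
    const unsigned long value = std::strtoul(idPart.c_str(), &end, 10);
    if (errno != 0 || *end != '\0' || value > UINT32_MAX) {
        return false;
    }
    out->type = name.substr(0, slash);
    out->id = static_cast<uint32_t>(value);
    return true;
}
```

Parsing "internal/0" and "legacy/1" gives ids 0 and 1, so they can coexist. Naming the USB camera "legacy/0" would give two providers with id 0, which is the conflict the paragraph above warns about.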

Adding the camera's ProviderInfo

In the initialize function of frameworks\av\services\camera\libcameraservice\common\CameraProviderManager.cpp, change kLegacyProviderName to "legacy/1":

status_t CameraProviderManager::initialize(wp<CameraProviderManager::StatusListener> listener,

ServiceInteractionProxy* proxy) {

......

// See if there's a passthrough HAL, but let's not complain if there's not

addProviderLocked("legacy/1", /*expected*/ false);

return OK;

}

In the main function of hardware\interfaces\camera\provider\2.4\default\service.cpp, register "legacy/1":

int main()

{

ALOGI("Camera provider Service is starting.");

// The camera HAL may communicate to other vendor components via

// /dev/vndbinder

android::ProcessState::initWithDriver("/dev/vndbinder");

return defaultPassthroughServiceImplementation<ICameraProvider>("legacy/1", /*maxThreads*/ 6);

}

In hardware\interfaces\camera\provider\2.4\default\CameraProvider.cpp, change kLegacyProviderName to "legacy/1" as well; HIDL_FETCH_ICameraProvider compares the requested name against it.

Pointing hw_get_module at the camera HAL code

In the initialize() function of hardware\interfaces\camera\provider\2.4\default\CameraProvider.cpp, change the name passed to hw_get_module to the one specified in our USB camera HAL:

int err = hw_get_module("usbcamera", (const hw_module_t **)&rawModule);
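It helps to remember that hw_get_module turns the id into a shared-library file name before it compares ids, so the HAL library must also be named after the id. The sketch below models that lookup order under stated assumptions: the `<dir>/<id>.<variant>.so` pattern and the final "default" fallback follow my reading of libhardware, while the directory list and variant names are passed in as parameters because they depend on the build (32 or 64 bit, platform properties). halModuleCandidates is a hypothetical helper, not a libhardware function.

```cpp
#include <cassert>
#include <string>
#include <vector>

// Hypothetical model of the file names tried for a given module id.
// 'variants' stands for values taken from system properties such as the
// platform name; "default" is always tried last.
std::vector<std::string> halModuleCandidates(
        const std::string& id,
        const std::vector<std::string>& variants,
        const std::vector<std::string>& searchDirs) {
    std::vector<std::string> subnames = variants;
    subnames.push_back("default");

    std::vector<std::string> candidates;
    for (const std::string& subname : subnames) {
        for (const std::string& dir : searchDirs) {
            candidates.push_back(dir + "/" + id + "." + subname + ".so");
        }
    }
    return candidates;
}
```

Under these assumptions, asking for "usbcamera" with variant "mt6580" and directories /vendor/lib/hw and /system/lib/hw would try usbcamera.mt6580.so first and usbcamera.default.so last, so the build rules for the new HAL should produce a library with one of those names.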

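Preparing the camera HAL code

Our USB camera HAL code lives under hardware\libhardware\modules\camera\libuvccamera. That directory contains HalModule.cpp, which defines the camera_module_t structure with the id "usbcamera". When CameraProvider.cpp calls hw_get_module, the id defined here matches the id it is looking for, so our USB camera HAL gets loaded.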

#include <hardware/camera_common.h>

#include <cutils/log.h>

#include <utils/misc.h>

#include <cstdlib>

#include <cassert>

#include "Camera.h"

/******************************************************************************\

DECLARATIONS

Not used in any other project source files, header file is redundant

\******************************************************************************/

extern camera_module_t HAL_MODULE_INFO_SYM;

namespace android {

namespace HalModule {

/* Available cameras */

extern Camera *cams[];

static int getNumberOfCameras();

static int getCameraInfo(int cameraId, struct camera_info *info);

static int setCallbacks(const camera_module_callbacks_t *callbacks);

static void getVendorTagOps(vendor_tag_ops_t* ops);

static int openDevice(const hw_module_t *module, const char *name, hw_device_t **device);

static struct hw_module_methods_t moduleMethods = {

.open = openDevice

};

}; /* namespace HalModule */

}; /* namespace android */

/******************************************************************************\

DEFINITIONS

\******************************************************************************/

camera_module_t HAL_MODULE_INFO_SYM = {

.common = {

.tag = HARDWARE_MODULE_TAG,

.module_api_version = CAMERA_MODULE_API_VERSION_2_3,

.hal_api_version = HARDWARE_HAL_API_VERSION,

.id = "usbcamera",

.name = "V4l2 Camera",

.author = "Antmicro Ltd.",

.methods = &android::HalModule::moduleMethods,

.dso = NULL,

.reserved = {0}

},

.get_number_of_cameras = android::HalModule::getNumberOfCameras,

.get_camera_info = android::HalModule::getCameraInfo,

.set_callbacks = android::HalModule::setCallbacks,

};

namespace android {

namespace HalModule {

static Camera mainCamera;

Camera *cams[] = {

&mainCamera

};

static int getNumberOfCameras() {

return NELEM(cams);

};

static int getCameraInfo(int cameraId, struct camera_info *info) {

cameraId = 0;//cameraId - 1;

if(cameraId < 0 || cameraId >= getNumberOfCameras()) {

return -ENODEV;

}

if(!cams[cameraId]->isValid()) {

return -ENODEV;

}

return cams[cameraId]->cameraInfo(info);

}

int setCallbacks(const camera_module_callbacks_t * /*callbacks*/) {

ALOGI("%s: lihb setCallbacks", __FUNCTION__);

/* TODO: Implement for hotplug support */

return OK;

}

static int openDevice(const hw_module_t *module, const char *name, hw_device_t **device) {

if (module != &HAL_MODULE_INFO_SYM.common) {

return -EINVAL;

}

if (name == NULL) {

return -EINVAL;

}

errno = 0;

int cameraId = (int)strtol(name, NULL, 10);

cameraId = 0;

if(errno || cameraId < 0 || cameraId >= getNumberOfCameras()) {

return -EINVAL;

}

if(!cams[cameraId]->isValid()) {

*device = NULL;

return -ENODEV;

}

return cams[cameraId]->openDevice(device);

}

}; /* namespace HalModule */

}; /* namespace android */

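You may have noticed that getCameraInfo hard-codes cameraId to 0, even though we just said that the USB camera is the second camera and its id must be 1. The two ids do not mean the same thing. Here it is 0 because this USB camera HAL has only one camera attached to it. The code for that camera sits in the Camera *cams[] array, which holds a single object, so its index is naturally 0. When HalModule.cpp handles getCameraInfo or openDevice, it goes through this array to reach the Camera object defined by static Camera mainCamera;. This Camera class is also our own code: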

//Camera.h

#ifndef CAMERA_H

#define CAMERA_H

#include <utils/Errors.h>

#include <hardware/camera_common.h>

#include <V4l2Device.h>

#include <hardware/camera3.h>

#include <camera/CameraMetadata.h>

#include <utils/Mutex.h>

#include "Workers.h"

#include "ImageConverter.h"

#include "DbgUtils.h"

#include <cutils/ashmem.h>

#include <cutils/log.h>

#include <sys/mman.h>

#include "VGA_YUV422.h"

namespace android {

class Camera: public camera3_device {

public:

Camera();

virtual ~Camera();

bool isValid() { return mValid; }

virtual status_t cameraInfo(struct camera_info *info);

virtual int openDevice(hw_device_t **device);

virtual int closeDevice();

protected:

virtual camera_metadata_t * staticCharacteristics();

virtual int initialize(const camera3_callback_ops_t *callbackOps);

virtual int configureStreams(camera3_stream_configuration_t *streamList);

virtual const camera_metadata_t * constructDefaultRequestSettings(int type);

virtual int registerStreamBuffers(const camera3_stream_buffer_set_t *bufferSet);

virtual int processCaptureRequest(camera3_capture_request_t *request);

/* HELPERS/SUBPROCEDURES */

void notifyShutter(uint32_t frameNumber, uint64_t timestamp);

void processCaptureResult(uint32_t frameNumber, const camera_metadata_t *result, const Vector<camera3_stream_buffer> &buffers);

camera_metadata_t *mStaticCharacteristics;

camera_metadata_t *mDefaultRequestSettings[CAMERA3_TEMPLATE_COUNT];

CameraMetadata mLastRequestSettings;

V4l2Device *mDev;

bool mValid;

const camera3_callback_ops_t *mCallbackOps;

size_t mJpegBufferSize;

private:

ImageConverter mConverter;

Mutex mMutex;

uint8_t* mFrameBuffer;

uint8_t* rszbuffer;

int mProperty_enableTimesTamp = -1;

/* STATIC WRAPPERS */

static int sClose(hw_device_t *device);

static int sInitialize(const struct camera3_device *device, const camera3_callback_ops_t *callback_ops);

static int sConfigureStreams(const struct camera3_device *device, camera3_stream_configuration_t *stream_list);

static int sRegisterStreamBuffers(const struct camera3_device *device, const camera3_stream_buffer_set_t *buffer_set);

static const camera_metadata_t * sConstructDefaultRequestSettings(const struct camera3_device *device, int type);

static int sProcessCaptureRequest(const struct camera3_device *device, camera3_capture_request_t *request);

static void sGetMetadataVendorTagOps(const struct camera3_device *device, vendor_tag_query_ops_t* ops);

static void sDump(const struct camera3_device *device, int fd);

static int sFlush(const struct camera3_device *device);

static void _AddTimesTamp(uint8_t* buffer, int32_t width, int32_t height);

static camera3_device_ops_t sOps;

};

}; /* namespace android */

#endif // CAMERA_H

/*

* Copyright (C) 2015-2016 Antmicro

*

* Licensed under the Apache License, Version 2.0 (the "License");

* you may not use this file except in compliance with the License.

* You may obtain a copy of the License at

*

* http://www.apache.org/licenses/LICENSE-2.0

*

* Unless required by applicable law or agreed to in writing, software

* distributed under the License is distributed on an "AS IS" BASIS,

* WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

* See the License for the specific language governing permissions and

* limitations under the License.

*/

#define LOG_TAG "usb-Camera"

#define LOG_NDEBUG 0

#include <hardware/camera3.h>

#include <camera/CameraMetadata.h>

#include <utils/misc.h>

#include <utils/Log.h>

#include <hardware/gralloc.h>

#include <ui/Rect.h>

#include <ui/GraphicBufferMapper.h>

#include <ui/Fence.h>

#include <assert.h>

#include "DbgUtils.h"

#include "Camera.h"

#include "ImageConverter.h"

#include "libyuv.h"

//#include <sprd_exvideo.h>

#include <cutils/properties.h>

#include <cstdlib>

#include <cstdio>

namespace android {

/**

* \class Camera

*

* Android's Camera 3 device implementation.

*

* Declaration of camera capabilities, frame request handling, etc. This code

* is what Android framework talks to.

*/

Camera::Camera()

: mStaticCharacteristics(NULL)

, mCallbackOps(NULL)

, mJpegBufferSize(0) {

ALOGI("Camera() start");

DBGUTILS_AUTOLOGCALL(__func__);

for(size_t i = 0; i < NELEM(mDefaultRequestSettings); i++) {

mDefaultRequestSettings[i] = NULL;

}

common.tag = HARDWARE_DEVICE_TAG;

common.version = CAMERA_DEVICE_API_VERSION_3_2;//CAMERA_DEVICE_API_VERSION_3_0;

common.module = &HAL_MODULE_INFO_SYM.common;

common.close = Camera::sClose;

ops = &sOps;

priv = NULL;

mValid = true;

mFrameBuffer = new uint8_t[640*480*4];

rszbuffer = new uint8_t[640*480*4];

mDev = new V4l2Device();

if(!mDev) {

mValid = false;

}

}

Camera::~Camera() {

DBGUTILS_AUTOLOGCALL(__func__);

gWorkers.stop();

mDev->disconnect();

delete[] mFrameBuffer;

delete[] rszbuffer;

delete mDev;

}

status_t Camera::cameraInfo(struct camera_info *info) {

DBGUTILS_AUTOLOGCALL(__func__);

ALOGI("Camera::cameraInfo entry");

ALOGE("Camera::cameraInfo entry");

Mutex::Autolock lock(mMutex);

info->facing = CAMERA_FACING_FRONT;//BACK;//FRONT;

info->orientation = 0;

info->device_version = CAMERA_DEVICE_API_VERSION_3_2;//CAMERA_DEVICE_API_VERSION_3_0;//CAMERA_DEVICE_API_VERSION_3_4;

info->static_camera_characteristics = staticCharacteristics();

return NO_ERROR;

}

int Camera::openDevice(hw_device_t **device) {

ALOGI("%s",__FUNCTION__);

DBGUTILS_AUTOLOGCALL(__func__);

Mutex::Autolock lock(mMutex);

char enableTimesTamp[PROPERTY_VALUE_MAX];

char enableAVI[PROPERTY_VALUE_MAX];

mDev->connect();

*device = &common;

gWorkers.start();

return NO_ERROR;

}

int Camera::closeDevice() {

ALOGI("%s",__FUNCTION__);

DBGUTILS_AUTOLOGCALL(__func__);

Mutex::Autolock lock(mMutex);

gWorkers.stop();

mDev->disconnect();

return NO_ERROR;

}

camera_metadata_t *Camera::staticCharacteristics() {

if(mStaticCharacteristics)

return mStaticCharacteristics;

CameraMetadata cm;

auto &resolutions = mDev->availableResolutions();

auto &previewResolutions = resolutions;

auto sensorRes = mDev->sensorResolution();

/***********************************\

|* START OF CAMERA CHARACTERISTICS *|

\***********************************/

/* fake, but valid aspect ratio */

const float sensorInfoPhysicalSize[] = {

5.0f,

5.0f * (float)sensorRes.height / (float)sensorRes.width

};

cm.update(ANDROID_SENSOR_INFO_PHYSICAL_SIZE, sensorInfoPhysicalSize, NELEM(sensorInfoPhysicalSize));

/* fake */

static const float lensInfoAvailableFocalLengths[] = {3.30f};

cm.update(ANDROID_LENS_INFO_AVAILABLE_FOCAL_LENGTHS, lensInfoAvailableFocalLengths, NELEM(lensInfoAvailableFocalLengths));

static const uint8_t lensFacing = ANDROID_LENS_FACING_FRONT;

cm.update(ANDROID_LENS_FACING, &lensFacing, 1);

const int32_t sensorInfoPixelArraySize[] = {

(int32_t)sensorRes.width,

(int32_t)sensorRes.height

};

cm.update(ANDROID_SENSOR_INFO_PIXEL_ARRAY_SIZE, sensorInfoPixelArraySize, NELEM(sensorInfoPixelArraySize));

const int32_t sensorInfoActiveArraySize[] = {

0, 0,

(int32_t)sensorRes.width, (int32_t)sensorRes.height

};

cm.update(ANDROID_SENSOR_INFO_ACTIVE_ARRAY_SIZE, sensorInfoActiveArraySize, NELEM(sensorInfoActiveArraySize));

static const int32_t scalerAvailableFormats[] = {

HAL_PIXEL_FORMAT_RGBA_8888, // preview stream

HAL_PIXEL_FORMAT_IMPLEMENTATION_DEFINED, // preview stream

/* Non-preview one, must be last - see following code */

HAL_PIXEL_FORMAT_BLOB // still-capture stream

};

cm.update(ANDROID_SCALER_AVAILABLE_FORMATS, scalerAvailableFormats, NELEM(scalerAvailableFormats));

/* Only for HAL_PIXEL_FORMAT_BLOB */

const size_t mainStreamConfigsCount = resolutions.size();

/* For all other supported pixel formats */

const size_t previewStreamConfigsCount = previewResolutions.size() * (NELEM(scalerAvailableFormats) - 1);

const size_t streamConfigsCount = mainStreamConfigsCount + previewStreamConfigsCount;

int32_t scalerAvailableStreamConfigurations[streamConfigsCount * 4];

int64_t scalerAvailableMinFrameDurations[streamConfigsCount * 4];

int32_t scalerAvailableProcessedSizes[previewResolutions.size() * 2];

int64_t scalerAvailableProcessedMinDurations[previewResolutions.size()];

int32_t scalerAvailableJpegSizes[resolutions.size() * 2];

int64_t scalerAvailableJpegMinDurations[resolutions.size()];

size_t i4 = 0;

size_t i2 = 0;

size_t i1 = 0;

/* Main stream configurations */

for(size_t resId = 0; resId < resolutions.size(); ++resId) {

scalerAvailableStreamConfigurations[i4 + 0] = HAL_PIXEL_FORMAT_BLOB;

scalerAvailableStreamConfigurations[i4 + 1] = (int32_t)resolutions[resId].width;

scalerAvailableStreamConfigurations[i4 + 2] = (int32_t)resolutions[resId].height;

scalerAvailableStreamConfigurations[i4 + 3] = ANDROID_SCALER_AVAILABLE_STREAM_CONFIGURATIONS_OUTPUT;

scalerAvailableMinFrameDurations[i4 + 0] = HAL_PIXEL_FORMAT_BLOB;

scalerAvailableMinFrameDurations[i4 + 1] = (int32_t)resolutions[resId].width;

scalerAvailableMinFrameDurations[i4 + 2] = (int32_t)resolutions[resId].height;

scalerAvailableMinFrameDurations[i4 + 3] = 1000000000 / 30; /* TODO: read from the device */

scalerAvailableJpegSizes[i2 + 0] = (int32_t)resolutions[resId].width;

scalerAvailableJpegSizes[i2 + 1] = (int32_t)resolutions[resId].height;

scalerAvailableJpegMinDurations[i1] = 1000000000 / 30; /* TODO: read from the device */

i4 += 4;

i2 += 2;

i1 += 1;

}

i2 = 0;

i1 = 0;

/* Preview stream configurations */

for(size_t resId = 0; resId < previewResolutions.size(); ++resId) {

for(size_t fmtId = 0; fmtId < NELEM(scalerAvailableFormats) - 1; ++fmtId) {

scalerAvailableStreamConfigurations[i4 + 0] = scalerAvailableFormats[fmtId];

scalerAvailableStreamConfigurations[i4 + 1] = (int32_t)previewResolutions[resId].width;

scalerAvailableStreamConfigurations[i4 + 2] = (int32_t)previewResolutions[resId].height;

scalerAvailableStreamConfigurations[i4 + 3] = ANDROID_SCALER_AVAILABLE_STREAM_CONFIGURATIONS_OUTPUT;

scalerAvailableMinFrameDurations[i4 + 0] = scalerAvailableFormats[fmtId];

scalerAvailableMinFrameDurations[i4 + 1] = (int32_t)previewResolutions[resId].width;

scalerAvailableMinFrameDurations[i4 + 2] = (int32_t)previewResolutions[resId].height;

scalerAvailableMinFrameDurations[i4 + 3] = 1000000000 / 10; /* TODO: read from the device */

i4 += 4;

}

scalerAvailableProcessedSizes[i2 + 0] = (int32_t)previewResolutions[resId].width;

scalerAvailableProcessedSizes[i2 + 1] = (int32_t)previewResolutions[resId].height;

scalerAvailableProcessedMinDurations[i1] = 1000000000 / 10; /* TODO: read from the device */

i2 += 2;

i1 += 1;

}

cm.update(ANDROID_SCALER_AVAILABLE_STREAM_CONFIGURATIONS, scalerAvailableStreamConfigurations, (size_t)NELEM(scalerAvailableStreamConfigurations));

cm.update(ANDROID_SCALER_AVAILABLE_MIN_FRAME_DURATIONS, scalerAvailableMinFrameDurations, (size_t)NELEM(scalerAvailableMinFrameDurations));

/* Probably fake */

cm.update(ANDROID_SCALER_AVAILABLE_STALL_DURATIONS, scalerAvailableMinFrameDurations, (size_t)NELEM(scalerAvailableMinFrameDurations));

cm.update(ANDROID_SCALER_AVAILABLE_JPEG_SIZES, scalerAvailableJpegSizes, (size_t)NELEM(scalerAvailableJpegSizes));

cm.update(ANDROID_SCALER_AVAILABLE_JPEG_MIN_DURATIONS, scalerAvailableJpegMinDurations, (size_t)NELEM(scalerAvailableJpegMinDurations));

cm.update(ANDROID_SCALER_AVAILABLE_PROCESSED_SIZES, scalerAvailableProcessedSizes, (size_t)NELEM(scalerAvailableProcessedSizes));

cm.update(ANDROID_SCALER_AVAILABLE_PROCESSED_MIN_DURATIONS, scalerAvailableProcessedMinDurations, (size_t)NELEM(scalerAvailableProcessedMinDurations));

// Add the capability set; otherwise API2 callers fail when getStreamConfigurationMap looks up REQUEST_AVAILABLE_CAPABILITIES.

Vector<uint8_t> available_capabilities;

available_capabilities.add(ANDROID_REQUEST_AVAILABLE_CAPABILITIES_READ_SENSOR_SETTINGS);

available_capabilities.add(ANDROID_REQUEST_AVAILABLE_CAPABILITIES_PRIVATE_REPROCESSING);

available_capabilities.add(ANDROID_REQUEST_AVAILABLE_CAPABILITIES_YUV_REPROCESSING);

cm.update(ANDROID_REQUEST_AVAILABLE_CAPABILITIES,

available_capabilities.array(),

available_capabilities.size());

/* ~8.25 bit/px (https://en.wikipedia.org/wiki/JPEG#Sample_photographs) */

/* Use 9 bit/px, add buffer info struct size, round up to page size */

mJpegBufferSize = sensorRes.width * sensorRes.height * 9 + sizeof(camera3_jpeg_blob);

mJpegBufferSize = (mJpegBufferSize + PAGE_SIZE - 1u) & ~(PAGE_SIZE - 1u);

const int32_t jpegMaxSize = (int32_t)mJpegBufferSize;

cm.update(ANDROID_JPEG_MAX_SIZE, &jpegMaxSize, 1);

static const int32_t jpegAvailableThumbnailSizes[] = {

0, 0,

320, 240

};

cm.update(ANDROID_JPEG_AVAILABLE_THUMBNAIL_SIZES, jpegAvailableThumbnailSizes, NELEM(jpegAvailableThumbnailSizes));

static const int32_t sensorOrientation = 90;

cm.update(ANDROID_SENSOR_ORIENTATION, &sensorOrientation, 1);

static const uint8_t flashInfoAvailable = ANDROID_FLASH_INFO_AVAILABLE_FALSE;

cm.update(ANDROID_FLASH_INFO_AVAILABLE, &flashInfoAvailable, 1);

static const float scalerAvailableMaxDigitalZoom = 1;

cm.update(ANDROID_SCALER_AVAILABLE_MAX_DIGITAL_ZOOM, &scalerAvailableMaxDigitalZoom, 1);

static const uint8_t statisticsFaceDetectModes[] = {

ANDROID_STATISTICS_FACE_DETECT_MODE_OFF

};

cm.update(ANDROID_STATISTICS_INFO_AVAILABLE_FACE_DETECT_MODES, statisticsFaceDetectModes, NELEM(statisticsFaceDetectModes));

static const int32_t statisticsInfoMaxFaceCount = 0;

cm.update(ANDROID_STATISTICS_INFO_MAX_FACE_COUNT, &statisticsInfoMaxFaceCount, 1);

static const uint8_t controlAvailableSceneModes[] = {

ANDROID_CONTROL_SCENE_MODE_DISABLED

};

cm.update(ANDROID_CONTROL_AVAILABLE_SCENE_MODES, controlAvailableSceneModes, NELEM(controlAvailableSceneModes));

static const uint8_t controlAvailableEffects[] = {

ANDROID_CONTROL_EFFECT_MODE_OFF

};

cm.update(ANDROID_CONTROL_AVAILABLE_EFFECTS, controlAvailableEffects, NELEM(controlAvailableEffects));

static const int32_t controlMaxRegions[] = {

0, /* AE */

0, /* AWB */

0 /* AF */

};

cm.update(ANDROID_CONTROL_MAX_REGIONS, controlMaxRegions, NELEM(controlMaxRegions));

static const uint8_t controlAeAvailableModes[] = {

ANDROID_CONTROL_AE_MODE_OFF

};

cm.update(ANDROID_CONTROL_AE_AVAILABLE_MODES, controlAeAvailableModes, NELEM(controlAeAvailableModes));

static const camera_metadata_rational controlAeCompensationStep = {1, 3};

cm.update(ANDROID_CONTROL_AE_COMPENSATION_STEP, &controlAeCompensationStep, 1);

int32_t controlAeCompensationRange[] = {-9, 9};

cm.update(ANDROID_CONTROL_AE_COMPENSATION_RANGE, controlAeCompensationRange, NELEM(controlAeCompensationRange));

static const int32_t controlAeAvailableTargetFpsRanges[] = {

10, 20

};

cm.update(ANDROID_CONTROL_AE_AVAILABLE_TARGET_FPS_RANGES, controlAeAvailableTargetFpsRanges, NELEM(controlAeAvailableTargetFpsRanges));

static const uint8_t controlAeAvailableAntibandingModes[] = {

ANDROID_CONTROL_AE_ANTIBANDING_MODE_OFF

};

cm.update(ANDROID_CONTROL_AE_AVAILABLE_ANTIBANDING_MODES, controlAeAvailableAntibandingModes, NELEM(controlAeAvailableAntibandingModes));

static const uint8_t controlAwbAvailableModes[] = {

ANDROID_CONTROL_AWB_MODE_AUTO,

ANDROID_CONTROL_AWB_MODE_OFF

};

cm.update(ANDROID_CONTROL_AWB_AVAILABLE_MODES, controlAwbAvailableModes, NELEM(controlAwbAvailableModes));

static const uint8_t controlAfAvailableModes[] = {

ANDROID_CONTROL_AF_MODE_OFF

};

cm.update(ANDROID_CONTROL_AF_AVAILABLE_MODES, controlAfAvailableModes, NELEM(controlAfAvailableModes));

static const uint8_t controlAvailableVideoStabilizationModes[] = {

ANDROID_CONTROL_VIDEO_STABILIZATION_MODE_OFF

};

cm.update(ANDROID_CONTROL_AVAILABLE_VIDEO_STABILIZATION_MODES, controlAvailableVideoStabilizationModes, NELEM(controlAvailableVideoStabilizationModes));

const uint8_t infoSupportedHardwareLevel = ANDROID_INFO_SUPPORTED_HARDWARE_LEVEL_LIMITED;

cm.update(ANDROID_INFO_SUPPORTED_HARDWARE_LEVEL, &infoSupportedHardwareLevel, 1);

/***********************************\

|* END OF CAMERA CHARACTERISTICS *|

\***********************************/

mStaticCharacteristics = cm.release();

return mStaticCharacteristics;

}

int Camera::initialize(const camera3_callback_ops_t *callbackOps) {

DBGUTILS_AUTOLOGCALL(__func__);

Mutex::Autolock lock(mMutex);

mCallbackOps = callbackOps;

return NO_ERROR;

}

const camera_metadata_t * Camera::constructDefaultRequestSettings(int type) {

DBGUTILS_AUTOLOGCALL(__func__);

Mutex::Autolock lock(mMutex);

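/* Reject template types outside the cache; this assumes mDefaultRequestSettings has CAMERA3_TEMPLATE_COUNT entries */
if(type < 0 || type >= CAMERA3_TEMPLATE_COUNT) {
    ALOGI("constructDefaultRequestSettings: invalid template type %d", type);
    return NULL;
}

if(mDefaultRequestSettings[type]) {
    return mDefaultRequestSettings[type];
}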

CameraMetadata cm;

static const int32_t requestId = 0;

cm.update(ANDROID_REQUEST_ID, &requestId, 1);

static const float lensFocusDistance = 0.0f;

cm.update(ANDROID_LENS_FOCUS_DISTANCE, &lensFocusDistance, 1);

auto sensorSize = mDev->sensorResolution();

const int32_t scalerCropRegion[] = {

0, 0,

(int32_t)sensorSize.width, (int32_t)sensorSize.height

};

cm.update(ANDROID_SCALER_CROP_REGION, scalerCropRegion, NELEM(scalerCropRegion));

static const int32_t jpegThumbnailSize[] = {

0, 0

};

cm.update(ANDROID_JPEG_THUMBNAIL_SIZE, jpegThumbnailSize, NELEM(jpegThumbnailSize));

static const uint8_t jpegThumbnailQuality = 50;

cm.update(ANDROID_JPEG_THUMBNAIL_QUALITY, &jpegThumbnailQuality, 1);

static const double jpegGpsCoordinates[] = {

0, 0

};

cm.update(ANDROID_JPEG_GPS_COORDINATES, jpegGpsCoordinates, NELEM(jpegGpsCoordinates));

static const uint8_t jpegGpsProcessingMethod[32] = "None";

cm.update(ANDROID_JPEG_GPS_PROCESSING_METHOD, jpegGpsProcessingMethod, NELEM(jpegGpsProcessingMethod));

static const int64_t jpegGpsTimestamp = 0;

cm.update(ANDROID_JPEG_GPS_TIMESTAMP, &jpegGpsTimestamp, 1);

static const int32_t jpegOrientation = 0;

cm.update(ANDROID_JPEG_ORIENTATION, &jpegOrientation, 1);

/** android.stats */

static const uint8_t statisticsFaceDetectMode = ANDROID_STATISTICS_FACE_DETECT_MODE_OFF;

cm.update(ANDROID_STATISTICS_FACE_DETECT_MODE, &statisticsFaceDetectMode, 1);

static const uint8_t statisticsHistogramMode = ANDROID_STATISTICS_HISTOGRAM_MODE_OFF;

cm.update(ANDROID_STATISTICS_HISTOGRAM_MODE, &statisticsHistogramMode, 1);

static const uint8_t statisticsSharpnessMapMode = ANDROID_STATISTICS_SHARPNESS_MAP_MODE_OFF;

cm.update(ANDROID_STATISTICS_SHARPNESS_MAP_MODE, &statisticsSharpnessMapMode, 1);

uint8_t controlCaptureIntent = 0;

switch (type) {

case CAMERA3_TEMPLATE_PREVIEW: controlCaptureIntent = ANDROID_CONTROL_CAPTURE_INTENT_PREVIEW; break;

case CAMERA3_TEMPLATE_STILL_CAPTURE: controlCaptureIntent = ANDROID_CONTROL_CAPTURE_INTENT_STILL_CAPTURE; break;

case CAMERA3_TEMPLATE_VIDEO_RECORD: controlCaptureIntent = ANDROID_CONTROL_CAPTURE_INTENT_VIDEO_RECORD; break;

case CAMERA3_TEMPLATE_VIDEO_SNAPSHOT: controlCaptureIntent = ANDROID_CONTROL_CAPTURE_INTENT_VIDEO_SNAPSHOT; break;

case CAMERA3_TEMPLATE_ZERO_SHUTTER_LAG: controlCaptureIntent = ANDROID_CONTROL_CAPTURE_INTENT_ZERO_SHUTTER_LAG; break;

default: controlCaptureIntent = ANDROID_CONTROL_CAPTURE_INTENT_CUSTOM; break;

}

cm.update(ANDROID_CONTROL_CAPTURE_INTENT, &controlCaptureIntent, 1);

static const uint8_t controlMode = ANDROID_CONTROL_MODE_OFF;

cm.update(ANDROID_CONTROL_MODE, &controlMode, 1);

static const uint8_t controlEffectMode = ANDROID_CONTROL_EFFECT_MODE_OFF;

cm.update(ANDROID_CONTROL_EFFECT_MODE, &controlEffectMode, 1);

static const uint8_t controlSceneMode = ANDROID_CONTROL_SCENE_MODE_FACE_PRIORITY;

cm.update(ANDROID_CONTROL_SCENE_MODE, &controlSceneMode, 1);

static const uint8_t controlAeMode = ANDROID_CONTROL_AE_MODE_OFF;

cm.update(ANDROID_CONTROL_AE_MODE, &controlAeMode, 1);

static const uint8_t controlAeLock = ANDROID_CONTROL_AE_LOCK_OFF;

cm.update(ANDROID_CONTROL_AE_LOCK, &controlAeLock, 1);

static const int32_t controlAeRegions[] = {

0, 0,

(int32_t)sensorSize.width, (int32_t)sensorSize.height,

1000

};

cm.update(ANDROID_CONTROL_AE_REGIONS, controlAeRegions, NELEM(controlAeRegions));

cm.update(ANDROID_CONTROL_AWB_REGIONS, controlAeRegions, NELEM(controlAeRegions));

cm.update(ANDROID_CONTROL_AF_REGIONS, controlAeRegions, NELEM(controlAeRegions));

static const int32_t controlAeExposureCompensation = 0;

cm.update(ANDROID_CONTROL_AE_EXPOSURE_COMPENSATION, &controlAeExposureCompensation, 1);

static const int32_t controlAeTargetFpsRange[] = {

10, 20

};

cm.update(ANDROID_CONTROL_AE_TARGET_FPS_RANGE, controlAeTargetFpsRange, NELEM(controlAeTargetFpsRange));

static const uint8_t controlAeAntibandingMode = ANDROID_CONTROL_AE_ANTIBANDING_MODE_OFF;

cm.update(ANDROID_CONTROL_AE_ANTIBANDING_MODE, &controlAeAntibandingMode, 1);

static const uint8_t controlAwbMode = ANDROID_CONTROL_AWB_MODE_OFF;

cm.update(ANDROID_CONTROL_AWB_MODE, &controlAwbMode, 1);

static const uint8_t controlAwbLock = ANDROID_CONTROL_AWB_LOCK_OFF;

cm.update(ANDROID_CONTROL_AWB_LOCK, &controlAwbLock, 1);

uint8_t controlAfMode = ANDROID_CONTROL_AF_MODE_OFF;

cm.update(ANDROID_CONTROL_AF_MODE, &controlAfMode, 1);

static const uint8_t controlAeState = ANDROID_CONTROL_AE_STATE_CONVERGED;

cm.update(ANDROID_CONTROL_AE_STATE, &controlAeState, 1);

static const uint8_t controlAfState = ANDROID_CONTROL_AF_STATE_INACTIVE;

cm.update(ANDROID_CONTROL_AF_STATE, &controlAfState, 1);

static const uint8_t controlAwbState = ANDROID_CONTROL_AWB_STATE_INACTIVE;

cm.update(ANDROID_CONTROL_AWB_STATE, &controlAwbState, 1);

static const uint8_t controlVideoStabilizationMode = ANDROID_CONTROL_VIDEO_STABILIZATION_MODE_OFF;

cm.update(ANDROID_CONTROL_VIDEO_STABILIZATION_MODE, &controlVideoStabilizationMode, 1);

static const int32_t controlAePrecaptureId = ANDROID_CONTROL_AE_PRECAPTURE_TRIGGER_IDLE;

cm.update(ANDROID_CONTROL_AE_PRECAPTURE_ID, &controlAePrecaptureId, 1);

static const int32_t controlAfTriggerId = 0;

cm.update(ANDROID_CONTROL_AF_TRIGGER_ID, &controlAfTriggerId, 1);

mDefaultRequestSettings[type] = cm.release();

return mDefaultRequestSettings[type];

}

int Camera::configureStreams(camera3_stream_configuration_t *streamList) {

DBGUTILS_AUTOLOGCALL(__func__);

Mutex::Autolock lock(mMutex);

ALOGI("configureStreams");

/* TODO: sanity checks */

ALOGI("+-------------------------------------------------------------------------------");

ALOGI("| STREAMS FROM FRAMEWORK");

ALOGI("+-------------------------------------------------------------------------------");

for(size_t i = 0; i < streamList->num_streams; ++i) {

camera3_stream_t *newStream = streamList->streams[i];

ALOGI("| p=%p fmt=0x%.2x type=%u usage=0x%.8x size=%4ux%-4u buf_no=%u",

newStream,

newStream->format,

newStream->stream_type,

newStream->usage,

newStream->width,

newStream->height,

newStream->max_buffers);

}

ALOGI("+-------------------------------------------------------------------------------");

/* TODO: do we need input stream? */

camera3_stream_t *inStream = NULL;

unsigned width = 0;

unsigned height = 0;

for(size_t i = 0; i < streamList->num_streams; ++i) {

camera3_stream_t *newStream = streamList->streams[i];

/* TODO: validate: null */

if(newStream->stream_type == CAMERA3_STREAM_INPUT || newStream->stream_type == CAMERA3_STREAM_BIDIRECTIONAL) {

if(inStream) {

ALOGI("Only one input/bidirectional stream allowed (previous is %p, this %p)", inStream, newStream);

return BAD_VALUE;

}

inStream = newStream;

}

/* TODO: validate format */

if(newStream->format == HAL_PIXEL_FORMAT_IMPLEMENTATION_DEFINED) {

newStream->format = HAL_PIXEL_FORMAT_RGBA_8888;

}

/* TODO: support ZSL */

if(newStream->usage & GRALLOC_USAGE_HW_CAMERA_ZSL) {

ALOGI("ZSL STREAM FOUND! It is not supported for now.");

ALOGI(" Disable it by placing following line in /system/build.prop:");

ALOGI(" camera.disable_zsl_mode=1");

return BAD_VALUE;

}

switch(newStream->stream_type) {

case CAMERA3_STREAM_OUTPUT: newStream->usage = GRALLOC_USAGE_SW_WRITE_OFTEN; break;

case CAMERA3_STREAM_INPUT: newStream->usage = GRALLOC_USAGE_SW_READ_OFTEN; break;

case CAMERA3_STREAM_BIDIRECTIONAL: newStream->usage = GRALLOC_USAGE_SW_WRITE_OFTEN | GRALLOC_USAGE_SW_READ_OFTEN; break;

}

newStream->max_buffers = 1; /* TODO: support larger queue */

if(newStream->width * newStream->height > width * height) {

width = newStream->width;

height = newStream->height;

}

/* TODO: store stream pointers somewhere and configure only new ones */

}

if(mDev->isNeedsetResolution(width, height))

{

if(mDev->isStreaming())

{

if (!mDev->setStreaming(false))

{

ALOGI("Could not stop streaming");

return NO_INIT;

}

}

if (!mDev->setResolution(width, height))

{

ALOGI("Could not set resolution");

return NO_INIT;

}

}

ALOGI("+-------------------------------------------------------------------------------");

ALOGI("| STREAMS AFTER CHANGES");

ALOGI("+-------------------------------------------------------------------------------");

for(size_t i = 0; i < streamList->num_streams; ++i) {

const camera3_stream_t *newStream = streamList->streams[i];

ALOGI("| p=%p fmt=0x%.2x type=%u usage=0x%.8x size=%4ux%-4u buf_no=%u",

newStream,

newStream->format,

newStream->stream_type,

newStream->usage,

newStream->width,

newStream->height,

newStream->max_buffers);

}

ALOGI("+-------------------------------------------------------------------------------");

if(!mDev->setStreaming(true)) {

ALOGI("Could not start streaming");

return NO_INIT;

}

return NO_ERROR;

}

int Camera::registerStreamBuffers(const camera3_stream_buffer_set_t *bufferSet) {

DBGUTILS_AUTOLOGCALL(__func__);

Mutex::Autolock lock(mMutex);

ALOGI("+-------------------------------------------------------------------------------");

ALOGI("| BUFFERS FOR STREAM %p", bufferSet->stream);

ALOGI("+-------------------------------------------------------------------------------");

for (size_t i = 0; i < bufferSet->num_buffers; ++i) {

ALOGI("| p=%p", bufferSet->buffers[i]);

}

ALOGI("+-------------------------------------------------------------------------------");

return OK;

}

int Camera::processCaptureRequest(camera3_capture_request_t *request) {

assert(request != NULL);

Mutex::Autolock lock(mMutex);

BENCHMARK_HERE(120);

FPSCOUNTER_HERE(120);

CameraMetadata cm;

const V4l2Device::VBuffer *frame = NULL;

auto res = mDev->resolution();

status_t e;

Vector<camera3_stream_buffer> buffers;

auto timestamp = systemTime();

if(request->settings == NULL && mLastRequestSettings.isEmpty()) {

ALOGI("processCaptureRequest error 1, First request does not have metadata, BAD_VALUE is %d", BAD_VALUE);

return BAD_VALUE;

}

if(request->input_buffer) {

/* Ignore input buffer */

/* TODO: do we expect any input buffer? */

request->input_buffer->release_fence = -1;

}

if(!request->settings) {

cm.acquire(mLastRequestSettings);

} else {

cm = request->settings;

}

notifyShutter(request->frame_number, (uint64_t)timestamp);

BENCHMARK_SECTION("Lock/Read") {

frame = mDev->readLock();

}

if(!frame) {

ALOGI("processCaptureRequest error 2, NOT_ENOUGH_DATA is %d", NOT_ENOUGH_DATA);

return NOT_ENOUGH_DATA;

}

buffers.setCapacity(request->num_output_buffers);

uint8_t *rgbaBuffer = NULL;

char aviRecordering[PROPERTY_VALUE_MAX];

for(size_t i = 0; i < request->num_output_buffers; ++i) {

const camera3_stream_buffer &srcBuf = request->output_buffers[i];

uint8_t *buf = NULL;

sp<Fence> acquireFence = new Fence(srcBuf.acquire_fence);

e = acquireFence->wait(1500); /* FIXME: magic number */

if(e == TIMED_OUT) {

ALOGI("processCaptureRequest buffer %p frame %-4u Wait on acquire fence timed out", srcBuf.buffer, request->frame_number);

}

if(e == NO_ERROR) {

const Rect rect((int)srcBuf.stream->width, (int)srcBuf.stream->height);

e = GraphicBufferMapper::get().lock(*srcBuf.buffer, GRALLOC_USAGE_SW_WRITE_OFTEN, rect, (void **)&buf);

if(e != NO_ERROR) {

ALOGI("processCaptureRequest buffer %p frame %-4u lock failed", srcBuf.buffer, request->frame_number);

}

}

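if(e != NO_ERROR) {
    ALOGI("processCaptureRequest error 3, e is %d, errno is %d, acquire_fence is %d", e, errno, srcBuf.acquire_fence);
    /* Buffer i was never locked, so unlock only the buffers locked in earlier iterations */
    for(size_t j = 0; j < i; ++j) {
        GraphicBufferMapper::get().unlock(*request->output_buffers[j].buffer);
    }
    /* Hand the V4L2 frame taken by readLock() back to the device so it is not leaked */
    mDev->unlock(frame);
    return NO_INIT;
}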

switch(srcBuf.stream->format) {

case HAL_PIXEL_FORMAT_RGBA_8888: {

if(!rgbaBuffer) {

BENCHMARK_SECTION("YUV->RGBA") {

/* FIXME: better format detection */

if(frame->pixFmt == V4L2_PIX_FMT_YUYV) {

//mConverter.UYVYToRGBA(frame->buf, buf, res.width, res.height);

libyuv::YUY2ToI422(frame->buf, res.width*2,

mFrameBuffer, res.width,

&mFrameBuffer[res.width*res.height], res.width / 2,

&mFrameBuffer[res.width*res.height + res.width*res.height / 2], res.width / 2,

res.width, res.height);

_AddTimesTamp(mFrameBuffer, res.width, res.height);

libyuv::I422ToABGR(mFrameBuffer, res.width,

&mFrameBuffer[res.width*res.height], res.width / 2,

&mFrameBuffer[res.width*res.height + res.width*res.height / 2], res.width / 2,

buf,res.width*4,

res.width, res.height);

} else if (frame->pixFmt == V4L2_PIX_FMT_MJPEG) {

libyuv::MJPGToI420(frame->buf, frame->len,

rszbuffer, res.width,

&rszbuffer[res.width * res.height], res.width / 2 ,

&rszbuffer[res.width * res.height * 5 / 4 ], res.width / 2 ,

res.width, res.height,

res.width, res.height);

_AddTimesTamp(rszbuffer, res.width, res.height);

libyuv::I420ToABGR(rszbuffer, res.width,

&rszbuffer[res.width * res.height], res.width / 2 ,

&rszbuffer[res.width * res.height * 5 / 4 ], res.width / 2 ,

buf, res.width*4,

res.width, res.height);

// ALOGI("%s, MJPG convert done!", __FUNCTION__);

} else {

;//mConverter.YUY2ToRGBA(frame->buf, buf, res.width, res.height);

}

rgbaBuffer = buf;

}

} else {

BENCHMARK_SECTION("Buf Copy") {

memcpy(buf, rgbaBuffer, srcBuf.stream->width * srcBuf.stream->height * 4);

}

}

break;

}

case HAL_PIXEL_FORMAT_BLOB: {

BENCHMARK_SECTION("YUV->JPEG") {

const size_t maxImageSize = mJpegBufferSize - sizeof(camera3_jpeg_blob);

uint8_t jpegQuality = 95;

if(cm.exists(ANDROID_JPEG_QUALITY)) {

jpegQuality = *cm.find(ANDROID_JPEG_QUALITY).data.u8;

}

ALOGI("JPEG quality = %u", jpegQuality);

/* FIXME: better format detection */

uint8_t *bufEnd = NULL;

if(frame->pixFmt == V4L2_PIX_FMT_UYVY)

{

ALOGI("processCaptureRequest HAL_PIXEL_FORMAT_BLOB frame->pixFmt == V4L2_PIX_FMT_UYVY");

// bufEnd = mConverter.UYVYToJPEG(frame->buf, buf, res.width, res.height, maxImageSize, jpegQuality);

}

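else if (frame->pixFmt == V4L2_PIX_FMT_MJPEG) {
    ALOGI("processCaptureRequest HAL_PIXEL_FORMAT_BLOB frame->pixFmt == V4L2_PIX_FMT_MJPEG");
    if((size_t)frame->len > maxImageSize) {
        /* The camera's JPEG does not fit in front of the blob trailer; bufEnd == buf marks "nothing written" */
        bufEnd = buf;
    } else {
        /* The camera already delivers JPEG, so copy it through one page at a time */
        int count = frame->len / mPageSize;
        int mod = frame->len % mPageSize;
        uint8_t *destbuf = buf;
        uint8_t *src = frame->buf; /* renamed so it no longer shadows the outer srcBuf */
        for(int i=0; i<count; ++i)
        {
            memcpy(destbuf, src, mPageSize);
            destbuf += mPageSize;
            src += mPageSize;
        }
        if(mod != 0)
        {
            memcpy(destbuf, src, mod);
        }
        bufEnd = buf + frame->len;
    }
} else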

{

ALOGI("processCaptureRequest HAL_PIXEL_FORMAT_BLOB YUY2ToJPEG");

// bufEnd = mConverter.YUY2ToJPEG(frame->buf, buf, res.width, res.height, maxImageSize, jpegQuality);

}

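/* bufEnd stays NULL when no converter ran, so check it before computing the size */
if(bufEnd != NULL && bufEnd != buf) {
    camera3_jpeg_blob *jpegBlob = reinterpret_cast<camera3_jpeg_blob*>(buf + maxImageSize);
    jpegBlob->jpeg_blob_id = CAMERA3_JPEG_BLOB_ID;
    jpegBlob->jpeg_size = (uint32_t)(bufEnd - buf);
} else {
    ALOGI("%s: no JPEG data written (unsupported source format or image too big)", __FUNCTION__);
}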

}

break;

}

default:

ALOGI("Unknown pixel format %d in buffer %p (stream %p), ignoring", srcBuf.stream->format, srcBuf.buffer, srcBuf.stream);

}

}

/* Unlocking all buffers in separate loop allows to copy data from already processed buffer to not yet processed one */

for(size_t i = 0; i < request->num_output_buffers; ++i) {

const camera3_stream_buffer &srcBuf = request->output_buffers[i];

GraphicBufferMapper::get().unlock(*srcBuf.buffer);

buffers.push_back(srcBuf);

buffers.editTop().acquire_fence = -1;

buffers.editTop().release_fence = -1;

buffers.editTop().status = CAMERA3_BUFFER_STATUS_OK;

}

BENCHMARK_SECTION("Unlock") {

mDev->unlock(frame);

}

int64_t sensorTimestamp = timestamp;

int64_t syncFrameNumber = request->frame_number;

cm.update(ANDROID_SENSOR_TIMESTAMP, &sensorTimestamp, 1);

cm.update(ANDROID_SYNC_FRAME_NUMBER, &syncFrameNumber, 1);

auto result = cm.getAndLock();

processCaptureResult(request->frame_number, result, buffers);

cm.unlock(result);

// Cache the settings for next time

mLastRequestSettings.acquire(cm);

/* Print stats */

char bmOut[1024];

BENCHMARK_STRING(bmOut, sizeof(bmOut), 6);

// ALOGI(" time (avg): %s", bmOut);

// ALOGI("processCaptureRequest no error");

return NO_ERROR;

}

inline void Camera::notifyShutter(uint32_t frameNumber, uint64_t timestamp) {

camera3_notify_msg_t msg;

msg.type = CAMERA3_MSG_SHUTTER;

msg.message.shutter.frame_number = frameNumber;

msg.message.shutter.timestamp = timestamp;

mCallbackOps->notify(mCallbackOps, &msg);

}

void Camera::processCaptureResult(uint32_t frameNumber, const camera_metadata_t *result, const Vector<camera3_stream_buffer> &buffers) {

camera3_capture_result captureResult;

captureResult.frame_number = frameNumber;

captureResult.result = result;

captureResult.num_output_buffers = buffers.size();

captureResult.output_buffers = buffers.array();

captureResult.input_buffer = NULL;

captureResult.partial_result = 0;

mCallbackOps->process_capture_result(mCallbackOps, &captureResult);

}

/******************************************************************************\

STATIC WRAPPERS

\******************************************************************************/

int Camera::sClose(hw_device_t *device) {

/* TODO: check device module */

Camera *thiz = static_cast<Camera *>(reinterpret_cast<camera3_device_t *>(device));

return thiz->closeDevice();

}

int Camera::sInitialize(const camera3_device *device, const camera3_callback_ops_t *callback_ops) {

/* TODO: check pointers */

Camera *thiz = static_cast<Camera *>(const_cast<camera3_device *>(device));

return thiz->initialize(callback_ops);

}

int Camera::sConfigureStreams(const camera3_device *device, camera3_stream_configuration_t *stream_list) {

/* TODO: check pointers */

Camera *thiz = static_cast<Camera *>(const_cast<camera3_device *>(device));

return thiz->configureStreams(stream_list);

}

int Camera::sRegisterStreamBuffers(const camera3_device *device, const camera3_stream_buffer_set_t *buffer_set) {

/* TODO: check pointers */

Camera *thiz = static_cast<Camera *>(const_cast<camera3_device *>(device));

return thiz->registerStreamBuffers(buffer_set);

}

const camera_metadata_t * Camera::sConstructDefaultRequestSettings(const camera3_device *device, int type) {

/* TODO: check pointers */

Camera *thiz = static_cast<Camera *>(const_cast<camera3_device *>(device));

return thiz->constructDefaultRequestSettings(type);

}

int Camera::sProcessCaptureRequest(const camera3_device *device, camera3_capture_request_t *request) {

/* TODO: check pointers */

Camera *thiz = static_cast<Camera *>(const_cast<camera3_device *>(device));

return thiz->processCaptureRequest(request);

}

void Camera::sGetMetadataVendorTagOps(const camera3_device *device, vendor_tag_query_ops_t *ops) {

/* TODO: implement */

}

void Camera::sDump(const camera3_device *device, int fd) {

/* TODO: implement */

}

int Camera::sFlush(const camera3_device *device) {

/* TODO: implement */

return NO_ERROR;//-ENODEV;

}

camera3_device_ops_t Camera::sOps = {

.initialize = Camera::sInitialize,

.configure_streams = Camera::sConfigureStreams,

.register_stream_buffers = Camera::sRegisterStreamBuffers,

.construct_default_request_settings = Camera::sConstructDefaultRequestSettings,

.process_capture_request = Camera::sProcessCaptureRequest,

.get_metadata_vendor_tag_ops = Camera::sGetMetadataVendorTagOps,

.dump = Camera::sDump,

.flush = Camera::sFlush,

.reserved = {0}

};

}; /* namespace android */
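The HAL_PIXEL_FORMAT_BLOB branch of processCaptureRequest above puts the compressed image at the start of the buffer and a small trailer at the very end, at offset mJpegBufferSize - sizeof(camera3_jpeg_blob). The following is a minimal standalone sketch of that layout, not the HAL code itself. JpegBlob and kJpegBlobId are hypothetical stand-ins that I assume mirror camera3_jpeg_blob and CAMERA3_JPEG_BLOB_ID from camera3.h, and writeJpegBlob and readJpegSize are helper names invented for this illustration.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <vector>

// Hypothetical stand-in for camera3_jpeg_blob (layout assumed, not copied from camera3.h).
struct JpegBlob {
    uint16_t jpeg_blob_id;
    uint32_t jpeg_size;
};

// Assumed to match CAMERA3_JPEG_BLOB_ID.
constexpr uint16_t kJpegBlobId = 0x00FF;

// Copies `len` bytes of compressed JPEG to the start of `dst` (capacity `dstSize`)
// and writes the trailer into the last sizeof(JpegBlob) bytes.
// Returns false and leaves `dst` untouched if the image does not fit.
bool writeJpegBlob(uint8_t *dst, size_t dstSize, const uint8_t *jpeg, size_t len) {
    if (dst == nullptr || jpeg == nullptr || dstSize < sizeof(JpegBlob)) return false;
    const size_t maxImageSize = dstSize - sizeof(JpegBlob);
    if (len == 0 || len > maxImageSize) return false;

    std::memcpy(dst, jpeg, len);

    JpegBlob blob{};
    blob.jpeg_blob_id = kJpegBlobId;
    blob.jpeg_size = static_cast<uint32_t>(len);
    // memcpy instead of a pointer cast, so an unaligned trailer position is harmless.
    std::memcpy(dst + maxImageSize, &blob, sizeof(blob));
    return true;
}

// Reads the trailer back; returns 0 if the blob id does not match.
uint32_t readJpegSize(const uint8_t *buf, size_t bufSize) {
    assert(buf != nullptr && bufSize >= sizeof(JpegBlob));
    JpegBlob blob{};
    std::memcpy(&blob, buf + bufSize - sizeof(JpegBlob), sizeof(blob));
    return blob.jpeg_blob_id == kJpegBlobId ? blob.jpeg_size : 0;
}
```

The point of the size check is that the image and the trailer share one buffer: an oversized frame from the camera would otherwise overwrite the trailer or run past the end of the buffer.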

Note that the Camera() constructor in Camera.cpp sets common.version = CAMERA_DEVICE_API_VERSION_3_2, and cameraInfo sets info->device_version = CAMERA_DEVICE_API_VERSION_3_2. Together these declare our HAL as version 3.2.
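To make the version value less opaque, here is a small sketch of how I understand the device API version to be packed: major number in the high byte, minor number in the low byte, so 3.2 becomes 0x0302. The function names are hypothetical, and makeApiVersion is only assumed to mirror the HARDWARE_MAKE_API_VERSION macro in hardware/hardware.h; check the header in your own tree before relying on it.

```cpp
#include <cassert>
#include <cstdint>

// Assumed to mirror HARDWARE_MAKE_API_VERSION(maj, min): major in bits 8..15, minor in bits 0..7.
constexpr uint32_t makeApiVersion(uint32_t major, uint32_t minor) {
    return ((major & 0xffu) << 8) | (minor & 0xffu);
}

// Hypothetical helpers that unpack the two fields again.
constexpr uint32_t apiMajor(uint32_t version) { return (version >> 8) & 0xffu; }
constexpr uint32_t apiMinor(uint32_t version) { return version & 0xffu; }

// Hypothetical check: a device is treated as HAL3 when its major version is 3.
constexpr bool isHal3(uint32_t version) { return apiMajor(version) == 3; }
```

Under this assumption, makeApiVersion(3, 2) yields the value that both common.version and info->device_version should carry, and the two fields need to agree.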

In HalModule.cpp, get_camera_info points to HalModule::getCameraInfo, which calls Camera::cameraInfo, which in turn obtains the USB camera's properties through staticCharacteristics. An app can therefore read the USB camera's properties through getCameraInfo. The supported preview or capture sizes can also be queried like this:

// mCameraCharacteristics is assumed to be a field filled in earlier, for example
// from CameraManager.getCameraCharacteristics(cameraId); a local variable left
// uninitialized here would not compile.
public List<Size> getSupportedPreviewSizes() {
    StreamConfigurationMap configMap;
    try {
        configMap = mCameraCharacteristics.get(
                CameraCharacteristics.SCALER_STREAM_CONFIGURATION_MAP);
    } catch (Exception ex) {
        return new ArrayList<>(0);
    }
    if (configMap == null) {
        return new ArrayList<>(0);
    }
    ArrayList<Size> supportedPreviewSizes = new ArrayList<>();
    for (android.util.Size androidSize : configMap.getOutputSizes(SurfaceTexture.class)) {
        supportedPreviewSizes.add(new Size(androidSize));
    }
    return supportedPreviewSizes;
}

My usbcamera works with both api1 and api2. For api2 clients, however, camera_metadata_t *mStaticCharacteristics must be populated according to the api2 specification, with no required entry missing. Otherwise the app may fail when it calls getStreamConfigurationMap, because a value it expects is absent.

For example, at first I had not set ANDROID_REQUEST_AVAILABLE_CAPABILITIES in staticCharacteristics. When the app then called getStreamConfigurationMap through the api2 interface, it failed because REQUEST_AVAILABLE_CAPABILITIES could not be found. To see which entries api2 needs, refer to getStreamConfigurationMap() in frameworks\base\core\java\android\hardware\camera2\impl\CameraMetadataNative.java. Every entry that function reads must be provided.
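One way to catch this kind of omission before an app does is to compare what the HAL publishes against a checklist. Below is a minimal standalone sketch of that idea, with the keys modeled as plain strings. missingKeys is a hypothetical helper, and the key list in the usage note is only illustrative; the real list has to be taken from getStreamConfigurationMap(). Inside the HAL, the same check could be written with CameraMetadata::exists(tag), which Camera.cpp already uses for ANDROID_JPEG_QUALITY.

```cpp
#include <cassert>
#include <set>
#include <string>
#include <vector>

// Returns every name in `required` that is absent from `published`,
// in the order the names appear in `required`.
std::vector<std::string> missingKeys(const std::set<std::string> &published,
                                     const std::vector<std::string> &required) {
    std::vector<std::string> missing;
    for (const auto &key : required) {
        if (published.count(key) == 0) {
            missing.push_back(key);
        }
    }
    return missing;
}
```

For instance, if `published` holds only the stream configuration keys and `required` also lists "REQUEST_AVAILABLE_CAPABILITIES", the function returns a vector containing exactly that name, which is the situation I ran into.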

To fix the missing REQUEST_AVAILABLE_CAPABILITIES error, I added the following code to staticCharacteristics:

Vector<uint8_t> available_capabilities;
available_capabilities.add(ANDROID_REQUEST_AVAILABLE_CAPABILITIES_READ_SENSOR_SETTINGS);
available_capabilities.add(ANDROID_REQUEST_AVAILABLE_CAPABILITIES_PRIVATE_REPROCESSING);
available_capabilities.add(ANDROID_REQUEST_AVAILABLE_CAPABILITIES_YUV_REPROCESSING);
cm.update(ANDROID_REQUEST_AVAILABLE_CAPABILITIES,
        available_capabilities.array(),
        available_capabilities.size());

That completes the USB camera HAL. A virtual camera is built the same way; the only difference is the processCaptureRequest function in Camera.cpp. Our usbcamera is a real camera that captures a live scene, so it reads frames through the standard V4L2 interface and fills the output buffers as the camera HAL specification requires. A virtual camera has no physical sensor: its frames come from a video recorded in advance and fed into the buffers, so processCaptureRequest has to be modified for it.
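Whichever source the frames come from, the conversion step in processCaptureRequest depends on getting the planar YUV offsets right: the YUYV path unpacks into I422 with the V plane at width*height + width*height/2, and the MJPEG path decodes into I420 with the V plane at width*height*5/4. The sketch below computes those offsets in one place. PlaneLayout, i422Layout and i420Layout are hypothetical names for this illustration and are not part of libyuv or the HAL.

```cpp
#include <cassert>
#include <cstddef>

// Byte offsets and strides of the three planes inside one contiguous buffer.
struct PlaneLayout {
    size_t yOffset;
    size_t uOffset;
    size_t vOffset;
    size_t yStride;
    size_t uvStride;
    size_t totalSize;
};

// I422: chroma is halved horizontally only, so each chroma plane is (width/2) * height bytes.
PlaneLayout i422Layout(size_t width, size_t height) {
    assert(width % 2 == 0);
    const size_t ySize = width * height;
    const size_t cSize = (width / 2) * height;
    return {0, ySize, ySize + cSize, width, width / 2, ySize + 2 * cSize};
}

// I420: chroma is halved in both directions, so each chroma plane is (width/2) * (height/2) bytes.
PlaneLayout i420Layout(size_t width, size_t height) {
    assert(width % 2 == 0 && height % 2 == 0);
    const size_t ySize = width * height;
    const size_t cSize = (width / 2) * (height / 2);
    return {0, ySize, ySize + cSize, width, width / 2, ySize + 2 * cSize};
}
```

totalSize is also the minimum size the scratch buffers (mFrameBuffer and rszbuffer in the listing) need for a given resolution, which is worth checking whenever the resolution changes.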

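For a virtual camera, processCaptureRequest only needs to read frames from the video in a loop at the frame rate set by the app, convert them to ABGR, and pass them to processCaptureResult, which returns them to the upper layers through the mCallbackOps->process_capture_result callback.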
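The "read in a loop at the app's frame rate" part comes down to deciding which frame of the clip to serve for each request. Here is a minimal sketch of that decision, assuming the clip has already been decoded into a fixed number of frames and that the HAL knows how long it has been streaming. frameIndexAt is a hypothetical helper, not an existing HAL function.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>

// Index of the clip frame to serve `elapsedNs` nanoseconds after streaming started,
// for a clip of `frameCount` frames played back at `fps`, wrapping to the first
// frame once the end of the clip is reached.
size_t frameIndexAt(int64_t elapsedNs, int32_t fps, size_t frameCount) {
    assert(elapsedNs >= 0 && fps > 0 && frameCount > 0);
    // Number of whole frame periods that have elapsed so far.
    const int64_t played = (elapsedNs * fps) / 1000000000LL;
    return static_cast<size_t>(played % static_cast<int64_t>(frameCount));
}
```

In processCaptureRequest, the elapsed time could be derived from the systemTime() timestamp that is already taken for notifyShutter, and fps from the ANDROID_CONTROL_AE_TARGET_FPS_RANGE value in the request settings. Selecting frames by time rather than by a simple counter keeps playback speed steady even if requests arrive unevenly.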