X: image of a handwritten digit

Y: the digit value

Goal: recognize the digit in the image

MODEL:

logits = X*w + b

Y_predicted = softmax(logits)

loss = cross_entropy(Y, Y_predicted)
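To make the last two lines of the model concrete, here is a minimal pure-Python sketch of softmax and cross entropy for a single example. The three logits and the one-hot label are made-up numbers chosen for illustration, not MNIST data, and the helper names are only for this sketch.

```python
import math

def softmax(logits):
    """Turn a list of raw scores into probabilities that sum to 1."""
    # Subtracting the max keeps exp() from overflowing; the result is unchanged.
    shift = max(logits)
    exps = [math.exp(z - shift) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(y_true, y_pred):
    """Cross entropy between a one-hot label and predicted probabilities."""
    return -sum(t * math.log(p) for t, p in zip(y_true, y_pred) if t > 0)

logits = [2.0, 1.0, 0.1]   # made-up scores for 3 classes
label = [1.0, 0.0, 0.0]    # one-hot: the true class is 0
probs = softmax(logits)
loss = cross_entropy(label, probs)
print(probs, loss)
```

With a one-hot label the loss reduces to the negative log of the probability assigned to the true class, so it shrinks as the model grows more confident in the right answer.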

Dataset download URL:

http://yann.lecun.com/exdb/mnist/
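The files on that page are gzip-compressed IDX files: a big-endian header (a magic number followed by one 32-bit size per dimension) and then the raw bytes. The sketch below parses such a header from a hand-built byte string, so it does not touch the network or the real files. `parse_idx_header` and `fake_header` are hypothetical names for this illustration; the real files would first need to be decompressed with `gzip`.

```python
import struct

def parse_idx_header(data):
    """Return (magic, dims) read from the first bytes of an IDX file."""
    # The low byte of the magic number is the number of dimensions.
    magic, = struct.unpack(">I", data[:4])
    n_dims = magic & 0xFF
    # Each dimension size is a big-endian unsigned 32-bit integer.
    dims = struct.unpack(">" + "I" * n_dims, data[4:4 + 4 * n_dims])
    return magic, dims

# Hand-built header shaped like the training image file:
# magic 2051, then 60000 images of 28 x 28 pixels.
fake_header = struct.pack(">IIII", 2051, 60000, 28, 28)
magic, dims = parse_idx_header(fake_header)
print(magic, dims)
```

Label files use the magic number 2049 and have a single dimension, the number of labels.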

Code:

input_data.py

# Copyright 2015 The TensorFlow Authors. All Rights Reserved.
#
# Licensed under the Apache License, Version 2.0 (the "License");
# you may not use this file except in compliance with the License.
# You may obtain a copy of the License at
#
#     http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.
# ==============================================================================
"""Functions for downloading and reading MNIST data."""
from __future__ import absolute_import
from __future__ import division
from __future__ import print_function

import gzip
import os
import tempfile

import numpy
from six.moves import urllib
from six.moves import xrange  # pylint: disable=redefined-builtin
import tensorflow as tf
from tensorflow.contrib.learn.python.learn.datasets.mnist import read_data_sets

mnist.py

import time

import tensorflow as tf

import input_data

#define parameters for the model

learning_rate = 0.01

batch_size = 128

n_epochs = 30

#step 1:read in data

#using TF Learn's built-in function to load MNIST data into the folder data/

mnist = input_data.read_data_sets('data/',one_hot = True)

#step 2: create placeholders for features and labels

#each image in the MNIST data is of shape 28*28 = 784

#therefore, each image is represented with a 1x784 tensor

#there are 10 classes for each image, corresponding to digits 0-9

#each label is a one-hot vector

X = tf.placeholder(tf.float32, [batch_size, 784], name = 'X_placeholder')

Y = tf.placeholder(tf.float32, [batch_size, 10], name = 'Y_placeholder')

#step 3: create weights and bias

#w is initialized to random values drawn from a normal distribution with mean 0, stddev 0.01

#b is initialized to 0

#shape of w depends on the dimensions of X and Y so that Y = tf.matmul(X, w)

#shape of b depends on Y

w = tf.Variable(tf.random_normal(shape=[784, 10], stddev=0.01), name='weights')

b = tf.Variable(tf.zeros([1,10]), name="bias")

#step 4:build model

#the model that returns the logits

#this logits will be later passed through softmax layer

logits = tf.matmul(X,w)+b

#step 5:define loss function

#use cross entropy of softmax of logits as the loss function

entropy = tf.nn.softmax_cross_entropy_with_logits(labels=Y, logits=logits, name='loss')

loss = tf.reduce_mean(entropy)#computes the mean over all the examples in the batch

#step 6: define training op

#using gradient descent with learning rate of 0.01 to minimize loss

optimizer = tf.train.GradientDescentOptimizer(learning_rate).minimize(loss)

with tf.Session() as sess:

#to visualize using TensorBoard

writer = tf.summary.FileWriter('./my_graph/03/logistic_reg', sess.graph)

start_time = time.time()

sess.run(tf.global_variables_initializer())

n_batches = int(mnist.train.num_examples/batch_size)

for i in range(n_epochs):#train the model n_epochs times

total_loss = 0

for _ in range(n_batches):

X_batch, Y_batch = mnist.train.next_batch(batch_size)

_, loss_batch = sess.run([optimizer, loss], feed_dict={X:X_batch, Y:Y_batch})

total_loss += loss_batch

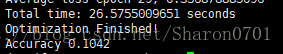

print 'Average loss epoch {0}; {1}'.format(i, total_loss/n_batches)

print 'Total time: {0} seconds'.format(time.time() - start_time)

print ('Optimization Finished!')

#test the model

n_batches = int(mnist.test.num_example/batch_size)

total_correct_preds = 0

for i in range(n_batches):

x_batch, Y_batch = mnist.test.next_batch(batch_size)

_,loss_batch, logits_batch = sess.run([optimizer, loss, logits], feed_dict={X: X_batch, Y:Y_batch})

preds = tf.nn.softmax(logits_batch)

correct_preds = tf.equal(tf.argmax(preds, 1), tf.argmax(Y_batch, 1))

accuracy = tf.reduce_sum(tf.cast(correct_preds, tf.float32))

total_correct_preds += sess.run(accuracy)

print 'Accuracy {0}'.format(total_correct_preds/mnist.test.num_examples)

writer.close()
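To show what the training op and the accuracy count do numerically, here is a rough pure-Python sketch of mini-batch gradient descent on softmax regression, followed by an argmax-based accuracy check. It is not how TensorFlow implements `GradientDescentOptimizer`. The toy batch (2 features, 2 classes), the learning rate of 0.5, and the names `predict`, `sgd_step`, and `argmax` are all assumptions made for this illustration.

```python
import math

def softmax(logits):
    shift = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - shift) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def predict(x, w, b):
    """softmax(x*w + b) for one example; w has shape n_features x n_classes."""
    logits = [sum(x[i] * w[i][k] for i in range(len(x))) + b[k]
              for k in range(len(b))]
    return softmax(logits)

def sgd_step(batch, w, b, learning_rate):
    """One gradient descent update on the mean cross-entropy loss.

    Updates w and b in place and returns the loss measured before the update.
    """
    n = len(batch)
    grad_w = [[0.0] * len(b) for _ in w]
    grad_b = [0.0] * len(b)
    loss = 0.0
    for x, y in batch:
        p = predict(x, w, b)
        loss -= sum(t * math.log(q) for t, q in zip(y, p) if t > 0)
        for k in range(len(b)):
            diff = p[k] - y[k]  # gradient of the loss w.r.t. logit k
            grad_b[k] += diff / n
            for i in range(len(x)):
                grad_w[i][k] += x[i] * diff / n
    for i in range(len(w)):
        for k in range(len(b)):
            w[i][k] -= learning_rate * grad_w[i][k]
    for k in range(len(b)):
        b[k] -= learning_rate * grad_b[k]
    return loss / n

def argmax(values):
    return max(range(len(values)), key=lambda k: values[k])

# Toy batch of (features, one-hot label) pairs.
batch = [([1.0, 0.0], [1.0, 0.0]), ([0.0, 1.0], [0.0, 1.0])]
w = [[0.0, 0.0], [0.0, 0.0]]
b = [0.0, 0.0]
losses = [sgd_step(batch, w, b, learning_rate=0.5) for _ in range(20)]

# A prediction is correct when the most probable class matches the label.
correct = sum(argmax(predict(x, w, b)) == argmax(y) for x, y in batch)
print(losses[0], losses[-1], correct)
```

The gradient with respect to each logit is simply the predicted probability minus the one-hot label, which is why softmax and cross entropy are usually paired.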

Results: