Official API

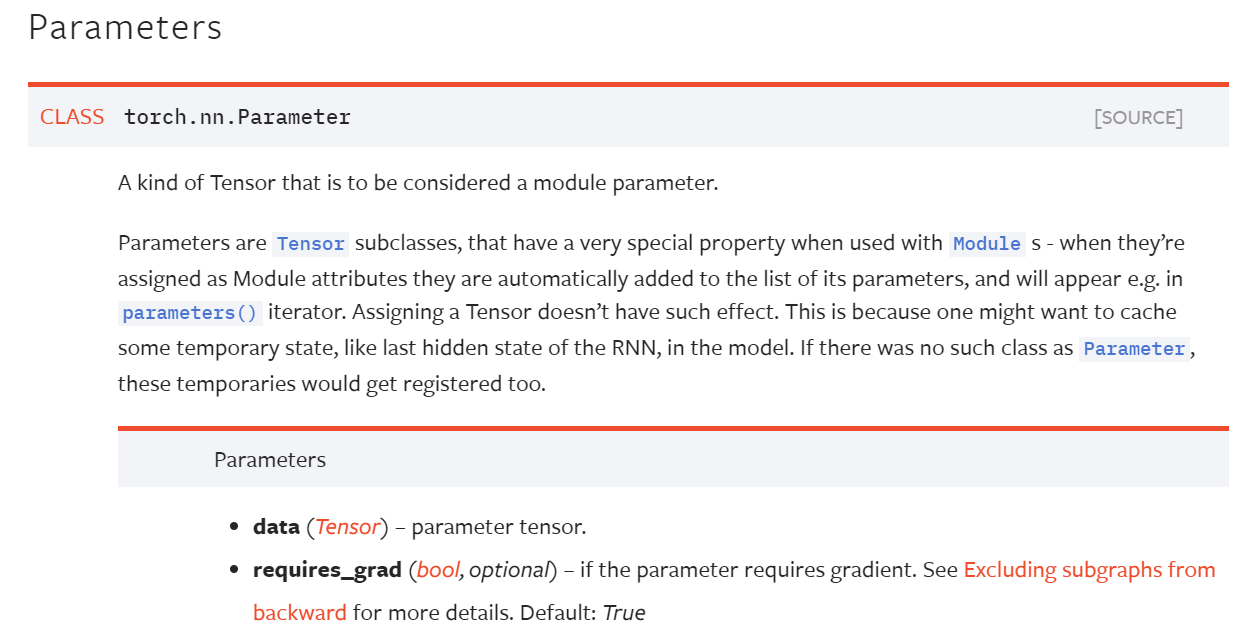

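It turns an ordinary tensor into part of a model's parameters. Parameter is a subclass of Tensor that has a very special property when used with a Module: when a Parameter is assigned as a module attribute, it is automatically added to the module's parameter list and appears in iterators such as parameters(). Assigning a plain tensor has no such effect. The reason is that one may want to cache some temporary state in the model, such as the last hidden state of an RNN. If there were no class like Parameter, these cached states would be registered as well.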
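The registration behavior described above can be checked with a small sketch. The module name `Demo` and the attribute names `weight` and `scratch` are made up for illustration; only `nn.Parameter`, `nn.Module`, and `named_parameters()` come from PyTorch itself.

```python
import torch
import torch.nn as nn


class Demo(nn.Module):  # hypothetical module, for illustration only
    def __init__(self):
        super().__init__()
        # Wrapped in nn.Parameter: registered in the module's parameter list.
        self.weight = nn.Parameter(torch.randn(3, 3))
        # Plain tensor: stored as an ordinary attribute, not registered.
        self.scratch = torch.zeros(3)


demo = Demo()
param_names = [name for name, _ in demo.named_parameters()]
print(param_names)  # ['weight']
```

Only `weight` shows up, so an optimizer built from `demo.parameters()` would never touch `scratch`.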

I will break it down for you. Tensors, as you might know, are multi-dimensional matrices. A Parameter, in its raw form, is a tensor, i.e. a multi-dimensional matrix. In older PyTorch versions it sub-classed the Variable class.

The difference between a Variable and a Parameter comes in when associated with a module. When a Parameter is associated with a module as a model attribute, it gets added to the parameter list automatically and can be accessed using the 'parameters' iterator.

Initially in Torch, a Variable (which could, for example, be an intermediate state) would also get added as a parameter of the model upon assignment. Later on, use cases were identified where variables needed to be cached without being added to the parameter list.

One such case, as mentioned in the documentation, is that of an RNN, where you need to save the last hidden state so you don't have to pass it in again and again. The need to cache a Variable instead of having it automatically registered as a parameter of the model is why we have an explicit way of registering parameters, i.e. the nn.Parameter class.
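As a rough sketch of the RNN case, the module below caches its last hidden state as a plain tensor attribute. The class name `StatefulRNN` and the attribute `last_hidden` are hypothetical; the sketch assumes a single `nn.RNNCell` and a fixed batch size between calls.

```python
import torch
import torch.nn as nn


class StatefulRNN(nn.Module):  # hypothetical wrapper around nn.RNNCell
    def __init__(self, input_size, hidden_size):
        super().__init__()
        self.cell = nn.RNNCell(input_size, hidden_size)
        self.last_hidden = None  # cache, filled on the first forward call

    def forward(self, x):
        if self.last_hidden is None:
            self.last_hidden = torch.zeros(x.size(0), self.cell.hidden_size)
        h = self.cell(x, self.last_hidden)
        # Store a detached copy so the cache does not keep the graph alive.
        self.last_hidden = h.detach()
        return h


torch.manual_seed(0)
rnn = StatefulRNN(4, 8)
out = rnn(torch.randn(2, 4))
names = [name for name, _ in rnn.named_parameters()]
print(names)  # weights and biases of the cell; no 'last_hidden' entry
```

The cached state is reused on the next call, yet it stays out of `parameters()` because it was never wrapped in `nn.Parameter`.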

Recent PyTorch releases just have Tensors; the concept of the Variable has been deprecated.

Parameters are just Tensors tied to the module in which they are defined (usually in the module constructor, the `__init__` method).

They will appear inside module.parameters(). This comes in handy when you build custom modules that learn by updating these parameters through gradient descent.
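To make the "learns through gradient descent" point concrete, here is a minimal sketch of a custom module with one learnable scalar. The module `Scale`, its attribute `factor`, the toy data, and the learning rate are all assumptions chosen for illustration.

```python
import torch
import torch.nn as nn


class Scale(nn.Module):  # hypothetical module that learns one multiplier
    def __init__(self):
        super().__init__()
        self.factor = nn.Parameter(torch.tensor(1.0))

    def forward(self, x):
        return self.factor * x


model = Scale()
# The optimizer receives exactly what module.parameters() yields.
optimizer = torch.optim.SGD(model.parameters(), lr=0.1)

x = torch.tensor([1.0, 2.0, 3.0])
y = 3.0 * x  # toy target: the ideal factor is 3

initial_loss = ((model(x) - y) ** 2).mean().item()
for _ in range(100):
    optimizer.zero_grad()
    loss = ((model(x) - y) ** 2).mean()
    loss.backward()
    optimizer.step()
final_loss = ((model(x) - y) ** 2).mean().item()

print(model.factor.item())  # close to 3.0
```

Because `factor` is a Parameter, it is handed to the optimizer automatically; a plain tensor attribute would have been skipped and never updated.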

Anything that is true for the PyTorch tensors is true for parameters, since they are tensors.

Additionally, if the module is moved to the GPU, its parameters move as well. If the module is saved, its parameters are saved too.

There is a similar concept to model parameters called buffers.

These are named tensors inside the module, but they are not meant to be learned via gradient descent; instead, you can think of them as ordinary variables. You can update your named buffers inside the module's forward() as you like.

Buffers also go to the GPU with the module, and they are saved together with the module.
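A small sketch of how a buffer sits next to a parameter. The module `RunningMean` and the names `bias` and `mean` are hypothetical; `register_buffer`, `named_buffers()`, `state_dict()`, and `to()` are standard `nn.Module` methods. The device is picked at runtime, so on a machine without CUDA everything simply stays on the CPU.

```python
import torch
import torch.nn as nn


class RunningMean(nn.Module):  # hypothetical module with one buffer
    def __init__(self, size):
        super().__init__()
        self.bias = nn.Parameter(torch.zeros(size))      # learned
        self.register_buffer("mean", torch.zeros(size))  # tracked, not learned

    def forward(self, x):
        # Update the buffer by hand; no gradient flows into it.
        with torch.no_grad():
            self.mean.mul_(0.9).add_(0.1 * x.mean(dim=0))
        return x - self.mean + self.bias


m = RunningMean(3)
m(torch.ones(4, 3))

param_names = [name for name, _ in m.named_parameters()]
buffer_names = [name for name, _ in m.named_buffers()]
state_keys = sorted(m.state_dict().keys())
print(param_names, buffer_names, state_keys)

# Moving the module moves parameters and buffers together.
device = "cuda" if torch.cuda.is_available() else "cpu"
m.to(device)
```

The buffer is absent from `named_parameters()`, so an optimizer ignores it, but it is present in `state_dict()` and follows the module across devices.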

Are Parameters limited to being used in `__init__()`? No, but defining them inside the `__init__` method is the most common practice.
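As an illustration of that last point, the sketch below attaches parameters to a module after it has been constructed. The class `Empty` and the names `late_weight` and `late_bias` are made up; attribute assignment and `register_parameter` are the two standard routes.

```python
import torch
import torch.nn as nn


class Empty(nn.Module):  # hypothetical module with no parameters of its own
    pass


module = Empty()

# Route 1: plain attribute assignment outside __init__.
module.late_weight = nn.Parameter(torch.ones(2))
# Route 2: explicit registration by name.
module.register_parameter("late_bias", nn.Parameter(torch.zeros(2)))

names = sorted(name for name, _ in module.named_parameters())
print(names)  # ['late_bias', 'late_weight']
```

One caveat with this pattern: an optimizer created before the late parameters were attached will not know about them, which is one reason defining everything in the constructor is the usual choice.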

https://stackoverflow.com/questions/50935345/understanding-torch-nn-parameter