Visual SLAM Notes: A Basic VO

I. Frame-to-Frame Visual Odometry

The figure illustrates how VO between two consecutive frames works.

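This kind of VO defines two concepts: the reference frame (Reference) and the current frame (Current). Taking the reference frame as the coordinate system, we match features between it and the current frame and estimate the motion between them. Let $T_{rw}$ be the transform of the reference frame with respect to the world frame and $T_{cw}$ that of the current frame. The motion to be estimated, $T_{cr}$, then relates the two by left multiplication:

$$T_{cw} = T_{cr} T_{rw}$$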

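From time $t-1$ to time $t$, we take $t-1$ as the reference and solve for the motion at time $t$. This can be done with feature matching, optical flow, or a direct method. Here we care only about the motion, not the structure.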
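To make the left-multiplication rule concrete, here is a minimal sketch that chains a frame-to-frame estimate onto the reference pose, that is, T_cw = T_cr * T_rw. It uses plain 4x4 homogeneous matrices from the standard library instead of Sophus, and `Mat4`, `compose`, `makePose` and `updatePose` are hypothetical names introduced only for this illustration.

```cpp
#include <array>
#include <cmath>

// A rigid transform stored as a row-major 4x4 homogeneous matrix.
using Mat4 = std::array<std::array<double, 4>, 4>;

// Matrix product a*b: applying the result equals applying b first, then a.
Mat4 compose(const Mat4& a, const Mat4& b) {
    Mat4 c{};  // value-initialised to all zeros
    for (int i = 0; i < 4; ++i)
        for (int j = 0; j < 4; ++j)
            for (int k = 0; k < 4; ++k)
                c[i][j] += a[i][k] * b[k][j];
    return c;
}

// Hypothetical helper: rotation by `yaw` radians about z plus a translation.
Mat4 makePose(double yaw, double tx, double ty, double tz) {
    const double c = std::cos(yaw), s = std::sin(yaw);
    Mat4 T{};
    T[0] = {c, -s, 0.0, tx};
    T[1] = {s, c, 0.0, ty};
    T[2] = {0.0, 0.0, 1.0, tz};
    T[3] = {0.0, 0.0, 0.0, 1.0};
    return T;
}

// Chain the estimated motion onto the reference pose: T_cw = T_cr * T_rw.
Mat4 updatePose(const Mat4& T_cr, const Mat4& T_rw) {
    return compose(T_cr, T_rw);
}
```

For example, if T_rw is a translation of 1 along x and T_cr is a 90 degree rotation about z, the translation part of the resulting T_cw is approximately (0, 1, 0), because the rotation is applied after the reference transform.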

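In the feature-matching approach, what matters most is the feature correspondence between the reference frame and the current frame. The procedure can be summarized as follows: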

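1. For each new current frame, extract keypoints and descriptors.

2. If the system is not initialized, take this frame as the reference frame, compute the 3D positions of the keypoints from the depth map, and go back to step 1.

3. Estimate the motion between the reference frame and the current frame.

4. Check whether this estimate succeeded.

5. If it succeeded, make the current frame the new reference frame and go back to step 1.

6. If it failed, count the number of consecutive lost frames. Once that count exceeds a given threshold, set the VO state to lost and stop the algorithm. Otherwise go back to step 1.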
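The control flow of these steps can be sketched as a small state machine. This is a simplified illustration rather than the class listed later in this post: `FrameToFrameVO` is a hypothetical name, the boolean `estimate_ok` stands in for the motion estimation and its check, and the default threshold of 10 is an assumed value.

```cpp
// States of the odometry, mirroring "not initialized", "tracking" and "lost".
enum class VOState { Initializing, Ok, Lost };

struct FrameToFrameVO {
    VOState state = VOState::Initializing;
    int ref_id = -1;        // id of the current reference frame
    int num_lost = 0;       // consecutive failed estimates
    int max_num_lost = 10;  // assumed threshold, not a value from this post

    // Process one frame; `estimate_ok` stands in for motion estimation plus its check.
    // Returns true when the frame was accepted.
    bool addFrame(int frame_id, bool estimate_ok) {
        switch (state) {
        case VOState::Initializing:
            // The first frame becomes the reference frame.
            ref_id = frame_id;
            state = VOState::Ok;
            return true;
        case VOState::Ok:
            if (estimate_ok) {
                // Success: the current frame becomes the new reference frame.
                ref_id = frame_id;
                num_lost = 0;
                return true;
            }
            // Failure: count it and give up after too many in a row.
            if (++num_lost > max_num_lost) state = VOState::Lost;
            return false;
        case VOState::Lost:
            return false;
        }
        return false;
    }
};
```

Note that a failed frame leaves the reference frame untouched, so the next frame is still matched against the last frame that was tracked successfully.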

II. The Code

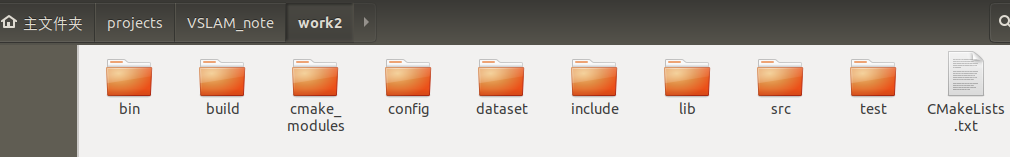

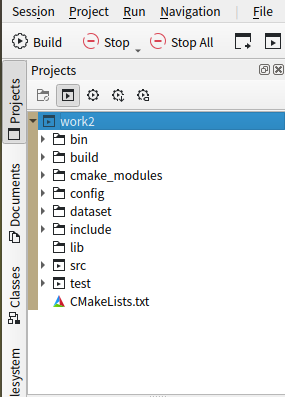

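The file layout is shown in the figures. Create the project and its folders by hand (my project: VSLAM_note/work2). The folders are: bin (executables), build (where cmake and make are run), config (configuration files), dataset (datasets), include/myslam (headers of the SLAM module, *.h), lib (compiled libraries), src (source files, *.cpp), and test (test programs, *.cpp).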

- Contents of VSLAM_note/work2/CMakeLists.txt:

cmake_minimum_required( VERSION 2.8 )

project ( myslam )

set( CMAKE_CXX_COMPILER "g++" )

set( CMAKE_BUILD_TYPE "Release" )

set( CMAKE_CXX_FLAGS "-std=c++11 -march=native -O3" )

list( APPEND CMAKE_MODULE_PATH ${PROJECT_SOURCE_DIR}/cmake_modules )

set( EXECUTABLE_OUTPUT_PATH ${PROJECT_SOURCE_DIR}/bin )

set( LIBRARY_OUTPUT_PATH ${PROJECT_SOURCE_DIR}/lib )

############### dependencies ######################

# Eigen

include_directories( "/usr/include/eigen3" )

# OpenCV

find_package( OpenCV 3.1 REQUIRED )

include_directories( ${OpenCV_INCLUDE_DIRS} )

# Sophus

find_package( Sophus REQUIRED )

include_directories( ${Sophus_INCLUDE_DIRS} )

# G2O

find_package( G2O REQUIRED )

include_directories( ${G2O_INCLUDE_DIRS} )

set( THIRD_PARTY_LIBS

${OpenCV_LIBS}

${Sophus_LIBRARIES}

g2o_core g2o_stuff g2o_types_sba

)

############### source and test ######################

include_directories( ${PROJECT_SOURCE_DIR}/include )

add_subdirectory( src )

add_subdirectory( test )

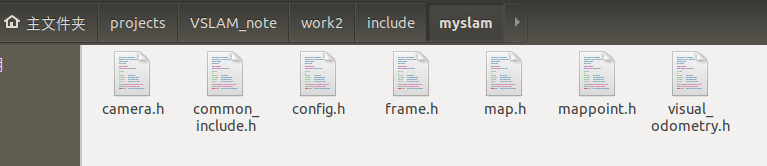

- Files in VSLAM_note/work2/include/myslam:

1. Contents of camera.h

#ifndef CAMERA_H

#define CAMERA_H

#include "myslam/common_include.h"

namespace myslam

{

// Pinhole RGBD camera model

class Camera

{

public:

typedef std::shared_ptr<Camera> Ptr;

float fx_, fy_, cx_, cy_, depth_scale_; // Camera intrinsics

Camera();

Camera ( float fx, float fy, float cx, float cy, float depth_scale=0 ) :

fx_ ( fx ), fy_ ( fy ), cx_ ( cx ), cy_ ( cy ), depth_scale_ ( depth_scale )

{}

// coordinate transform: world, camera, pixel

Vector3d world2camera( const Vector3d& p_w, const SE3& T_c_w );

Vector3d camera2world( const Vector3d& p_c, const SE3& T_c_w );

Vector2d camera2pixel( const Vector3d& p_c );

Vector3d pixel2camera( const Vector2d& p_p, double depth=1 );

Vector3d pixel2world ( const Vector2d& p_p, const SE3& T_c_w, double depth=1 );

Vector2d world2pixel ( const Vector3d& p_w, const SE3& T_c_w );

};

}

#endif // CAMERA_H

2. Contents of common_include.h

#ifndef COMMON_INCLUDE_H

#define COMMON_INCLUDE_H

// define the commonly included file to avoid a long include list

// for Eigen

#include <Eigen/Core>

#include <Eigen/Geometry>

using Eigen::Vector2d;

using Eigen::Vector3d;

// for Sophus

#include <sophus/se3.h>

#include <sophus/so3.h>

using Sophus::SE3;

using Sophus::SO3;

// for cv

#include <opencv2/core/core.hpp>

using cv::Mat;

// std

#include <vector>

#include <list>

#include <memory>

#include <string>

#include <iostream>

#include <set>

#include <unordered_map>

#include <map>

using namespace std;

#endif

3. Contents of config.h

#ifndef CONFIG_H

#define CONFIG_H

#include "myslam/common_include.h"

namespace myslam

{

class Config

{

private:

static std::shared_ptr<Config> config_;

cv::FileStorage file_;

Config () {} // private constructor makes a singleton

public:

~Config(); // close the file on destruction

// set a new config file

static void setParameterFile( const std::string& filename );

// access the parameter values

template< typename T >

static T get( const std::string& key )

{

return T( Config::config_->file_[key] );

}

};

}

#endif // CONFIG_H

4. Contents of frame.h

#ifndef FRAME_H

#define FRAME_H

#include "myslam/common_include.h"

#include "myslam/camera.h"

namespace myslam

{

// forward declare

class MapPoint;

class Frame

{

public:

typedef std::shared_ptr<Frame> Ptr;

unsigned long id_; // id of this frame

double time_stamp_; // when it is recorded

SE3 T_c_w_; // transform from world to camera

Camera::Ptr camera_; // Pinhole RGBD Camera model

Mat color_, depth_; // color and depth image

public: // functions

Frame();

Frame( long id, double time_stamp=0, SE3 T_c_w=SE3(), Camera::Ptr camera=nullptr, Mat color=Mat(), Mat depth=Mat() );

~Frame();

// factory function

static Frame::Ptr createFrame();

// find the depth in depth map

double findDepth( const cv::KeyPoint& kp );

// Get Camera Center

Vector3d getCamCenter() const;

// check if a point is in this frame

bool isInFrame( const Vector3d& pt_world );

};

}

#endif // FRAME_H

5. Contents of map.h

#ifndef MAP_H

#define MAP_H

#include "myslam/common_include.h"

#include "myslam/frame.h"

#include "myslam/mappoint.h"

namespace myslam

{

class Map

{

public:

typedef shared_ptr<Map> Ptr;

unordered_map<unsigned long, MapPoint::Ptr > map_points_; // all landmarks

unordered_map<unsigned long, Frame::Ptr > keyframes_; // all key-frames

Map() {}

void insertKeyFrame( Frame::Ptr frame );

void insertMapPoint( MapPoint::Ptr map_point );

};

}

#endif // MAP_H

6. Contents of mappoint.h

#ifndef MAPPOINT_H

#define MAPPOINT_H

#include "myslam/common_include.h" // shared_ptr, Vector3d and Mat are used below

namespace myslam

{

class Frame;

class MapPoint

{

public:

typedef shared_ptr<MapPoint> Ptr;

unsigned long id_; // ID

Vector3d pos_; // Position in world

Vector3d norm_; // Normal of viewing direction

Mat descriptor_; // Descriptor for matching

int observed_times_; // being observed by feature matching algo.

int matched_times_; // being an inlier in pose estimation

MapPoint();

MapPoint( long id, Vector3d position, Vector3d norm );

// factory function

static MapPoint::Ptr createMapPoint();

};

}

#endif // MAPPOINT_H

7. Contents of visual_odometry.h

#ifndef VISUALODOMETRY_H

#define VISUALODOMETRY_H

#include "myslam/common_include.h"

#include "myslam/map.h"

#include <opencv2/features2d/features2d.hpp>

namespace myslam

{

class VisualOdometry

{

public:

typedef shared_ptr<VisualOdometry> Ptr;

enum VOState {

INITIALIZING=-1,

OK=0,

LOST

};

VOState state_; // current VO status

Map::Ptr map_; // map with all frames and map points

Frame::Ptr ref_; // reference frame

Frame::Ptr curr_; // current frame

cv::Ptr<cv::ORB> orb_; // orb detector and computer

vector<cv::Point3f> pts_3d_ref_; // 3d points in reference frame

vector<cv::KeyPoint> keypoints_curr_; // keypoints in current frame

Mat descriptors_curr_; // descriptor in current frame

Mat descriptors_ref_; // descriptor in reference frame

vector<cv::DMatch> feature_matches_;

SE3 T_c_r_estimated_; // the estimated pose of current frame

int num_inliers_; // number of inlier features in PnP

int num_lost_; // number of lost times

// parameters

int num_of_features_; // number of features

double scale_factor_; // scale in image pyramid

int level_pyramid_; // number of pyramid levels

float match_ratio_; // ratio for selecting good matches

int max_num_lost_; // max number of continuous lost times

int min_inliers_; // minimum inliers

double key_frame_min_rot; // minimal rotation of two key-frames

double key_frame_min_trans; // minimal translation of two key-frames

public: // functions

VisualOdometry();

~VisualOdometry();

bool addFrame( Frame::Ptr frame ); // add a new frame

protected:

// inner operation

void extractKeyPoints();

void computeDescriptors();

void featureMatching();

void poseEstimationPnP();

void setRef3DPoints();

void addKeyFrame();

bool checkEstimatedPose();

bool checkKeyFrame();

};

}

#endif // VISUALODOMETRY_H

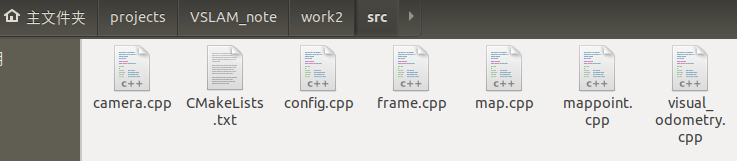

- Files in VSLAM_note/work2/src:

1. Contents of camera.cpp

#include "myslam/camera.h"

#include "myslam/config.h"

namespace myslam

{

Camera::Camera()

{

fx_ = Config::get<float>("camera.fx");

fy_ = Config::get<float>("camera.fy");

cx_ = Config::get<float>("camera.cx");

cy_ = Config::get<float>("camera.cy");

depth_scale_ = Config::get<float>("camera.depth_scale");

}

Vector3d Camera::world2camera ( const Vector3d& p_w, const SE3& T_c_w )

{

return T_c_w*p_w;

}

Vector3d Camera::camera2world ( const Vector3d& p_c, const SE3& T_c_w )

{

return T_c_w.inverse() *p_c;

}

Vector2d Camera::camera2pixel ( const Vector3d& p_c )

{

return Vector2d (

fx_ * p_c ( 0,0 ) / p_c ( 2,0 ) + cx_,

fy_ * p_c ( 1,0 ) / p_c ( 2,0 ) + cy_

);

}

Vector3d Camera::pixel2camera ( const Vector2d& p_p, double depth )

{

return Vector3d (

( p_p ( 0,0 )-cx_ ) *depth/fx_,

( p_p ( 1,0 )-cy_ ) *depth/fy_,

depth

);

}

Vector2d Camera::world2pixel ( const Vector3d& p_w, const SE3& T_c_w )

{

return camera2pixel ( world2camera ( p_w, T_c_w ) );

}

Vector3d Camera::pixel2world ( const Vector2d& p_p, const SE3& T_c_w, double depth )

{

return camera2world ( pixel2camera ( p_p, depth ), T_c_w );

}

}

2. Contents of config.cpp

#include "myslam/config.h"

namespace myslam

{

void Config::setParameterFile( const std::string& filename )

{

if ( config_ == nullptr )

config_ = shared_ptr<Config>(new Config);

config_->file_ = cv::FileStorage( filename.c_str(), cv::FileStorage::READ );

if ( config_->file_.isOpened() == false )

{

std::cerr<<"parameter file "<<filename<<" does not exist."<<std::endl;

config_->file_.release();

return;

}

}

Config::~Config()

{

if ( file_.isOpened() )

file_.release();

}

shared_ptr<Config> Config::config_ = nullptr;

}

3. Contents of frame.cpp

#include "myslam/frame.h"

namespace myslam

{

Frame::Frame()

: id_(-1), time_stamp_(-1), camera_(nullptr)

{

}

Frame::Frame ( long id, double time_stamp, SE3 T_c_w, Camera::Ptr camera, Mat color, Mat depth )

: id_(id), time_stamp_(time_stamp), T_c_w_(T_c_w), camera_(camera), color_(color), depth_(depth)

{

}

Frame::~Frame()

{

}

Frame::Ptr Frame::createFrame()

{

static long factory_id = 0;

return Frame::Ptr( new Frame(factory_id++) );

}

double Frame::findDepth ( const cv::KeyPoint& kp )

{

int x = cvRound(kp.pt.x);

int y = cvRound(kp.pt.y);

ushort d = depth_.ptr<ushort>(y)[x];

if ( d!=0 )

{

return double(d)/camera_->depth_scale_;

}

else

{

// check the nearby points

int dx[4] = {-1,0,1,0};

int dy[4] = {0,-1,0,1};

for ( int i=0; i<4; i++ )

{

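int nx = x+dx[i], ny = y+dy[i];

if ( nx<0 || ny<0 || nx>=depth_.cols || ny>=depth_.rows ) continue; // skip neighbours outside the image

d = depth_.ptr<ushort>( ny )[nx];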

if ( d!=0 )

{

return double(d)/camera_->depth_scale_;

}

}

}

return -1.0;

}

Vector3d Frame::getCamCenter() const

{

return T_c_w_.inverse().translation();

}

bool Frame::isInFrame ( const Vector3d& pt_world )

{

Vector3d p_cam = camera_->world2camera( pt_world, T_c_w_ );

if ( p_cam(2,0)<0 )

return false;

Vector2d pixel = camera_->world2pixel( pt_world, T_c_w_ );

return pixel(0,0)>0 && pixel(1,0)>0

&& pixel(0,0)<color_.cols

&& pixel(1,0)<color_.rows;

}

}

4. Contents of map.cpp

#include "myslam/map.h"

namespace myslam

{

void Map::insertKeyFrame ( Frame::Ptr frame )

{

cout<<"Key frame size = "<<keyframes_.size()<<endl;

if ( keyframes_.find(frame->id_) == keyframes_.end() )

{

keyframes_.insert( make_pair(frame->id_, frame) );

}

else

{

keyframes_[ frame->id_ ] = frame;

}

}

void Map::insertMapPoint ( MapPoint::Ptr map_point )

{

if ( map_points_.find(map_point->id_) == map_points_.end() )

{

map_points_.insert( make_pair(map_point->id_, map_point) );

}

else

{

map_points_[map_point->id_] = map_point;

}

}

} // namespace myslam

5. Contents of mappoint.cpp

#include "myslam/common_include.h"

#include "myslam/mappoint.h"

namespace myslam

{

MapPoint::MapPoint()

: id_(-1), pos_(Vector3d(0,0,0)), norm_(Vector3d(0,0,0)), observed_times_(0), matched_times_(0)

{

}

MapPoint::MapPoint ( long id, Vector3d position, Vector3d norm )

: id_(id), pos_(position), norm_(norm), observed_times_(0), matched_times_(0)

{

}

MapPoint::Ptr MapPoint::createMapPoint()

{

static long factory_id = 0;

return MapPoint::Ptr(

new MapPoint( factory_id++, Vector3d(0,0,0), Vector3d(0,0,0) )

);

}

}

6. Contents of visual_odometry.cpp

#include <opencv2/highgui/highgui.hpp>

#include <opencv2/imgproc/imgproc.hpp>

#include <opencv2/calib3d/calib3d.hpp>

#include <algorithm>

#include <boost/timer.hpp>

#include "myslam/config.h"

#include "myslam/visual_odometry.h"

namespace myslam

{

VisualOdometry::VisualOdometry() :

state_ ( INITIALIZING ), map_ ( new Map ), ref_ ( nullptr ), curr_ ( nullptr ), num_inliers_ ( 0 ), num_lost_ ( 0 )

{

num_of_features_ = Config::get<int> ( "number_of_features" );

scale_factor_ = Config::get<double> ( "scale_factor" );

level_pyramid_ = Config::get<int> ( "level_pyramid" );

match_ratio_ = Config::get<float> ( "match_ratio" );

max_num_lost_ = Config::get<int> ( "max_num_lost" );

min_inliers_ = Config::get<int> ( "min_inliers" );

key_frame_min_rot = Config::get<double> ( "keyframe_rotation" );

key_frame_min_trans = Config::get<double> ( "keyframe_translation" );

orb_ = cv::ORB::create ( num_of_features_, scale_factor_, level_pyramid_ );

}

VisualOdometry::~VisualOdometry()

{

}

bool VisualOdometry::addFrame ( Frame::Ptr frame )

{

switch ( state_ )

{

case INITIALIZING:

{

state_ = OK;

curr_ = ref_ = frame;

map_->insertKeyFrame ( frame );

// extract features from first frame

extractKeyPoints();

computeDescriptors();

// compute the 3d position of features in ref frame

setRef3DPoints();

break;

}

case OK:

{

curr_ = frame;

extractKeyPoints();

computeDescriptors();

featureMatching();

poseEstimationPnP();

if ( checkEstimatedPose() == true ) // a good estimation

{

curr_->T_c_w_ = T_c_r_estimated_ * ref_->T_c_w_; // T_c_w = T_c_r*T_r_w

ref_ = curr_;

setRef3DPoints();

num_lost_ = 0;

if ( checkKeyFrame() == true ) // is a key-frame

{

addKeyFrame();

}

}

else // bad estimation due to various reasons

{

num_lost_++;

if ( num_lost_ > max_num_lost_ )

{

state_ = LOST;

}

return false;

}

break;

}

case LOST:

{

cout<<"vo has lost."<<endl;

break;

}

}

return true;

}

void VisualOdometry::extractKeyPoints()

{

orb_->detect ( curr_->color_, keypoints_curr_ );

}

void VisualOdometry::computeDescriptors()

{

orb_->compute ( curr_->color_, keypoints_curr_, descriptors_curr_ );

}

void VisualOdometry::featureMatching()

{

// match desp_ref and desp_curr, use OpenCV's brute force match

vector<cv::DMatch> matches;

cv::BFMatcher matcher ( cv::NORM_HAMMING );

matcher.match ( descriptors_ref_, descriptors_curr_, matches );

// select the best matches

float min_dis = std::min_element (

matches.begin(), matches.end(),

[] ( const cv::DMatch& m1, const cv::DMatch& m2 )

{

return m1.distance < m2.distance;

} )->distance;

feature_matches_.clear();

for ( cv::DMatch& m : matches )

{

if ( m.distance < max<float> ( min_dis*match_ratio_, 30.0 ) )

{

feature_matches_.push_back(m);

}

}

cout<<"good matches: "<<feature_matches_.size()<<endl;

}

void VisualOdometry::setRef3DPoints()

{

// select the features with depth measurements

pts_3d_ref_.clear();

descriptors_ref_ = Mat();

for ( size_t i=0; i<keypoints_curr_.size(); i++ )

{

double d = ref_->findDepth(keypoints_curr_[i]);

if ( d > 0)

{

Vector3d p_cam = ref_->camera_->pixel2camera(

Vector2d(keypoints_curr_[i].pt.x, keypoints_curr_[i].pt.y), d

);

pts_3d_ref_.push_back( cv::Point3f( p_cam(0,0), p_cam(1,0), p_cam(2,0) ));

descriptors_ref_.push_back(descriptors_curr_.row(i));

}

}

}

void VisualOdometry::poseEstimationPnP()

{

// construct the 3d 2d observations

vector<cv::Point3f> pts3d;

vector<cv::Point2f> pts2d;

for ( cv::DMatch m:feature_matches_ )

{

pts3d.push_back( pts_3d_ref_[m.queryIdx] );

pts2d.push_back( keypoints_curr_[m.trainIdx].pt );

}

Mat K = ( cv::Mat_<double>(3,3)<<

ref_->camera_->fx_, 0, ref_->camera_->cx_,

0, ref_->camera_->fy_, ref_->camera_->cy_,

0,0,1

);

Mat rvec, tvec, inliers;

cv::solvePnPRansac( pts3d, pts2d, K, Mat(), rvec, tvec, false, 100, 4.0, 0.99, inliers );

num_inliers_ = inliers.rows;

cout<<"pnp inliers: "<<num_inliers_<<endl;

T_c_r_estimated_ = SE3(

SO3(rvec.at<double>(0,0), rvec.at<double>(1,0), rvec.at<double>(2,0)),

Vector3d( tvec.at<double>(0,0), tvec.at<double>(1,0), tvec.at<double>(2,0))

);

}

bool VisualOdometry::checkEstimatedPose()

{

// check if the estimated pose is good

if ( num_inliers_ < min_inliers_ )

{

cout<<"reject because inlier is too small: "<<num_inliers_<<endl;

return false;

}

// if the motion is too large, it is probably wrong

Sophus::Vector6d d = T_c_r_estimated_.log();

if ( d.norm() > 5.0 )

{

cout<<"reject because motion is too large: "<<d.norm()<<endl;

return false;

}

return true;

}

bool VisualOdometry::checkKeyFrame()

{

Sophus::Vector6d d = T_c_r_estimated_.log();

Vector3d trans = d.head<3>();

Vector3d rot = d.tail<3>();

if ( rot.norm() >key_frame_min_rot || trans.norm() >key_frame_min_trans )

return true;

return false;

}

void VisualOdometry::addKeyFrame()

{

cout<<"adding a key-frame"<<endl;

map_->insertKeyFrame ( curr_ );

}

}

7. Contents of CMakeLists.txt

add_library( myslam SHARED

frame.cpp

mappoint.cpp

map.cpp

camera.cpp

config.cpp

visual_odometry.cpp

)

target_link_libraries( myslam

${THIRD_PARTY_LIBS}

)

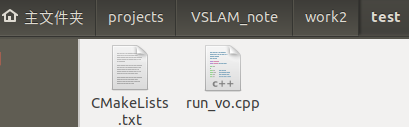

- Files in VSLAM_note/work2/test:

1. Contents of CMakeLists.txt

add_executable( run_vo run_vo.cpp )

target_link_libraries( run_vo myslam )

2. Contents of run_vo.cpp

// -------------- test the visual odometry -------------

#include <fstream>

#include <boost/timer.hpp>

#include <opencv2/imgcodecs.hpp>

#include <opencv2/highgui/highgui.hpp>

#include <opencv2/viz.hpp>

#include "myslam/config.h"

#include "myslam/visual_odometry.h"

int main ( int argc, char** argv )

{

if ( argc != 2 )

{

cout<<"usage: run_vo parameter_file"<<endl;

return 1;

}

myslam::Config::setParameterFile ( argv[1] );

myslam::VisualOdometry::Ptr vo ( new myslam::VisualOdometry );

string dataset_dir = myslam::Config::get<string> ( "dataset_dir" );

cout<<"dataset: "<<dataset_dir<<endl;

ifstream fin ( dataset_dir+"/associate.txt" );

if ( !fin )

{

cout<<"please generate the associate file called associate.txt!"<<endl;

return 1;

}

vector<string> rgb_files, depth_files;

vector<double> rgb_times, depth_times;

string rgb_time, rgb_file, depth_time, depth_file;

// stop as soon as a full entry can no longer be read, so no empty entry is stored

while ( fin>>rgb_time>>rgb_file>>depth_time>>depth_file )

{

rgb_times.push_back ( atof ( rgb_time.c_str() ) );

depth_times.push_back ( atof ( depth_time.c_str() ) );

rgb_files.push_back ( dataset_dir+"/"+rgb_file );

depth_files.push_back ( dataset_dir+"/"+depth_file );

}

myslam::Camera::Ptr camera ( new myslam::Camera );

// visualization

cv::viz::Viz3d vis("Visual Odometry");

cv::viz::WCoordinateSystem world_coor(1.0), camera_coor(0.5);

cv::Point3d cam_pos( 0, -1.0, -1.0 ), cam_focal_point(0,0,0), cam_y_dir(0,1,0);

cv::Affine3d cam_pose = cv::viz::makeCameraPose( cam_pos, cam_focal_point, cam_y_dir );

vis.setViewerPose( cam_pose );

world_coor.setRenderingProperty(cv::viz::LINE_WIDTH, 2.0);

camera_coor.setRenderingProperty(cv::viz::LINE_WIDTH, 1.0);

vis.showWidget( "World", world_coor );

vis.showWidget( "Camera", camera_coor );

cout<<"read total "<<rgb_files.size() <<" entries"<<endl;

for ( int i=0; i<rgb_files.size(); i++ )

{

Mat color = cv::imread ( rgb_files[i] );

Mat depth = cv::imread ( depth_files[i], -1 );

if ( color.data==nullptr || depth.data==nullptr )

break;

myslam::Frame::Ptr pFrame = myslam::Frame::createFrame();

pFrame->camera_ = camera;

pFrame->color_ = color;

pFrame->depth_ = depth;

pFrame->time_stamp_ = rgb_times[i];

boost::timer timer;

vo->addFrame ( pFrame );

cout<<"VO costs time: "<<timer.elapsed()<<endl;

if ( vo->state_ == myslam::VisualOdometry::LOST )

break;

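SE3 Tcw = pFrame->T_c_w_.inverse(); // despite its name this is the camera-to-world transform T_w_c, i.e. the camera pose drawn in the viewer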

// show the map and the camera pose

cv::Affine3d M(

cv::Affine3d::Mat3(

Tcw.rotation_matrix()(0,0), Tcw.rotation_matrix()(0,1), Tcw.rotation_matrix()(0,2),

Tcw.rotation_matrix()(1,0), Tcw.rotation_matrix()(1,1), Tcw.rotation_matrix()(1,2),

Tcw.rotation_matrix()(2,0), Tcw.rotation_matrix()(2,1), Tcw.rotation_matrix()(2,2)

),

cv::Affine3d::Vec3(

Tcw.translation()(0,0), Tcw.translation()(1,0), Tcw.translation()(2,0)

)

);

cv::imshow("image", color );

cv::waitKey(1);

vis.setWidgetPose( "Camera", M);

vis.spinOnce(1, false);

}

return 0;

}

III. Preparing the TUM Dataset (fr1_xyz)

(The page did not seem to open in Google Chrome for me, so I downloaded the dataset with the Sogou browser.)

Download fr1_xyz

After downloading the TUM dataset (fr1_xyz), follow the screenshots below to get the required associate.py file:

- Click "The file formats are described here."

- Click "automatic evaluation tool".

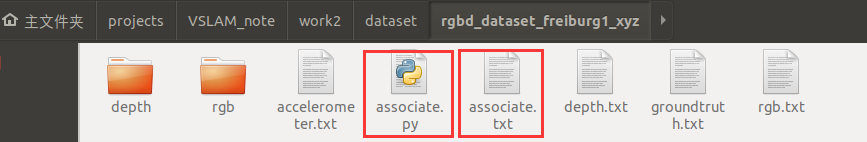

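Clicking **associate.py** displays the contents of the script. At that point you can select everything with Ctrl+A, copy it with Ctrl+C, and paste it into a new file named associate.py under ~/projects/VSLAM_note/work2/dataset/rgbd_dataset_freiburg1_xyz (see the screenshot that follows).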

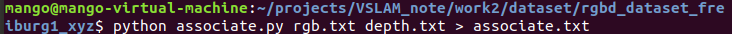

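After downloading, put the extracted folder under the program's dataset path, ~/projects/VSLAM_note/work2/dataset/, and use associate.py to generate the pairing file associate.txt with the following command: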

$ python associate.py rgb.txt depth.txt > associate.txt
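The idea behind the pairing file is that the color and depth images are timestamped independently, so each RGB timestamp has to be paired with a depth timestamp that is close enough. The sketch below shows one simple way to do this and is not the algorithm of associate.py itself: `associate` is a hypothetical function, and the 0.02 s tolerance is an assumption chosen for illustration.

```cpp
#include <cmath>
#include <cstddef>
#include <utility>
#include <vector>

// For each RGB timestamp, pick the closest depth timestamp that has not been
// used yet, and keep the pair only if the two differ by less than max_diff seconds.
std::vector<std::pair<double, double>> associate(const std::vector<double>& rgb,
                                                 const std::vector<double>& depth,
                                                 double max_diff = 0.02) {
    std::vector<std::pair<double, double>> pairs;
    std::vector<bool> used(depth.size(), false);
    for (double t_rgb : rgb) {
        std::size_t best = depth.size();  // depth.size() means "no candidate yet"
        double best_diff = max_diff;
        for (std::size_t j = 0; j < depth.size(); ++j) {
            const double diff = std::fabs(depth[j] - t_rgb);
            if (!used[j] && diff < best_diff) {
                best = j;
                best_diff = diff;
            }
        }
        if (best != depth.size()) {
            used[best] = true;
            pairs.emplace_back(t_rgb, depth[best]);
        }
    }
    return pairs;
}
```

For instance, with RGB timestamps {1.00, 1.03, 1.10} and depth timestamps {1.01, 1.035, 1.20}, the first two RGB frames are paired and the third is dropped because no unused depth frame lies within the tolerance. Each line of associate.txt holds one such pair, which is what run_vo.cpp reads as `rgb_time rgb_file depth_time depth_file`.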

IV. Running the Program

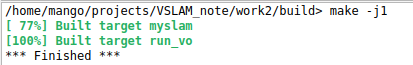

1. Run from a terminal with the following commands:

cd build

cmake ..

make

cd ..

cd bin

./run_vo ../config/default.yaml

The commands and the resulting output are shown in the figures.

2. Run in KDevelop.AppImage

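First import the project work2.

Then build it.

Click Run > Configure Launches…, then set the parameters following steps ①②③④ in the figure.

When the configuration is done, click Run > Execute Launch. The result is shown in the figure.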

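The demo shows the image of the current frame together with its estimated pose. The viewer draws the axes of the world frame (large) and the axes of the current frame (small). The colors map to the axes as follows: blue is Z, red is X, green is Y. You can see the camera motion directly, and it roughly agrees with human intuition.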

Finally, a clearer view of the result is shown in the figure.

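(If the program fails to run or files are missing, feel free to message me or leave a comment. We are all still learning, and I hope this helps. Keep going!)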

- Reference

视觉SLAM十四讲 (14 Lectures on Visual SLAM), PDF