Preparation before deployment:

1. Install docker-ce on all three nodes and start it before doing anything else.

2. Start by deploying the master node. 192.168.132.200 is the master (management) node; 192.168.132.201 and 192.168.132.202 are node1 and node2.

3. On the master node (192.168.132.200):

yum install etcd -y

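vim /etc/etcd/etcd.conf #edit the etcd configuration file and set the following (listen on all interfaces, but advertise an address the other nodes can actually reach):

ETCD_LISTEN_CLIENT_URLS="http://0.0.0.0:2379"

ETCD_ADVERTISE_CLIENT_URLS="http://192.168.132.200:2379"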

systemctl restart etcd.service

systemctl status etcd.service

systemctl enable etcd.service
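The two etcd settings above can also be applied without opening vim. This is a minimal sketch, assuming the KEY="VALUE" layout of /etc/etcd/etcd.conf and GNU sed; set_etcd_opt is a hypothetical helper name, not part of etcd.

```shell
#!/usr/bin/env bash
# Set KEY="VALUE" in an etcd.conf-style file: rewrite the line if the key
# already exists (commented out or not), otherwise append it.
set_etcd_opt() {
  local file=$1 key=$2 value=$3
  if grep -Eq "^#?${key}=" "$file"; then
    # '|' is the sed delimiter because the values contain '/'
    sed -i -E "s|^#?${key}=.*|${key}=\"${value}\"|" "$file"
  else
    printf '%s="%s"\n' "$key" "$value" >> "$file"
  fi
}

# Usage on the master (192.168.132.200), then restart etcd:
#   set_etcd_opt /etc/etcd/etcd.conf ETCD_LISTEN_CLIENT_URLS "http://0.0.0.0:2379"
#   set_etcd_opt /etc/etcd/etcd.conf ETCD_ADVERTISE_CLIENT_URLS "http://192.168.132.200:2379"
```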

mkdir /opt/kubernetes /opt/rh -p

mkdir /opt/kubernetes/bin /opt/kubernetes/cfg -p

cd ~ && tar xf kubernetes-server-linux-amd64.tar.gz && cd kubernetes

cd /root/kubernetes/server/bin/ && cp kube-apiserver kube-controller-manager kube-scheduler kubectl /opt/kubernetes/bin/

cd /root/kubernetes/ && tar xf kubernetes-src.tar.gz && cd /root/kubernetes/cluster/centos/master/scripts && cp apiserver.sh scheduler.sh controller-manager.sh /opt/kubernetes/bin/
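Before editing the scripts, it can help to confirm that everything step 3 copied actually landed in /opt/kubernetes/bin. A small sketch of such a check; check_bins is a hypothetical helper name, and the file list in the usage comment simply mirrors the cp commands above.

```shell
#!/usr/bin/env bash
# Report every listed file that is missing or not executable in DIR.
# Returns 0 only if all of them are present and executable.
check_bins() {
  local dir=$1; shift
  local missing=0 f
  for f in "$@"; do
    if [ ! -x "$dir/$f" ]; then
      echo "missing or not executable: $dir/$f" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Usage on the master:
#   check_bins /opt/kubernetes/bin kube-apiserver kube-controller-manager \
#     kube-scheduler kubectl apiserver.sh scheduler.sh controller-manager.sh
```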

4. Edit the scripts on the master (in /opt/kubernetes/bin, where step 3 copied them):

4-1. Edit apiserver.sh as follows:

[root@192 bin]# vim apiserver.sh

MASTER_ADDRESS=${1:-"192.168.132.200"} ##设置master的IP

ETCD_SERVERS=${2:-"http://192.168.132.200:2379"}

Comment out the CA and .pem (TLS certificate) entries.

[root@192 bin]# ./apiserver.sh 192.168.132.200 http://192.168.132.200:2379 #arguments: the master node address and the etcd cluster address

4-2. Edit controller-manager.sh as follows:

[root@192 bin]# vim controller-manager.sh

MASTER_ADDRESS=${1:-"192.168.132.200"}

Comment out the CA and .pem (TLS certificate) entries.

[root@192 bin]# ./controller-manager.sh 192.168.132.200

4-3. Edit scheduler.sh as follows:

[root@192 bin]# vim scheduler.sh

MASTER_ADDRESS=${1:-"192.168.132.200"}

Comment out the CA and .pem (TLS certificate) entries.

[root@192 bin]# ./scheduler.sh 192.168.132.200

[root@192 bin]# echo "export PATH=$PATH:/opt/kubernetes/bin" >> /etc/profile

[root@192 bin]# source /etc/profile
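Rerunning the echo above appends a duplicate line each time. A minimal sketch of an idempotent variant, assuming /etc/profile as the target as above; add_path_once is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Append "export PATH=$PATH:DIR" to a profile file only if that exact line
# is not already present. The escaped \$PATH is written literally, so it is
# expanded at login time rather than frozen to the current PATH.
add_path_once() {
  local profile=$1 dir=$2
  local line="export PATH=\$PATH:${dir}"
  grep -qxF "$line" "$profile" 2>/dev/null || echo "$line" >> "$profile"
}

# Usage on the master:
#   add_path_once /etc/profile /opt/kubernetes/bin && source /etc/profile
```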

5. Install the worker nodes (on 192.168.132.201 and 192.168.132.202):

[root@192 opt]# mkdir -p /opt/kubernetes/{bin,cfg}

[root@192 ~]# cd /root/kubernetes/node/bin

[root@192 bin]# cp kube-proxy kubelet /opt/kubernetes/bin/

[root@localhost kubernetes]# tar xf kubernetes-src.tar.gz

[root@192 scripts]# pwd

/root/kubernetes/cluster/centos/node/scripts

[root@192 scripts]# cp kubelet.sh proxy.sh /opt/kubernetes/bin/

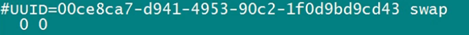

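Disable swap (recent kubelet versions refuse to start while swap is on): comment out the swap entry in /etc/fstab so it stays off after a reboot, and run swapoff -a to turn it off immediately.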
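The fstab part of the swap step can be scripted. This is a sketch assuming a standard whitespace-separated /etc/fstab, where the third field is the filesystem type, and GNU sed for in-place editing; comment_swap is a hypothetical helper name, and it keeps a .bak copy in case the pattern matches something unexpected.

```shell
#!/usr/bin/env bash
# Comment out every active swap entry in an fstab-format file
# (default /etc/fstab). A backup copy is written to FILE.bak.
comment_swap() {
  local fstab=${1:-/etc/fstab}
  # Skip lines that are already comments; on the others, prefix "#" when
  # the third field (filesystem type) is "swap".
  sed -i.bak -E '/^[[:space:]]*#/!{/^[^[:space:]]+[[:space:]]+[^[:space:]]+[[:space:]]+swap([[:space:]]|$)/s/^/#/;}' "$fstab"
}

# Usage on each node:
#   swapoff -a      # turn swap off now
#   comment_swap    # keep it off after a reboot
#   free -m         # the Swap line should show 0
```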

[root@192 bin]# vim kubelet.sh

MASTER_ADDRESS=${1:-"192.168.132.200"}

NODE_ADDRESS=${2:-"192.168.132.201"}

DNS_SERVER_IP=${3:-"192.168.3.100"}

[root@192 bin]# vim proxy.sh

MASTER_ADDRESS=${1:-"192.168.132.200"}

NODE_ADDRESS=${2:-"192.168.132.201"}

[root@192 bin]# ./proxy.sh 192.168.132.200 192.168.132.201

[root@localhost bin]# ./kubelet.sh 192.168.132.200 192.168.132.201 192.168.3.100

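Node 192.168.132.201 is now installed. Repeat the same steps on the other node, 192.168.132.202, using 192.168.132.202 as NODE_ADDRESS in kubelet.sh and proxy.sh and as the second argument to both scripts.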
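Since the node address is the only per-node difference, the two invocations can be generated for each node to avoid typos. The sketch below only prints the commands (it does not ssh anywhere); node_cmds is a hypothetical helper, and the argument order follows the ./proxy.sh and ./kubelet.sh calls above.

```shell
#!/usr/bin/env bash
# Addresses used throughout this guide.
MASTER=192.168.132.200
DNS_SERVER_IP=192.168.3.100

# Print the proxy.sh and kubelet.sh calls for one node. Run the printed
# lines in /opt/kubernetes/bin on that node.
node_cmds() {
  local node=$1
  echo "./proxy.sh ${MASTER} ${node}"
  echo "./kubelet.sh ${MASTER} ${node} ${DNS_SERVER_IP}"
}

# Usage:
#   for n in 192.168.132.201 192.168.132.202; do echo "== $n"; node_cmds "$n"; done
```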

6. Deploy the Flannel network (on the master and on every node; the master has just one extra step).

(Master only; this extra step is the first step on the master):

1) Write the network range to be allocated into etcd, for flanneld to use

# etcdctl --endpoints="http://192.168.132.200:2379" set /coreos.com/network/config '{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}'
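The JSON value is easy to mistype on the command line. A hedged sketch that builds it from the two values that usually change, with a read-back to confirm the write; flannel_net_config is a hypothetical helper, and the etcdctl calls assume the etcd v2 API, as the set command above does.

```shell
#!/usr/bin/env bash
# Build the network config JSON that flanneld reads from etcd.
# $1: overlay network CIDR, $2: backend type
flannel_net_config() {
  printf '{"Network": "%s", "Backend": {"Type": "%s"}}' "$1" "$2"
}

# Usage on the master:
#   etcdctl --endpoints="http://192.168.132.200:2379" set /coreos.com/network/config \
#     "$(flannel_net_config 172.17.0.0/16 vxlan)"
#   etcdctl --endpoints="http://192.168.132.200:2379" get /coreos.com/network/config
```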

(The following steps are identical on the master and the nodes; just run them in order.)

2) Download the binary package

# wget https://github.com/coreos/flannel/releases/download/v0.9.1/flannel-v0.9.1-linux-amd64.tar.gz

# tar zxvf flannel-v0.9.1-linux-amd64.tar.gz

# mv flanneld mk-docker-opts.sh /usr/bin
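The download step can be parameterized by version so the URL and the tarball name stay in sync. A sketch, assuming the release asset naming used in the URL above (flannel-VERSION-linux-amd64.tar.gz); flannel_tarball and flannel_url are hypothetical helper names.

```shell
#!/usr/bin/env bash
# Tarball name and download URL for a given flannel release tag.
flannel_tarball() { echo "flannel-$1-linux-amd64.tar.gz"; }
flannel_url() {
  echo "https://github.com/coreos/flannel/releases/download/$1/$(flannel_tarball "$1")"
}

# Usage:
#   v=v0.9.1
#   wget "$(flannel_url "$v")" && tar zxvf "$(flannel_tarball "$v")"
#   mv flanneld mk-docker-opts.sh /usr/bin
```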

3) Configure Flannel

vi /etc/sysconfig/flanneld

FLANNEL_OPTIONS="--etcd-endpoints=http://192.168.132.200:2379 --ip-masq=true"

4) Manage Flannel with systemd

# vi /usr/lib/systemd/system/flanneld.service

[Unit]

Description=Flanneld overlay address etcd agent

After=network.target

After=network-online.target

Wants=network-online.target

Before=docker.service

[Service]

Type=notify

EnvironmentFile=/etc/sysconfig/flanneld

ExecStart=/usr/bin/flanneld $FLANNEL_OPTIONS

ExecStartPost=/usr/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/subnet.env

Restart=on-failure

[Install]

WantedBy=multi-user.target

RequiredBy=docker.service

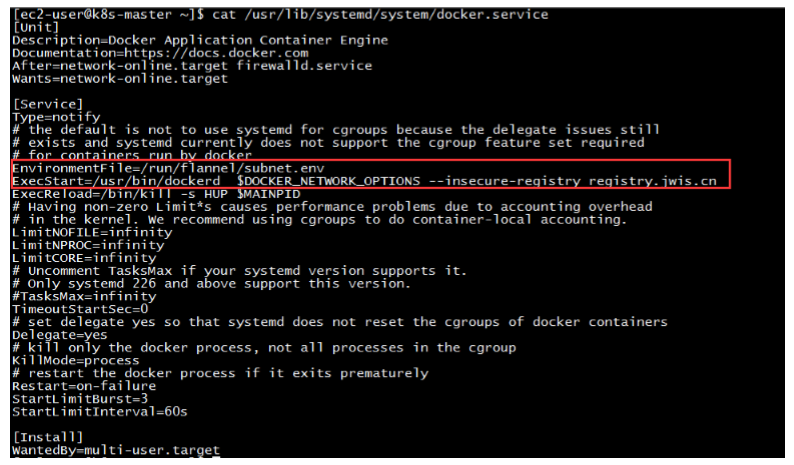

5) Configure Docker to start with the subnet assigned by flannel (vim /usr/lib/systemd/system/docker.service)

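Add this line to the [Service] section:

EnvironmentFile=/run/flannel/subnet.env

and append $DOCKER_NETWORK_OPTIONS to the ExecStart line (the added parts are shown in red in the screenshot):

ExecStart=/usr/bin/dockerd $DOCKER_NETWORK_OPTIONS

The modified file should look like the screenshot.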
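Once step 6 below restarts docker, docker0 should sit inside the subnet flannel leased to that host. A sketch of a quick check, assuming /run/flannel/subnet.env contains a --bip=... option written by mk-docker-opts.sh as configured in the flanneld unit above; bip_from_env is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Print the --bip value (docker0 address/prefix) from the env file that
# mk-docker-opts.sh writes; only the first match is used.
bip_from_env() {
  grep -o -- '--bip=[^ "]*' "$1" | head -n 1 | cut -d= -f2
}

# Usage on a node after "systemctl restart docker":
#   bip_from_env /run/flannel/subnet.env   # subnet docker was told to use
#   ip -4 addr show docker0                # docker0 should carry that address
```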

6) Start the services

# systemctl daemon-reload

# systemctl start flanneld

# systemctl enable flanneld

# systemctl restart docker

7. Verify the deployment

# kubectl get node

# kubectl get componentstatus
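Reading the node list by eye works for two nodes; for a scripted check, the following sketch reads kubectl get node output and succeeds only when at least one node is listed and every listed node reports Ready. It assumes the default table output with STATUS as the second column; all_nodes_ready is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Read "kubectl get node" output on stdin (header line first). Exit 0 only
# if at least one node is listed and every STATUS is exactly "Ready".
all_nodes_ready() {
  awk 'NR > 1 { n++; if ($2 != "Ready") bad = 1 }
       END { exit !(n > 0 && !bad) }'
}

# Usage on the master:
#   kubectl get node | all_nodes_ready && echo "all nodes Ready"
```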