使用codec的multiline插件实现多行匹配,这是一个可以将多行进行合并的插件,而且可以使用what指定将匹配到的行与前面的行合并还是和后面的行合并。

1.java日志收集测试

input {

stdin {

codec => multiline {

pattern => "^\[" //以"["开头进行正则匹配

negate => true //正则匹配成功

what => "previous" //和前面的内容进行合并

}

}

}

output {

stdout {

codec => rubydebug

}

}2.查看elasticsearch日志,已"["开头

# cat /var/log/elasticsearch/cluster.log

[2018-05-29T08:00:03,068][INFO ][o.e.c.m.MetaDataCreateIndexService] [node-1] [systemlog-2018.05.29] creating index, cause [auto(bulk api)], templates [], shards [5]/[1], mappings []

[2018-05-29T08:00:03,192][INFO ][o.e.c.m.MetaDataMappingService] [node-1] [systemlog-2018.05.29/DCO-zNOHQL2sgE4lS_Se7g] create_mapping [system]

[2018-05-29T11:29:31,145][INFO ][o.e.c.m.MetaDataCreateIndexService] [node-1] [securelog-2018.05.29] creating index, cause [auto(bulk api)], templates [], shards [5]/[1], mappings []

[2018-05-29T11:29:31,225][INFO ][o.e.c.m.MetaDataMappingService] [node-1] [securelog-2018.05.29/ABd4qrCATYq3YLYUqXe3uA] create_mapping [secure]3.配置logstash

#vim /etc/logstash/conf.d/java.conf

input {

file {

path => "/var/log/elasticsearch/cluster.log"

type => "elk-java-log"

start_position => "beginning"

stat_interval => "2"

codec => multiline {

pattern => "^\["

negate => true

what => "previous"

}

}

}

output {

if [type] == "elk-java-log" {

elasticsearch {

hosts => ["192.168.1.31:9200"]

index => "elk-java-log-%{+YYYY.MM.dd}"

}

}

}4.启动

logstash -f /etc/logstash/conf.d/java.conf -t

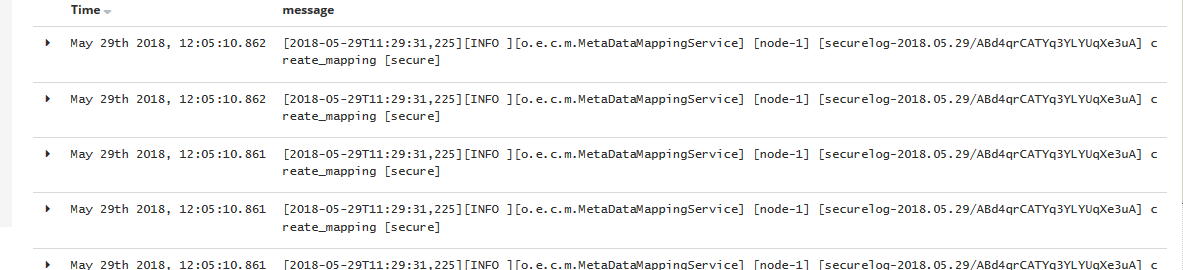

systemctl restart logstash 5.head插件查看

6.kibana添加日志