Before reading this post, it is recommended to read: http://blog.csdn.net/wenniuwuren/article/details/78461448

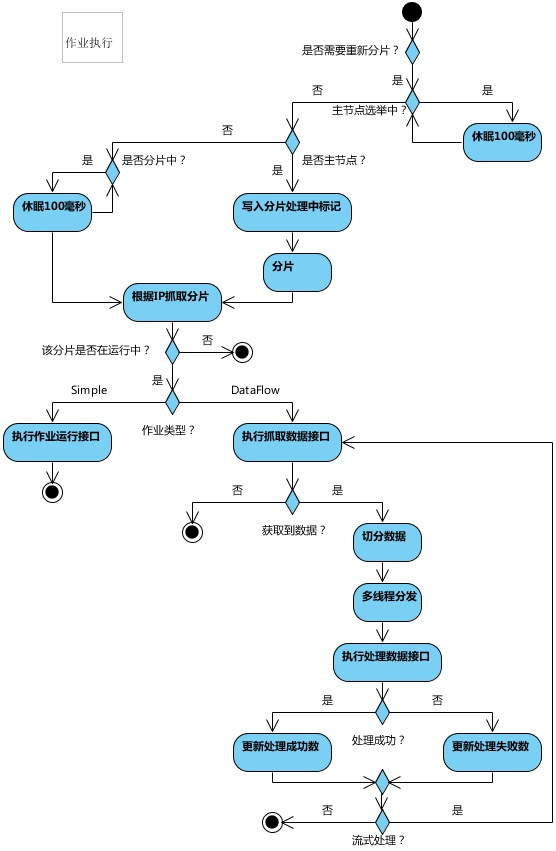

The core flow of job execution:

Because Quartz is used for scheduling, job execution is implemented through Quartz's Job interface:

public final class LiteJob implements Job {

@Setter

private ElasticJob elasticJob;

@Setter

private JobFacade jobFacade;

@Override

public void execute(final JobExecutionContext context) throws JobExecutionException {

// The factory method creates the executor for the concrete job type; in this example it is SimpleJob.

JobExecutorFactory.getJobExecutor(elasticJob, jobFacade).execute();

}

}
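
The factory-method dispatch above can be illustrated with a minimal, self-contained sketch. All types here (`ElasticJob`, `SimpleJob`, `DataflowJob`, `JobExecutor`) are simplified hypothetical stand-ins, not the framework's real interfaces; the point is only that the factory inspects the job type and returns a matching executor.

```java
import java.util.Arrays;
import java.util.List;

final class JobExecutorFactorySketch {

    // Hypothetical stand-ins for the framework's job interfaces.
    interface ElasticJob { }

    interface SimpleJob extends ElasticJob {
        String execute(int shardingItem);
    }

    interface DataflowJob extends ElasticJob {
        List<String> fetchData(int shardingItem);
    }

    // Hypothetical executor abstraction: one implementation per job type.
    interface JobExecutor {
        String execute();
    }

    // Factory method: choose the executor from the runtime type of the job.
    static JobExecutor getJobExecutor(final ElasticJob job) {
        if (job instanceof SimpleJob) {
            return () -> "simple:" + ((SimpleJob) job).execute(0);
        }
        if (job instanceof DataflowJob) {
            return () -> "dataflow:" + ((DataflowJob) job).fetchData(0).size();
        }
        throw new IllegalArgumentException("Unsupported job type: " + job.getClass().getName());
    }

    public static void main(final String[] args) {
        SimpleJob simple = item -> "item-" + item;
        DataflowJob dataflow = item -> Arrays.asList("a", "b");
        System.out.println(getJobExecutor(simple).execute());   // simple:item-0
        System.out.println(getJobExecutor(dataflow).execute()); // dataflow:2
    }
}
```

The caller (here, the Quartz-triggered `LiteJob`) never needs to know which job type it is running; it only calls `execute()` on whatever the factory returns.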

1. The concrete job executor runs the job:

/**

* Execute the job.

*/

public final void execute() {

try {

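// Check the job execution environment: verify that the clock difference (in seconds) between this host and the registry center is within the allowed range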

jobFacade.checkJobExecutionEnvironment();

} catch (final JobExecutionEnvironmentException cause) {

jobExceptionHandler.handleException(jobName, cause);

}

// Get the sharding contexts for the current job server

ShardingContexts shardingContexts = jobFacade.getShardingContexts();

// Whether sending job events is allowed.

if (shardingContexts.isAllowSendJobEvent()) {

// Post a job status trace event.

jobFacade.postJobStatusTraceEvent(shardingContexts.getTaskId(), State.TASK_STAGING, String.format("Job '%s' execute begin.", jobName));

}

if (jobFacade.misfireIfRunning(shardingContexts.getShardingItemParameters().keySet())) {

if (shardingContexts.isAllowSendJobEvent()) {

jobFacade.postJobStatusTraceEvent(shardingContexts.getTaskId(), State.TASK_FINISHED, String.format(

"Previous job '%s' - shardingItems '%s' is still running, misfired job will start after previous job completed.", jobName,

shardingContexts.getShardingItemParameters().keySet()));

}

return;

}

try {

// Hook invoked before the job executes (runs the registered job listeners)

jobFacade.beforeJobExecuted(shardingContexts);

//CHECKSTYLE:OFF

} catch (final Throwable cause) {

//CHECKSTYLE:ON

jobExceptionHandler.handleException(jobName, cause);

}

execute(shardingContexts, JobExecutionEvent.ExecutionSource.NORMAL_TRIGGER);

while (jobFacade.isExecuteMisfired(shardingContexts.getShardingItemParameters().keySet())) {

jobFacade.clearMisfire(shardingContexts.getShardingItemParameters().keySet());

execute(shardingContexts, JobExecutionEvent.ExecutionSource.MISFIRE);

}

// Failover

jobFacade.failoverIfNecessary();

try {

// Hook invoked after the job executes

jobFacade.afterJobExecuted(shardingContexts);

//CHECKSTYLE:OFF

} catch (final Throwable cause) {

//CHECKSTYLE:ON

jobExceptionHandler.handleException(jobName, cause);

}

}
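
The misfire handling in `execute()` follows a simple pattern: a trigger that arrives while the previous run is still in progress is only recorded, and the running instance replays it once it finishes. The sketch below is a single-JVM illustration of that pattern under stated assumptions: the class `MisfireSketch` and its in-memory flags are hypothetical, whereas the real framework keeps the running and misfire markers in the registry center, per sharding item.

```java
import java.util.concurrent.atomic.AtomicBoolean;
import java.util.concurrent.atomic.AtomicInteger;

final class MisfireSketch {

    private final AtomicBoolean running = new AtomicBoolean(false);

    private final AtomicBoolean misfired = new AtomicBoolean(false);

    // Counts how many times the job body actually ran.
    final AtomicInteger executions = new AtomicInteger();

    /**
     * Returns true if the job ran in this call, false if the trigger
     * was only recorded as a misfire because a run was in progress.
     */
    boolean trigger(final Runnable body) {
        if (!running.compareAndSet(false, true)) {
            misfired.set(true);
            return false;
        }
        try {
            runOnce(body);
            // Replay recorded misfires, clearing the flag before each extra run.
            while (misfired.compareAndSet(true, false)) {
                runOnce(body);
            }
        } finally {
            // Simplification: a misfire recorded between the loop exit
            // and this line would be lost in this sketch.
            running.set(false);
        }
        return true;
    }

    private void runOnce(final Runnable body) {
        executions.incrementAndGet();
        body.run();
    }
}
```

Note that several overlapping triggers collapse into a single replay, which mirrors the loop above: the misfire flag is cleared first and the job is then executed once with `ExecutionSource.MISFIRE`.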

1.1 A closer look at the code that gets the sharding contexts for the current job server:

@Override

public ShardingContexts getShardingContexts() {

// Whether failover is enabled

boolean isFailover = configService.load(true).isFailover();

if (isFailover) {

List<Integer> failoverShardingItems = failoverService.getLocalFailoverItems();

if (!failoverShardingItems.isEmpty()) {

return executionContextService.getJobShardingContext(failoverShardingItems);

}

}

// If sharding is needed and the current node is the leader, shard the job.

// If no job instance is currently available, do not shard.

shardingService.shardingIfNecessary();

// Get the sharding items assigned to this job instance

List<Integer> shardingItems = shardingService.getLocalShardingItems();

if (isFailover) {

shardingItems.removeAll(failoverService.getLocalTakeOffItems());

}

// Remove the disabled sharding items

shardingItems.removeAll(executionService.getDisabledItems(shardingItems));

// Build the sharding contexts for the current job server

return executionContextService.getJobShardingContext(shardingItems);

}
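
The selection logic in `getShardingContexts()` boils down to list arithmetic: failover items take priority, otherwise the locally assigned items minus the taken-off and disabled ones. Here is a minimal sketch with plain lists; `ShardingItemSelectionSketch` and its parameters are hypothetical, and the registry lookups are replaced by arguments.

```java
import java.util.ArrayList;
import java.util.List;

final class ShardingItemSelectionSketch {

    static List<Integer> select(final boolean failoverEnabled, final List<Integer> failoverItems,
                                final List<Integer> localItems, final List<Integer> takenOffItems,
                                final List<Integer> disabledItems) {
        // Items failed over to this instance are executed first, on their own.
        if (failoverEnabled && !failoverItems.isEmpty()) {
            return new ArrayList<>(failoverItems);
        }
        List<Integer> result = new ArrayList<>(localItems);
        if (failoverEnabled) {
            // Items of this instance that another instance has taken over.
            result.removeAll(takenOffItems);
        }
        result.removeAll(disabledItems);
        return result;
    }
}
```

For example, with failover enabled, no failover items, local items `[0, 1, 2, 3]`, taken-off item `1`, and disabled item `3`, the instance ends up executing `[0, 2]`.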

1.1.1 Step into shardingService.shardingIfNecessary(); this is the method where job sharding actually happens:

public void shardingIfNecessary() {

// Get the currently available job instances, i.e. the job nodes

List<JobInstance> availableJobInstances = instanceService.getAvailableJobInstances();

if (!isNeedSharding() || availableJobInstances.isEmpty()) {

return;

}

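// Check whether the current node is the leader. If an election is in progress and no leader is available yet, sleep until the election completes before returning.

if (!leaderService.isLeaderUntilBlock()) {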

blockUntilShardingCompleted();

return;

}

// Wait for the other sharding items that are still running to complete

waitingOtherShardingItemCompleted();

LiteJobConfiguration liteJobConfig = configService.load(false);

int shardingTotalCount = liteJobConfig.getTypeConfig().getCoreConfig().getShardingTotalCount();

log.debug("Job '{}' sharding begin.", jobName);

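// While sharding, the leader holds this ephemeral node as a marker; as long as the node exists, all job executions block until sharding finishes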

jobNodeStorage.fillEphemeralJobNode(ShardingNode.PROCESSING, "");

// Clear the existing sharding data under jobName/sharding/ and recreate the sharding info

resetShardingInfo(shardingTotalCount);

// Choose the sharding strategy; the default is the average allocation algorithm

JobShardingStrategy jobShardingStrategy = JobShardingStrategyFactory.getStrategy(liteJobConfig.getJobShardingStrategyClass());

// This is where sharding actually happens: the assignment is computed, then persisted by the transaction callback

jobNodeStorage.executeInTransaction(new PersistShardingInfoTransactionExecutionCallback(jobShardingStrategy.sharding(availableJobInstances, jobName, shardingTotalCount)));

log.debug("Job '{}' sharding complete.", jobName);

}
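
To make the default "average allocation" idea concrete, here is a minimal sketch of one way to split sharding items evenly: each instance gets a contiguous block, and any remainder is appended one item at a time to the first instances. The class `AverageShardingSketch` is hypothetical and uses plain strings as instance IDs; it illustrates the idea and is not a copy of the framework's strategy class.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

final class AverageShardingSketch {

    static Map<String, List<Integer>> shard(final List<String> instances, final int shardingTotalCount) {
        Map<String, List<Integer>> result = new LinkedHashMap<>();
        if (instances.isEmpty()) {
            return result;
        }
        // Step 1: give every instance the same number of contiguous items.
        int perInstance = shardingTotalCount / instances.size();
        for (int index = 0; index < instances.size(); index++) {
            List<Integer> items = new ArrayList<>();
            for (int item = index * perInstance; item < (index + 1) * perInstance; item++) {
                items.add(item);
            }
            result.put(instances.get(index), items);
        }
        // Step 2: hand out the remaining items, one each, to the first instances.
        int remainder = shardingTotalCount % instances.size();
        int next = shardingTotalCount - remainder;
        for (int index = 0; index < remainder; index++) {
            result.get(instances.get(index)).add(next++);
        }
        return result;
    }

    public static void main(final String[] args) {
        // 8 items over 3 instances: {a=[0, 1, 6], b=[2, 3, 7], c=[4, 5]}
        System.out.println(shard(Arrays.asList("a", "b", "c"), 8));
    }
}
```

Because the result depends only on the ordered instance list and the total count, any node that becomes leader computes the same assignment for the same inputs.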

1.1.1.1 Step into jobNodeStorage.executeInTransaction:

/**

* Execute operations in a transaction.

*

* @param callback the callback that performs the operations

*/

public void executeInTransaction(final TransactionExecutionCallback callback) {

try {

// Sharding must either succeed completely or fail completely, so it is performed inside a transaction

CuratorTransactionFinal curatorTransactionFinal = getClient().inTransaction().check().forPath("/").and();

callback.execute(curatorTransactionFinal);

curatorTransactionFinal.commit();

//CHECKSTYLE:OFF

} catch (final Exception ex) {

//CHECKSTYLE:ON

RegExceptionHandler.handleException(ex);

}

}

1.1.1.1.1 The core of the transaction: callback.execute(curatorTransactionFinal);

// Implementation of the transaction execution callback interface

@RequiredArgsConstructor

class PersistShardingInfoTransactionExecutionCallback implements TransactionExecutionCallback {

private final Map<JobInstance, List<Integer>> shardingResults;

@Override

public void execute(final CuratorTransactionFinal curatorTransactionFinal) throws Exception {

for (Map.Entry<JobInstance, List<Integer>> entry : shardingResults.entrySet()) {

for (int shardingItem : entry.getValue()) {

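// Assign the sharding item to a concrete job instance. This is transactional: the sharding result becomes visible only after everything succeeds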

curatorTransactionFinal.create().forPath(jobNodePath.getFullPath(ShardingNode.getInstanceNode(shardingItem)), entry.getKey().getJobInstanceId().getBytes()).and();

}

}

// After sharding completes, remove the "sharding necessary" marker

curatorTransactionFinal.delete().forPath(jobNodePath.getFullPath(ShardingNode.NECESSARY)).and();

// After sharding completes, remove the "sharding in progress" marker

curatorTransactionFinal.delete().forPath(jobNodePath.getFullPath(ShardingNode.PROCESSING)).and();

}

}
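
The all-or-nothing property can be demonstrated without ZooKeeper. The sketch below is an in-memory analogy only: `InMemoryTransactionSketch`, its methods, and the node paths are hypothetical and are not Curator's API. Operations are queued, applied to a copy of the node tree at commit time, and the copy replaces the live tree only if every operation succeeds.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;
import java.util.function.Consumer;

// Not thread-safe; intended only to illustrate atomic multi-operation commits.
final class InMemoryTransactionSketch {

    private Map<String, String> nodes = new TreeMap<>();

    final class Transaction {

        private final List<Consumer<Map<String, String>>> operations = new ArrayList<>();

        Transaction create(final String path, final String data) {
            operations.add(copy -> {
                if (copy.putIfAbsent(path, data) != null) {
                    throw new IllegalStateException("Node already exists: " + path);
                }
            });
            return this;
        }

        Transaction delete(final String path) {
            operations.add(copy -> {
                if (copy.remove(path) == null) {
                    throw new IllegalStateException("Node does not exist: " + path);
                }
            });
            return this;
        }

        void commit() {
            // Apply everything to a copy; any failure leaves the live tree untouched.
            Map<String, String> copy = new TreeMap<>(nodes);
            for (Consumer<Map<String, String>> each : operations) {
                each.accept(copy);
            }
            nodes = copy;
        }
    }

    Transaction inTransaction() {
        return new Transaction();
    }

    Map<String, String> snapshot() {
        return new TreeMap<>(nodes);
    }
}
```

In this analogy, creating the per-item instance nodes and deleting the two marker nodes would go into one transaction, so readers either see the complete new assignment with the markers gone, or the old state.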

After execution completes, the result can be seen in ZooKeeper:

get /elastic-job-demo/JobLite/sharding/0/instance

192.168.243.1@-@18444

1.1.2 Step into executionContextService.getJobShardingContext(shardingItems); for a closer look:

/**

* Get the sharding contexts for the current job server.

*

* @param shardingItems sharding items

* @return sharding contexts

*/

public ShardingContexts getJobShardingContext(final List<Integer> shardingItems) {

// Load the job configuration from ZooKeeper

LiteJobConfiguration liteJobConfig = configService.load(false);

// Remove the sharding items that are currently running

removeRunningIfMonitorExecution(liteJobConfig.isMonitorExecution(), shardingItems);

// If there are no sharding items, create sharding contexts with an empty parameter map

if (shardingItems.isEmpty()) {

// e.g. taskId=JobLite@-@<comma-separated sharding items>@-@READY@-@<instance IP>@-@484

return new ShardingContexts(buildTaskId(liteJobConfig, shardingItems), liteJobConfig.getJobName(), liteJobConfig.getTypeConfig().getCoreConfig().getShardingTotalCount(),

liteJobConfig.getTypeConfig().getCoreConfig().getJobParameter(), Collections.<Integer, String>emptyMap());

}

Map<Integer, String> shardingItemParameterMap = new ShardingItemParameters(liteJobConfig.getTypeConfig().getCoreConfig().getShardingItemParameters()).getMap();

return new ShardingContexts(buildTaskId(liteJobConfig, shardingItems), liteJobConfig.getJobName(), liteJobConfig.getTypeConfig().getCoreConfig().getShardingTotalCount(),

liteJobConfig.getTypeConfig().getCoreConfig().getJobParameter(), getAssignedShardingItemParameterMap(shardingItems, shardingItemParameterMap));

}
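
The two helper steps used above, turning the configured sharding item parameters into a map and assembling the task ID, can be sketched as follows. This assumes the parameter string has the form `0=Beijing,1=Shanghai,2=Guangzhou` and that the task ID is joined with `@-@` as in the comment above; the class and method names are hypothetical, and the instance ID in the usage note is made up.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.stream.Collectors;

final class ShardingParameterSketch {

    // Parse "item=value" pairs separated by commas into an ordered map.
    static Map<Integer, String> parse(final String shardingItemParameters) {
        Map<Integer, String> result = new LinkedHashMap<>();
        if (shardingItemParameters == null || shardingItemParameters.trim().isEmpty()) {
            return result;
        }
        for (String pair : shardingItemParameters.split(",")) {
            String[] keyValue = pair.trim().split("=", 2);
            if (keyValue.length != 2) {
                throw new IllegalArgumentException("Expected item=value but got: " + pair);
            }
            result.put(Integer.parseInt(keyValue[0].trim()), keyValue[1].trim());
        }
        return result;
    }

    // Keep only the parameters of the items assigned to this instance.
    static Map<Integer, String> assigned(final List<Integer> items, final Map<Integer, String> all) {
        Map<Integer, String> result = new LinkedHashMap<>();
        for (int each : items) {
            if (all.containsKey(each)) {
                result.put(each, all.get(each));
            }
        }
        return result;
    }

    // Join job name, items, state, and instance ID with the "@-@" delimiter.
    static String buildTaskId(final String jobName, final List<Integer> items, final String instanceId) {
        String joinedItems = items.stream().map(String::valueOf).collect(Collectors.joining(","));
        return String.join("@-@", jobName, joinedItems, "READY", instanceId);
    }
}
```

For instance, `buildTaskId("JobLite", Arrays.asList(0, 2), "127.0.0.1@-@484")` yields `JobLite@-@0,2@-@READY@-@127.0.0.1@-@484`.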

1.2 Step into execute(shardingContexts, JobExecutionEvent.ExecutionSource.NORMAL_TRIGGER);

private void execute(final ShardingContexts shardingContexts, final JobExecutionEvent.ExecutionSource executionSource) {

if (shardingContexts.getShardingItemParameters().isEmpty()) {

if (shardingContexts.isAllowSendJobEvent()) {

// Job trace events are published asynchronously with Guava, so tracing does not affect job execution and stays decoupled from it

jobFacade.postJobStatusTraceEvent(shardingContexts.getTaskId(), State.TASK_FINISHED, String.format("Sharding item for job '%s' is empty.", jobName));

}

return;

}

jobFacade.registerJobBegin(shardingContexts);

String taskId = shardingContexts.getTaskId();

if (shardingContexts.isAllowSendJobEvent()) {

jobFacade.postJobStatusTraceEvent(taskId, State.TASK_RUNNING, "");

}

try {

process(shardingContexts, executionSource);

} finally {

// TODO Consider adding a job-failed state, and consider how to handle the overall flow when a job fails

jobFacade.registerJobCompleted(shardingContexts);

if (itemErrorMessages.isEmpty()) {

if (shardingContexts.isAllowSendJobEvent()) {

jobFacade.postJobStatusTraceEvent(taskId, State.TASK_FINISHED, "");

}

} else {

if (shardingContexts.isAllowSendJobEvent()) {

jobFacade.postJobStatusTraceEvent(taskId, State.TASK_ERROR, itemErrorMessages.toString());

}

}

}

}

1.2.1 Step into process(shardingContexts, executionSource);

private void process(final ShardingContexts shardingContexts, final JobExecutionEvent.ExecutionSource executionSource) {

Collection<Integer> items = shardingContexts.getShardingItemParameters().keySet();

if (1 == items.size()) { // A single sharding item runs on the current thread

int item = shardingContexts.getShardingItemParameters().keySet().iterator().next();

JobExecutionEvent jobExecutionEvent = new JobExecutionEvent(shardingContexts.getTaskId(), jobName, executionSource, item);

process(shardingContexts, item, jobExecutionEvent);

return;

}

final CountDownLatch latch = new CountDownLatch(items.size());

for (final int each : items) { // Multiple sharding items run on multiple threads

final JobExecutionEvent jobExecutionEvent = new JobExecutionEvent(shardingContexts.getTaskId(), jobName, executionSource, each);

if (executorService.isShutdown()) {

return;

}

executorService.submit(new Runnable() {

@Override

public void run() {

try {

process(shardingContexts, each, jobExecutionEvent);

} finally {

latch.countDown();

}

}

});

}

try {

latch.await();

} catch (final InterruptedException ex) {

Thread.currentThread().interrupt();

}

}
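
The fan-out pattern above (one task per sharding item, with the caller blocked on a `CountDownLatch` until all finish) can be tried in isolation with the following sketch. `ParallelShardingSketch` and its `IntConsumer` callback are hypothetical simplifications; per-item failures are collected in a map, in the spirit of `itemErrorMessages`.

```java
import java.util.Collection;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.ExecutorService;
import java.util.function.IntConsumer;

final class ParallelShardingSketch {

    // Runs itemLogic for every item and returns the error messages keyed by item.
    static Map<Integer, String> runAll(final Collection<Integer> items, final ExecutorService executor,
                                       final IntConsumer itemLogic) {
        final Map<Integer, String> errors = new ConcurrentHashMap<>();
        if (1 == items.size()) {
            // A single item runs directly on the calling thread.
            runOne(items.iterator().next(), itemLogic, errors);
            return errors;
        }
        final CountDownLatch latch = new CountDownLatch(items.size());
        for (final int each : items) {
            executor.submit(() -> {
                try {
                    runOne(each, itemLogic, errors);
                } finally {
                    // Always count down, otherwise the caller would wait forever.
                    latch.countDown();
                }
            });
        }
        try {
            latch.await();
        } catch (final InterruptedException ex) {
            Thread.currentThread().interrupt();
        }
        return errors;
    }

    private static void runOne(final int item, final IntConsumer itemLogic, final Map<Integer, String> errors) {
        try {
            itemLogic.accept(item);
        } catch (final RuntimeException ex) {
            // One failing item must not prevent the others from completing.
            errors.put(item, String.valueOf(ex.getMessage()));
        }
    }
}
```

Counting down in `finally` is the important design choice: a failing item still releases the latch, so the trigger thread can go on to register completion and report the error.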

1.2.1.2 Step into process(shardingContexts, each, jobExecutionEvent);

private void process(final ShardingContexts shardingContexts, final int item, final JobExecutionEvent startEvent) {

if (shardingContexts.isAllowSendJobEvent()) {

jobFacade.postJobExecutionEvent(startEvent);

}

log.trace("Job '{}' executing, item is: '{}'.", jobName, item);

JobExecutionEvent completeEvent;

try {

process(new ShardingContext(shardingContexts, item));

completeEvent = startEvent.executionSuccess();

log.trace("Job '{}' executed, item is: '{}'.", jobName, item);

if (shardingContexts.isAllowSendJobEvent()) {

jobFacade.postJobExecutionEvent(completeEvent);

}

// CHECKSTYLE:OFF

} catch (final Throwable cause) {

// CHECKSTYLE:ON

completeEvent = startEvent.executionFailure(cause);

jobFacade.postJobExecutionEvent(completeEvent);

itemErrorMessages.put(item, ExceptionUtil.transform(cause));

jobExceptionHandler.handleException(jobName, cause);

}

}

1.2.1.2.1 Step into process(new ShardingContext(shardingContexts, item)); in the SimpleJobExecutor class:

@Override

protected void process(final ShardingContext shardingContext) {

simpleJob.execute(shardingContext);

}

// Stepping in once more lands back in our test case, because the test case implements SimpleJob

public void execute(ShardingContext context) {

System.out.println("job invoked");

switch (context.getShardingItem()) {

case 0:

// do something by sharding item 0

break;

case 1:

// do something by sharding item 1

break;

case 2:

// do something by sharding item 2

break;

// case n: ...

}

}

1.3 Failover: jobFacade.failoverIfNecessary(), which delegates to the FailoverService class:

@Override

public void failoverIfNecessary() {

if (configService.load(true).isFailover()) {

failoverService.failoverIfNecessary();

}

}

/**

* If failover is needed, execute job failover.

*/

public void failoverIfNecessary() {

if (needFailover()) {

// During failover, /jobName/leader/failover/latch is used to ensure that a crashed sharding item is not failed over more than once

jobNodeStorage.executeInLeader(FailoverNode.LATCH, new FailoverLeaderExecutionCallback());

}

}

// Inner class that performs the actual failover

class FailoverLeaderExecutionCallback implements LeaderExecutionCallback {

@Override

public void execute() {

if (JobRegistry.getInstance().isShutdown(jobName) || !needFailover()) {

return;

}

// Take a sharding item that needs failover from jobName/leader/failover/items

int crashedItem = Integer.parseInt(jobNodeStorage.getJobNodeChildrenKeys(FailoverNode.ITEMS_ROOT).get(0));

log.debug("Failover job '{}' begin, crashed item '{}'", jobName, crashedItem);

jobNodeStorage.fillEphemeralJobNode(FailoverNode.getExecutionFailoverNode(crashedItem), JobRegistry.getInstance().getJobInstance(jobName).getJobInstanceId());

jobNodeStorage.removeJobNodeIfExisted(FailoverNode.getItemsNode(crashedItem));

// TODO triggerJob should not be used here; scheduling should go through the executor uniformly.

JobScheduleController jobScheduleController = JobRegistry.getInstance().getJobScheduleController(jobName);

if (null != jobScheduleController) {

// Triggering the job once is enough for failover, because the next resharding will not assign items to the crashed machine

jobScheduleController.triggerJob();

}

}

}
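
The reason for running the failover callback under a latch is that each crashed item must be claimed by exactly one surviving instance. The sketch below imitates this inside one JVM: a `ReentrantLock` stands in for the distributed latch, and plain collections stand in for the failover nodes in the registry. `FailoverClaimSketch` and its methods are hypothetical names.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;
import java.util.concurrent.locks.ReentrantLock;

final class FailoverClaimSketch {

    // Stand-in for the distributed latch that serializes failover.
    private final ReentrantLock latch = new ReentrantLock();

    // Stand-in for the list of crashed items waiting for failover.
    private final List<Integer> crashedItems = new ArrayList<>();

    // Stand-in for the per-item "failed over to instance X" markers.
    private final Map<Integer, String> owners = new TreeMap<>();

    void reportCrashed(final int item) {
        latch.lock();
        try {
            crashedItems.add(item);
        } finally {
            latch.unlock();
        }
    }

    /** Claims one crashed item for the given instance, or returns -1 if none is left. */
    int claimOne(final String instanceId) {
        latch.lock();
        try {
            // Re-check under the lock: another instance may have claimed it already.
            if (crashedItems.isEmpty()) {
                return -1;
            }
            int item = crashedItems.remove(0);
            owners.put(item, instanceId);
            return item;
        } finally {
            latch.unlock();
        }
    }

    Map<Integer, String> owners() {
        latch.lock();
        try {
            return new TreeMap<>(owners);
        } finally {
            latch.unlock();
        }
    }
}
```

The re-check inside the lock corresponds to the second `needFailover()` call in `FailoverLeaderExecutionCallback`: by the time an instance obtains the latch, the item it wanted may already be gone.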