传统Hive计算引擎为MapReduce,在Spark1.3版本之后,SparkSql正式发布,并且SparkSql与apache hive基本完全兼容,基于Spark强大的计算能力,使用Spark处理hive中的数据处理速度远远比传统的Hive快。

在idea中使用SparkSql读取HIve表中的数据步骤如下

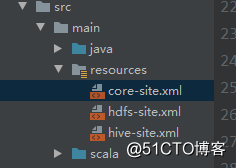

1、首先,准备测试环境,将hadoop集群conf目录下的core-site.xml、hdfs-site.xml和Hive中conf目录下hive-site.xml拷贝在resources目录下

2、pom依赖

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-core_2.11</artifactId>

<version>2.0.1</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-client</artifactId>

<version>2.7.1</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-hive_2.11</artifactId>

<version>2.0.1</version>

</dependency>

<dependency>

<groupId>mysql</groupId>

<artifactId>mysql-connector-java</artifactId>

<version>5.1.25</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-sql_2.11</artifactId>

<version>2.0.1</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-client</artifactId>

<version>2.7.1</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-common</artifactId>

<version>2.7.1</version>

</dependency>

<dependency>

<groupId>org.apache.hbase</groupId>

<artifactId>hbase-client</artifactId>

<version>1.4.11</version>

</dependency>

<dependency>

<groupId>com.alibaba</groupId>

<artifactId>fastjson</artifactId>

<version>1.2.41</version>

</dependency>

<dependency>

<groupId>org.apache.hbase</groupId>

<artifactId>hbase-server</artifactId>

<version>1.4.11</version>

</dependency>

<dependency>

<groupId>org.apache.kafka</groupId>

<artifactId>kafka_2.11</artifactId>

<version>2.2.0</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-streaming_2.11</artifactId>

<version>2.1.0</version>

</dependency>

<dependency>

<groupId>com.101tec</groupId>

<artifactId>zkclient</artifactId>

<version>0.3</version>

</dependency>

<dependency>

<groupId>com.github.sgroschupf</groupId>

<artifactId>zkclient</artifactId>

<version>0.1</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-streaming-kafka-0-10_2.11</artifactId>

<version>2.3.0</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-scala_2.11</artifactId>

<version>1.7.2</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-streaming-scala_2.11</artifactId>

<version>1.7.2</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-table_2.11</artifactId>

<version>1.7.2</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-sql_2.11</artifactId>

<version>2.0.1</version>

</dependency>3、代码开发

import org.apache.spark.sql.{DataFrame, SparkSession}

object SparkSql_Hive {

def main(args: Array[String]): Unit = {

//创建SparkSession对象

val spark = SparkSession.builder()

.appName(this.getClass.getSimpleName)

.master("local[*]")

.config("dfs.client.use.datanode.hostname", "true")

.enableHiveSupport()

.getOrCreate()

//指定库名

val sql1 = "use mydb"

spark.sql(sql1)

//查看该库下的表结构

val sql2 = "show tables"

spark.sql(sql2).show()

//读取hivemydb库下per表

val sql3 = "select * from mydb.per"

spark.sql(sql3).show()

}

}4、查看打印结果

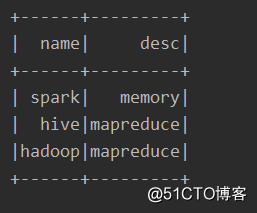

显示mydb库下的所有表

可以看到SparkSql已经读取了Hive中的数据

5、测试中遇到的问题

(1)、找不到HIve相关的类

Exception in thread "main" java.lang.IllegalArgumentException: Unable to instantiate SparkSession with Hive support because Hive classes are not found.

at org.apache.spark.sql.SparkSession$Builder.enableHiveSupport(SparkSession.scala:778)

at com.yangshou.SparkSql_Hive$.main(SparkSql_Hive.scala:12)

at com.yangshou.SparkSql_Hive.main(SparkSql_Hive.scala)通过查阅相关资料,最后认为是Spark版本不对,把pom文件中Spark2.1.0的版本改为2.0.1,最终解决问题