Stable Diffusion is a powerful AI model for image generation, but it often requires a lot of tweaking and prompt engineering. Fooocus aims to change that.

Lvmin Zhang, the creator of Fooocus (and author of the ControlNet paper), describes the project as a rethinking of the designs of Stable Diffusion and Midjourney. Fooocus is like a free, offline version of Midjourney that runs on SDXL models. In other words, it streamlines the Stable Diffusion image-generation workflow and removes much of the cumbersome configuration.

Fooocus has many optimizations and quality improvements built in and automated, so the manual setup that other interfaces require becomes automatic configuration. The goal is that, as with Midjourney, you get good results without tuning. If you want to do more, you can use Fooocus' Advanced tab, for example to adjust image sharpness or load a custom LoRA.

In this post, we will show how to run Fooocus locally and on Colab.

Run on Windows

Just download the file, unzip it, and run run.bat. It's that simple.

On the first run, Fooocus automatically downloads the models. If you already have the files below, you can copy them into the Fooocus models/checkpoints folder to speed up installation.

- sd_xl_base_1.0_0.9vae.safetensors

- sd_xl_refiner_1.0_0.9vae.safetensors
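If the two checkpoint files are already on disk, a small script can copy them into place before the first run. This is a minimal sketch and not part of Fooocus: the function name `copy_existing_models` and both directory arguments are hypothetical, and the destination is assumed to be the `models/checkpoints` folder inside your Fooocus directory.

```python
import shutil
from pathlib import Path

# File names taken from the list above.
MODEL_FILES = [
    "sd_xl_base_1.0_0.9vae.safetensors",
    "sd_xl_refiner_1.0_0.9vae.safetensors",
]


def copy_existing_models(source_dir, checkpoints_dir, names=MODEL_FILES):
    """Copy any model files found in source_dir into checkpoints_dir.

    Returns the list of file names that were copied. Files missing from
    source_dir, or already present at the destination, are skipped.
    """
    source = Path(source_dir)
    dest = Path(checkpoints_dir)
    dest.mkdir(parents=True, exist_ok=True)
    copied = []
    for name in names:
        src_file = source / name
        dst_file = dest / name
        if src_file.is_file() and not dst_file.exists():
            shutil.copy2(src_file, dst_file)
            copied.append(name)
    return copied
```

Calling `copy_existing_models("D:/downloads", "Fooocus/models/checkpoints")` (both paths are placeholders) returns the names it copied, so an empty list means there was nothing new to copy.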

Fooocus runs well on a system with 16GB of RAM and 6GB of VRAM; the picture below is from GitHub.

The minimum requirements are an Nvidia GPU with 4GB of VRAM and 8GB of system RAM.
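To make the two sets of figures concrete, the sketch below compares a machine's memory against them. The thresholds are the ones quoted in this post; the function name `classify_hardware` and its three labels are illustrative only and do not come from Fooocus.

```python
# Figures quoted in this post, in GB.
MIN_RAM_GB, MIN_VRAM_GB = 8, 4
COMFORTABLE_RAM_GB, COMFORTABLE_VRAM_GB = 16, 6


def classify_hardware(ram_gb, vram_gb):
    """Classify a machine against the RAM/VRAM figures quoted in the post."""
    if ram_gb < MIN_RAM_GB or vram_gb < MIN_VRAM_GB:
        return "below minimum"
    if ram_gb >= COMFORTABLE_RAM_GB and vram_gb >= COMFORTABLE_VRAM_GB:
        return "comfortable"
    # Meets the minimum but not both of the higher figures.
    return "minimum"
```

Note that both values must reach the higher figures to count as "comfortable": a machine with 32GB of RAM but only 4GB of VRAM still lands in the "minimum" tier.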

Run on Linux

On Linux it will be even simpler:

git clone https://github.com/lllyasviel/Fooocus.git

cd Fooocus

conda env create -f environment.yaml

conda activate fooocus

pip install -r requirements_versions.txt

As on Windows, you can download the models in advance to speed up the process. The start command becomes:

python launch.py

Or, if you want to accept remote connections, use the --listen parameter:

python launch.py --listen
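After starting with `--listen`, it can be useful to confirm from another machine that the server is reachable. The sketch below is a generic TCP check using only the Python standard library. It is not a Fooocus feature, and the host and port are assumptions you must supply yourself (use the address and port printed in the launch output).

```python
import socket


def is_port_open(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False
```

If this returns `False` from a remote machine while the interface works locally, a firewall rule is a likely cause.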

Run on Google Colab

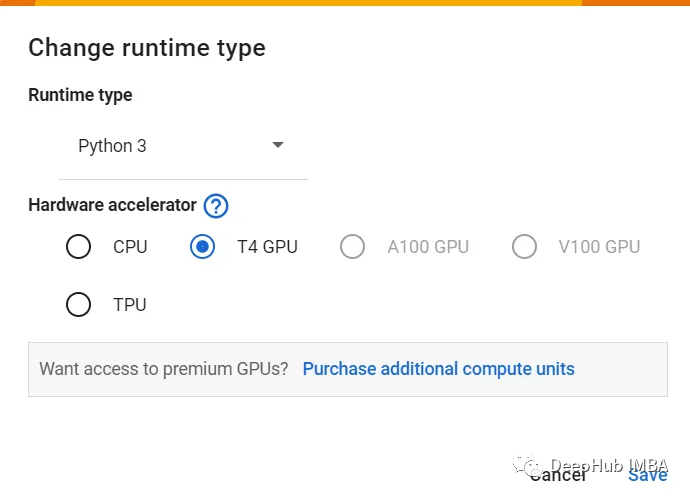

Fooocus needs a GPU, so we choose the T4 GPU runtime here, which is sufficient.

Then run the following commands. Because of the downloads and installation, they may take some time to complete, but Colab's download speed is very fast, so we don't need to upload the models ourselves.

%cd /content

!git clone https://github.com/lllyasviel/Fooocus

!apt -y update -qq

!wget https://github.com/camenduru/gperftools/releases/download/v1.0/libtcmalloc_minimal.so.4 -O /content/libtcmalloc_minimal.so.4

%env LD_PRELOAD=/content/libtcmalloc_minimal.so.4

!pip install torchsde==0.2.5 einops==0.4.1 transformers==4.30.2 safetensors==0.3.1 accelerate==0.21.0

!pip install pytorch_lightning==1.9.4 omegaconf==2.2.3 gradio==3.39.0 xformers==0.0.20 triton==2.0.0 pygit2==1.12.2

!apt -y install -qq aria2

!aria2c --console-log-level=error -c -x 16 -s 16 -k 1M https://huggingface.co/ckpt/sd_xl_base_1.0/resolve/main/sd_xl_base_1.0_0.9vae.safetensors -d /content/Fooocus/models/checkpoints -o sd_xl_base_1.0_0.9vae.safetensors

!aria2c --console-log-level=error -c -x 16 -s 16 -k 1M https://huggingface.co/ckpt/sd_xl_refiner_1.0/resolve/main/sd_xl_refiner_1.0_0.9vae.safetensors -d /content/Fooocus/models/checkpoints -o sd_xl_refiner_1.0_0.9vae.safetensors

!aria2c --console-log-level=error -c -x 16 -s 16 -k 1M https://huggingface.co/stabilityai/stable-diffusion-xl-base-1.0/resolve/main/sd_xl_offset_example-lora_1.0.safetensors -d /content/Fooocus/models/loras -o sd_xl_offset_example-lora_1.0.safetensors

%cd /content/Fooocus

!git pull

!python launch.py --share
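The three aria2c lines above differ only in the URL and the target folder, so they can be generated from one helper. The sketch below is an illustration, not part of the original notebook; `build_aria2c_command` is a hypothetical name, and the output file name is assumed to be the last path segment of the URL, as it is in all three commands.

```python
from pathlib import PurePosixPath
from urllib.parse import urlparse


def build_aria2c_command(url, dest_dir):
    """Build the aria2c argument list for one download.

    The flags mirror the commands above: -c resumes a partial download,
    -x 16 and -s 16 allow up to 16 connections and 16 splits, and
    -k 1M sets the minimum split size.
    """
    # Assumption: the file is saved under the last segment of the URL path.
    filename = PurePosixPath(urlparse(url).path).name
    return [
        "aria2c", "--console-log-level=error",
        "-c", "-x", "16", "-s", "16", "-k", "1M",
        url, "-d", dest_dir, "-o", filename,
    ]
```

In a notebook cell, the returned list can be passed to `subprocess.run(..., check=True)`, which raises an error if a download fails instead of continuing silently.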

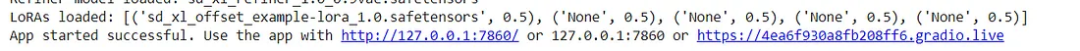

When it completes, you should see a link similar to the one shown in the screenshot.

Click the gradio.live link on the right to open the interface. For finer control, more settings are available under the Advanced option.

Summary

Fooocus is much more convenient to operate than AUTOMATIC1111, and installation is simple as well. Take a look at the results I generated.

Finally, more detailed information on GitHub can be found here:

https://avoid.overfit.cn/post/7428cf29b9bd438e9948178252bf9ee5