foreword

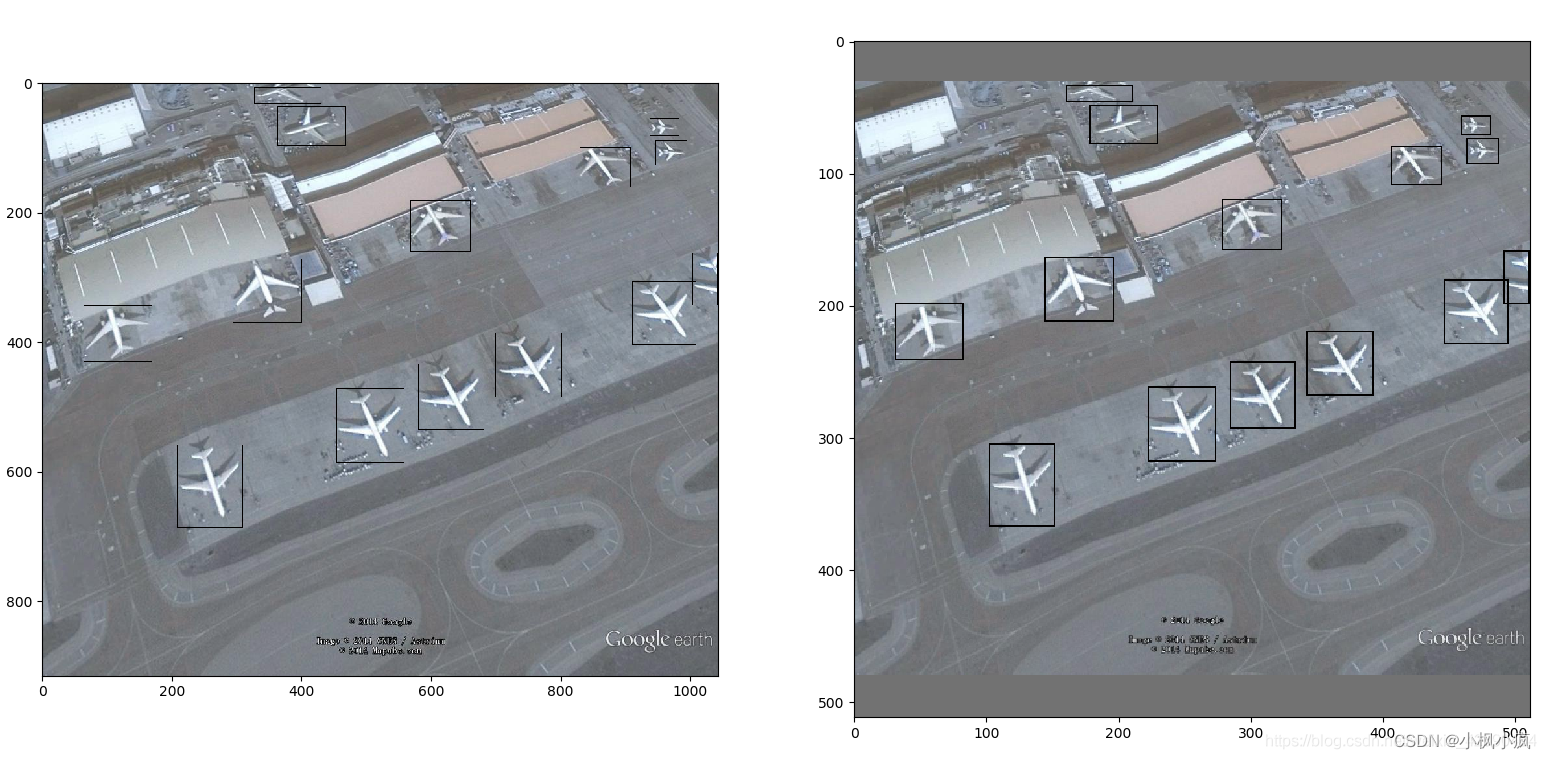

The size of the input picture of the deep learning model is square, while the pictures in the data set are generally rectangular. Rough resizing will distort the picture. Using letterbox can better solve this problem. This method can keep the aspect ratio of the picture, and fill the rest with gray

import cv2

import numpy as np

import xml.etree.ElementTree as ET

class_dict = {'aircraft': 1}

def letterbox(img, new_shape=(640, 640), color=(114, 114, 114), auto=True, scaleFill=False, scaleup=True):

# Resize image to a 32-pixel-multiple rectangle https://github.com/ultralytics/yolov3/issues/232

shape = img.shape[:2] # current shape [height, width]

if isinstance(new_shape, int):

new_shape = (new_shape, new_shape)

# Scale ratio (new / old)

r = min(new_shape[0] / shape[0], new_shape[1] / shape[1])

if not scaleup: # only scale down, do not scale up (for better test mAP)

r = min(r, 1.0)

# Compute padding

ratio = r, r # width, height ratios

new_unpad = int(round(shape[1] * r)), int(round(shape[0] * r))

dw, dh = new_shape[1] - new_unpad[0], new_shape[0] - new_unpad[1] # wh padding

if auto: # minimum rectangle

dw, dh = np.mod(dw, 64), np.mod(dh, 64) # wh padding

elif scaleFill: # stretch

dw, dh = 0.0, 0.0

new_unpad = (new_shape[1], new_shape[0])

ratio = new_shape[1] / shape[1], new_shape[0] / shape[0] # width, height ratios

dw /= 2 # divide padding into 2 sides

dh /= 2

if shape[::-1] != new_unpad: # resize

img = cv2.resize(img, new_unpad, interpolation=cv2.INTER_LINEAR)

top, bottom = int(round(dh - 0.1)), int(round(dh + 0.1))

left, right = int(round(dw - 0.1)), int(round(dw + 0.1))

img = cv2.copyMakeBorder(img, top, bottom, left, right, cv2.BORDER_CONSTANT, value=color) # add border

return img, ratio, (dw, dh)

def parse_xml(path):

tree = ET.parse(path)

root = tree.findall('object')

class_list = []

boxes_list = []

difficult_list = []

for sub in root:

xmin = float(sub.find('bndbox').find('xmin').text)

xmax = float(sub.find('bndbox').find('xmax').text)

ymin = float(sub.find('bndbox').find('ymin').text)

ymax = float(sub.find('bndbox').find('ymax').text)

boxes_list.append([xmin, ymin, xmax, ymax])

class_list.append(class_dict[sub.find('name').text])

difficult_list.append(int(sub.find('difficult').text))

return np.array(class_list), np.array(boxes_list).astype(np.int32)

if __name__=="__main__":

import glob

import matplotlib.pyplot as plt

imglist = glob.glob(f'./JPEGImages/*.jpg')

shape = (512, 512)

imgPath = imglist[0]

xmlPath = imgPath[:-3] + 'xml'

img = cv2.imread(imgPath)

labels, boxes = parse_xml(xmlPath.replace('JPEGImages', 'Annotation/xml'))

img2, ratio, pad = letterbox(img.copy(), shape, auto=False, scaleup=False)

new_boxes = np.zeros_like(boxes)

new_boxes[:, 0] = ratio[0] * boxes[:, 0] + pad[0] # pad width

new_boxes[:, 1] = ratio[1] * boxes[:, 1] + pad[1] # pad height

new_boxes[:, 2] = ratio[0] * boxes[:, 2] + pad[0]

new_boxes[:, 3] = ratio[1] * boxes[:, 3] + pad[1]

sample1 = img.copy()

for box in boxes:

cv2.rectangle(sample1, (box[0], box[1]), (box[2], box[3]), (1, 0, 0), 1)

sample2 = img2.copy()

for box in new_boxes:

cv2.rectangle(sample2, (box[0], box[1]), (box[2], box[3]), (1, 0, 0), 1)

plt.subplot(121)

plt.imshow(sample1)

plt.subplot(122)

plt.imshow(sample2)

plt.show()