
Foreword

My graphics card is an RTX 3060 Ti (G6X) and my operating system is Windows 10.

1. Basic knowledge

Keep the following basics in mind.

1. GPUs and CPUs are designed with different architectures. Put simply, a GPU has far more computing units than a CPU, so it is much faster at the highly parallel arithmetic used to train neural networks.

2. CUDA is a computing platform and programming model that provides an interface for operating GPUs.

3. The installation of CUDA mentioned in many online tutorials actually refers to CUDA Toolkit, which is a toolkit.

4. cuDNN is a CUDA-based GPU acceleration library for deep learning. It provides optimized GPU implementations of common deep learning operations.

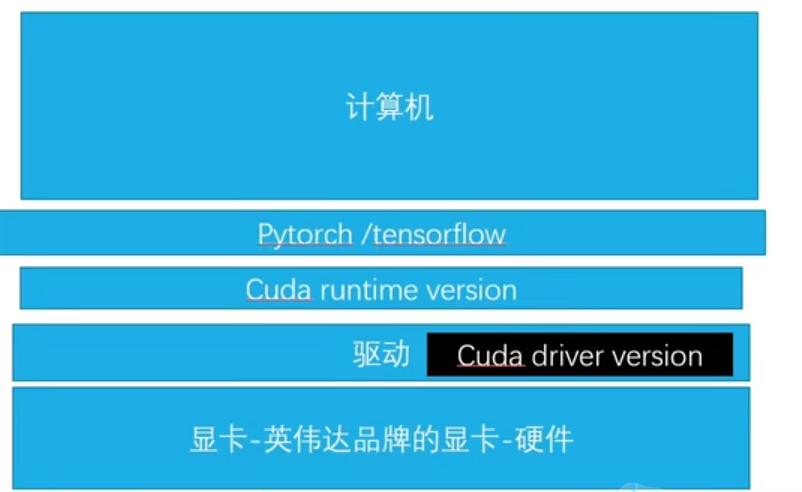

5. Distinguish between the CUDA runtime version and the CUDA driver version.

The picture here is borrowed from a video uploader.

When you run nvidia-smi on the command line, the CUDA version shown in the red box in the picture is the CUDA driver version. It is determined by the graphics card driver and is the highest CUDA version that driver supports.

When tutorials talk about installing the matching version of CUDA, they mean the CUDA runtime version, which can be understood as the version of the CUDA Toolkit.

6. Many tutorials download the CUDA Toolkit and cuDNN separately. For novices this is not necessary: after creating a virtual environment with Anaconda, you can run the PyTorch installation command directly inside it, and the CUDA Toolkit and cuDNN will be installed automatically into that virtual environment.
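Because the install command puts cudatoolkit and cuDNN into whichever environment is active, it can help to confirm which environment your Python interpreter belongs to before installing anything. The following is a minimal sketch: `environment_summary` is a hypothetical helper name, and it relies on the `CONDA_DEFAULT_ENV` and `CONDA_PREFIX` variables that conda sets when an environment is activated.

```python
import os
import sys


def environment_summary(environ=None) -> dict:
    """Describe the interpreter and the active conda environment, if any.

    `environ` defaults to os.environ; passing a dict makes the helper easy to test.
    """
    env = os.environ if environ is None else environ
    return {
        "python_executable": sys.executable,            # path of the running interpreter
        "interpreter_prefix": sys.prefix,               # root folder of that interpreter
        "conda_env_name": env.get("CONDA_DEFAULT_ENV"),  # None if no conda env is active
        "conda_env_path": env.get("CONDA_PREFIX"),       # None if no conda env is active
    }


if __name__ == "__main__":
    for key, value in environment_summary().items():
        print(f"{key}: {value}")
```

If `conda_env_name` is `None` or `base`, the virtual environment you created is probably not activated yet.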

2. Installation steps

1. First determine whether you have an Nvidia graphics card

If not, just install the CPU version.

If yes, go to the next step.

2. Install or check your graphics card driver

Check the driver corresponding to your graphics card at the following URL.

It is best to update your graphics card driver to the latest version.

https://www.nvidia.cn/Download/index.aspx?lang=cn

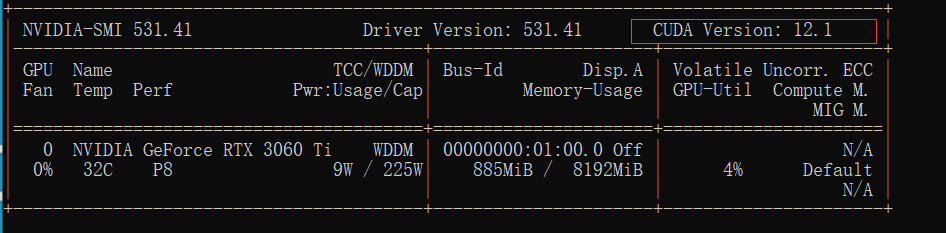

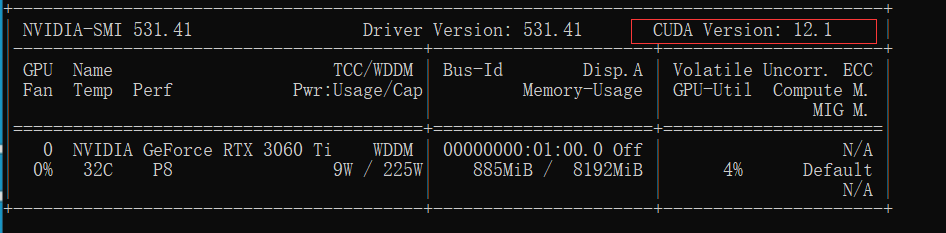

After the driver is installed, enter the following in cmd:

nvidia-smi

As a reminder, the CUDA Version: 12.1 shown here means that the CUDA driver version is 12.1, which is determined by the graphics card driver.
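If you would rather read the driver's CUDA version from a script than from the screen, the field can be pulled out of the nvidia-smi text. This is a minimal sketch, assuming the output contains a `CUDA Version: X.Y` field as in the screenshot. The helper names are hypothetical, and the sample header line is made up for illustration (its driver number is a placeholder).

```python
import re
import shutil
import subprocess
from typing import Optional, Tuple


def parse_driver_cuda_version(smi_text: str) -> Optional[Tuple[int, int]]:
    """Return (major, minor) from a 'CUDA Version: X.Y' field, or None if absent."""
    match = re.search(r"CUDA Version:\s*(\d+)\.(\d+)", smi_text)
    if match is None:
        return None
    return int(match.group(1)), int(match.group(2))


def read_driver_cuda_version() -> Optional[Tuple[int, int]]:
    """Run nvidia-smi if it is on PATH; return None when it is missing or fails."""
    if shutil.which("nvidia-smi") is None:
        return None
    try:
        result = subprocess.run(
            ["nvidia-smi"], capture_output=True, text=True, timeout=30
        )
    except (OSError, subprocess.SubprocessError):
        return None
    if result.returncode != 0:
        return None
    return parse_driver_cuda_version(result.stdout)


if __name__ == "__main__":
    # Made-up header line shaped like the screenshot; 000.00 is a placeholder.
    sample = "| NVIDIA-SMI 000.00   Driver Version: 000.00   CUDA Version: 12.1 |"
    print(parse_driver_cuda_version(sample))  # (12, 1)
    print(read_driver_cuda_version())         # None on machines without nvidia-smi
```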

3. The compute capability of the graphics card must match the CUDA runtime version

To be clear, the CUDA runtime version here is the version of the CUDA Toolkit, that is, the "CUDA version" that many online tutorials refer to. It is not the driver version from step 2.

Check the compute capability of your graphics card and the matching CUDA runtime versions at the following URL:

https://en.wikipedia.org/wiki/CUDA#cite_note-38

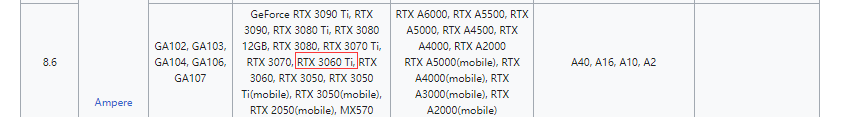

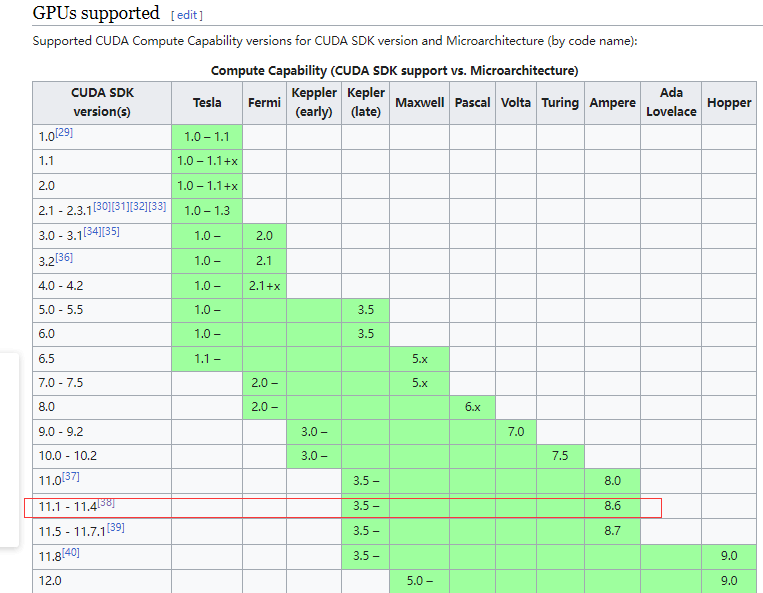

The compute capability of the 3060 Ti is 8.6.

Then look up compute capability 8.6 in the table.

The CUDA runtime versions listed for it are 11.1-11.4. By the way, Ampere in the figure is the name of the architecture the graphics card uses.

4. Select an appropriate CUDA runtime version based on the two checks above

The selected version should meet two requirements:

(1) The CUDA runtime version must be less than or equal to the CUDA driver version. In my case, it must be less than or equal to 12.1.

(2) Within the limit set by (1), the version must also match the compute capability of the graphics card.

That is, in my example you can choose 11.1-11.4. I do not know whether CUDA runtime versions newer than 11.4 but no newer than 12.1 would also work with this card, because I have not tried them. If you know, you are welcome to discuss it in the comment area.
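The two requirements can be written as a small check. This is a minimal sketch, assuming versions are plain dotted strings such as "11.3". The function names are hypothetical, and the 11.1-11.4 range is simply the one read from the table in this example, not an authoritative compatibility list.

```python
from typing import Tuple


def parse_version(text: str) -> Tuple[int, ...]:
    """Turn '11.3' into (11, 3) so versions compare numerically, not as strings."""
    return tuple(int(part) for part in text.strip().split("."))


def runtime_is_acceptable(runtime: str, driver: str, lowest: str, highest: str) -> bool:
    """Apply both requirements from this step.

    (1) runtime <= driver version reported by nvidia-smi
    (2) lowest <= runtime <= highest, the range looked up for the card
    """
    r = parse_version(runtime)
    within_driver = r <= parse_version(driver)
    within_range = parse_version(lowest) <= r <= parse_version(highest)
    return within_driver and within_range


if __name__ == "__main__":
    # Values from this article: driver 12.1, looked-up range 11.1-11.4.
    for candidate in ["11.0", "11.3", "11.7", "12.2"]:
        print(candidate, runtime_is_acceptable(candidate, "12.1", "11.1", "11.4"))
    # 11.0 False
    # 11.3 True
    # 11.7 False
    # 12.2 False
```

Comparing tuples of integers avoids the string-comparison trap where "11.10" would sort before "11.4".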

5. Download PyTorch

PyTorch official website:

https://pytorch.org/

The home page only offers builds for CUDA 11.7 and 11.8, which do not meet my requirements. Click "install previous versions" on that page to find a suitable version.

According to the steps above, the versions that satisfy the conditions are 11.1-11.4. There is no build for 11.4, so I looked for 11.3 and ran the following command, with the source changed to a mirror, to install PyTorch:

conda install pytorch==1.12.1 torchvision==0.13.1 torchaudio==0.12.1 cudatoolkit=11.3 -c http://mirrors.tuna.tsinghua.edu.cn/anaconda/cloud/pytorch/win-64

Compared with the official command, the pytorch channel at the end has been replaced with the Tsinghua mirror.

The installation window had already closed, so I could not take a screenshot of the process.

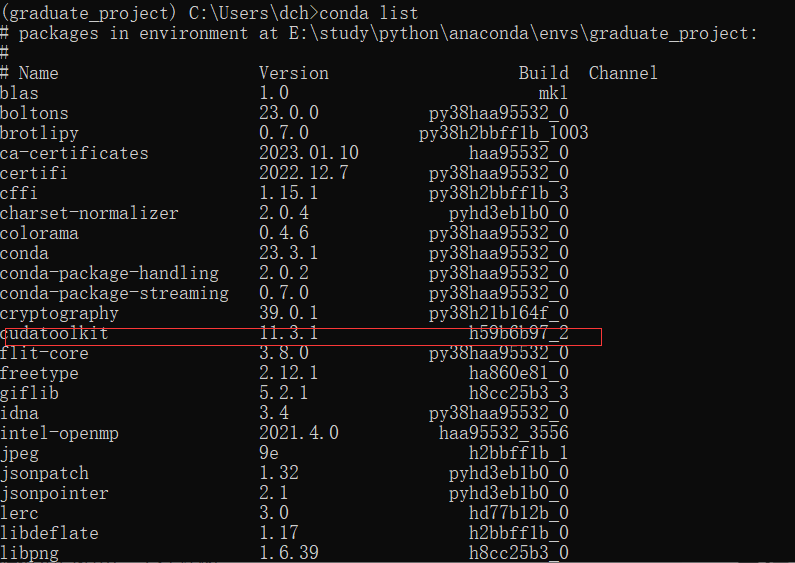

After the installation is complete, enter conda list

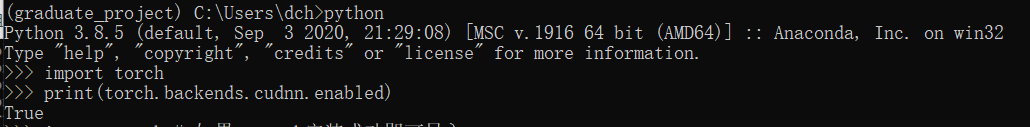

You can see that cudatoolkit is already installed. cuDNN, however, does not appear in the conda list output. According to what I found online, it is bundled with PyTorch. You can verify this with the following commands:

import torch

print(torch.backends.cudnn.enabled)

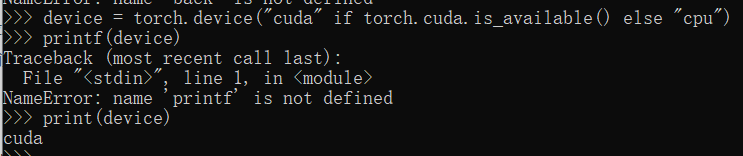
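The `enabled` flag only says that PyTorch is allowed to use cuDNN. For a slightly fuller picture, the sketch below also asks whether cuDNN is actually available and which version is bundled. `cudnn_report` is a hypothetical helper name, and it returns a short report instead of failing when PyTorch is not installed.

```python
def cudnn_report() -> dict:
    """Collect cuDNN information from PyTorch, or note that PyTorch is missing."""
    try:
        import torch
    except (ImportError, OSError):
        # PyTorch is not installed (or failed to load) in this environment.
        return {"torch_installed": False}
    return {
        "torch_installed": True,
        # True only when this PyTorch build can actually use cuDNN.
        "cudnn_available": torch.backends.cudnn.is_available(),
        # The flag printed in the article; it controls whether cuDNN is used.
        "cudnn_enabled": torch.backends.cudnn.enabled,
        # Integer version of the bundled cuDNN, or None if unavailable.
        "cudnn_version": torch.backends.cudnn.version(),
    }


if __name__ == "__main__":
    for key, value in cudnn_report().items():
        print(f"{key}: {value}")
```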

Finally, verify that CUDA works by entering the following code:

import torch

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

print(device)

# Prints cuda if the installation succeeded

# Or

print(torch.cuda.is_available())

# Prints True if the installation succeeded
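To tie the earlier steps together, the same check can be extended to report the CUDA runtime version PyTorch was built with, the card's compute capability, and the result of a tiny computation on the GPU. This is a minimal sketch; `gpu_report` is a hypothetical helper name, and on a machine without PyTorch or without a usable GPU it simply reports that instead of raising.

```python
def gpu_report() -> dict:
    """Summarize the PyTorch / CUDA setup of the current environment."""
    try:
        import torch
    except (ImportError, OSError):
        return {"torch_installed": False, "cuda_available": False}

    report = {
        "torch_installed": True,
        "torch_version": torch.__version__,
        # CUDA runtime (toolkit) version of this build; None for CPU-only builds.
        "cuda_runtime": torch.version.cuda,
        "cuda_available": torch.cuda.is_available(),
    }
    if report["cuda_available"]:
        report["device_name"] = torch.cuda.get_device_name(0)
        # (major, minor) tuple, e.g. compute capability 8.6 is (8, 6).
        report["compute_capability"] = torch.cuda.get_device_capability(0)
        # Tiny matrix product on the GPU: a 2x2 matrix of ones times itself
        # gives four entries equal to 2, so the sum should be 8.0.
        x = torch.ones(2, 2, device="cuda")
        report["gpu_sum"] = float((x @ x).sum().item())
    return report


if __name__ == "__main__":
    for key, value in gpu_report().items():
        print(f"{key}: {value}")
```

With the setup described in this article, `cuda_runtime` should show the toolkit version chosen in step 5, which is a different number from the driver version reported by nvidia-smi.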