Introduction to K8S

K8S (Kubernetes) is a leading solution for distributed architecture based on container technology. It is an open-source system that grew out of Google's Borg system.

In essence, K8S is a cluster of servers. It runs specific programs on each node of the cluster to manage the containers on that node. Its goal is automated resource management, and it mainly provides the following functions:

Automatic repair: when a container crashes, K8S quickly starts a new container to replace it, in about a second

Elastic scaling: the number of containers running in the cluster can be adjusted automatically as needed

Service discovery: A service can find the services it depends on through automatic discovery

Load balancing: if a service starts multiple containers, requests are automatically load-balanced across them

Storage orchestration: storage volumes can be created automatically according to the needs of the container
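Automatic repair and elastic scaling can both be read as the same idea: compare the desired number of containers with what is actually running, then act on the difference. Below is a minimal Python sketch of that idea. It is not Kubernetes code; `reconcile` and the container names are hypothetical and exist only for illustration.

```python
def reconcile(desired: int, running: list[str]) -> list[str]:
    """Return the actions needed to move from `running` to `desired` replicas.

    Toy model: `running` lists the names of healthy containers only,
    so a crashed container simply disappears from the list.
    """
    actions = []
    diff = desired - len(running)
    if diff > 0:
        # Too few containers (a crash or a scale-up): start replacements.
        actions += [f"start replica-{i}" for i in range(diff)]
    elif diff < 0:
        # Too many containers (a scale-down): stop the surplus ones.
        actions += [f"stop {name}" for name in running[desired:]]
    return actions


# One of three containers crashed: one replacement is requested.
print(reconcile(3, ["web-a", "web-b"]))
# Scaling down from three to one: the last two are stopped.
print(reconcile(1, ["web-a", "web-b", "web-c"]))
```

When the running count already matches the desired count, the function returns an empty list, which is the "nothing to do" case.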

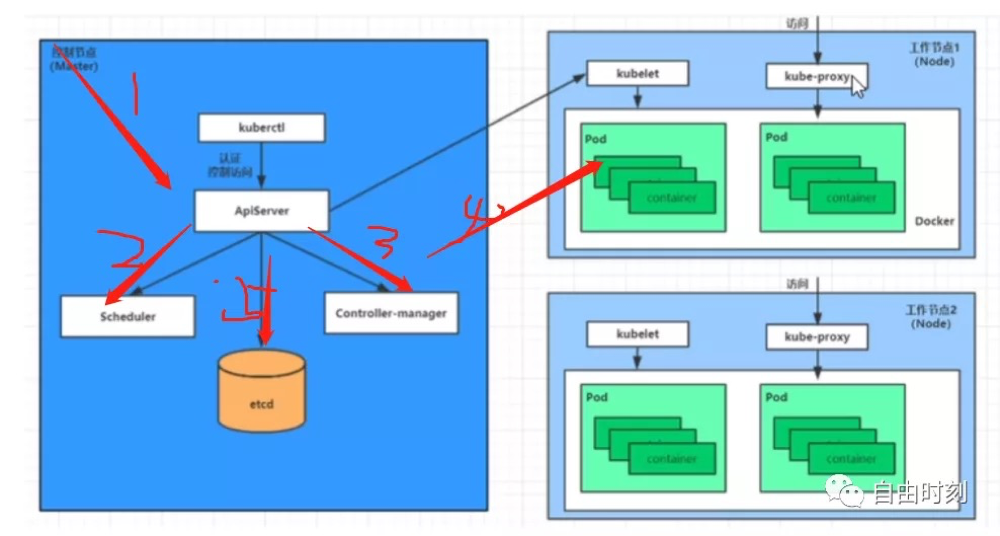

K8S components

master node: the control node (control plane) of the cluster

ApiServer: the single entry point for resource operations. It receives the commands users enter and provides mechanisms such as authentication, authorization, API registration, and discovery

Scheduler: responsible for cluster resource scheduling. It schedules pods onto suitable nodes according to the configured scheduling policy

ControllerManager: responsible for maintaining the state of the cluster, such as deployment orchestration, fault detection, automatic scaling, and rolling updates

Etcd: responsible for storing various resource object information in the cluster
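To make the Scheduler's role more concrete, here is a toy Python sketch of placing a pod on a node: first drop the nodes that lack enough free CPU, then pick the one with the most CPU left. The `Node` type, the `pick_node` function, and the "most free CPU" rule are all assumptions made for illustration; the real scheduler applies its own configurable policies.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    name: str
    free_cpu_millis: int  # hypothetical unit: thousandths of a CPU core


def pick_node(nodes: list[Node], pod_cpu_millis: int) -> Optional[str]:
    """Return the name of the chosen node, or None if the pod fits nowhere."""
    # Step 1: keep only the nodes that can hold the pod.
    candidates = [n for n in nodes if n.free_cpu_millis >= pod_cpu_millis]
    if not candidates:
        return None  # in this toy model the pod stays unscheduled
    # Step 2: prefer the node with the most free CPU.
    best = max(candidates, key=lambda n: n.free_cpu_millis)
    return best.name


nodes = [Node("node1", 500), Node("node2", 1500), Node("node3", 200)]
print(pick_node(nodes, 400))   # node2
print(pick_node(nodes, 2000))  # None
```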

node: the data plane of the cluster, responsible for providing the running environment for containers

Kubelet: responsible for maintaining the life cycle of containers; that is, it creates, updates, and destroys containers by controlling Docker

KubeProxy: responsible for providing service discovery and load balancing within the cluster

Docker: responsible for various operations of the container on the node
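The load balancing that KubeProxy provides can be pictured as spreading the requests for one service across the pods behind it. A toy round-robin sketch in Python follows. The class name and pod addresses are made up, and kube-proxy itself works through kernel-level forwarding rules rather than application code like this.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Hands out backend addresses in turn (illustrative only)."""

    def __init__(self, backends: list[str]):
        if not backends:
            raise ValueError("at least one backend is required")
        self._next = cycle(backends)

    def pick(self) -> str:
        # Each call returns the next backend, wrapping around at the end.
        return next(self._next)


# Hypothetical pod IPs behind a single service.
lb = RoundRobinBalancer(["10.0.0.1", "10.0.0.2", "10.0.0.3"])
print([lb.pick() for _ in range(4)])
# ['10.0.0.1', '10.0.0.2', '10.0.0.3', '10.0.0.1']
```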

K8S concepts

master: the cluster control node. Each cluster needs at least one master node, which is responsible for managing and controlling the cluster

node: a workload (worker) node. The master assigns containers to these worker nodes, and Docker on each node is then responsible for running the containers

Pod: the smallest unit that K8S controls. Containers run inside pods, and a pod can hold one or more containers

controller: manages pods, for example starting pods, stopping pods, and scaling the number of pods

service: the unified entry point through which pods provide services externally. One service can sit in front of multiple pods of the same type

label: used to classify pods; pods of the same type carry the same label

namespace: used to isolate the running environments of pods
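The relationship between service, label, and namespace can be sketched as a lookup: a service picks the pods in its namespace whose labels match its selector. The Python below is an illustrative model only. `Pod` and `select_pods` are hypothetical names, and it handles simple equality matching and nothing more.

```python
from dataclasses import dataclass, field


@dataclass
class Pod:
    name: str
    namespace: str
    labels: dict[str, str] = field(default_factory=dict)


def select_pods(pods: list[Pod], namespace: str, selector: dict[str, str]) -> list[str]:
    """Names of pods in `namespace` whose labels contain every selector pair."""
    return [
        p.name
        for p in pods
        if p.namespace == namespace
        and all(p.labels.get(k) == v for k, v in selector.items())
    ]


pods = [
    Pod("web-1", "dev", {"app": "web", "tier": "frontend"}),
    Pod("web-2", "dev", {"app": "web", "tier": "frontend"}),
    Pod("db-1", "dev", {"app": "db"}),
    Pod("web-3", "prod", {"app": "web"}),
]
print(select_pods(pods, "dev", {"app": "web"}))  # ['web-1', 'web-2']
```

Note that `web-3` carries the same label but is left out because it lives in a different namespace, which is the isolation the namespace concept describes.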

Note: this is not original content. These are notes typed out word by word while following the Dark Horse video course.

Video address: https://www.bilibili.com/video/BV1xX4y1K7nb?p=2