I. Anaconda3 + Python 3 + OpenCV 3.4

Main reference blog: https://blog.csdn.net/ling_xiobai/article/details/82082614

OpenCV 3.4 can be downloaded and installed automatically through Anaconda3 with pip install ... (there are many guides online).

Next, download the yolov3.weights weight file, the yolov3.cfg network configuration file, and the coco.names class-name list (you can download darknet_master, which contains coco.names).
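Before running anything, it can help to confirm that all three files are where the script will look for them. The following is a minimal sketch, not part of the original tutorial; the helper name `missing_model_files` and the `folder` argument are assumptions made for illustration.

```python
from pathlib import Path

# File names taken from the text above.
REQUIRED_FILES = ("yolov3.weights", "yolov3.cfg", "coco.names")


def missing_model_files(folder="."):
    """Return the required file names that are not present in `folder`."""
    base = Path(folder)
    return [name for name in REQUIRED_FILES if not (base / name).is_file()]


if __name__ == "__main__":
    missing = missing_model_files(".")
    if missing:
        print("Missing files:", ", ".join(missing))
    else:
        print("All model files found.")
```

Run it from the folder that will hold the detection script; an empty result means all three files are in place.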

Create a new .py file (I named mine yolo.py) and paste in the following code:

# This code is written at BigVision LLC. It is based on the OpenCV project. It is subject to the license terms in the LICENSE file found in this distribution and at http://opencv.org/license.html
# Usage example: python3 object_detection_yolo.py --video=run.mp4
#                python3 object_detection_yolo.py --image=bird.jpg
import cv2 as cv
import argparse
import sys
import numpy as np
import os.path

# Initialize the parameters
confThreshold = 0.5  # Confidence threshold
nmsThreshold = 0.4   # Non-maximum suppression threshold
inpWidth = 320       # Width of network's input image; 320x320 is faster
inpHeight = 320      # Height of network's input image; 608x608 is more accurate

parser = argparse.ArgumentParser(description='Object Detection using YOLO in OPENCV')
parser.add_argument('--image', help='Path to image file.')
parser.add_argument('--video', help='Path to video file.')
args = parser.parse_args()

# Load names of classes
classesFile = "coco.names"
classes = None
with open(classesFile, 'rt') as f:
    classes = f.read().rstrip('\n').split('\n')

# Give the configuration and weight files for the model and load the network using them.
modelConfiguration = "yolov3.cfg"
modelWeights = "yolov3.weights"
net = cv.dnn.readNetFromDarknet(modelConfiguration, modelWeights)
net.setPreferableBackend(cv.dnn.DNN_BACKEND_OPENCV)
# To try the GPU, switch the target to cv.dnn.DNN_TARGET_OPENCL. Only Intel GPUs
# are supported; without one, OpenCV falls back to the CPU automatically.
net.setPreferableTarget(cv.dnn.DNN_TARGET_CPU)

# Get the names of the output layers
def getOutputsNames(net):
    # Get the names of all the layers in the network
    layersNames = net.getLayerNames()
    # Get the names of the output layers, i.e. the layers with unconnected outputs.
    # flatten() accepts both the Nx1 array returned by OpenCV 3.4 and the flat array of newer releases.
    return [layersNames[i - 1] for i in np.array(net.getUnconnectedOutLayers()).flatten()]

# Draw the predicted bounding box
def drawPred(classId, conf, left, top, right, bottom):
    # Draw a bounding box.
    cv.rectangle(frame, (left, top), (right, bottom), (255, 178, 50), 3)

    label = '%.2f' % conf

    # Get the label for the class name and its confidence
    if classes:
        assert(classId < len(classes))
        label = '%s:%s' % (classes[classId], label)

    # Display the label at the top of the bounding box
    labelSize, baseLine = cv.getTextSize(label, cv.FONT_HERSHEY_SIMPLEX, 0.5, 1)
    top = max(top, labelSize[1])
    cv.rectangle(frame, (left, top - round(1.5*labelSize[1])), (left + round(1.5*labelSize[0]), top + baseLine), (255, 255, 255), cv.FILLED)
    cv.putText(frame, label, (left, top), cv.FONT_HERSHEY_SIMPLEX, 0.75, (0,0,0), 1)

# Remove the bounding boxes with low confidence using non-maxima suppression
def postprocess(frame, outs):
    frameHeight = frame.shape[0]
    frameWidth = frame.shape[1]

    # Scan through all the bounding boxes output from the network and keep only the
    # ones with high confidence scores. Assign the box's class label as the class with the highest score.
    classIds = []
    confidences = []
    boxes = []
    for out in outs:
        for detection in out:
            scores = detection[5:]
            classId = np.argmax(scores)
            confidence = scores[classId]
            if confidence > confThreshold:
                center_x = int(detection[0] * frameWidth)
                center_y = int(detection[1] * frameHeight)
                width = int(detection[2] * frameWidth)
                height = int(detection[3] * frameHeight)
                left = int(center_x - width / 2)
                top = int(center_y - height / 2)
                classIds.append(classId)
                confidences.append(float(confidence))
                boxes.append([left, top, width, height])

    # Perform non maximum suppression to eliminate redundant overlapping boxes with
    # lower confidences.
    indices = cv.dnn.NMSBoxes(boxes, confidences, confThreshold, nmsThreshold)
    # flatten() handles both the Nx1 result of OpenCV 3.4 and the flat result of newer releases.
    for i in np.array(indices).flatten():
        box = boxes[i]
        left = box[0]
        top = box[1]
        width = box[2]
        height = box[3]
        drawPred(classIds[i], confidences[i], left, top, left + width, top + height)

# Process inputs
winName = 'Deep learning object detection in OpenCV'
cv.namedWindow(winName, cv.WINDOW_NORMAL)

outputFile = "yolo_out_py.avi"
if (args.image):
    # Open the image file
    if not os.path.isfile(args.image):
        print("Input image file ", args.image, " doesn't exist")
        sys.exit(1)
    cap = cv.VideoCapture(args.image)
    outputFile = args.image[:-4]+'_yolo_out_py.jpg'
elif (args.video):
    # Open the video file
    if not os.path.isfile(args.video):
        print("Input video file ", args.video, " doesn't exist")
        sys.exit(1)
    cap = cv.VideoCapture(args.video)
    outputFile = args.video[:-4]+'_yolo_out_py.avi'
else:
    # Webcam input (camera index 1 here; the default camera is usually index 0)
    cap = cv.VideoCapture(1)

# Get the video writer initialized to save the output video
if (not args.image):
    vid_writer = cv.VideoWriter(outputFile, cv.VideoWriter_fourcc('M','J','P','G'), 30, (round(cap.get(cv.CAP_PROP_FRAME_WIDTH)),round(cap.get(cv.CAP_PROP_FRAME_HEIGHT))))

while cv.waitKey(1) < 0:
    # Get frame from the video
    hasFrame, frame = cap.read()

    # Stop the program if reached end of video
    if not hasFrame:
        print("Done processing !!!")
        print("Output file is stored as ", outputFile)
        cv.waitKey(3000)
        break

    # Create a 4D blob from a frame.
    blob = cv.dnn.blobFromImage(frame, 1/255, (inpWidth, inpHeight), [0,0,0], 1, crop=False)

    # Sets the input to the network
    net.setInput(blob)

    # Runs the forward pass to get output of the output layers
    outs = net.forward(getOutputsNames(net))

    # Remove the bounding boxes with low confidence
    postprocess(frame, outs)

    # Put efficiency information. The function getPerfProfile returns the overall time for inference(t) and the timings for each of the layers(in layersTimes)
    t, _ = net.getPerfProfile()
    label = 'Inference time: %.2f ms' % (t * 1000.0 / cv.getTickFrequency())
    cv.putText(frame, label, (0, 15), cv.FONT_HERSHEY_SIMPLEX, 0.5, (0, 0, 255))

    # Write the frame with the detection boxes
    if (args.image):
        cv.imwrite(outputFile, frame.astype(np.uint8))
    else:
        vid_writer.write(frame.astype(np.uint8))

    cv.imshow(winName, frame)
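To make the post-processing step in the listing easier to follow, here is a small pure-Python sketch of its two ideas: converting a normalized (center x, center y, width, height) detection into a pixel box, and greedily suppressing overlapping boxes. The function names `decode_box`, `iou` and `greedy_nms` are made up for this illustration. The suppression loop is a simplified stand-in for `cv.dnn.NMSBoxes`, not a copy of OpenCV's implementation, so results may differ in edge cases.

```python
import numpy as np


def decode_box(detection, frame_width, frame_height):
    """Convert a normalized (cx, cy, w, h) detection to pixel [left, top, width, height]."""
    center_x = int(detection[0] * frame_width)
    center_y = int(detection[1] * frame_height)
    width = int(detection[2] * frame_width)
    height = int(detection[3] * frame_height)
    left = int(center_x - width / 2)
    top = int(center_y - height / 2)
    return [left, top, width, height]


def iou(a, b):
    """Intersection over union of two [left, top, width, height] boxes."""
    x1 = max(a[0], b[0])
    y1 = max(a[1], b[1])
    x2 = min(a[0] + a[2], b[0] + b[2])
    y2 = min(a[1] + a[3], b[1] + b[3])
    inter = max(0, x2 - x1) * max(0, y2 - y1)
    union = a[2] * a[3] + b[2] * b[3] - inter
    return inter / union if union > 0 else 0.0


def greedy_nms(boxes, scores, score_threshold, iou_threshold):
    """Visit boxes from highest to lowest score; keep a box only if it does not
    overlap an already kept box by more than iou_threshold."""
    order = [i for i in np.argsort(scores)[::-1] if scores[i] > score_threshold]
    keep = []
    for i in order:
        if all(iou(boxes[i], boxes[k]) <= iou_threshold for k in keep):
            keep.append(int(i))
    return keep


# Two heavily overlapping detections and one separate detection on a 640x480 frame.
detections = ([0.5, 0.5, 0.25, 0.5], [0.52, 0.5, 0.25, 0.5], [0.2, 0.2, 0.1, 0.1])
boxes = [decode_box(d, 640, 480) for d in detections]
print(boxes[0])                                       # [240, 120, 160, 240]
print(greedy_nms(boxes, [0.9, 0.8, 0.7], 0.5, 0.4))   # [0, 2]
```

The second box overlaps the first with an IoU of about 0.86, above the 0.4 threshold used in the script, so only the higher-scoring of the two survives.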

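If any of the imported packages are missing, just download and install them. The yolov3.weights weight file, yolov3.cfg network configuration file, and coco.names class-name list downloaded earlier must be placed in the same folder as yolo.py.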
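On the inpWidth / inpHeight setting in the script: a rough way to see why 320x320 is faster than 608x608 is to count how many candidate boxes the network emits. The sketch below assumes the standard yolov3.cfg layout (three detection scales with strides 32, 16 and 8, and three anchor boxes per grid cell); the function name `yolov3_candidate_count` is made up for this illustration.

```python
def yolov3_candidate_count(inp_width, inp_height, strides=(32, 16, 8), anchors_per_cell=3):
    """Count the candidate boxes produced for a given input size.

    Assumes the standard YOLOv3 layout: one grid per stride, with a fixed
    number of anchor boxes predicted in every grid cell.
    """
    if inp_width % 32 or inp_height % 32:
        raise ValueError("YOLOv3 input sizes should be multiples of 32")
    return sum((inp_width // s) * (inp_height // s) * anchors_per_cell for s in strides)


for size in (320, 416, 608):
    print(size, yolov3_candidate_count(size, size))
# 320 6300
# 416 10647
# 608 22743
```

A larger input means more grid cells, so the network does more work and the post-processing loop scans more rows, in exchange for finer localization of small objects.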

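Note that if neither a local image nor a local video is passed on the command line, the code opens the webcam directly. If a path is passed but the file does not exist, the script prints an error and exits.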
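The input selection described above can be summarized in a small stand-alone function. This is only a sketch of the branching logic: the name `choose_source` is hypothetical, and it raises an exception where the script prints a message and calls `sys.exit(1)`.

```python
import os.path


def choose_source(image=None, video=None, camera_index=1):
    """Mirror the script's branching: image first, then video, else the webcam."""
    for kind, path in (("image", image), ("video", video)):
        if path:
            if not os.path.isfile(path):
                # The script prints a message and exits here instead of raising.
                raise FileNotFoundError(f"Input {kind} file {path} doesn't exist")
            return kind, path
    # Neither argument was given: fall back to the camera, as the script does.
    return "webcam", camera_index


print(choose_source())  # ('webcam', 1)
```

The camera index defaults to 1 to match the listing; many machines expose their built-in camera as index 0.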

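Pay attention to this part of the code:

parser = argparse.ArgumentParser(description='Object Detection using YOLO in OPENCV')
parser.add_argument('--image', help='Path to image file.')
parser.add_argument('--video', help='Path to video file.')
args = parser.parse_args()

These arguments point to the local image or video. You can replace them with the absolute path of the target file, or open a command line, change into the folder containing yolo.py, and enter:

python yolo.py --image F:\jay.jpg

Adjust the parts that differ on your machine: yolo.py is the name of your .py file, and F:\jay.jpg is the absolute path of the local image to detect. Videos work the same way, but the video needs to be in .avi format. Here is my detection result (it even recognized the teddy bear!):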
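To see what the parser produces for the command above without running the detector, the arguments can be passed to `parse_args` as a list. The sketch below also derives the output file name. Note one deliberate substitution: the script slices off the last four characters (`args.image[:-4]`), which assumes a three-letter extension, whereas this sketch uses `os.path.splitext`. The helper names `build_parser` and `output_name` are made up for this illustration.

```python
import argparse
import os.path


def build_parser():
    """Recreate the two command-line options used by the script."""
    parser = argparse.ArgumentParser(description='Object Detection using YOLO in OPENCV')
    parser.add_argument('--image', help='Path to image file.')
    parser.add_argument('--video', help='Path to video file.')
    return parser


def output_name(path, suffix):
    """Derive the output file name; splitext also copes with extensions such as .jpeg."""
    root, _ext = os.path.splitext(path)
    return root + suffix


# Equivalent to: python yolo.py --image F:\jay.jpg
args = build_parser().parse_args(['--image', r'F:\jay.jpg'])
print(args.image, args.video)                       # F:\jay.jpg None
print(output_name(args.image, '_yolo_out_py.jpg'))  # F:\jay_yolo_out_py.jpg
```

Because `--video` was not given, `args.video` is `None`, which is why the script takes the image branch.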

II. VS2015 + OpenCV 3.2.0

Main reference blog: https://www.cnblogs.com/skymiao/p/10825286.html

I used VS2015; the procedure is the same.

Download darknet_master from the same address as above.

Open darknet-master\build\darknet\darknet_no_gpu.sln with VS2015.

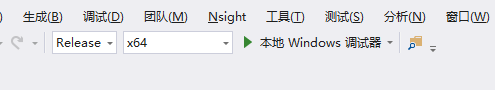

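Switch the project configuration to Release x64 (mind the difference between Release and Debug when configuring OpenCV).

Configure the OpenCV 3.2 environment in VS2015 (don't worry, it does not conflict with the OpenCV 3.4 used above).

Note: be sure to locate the following three files in the downloaded OpenCV (opencv_ffmpeg320_64.dll, opencv_world320.dll, opencv_world320d.dll):

Copy them into the C:\Windows\System32 directory.

Likewise, copy opencv_ffmpeg320_64.dll, opencv_world320.dll and yolov3.weights into the …\darknet-master\build\darknet\x64 directory.

When that is done, right-click the darknet_no_gpu project in VS2015 and choose "Build", then click "Local Windows Debugger" to compile. This generates darknet_no_gpu.exe in the \darknet_master\darknet-master\build\darknet\x64 directory; this file cannot be run directly.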

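Instead, open a command line, change into that directory, and enter:

darknet_no_gpu.exe detector test data/coco.data yolov3.cfg yolov3.weights -i 0 -thresh 0.25 dog.jpg -ext_output

If detection runs, the configuration is complete. My result is shown below: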
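If you prefer to launch the detector from Python rather than typing the command each time, the same argument list can be handed to `subprocess`. This is a minimal sketch: the function names `darknet_test_command` and `run_darknet` are hypothetical, the arguments are copied from the command above, and `workdir` is assumed to be the x64 build folder that contains darknet_no_gpu.exe.

```python
import subprocess
from pathlib import Path


def darknet_test_command(image, exe="darknet_no_gpu.exe", thresh=0.25):
    """Build the argument list for the detector test command shown above."""
    return [exe, "detector", "test", "data/coco.data", "yolov3.cfg", "yolov3.weights",
            "-i", "0", "-thresh", str(thresh), image, "-ext_output"]


def run_darknet(image, workdir):
    """Run the detector from `workdir` and return whatever it prints to stdout."""
    exe = str(Path(workdir) / "darknet_no_gpu.exe")
    result = subprocess.run(darknet_test_command(image, exe=exe), cwd=workdir,
                            capture_output=True, text=True, check=True)
    return result.stdout


print(" ".join(darknet_test_command("dog.jpg")))
```

Passing the arguments as a list avoids quoting problems when the image path contains spaces.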

That completes both methods of testing YOLOv3 deep-learning object detection.