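I have recently been working through the code in 《一起玩转tensorlayer》. When visualizing the reconstructed images of the denoising autoencoder, I kept getting an error saying that a placeholder had not been fed a value. I checked my code and found nothing wrong with feed_dict; in the end, the TensorLayer documentation showed that the problem came from the tl.layers.DropoutLayer layer.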

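If a network uses tl.layers.DropoutLayer, the feed_dict has to be adjusted before every sess.run() call, in both training and testing. With the default is_fix=False, the layer adds a placeholder for its keep probability to the graph, and that placeholder must be fed like any other. The relevant documentation reads: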

Dropout layer

class tensorlayer.layers.DropoutLayer(prev_layer, keep=0.5, is_fix=False, is_train=True, seed=None, name='dropout_layer')

The DropoutLayer class is a noise layer which randomly sets some activations to zero according to a keeping probability.
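To make the role of the keeping probability concrete, here is a minimal pure-Python sketch of dropout. It is not TensorLayer's implementation: the function name `dropout` and the `rng` argument are hypothetical, and it assumes the inverted-dropout convention of `tf.nn.dropout`, where surviving activations are scaled by 1 / keep.

```python
import random

def dropout(activations, keep, rng):
    """Zero each activation with probability 1 - keep; scale survivors by 1 / keep.

    Hypothetical stand-in for illustration only (assumes inverted-dropout scaling).
    """
    if not 0.0 < keep <= 1.0:
        raise ValueError("keep must be in (0, 1]")
    return [a / keep if rng.random() < keep else 0.0 for a in activations]

rng = random.Random(0)
acts = [1.0, 2.0, 3.0, 4.0]

# With keep=0.5, each entry is either zeroed or doubled.
noisy = dropout(acts, keep=0.5, rng=rng)

# With keep=1.0, nothing is dropped and nothing is rescaled.
clean = dropout(acts, keep=1.0, rng=rng)
```

Under this convention, a keeping probability of 1 turns the layer into an identity, which is why feeding 1 at test time is enough to disable it.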

Examples

Method 1: Using all_drop (see tutorial_mlp_dropout1.py)

import tensorflow as tf

import tensorlayer as tl

net = tl.layers.InputLayer(x, name='input_layer')

net = tl.layers.DropoutLayer(net, keep=0.8, name='drop1')

net = tl.layers.DenseLayer(net, n_units=800, act=tf.nn.relu, name='relu1')

...

# For training, enable dropout as follows.

feed_dict = {x: X_train_a, y_: y_train_a}

feed_dict.update( net.all_drop ) # enable noise layers

sess.run(train_op, feed_dict=feed_dict)

...

# For testing, disable dropout as follows.

dp_dict = tl.utils.dict_to_one( net.all_drop ) # disable noise layers

feed_dict = {x: X_val_a, y_: y_val_a}

feed_dict.update(dp_dict)

err, ac = sess.run([cost, acc], feed_dict=feed_dict)

...

Method 2: Without using all_drop (see tutorial_mlp_dropout2.py)

def mlp(x, is_train=True, reuse=False):

    with tf.variable_scope("MLP", reuse=reuse):

        tl.layers.set_name_reuse(reuse)

        net = tl.layers.InputLayer(x, name='input')

        net = tl.layers.DropoutLayer(net, keep=0.8, is_fix=True,

                                     is_train=is_train, name='drop1')

        ...

    return net

net_train = mlp(x, is_train=True, reuse=False)

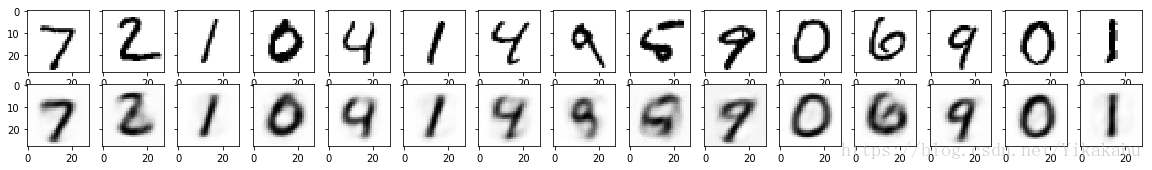

net_test = mlp(x, is_train=False, reuse=True)用了第一种方法在训练和测试中都加上feed_dict的修改,调试通过。得到重构图像的可视化如图:
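The feed_dict handling from Method 1 can be wrapped in a small helper so that no sess.run() call forgets the keep-probability entries. The sketch below is an illustration rather than TensorLayer code: `make_feed_dict` is a hypothetical helper, `dict_to_one` is re-implemented locally to mimic what `tl.utils.dict_to_one` is used for in the quoted example (setting every value to 1), and plain strings stand in for the placeholders so that it runs without TensorFlow.

```python
def dict_to_one(dp_dict):
    """Return a copy of dp_dict with every value set to 1 (dropout disabled).

    Local re-implementation for illustration; assumed to match the intent of
    tl.utils.dict_to_one in the documentation example.
    """
    return {key: 1 for key in dp_dict}

def make_feed_dict(inputs, all_drop, is_train):
    """Merge the data feeds with the keep-probability feeds for one sess.run() call.

    Hypothetical helper, not part of TensorLayer.
    """
    feed_dict = dict(inputs)  # copy so the caller's dict is not modified
    feed_dict.update(all_drop if is_train else dict_to_one(all_drop))
    return feed_dict

# Stand-ins: in a real graph the keys would be placeholder objects and
# all_drop would come from net.all_drop.
all_drop = {"drop1_keep": 0.8, "drop2_keep": 0.5}
inputs = {"x": [[0.1, 0.2]]}

train_feed = make_feed_dict(inputs, all_drop, is_train=True)   # dropout enabled
test_feed = make_feed_dict(inputs, all_drop, is_train=False)   # dropout disabled
```

In a real script, the returned dictionary would be passed as feed_dict to sess.run() for training, evaluation, and the reconstruction visualization alike.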