Paper:Transformer模型起源—2017年的Google机器翻译团队—《Transformer:Attention Is All You Need》翻译并解读-20230802版

目录

Paper:Transformer模型起源—2017年的Google机器翻译团队—《Transformer:Attention Is All You Need》翻译并解读-20171206版

Paper:Transformer模型起源—2017年的Google机器翻译团队—《Transformer:Attention Is All You Need》翻译并解读-20230802版

基于RNN/CNN的ED架构→带Attention的ED架构→Transformer架构

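Proposing the Transformer: no recurrence, full reliance on attention; state-of-the-art translation quality after as little as 12 hours of training on 8 P100 GPUs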

优化模型—基于CNN构建块+以减少顺序计算为目标均存在捕获长依赖困难的窘境(如扩展神经GPU、ByteNet、ConvS2S)、新的Transformer更优秀

Self-attention、端到端记忆网络(基于训练注意力机制)、Transformer完全依赖Self-attention

主流的竞争性神经序列转换模型都采用ED架构:编码器(映射为连续表示序列)、解码器(逐个元素生成+自回归)

3.1 Encoder and Decoder Stacks编码器和解码器堆栈

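Encoder (N = 6 identical layers, 2 sub-layers each; encodes the input sequence) = multi-head self-attention sub-layer (captures context) + position-wise feed-forward sub-layer, each wrapped in a residual connection followed by layer normalization (easier to train and optimize)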

3.2 Attention注意力机制:计算查询和键相关性得分【兼容性函数,如点积/加性/双线性】→得分归一化【softmax】→对值加权求和

3.2.1 SDotPA/Scaled Dot-Product Attention缩放的点积注意力(计算查询和键之间的关系)

3.2.2 Multi-Head Attention多头注意力

3.2.3 Applications of Attention in our Model注意力在我们模型中的应用

3.4 Embeddings 和 Softmax:将符号化数据转换为连续向量表示并进行分类概率计算

3.5 Positional Encoding位置编码—引入词序信息

位置编码添加到输入嵌入中(直接求和),两种方式(学习获得、固定表示【本文选择了使用不同频率的正弦和余弦函数来构造位置编码→更容易学习到相对位置的关系+能够推广到比训练中遇到的更长的序列长度】)

Self-Attention的三个必要条件—神经网络中的三个因素:每层的总计算复杂度、可并行计算量、长依赖关系的路径长度

5.1 Training Data and Batching训练数据和批处理

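WMT 2014 English-German (about 4.5M sentence pairs, byte-pair encoding, shared vocabulary of about 37K tokens); WMT 2014 English-French (36M sentences, 32K word-piece vocabulary)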

5.2 Hardware and Schedule硬件和计划:8*P100~3.5天

5.3 Optimizer优化器:Adam优化器+warmup技术调整学习率

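5.4 Regularization: Residual Dropout (applied to each sub-layer output before the residual add and layer normalization) and Label Smoothing (hurts perplexity, i.e. raises it, but improves accuracy and BLEU)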

WMT 2014 英德翻译任务(达到SOTA,8*P100~3.5天+训练成本极低)、WMT 2014英法翻译任务(达到SOTA,1/4训练成本)

优化技巧:多头比单头好(过多也会损害模型)、比点积更复杂的兼容性函数、更大的模型、采用dropout、也可采用基于学习的位置嵌入

6.3 English Constituency Parsing英语句法分析

评估Transformer泛化性:基于WSJ的40K训练句子(训练4层Transformer)【16K的token词汇表】+半监督的17M训练句子【32K的token词汇表】

性能最优:少量实验+dropout技术+推理阶段最大输出长度增加+300

提出Transformer:一种完全基于注意力的序列转换模型+ED架构中采用多头自注意力替代循环层

Transformer速度更快+性能最优:它的训练速度明显快于基于循环层或卷积层的体系结构

相关文章

Paper:Transformer模型起源—2017年的Google机器翻译团队—《Transformer:Attention Is All You Need》翻译并解读-20171206版

https://yunyaniu.blog.csdn.net/article/details/100112428

Paper:Transformer模型起源—2017年的Google机器翻译团队—《Transformer:Attention Is All You Need》翻译并解读-20230802版

https://yunyaniu.blog.csdn.net/article/details/132187277

Paper:Transformer模型起源—2017年的Google机器翻译团队—《Transformer:Attention Is All You Need》翻译并解读-20230802版

| Field | Value |
| --- | --- |
| Link | |
| Date | June 12, 2017; latest version: August 2, 2023 |
| Authors | Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N. Gomez, Lukasz Kaiser, Illia Polosukhin |

Abstract

基于RNN/CNN的ED架构→带Attention的ED架构→Transformer架构

| The dominant sequence transduction models are based on complex recurrent or convolutional neural networks that include an encoder and a decoder. The best performing models also connect the encoder and decoder through an attention mechanism. We propose a new simple network architecture, the Transformer, based solely on attention mechanisms, dispensing with recurrence and convolutions entirely. Experiments on two machine translation tasks show these models to be superior in quality while being more parallelizable and requiring significantly less time to train. Our model achieves 28.4 BLEU on the WMT 2014 English-to-German translation task, improving over the existing best results, including ensembles, by over 2 BLEU. On the WMT 2014 English-to-French translation task, our model establishes a new single-model state-of-the-art BLEU score of 41.8 after training for 3.5 days on eight GPUs, a small fraction of the training costs of the best models from the literature. We show that the Transformer generalizes well to other tasks by applying it successfully to English constituency parsing both with large and limited training data. |

主流的序列转换模型基于复杂的循环或卷积神经网络(基础构成),(整体架构)包括一个编码器和一个解码器。表现最好的模型还通过一个注意力机制将编码器和解码器连接起来。我们提出了一种新的简单网络架构,称为Transformer,完全基于注意力机制,完全摒弃了循环和卷积。在两个机器翻译任务的实验结果表明,这些模型在质量上表现出色,而且更易于并行化,训练所需时间显著缩短。 我们的模型在WMT 2014年英德翻译任务上取得了28.4的BLEU分数,相比现有最佳结果(包括集成模型),提高了2个BLEU。 在WMT 2014年英法翻译任务中,我们的模型经过8个GPU训练3.5天后,获得了新的单一模型的最先进BLEU分数,为41.8,其训练成本仅是文献中最佳模型的一小部分。 我们展示了Transformer在其他任务中具有很好的泛化能力,通过成功地将其应用于英语成分句法分析,无论是使用大规模还是有限的训练数据。 |

| ∗Equal contribution. Listing order is random. Jakob proposed replacing RNNs with self-attention and started the effort to evaluate this idea. Ashish, with Illia, designed and implemented the first Transformer models and has been crucially involved in every aspect of this work. Noam proposed scaled dot-product attention, multi-head attention and the parameter-free position representation and became the other person involved in nearly every detail. Niki designed, implemented, tuned and evaluated countless model variants in our original codebase and tensor2tensor. Llion also experimented with novel model variants, was responsible for our initial codebase, and efficient inference and visualizations. Lukasz and Aidan spent countless long days designing various parts of and implementing tensor2tensor, replacing our earlier codebase, greatly improving results and massively accelerating our research. |

∗平等贡献。名单排列顺序是随机的。Jakob提议用自注意力机制取代RNN,并开始评估这一想法。Ashish与Illia一起设计和实现了第一个Transformer模型,并在工作的各个方面起着至关重要的作用。Noam提出了缩放的点积注意力机制,多头注意力和无参数位置表示,并成为几乎涉及每一个细节的其他主要参与者。Niki在我们的原始代码库和tensor2tensor中设计、实现、调优和评估了无数模型变体。Llion也尝试了新颖的模型变体,负责我们最初的代码库,以及高效的推理和可视化。Lukasz和Aidan花费了无数个漫长的日子设计和实现tensor2tensor的各个部分,取代了我们以前的代码库,极大地改进了结果,并大幅加速了我们的研究。 |

| 31st Conference on Neural Information Processing Systems (NIPS 2017), Long Beach, CA, USA. |

本文发表于2017年第31届神经信息处理系统大会(NIPS 2017),位于美国加利福尼亚州长滩。 |

1 Introduction引言

回顾序列建模任务(如语言建模/机器翻译)的代表性算法:RNN(LSTM/GRU,存在词序计算的约束,其天然的顺序性阻碍了并行化)→Encoder-Decoder→Attention机制(早期常结合RNN使用)

| Recurrent neural networks, long short-term memory [13] and gated recurrent [7] neural networks in particular, have been firmly established as state of the art approaches in sequence modeling and transduction problems such as language modeling and machine translation [35, 2, 5]. Numerous efforts have since continued to push the boundaries of recurrent language models and encoder-decoder architectures [38, 24, 15]. Recurrent models typically factor computation along the symbol positions of the input and output sequences. Aligning the positions to steps in computation time, they generate a sequence of hidden states ht, as a function of the previous hidden state ht−1 and the input for position t. This inherently sequential nature precludes parallelization within training examples, which becomes critical at longer sequence lengths, as memory constraints limit batching across examples. Recent work has achieved significant improvements in computational efficiency through factorization tricks [21] and conditional computation [32], while also improving model performance in case of the latter. The fundamental constraint of sequential computation, however, remains. |

在序列建模和转换问题中,尤其是在语言建模和机器翻译方面,循环神经网络、LSTM[13]和GRU[7]已经被牢固地确立为最先进的方法。此后,许多工作不断推动RNLM和编码器-解码器架构的发展[38, 24, 15]。 循环模型通常沿着输入和输出序列的符号位置进行计算。将位置对齐到计算时间的步骤,它们生成一系列隐藏状态ht,作为前一个隐藏状态ht−1和位置t的输入的函数。这种固有的顺序性质阻碍了训练示例内的并行化,在更长的序列长度下变得关键,因为内存约束限制了跨示例的批处理。最近的工作通过因式分解技巧[21]和条件计算[32]在计算效率上取得了显著的改进,同时也提高了模型在后者情况下的性能。然而,顺序计算的基本约束仍然存在。 |

| Attention mechanisms have become an integral part of compelling sequence modeling and transduction models in various tasks, allowing modeling of dependencies without regard to their distance in the input or output sequences [2, 19]. In all but a few cases [27], however, such attention mechanisms are used in conjunction with a recurrent network. |

Attention 注意力机制已经成为各种任务中引人注目的序列建模和转换模型的一个重要组成部分,允许对依赖关系进行建模,而不考虑它们在输入或输出序列中的距离[2, 19]。然而,除了少数几种情况[27]外,这些注意力机制通常与循环网络结合使用。 |

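Proposing the Transformer: no recurrence, full reliance on attention; state-of-the-art translation quality after as little as 12 hours of training on 8 P100 GPUs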

| In this work we propose the Transformer, a model architecture eschewing recurrence and instead relying entirely on an attention mechanism to draw global dependencies between input and output. The Transformer allows for significantly more parallelization and can reach a new state of the art in translation quality after being trained for as little as twelve hours on eight P100 GPUs. |

在这项工作中,我们提出了Transformer,这是一种模型架构,摒弃了循环,完全依赖于注意力机制来在输入和输出之间建立全局依赖关系。Transformer可以显著提高并行化能力,并且在仅在八个P100 GPU上训练12小时后,可以达到新的翻译质量的最先进水平。 |

2 Background背景

优化模型—基于CNN构建块+以减少顺序计算为目标均存在捕获长依赖困难的窘境(如扩展神经GPU、ByteNet、ConvS2S)、新的Transformer更优秀

| The goal of reducing sequential computation also forms the foundation of the Extended Neural GPU [16], ByteNet [18] and ConvS2S [9], all of which use convolutional neural networks as basic building block, computing hidden representations in parallel for all input and output positions. In these models, the number of operations required to relate signals from two arbitrary input or output positions grows in the distance between positions, linearly for ConvS2S and logarithmically for ByteNet. This makes it more difficult to learn dependencies between distant positions [12]. In the Transformer this is reduced to a constant number of operations, albeit at the cost of reduced effective resolution due to averaging attention-weighted positions, an effect we counteract with Multi-Head Attention as described in section 3.2. |

减少顺序计算的目标也构成了扩展神经GPU[16]、ByteNet[18]和ConvS2S[9]的基础,它们都使用卷积神经网络作为基本构建块,对所有输入和输出位置同时计算隐藏表示。 在这些模型中,将来自两个任意输入或输出位置的信号相关联所需的操作数量,随着位置之间的距离增加而增长,对于ConvS2S是线性增长,对于ByteNet是对数增长。这使得学习远距离位置之间的依赖关系变得更加困难[12]。 在Transformer中,这被减少为恒定数量的操作,尽管代价是由于平均注意加权位置而导致的有效分辨率降低,我们通过在第3.2节中描述的多头注意力机制来抵消这种效应。 |

Self-attention、端到端记忆网络(基于训练注意力机制)、Transformer完全依赖Self-attention

| Self-attention, sometimes called intra-attention is an attention mechanism relating different positions of a single sequence in order to compute a representation of the sequence. Self-attention has been used successfully in a variety of tasks including reading comprehension, abstractive summarization, textual entailment and learning task-independent sentence representations [4, 27, 28, 22]. End-to-end memory networks are based on a recurrent attention mechanism instead of sequence-aligned recurrence and have been shown to perform well on simple-language question answering and language modeling tasks [34]. To the best of our knowledge, however, the Transformer is the first transduction model relying entirely on self-attention to compute representations of its input and output without using sequence-aligned RNNs or convolution. In the following sections, we will describe the Transformer, motivate self-attention and discuss its advantages over models such as [17, 18] and [9]. |

Self-attention自注意力,有时称为内注意力,是一种将单个序列的不同位置联系起来以计算该序列的表示的注意机制。自注意力已经在各种任务中取得了成功,包括阅读理解、抽象摘要、文本蕴含和学习任务无关的句子表示[4, 27, 28, 22]。 端到端记忆网络是基于循环注意力机制而不是序列对齐循环的,并在简单语言问题回答和语言建模任务上表现良好[34]。 然而,据我们所知,Transformer是第一个完全依赖于自注意力来计算其输入和输出表示的传输模型,而不使用序列对齐的RNN或CNN。在接下来的几节中,我们将描述Transformer,解释自注意力的动机,并讨论其相对于模型如[17, 18]和[9]的优势。 |

3 Model Architecture模型架构

主流的竞争性神经序列转换模型都采用ED架构:编码器(映射为连续表示序列)、解码器(逐个元素生成+自回归)

| Most competitive neural sequence transduction models have an encoder-decoder structure [5, 2, 35]. Here, the encoder maps an input sequence of symbol representations (x1, ..., xn) to a sequence of continuous representations z = (z1, ..., zn). Given z, the decoder then generates an output sequence (y1, ..., ym) of symbols one element at a time. At each step the model is auto-regressive [10], consuming the previously generated symbols as additional input when generating the next. |

大多数竞争性的神经序列转换模型都采用编码器-解码器结构[5, 2, 35]。在这里,编码器将输入符号表示序列(x1,...,xn)映射为连续表示序列z = (z1,...,zn)。给定z,解码器逐个元素地生成一个输出序列(y1,...,ym)。在每一步中,模型都是自回归的[10],在生成下一个元素时,将之前生成的符号作为附加输入进行消耗。 |

| The Transformer follows this overall architecture using stacked self-attention and point-wise, fully connected layers for both the encoder and decoder, shown in the left and right halves of Figure 1, respectively. |

Transformer遵循这种整体架构,使用堆叠的自注意力和点对点的全连接层来构建编码器和解码器,分别在图1的左半部分和右半部分中展示。 |

3.1 Encoder and Decoder Stacks编码器和解码器堆栈

Transformer模型中的编码器和解码器都由多个相同的层堆叠而成。这种Encoder-Decoder结构允许Transformer捕获上下文信息和生成自回归序列,结合多头注意力和前馈网络,使模型表现出色。

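Encoder (N = 6 identical layers, 2 sub-layers each; encodes the input sequence) = multi-head self-attention sub-layer (captures context) + position-wise feed-forward sub-layer, each wrapped in a residual connection followed by layer normalization (easier to train and optimize)

Decoder (N = 6 identical layers, 3 sub-layers each; generates the output auto-regressively) = masked multi-head self-attention (the mask keeps decoding auto-regressive) + multi-head attention over the encoder output (attends to the relevant input while generating) + position-wise feed-forward sub-layer, each wrapped in a residual connection followed by layer normalization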

这些设计和修改共同构成了Transformer模型的基本结构,使其成为了一个在自然语言处理等序列转换任务中非常成功的模型。

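| Encoder | Decoder |
| --- | --- |
| N = 6 identical layers | N = 6 identical layers |
| Two sub-layers per layer: (1) multi-head self-attention, (2) position-wise feed-forward network | Three sub-layers per layer: (1) masked multi-head self-attention, (2) multi-head attention over the encoder output, (3) position-wise feed-forward network |
| Residual connection around each sub-layer, followed by layer normalization: LayerNorm(x + Sublayer(x)) | Residual connection around each sub-layer, followed by layer normalization |
| All sub-layers and the embedding layers output dimension dmodel = 512 | The self-attention sub-layer is masked so that a position cannot attend to later positions; together with the output embeddings being offset by one position, the prediction for position i depends only on the known outputs before i |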

| Encoder: The encoder is composed of a stack of N = 6 identical layers. Each layer has two sub-layers. The first is a multi-head self-attention mechanism, and the second is a simple, position-wise fully connected feed-forward network. We employ a residual connection [11] around each of the two sub-layers, followed by layer normalization [1]. That is, the output of each sub-layer is LayerNorm(x + Sublayer(x)), where Sublayer(x) is the function implemented by the sub-layer itself. To facilitate these residual connections, all sub-layers in the model, as well as the embedding layers, produce outputs of dimension dmodel = 512. |

编码器:编码器由N = 6个相同的层组成。每一层有两个子层。 第一个子层是一个多头自注意力机制, 第二个子层是一个简单的位置全连接前馈网络。 我们在每个子层周围使用残差连接[11],然后是层归一化[1]。也就是说,每个子层的输出是 LayerNorm(x + Sublayer(x)), 其中Sublayer(x)是子层本身实现的函数。 为了方便这些残差连接,模型中的所有子层以及嵌入层都产生dmodel = 512的输出维度。 |

| Decoder: The decoder is also composed of a stack of N = 6 identical layers. In addition to the two sub-layers in each encoder layer, the decoder inserts a third sub-layer, which performs multi-head attention over the output of the encoder stack. Similar to the encoder, we employ residual connections around each of the sub-layers, followed by layer normalization. We also modify the self-attention sub-layer in the decoder stack to prevent positions from attending to subsequent positions. This masking, combined with fact that the output embeddings are offset by one position, ensures that the predictions for position i can depend only on the known outputs at positions less than i. |

解码器:解码器也由N = 6个相同的层组成。除了每个编码器层中的两个子层外,解码器还插入了第三个子层,对编码器堆栈的输出执行多头注意力。 与编码器类似,我们在每个子层周围使用残差连接,然后是层归一化。 我们还修改了解码器堆栈中的自注意力子层,以防止位置关注后续位置。这种掩蔽操作结合输出嵌入的一位偏移,确保位置i的预测只依赖于已知输出在位置i之前的位置。 |
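The wrapper LayerNorm(x + Sublayer(x)) described above can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the names `layer_norm` and `add_and_norm` are made up here, the learned gain and bias of layer normalization are omitted, and a sub-layer is taken to be any function from a sequence of vectors to a sequence of vectors of the same shape.

```python
import math

def layer_norm(x, eps=1e-6):
    """Normalize one position's vector to zero mean and (near) unit variance.
    The learned gain/bias of real layer normalization are left out here."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]

def add_and_norm(xs, sublayer):
    """Compute LayerNorm(x + Sublayer(x)) for every position in the sequence xs."""
    ys = sublayer(xs)
    return [layer_norm([a + b for a, b in zip(x, y)]) for x, y in zip(xs, ys)]

# Toy usage: an identity "sub-layer" stands in for attention or the feed-forward net.
xs = [[1.0, 2.0, 3.0, 4.0], [0.0, 0.0, 1.0, 1.0]]
out = add_and_norm(xs, lambda seq: seq)
```

Because the residual sum requires matching shapes, every sub-layer has to return vectors of the same width as its input, which is why the paper fixes all sub-layer outputs to dmodel.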

3.2 Attention注意力机制:计算查询和键相关性得分【兼容性函数,如点积/加性/双线性】→得分归一化【softmax】→对值加权求和

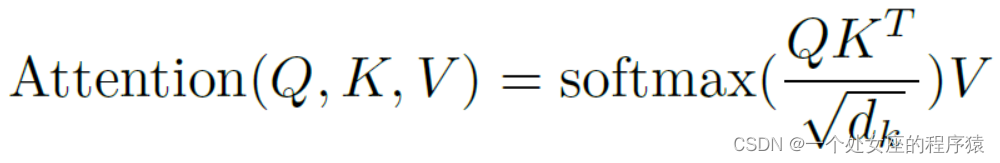

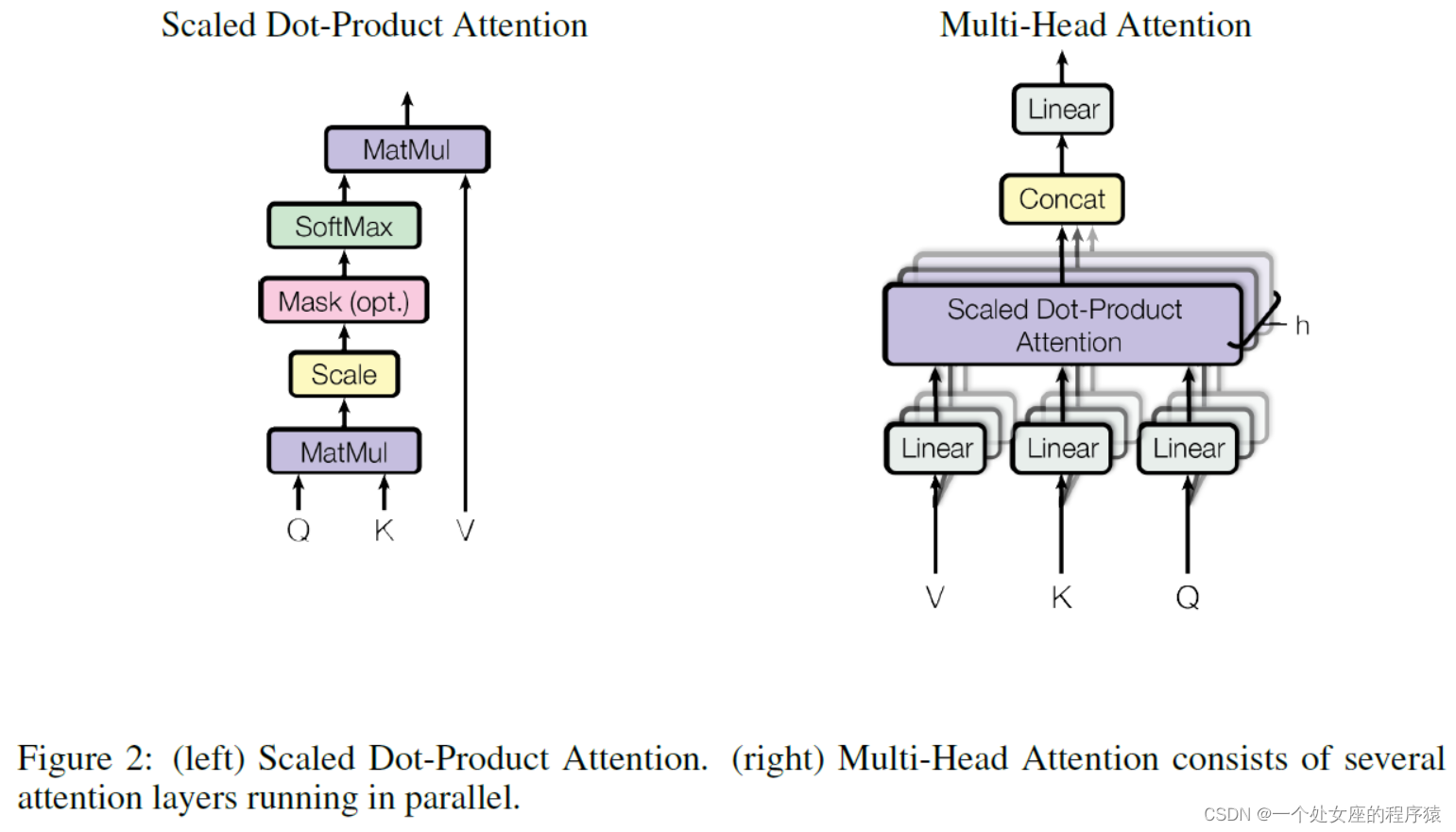

| An attention function can be described as mapping a query and a set of key-value pairs to an output, where the query, keys, values, and output are all vectors. The output is computed as a weighted sum of the values, where the weight assigned to each value is computed by a compatibility function of the query with the corresponding key. |

注意力机制可以被描述为将查询和一组键值对映射为输出,其中查询、键、值和输出都是向量。 输出是值的加权和,每个值的权重由查询与相应键的兼容性函数计算得到。 |

| Figure 2: (left) Scaled Dot-Product Attention. (right) Multi-Head Attention consists of several attention layers running in parallel. |

图2:(左)缩放的点积注意力。(右)多头注意力由平行运行的几个注意层组成。 |

3.2.1 SDotPA/Scaled Dot-Product Attention缩放的点积注意力(计算查询和键之间的关系)

| We call our particular attention "Scaled Dot-Product Attention" (Figure 2). The input consists of queries and keys of dimension dk, and values of dimension dv. We compute the dot products of the query with all keys, divide each by √dk, and apply a softmax function to obtain the weights on the values. |

我们将我们的注意力机制称为“缩放的点积注意力”(图2)。输入由维度为dk的查询和键以及维度为dv的值组成。我们计算查询与所有键的点积,将每个点积除以√dk,并应用softmax函数以获得值的权重。 |

| In practice, we compute the attention function on a set of queries simultaneously, packed together into a matrix Q. The keys and values are also packed together into matrices K and V. We compute the matrix of outputs as: $$\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\left(\frac{QK^{T}}{\sqrt{d_k}}\right)V$$ The two most commonly used attention functions are additive attention [2], and dot-product (multiplicative) attention. Dot-product attention is identical to our algorithm, except for the scaling factor of $1/\sqrt{d_k}$. Additive attention computes the compatibility function using a feed-forward network with a single hidden layer. While the two are similar in theoretical complexity, dot-product attention is much faster and more space-efficient in practice, since it can be implemented using highly optimized matrix multiplication code. |

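In practice the attention function is computed for a whole set of queries at once: queries, keys and values are packed into matrices Q, K and V, and the output matrix is $\mathrm{softmax}(QK^{T}/\sqrt{d_k})\,V$.

Of the two most common attention functions, additive attention [2] scores a query against a key with a single-hidden-layer feed-forward network, while dot-product (multiplicative) attention uses a plain dot product; the paper's variant is dot-product attention with the extra scaling factor $1/\sqrt{d_k}$. The two have similar theoretical complexity, but dot-product attention is much faster and more space-efficient in practice because it maps onto highly optimized matrix multiplication code. |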

| While for small values of dk the two mechanisms perform similarly, additive attention outperforms dot product attention without scaling for larger values of dk [3]. We suspect that for large values of dk, the dot products grow large in magnitude, pushing the softmax function into regions where it has extremely small gradients (footnote 4 in the paper). To counteract this effect, we scale the dot products by 1/√dk. |

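For small dk the two mechanisms perform similarly, but for larger dk additive attention outperforms dot-product attention that has no scaling [3]. The suspected cause is that a large dk makes the dot products large in magnitude, which pushes the softmax into regions with extremely small gradients; scaling the dot products by 1/√dk counteracts this. |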
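The computation just described (dot products of each query with all keys, division by √dk, softmax, weighted sum of the values) can be written out directly. The following is a minimal pure-Python sketch for small inputs; the function names are illustrative, matrices are plain lists of rows, and no masking or batching is included.

```python
import math

def softmax(row):
    """Numerically stable softmax over one list of scores."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def scaled_dot_product_attention(Q, K, V):
    """Return one output row per query: softmax(q . K^T / sqrt(d_k)) applied to V."""
    d_k = len(K[0])
    outputs = []
    for q in Q:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k) for k in K]
        weights = softmax(scores)
        outputs.append([sum(w * v[j] for w, v in zip(weights, V))
                        for j in range(len(V[0]))])
    return outputs

# A zero query scores every key equally, so the output is the mean of the values.
result = scaled_dot_product_attention(
    Q=[[0.0, 0.0]],
    K=[[1.0, 0.0], [0.0, 1.0]],
    V=[[1.0, 2.0], [3.0, 4.0]],
)
```

In this toy call both scores are 0, the weights are 0.5 each, and the single output row is the average of the two value rows.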

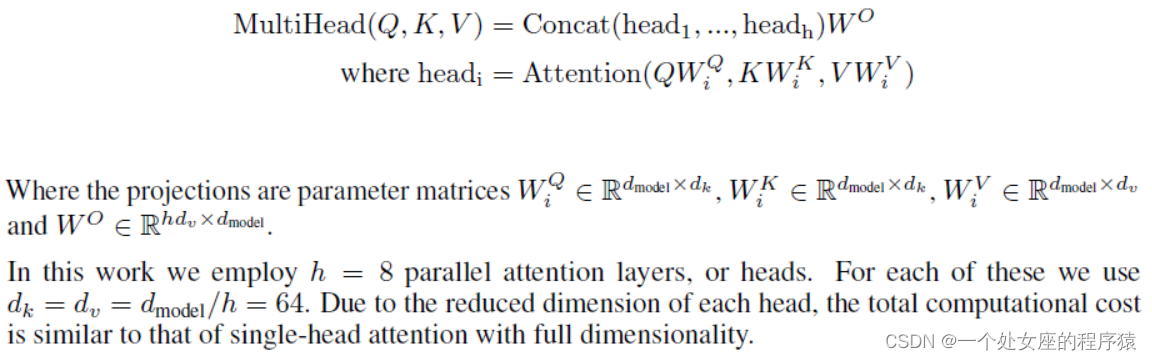

3.2.2 Multi-Head Attention多头注意力

| Instead of performing a single attention function with dmodel-dimensional keys, values and queries, we found it beneficial to linearly project the queries, keys and values h times with different, learned linear projections to dk, dk and dv dimensions, respectively. On each of these projected versions of queries, keys and values we then perform the attention function in parallel, yielding dv-dimensional output values. These are concatenated and once again projected, resulting in the final values, as depicted in Figure 2. |

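Rather than running one attention function over dmodel-dimensional queries, keys and values, the authors project the queries, keys and values h times with different learned linear projections, to dk, dk and dv dimensions respectively. Attention runs in parallel on each projected version and yields dv-dimensional outputs, which are concatenated and projected once more to give the final values (Figure 2). |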

| Multi-head attention allows the model to jointly attend to information from different representation subspaces at different positions. With a single attention head, averaging inhibits this. Where the projections are parameter matrices WQ…… |

多头注意力允许模型同时关注不同位置上不同表示子空间的信息。对于单个注意力头,平均操作会抑制这一点。 其中投影是参数矩阵WQ...。 |

| In this work we employ h = 8 parallel attention layers, or heads. For each of these we use dk = dv = dmodel/h = 64. Due to the reduced dimension of each head, the total computational cost is similar to that of single-head attention with full dimensionality. |

在这项工作中,我们采用了h = 8个并行注意力层或头。对于每个头,我们使用dk = dv = dmodel/h = 64。由于每个头部的维数降低,总计算成本与完全维度的单头注意力相似。 |
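The claim that h reduced-dimension heads cost about as much as one full-dimension head can be checked with simple bookkeeping. The sketch below is an illustration only: `multi_head_budget` is a made-up helper, bias terms are ignored, and the counts cover just the learned projections and the query-key dot products for a sequence of n positions.

```python
def multi_head_budget(d_model=512, h=8, n=10):
    """Per-head sizes and rough cost counts for h attention heads over n positions."""
    assert d_model % h == 0, "d_model must be divisible by the number of heads"
    d_k = d_v = d_model // h
    # Learned projections: h heads x (queries + keys + values), plus the output projection.
    projection_params = h * (2 * d_model * d_k + d_model * d_v) + (h * d_v) * d_model
    # Multiply-adds spent on the query-key dot products, summed over all heads.
    score_multiply_adds = h * n * n * d_k
    return {
        "d_k": d_k,
        "d_v": d_v,
        "projection_params": projection_params,
        "score_multiply_adds": score_multiply_adds,
    }

paper = multi_head_budget(d_model=512, h=8)   # the configuration quoted above
single = multi_head_budget(d_model=512, h=1)  # one head with full dimensionality
```

With h = 8 each head works in 64 dimensions, and both totals come out identical to the single-head case, which matches the paper's statement about similar computational cost.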

3.2.3 Applications of Attention in our Model注意力在我们模型中的应用

| The Transformer uses multi-head attention in three different ways: In "encoder-decoder attention" layers, the queries come from the previous decoder layer, and the memory keys and values come from the output of the encoder. This allows every position in the decoder to attend over all positions in the input sequence. This mimics the typical encoder-decoder attention mechanisms in sequence-to-sequence models such as [38, 2, 9]. The encoder contains self-attention layers. In a self-attention layer all of the keys, values and queries come from the same place, in this case, the output of the previous layer in the encoder. Each position in the encoder can attend to all positions in the previous layer of the encoder. Similarly, self-attention layers in the decoder allow each position in the decoder to attend to all positions in the decoder up to and including that position. We need to prevent leftward information flow in the decoder to preserve the auto-regressive property. We implement this inside of scaled dot-product attention by masking out (setting to −∞) all values in the input of the softmax which correspond to illegal connections. See Figure 2. |

Transformer以三种不同的方式使用多头注意力: 在“编码器-解码器注意力”层中,查询来自前一个解码器层,而记忆键和值来自编码器的输出。这使得解码器中的每个位置都可以关注输入序列中的所有位置。这模仿了序列到序列模型中典型的编码器-解码器注意力机制,例如[38,2,9]。 编码器包含自注意力层。在自注意力层中,所有的键、值和查询都来自同一个位置,即编码器中前一层的输出。编码器中的每个位置都可以关注编码器前一层中的所有位置。 类似地,解码器中的自注意力层允许解码器中的每个位置,去关注解码器中包括该位置在内的所有位置。我们需要阻止解码器中的左向信息流,以保持自回归属性。我们通过在缩放的点积注意力中屏蔽(设置为-∞)与非法连接对应的softmax输入中的所有值来实现这一点。参见图2。 |
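The decoder-side masking (setting illegal softmax inputs to −∞) can be sketched as follows. This is a minimal pure-Python illustration with made-up helper names; it relies on the fact that a causal mask always leaves at least the diagonal entry allowed, so a row is never fully masked.

```python
import math

NEG_INF = float("-inf")

def causal_mask(n):
    """mask[i][j] is True when position i may attend to position j (j <= i)."""
    return [[j <= i for j in range(n)] for i in range(n)]

def masked_softmax(scores, allowed):
    """Softmax over scores after replacing disallowed entries with -inf."""
    masked = [s if ok else NEG_INF for s, ok in zip(scores, allowed)]
    m = max(masked)                            # finite: the diagonal is always allowed
    exps = [math.exp(s - m) for s in masked]   # exp(-inf) evaluates to 0.0
    total = sum(exps)
    return [e / total for e in exps]

# Equal raw scores: each position spreads its weight over itself and earlier positions only.
weights = [masked_softmax([1.0, 1.0, 1.0], row) for row in causal_mask(3)]
```

Position 0 can only attend to itself, position 1 splits its weight between positions 0 and 1, and no weight ever lands on a later position.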

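3.3 Position-wise Feed-Forward Networks (PwFFN): two linear transformations with a ReLU in between (the source of non-linearity), equivalent to two convolutions with kernel size 1; the same parameters are applied at every position, but the parameters differ from layer to layer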

| In addition to attention sub-layers, each of the layers in our encoder and decoder contains a fully connected feed-forward network, which is applied to each position separately and identically. This consists of two linear transformations with a ReLU activation in between. FFN(x) = max(0, xW1 + b1)W2 + b2 While the linear transformations are the same across different positions, they use different parameters from layer to layer. Another way of describing this is as two convolutions with kernel size 1. The dimensionality of input and output is dmodel = 512, and the inner-layer has dimensionality dff = 2048. |

在编码器和解码器的每一层中,除了注意力子层外,都还包含一个全连接的前馈网络—位置逐元素前馈网络,该网络分别应用于每个位置,并且是相同的。它由2个线性变换和一个ReLU激活函数组成。 FFN(x) = max(0, xW1 + b1)W2 + b2 虽然线性变换在不同的位置上是相同的,但它们在不同的层中使用不同的参数。另一种解释(描述方法)是使用核大小为1的2个卷积。输入和输出的维度是dmodel = 512,内层的维度是dff = 2048。 |
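The formula FFN(x) = max(0, xW1 + b1)W2 + b2, applied separately and identically at each position, can be sketched like this. The tiny dimensions (2 in, 3 hidden, 2 out) and the weight values are arbitrary choices for illustration; the paper uses dmodel = 512 and dff = 2048.

```python
def ffn(x, W1, b1, W2, b2):
    """FFN(x) = max(0, x W1 + b1) W2 + b2 for a single position vector x."""
    hidden = [max(0.0, sum(xi * W1[i][j] for i, xi in enumerate(x)) + b1[j])
              for j in range(len(b1))]
    return [sum(hj * W2[j][k] for j, hj in enumerate(hidden)) + b2[k]
            for k in range(len(b2))]

def position_wise_ffn(xs, W1, b1, W2, b2):
    """Apply the same FFN, with the same parameters, to every position."""
    return [ffn(x, W1, b1, W2, b2) for x in xs]

# Illustrative weights: 2 -> 3 -> 2.
W1 = [[1.0, -1.0, 0.0],
      [0.0, 1.0, 1.0]]
b1 = [0.0, 0.0, 0.0]
W2 = [[1.0, 0.0],
      [0.0, 1.0],
      [1.0, 1.0]]
b2 = [0.0, 0.0]

ys = position_wise_ffn([[1.0, 2.0], [-1.0, 0.0]], W1, b1, W2, b2)
```

Each position is transformed independently of the others, which is why the same operation can also be described as two convolutions with kernel size 1.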

3.4 Embeddings 和 Softmax:将符号化数据转换为连续向量表示并进行分类概率计算

| Similarly to other sequence transduction models, we use learned embeddings to convert the input tokens and output tokens to vectors of dimension dmodel. We also use the usual learned linear transformation and softmax function to convert the decoder output to predicted next-token probabilities. In our model, we share the same weight matrix between the two embedding layers and the pre-softmax linear transformation, similar to [30]. In the embedding layers, we multiply those weights by √dmodel. |

与其他序列转换模型类似,我们使用学习得到的嵌入,将输入标记和输出标记转换为维度为dmodel的向量。 我们还使用通常的学习的线性变换和softmax函数将解码器输出转换为预测的下一个标记的概率。 在我们的模型中,我们在两个嵌入层和pre-softmax线性变换之间共享相同的权重矩阵,类似于[30]。在嵌入层中,我们将这些权重乘以√dmodel。 |

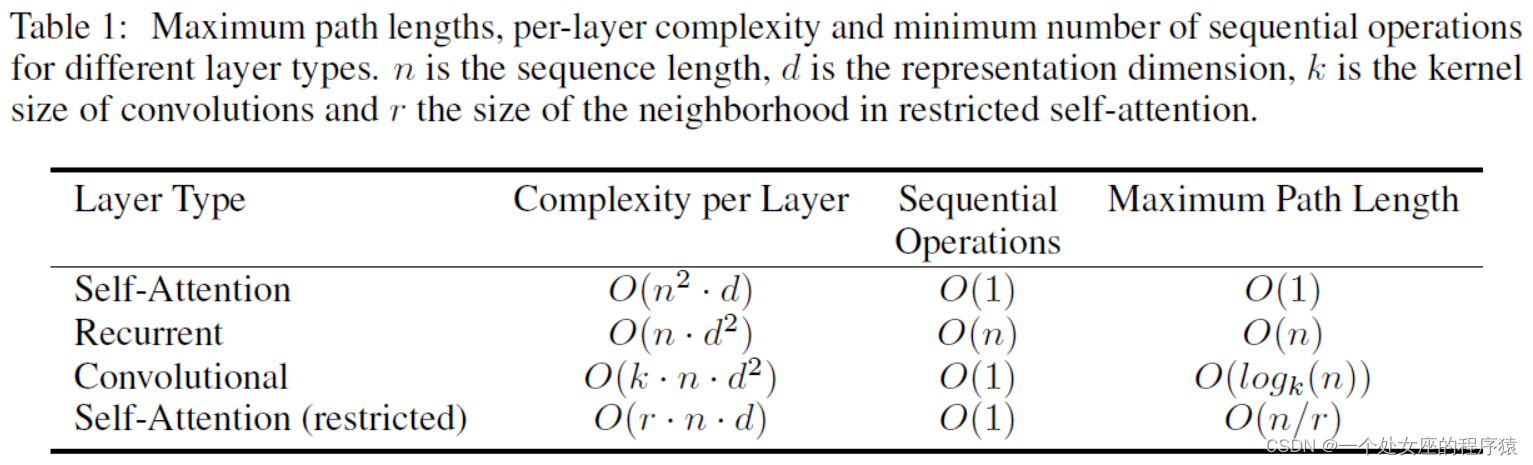

| Table 1: Maximum path lengths, per-layer complexity and minimum number of sequential operations for different layer types. n is the sequence length, d is the representation dimension, k is the kernel size of convolutions and r the size of the neighborhood in restricted self-attention. |

表1:不同层类型的最大路径长度、每层复杂度和最小顺序操作数。N为序列长度,d为表示维数,k为卷积的核大小,r为限制自注意中的邻域大小。 |

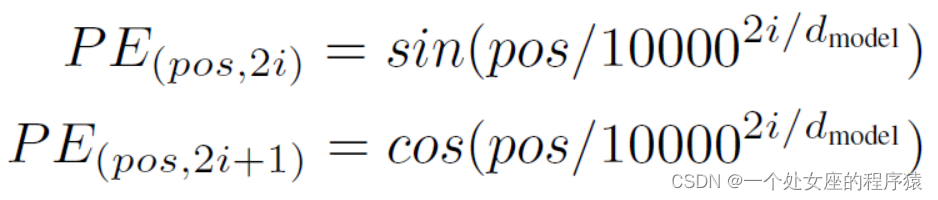

3.5 Positional Encoding位置编码—引入词序信息

位置编码添加到输入嵌入中(直接求和),两种方式(学习获得、固定表示【本文选择了使用不同频率的正弦和余弦函数来构造位置编码→更容易学习到相对位置的关系+能够推广到比训练中遇到的更长的序列长度】)

在序列到序列(Sequence-to-Sequence)的模型中,如 Transformer,由于没有卷积或循环的结构,无法从模型本身获得序列中词语的顺序信息。为了解决这个问题,需要引入额外的信息,即位置编码。

添加位置编码以便模型能够知道每个词语在输入序列中的位置。这样,在进行自注意力机制(Self-Attention)计算时,模型就可以考虑到单词的位置信息。

选择正弦和余弦函数的原因是,猜测这种编码方式可以使模型更容易学习到相对位置的关系。这是因为对于任意固定的偏移 k,位置编码 PE(pos+k) 可以表示为 PE(pos) 的线性函数。这样,模型在进行自注意力计算时,可以通过学习位置编码之间的线性关系来辨别不同位置之间的依赖关系。

| Since our model contains no recurrence and no convolution, in order for the model to make use of the order of the sequence, we must inject some information about the relative or absolute position of the tokens in the sequence. To this end, we add "positional encodings" to the input embeddings at the bottoms of the encoder and decoder stacks. The positional encodings have the same dimension dmodel as the embeddings, so that the two can be summed. There are many choices of positional encodings, learned and fixed [9]. |

由于我们的模型不包含循环和卷积,为了让模型利用序列的顺序信息,我们必须注入关于序列中标记的相对或绝对位置的信息。为此,我们在编码器和解码器堆栈的底部添加了“位置编码”到输入嵌入中。 位置编码与嵌入具有相同的维度dmodel,因此可以进行求和。位置编码有多种选择,有学习得到的和固定的[9]。 |

| In this work, we use sine and cosine functions of different frequencies:

$$PE_{(pos,\,2i)} = \sin\left(pos / 10000^{2i/d_{\text{model}}}\right), \qquad PE_{(pos,\,2i+1)} = \cos\left(pos / 10000^{2i/d_{\text{model}}}\right)$$

where pos is the position and i is the dimension. That is, each dimension of the positional encoding corresponds to a sinusoid. The wavelengths form a geometric progression from 2π to 10000 · 2π. We chose this function because we hypothesized it would allow the model to easily learn to attend by relative positions, since for any fixed offset k, $PE_{pos+k}$ can be represented as a linear function of $PE_{pos}$. |

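The paper uses sine and cosine functions of different frequencies, $PE_{(pos,2i)} = \sin(pos / 10000^{2i/d_{\text{model}}})$ and $PE_{(pos,2i+1)} = \cos(pos / 10000^{2i/d_{\text{model}}})$, where pos is the position and i is the dimension index, so each dimension of the encoding is a sinusoid and the wavelengths form a geometric progression from 2π to 10000·2π. The function was chosen on the hypothesis that it lets the model easily learn to attend by relative position, because for any fixed offset k, $PE_{pos+k}$ is a linear function of $PE_{pos}$. |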
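The sinusoidal encoding is short enough to write out in full. The sketch below is a plain-Python illustration (the function name and the small sizes are arbitrary); it interleaves sine and cosine so that even indices hold the sine terms and odd indices the cosine terms, as in the formulas above.

```python
import math

def positional_encoding(max_len, d_model):
    """Return a max_len x d_model table of sinusoidal position encodings."""
    assert d_model % 2 == 0, "this sketch assumes an even model dimension"
    table = []
    for pos in range(max_len):
        row = []
        for i in range(d_model // 2):
            angle = pos / (10000 ** (2 * i / d_model))
            row.extend([math.sin(angle), math.cos(angle)])  # dims 2i and 2i+1
        table.append(row)
    return table

pe = positional_encoding(max_len=10, d_model=4)
```

The "linear function of a fixed offset" property is the angle-addition identity: for the first sine/cosine pair, the entry at position 8 can be computed from the entries at position 5 using only the sine and cosine of the offset 3, so the mapping from position pos to pos + k is a fixed rotation of each pair.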

| We also experimented with using learned positional embeddings [9] instead, and found that the two versions produced nearly identical results (see Table 3 row (E)). We chose the sinusoidal version because it may allow the model to extrapolate to sequence lengths longer than the ones encountered during training. |

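Learned positional embeddings [9] were also tried and gave nearly identical results (Table 3, row (E)). The sinusoidal version was chosen because it may let the model extrapolate to sequences longer than any seen during training. |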

4 Why Self-Attention为什么使用自注意力

| In this section we compare various aspects of self-attention layers to the recurrent and convolutional layers commonly used for mapping one variable-length sequence of symbol representations (x1, ..., xn) to another sequence of equal length (z1, ..., zn), with $x_i, z_i \in \mathbb{R}^d$, such as a hidden layer in a typical sequence transduction encoder or decoder. Motivating our use of self-attention we consider three desiderata. |

在本节中,我们将自注意力层的各个方面与常用于将一个可变长度符号表示序列(x1,...,xn)映射到另一个等长序列(z1,...,zn)的循环和卷积层进行比较,其中xi,zi ∈ Rd, 例如典型序列转换编码器或解码器中的隐藏层。 在使用自注意力的动机下,我们考虑了三个必要条件。 |

Self-Attention的三个必要条件—神经网络中的三个因素:每层的总计算复杂度、可并行计算量、长依赖关系的路径长度

神经网络中的三个因素:每层的总计算复杂度,可以并行化计算的量(通过最少的顺序操作数量来衡量),以及网络中长程依赖的路径长度。学习长程依赖是许多序列转换任务中的一个关键挑战。影响学习这种依赖能力的一个关键因素是前向和后向信号在网络中必须穿越的路径长度。在输入和输出序列的任意位置组合之间,这些路径越短,学习长程依赖就越容易。因此,我们还比较了由不同层类型组成的网络中任意两个输入和输出位置之间的最大路径长度。

| One is the total computational complexity per layer. Another is the amount of computation that can be parallelized, as measured by the minimum number of sequential operations required. The third is the path length between long-range dependencies in the network. Learning long-range dependencies is a key challenge in many sequence transduction tasks. One key factor affecting the ability to learn such dependencies is the length of the paths forward and backward signals have to traverse in the network. The shorter these paths between any combination of positions in the input and output sequences, the easier it is to learn long-range dependencies [12]. Hence we also compare the maximum path length between any two input and output positions in networks composed of the different layer types. |

其中一个是每层的总计算复杂度。 另一个是可以并行计算的数量,通过所需的最小顺序操作数来衡量。 第三个是网络中长距离依赖之间的路径长度。学习长距离依赖是许多序列转换任务中的一个关键挑战。 影响学习此类依赖性能的一个关键因素是前向和后向信号在网络中必须穿越的路径长度。在输入和输出序列的任意位置组合之间的路径越短,学习长距离依赖性就越容易[12]。因此,我们还比较由不同层类型组成的网络中任意两个输入和输出位置之间的最大路径长度。 |

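Sequential operations versus per-layer complexity: self-attention needs O(1) sequential operations, a recurrent layer needs O(n)

Self-attention layer: it connects all positions with a constant number of sequentially executed operations, so its sequential-operation count is O(1), independent of the sequence length n. That is a statement about parallelism, not about total work: according to Table 1 of the paper, the per-layer complexity is O(n²·d).

Recurrent layer: it must process positions one after another, so it needs O(n) sequential operations, and its per-layer complexity is O(n·d²). Comparing the two complexities, self-attention is cheaper whenever n < d, which is usually the case for the sentence representations (word-piece, byte-pair) used by state-of-the-art translation models.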

| As noted in Table 1, a self-attention layer connects all positions with a constant number of sequentially executed operations, whereas a recurrent layer requires O(n) sequential operations. In terms of computational complexity, self-attention layers are faster than recurrent layers when the sequence length n is smaller than the representation dimensionality d, which is most often the case with sentence representations used by state-of-the-art models in machine translations, such as word-piece [38] and byte-pair [31] representations. To improve computational performance for tasks involving very long sequences, self-attention could be restricted to considering only a neighborhood of size r in the input sequence centered around the respective output position. This would increase the maximum path length to O(n/r). We plan to investigate this approach further in future work. |

如表1所示,自注意力层将所有位置连接到恒定数量的顺序执行操作,而循环层需要O(n)个顺序操作。 在计算复杂度方面,当序列长度n小于表示维度d时,自注意力层比循环层更快,这往往是机器翻译中最先进模型使用的句子表示的情况,例如word-piece词块 [38]和byte-pair字节对 [31]表示。 为了提高涉及非常长序列的任务的计算性能,可以将自注意力限制为只考虑以各自输出位置为中心的输入序列中大小为r的邻域。这将使最大路径长度增加到O(n/r)。我们计划在未来的工作中进一步研究这种方法。 |
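The comparison can be made concrete with a few numbers. The sketch below is a rough illustration: the operation counts are the asymptotic expressions from Table 1 of the paper (O(n²·d) for self-attention, O(n·d²) for a recurrent layer) with all constants dropped, and the helper names are made up.

```python
def layer_costs(n, d):
    """Order-of-magnitude operation counts (constants dropped) for one layer."""
    return {
        "self_attention": {"complexity": n * n * d, "sequential_ops": 1},
        "recurrent": {"complexity": n * d * d, "sequential_ops": n},
    }

def restricted_path_length(n, r):
    """Maximum path length, on the order of n / r, for neighborhood size r."""
    return -(-n // r)  # ceiling division

short = layer_costs(n=50, d=512)     # a typical sentence: n < d
long = layer_costs(n=1024, d=512)    # a long sequence: n > d
hops = restricted_path_length(n=1000, r=100)
```

For n = 50 and d = 512 self-attention does roughly a tenth of the work of the recurrent layer, while for n = 1024 the relation flips; that crossover at n = d is the condition stated in the text.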

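A single convolutional layer with kernel width k < n cannot connect all pairs of positions; stacking layers fixes that but lengthens the longest path. Convolutional layers also cost about k times more than recurrent layers, although separable convolutions reduce this considerably. Even then, a separable convolution with k = n only matches the cost of a self-attention layer plus a point-wise feed-forward layer, and self-attention can additionally yield more interpretable models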

| A single convolutional layer with kernel width k < n does not connect all pairs of input and output positions. Doing so requires a stack of $O(n/k)$ convolutional layers in the case of contiguous kernels, or $O(\log_k(n))$ in the case of dilated convolutions [18], increasing the length of the longest paths between any two positions in the network. Convolutional layers are generally more expensive than recurrent layers, by a factor of k. Separable convolutions [6], however, decrease the complexity considerably, to $O(k \cdot n \cdot d + n \cdot d^2)$. Even with k = n, however, the complexity of a separable convolution is equal to the combination of a self-attention layer and a point-wise feed-forward layer, the approach we take in our model. As side benefit, self-attention could yield more interpretable models. We inspect attention distributions from our models and present and discuss examples in the appendix. Not only do individual attention heads clearly learn to perform different tasks, many appear to exhibit behavior related to the syntactic and semantic structure of the sentences. |

一个核宽度为k < n的卷积层不能连接所有的输入和输出位置对。在相邻核的情况下,这样做需要O(n/k)个卷积层的堆栈,在扩展卷积的情况下需要O(logk(n))个卷积层的堆栈[18],从而增加网络中任意两个位置之间最长路径的长度。卷积层通常比循环层的成本高k倍。 然而,可分离卷积[6]显著减少了复杂性,为O(k·n·d + n·d2)。然而,即使对于k = n,可分离卷积的复杂性也等于自注意力层和逐点前馈层的组合,这是我们模型采用的方法。 作为附加好处,自注意力可以产生更可解释的模型。我们检查来自我们的模型的注意力分布,并在附录中展示和讨论示例。 不仅每个注意力头明显学习执行不同的任务,许多注意力头部似乎还表现出与句子的句法和语义结构相关的行为。 |

5 Training训练

| This section describes the training regime for our models. |

本节描述了我们模型的训练方案。 |

5.1 Training Data and Batching训练数据和批处理

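WMT 2014 English-German (about 4.5M sentence pairs, byte-pair encoding, shared vocabulary of about 37K tokens); WMT 2014 English-French (36M sentences, 32K word-piece vocabulary)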

| We trained on the standard WMT 2014 English-German dataset consisting of about 4.5 million sentence pairs. Sentences were encoded using byte-pair encoding [3], which has a shared source-target vocabulary of about 37000 tokens. For English-French, we used the significantly larger WMT 2014 English-French dataset consisting of 36M sentences and split tokens into a 32000 word-piece vocabulary [38]. Sentence pairs were batched together by approximate sequence length. Each training batch contained a set of sentence pairs containing approximately 25000 source tokens and 25000 target tokens. |

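Training used the standard WMT 2014 English-German dataset (about 4.5 million sentence pairs), encoded with byte-pair encoding [3] into a shared source-target vocabulary of about 37,000 tokens. For English-French, the much larger WMT 2014 English-French dataset (36M sentences) was used, with tokens split into a 32,000-entry word-piece vocabulary [38]. Sentence pairs were batched by approximate sequence length, and each batch held roughly 25,000 source tokens and 25,000 target tokens. |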

5.2 Hardware and Schedule硬件和计划:8*P100~3.5天

| We trained our models on one machine with 8 NVIDIA P100 GPUs. For our base models using the hyperparameters described throughout the paper, each training step took about 0.4 seconds. We trained the base models for a total of 100,000 steps or 12 hours. For our big models (described on the bottom line of Table 3), step time was 1.0 seconds. The big models were trained for 300,000 steps (3.5 days). |

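All models were trained on one machine with 8 NVIDIA P100 GPUs. The base models, with the hyperparameters described throughout the paper, took about 0.4 seconds per training step and were trained for 100,000 steps (12 hours). The big models (bottom line of Table 3) took 1.0 seconds per step and were trained for 300,000 steps (3.5 days). |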
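The quoted wall-clock times follow from the step counts and step times by simple arithmetic, which the small helper below spells out. It is only a consistency check: `training_time` is a made-up name, and the step times are the approximate figures quoted above, so the results are approximate too.

```python
def training_time(steps, seconds_per_step):
    """Convert a step count and a per-step time into hours and days."""
    seconds = steps * seconds_per_step
    return {"hours": seconds / 3600, "days": seconds / 86400}

base = training_time(steps=100_000, seconds_per_step=0.4)  # base model
big = training_time(steps=300_000, seconds_per_step=1.0)   # big model
```

The base model works out to a little over 11 hours, consistent with the stated 12 hours given that 0.4 seconds is an approximate step time, and the big model to just under 3.5 days.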

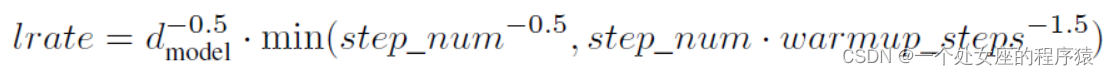

5.3 Optimizer优化器:Adam优化器+warmup技术调整学习率

| We used the Adam optimizer [20] with $\beta_1 = 0.9$, $\beta_2 = 0.98$ and $\epsilon = 10^{-9}$. We varied the learning rate over the course of training, according to the formula:

$$lrate = d_{\text{model}}^{-0.5} \cdot \min\left(step\_num^{-0.5},\; step\_num \cdot warmup\_steps^{-1.5}\right)$$

This corresponds to increasing the learning rate linearly for the first warmup_steps training steps, and decreasing it thereafter proportionally to the inverse square root of the step number. We used warmup_steps = 4000. |

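Adam [20] is used with $\beta_1 = 0.9$, $\beta_2 = 0.98$ and $\epsilon = 10^{-9}$, and the learning rate follows $lrate = d_{\text{model}}^{-0.5} \cdot \min(step\_num^{-0.5},\ step\_num \cdot warmup\_steps^{-1.5})$: it rises linearly over the first warmup_steps training steps and then decays in proportion to the inverse square root of the step number. The paper uses warmup_steps = 4000. |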
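The schedule is a one-line function, shown here as a small sketch. The function name `lrate` simply mirrors the symbol in the formula, the defaults are the base-model values quoted in the text, and the step counter is assumed to start at 1 (the formula is undefined at step 0).

```python
def lrate(step_num, d_model=512, warmup_steps=4000):
    """Learning rate: linear warmup, then inverse-square-root decay."""
    if step_num < 1:
        raise ValueError("step_num starts at 1")
    return d_model ** -0.5 * min(step_num ** -0.5,
                                 step_num * warmup_steps ** -1.5)

peak = lrate(4000)        # the two branches of min() meet at step == warmup_steps
halfway = lrate(2000)     # still warming up
later = lrate(16000)      # decaying as 1 / sqrt(step)
```

With these defaults the peak is about 7.0e-4 at step 4000; halfway through warmup the rate is half the peak, and it falls back to half the peak again at step 16000, four times the warmup length.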

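5.4 Regularization: Residual Dropout (applied to each sub-layer output before the residual add and layer normalization) and Label Smoothing (hurts perplexity, i.e. raises it, but improves accuracy and BLEU)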

| We employ three types of regularization during training: |

我们在训练期间采用了三种类型的正则化: |

| Residual Dropout We apply dropout [33] to the output of each sub-layer, before it is added to the sub-layer input and normalized. In addition, we apply dropout to the sums of the embeddings and the positional encodings in both the encoder and decoder stacks. For the base model, we use a rate of Pdrop = 0.1. |

Residual Dropout残差丢弃:我们将Dropout[33]应用于每个子层的输出,然后将其添加到子层输入并归一化。此外,我们将dropout应用于编码器和解码器堆栈中的嵌入和位置编码之和。对于基本模型,我们使用丢弃率Pdrop = 0.1。 |
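The order of operations described above is: apply dropout to the sub-layer output, add the result to the sub-layer input, then normalize. A minimal pure-Python sketch on a single vector follows. It makes several assumptions that the text does not specify: dropout is the "inverted" variant that rescales surviving values, layer normalization omits its learned gain and bias, and `double` is a hypothetical stand-in for a real attention or feed-forward sub-layer.

```python
import math
import random
from typing import Callable, List, Optional

Vector = List[float]


def dropout(x: Vector, p_drop: float, rng: random.Random, training: bool = True) -> Vector:
    """Inverted dropout (an assumption): zero each element with probability
    p_drop and rescale survivors so the expected value is unchanged."""
    if not training or p_drop == 0.0:
        return list(x)
    keep = 1.0 - p_drop
    return [v / keep if rng.random() < keep else 0.0 for v in x]


def layer_norm(x: Vector, eps: float = 1e-6) -> Vector:
    """Normalize to zero mean and unit variance (learned gain/bias omitted)."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]


def residual_sublayer(x: Vector, sublayer: Callable[[Vector], Vector],
                      p_drop: float = 0.1, rng: Optional[random.Random] = None,
                      training: bool = True) -> Vector:
    """LayerNorm(x + Dropout(Sublayer(x)))."""
    rng = rng or random.Random(0)
    dropped = dropout(sublayer(x), p_drop, rng, training)
    return layer_norm([a + b for a, b in zip(x, dropped)])


x = [1.0, 2.0, 3.0, 4.0]
double = lambda v: [2.0 * t for t in v]  # hypothetical stand-in sub-layer
print(residual_sublayer(x, double, training=False))  # dropout disabled
print(residual_sublayer(x, double, training=True))   # dropout active
```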

| Label Smoothing During training, we employed label smoothing of value ϵls = 0.1 [36]. This hurts perplexity, as the model learns to be more unsure, but improves accuracy and BLEU score. |

标签平滑:在训练过程中,我们采用了值为ϵls = 0.1的标签平滑[36]。这会损害困惑度(困惑度变差),因为模型学会了更加不确定,但会提高准确率和BLEU分数。 |
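The trade-off described above can be seen in a toy example: under smoothed targets a slightly hedged prediction gets a lower training loss than a very confident one, even though its perplexity on the true label is worse. The sketch assumes one common smoothing convention (1 − ϵls on the true class, the remainder spread evenly over the other classes). The source does not say which variant was used, and the two prediction vectors are made up for illustration.

```python
import math
from typing import List


def smooth_labels(target: int, vocab_size: int, eps_ls: float = 0.1) -> List[float]:
    """Put 1 - eps_ls on the true class and spread eps_ls over the rest
    (one common convention, assumed here)."""
    off_value = eps_ls / (vocab_size - 1)
    dist = [off_value] * vocab_size
    dist[target] = 1.0 - eps_ls
    return dist


def cross_entropy(target_dist: List[float], predicted: List[float]) -> float:
    """Cross-entropy in nats; terms with zero target mass are skipped."""
    return -sum(t * math.log(p) for t, p in zip(target_dist, predicted) if t > 0.0)


one_hot = [1.0, 0.0, 0.0, 0.0]
smoothed = smooth_labels(target=0, vocab_size=4)

confident = [0.97, 0.01, 0.01, 0.01]  # hypothetical very confident prediction
hedged = [0.85, 0.05, 0.05, 0.05]     # hypothetical less certain prediction

for name, pred in (("confident", confident), ("hedged", hedged)):
    ppl = math.exp(cross_entropy(one_hot, pred))  # perplexity on the true label
    loss = cross_entropy(smoothed, pred)          # loss under smoothed targets
    print(f"{name:>9}: perplexity = {ppl:.3f}, smoothed loss = {loss:.3f}")
```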

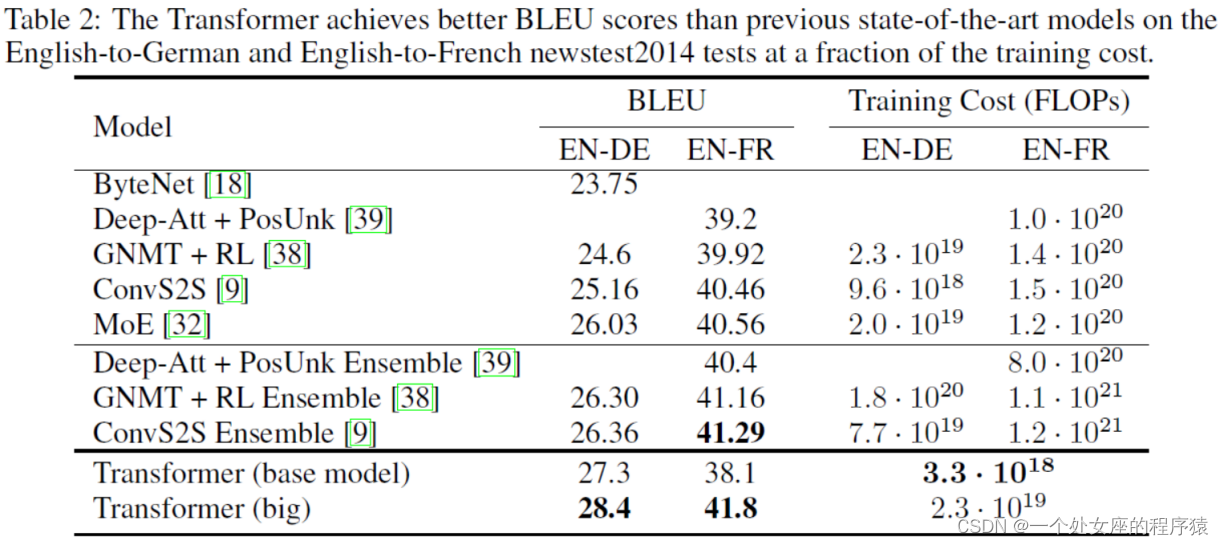

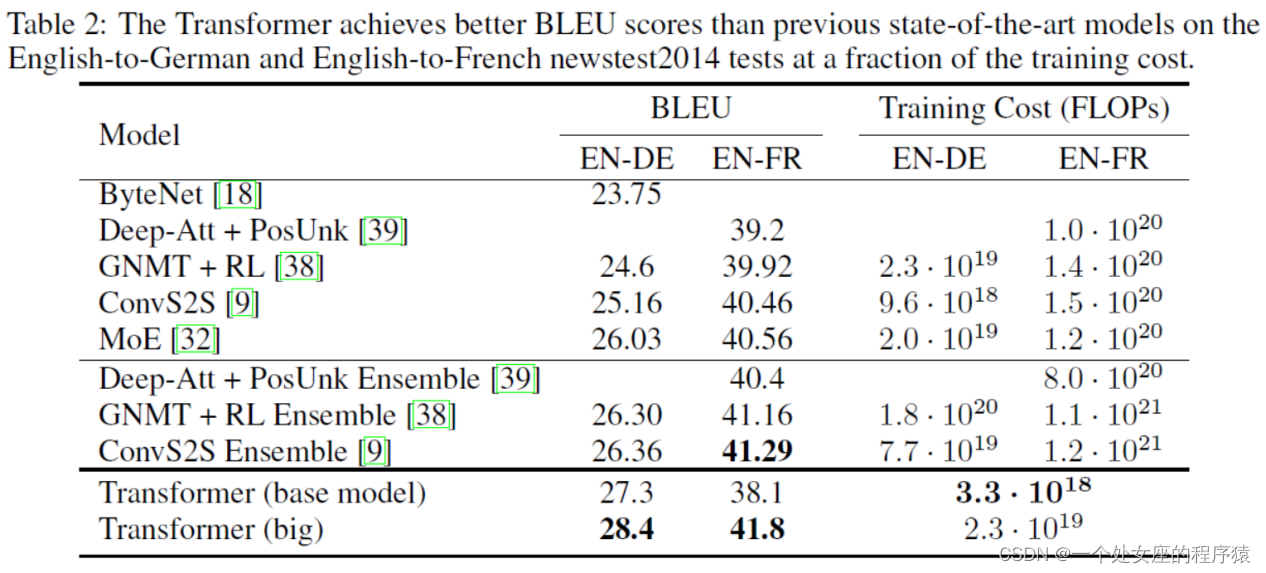

| Table 2: The Transformer achieves better BLEU scores than previous state-of-the-art models on the English-to-German and English-to-French newstest2014 tests at a fraction of the training cost. |

表2:Transformer在英语-德语和英语-法语newstest2014测试中取得了比以前的最先进模型更好的BLEU分数,而训练成本仅为它们的一小部分。 |

6 Results结果

6.1 Machine Translation机器翻译

WMT 2014 英德翻译任务(达到SOTA,8*P100~3.5天+训练成本极低)、WMT 2014英法翻译任务(达到SOTA,1/4训练成本)

| On the WMT 2014 English-to-German translation task, the big transformer model (Transformer (big) in Table 2) outperforms the best previously reported models (including ensembles) by more than 2.0 BLEU, establishing a new state-of-the-art BLEU score of 28.4. The configuration of this model is listed in the bottom line of Table 3. Training took 3.5 days on 8 P100 GPUs. Even our base model surpasses all previously published models and ensembles, at a fraction of the training cost of any of the competitive models. On the WMT 2014 English-to-French translation task, our big model achieves a BLEU score of 41.0, outperforming all of the previously published single models, at less than 1/4 the training cost of the previous state-of-the-art model. The Transformer (big) model trained for English-to-French used dropout rate Pdrop = 0.1, instead of 0.3. |

在WMT 2014 英德翻译任务中,大型Transformer模型(表2中的Transformer (big))比先前报道的最佳模型(包括集成模型)高出2.0 BLEU以上,创造了28.4的新的最先进BLEU分数。该模型的配置列在表3的最后一行。训练在8个P100 GPU上耗时3.5天。即使是我们的基础模型也超过了之前发布的所有模型和集成模型,而训练成本只是任何一个竞争模型的一小部分。 在WMT 2014英法翻译任务中,我们的大型模型在训练成本不到之前最先进模型的四分之一的情况下,实现了41.0的BLEU得分,超过了先前发布的所有单一模型。英法翻译的Transformer(大型)模型使用了dropout率Pdrop = 0.1,而不是0.3。 |

| For the base models, we used a single model obtained by averaging the last 5 checkpoints, which were written at 10-minute intervals. For the big models, we averaged the last 20 checkpoints. We used beam search with a beam size of 4 and length penalty α = 0.6 [38]. These hyperparameters were chosen after experimentation on the development set. We set the maximum output length during inference to input length + 50, but terminate early when possible [38]. |

对于基础模型,我们使用了对最后5个检查点取平均得到的单一模型,这些检查点是以10分钟为间隔保存的。对于大型模型,我们对最后20个检查点取平均。我们使用了beam search束搜索,beam束大小为4,长度惩罚参数α = 0.6 [38]。这些超参数是在开发集上经过实验后选定的。我们将推理期间的最大输出长度设置为输入长度+50,但在可能时提前终止 [38]。 |
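Two of the inference details above lend themselves to a short sketch: checkpoint averaging and the length penalty. The length-penalty formula is the one from the cited reference [38], lp(Y) = ((5 + |Y|) / 6)^α. How exactly it is wired into the authors' beam search is not stated here, so treat the scoring function as an assumption. The dictionary-of-lists parameter format and all function names are hypothetical.

```python
from typing import Dict, List

Params = Dict[str, List[float]]  # hypothetical flat parameter format


def average_checkpoints(checkpoints: List[Params]) -> Params:
    """Element-wise mean of the same parameters across several checkpoints."""
    n = len(checkpoints)
    return {
        name: [sum(values) / n for values in zip(*(c[name] for c in checkpoints))]
        for name in checkpoints[0]
    }


def length_penalty(length: int, alpha: float = 0.6) -> float:
    """lp(Y) = ((5 + |Y|) / 6) ** alpha, following reference [38]."""
    return ((5.0 + length) / 6.0) ** alpha


def rescored(log_prob: float, length: int, alpha: float = 0.6) -> float:
    """Length-normalized hypothesis score used to rank beam candidates."""
    return log_prob / length_penalty(length, alpha)


def max_output_length(input_length: int, extra: int = 50) -> int:
    """Decoding stops at input length + 50 at the latest."""
    return input_length + extra


ckpts = [{"w": [1.0, 2.0]}, {"w": [3.0, 4.0]}]
print(average_checkpoints(ckpts))
print(rescored(-6.0, length=5), rescored(-6.0, length=10))
print(max_output_length(30))
```

With equal log-probabilities, the longer hypothesis receives the higher score, which counteracts beam search's bias toward short outputs.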

| Table 2 summarizes our results and compares our translation quality and training costs to other model architectures from the literature. We estimate the number of floating point operations used to train a model by multiplying the training time, the number of GPUs used, and an estimate of the sustained single-precision floating-point capacity of each GPU (see footnote 5). |

表2总结了我们的结果,并将我们的翻译质量和训练成本与文献中的其他模型架构进行了比较。我们通过将训练时间、使用的GPU数量以及每个GPU的持续单精度浮点运算能力的估计值相乘,来估计训练模型所用的浮点运算次数(见原文脚注5)。 |
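The cost estimate described above is a product of three numbers, so it can be reproduced in a few lines. The figure of 9.5 TFLOPS for a P100 comes from the paper's footnote 5, not from this section, and is used here as an assumption. The function name `training_flops` is hypothetical.

```python
def training_flops(hours: float, num_gpus: int, sustained_tflops: float) -> float:
    """Estimated training FLOPs = training time x number of GPUs x sustained FLOP/s per GPU."""
    return hours * 3600.0 * num_gpus * sustained_tflops * 1e12


P100_TFLOPS = 9.5  # assumed sustained single-precision throughput (paper's footnote 5)

base = training_flops(12, 8, P100_TFLOPS)       # base model: 12 hours on 8 GPUs
big = training_flops(3.5 * 24, 8, P100_TFLOPS)  # big model: 3.5 days on 8 GPUs

print(f"base: {base:.1e} FLOPs")
print(f"big:  {big:.1e} FLOPs")
```

These work out to roughly 3.3e18 and 2.3e19 FLOPs, the same order of magnitude as the training costs the paper lists for the two Transformer variants.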

6.2 Model Variations模型变体

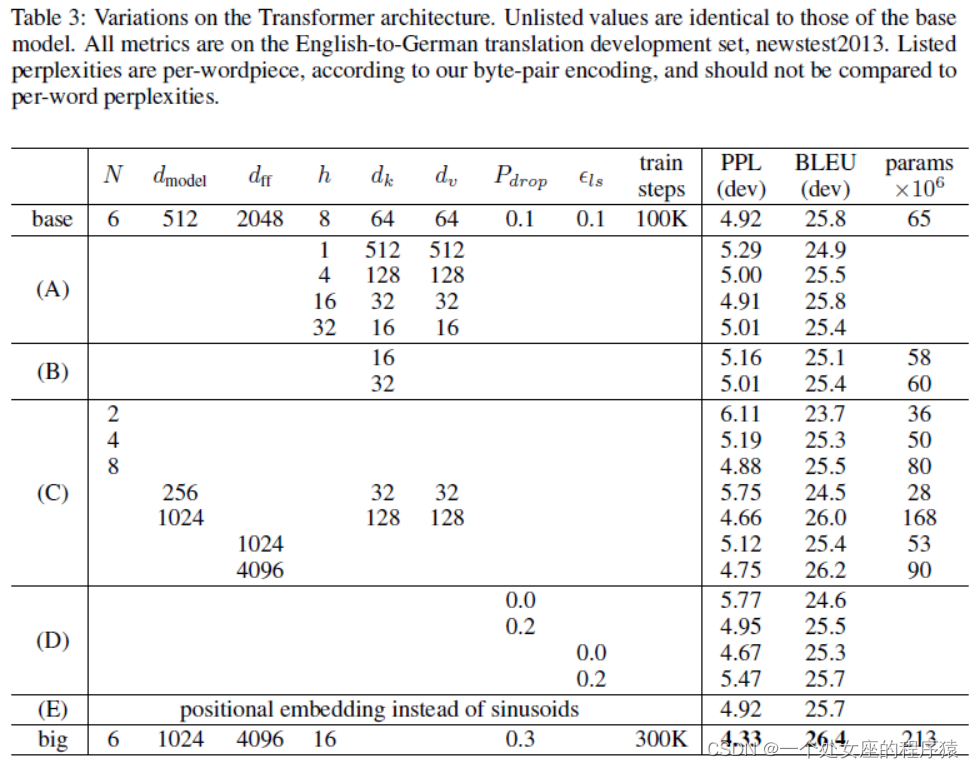

| To evaluate the importance of different components of the Transformer, we varied our base model in different ways, measuring the change in performance on English-to-German translation on the development set, newstest2013. We used beam search as described in the previous section, but no checkpoint averaging. We present these results in Table 3. |

为了评估Transformer不同组件的重要性,我们以不同的方式对基础模型进行了变化,并在开发集newstest2013上测量了英语到德语翻译的性能变化。我们使用了前一节中描述的beam search束搜索,但没有进行检查点平均。我们在表3中呈现了这些结果。 |

优化技巧:多头比单头好(过多也会损害模型)、比点积更复杂的兼容性函数、更大的模型、采用dropout、也可采用基于学习的位置嵌入

| In Table 3 rows (A), we vary the number of attention heads and the attention key and value dimensions, keeping the amount of computation constant, as described in Section 3.2.2. While single-head attention is 0.9 BLEU worse than the best setting, quality also drops off with too many heads. In Table 3 rows (B), we observe that reducing the attention key size dk hurts model quality. This suggests that determining compatibility is not easy and that a more sophisticated compatibility function than dot product may be beneficial. We further observe in rows (C) and (D) that, as expected, bigger models are better, and dropout is very helpful in avoiding over-fitting. In row (E) we replace our sinusoidal positional encoding with learned positional embeddings [9], and observe nearly identical results to the base model. |

在表3行(A)中,我们在保持计算量不变的情况下,改变注意头的数量以及注意键和值维度,如3.2.2节所述。虽然单头注意力比最佳设置差0.9 BLEU,但过多的头也会降低质量。 在表3的(B)行中,我们观察到减小注意力键的大小dk会降低模型质量。这表明确定兼容性并不容易,比点积更复杂的兼容性函数可能是有益的。我们在(C)和(D)行中进一步观察到,正如预期的那样,更大的模型更好,并且dropout对于避免过度拟合非常有帮助。在(E)行中,我们用学习的位置嵌入替换正弦位置编码[9],并观察到与基本模型几乎相同的结果。 |
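Rows (A) keep the amount of computation constant by shrinking the per-head dimensions as the number of heads grows, following d_k = d_v = d_model / h from Section 3.2.2. A minimal sketch follows, assuming the base model's d_model = 512, which this section does not restate. The helper name `head_dims` is hypothetical.

```python
from typing import Tuple


def head_dims(num_heads: int, d_model: int = 512) -> Tuple[int, int]:
    """Per-head key and value sizes when the total width is held at d_model."""
    if d_model % num_heads != 0:
        raise ValueError("d_model must be divisible by the number of heads")
    d_k = d_v = d_model // num_heads
    return d_k, d_v


for h in (1, 4, 8, 16, 32):
    d_k, d_v = head_dims(h)
    print(f"h = {h:>2}: d_k = {d_k:>3}, d_v = {d_v:>3}, h * d_k = {h * d_k}")
```

Because h · d_k stays equal to d_model, more heads means each head works with a smaller key size. This ties in with the row (B) observation that a small d_k hurts quality.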

6.3 English Constituency Parsing英语句法分析

评估Transformer泛化性:基于WSJ的40K训练句子(训练4层Transformer)【16K的token词汇表】+半监督的17M训练句子【32K的token词汇表】

| To evaluate if the Transformer can generalize to other tasks we performed experiments on English constituency parsing. This task presents specific challenges: the output is subject to strong structural constraints and is significantly longer than the input. Furthermore, RNN sequence-to-sequence models have not been able to attain state-of-the-art results in small-data regimes [37]. We trained a 4-layer transformer with dmodel = 1024 on the Wall Street Journal (WSJ) portion of the Penn Treebank [25], about 40K training sentences. We also trained it in a semi-supervised setting, using the larger high-confidence and BerkeleyParser corpora with approximately 17M sentences [37]. We used a vocabulary of 16K tokens for the WSJ only setting and a vocabulary of 32K tokens for the semi-supervised setting. |

为了评估Transformer是否能推广到其他任务,我们在英语句法分析上进行了实验。这个任务具有特定的挑战:输出受到强烈的结构约束,并且比输入长得多。此外,RNN序列到序列模型在小数据场景下还不能获得最先进的结果[37]。 我们在Penn Treebank [25]的华尔街日报(WSJ)部分上训练了一个4层的Transformer,dmodel = 1024,约有40K个训练句子。我们还在半监督设置下进行了训练,使用了更大的高置信度和BerkeleyParser语料库,其中包含约17M个句子[37]。 对于仅使用WSJ的设置,我们使用了16K个标记的词汇表, 对于半监督设置,我们使用了32K个标记的词汇表。 |

句法分析表现出人意料地好:少量实验+dropout技术+推理阶段最大输出长度设为输入长度+300

| We performed only a small number of experiments to select the dropout, both attention and residual (section 5.4), learning rates and beam size on the Section 22 development set, all other parameters remained unchanged from the English-to-German base translation model. During inference, we increased the maximum output length to input length + 300. We used a beam size of 21 and α = 0.3 for both WSJ only and the semi-supervised setting. |

我们只进行了少量实验,选择了dropout(包括注意力和残差),学习率和beam size,这些实验是在Section 22开发集上进行的,其他参数与英德基础翻译模型保持不变。 在推理过程中,我们将最大输出长度增加到输入长度+300。对于WSJ的设置和半监督设置,我们都使用了beam size为21和α = 0.3。 |

| Our results in Table 4 show that despite the lack of task-specific tuning our model performs surprisingly well, yielding better results than all previously reported models with the exception of the Recurrent Neural Network Grammar [8]. In contrast to RNN sequence-to-sequence models [37], the Transformer outperforms the Berkeley-Parser [29] even when training only on the WSJ training set of 40K sentences. |

我们在表4中的结果表明,尽管缺乏针对任务的调优,我们的模型表现出人意料地好,结果优于除循环神经网络语法(Recurrent Neural Network Grammar)[8]之外的所有先前报道的模型。 与RNN序列到序列模型[37]不同,Transformer即使只在40K个句子的WSJ训练集上训练,也优于Berkeley-Parser[29]。 |

7 Conclusion结论

提出Transformer:一种完全基于注意力的序列转换模型+ED架构中采用多头自注意力替代循环层

| In this work, we presented the Transformer, the first sequence transduction model based entirely on attention, replacing the recurrent layers most commonly used in encoder-decoder architectures with multi-headed self-attention. |

在这项工作中,我们提出了Transformer,这是第一个完全基于注意力的序列转换模型,用多头自注意力来取代了编码器-解码器架构中最常用的循环层。 |

Transformer速度更快+性能最优:它的训练速度明显快于基于循环层或卷积层的体系结构

| For translation tasks, the Transformer can be trained significantly faster than architectures based on recurrent or convolutional layers. On both WMT 2014 English-to-German and WMT 2014 English-to-French translation tasks, we achieve a new state of the art. In the former task our best model outperforms even all previously reported ensembles. We are excited about the future of attention-based models and plan to apply them to other tasks. We plan to extend the Transformer to problems involving input and output modalities other than text and to investigate local, restricted attention mechanisms to efficiently handle large inputs and outputs such as images, audio and video. Making generation less sequential is another research goal of ours. |

对于翻译任务,Transformer的训练速度明显快于基于循环层或卷积层的体系结构。在WMT 2014英语到德语和WMT 2014英语到法语翻译任务上,我们都取得了新的最先进水平。在前一个任务中,我们的最佳模型甚至优于所有先前报道的集成模型。 我们对基于注意力的模型的未来充满期待,并计划将其应用于其他任务。我们计划将Transformer扩展到涉及文本以外的输入和输出模态的问题,并研究局部的、受限的注意力机制,以高效处理图像、音频和视频等大规模输入和输出。降低生成过程的顺序性是我们的另一个研究目标。 |

| The code we used to train and evaluate our models is available at https://github.com/tensorflow/tensor2tensor. |

我们用于训练和评估模型的代码可在https://github.com/tensorflow/tensor2tensor上找到。 |

Acknowledgements致谢

| We are grateful to Nal Kalchbrenner and Stephan Gouws for their fruitful comments, corrections and inspiration. |

我们感谢Nal Kalchbrenner和Stephan Gouws提供的富有成效的意见、修正和启发。 |