一、交叉验证

交叉验证(cross validation):将拿到的训练数据分为训练和验证集,以下图为例,将数据分成4份,其中一份作为验证集,经过4次(组)的测试,每次都更换不同的验证集,即得到4组模型的结果,取平均值作为最终结果,又称4折交叉验证

交叉验证目的:为了让被评估的模型更加准确可信,交叉验证不能提高模型的准确率

为了让从训练得到模型结果更加准确,分割数据为

- 训练集:训练集+验证集

- 测试集:测试集

二、网格搜索

网格搜索(Grid Search):通常情况下,有很多参数需要手动指定的(如k-近邻算法中的K值),这种参数叫超参数。但是手动过程繁杂,所以需要对模型预设几种超参数组合,每组超参数都采用交叉验证来进行评估,最后选出最优参数组合建立模型 ,即把超参数的值通过字典形式传入,然后选择最优值

交叉验证,网格搜索(模型选择与调优)API:

交叉验证,网格搜索(模型选择与调优)API:

- sklearn.model_selection.GridSearchCV(estimator, param_grid=None,cv=None):对估计器的指定参数值进行详尽搜索

- estimator:估计器对象

- param_grid:估计器参数(dict){“n_neighbors”:[1,3,5]}

- cv:指定几折交叉验证

- fit:输入训练数据

- score:准确率

- 结果分析:

- bestscore__:在交叉验证中验证的最好结果

- bestestimator:最好的参数模型

- cvresults:每次交叉验证后的验证集准确率结果和训练集准确率结果

三、鸢尾花案例增加K值调优

使用GridSearchCV构建估计器

from sklearn.datasets import load_iris

from sklearn.model_selection import train_test_split, GridSearchCV

from sklearn.preprocessing import StandardScaler

from sklearn.neighbors import KNeighborsClassifier # 导入模块

# 1.获取数据集

iris = load_iris()

# 2.数据基本处理

x_train, x_test, y_train, y_test = train_test_split(iris.data, iris.target, random_state=22) # 划分数据集

# 3.特征工程:标准化

transfer = StandardScaler() # 实例化一个转换器类

x_train = transfer.fit_transform(x_train) # 调用fit_transform

x_test = transfer.transform(x_test)

# 4.KNN预估器流程

estimator = KNeighborsClassifier(n_neighbors=1) # 实例化预估器类

# 模型选择与调优——网格搜索和交叉验证

param_dict = {"n_neighbors": [1, 3, 5, 7]} # 准备要调的超参数

estimator = GridSearchCV(estimator, param_grid=param_dict, cv=3) # estimator:选择了哪个训练模型,cv为几折交叉验证

estimator.fit(x_train, y_train) # fit数据进行训练

# 5.评估模型效果

# 方法a:比对预测结果和真实值

y_predict = estimator.predict(x_test)

print('预测值是:\n', y_predict)

print("比对预测结果和真实值:\n", y_predict == y_test)

# 方法b:直接计算准确率

score = estimator.score(x_test, y_test)

print("直接计算准确率:\n", score)

# 评估查看最终选择的结果和交叉验证的结果

print("最好的参数模型:\n", estimator.best_estimator_)

print("在交叉验证中验证的最好结果:\n", estimator.best_score_)

print("每次交叉验证后的准确率结果:\n", estimator.cv_results_)

----------------------------------------------------------------

输出:

预测值是:

[0 2 1 2 1 1 1 1 1 0 2 1 2 2 0 2 1 1 1 1 0 2 0 1 2 0 2 2 2 2 0 0 1 1 1 0 0

0]

比对预测结果和真实值:

[ True True True True True True True False True True True True

True True True True True True False True True True True True

True True True True True True True True True True True True

True True]

直接计算准确率:

0.9473684210526315

最好的参数模型:

KNeighborsClassifier()

在交叉验证中验证的最好结果:

0.9732100521574205

每次交叉验证后的准确率结果:

{'mean_fit_time': array([0.00033259, 0.00031996, 0.00066495, 0.00060987]), 'std_fit_time': array([0.00047036, 0.00045249, 0.00047019, 0.00043647]), 'mean_score_time': array([0.00166217, 0.00200717, 0.00171733, 0.00133022]), 'std_score_time': array([4.70134086e-04, 1.72589729e-05, 5.13878713e-04, 4.70639818e-04]), 'param_n_neighbors': masked_array(data=[1, 3, 5, 7],

mask=[False, False, False, False],

fill_value='?',

dtype=object), 'params': [{'n_neighbors': 1}, {'n_neighbors': 3}, {'n_neighbors': 5}, {'n_neighbors': 7}], 'split0_test_score': array([0.97368421, 0.97368421, 0.97368421, 0.97368421]), 'split1_test_score': array([0.97297297, 0.97297297, 0.97297297, 0.97297297]), 'split2_test_score': array([0.94594595, 0.89189189, 0.97297297, 0.97297297]), 'mean_test_score': array([0.96420104, 0.94618303, 0.97321005, 0.97321005]), 'std_test_score': array([0.01291157, 0.03839073, 0.00033528, 0.00033528]), 'rank_test_score': array([3, 4, 1, 1])}四、Facebook人造世界数据预测

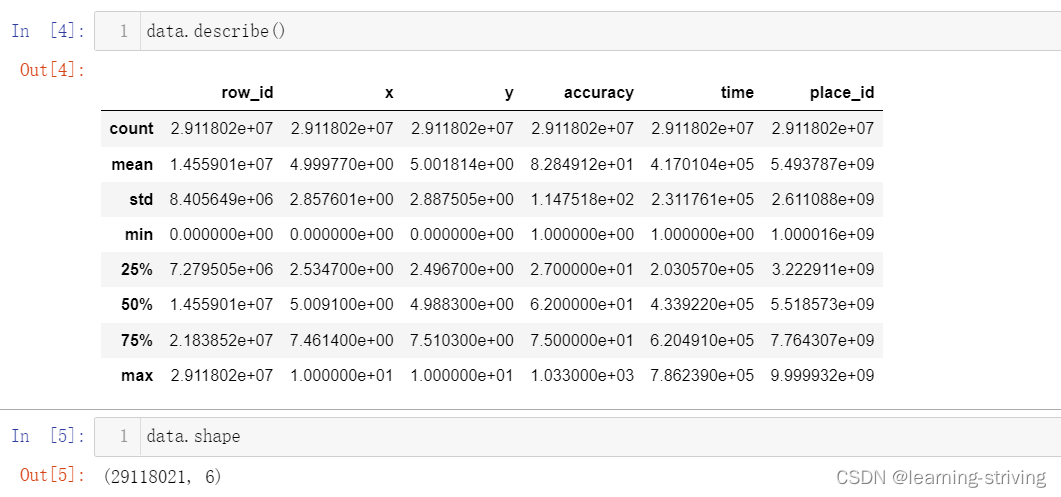

train.csv, test.csv数据获取官网:grid_knn | Kaggle

train.csv, test.csv数据文件内容如下

- row_ id:登记事件的id

- x,y:坐标

- accuracy: 定位准确性

- time: 时间戳

- place. _id:业务的id,这是预测目标

- 基本步骤

- 数据处理

- 缩小数据集范围 DataFrame.query()

- 选取有用的时间特征

- 将签到位置少于n个用户的删除

- 分割数据集

- 标准化处理

- k-近邻预测

- 数据处理

数据介绍:根据用户的位置,准确性和时间戳预测用户正在查看的业务

代码如下

代码如下

import pandas as pd

from sklearn.model_selection import train_test_split, GridSearchCV

from sklearn.preprocessing import StandardScaler

from sklearn.neighbors import KNeighborsClassifier # 导入模块

# 1、获取数据集

facebook = pd.read_csv("../data/train.csv")

# 2.基本数据处理

facebook_data = facebook.query("x>2.0 & x<2.5 & y>2.0 & y<2.5") # 缩小数据范围

time = pd.to_datetime(facebook_data["time"], unit="s") # 选择时间特征

time = pd.DatetimeIndex(time)

facebook_data["day"] = time.day

facebook_data["hour"] = time.hour

facebook_data["weekday"] = time.weekday

# 去掉签到较少的地方

place_count = facebook_data.groupby("place_id").count()

place_count = place_count[place_count["row_id"] > 3]

facebook_data = facebook_data[facebook_data["place_id"].isin(place_count.index)]

x = facebook_data[["x", "y", "accuracy", "day", "hour", "weekday"]] # 确定特征值和目标值

y = facebook_data["place_id"]

x_train, x_test, y_train, y_test = train_test_split(x, y, random_state=22) # 分割数据集

# 3.特征工程--特征预处理(标准化)

transfer = StandardScaler() # 实例化一个转换器

x_train = transfer.fit_transform(x_train) # 调用fit_transform

x_test = transfer.fit_transform(x_test)

# 4.机器学习--knn+cv

estimator = KNeighborsClassifier() # 实例化一个估计器

param_grid = {"n_neighbors": [1, 3, 5, 7, 9]} # 准备要调的超参数

estimator = GridSearchCV(estimator, param_grid=param_grid, cv=10) # 调用gridsearchCV,10折交叉验证

estimator.fit(x_train, y_train) # 模型训练

# 5.模型评估

# 基本评估方式

score = estimator.score(x_test, y_test)

print("最后预测的准确率为:\n", score)

y_predict = estimator.predict(x_test)

print("最后的预测值为:\n", y_predict)

print("预测值和真实值的对比情况:\n", y_predict == y_test)

# 使用交叉验证后的评估方式

print("在交叉验证中验证的最好结果:\n", estimator.best_score_)

print("最好的参数模型:\n", estimator.best_estimator_)

print("每次交叉验证后的验证集准确率结果和训练集准确率结果:\n", estimator.cv_results_)

----------------------------------------------------------

输出:

最后预测的准确率为:

0.36804111804111805

最后的预测值为:

[9983648790 5806536504 9674001925 ... 1247398579 3455925971 5100539171]

预测值和真实值的对比情况:

24703810 True

19445902 False

18490063 True

7762709 False

6505956 False

...

27632888 False

23367671 False

6692268 False

25834435 False

13319005 False

Name: place_id, Length: 17316, dtype: bool

在交叉验证中验证的最好结果:

0.36276655562074106

最好的参数模型:

KNeighborsClassifier()

每次交叉验证后的验证集准确率结果和训练集准确率结果:

{'mean_fit_time': array([0.2586242 , 0.29684422, 0.27456429, 0.30488372, 0.31562543]), 'std_fit_time': array([0.08488032, 0.07275422, 0.10609217, 0.10391282, 0.0656314 ]), 'mean_score_time': array([0.51792595, 0.61323991, 0.72256181, 0.88158529, 0.97560654]), 'std_score_time': array([0.10981396, 0.11335467, 0.24410747, 0.13826019, 0.14417211]), 'param_n_neighbors': masked_array(data=[1, 3, 5, 7, 9],

mask=[False, False, False, False, False],

fill_value='?',

dtype=object), 'params': [{'n_neighbors': 1}, {'n_neighbors': 3}, {'n_neighbors': 5}, {'n_neighbors': 7}, {'n_neighbors': 9}], 'split0_test_score': array([0.36650626, 0.35534167, 0.3653513 , 0.36496631, 0.35611165]), 'split1_test_score': array([0.36323388, 0.35630414, 0.35765159, 0.36034649, 0.3574591 ]), 'split2_test_score': array([0.3680462 , 0.35437921, 0.36284889, 0.36111646, 0.36419634]), 'split3_test_score': array([0.36169394, 0.35091434, 0.36438884, 0.36458133, 0.36188643]), 'split4_test_score': array([0.35842156, 0.34898941, 0.35380173, 0.35784408, 0.3520693 ]), 'split5_test_score': array([0.35399423, 0.34879692, 0.36111646, 0.35861405, 0.35668912]), 'split6_test_score': array([0.3599615 , 0.35303176, 0.36785371, 0.36458133, 0.35899904]), 'split7_test_score': array([0.3574591 , 0.35688162, 0.37208855, 0.37170356, 0.36053898]), 'split8_test_score': array([0.35444744, 0.34674625, 0.36176357, 0.35425491, 0.34867154]), 'split9_test_score': array([0.3600308 , 0.35252214, 0.36080092, 0.35829804, 0.35656527]), 'mean_test_score': array([0.36037949, 0.35239075, 0.36276656, 0.36163066, 0.35731868]), 'std_test_score': array([0.00441373, 0.00327258, 0.00486026, 0.0046996 , 0.00431428]), 'rank_test_score': array([3, 5, 1, 2, 4])}学习导航:http://xqnav.top/