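I've recently been developing Flink SQL jobs. Everything runs fine inside IDEA, but once the project is packaged into a jar, running it with `java -jar` or submitting it to a Flink cluster fails.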

Below are two solutions; I've tested both and they work.

The error:

```
Exception in thread "main" org.apache.flink.table.api.TableException: Could not instantiate the executor. Make sure a planner module is on the classpath
    at org.apache.flink.table.api.bridge.java.internal.StreamTableEnvironmentImpl.lookupExecutor(StreamTableEnvironmentImpl.java:194)
    at org.apache.flink.table.api.bridge.java.internal.StreamTableEnvironmentImpl.create(StreamTableEnvironmentImpl.java:156)
    at org.apache.flink.table.api.bridge.java.StreamTableEnvironment.create(StreamTableEnvironment.java:128)
    at com.gbl.starter.FlinkSqlTask.getEnv(FlinkSqlTask.java:68)
    at com.gbl.starter.FlinkSqlTask.main(FlinkSqlTask.java:28)
Caused by: org.apache.flink.table.api.NoMatchingTableFactoryException: Could not find a suitable table factory for 'org.apache.flink.table.delegation.ExecutorFactory' in
the classpath.

Reason: No factory implements 'org.apache.flink.table.delegation.ExecutorFactory'.

The following properties are requested:
class-name=org.apache.flink.table.planner.delegation.BlinkExecutorFactory
streaming-mode=true

The following factories have been considered:
org.apache.flink.connector.jdbc.table.JdbcTableSourceSinkFactory
    at org.apache.flink.table.factories.TableFactoryService.filterByFactoryClass(TableFactoryService.java:215)
    at org.apache.flink.table.factories.TableFactoryService.filter(TableFactoryService.java:176)
    at org.apache.flink.table.factories.TableFactoryService.findAllInternal(TableFactoryService.java:164)
    at org.apache.flink.table.factories.TableFactoryService.findAll(TableFactoryService.java:121)
    at org.apache.flink.table.factories.ComponentFactoryService.find(ComponentFactoryService.java:50)
    at org.apache.flink.table.api.bridge.java.internal.StreamTableEnvironmentImpl.lookupExecutor(StreamTableEnvironmentImpl.java:185)
    ... 4 more
```
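The telling line is `The following factories have been considered:`, which lists only `JdbcTableSourceSinkFactory`. My reading (an assumption, not verified against this particular jar): in Flink 1.13 the legacy factories, including `BlinkExecutorFactory`, are discovered through Java's `ServiceLoader` from files named `META-INF/services/org.apache.flink.table.factories.TableFactory`. Both the planner jar and the JDBC connector jar ship a file with that name, so if packaging keeps only one copy, the planner's entries disappear. The hypothetical helper `ServiceFileScanner` below is a minimal sketch for checking this: it prints every copy of a given services file visible on the classpath and the implementations each one lists.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStreamReader;
import java.io.UncheckedIOException;
import java.net.URL;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.Enumeration;
import java.util.List;

public class ServiceFileScanner {

    /** Returns the implementation class named on one services-file line, or null for blank/comment lines. */
    static String parseLine(String line) {
        int hash = line.indexOf('#');
        String entry = (hash >= 0 ? line.substring(0, hash) : line).trim();
        return entry.isEmpty() ? null : entry;
    }

    /** Lists "source -> implementation" for every copy of META-INF/services/<spi> the loader can see. */
    static List<String> scan(ClassLoader loader, String spi) {
        List<String> found = new ArrayList<>();
        try {
            Enumeration<URL> urls = loader.getResources("META-INF/services/" + spi);
            while (urls.hasMoreElements()) {
                URL url = urls.nextElement();
                try (BufferedReader reader = new BufferedReader(
                        new InputStreamReader(url.openStream(), StandardCharsets.UTF_8))) {
                    String line;
                    while ((line = reader.readLine()) != null) {
                        String impl = parseLine(line);
                        if (impl != null) {
                            found.add(url + " -> " + impl);
                        }
                    }
                }
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return found;
    }

    public static void main(String[] args) {
        // Default SPI name is an assumption based on Flink 1.13's legacy factory discovery
        String spi = args.length > 0 ? args[0] : "org.apache.flink.table.factories.TableFactory";
        List<String> entries = scan(ServiceFileScanner.class.getClassLoader(), spi);
        if (entries.isEmpty()) {
            System.out.println("No META-INF/services/" + spi + " on the classpath");
        }
        entries.forEach(System.out::println);
    }
}
```

Usage sketch: `javac ServiceFileScanner.java`, then `java -cp .:your-fat-job.jar ServiceFileScanner` (the jar name is a placeholder; use `;` instead of `:` on Windows). If the jar is affected in the way described above, I would expect only the JDBC factory to show up, and no `BlinkExecutorFactory` line.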

I went through a lot of material online, and the most common advice was to add this dependency:

```xml
<dependency>
    <groupId>org.apache.flink</groupId>
    <artifactId>flink-table-planner-blink_2.11</artifactId>
    <version>1.13.3</version>
</dependency>
```

or to remove its `provided` scope.

I tried both over and over and it just wouldn't work; I nearly lost it…

In the end I found two approaches that each solve the problem. Hope this helps!

Method 1:

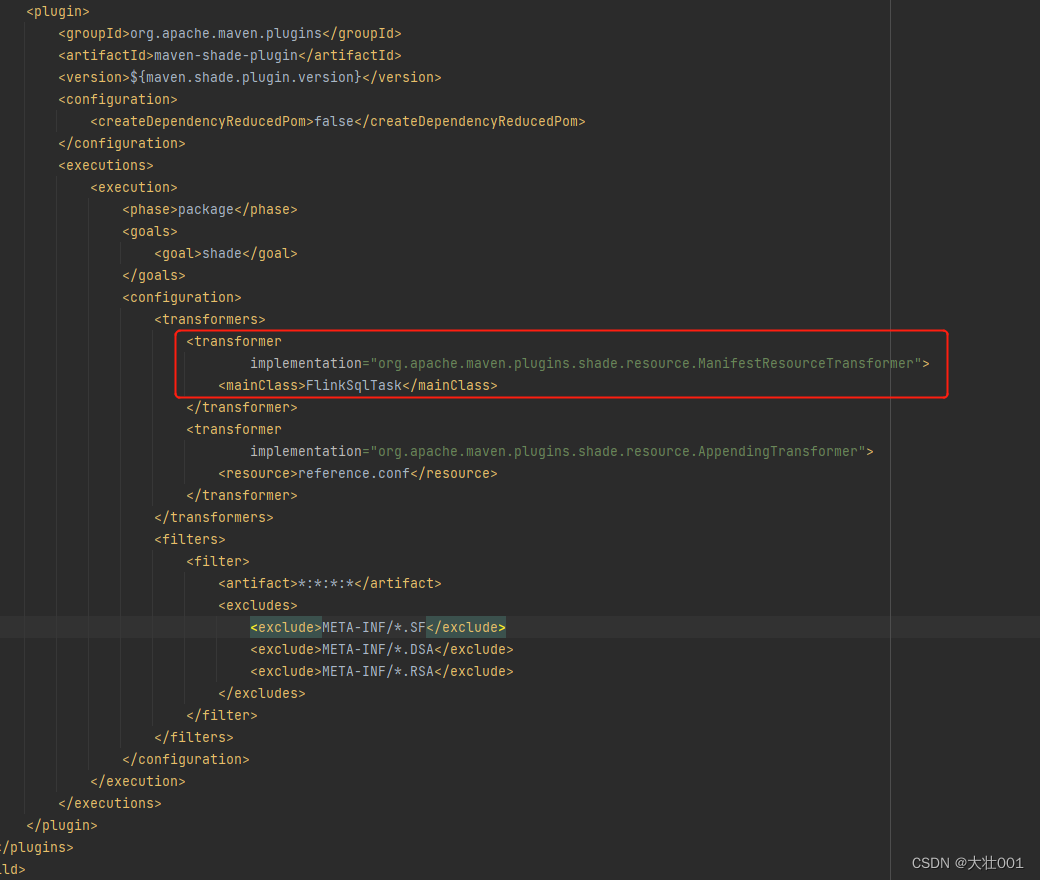

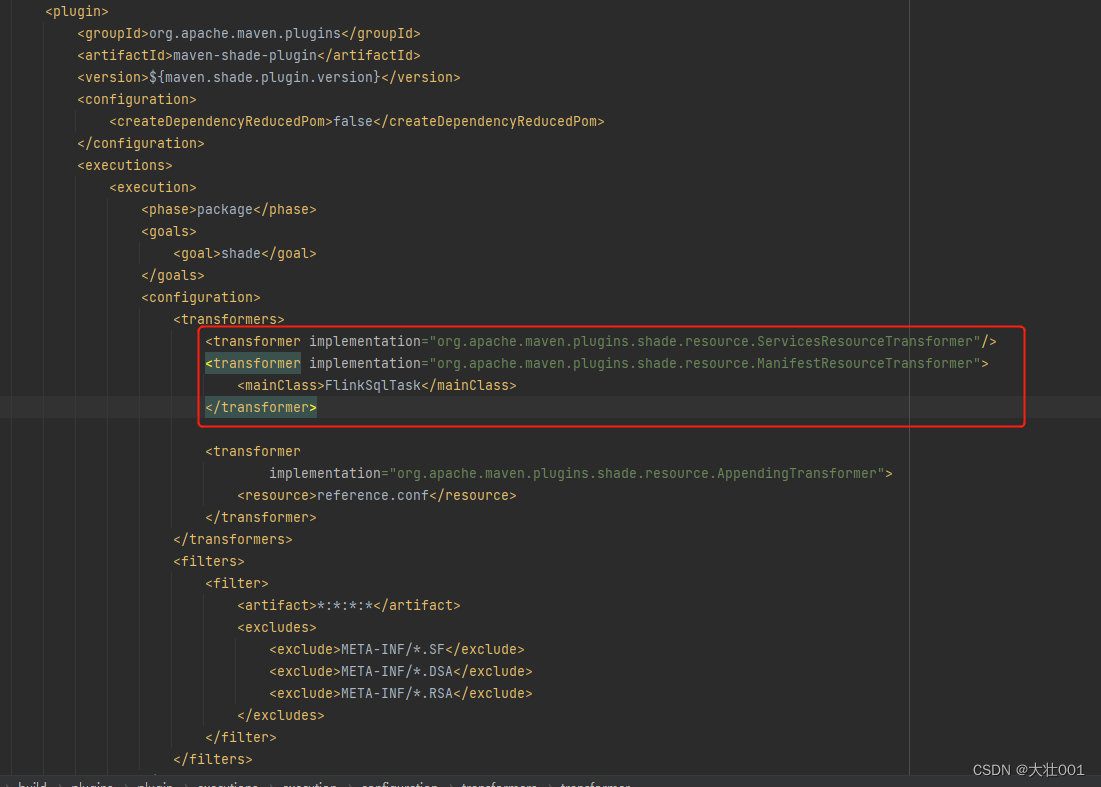

I package with the maven-shade-plugin, and one of its settings needs to be changed:

Added:

Before:

After:
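For background on what a shade setting like this typically addresses: the maven-shade-plugin option usually recommended for this error is `ServicesResourceTransformer` (`org.apache.maven.plugins.shade.resource.ServicesResourceTransformer`), which concatenates same-named `META-INF/services` files instead of keeping just one copy. Whether that matches the change above is my assumption. The hypothetical `ServiceFileMergeDemo` below is a simplified model, not the plugin's code: it contrasts "first copy wins" with "concatenate" for the services file that, as I understand it, both the JDBC connector jar and the planner jar contain.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

public class ServiceFileMergeDemo {

    /** Only the first copy of each resource name survives; later copies are dropped. */
    static Map<String, List<String>> keepFirst(List<Map<String, List<String>>> jars) {
        Map<String, List<String>> merged = new LinkedHashMap<>();
        for (Map<String, List<String>> jar : jars) {
            for (Map.Entry<String, List<String>> e : jar.entrySet()) {
                merged.putIfAbsent(e.getKey(), new ArrayList<>(e.getValue()));
            }
        }
        return merged;
    }

    /** Same-named service files are concatenated, so every jar's entries are kept. */
    static Map<String, List<String>> concatenate(List<Map<String, List<String>>> jars) {
        Map<String, List<String>> merged = new LinkedHashMap<>();
        for (Map<String, List<String>> jar : jars) {
            for (Map.Entry<String, List<String>> e : jar.entrySet()) {
                merged.computeIfAbsent(e.getKey(), k -> new ArrayList<>()).addAll(e.getValue());
            }
        }
        return merged;
    }

    public static void main(String[] args) {
        String file = "META-INF/services/org.apache.flink.table.factories.TableFactory";
        // Simplified stand-ins for the two jars' service files (illustrative, not their full contents)
        Map<String, List<String>> jdbcJar = Collections.singletonMap(file,
                Collections.singletonList("org.apache.flink.connector.jdbc.table.JdbcTableSourceSinkFactory"));
        Map<String, List<String>> plannerJar = Collections.singletonMap(file,
                Collections.singletonList("org.apache.flink.table.planner.delegation.BlinkExecutorFactory"));
        List<Map<String, List<String>>> jars = Arrays.asList(jdbcJar, plannerJar);

        System.out.println("keep first : " + keepFirst(jars).get(file));
        System.out.println("concatenate: " + concatenate(jars).get(file));
    }
}
```

Running it prints `keep first : [org.apache.flink.connector.jdbc.table.JdbcTableSourceSinkFactory]` and then a `concatenate:` line listing both factories. The first result mirrors the single factory named in the stack trace.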

Method 2:

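Package with maven-jar-plugin, and use maven-dependency-plugin to copy the dependencies into a separate `lib/` folder instead of building one fat jar.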

The complete build configuration:

```xml
<build>
    <plugins>
        <plugin>
            <groupId>org.apache.maven.plugins</groupId>
            <artifactId>maven-compiler-plugin</artifactId>
            <version>3.1</version>
            <configuration>
                <!-- assumes a java.version property is defined in <properties> -->
                <source>${java.version}</source>
                <target>${java.version}</target>
                <encoding>UTF-8</encoding>
            </configuration>
        </plugin>
        <plugin>
            <groupId>org.apache.maven.plugins</groupId>
            <artifactId>maven-jar-plugin</artifactId>
            <version>3.0.2</version>
            <configuration>
                <archive>
                    <manifest>
                        <!-- write a Class-Path manifest entry for the dependencies, prefixed with lib/ -->
                        <addClasspath>true</addClasspath>
                        <!-- fully qualified name of the entry class -->
                        <mainClass>com.gbl.starter.FlinkSqlTask</mainClass>
                        <classpathPrefix>lib/</classpathPrefix>
                    </manifest>
                    <addMavenDescriptor>false</addMavenDescriptor>
                </archive>
                <!-- project-specific resources kept out of the jar -->
                <excludes>
                    <exclude>1*.p12</exclude>
                    <exclude>cg-casb.properties</exclude>
                </excludes>
            </configuration>
        </plugin>
        <plugin>
            <groupId>org.apache.maven.plugins</groupId>
            <artifactId>maven-dependency-plugin</artifactId>
            <version>3.0.1</version>
            <executions>
                <execution>
                    <id>copy-dependencies</id>
                    <phase>package</phase>
                    <goals>
                        <goal>copy-dependencies</goal>
                    </goals>
                    <configuration>
                        <!-- compile- and runtime-scope dependencies only; provided-scope jars are not copied -->
                        <includeScope>runtime</includeScope>
                        <outputDirectory>${project.build.directory}/lib/</outputDirectory>
                        <overWriteReleases>false</overWriteReleases>
                        <overWriteSnapshots>false</overWriteSnapshots>
                        <overWriteIfNewer>true</overWriteIfNewer>
                    </configuration>
                </execution>
            </executions>
        </plugin>
    </plugins>
</build>
```

After `mvn clean package` you get a `lib` folder and a jar. Copy both into a new directory and run `java -jar …test.jar`.
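Why this layout works, as I understand it: every dependency stays a separate jar under `lib/`, so each jar keeps its own `META-INF/services` files intact, and `java -jar` loads those jars through the manifest's `Class-Path` entries, resolved relative to the jar's location. That is why `lib/` has to sit next to the jar. Also, `includeScope` set to `runtime` skips `provided`-scope dependencies, so a planner marked `provided` would, I believe, be missing at runtime. The hypothetical `ManifestCheck` below is a minimal sketch that prints `Main-Class` and reports any `Class-Path` entry that is not present next to the jar.

```java
import java.io.File;
import java.io.IOException;
import java.util.ArrayList;
import java.util.List;
import java.util.jar.Attributes;
import java.util.jar.JarFile;
import java.util.jar.Manifest;

public class ManifestCheck {

    /** Returns the Class-Path entries that do not exist relative to baseDir (the jar's directory). */
    static List<String> missingEntries(String classPath, File baseDir) {
        List<String> missing = new ArrayList<>();
        if (classPath == null) {
            return missing;
        }
        for (String entry : classPath.trim().split("\\s+")) {
            // Class-Path entries are space-separated relative URLs such as lib/foo.jar
            if (!entry.isEmpty() && !new File(baseDir, entry).exists()) {
                missing.add(entry);
            }
        }
        return missing;
    }

    public static void main(String[] args) throws IOException {
        if (args.length != 1) {
            System.err.println("usage: java ManifestCheck <path-to-thin-jar>");
            System.exit(2);
        }
        File jarFile = new File(args[0]).getAbsoluteFile();
        try (JarFile jar = new JarFile(jarFile)) {
            Manifest manifest = jar.getManifest();
            if (manifest == null) {
                System.out.println("No META-INF/MANIFEST.MF in " + jarFile);
                return;
            }
            Attributes attrs = manifest.getMainAttributes();
            System.out.println("Main-Class: " + attrs.getValue(Attributes.Name.MAIN_CLASS));
            List<String> missing = missingEntries(attrs.getValue(Attributes.Name.CLASS_PATH), jarFile.getParentFile());
            if (missing.isEmpty()) {
                System.out.println("All Class-Path entries found next to the jar");
            } else {
                missing.forEach(m -> System.out.println("missing: " + m));
            }
        }
    }
}
```

Run it as `java ManifestCheck path/to/your-job.jar` (placeholder path) from the directory you copied the jar and `lib/` into. A `missing:` line for the planner jar would point to a scope problem rather than a packaging one.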