```
To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).
Traceback (most recent call last):
  File "tfifd.py", line 24, in <module>
    .appName("TfIdfExample")\
  File "/opt/module/software/miniconda3/envs/superset/lib/python3.6/site-packages/pyspark/sql/session.py", line 173, in getOrCreate
    sc = SparkContext.getOrCreate(sparkConf)
  File "/opt/module/software/miniconda3/envs/superset/lib/python3.6/site-packages/pyspark/context.py", line 343, in getOrCreate
    SparkContext(conf=conf or SparkConf())
  File "/opt/module/software/miniconda3/envs/superset/lib/python3.6/site-packages/pyspark/context.py", line 118, in __init__
    conf, jsc, profiler_cls)
  File "/opt/module/software/miniconda3/envs/superset/lib/python3.6/site-packages/pyspark/context.py", line 188, in _do_init
    self._javaAccumulator = self._jvm.PythonAccumulatorV2(host, port)
  File "/opt/module/software/miniconda3/envs/superset/lib/python3.6/site-packages/py4j/java_gateway.py", line 1525, in __call__
    answer, self._gateway_client, None, self._fqn)
  File "/opt/module/software/miniconda3/envs/superset/lib/python3.6/site-packages/py4j/protocol.py", line 332, in get_return_value
    format(target_id, ".", name, value))
py4j.protocol.Py4JError: An error occurred while calling None.org.apache.spark.api.python.PythonAccumulatorV2. Trace:
py4j.Py4JException: Constructor org.apache.spark.api.python.PythonAccumulatorV2([class java.lang.String, class java.lang.Integer]) does not exist
	at py4j.reflection.ReflectionEngine.getConstructor(ReflectionEngine.java:179)
	at py4j.reflection.ReflectionEngine.getConstructor(ReflectionEngine.java:196)
	at py4j.Gateway.invoke(Gateway.java:237)
	at py4j.commands.ConstructorCommand.invokeConstructor(ConstructorCommand.java:80)
	at py4j.commands.ConstructorCommand.execute(ConstructorCommand.java:69)
	at py4j.GatewayConnection.run(GatewayConnection.java:238)
	at java.lang.Thread.run(Thread.java:748)
```

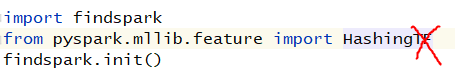
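The error says the JVM has no `PythonAccumulatorV2` constructor taking `(String, Integer)`. This typically means the Python-side `pyspark` package (here loaded from the conda env's `site-packages`) and the Spark installation running the JVM are different versions. A minimal stdlib-only check, assuming Spark is located through the `SPARK_HOME` environment variable; `describe_pyspark_setup` is a hypothetical helper written for illustration:

```python
import importlib.util
import os
from pathlib import Path


def describe_pyspark_setup(env=None):
    """Report where `import pyspark` would resolve and where SPARK_HOME points.

    Uses importlib.util.find_spec, which locates the package without
    importing it, so running this does not load pyspark.
    """
    env = os.environ if env is None else env
    spec = importlib.util.find_spec("pyspark")  # None if pyspark is not importable
    pyspark_dir = Path(spec.origin).resolve().parent if spec and spec.origin else None
    spark_home = env.get("SPARK_HOME")
    same = bool(
        pyspark_dir
        and spark_home
        and Path(spark_home, "python").resolve() in pyspark_dir.parents
    )
    return {
        "pyspark_package": str(pyspark_dir) if pyspark_dir else None,
        "spark_home": spark_home,
        # True only when the importable pyspark is the one shipped in SPARK_HOME/python
        "same_installation": same,
    }


if __name__ == "__main__":
    for key, value in describe_pyspark_setup().items():
        print(key, "=", value)
```

If `same_installation` is `False` and `pyspark_package` points into `site-packages`, the Python side is not using the `pyspark` that ships with the Spark installation.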

Install findspark (`pip install findspark`), then add these two lines to the script:

```python
import findspark
findspark.init()
```

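Remember: don't put anything else between these two lines, and it's best to put them at the very top of the script, before anything imports `pyspark`. Don't ask me why!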
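For readers who do want the why, here is a simplified sketch of the idea behind `findspark.init()`. `prepend_spark_python_paths` is a hypothetical helper, not findspark's actual code, and it assumes the usual Spark distribution layout with `python/` and `python/lib/py4j-*-src.zip` under `SPARK_HOME`:

```python
import glob
import os
import sys


def prepend_spark_python_paths(spark_home, path=None):
    """Simplified, hypothetical stand-in for the idea behind findspark.init().

    Puts SPARK_HOME/python and its bundled py4j source zip at the front of
    the module search path, so they win over a pyspark installed by pip/conda.
    """
    path = sys.path if path is None else path
    spark_python = os.path.join(spark_home, "python")
    py4j_zips = sorted(glob.glob(os.path.join(spark_python, "lib", "py4j-*-src.zip")))
    if not py4j_zips:
        raise FileNotFoundError("no py4j-*-src.zip found under " + spark_python)
    # Normally there is exactly one zip; take the last one after sorting.
    added = [spark_python, py4j_zips[-1]]
    path[:0] = added  # prepend in place, ahead of site-packages
    return added
```

Prepending paths only affects imports that have not happened yet: Python caches loaded modules in `sys.modules`, so if a mismatched `pyspark` was already imported, adding paths afterwards does not replace it. That is a plausible reason the findspark lines have to come first.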