
Quiz: see the reference blog post

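Notes: 02. Improving Deep Neural Networks: Hyperparameter Tuning, Regularization and Optimization, W3. Hyperparameter Tuning, Batch Norm, and Programming Frameworks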

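- Machine learning frameworks such as TensorFlow, Paddle, Torch, Caffe, and Keras can significantly speed up machine learning development

- A neural network programming framework not only shortens coding time, it can sometimes also apply optimizations that make your code run faster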

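This assignment covers the following TensorFlow topics:

- Initializing variables

- Defining a session

- Training algorithms

- Implementing a neural network

1. Exploring the TensorFlow library

- Import some libraries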

import math

import numpy as np

import h5py

import matplotlib.pyplot as plt

import tensorflow as tf

from tensorflow.python.framework import ops

from tf_utils import load_dataset, random_mini_batches, convert_to_one_hot, predict

%matplotlib inline

np.random.seed(1)

import sys

sys.path.append(r"/path/file")

For example, to compute the loss function:

$$loss = \mathcal{L}(\hat{y}, y) = (\hat y^{(i)} - y^{(i)})^2$$

y_hat = tf.constant(36, name='y_hat')            # Define y_hat constant. Set to 36.
y = tf.constant(39, name='y')                    # Define y. Set to 39
loss = tf.Variable((y - y_hat)**2, name='loss')  # Create a variable for the loss

init = tf.global_variables_initializer()         # When init is run later (session.run(init)),
                                                 # the loss variable will be initialized and ready to be computed
with tf.Session() as session:                    # Create a session and print the output
    session.run(init)                            # Initializes the variables
    print(session.run(loss))                     # Prints the loss

Output: $(36-39)^2 = 9$

9

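TensorFlow programming steps:

- Create Tensors (variables) that are not yet executed

- Write the operations on them (training and so on)

- Initialize the Tensors

- Create a Session

- Run the Session (this executes the operations defined above)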

a = tf.constant(2)

b = tf.constant(10)

c = tf.multiply(a,b)

print(c)

Output:

Tensor("Mul:0", shape=(), dtype=int32)

- We do not see 20; the output is a Tensor with an empty shape (a scalar) and type int32

- We have only placed the variables in the computation graph; nothing has been run yet

sess = tf.Session()

print(sess.run(c))

Output: 20

placeholder: placeholder(dtype, shape=None, name=None)

- A placeholder is an object whose value can only be supplied later

- When we run a Session, we pass values to the placeholder through a dictionary (feed_dict)

# Change the value of x in the feed_dict
x = tf.placeholder(tf.int64, name = 'xa')     # define x; its value is supplied later
print(sess.run(2 * x, feed_dict = {x: 3}))    # run the graph, feeding data to the placeholder
sess.close()

Output: 6

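1.1 Linear function

Compute $WX + b$, where $W$ is a matrix and $b$ is a vector

- X = tf.constant(np.random.randn(3,1), name = "X")

- tf.matmul(..., ...): matrix multiplication

- tf.add(..., ...): addition

- np.random.randn(...): random initialization (standard normal distribution)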

# GRADED FUNCTION: linear_function

def linear_function():
    """
    Implements a linear function:
            Initializes W to be a random tensor of shape (4,3)
            Initializes X to be a random tensor of shape (3,1)
            Initializes b to be a random tensor of shape (4,1)
    Returns:
    result -- runs the session for Y = WX + b
    """

    np.random.seed(1)

    ### START CODE HERE ### (4 lines of code)
    X = tf.constant(np.random.randn(3,1), name='X')
    W = tf.constant(np.random.randn(4,3), name='W')
    b = tf.constant(np.random.randn(4,1), name='b')
    Y = tf.matmul(W,X)+b
    # Y = tf.add(tf.matmul(W,X), b)  # also works
    # Y = W*X+b  # WRONG: * is element-wise multiplication, not a matrix product
    ### END CODE HERE ###

    # Create the session using tf.Session() and run it with sess.run(...) on the variable you want to calculate
    ### START CODE HERE ###
    sess = tf.Session()
    result = sess.run(Y)
    ### END CODE HERE ###

    # close the session
    sess.close()

    return result
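The shape arithmetic behind Y = WX + b can be checked without TensorFlow. The following is a minimal NumPy sketch, not the notebook's graded code: it uses np.dot in place of tf.matmul and shows that the element-wise `*` cannot even broadcast a (4,3) array against a (3,1) array. The random values will not necessarily match what the TensorFlow version prints.

```python
import numpy as np

np.random.seed(1)
X = np.random.randn(3, 1)
W = np.random.randn(4, 3)
b = np.random.randn(4, 1)

# Matrix product: (4,3) x (3,1) -> (4,1), then add the (4,1) bias
Y = np.dot(W, X) + b
print(Y.shape)

# Element-wise multiplication needs broadcastable shapes; (4,3) and (3,1) are not
try:
    W * X + b
    elementwise_ok = True
except ValueError:
    elementwise_ok = False
print("W * X works:", elementwise_ok)
```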

1.2 Computing the sigmoid

- Define a placeholder variable x

- Define the operation tf.sigmoid() to compute the sigmoid value

- Run it in a session

Method 1:

sess = tf.Session()
# Run the variables initialization (if needed), run the operations
result = sess.run(..., feed_dict = {...})
sess.close() # Close the session

Method 2: with a with block you do not need to call close yourself, which is more convenient

with tf.Session() as sess:
    # run the variables initialization (if needed), run the operations
    result = sess.run(..., feed_dict = {...})
    # This takes care of closing the session for you :)

# GRADED FUNCTION: sigmoid

def sigmoid(z):
    """
    Computes the sigmoid of z

    Arguments:
    z -- input value, scalar or vector

    Returns:
    results -- the sigmoid of z
    """

    ### START CODE HERE ### ( approx. 4 lines of code)
    # Create a placeholder for x. Name it 'x'.
    x = tf.placeholder(tf.float32, name='x')

    # compute sigmoid(x)
    sigmoid = tf.sigmoid(x)

    # Create a session, and run it.
    # Please use the method 2 explained above.
    # You should use a feed_dict to pass z's value to x.
    with tf.Session() as sess:
        # Run session and call the output "result"
        result = sess.run(sigmoid, feed_dict={x: z})
    ### END CODE HERE ###

    return result
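For comparison, the value that tf.sigmoid produces can be reproduced directly from the definition $\sigma(z) = 1 / (1 + e^{-z})$. The sketch below is a NumPy stand-in with a hypothetical name (sigmoid_np); it is meant only as a way to sanity-check the session output.

```python
import numpy as np

def sigmoid_np(z):
    """Sigmoid of a scalar or array, computed from the definition."""
    z = np.asarray(z, dtype=np.float64)
    return 1.0 / (1.0 + np.exp(-z))

print("sigmoid(0)  =", sigmoid_np(0))
print("sigmoid(12) =", sigmoid_np(12))
```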

1.3 Computing the cost

Compute the cost function:

$$J = - \frac{1}{m} \sum_{i = 1}^m \bigg (y^{(i)} \log a^{[2](i)} + (1-y^{(i)})\log (1-a^{[2](i)})\bigg )$$

- TF has a built-in tf.nn.sigmoid_cross_entropy_with_logits(logits = ..., labels = ...), which evaluates the terms of the expression below (it returns one loss value per element; the averaging over $m$ is not applied by the function itself):

$$- \frac{1}{m} \sum_{i = 1}^m \bigg ( y^{(i)} \log \sigma(z^{[2](i)}) + (1-y^{(i)})\log (1-\sigma(z^{[2](i)}))\bigg )$$

The code takes z as input, computes the sigmoid (giving a), and then computes the cross-entropy loss; TF does all of this in one line.

# GRADED FUNCTION: cost

def cost(logits, labels):
    """
    Computes the cost using the sigmoid cross entropy

    Arguments:
    logits -- vector containing z, output of the last linear unit
              (before the final sigmoid activation)
    labels -- vector of labels y (1 or 0)

    Note: What we've been calling "z" and "y" in this class are
    respectively called "logits" and "labels"
    in the TensorFlow documentation.
    So logits will feed into z, and labels into y.

    Returns:
    cost -- runs the session of the cost (formula (2))
    """

    ### START CODE HERE ###
    # Create the placeholders for "logits" (z) and "labels" (y) (approx. 2 lines)
    z = tf.placeholder(tf.float32, name='z')
    y = tf.placeholder(tf.float32, name='y')

    # Use the loss function (approx. 1 line)
    cost = tf.nn.sigmoid_cross_entropy_with_logits(logits=z, labels=y)

    # Create a session (approx. 1 line). See method 1 above.
    sess = tf.Session()

    # Run the session (approx. 1 line).
    cost = sess.run(cost, feed_dict={z: logits, y: labels})

    # Close the session (approx. 1 line). See method 1 above.
    sess.close()
    ### END CODE HERE ###

    return cost

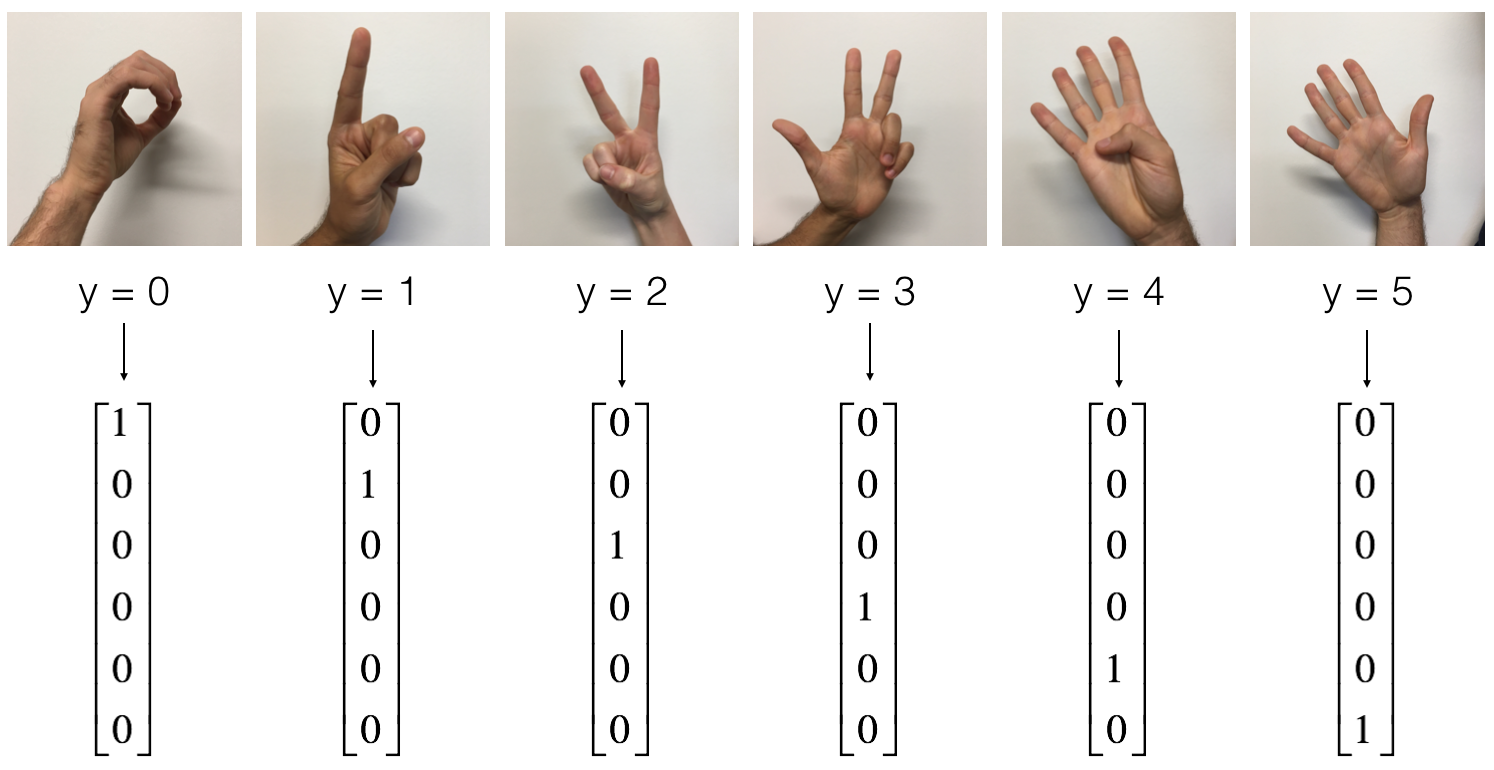
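To see what the one-line TF call is doing, the per-element sigmoid cross-entropy can be written out in NumPy. This is a sketch under stated assumptions: the function names and the sample logits are made up for illustration, and the stable form max(x, 0) - x*z + log(1 + exp(-|x|)) is the rearrangement commonly used to avoid overflow, checked here against the textbook formula.

```python
import numpy as np

def sigmoid_cross_entropy_np(logits, labels):
    """Element-wise sigmoid cross-entropy in a numerically stable form."""
    x = np.asarray(logits, dtype=np.float64)
    z = np.asarray(labels, dtype=np.float64)
    # Algebraically equal to -(z*log(sigmoid(x)) + (1-z)*log(1-sigmoid(x))),
    # but exp() is only ever applied to non-positive numbers
    return np.maximum(x, 0) - x * z + np.log1p(np.exp(-np.abs(x)))

def naive_cross_entropy_np(logits, labels):
    """Textbook formula, used here only as a cross-check."""
    a = 1.0 / (1.0 + np.exp(-np.asarray(logits, dtype=np.float64)))
    y = np.asarray(labels, dtype=np.float64)
    return -(y * np.log(a) + (1 - y) * np.log(1 - a))

logits = np.array([-2.0, 0.5, 1.0, 3.0])  # illustrative z values
labels = np.array([0.0, 0.0, 1.0, 1.0])

losses = sigmoid_cross_entropy_np(logits, labels)  # one value per example
J = losses.mean()                                  # averaging gives the cost J
print(losses, J)
```

Averaging the per-example losses, as in the last step, is what turns the element-wise output into the cost $J$ from the formula.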

1.4 One-hot encoding

For example, given a label vector y with 4 possible label values, encode it with:

tf.one_hot(labels, depth, axis)

# GRADED FUNCTION: one_hot_matrix

def one_hot_matrix(labels, C):
    """
    Creates a matrix where the i-th row corresponds to the ith class number and the jth column
    corresponds to the jth training example. So if example j had a label i. Then entry (i,j)
    will be 1.

    Arguments:
    labels -- vector containing the labels
    C -- number of classes, the depth of the one hot dimension

    Returns:
    one_hot -- one hot matrix
    """

    ### START CODE HERE ###
    # Create a tf.constant equal to C (depth), name it 'C'. (approx. 1 line)
    C = tf.constant(C, name='C')

    # Use tf.one_hot, be careful with the axis (approx. 1 line)
    # If axis is omitted it defaults to -1, giving shape (number of labels, C);
    # axis=0 puts the classes along the rows, giving shape (C, number of labels)
    one_hot_matrix = tf.one_hot(labels, C, axis=0)

    # Create the session (approx. 1 line)
    sess = tf.Session()

    # Run the session (approx. 1 line)
    one_hot = sess.run(one_hot_matrix)

    # Close the session (approx. 1 line). See method 1 above.
    sess.close()
    ### END CODE HERE ###

    return one_hot
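The layout produced with axis=0 (classes along rows, examples along columns) can be reproduced in NumPy by indexing an identity matrix and transposing. The helper name and the sample labels below are illustrative, not taken from the notebook.

```python
import numpy as np

def one_hot_matrix_np(labels, C):
    """Return a (C, number of labels) matrix with a single 1 per column."""
    labels = np.asarray(labels, dtype=int)
    # np.eye(C)[labels] has one row per label; transpose so classes run along rows
    return np.eye(C)[labels].T

labels = np.array([1, 2, 3, 0, 2, 1])   # illustrative labels with 4 classes
one_hot = one_hot_matrix_np(labels, 4)
print(one_hot)
```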

1.5 Initializing with zeros and ones

tf.ones(shape), tf.zeros(shape)

# GRADED FUNCTION: ones

def ones(shape):
    """
    Creates an array of ones of dimension shape

    Arguments:
    shape -- shape of the array you want to create

    Returns:
    ones -- array containing only ones
    """

    ### START CODE HERE ###
    # Create "ones" tensor using tf.ones(...). (approx. 1 line)
    ones = tf.ones(shape)

    # Create the session (approx. 1 line)
    sess = tf.Session()

    # Run the session to compute 'ones' (approx. 1 line)
    ones = sess.run(ones)

    # Close the session (approx. 1 line). See method 1 above.
    sess.close()
    ### END CODE HERE ###

    return ones

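2. Building your first neural network with TensorFlow

Steps to implement a TF model:

- Create the computation graph

- Run the graph

2.0 Recognizing hand-sign digits

- Training set: 1080 images (64*64 pixels) of hand signs for 0 to 5 (180 images per digit)

- Test set: 120 images (64*64 pixels) of hand signs for 0 to 5 (20 images per digit)

Load the dataset and take a look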

# Loading the dataset

X_train_orig, Y_train_orig, X_test_orig, Y_test_orig, classes = load_dataset()

# Example of a picture

index = 1

plt.imshow(X_train_orig[index])

print ("y = " + str(np.squeeze(Y_train_orig[:, index])))

- Normalize the pixel values (divide by 255) and one-hot encode the labels

# Flatten the training and test images

X_train_flatten = X_train_orig.reshape(X_train_orig.shape[0], -1).T

X_test_flatten = X_test_orig.reshape(X_test_orig.shape[0], -1).T

# Normalize image vectors

X_train = X_train_flatten/255.

X_test = X_test_flatten/255.

# Convert training and test labels to one hot matrices

Y_train = convert_to_one_hot(Y_train_orig, 6)

Y_test = convert_to_one_hot(Y_test_orig, 6)

print ("number of training examples = " + str(X_train.shape[1]))

print ("number of test examples = " + str(X_test.shape[1]))

print ("X_train shape: " + str(X_train.shape))

print ("Y_train shape: " + str(Y_train.shape))

print ("X_test shape: " + str(X_test.shape))

print ("Y_test shape: " + str(Y_test.shape))

Output (check that the dimensions are correct):

number of training examples = 1080

number of test examples = 120

X_train shape: (12288, 1080) # 64*64*3 = 12288

Y_train shape: (6, 1080)

X_test shape: (12288, 120)

Y_test shape: (6, 120)
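The flatten-and-normalize step is easy to get wrong, so it may help to try it on a small dummy array first. This sketch assumes the images arrive as an array of shape (m, 64, 64, 3) with values in 0..255, which is consistent with the shapes printed above; the random data is a stand-in for the real dataset.

```python
import numpy as np

rng = np.random.default_rng(0)
m = 5  # a handful of fake images instead of the real 1080
images = rng.integers(0, 256, size=(m, 64, 64, 3), dtype=np.uint8)

# Keep the example axis, collapse the rest, then transpose so that
# each column holds one flattened image
flat = images.reshape(images.shape[0], -1).T
X = flat / 255.

print(flat.shape)        # 64*64*3 rows, m columns
print(X.min(), X.max())
```

Reshaping to (m, -1) before transposing keeps every image's pixels together in one column; reshaping straight to (12288, m) would give the right shape but scramble the pixels across examples.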

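The model built below has the structure: LINEAR -> RELU -> LINEAR -> RELU -> LINEAR -> SOFTMAX

(with more than 2 output classes, the sigmoid output is replaced by a softmax output)

2.1 Creating placeholders

Create placeholders for X and Y; the training data is fed to them later, when the graph is run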

# GRADED FUNCTION: create_placeholders

def create_placeholders(n_x, n_y):
    """
    Creates the placeholders for the tensorflow session.

    Arguments:
    n_x -- scalar, size of an image vector (num_px * num_px * 3 = 64 * 64 * 3 = 12288)
    n_y -- scalar, number of classes (from 0 to 5, so -> 6)

    Returns:
    X -- placeholder for the data input, of shape [n_x, None] and dtype "float"
    Y -- placeholder for the input labels, of shape [n_y, None] and dtype "float"

    Tips:
    - You will use None because it lets us be flexible on the number of examples used for the placeholders.
      In fact, the number of examples during test/train is different.
    """

    ### START CODE HERE ### (approx. 2 lines)
    X = tf.placeholder(tf.float32, shape=(n_x, None), name='X')
    Y = tf.placeholder(tf.float32, shape=(n_y, None), name='Y')
    ### END CODE HERE ###

    return X, Y

2.2 Initializing the parameters

Initialize the weights with Xavier initialization and the biases with zeros

Reference: Xavier initialization in deep learning

W1 = tf.get_variable("W1", [25,12288], initializer = tf.contrib.layers.xavier_initializer(seed = 1))

b1 = tf.get_variable("b1", [25,1], initializer = tf.zeros_initializer())

# GRADED FUNCTION: initialize_parameters

def initialize_parameters():
    """
    Initializes parameters to build a neural network with tensorflow. The shapes are:
                        W1 : [25, 12288]
                        b1 : [25, 1]
                        W2 : [12, 25]
                        b2 : [12, 1]
                        W3 : [6, 12]
                        b3 : [6, 1]

    Returns:
    parameters -- a dictionary of tensors containing W1, b1, W2, b2, W3, b3
    """

    tf.set_random_seed(1)  # so that your "random" numbers match ours

    ### START CODE HERE ### (approx. 6 lines of code)
    W1 = tf.get_variable('W1', [25,12288], initializer=tf.contrib.layers.xavier_initializer(seed=1))
    b1 = tf.get_variable('b1', [25,1], initializer=tf.zeros_initializer())
    W2 = tf.get_variable('W2', [12,25], initializer=tf.contrib.layers.xavier_initializer(seed=1))
    b2 = tf.get_variable('b2', [12,1], initializer=tf.zeros_initializer())
    W3 = tf.get_variable('W3', [6,12], initializer=tf.contrib.layers.xavier_initializer(seed=1))
    b3 = tf.get_variable('b3', [6,1], initializer=tf.zeros_initializer())
    ### END CODE HERE ###

    parameters = {"W1": W1,
                  "b1": b1,
                  "W2": W2,
                  "b2": b2,
                  "W3": W3,
                  "b3": b3}

    return parameters
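As a rough picture of what Xavier initialization does, the sketch below draws weights uniformly from $[-l, l]$ with $l = \sqrt{6 / (fan_{in} + fan_{out})}$. That it matches the default behaviour of tf.contrib.layers.xavier_initializer is an assumption on my part, the helper name xavier_uniform is hypothetical, and the drawn numbers will not equal the ones TensorFlow generates with seed = 1.

```python
import numpy as np

def xavier_uniform(shape, rng):
    """Uniform Xavier/Glorot initialization for a (fan_out, fan_in) weight matrix."""
    fan_out, fan_in = shape
    limit = np.sqrt(6.0 / (fan_in + fan_out))
    return rng.uniform(-limit, limit, size=shape)

rng = np.random.default_rng(1)
W1 = xavier_uniform((25, 12288), rng)   # same shape as W1 in the notebook
b1 = np.zeros((25, 1))                  # biases start at zero

print(W1.shape, float(np.abs(W1).max()))
```

The bound shrinks as the layer gets wider, which keeps the variance of the activations roughly constant from layer to layer.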

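2.3 Forward propagation

- tf.add(...,...): addition

- tf.matmul(...,...): matrix multiplication

- tf.nn.relu(...): ReLU activation function

Note that forward propagation stops at z3

The reason is that in TensorFlow the output of the last linear layer is passed directly to the function that computes the loss

So a3 is not needed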

# GRADED FUNCTION: forward_propagation

def forward_propagation(X, parameters):
    """
    Implements the forward propagation for the model: LINEAR -> RELU -> LINEAR -> RELU -> LINEAR -> SOFTMAX

    Arguments:
    X -- input dataset placeholder, of shape (input size, number of examples)
    parameters -- python dictionary containing your parameters "W1", "b1", "W2", "b2", "W3", "b3"
                  the shapes are given in initialize_parameters

    Returns:
    Z3 -- the output of the last LINEAR unit
    """

    # Retrieve the parameters from the dictionary "parameters"
    W1 = parameters['W1']
    b1 = parameters['b1']
    W2 = parameters['W2']
    b2 = parameters['b2']
    W3 = parameters['W3']
    b3 = parameters['b3']

    ### START CODE HERE ### (approx. 5 lines)   # Numpy Equivalents:
    Z1 = tf.matmul(W1, X) + b1                  # Z1 = np.dot(W1, X) + b1
    A1 = tf.nn.relu(Z1)                         # A1 = relu(Z1)
    Z2 = tf.matmul(W2, A1) + b2                 # Z2 = np.dot(W2, A1) + b2
    A2 = tf.nn.relu(Z2)                         # A2 = relu(Z2)
    Z3 = tf.matmul(W3, A2) + b3                 # Z3 = np.dot(W3, A2) + b3
    ### END CODE HERE ###

    return Z3

# Test
tf.reset_default_graph()

with tf.Session() as sess:
    X, Y = create_placeholders(12288, 6)
    parameters = initialize_parameters()
    Z3 = forward_propagation(X, parameters)
    print("Z3 = " + str(Z3))

# Z3 = Tensor("add_2:0", shape=(6, ?), dtype=float32)
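The "?" in the printed shape is the number of examples, which is only known once data is fed in. A NumPy version of the same three-layer forward pass makes the shapes concrete. This is an illustrative sketch: the small random weights and the batch of 4 fake examples are assumptions made for the demonstration, not the notebook's values.

```python
import numpy as np

def relu(z):
    return np.maximum(z, 0)

def forward_propagation_np(X, parameters):
    """LINEAR -> RELU -> LINEAR -> RELU -> LINEAR, stopping at Z3."""
    Z1 = parameters["W1"] @ X + parameters["b1"]
    A1 = relu(Z1)
    Z2 = parameters["W2"] @ A1 + parameters["b2"]
    A2 = relu(Z2)
    Z3 = parameters["W3"] @ A2 + parameters["b3"]
    return Z3

rng = np.random.default_rng(0)
shapes = {"W1": (25, 12288), "b1": (25, 1),
          "W2": (12, 25),    "b2": (12, 1),
          "W3": (6, 12),     "b3": (6, 1)}
parameters = {name: 0.01 * rng.standard_normal(shape) for name, shape in shapes.items()}

X = rng.random((12288, 4))            # 4 fake flattened images
Z3 = forward_propagation_np(X, parameters)
print(Z3.shape)                       # one column of 6 scores per example
```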

2.4 Computing the cost

- tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(logits = ..., labels = ...))

- reduce_mean computes the mean

- The logits and labels passed to tf.nn.softmax_cross_entropy_with_logits must have shape (number of examples, number of classes)

# GRADED FUNCTION: compute_cost

def compute_cost(Z3, Y):
    """
    Computes the cost

    Arguments:
    Z3 -- output of forward propagation (output of the last LINEAR unit), of shape (6, number of examples)
    Y -- "true" labels vector placeholder, same shape as Z3

    Returns:
    cost - Tensor of the cost function
    """

    # to fit the tensorflow requirement for tf.nn.softmax_cross_entropy_with_logits(...,...)
    logits = tf.transpose(Z3)  # the shape is (classes, examples), so transpose first
    labels = tf.transpose(Y)

    ### START CODE HERE ### (1 line of code)
    cost = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(logits=logits, labels=labels))
    ### END CODE HERE ###

    return cost
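The transpose followed by a mean of per-example softmax cross-entropies can be mirrored in NumPy. The function below is a sketch with a hypothetical name (compute_cost_np); it subtracts the row maximum before exponentiating, a standard trick for numerical stability. With all-zero logits the softmax is uniform over 6 classes, so the cost should come out as $\ln 6$.

```python
import numpy as np

def compute_cost_np(Z3, Y):
    """Mean softmax cross-entropy for Z3 and Y of shape (classes, examples)."""
    logits = Z3.T                      # (examples, classes)
    labels = Y.T
    shifted = logits - logits.max(axis=1, keepdims=True)
    log_softmax = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    per_example = -(labels * log_softmax).sum(axis=1)
    return per_example.mean()

Z3 = np.zeros((6, 3))                  # uniform scores for 3 examples
Y = np.eye(6)[:, :3]                   # one-hot labels 0, 1, 2
cost = compute_cost_np(Z3, Y)
print(cost, np.log(6))
```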

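2.5 Backpropagation and parameter updates

The framework does this step for you; you only need to create an optimizer object

Call the optimizer (passing in cost) and run it in a Session

For example:

Create a gradient descent optimizer

optimizer = tf.train.GradientDescentOptimizer(learning_rate = learning_rate).minimize(cost)

Run the optimization

_ , c = sess.run([optimizer, cost], feed_dict={X: minibatch_X, Y: minibatch_Y})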
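Conceptually, each run of a gradient descent optimizer performs one update of the form $w \leftarrow w - \alpha \, \partial J / \partial w$. The toy loop below illustrates that update on a made-up one-parameter cost $J(w) = (w - 3)^2$, with the gradient written by hand; in TensorFlow the gradient is derived from the graph automatically, so this is only an analogy.

```python
learning_rate = 0.1
w = 0.0                          # hypothetical single parameter

for step in range(100):
    grad = 2.0 * (w - 3.0)       # dJ/dw for J(w) = (w - 3)^2
    w = w - learning_rate * grad # one gradient descent update

print(w)                         # approaches the minimizer w = 3
```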

2.6 Building the complete TF model

- Use the functions above

- Use the Adam optimizer

optimizer = tf.train.AdamOptimizer(learning_rate=learning_rate).minimize(cost)

def model(X_train, Y_train, X_test, Y_test, learning_rate = 0.0001,
          num_epochs = 1500, minibatch_size = 32, print_cost = True):
    """
    Implements a three-layer tensorflow neural network: LINEAR->RELU->LINEAR->RELU->LINEAR->SOFTMAX.

    Arguments:
    X_train -- training set, of shape (input size = 12288, number of training examples = 1080)
    Y_train -- training labels, of shape (output size = 6, number of training examples = 1080)
    X_test -- test set, of shape (input size = 12288, number of test examples = 120)
    Y_test -- test labels, of shape (output size = 6, number of test examples = 120)
    learning_rate -- learning rate of the optimization
    num_epochs -- number of epochs of the optimization loop
    minibatch_size -- size of a minibatch
    print_cost -- True to print the cost every 100 epochs

    Returns:
    parameters -- parameters learnt by the model. They can then be used to predict.
    """

    ops.reset_default_graph()     # to be able to rerun the model without overwriting tf variables
    tf.set_random_seed(1)         # to keep consistent results
    seed = 3                      # to keep consistent results
    (n_x, m) = X_train.shape      # (n_x: input size, m : number of examples in the train set)
    n_y = Y_train.shape[0]        # n_y : output size
    costs = []                    # To keep track of the cost

    # Create Placeholders of shape (n_x, n_y)
    ### START CODE HERE ### (1 line)
    X, Y = create_placeholders(n_x, n_y)
    ### END CODE HERE ###

    # Initialize parameters
    ### START CODE HERE ### (1 line)
    parameters = initialize_parameters()
    ### END CODE HERE ###

    # Forward propagation: Build the forward propagation in the tensorflow graph
    ### START CODE HERE ### (1 line)
    Z3 = forward_propagation(X, parameters)
    ### END CODE HERE ###

    # Cost function: Add cost function to tensorflow graph
    ### START CODE HERE ### (1 line)
    cost = compute_cost(Z3, Y)
    ### END CODE HERE ###

    # Backpropagation: Define the tensorflow optimizer. Use an AdamOptimizer.
    ### START CODE HERE ### (1 line)
    optimizer = tf.train.AdamOptimizer(learning_rate=learning_rate).minimize(cost)
    ### END CODE HERE ###

    # Initialize all the variables
    init = tf.global_variables_initializer()

    # Start the session to compute the tensorflow graph
    with tf.Session() as sess:

        # Run the initialization
        sess.run(init)

        # Do the training loop
        for epoch in range(num_epochs):

            epoch_cost = 0.                             # Defines a cost related to an epoch
            num_minibatches = int(m / minibatch_size)   # number of minibatches
            seed = seed + 1
            minibatches = random_mini_batches(X_train, Y_train, minibatch_size, seed)

            for minibatch in minibatches:               # for each random minibatch

                # Select a minibatch
                (minibatch_X, minibatch_Y) = minibatch

                # IMPORTANT: The line that runs the graph on a minibatch.
                # Run the session to execute the "optimizer" and the "cost",
                # the feed_dict should contain a minibatch for (X,Y).
                ### START CODE HERE ### (1 line)
                _ , minibatch_cost = sess.run([optimizer, cost], feed_dict={X: minibatch_X, Y: minibatch_Y})
                ### END CODE HERE ###

                epoch_cost += minibatch_cost / num_minibatches

            # Print the cost every 100 epochs
            if print_cost == True and epoch % 100 == 0:
                print ("Cost after epoch %i: %f" % (epoch, epoch_cost))
            if print_cost == True and epoch % 5 == 0:
                costs.append(epoch_cost)

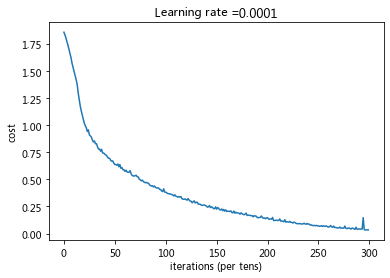

        # plot the cost (note: costs are recorded every 5 epochs)
        plt.plot(np.squeeze(costs))
        plt.ylabel('cost')
        plt.xlabel('iterations (per tens)')
        plt.title("Learning rate =" + str(learning_rate))
        plt.show()

        # lets save the parameters in a variable
        parameters = sess.run(parameters)
        print ("Parameters have been trained!")

        # Calculate the correct predictions
        correct_prediction = tf.equal(tf.argmax(Z3), tf.argmax(Y))

        # Calculate accuracy on the test set
        accuracy = tf.reduce_mean(tf.cast(correct_prediction, "float"))

        print ("Train Accuracy:", accuracy.eval({X: X_train, Y: Y_train}))
        print ("Test Accuracy:", accuracy.eval({X: X_test, Y: Y_test}))

        return parameters

- Run the model to train it

parameters = model(X_train, Y_train, X_test, Y_test)

Cost after epoch 0: 1.855702

Cost after epoch 100: 1.017255

Cost after epoch 200: 0.733184

Cost after epoch 300: 0.573071

Cost after epoch 400: 0.468573

Cost after epoch 500: 0.381228

Cost after epoch 600: 0.313815

Cost after epoch 700: 0.253708

Cost after epoch 800: 0.203900

Cost after epoch 900: 0.166453

Cost after epoch 1000: 0.146636

Cost after epoch 1100: 0.107279

Cost after epoch 1200: 0.086699

Cost after epoch 1300: 0.059341

Cost after epoch 1400: 0.052289

Parameters have been trained!

Train Accuracy: 0.9990741

Test Accuracy: 0.725

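- The model fits the training set very well but performs only moderately on the test set, so it is overfitting; adding L2 regularization or dropout may reduce the overfitting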
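The train and test accuracies reported above come from comparing the arg-max of each column of Z3 with the arg-max of the matching one-hot label column. The sketch below reproduces that comparison in NumPy on a tiny made-up example (3 classes, 3 examples); the function name and the numbers are hypothetical.

```python
import numpy as np

def accuracy_np(Z3, Y):
    """Fraction of columns where the highest score matches the one-hot label."""
    predicted = np.argmax(Z3, axis=0)
    actual = np.argmax(Y, axis=0)
    return np.mean(predicted == actual)

# Columns are examples, rows are classes
Z3 = np.array([[2.0, 0.1, 0.3],
               [0.5, 1.5, 0.2],
               [0.1, 0.2, 0.1]])
Y = np.array([[1, 0, 0],
              [0, 1, 0],
              [0, 0, 1]])

acc = accuracy_np(Z3, Y)
print(acc)   # the third example is misclassified, so 2 of 3 are correct
```

No softmax is needed for this step, because softmax preserves the ordering of the scores within each column.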

2.7 Testing with your own photo

import scipy

from PIL import Image

from scipy import ndimage

## START CODE HERE ## (PUT YOUR IMAGE NAME)

my_image = "thumbs_up.jpg"

## END CODE HERE ##

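# We preprocess your image to fit your algorithm.

# Note: ndimage.imread and scipy.misc.imresize were removed from later SciPy releases, so this cell needs an older SciPy version.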

fname = "images/" + my_image

image = np.array(ndimage.imread(fname, flatten=False))

my_image = scipy.misc.imresize(image, size=(64,64)).reshape((1, 64*64*3)).T

my_image_prediction = predict(my_image, parameters)

plt.imshow(image)

print("Your algorithm predicts: y = " + str(np.squeeze(my_image_prediction)))

Your algorithm predicts: y = 3

The prediction is wrong, because the training set contains no examples of a thumbs-up being used to represent 1

Your algorithm predicts: y = 5

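Summary

- TensorFlow is a deep learning programming framework

- The two main kinds of object in TensorFlow are Tensors and Operators

- Coding steps:

  - Create a graph containing Tensors (Variables, Placeholders…) and Operators (tf.matmul, tf.add, …)

  - Create a Session

  - Initialize the Session

  - Run the Session to execute the graph

- The graph can be executed multiple times

- When you run the Session on the optimizer object, backpropagation and optimization are done automatically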

My CSDN blog: https://michael.blog.csdn.net/
