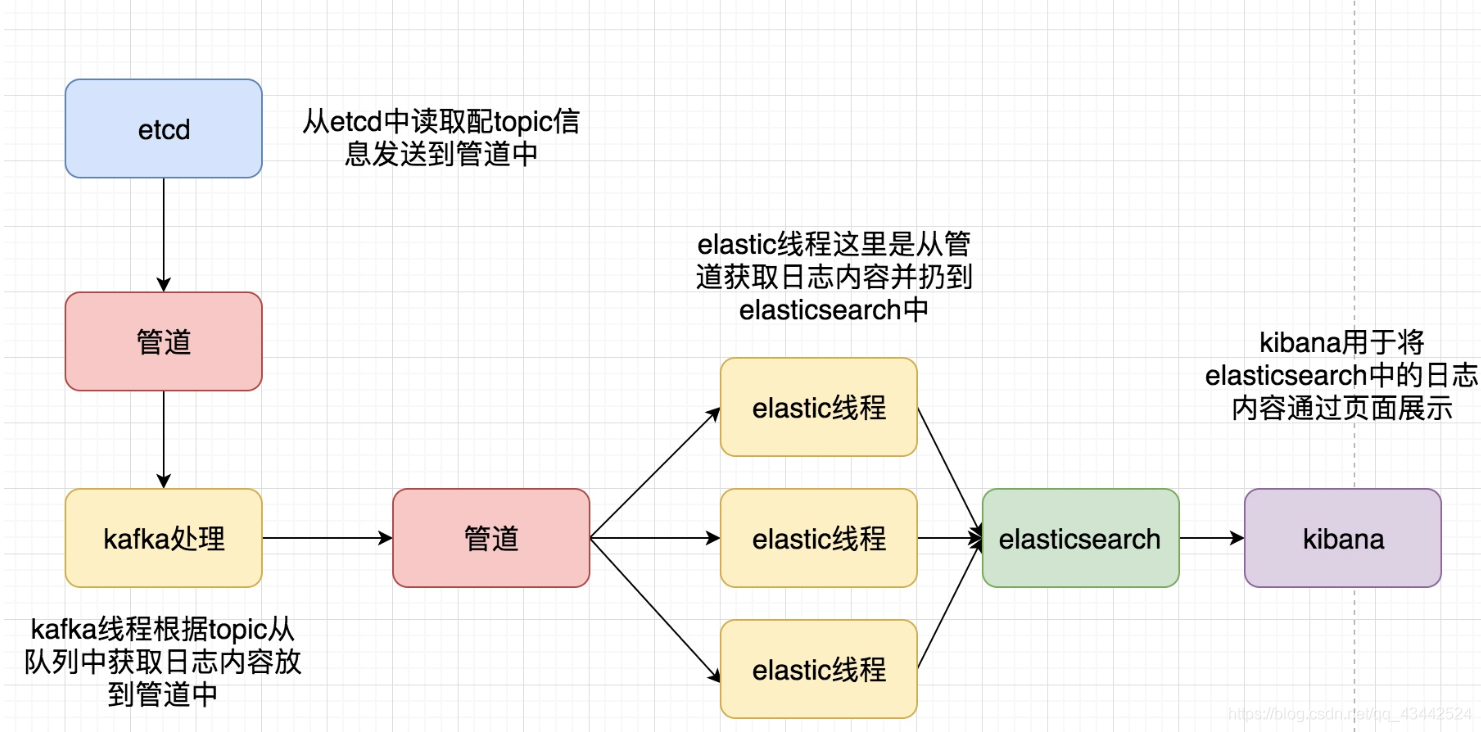

In the previous part we initialized the logTransfer configuration. Next we consume data from Kafka, store the logs in Elasticsearch, and display them with Kibana.

Image source: https://www.cnblogs.com/zhaof/p/8948516.html

Saving logs to ES

Initializing ES

Add a call to InitEs in the main function:

    // Initialize ES
    err = es.InitEs(logConfig.EsAddr)
    if err != nil {
        logs.Error("初始化Elasticsearch失败, err:", err) // "failed to initialize Elasticsearch"
        return
    }
    logs.Debug("初始化Es成功") // "ES initialized successfully"

InitEs itself is implemented in elasticsearch.go:

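    package es

    import (
        "fmt"

        "github.com/olivere/elastic/v7"
    )

    // Tweet is a placeholder document type; it is not used yet and is
    // replaced by LogMessage in es.go later on.
    type Tweet struct {
        User    string
        Message string
    }

    var (
        // esClient is the shared Elasticsearch client, set by InitEs.
        esClient *elastic.Client
    )

    // InitEs connects to the Elasticsearch instance at addr.
    func InitEs(addr string) (err error) {
        client, err := elastic.NewClient(elastic.SetSniff(false), elastic.SetURL(addr))
        if err != nil {
            fmt.Println("connect es error", err)
            // Return the error (the original returned nil here), so main can detect the failure.
            return err
        }
        esClient = client
        return
    }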

Note: the ES address must be written as a full URL, including the scheme:

http://localhost:9200/
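The address requirement above can be checked before the client is created. The following is a minimal sketch, not part of the project: `normalizeEsAddr` is a hypothetical helper that prepends `http://` when the scheme is missing and rejects addresses without a host. Whether defaulting to `http` is appropriate depends on your deployment.

```go
package main

import (
	"fmt"
	"net/url"
	"strings"
)

// normalizeEsAddr is a hypothetical helper (not part of the project).
// It prepends "http://" when addr has no scheme, then checks that the
// result parses as a URL with a non-empty host.
func normalizeEsAddr(addr string) (string, error) {
	if !strings.Contains(addr, "://") {
		addr = "http://" + addr
	}
	u, err := url.Parse(addr)
	if err != nil {
		return "", err
	}
	if u.Host == "" {
		return "", fmt.Errorf("es address %q has no host", addr)
	}
	return u.String(), nil
}

func main() {
	for _, a := range []string{"localhost:9200", "http://localhost:9200/"} {
		got, err := normalizeEsAddr(a)
		fmt.Println(got, err)
	}
}
```

The normalized value could then be passed to InitEs in place of the raw configuration string.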

Run the main function:

2020/03/28 18:01:53.360 [D] 初始化日志模块成功

2020/03/28 18:01:53.374 [D] 初始化Kafka成功

2020/03/28 18:01:53.381 [D] 初始化Es成功

Writing the run and SendToES functions

Add a call to run in the main function; run consumes data from Kafka and sends it to ES:

    // Run the consumer
    err = run()
    if err != nil {
        logs.Error("运行错误, err:", err) // "run failed"
        return
    }

run.go

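    package main

    import (
        "github.com/Shopify/sarama"
        "github.com/astaxie/beego/logs"

        // ASSUMPTION: import path of the es package shown below.
        // Adjust it to your own project layout.
        "logCollect/logTransfer/es"
    )

    // run consumes every partition of the configured topic and forwards each message to ES.
    func run() (err error) {
        partitionList, err := kafkaClient.Client.Partitions(kafkaClient.Topic)
        if err != nil {
            logs.Error("Failed to get the list of partitions: ", err)
            return
        }
        // Range over the partition IDs themselves, not the slice indexes.
        for _, partition := range partitionList {
            pc, errRet := kafkaClient.Client.ConsumePartition(kafkaClient.Topic, partition, sarama.OffsetNewest)
            if errRet != nil {
                err = errRet
                logs.Error("Failed to start consumer for partition %d: %s", partition, err)
                return
            }
            defer pc.AsyncClose()
            // Register with the WaitGroup before starting the goroutine,
            // so Wait cannot return before the goroutine has been counted.
            kafkaClient.wg.Add(1)
            go func(pc sarama.PartitionConsumer) {
                defer kafkaClient.wg.Done()
                for msg := range pc.Messages() {
                    logs.Debug("Partition:%d, Offset:%d, Key:%s, Value:%s", msg.Partition, msg.Offset, string(msg.Key), string(msg.Value))
                    // Keep the error local: writing to run's named result
                    // from several goroutines would be a data race.
                    if sendErr := es.SendToES(kafkaClient.Topic, msg.Value); sendErr != nil {
                        logs.Warn("send to es failed, err:%v", sendErr)
                    }
                }
            }(pc)
        }
        kafkaClient.wg.Wait()
        return
    }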
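The one-goroutine-per-partition pattern used by run can be tried without a Kafka broker. The sketch below is an illustration under stated assumptions: plain channels stand in for the `sarama.PartitionConsumer` message streams, and `consumeAll` and `handle` are hypothetical names. It calls `wg.Add(1)` before each goroutine starts and keeps errors local to the goroutine.

```go
package main

import (
	"fmt"
	"sync"
)

// consumeAll starts one goroutine per input channel (each channel is a
// stand-in for one partition consumer) and passes every message to handle.
// It returns the number of messages handled once all channels are closed.
func consumeAll(partitions []<-chan string, handle func(string) error) int {
	var (
		wg    sync.WaitGroup
		mu    sync.Mutex
		count int
	)
	for _, msgs := range partitions {
		wg.Add(1) // register before the goroutine starts, so Wait cannot return early
		go func(msgs <-chan string) {
			defer wg.Done()
			for m := range msgs {
				// err is local to this goroutine; nothing is shared unguarded.
				if err := handle(m); err != nil {
					fmt.Println("handle failed:", err)
					continue
				}
				mu.Lock()
				count++
				mu.Unlock()
			}
		}(msgs)
	}
	wg.Wait()
	return count
}

func main() {
	a, b := make(chan string, 2), make(chan string, 1)
	a <- "line 1"
	a <- "line 2"
	b <- "line 3"
	close(a)
	close(b)
	n := consumeAll([]<-chan string{a, b}, func(string) error { return nil })
	fmt.Println("handled", n)
}
```

In the real consumer the channels never close on their own, so `Wait` blocks for the lifetime of the process, which is the intended behavior for a long-running transfer service.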

es.go

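    package es

    import (
        "context"
        "fmt"

        "github.com/olivere/elastic/v7"
    )

    // LogMessage is the document stored in ES for each log line.
    type LogMessage struct {
        App     string
        Topic   string
        Message string
    }

    var (
        // esClient is the shared Elasticsearch client, set by InitEs.
        esClient *elastic.Client
    )

    // InitEs connects to the Elasticsearch instance at addr.
    func InitEs(addr string) (err error) {
        client, err := elastic.NewClient(elastic.SetSniff(false), elastic.SetURL(addr))
        if err != nil {
            fmt.Println("connect es error", err)
            // Return the error (the original returned nil here), so main can detect the failure.
            return err
        }
        esClient = client
        return
    }

    // SendToES indexes one log line into the index named after its topic.
    func SendToES(topic string, data []byte) (err error) {
        msg := &LogMessage{}
        msg.Topic = topic
        msg.Message = string(data)
        _, err = esClient.Index().
            Index(topic).
            BodyJson(msg).
            Do(context.Background())
        // Return the error instead of panicking, so the caller can log it
        // and continue with the next message.
        return err
    }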
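To see what a stored document looks like, the sketch below encodes a LogMessage with `encoding/json`. It assumes BodyJson serializes the struct with the standard JSON encoder (believed to be the default in olivere/elastic, but treat it as an assumption). `encodeLog` is a hypothetical helper and the sample log line is made up for illustration.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// LogMessage mirrors the struct in es.go.
type LogMessage struct {
	App     string
	Topic   string
	Message string
}

// encodeLog (hypothetical helper) builds the document for one Kafka
// message and returns its JSON form.
func encodeLog(topic string, data []byte) (string, error) {
	msg := &LogMessage{Topic: topic, Message: string(data)}
	b, err := json.Marshal(msg)
	if err != nil {
		return "", err
	}
	return string(b), nil
}

func main() {
	// The log line here is invented sample data.
	doc, err := encodeLog("nginx_log", []byte("GET /index.html 200"))
	if err != nil {
		fmt.Println("encode failed:", err)
		return
	}
	fmt.Println(doc)
}
```

Because the struct has no JSON tags, the field names are emitted capitalized (`App`, `Topic`, `Message`), and the unused `App` field is stored as an empty string. Adding tags such as `json:"topic"` would be one way to get lowercase field names in Kibana.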

Testing the pipeline

Run logAgent. It reads the log configuration from etcd and produces the log lines to Kafka:

2020/03/28 20:25:17.581 [D] 导入日志成功&{debug E:\\Go\\logCollect\\logAgent\\logs\\my.log 100 0.0.0.0:9092 [] 0.0.0.0:2379 /backend/logagent/config/}

2020/03/28 20:25:17.589 [D] resp from etcd:[]

2020/03/28 20:25:17.590 [D] resp from etcd:[]

2020/03/28 20:25:17.590 [D] resp from etcd:[]

2020/03/28 20:25:17.591 [D] resp from etcd:[]

2020/03/28 20:25:17.613 [D] resp from etcd:[key:"/backend/logagent/config/192.168.0.11" create_revision:6 mod_revision:12 version:7 value:"[{\"logpath\":\"E:/nginx/logs/access.log\",\"topic\":\"nginx_log\"},{\"logpath\":\"E:/nginx/logs/error.log\",\"topic\":\"nginx_log_err\"},{\"logpath\":\"E:/nginx/logs/error2.log\",\"topic\":\"nginx_log_err2\"}]" ]

2020/03/28 20:25:17.613 [D] 日志设置为[{E:/nginx/logs/access.log nginx_log} {E:/nginx/logs/error.log nginx_log_err} {E:/nginx/logs/error2.log nginx_log_err2}]

2020/03/28 20:25:17.613 [D] resp from etcd:[]

2020/03/28 20:25:17.613 [D] 连接etcd成功

2020/03/28 20:25:17.613 [D] 初始化etcd成功!

2020/03/28 20:25:17.613 [D] 初始化tailf成功!

2020/03/28 20:25:17.613 [D] 开始监控key: /backend/logagent/config/192.168.0.1

2020/03/28 20:25:17.613 [D] 开始监控key: /backend/logagent/config/169.254.109.181

2020/03/28 20:25:17.614 [D] 开始监控key: /backend/logagent/config/192.168.106.1

2020/03/28 20:25:17.615 [D] 开始监控key: /backend/logagent/config/169.254.30.148

2020/03/28 20:25:17.616 [D] 开始监控key: /backend/logagent/config/169.254.153.68

2020/03/28 20:25:17.616 [D] 开始监控key: /backend/logagent/config/192.168.0.11

2020/03/28 20:25:17.617 [D] 初始化Kafka producer成功,地址为: 0.0.0.0:9092

2020/03/28 20:25:17.617 [D] 初始化Kafka成功!

2020/03/28 20:26:31.675 [D] get config from etcd ,Type: PUT, Key:/backend/logagent/config/192.168.0.11, Value:[{"logpath":"E:\\Go\\logCollect\\logAgent\\logs\\my.log","topic":"nginx_log"}]

2020/03/28 20:26:31.675 [D] get config from etcd success, [{E:\Go\logCollect\logAgent\logs\my.log nginx_log}]

2020/03/28 20:26:31.675 [W] tail obj 退出, 配置项为conf:{E:/nginx/logs/error2.log nginx_log_err2}

2020/03/28 20:26:31.675 [W] tail obj 退出, 配置项为conf:{E:/nginx/logs/access.log nginx_log}

2020/03/28 20:26:31.675 [W] tail obj 退出, 配置项为conf:{E:/nginx/logs/error.log nginx_log_err}

2020/03/28 20:26:31.947 [D] read success, pid:0, offset:34210, topic:nginx_log

2020/03/28 20:26:31.948 [D] read success, pid:0, offset:34211, topic:nginx_log

2020/03/28 20:26:32.177 [D] read success, pid:0, offset:34212, topic:nginx_log

2020/03/28 20:26:32.178 [D] read success, pid:0, offset:34213, topic:nginx_log

2020/03/28 20:26:32.180 [D] read success, pid:0, offset:34214, topic:nginx_log

2020/03/28 20:26:32.180 [D] read success, pid:0, offset:34215, topic:nginx_log

2020/03/28 20:26:32.427 [D] read success, pid:0, offset:34216, topic:nginx_log

2020/03/28 20:26:32.428 [D] read success, pid:0, offset:34217, topic:nginx_log

2020/03/28 20:26:32.430 [D] read success, pid:0, offset:34218, topic:nginx_log

2020/03/28 20:26:32.432 [D] read success, pid:0, offset:34219, topic:nginx_log

2020/03/28 20:26:32.432 [D] read success, pid:0, offset:34220, topic:nginx_log

2020/03/28 20:26:32.433 [D] read success, pid:0, offset:34221, topic:nginx_log

Start logTransfer. It consumes the log messages from Kafka and sends them to ES:

2020/03/28 20:29:17.109 [D] 初始化日志模块成功

2020/03/28 20:29:17.118 [D] 初始化Kafka成功

2020/03/28 20:29:17.120 [D] 初始化Es成功

2020/03/28 20:29:25.518 [D] Partition:0, Offset:153210, Key:, Value:2020/03/28 20:29:25.265 [D] 开始监控key: /backend/logagent/config/192.168.0.1

2020/03/28 20:29:27.361 [D] Partition:0, Offset:153211, Key:, Value:2020/03/28 20:29:25.265 [D] 开始监控key: /backend/logagent/config/169.254.30.148

2020/03/28 20:29:27.531 [D] Partition:0, Offset:153212, Key:, Value:2020/03/28 20:29:25.265 [D] 开始监控key: /backend/logagent/config/169.254.153.68

2020/03/28 20:29:27.743 [D] Partition:0, Offset:153213, Key:, Value:2020/03/28 20:29:25.266 [D] 开始监控key: /backend/logagent/config/192.168.0.11

2020/03/28 20:29:27.870 [D] Partition:0, Offset:153214, Key:, Value:2020/03/28 20:29:25.267 [D] 初始化Kafka producer成功,地址为: 0.0.0.0:9092

2020/03/28 20:29:28.012 [D] Partition:0, Offset:153215, Key:, Value:2020/03/28 20:29:25.267 [D] 初始化Kafka成功!

2020/03/28 20:29:28.191 [D] Partition:0, Offset:153216, Key:, Value:2020/03/28 20:29:25.518 [D] read success, pid:0, offset:153210, topic:nginx_log

2020/03/28 20:29:28.311 [D] Partition:0, Offset:153217, Key:, Value:

2020/03/28 20:29:28.456 [D] Partition:0, Offset:153218, Key:, Value:2020/03/28 20:29:25.521 [D] read success, pid:0, offset:153211, topic:nginx_log

2020/03/28 20:29:28.600 [D] Partition:0, Offset:153219, Key:, Value:

2020/03/28 20:29:28.744 [D] Partition:0, Offset:153220, Key:, Value:2020/03/28 20:29:25.522 [D] read success, pid:0, offset:153212, topic:nginx_log

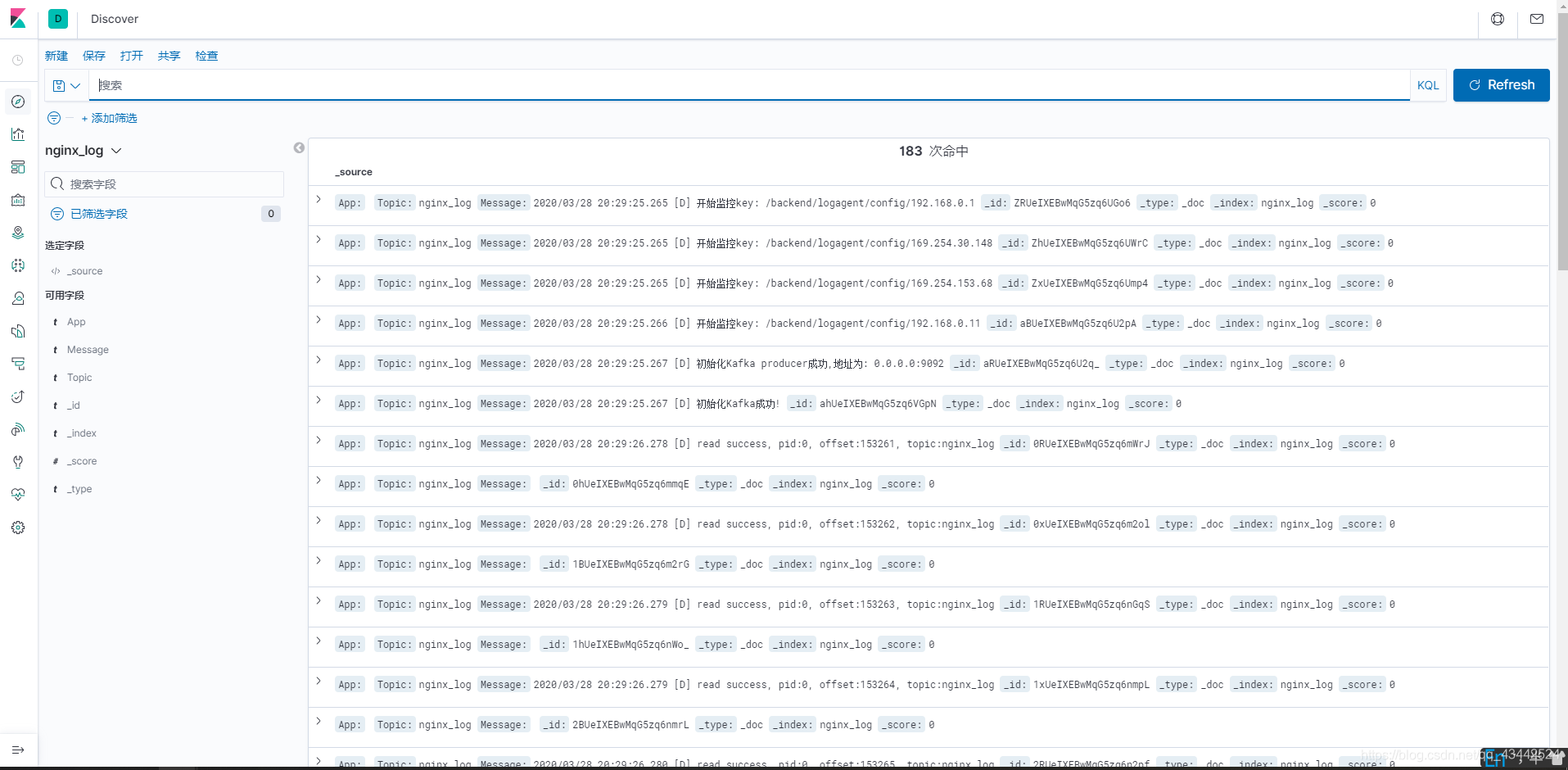

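Open Kibana at http://localhost:5601/

Add the topic that was just sent as an index, then open Discover to view it.

The latest data is already visible, so the logs were collected successfully!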