1. Introduction

There are few options for cross-platform data synchronization between heterogeneous databases; Oracle GoldenGate (OGG) is one of the more reliable ones.

Advantages: good performance, fast synchronization of large data volumes, and negligible impact on the performance of the online database. Disadvantages: installation, configuration, and maintenance are somewhat troublesome, especially later on when table columns change.

In my opinion it suits small-scale deployments that must synchronize large volumes of data with high performance requirements.

This article uses Oracle (10.10.10.1) -> MySQL (10.10.10.2) as an example to explain the deployment process and precautions.

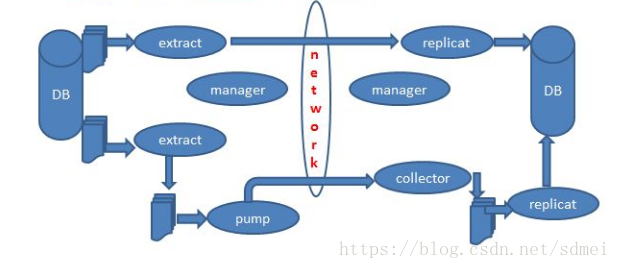

How it works:

OGG extracts the changes to the relevant tables from the source database's redo logs or archived log files, writes them to files in its own format (trail files), and sends those files to the target; the target side reads the files and applies the changes to the target tables.

The source side runs the ext and pump processes and the target side runs the rep process; they handle data extraction, file transfer, and applying the files, respectively.

In this example the processes are ext1, pump1, and rep1, and each process has its own configuration file.

If the source database has already been online for some time and holds existing data, first initialize the target database with an initial-load task, then let ext1, pump1, and rep1 handle incremental synchronization.

In this example initext1 and initrep1 perform the initialization (note: the initial load has no pump process).

In addition, the source and the target each have a manager process responsible for global configuration.

A borrowed diagram:

2. Install

For Oracle:

export ORACLE_HOME=/u01/app/oracle/product/11.2.0/dbhome_1

export LD_LIBRARY_PATH=$LD_LIBRARY_PATH:/u01/app/oracle/ggs:$ORACLE_HOME/lib

./runInstaller

Specify the installation path.

./ggsci

GGSCI> create subdirs

For MySQL:

Extract the archive.

Run ./ggsci directly:

GGSCI> create subdirs
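Before starting ggsci on the Oracle side, it can help to confirm that the two environment variables from the install step are actually in place. The following is only a minimal sketch and not part of the original procedure; the function name check_ogg_env is hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: verify the environment before launching ggsci.
# Prints one line per problem and returns non-zero if a check fails.
check_ogg_env() {
    local problems=0
    if [ -z "${ORACLE_HOME:-}" ]; then
        echo "ORACLE_HOME is not set"
        problems=1
    else
        # $ORACLE_HOME/lib must be one of the colon-separated entries
        case ":${LD_LIBRARY_PATH:-}:" in
            *":${ORACLE_HOME}/lib:"*) ;;
            *) echo "LD_LIBRARY_PATH does not contain ${ORACLE_HOME}/lib"
               problems=1 ;;
        esac
    fi
    return "$problems"
}
```

A typical use would be `check_ogg_env && ./ggsci`, so that ggsci is only started when both checks pass.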

3. Prepare

The Oracle database must run in archive log mode, with supplemental logging and force logging enabled. Check the current settings, then enable whatever is missing:

SQL> SELECT supplemental_log_data_min, force_logging FROM v$database;

SQL> ALTER DATABASE ADD SUPPLEMENTAL LOG DATA;

SQL> ALTER DATABASE FORCE LOGGING;
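The check-then-alter step can be expressed as a small decision helper. This is a sketch under assumptions: the function name pending_logging_changes is hypothetical, and its two arguments are the values returned by the v$database query (supplemental_log_data_min can be YES, NO, or IMPLICIT; force_logging is YES or NO). It only prints the statements that still appear to be needed and does not connect to the database.

```shell
#!/bin/bash
# Hypothetical helper: given the two column values from the v$database
# query, print the ALTER statements that still need to be run.
pending_logging_changes() {
    local supp_min=$1 force=$2
    # NO means minimal supplemental logging is not enabled at all
    if [ "$supp_min" = "NO" ]; then
        echo "ALTER DATABASE ADD SUPPLEMENTAL LOG DATA;"
    fi
    if [ "$force" != "YES" ]; then
        echo "ALTER DATABASE FORCE LOGGING;"
    fi
}
```

For example, `pending_logging_changes NO YES` prints only the supplemental logging statement, and `pending_logging_changes YES YES` prints nothing.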

Enable trandata for the Oracle tables to be synchronized:

./ggsci

GGSCI> dblogin userid system password mypwd

GGSCI> add trandata myschema.mytable

For MySQL, set the following parameters:

binlog_row_image: FULL (default)

log_bin

log_bin_index

max_binlog_size

binlog_format

In the MySQL database mydb, create the checkpoint table with the script chkpt_mysql_create.sql.

The source and target tables must have a primary key or unique key;

the target table must be empty;

foreign keys, constraints, and triggers on the target table must be disabled;

if the initial data volume is large, temporarily drop the target table's indexes and rebuild them after the initial import.

4. Configuration files

The following files are located in the dirprm directory.

- source -

mgr:

PORT 7809

DYNAMICPORTLIST 7810-7820

ACCESSRULE, PROG *, IPADDR 192.168.*.*, ALLOW

--AUTOSTART ER *

--AUTORESTART ER *, RETRIES 3, WAITMINUTES 3

STARTUPVALIDATIONDELAY 5

PURGEOLDEXTRACTS /backup/ggs12/dirdat/*, USECHECKPOINTS, MINKEEPHOURS 2

initext1:

EXTRACT initext1

SETENV (ORACLE_HOME = "/u01/app/oracle/product/11.2.0/dbhome_1")

SETENV (ORACLE_SID = "myora")

USERID system PASSWORD mypassword

RMTHOST 10.10.10.2, MGRPORT 7809

RMTTASK REPLICAT, GROUP initrep1

TABLE schema_name.table_name;

ext1:

EXTRACT ext1

SETENV (ORACLE_HOME = "/u01/app/oracle/product/11.2.0/dbhome_1")

SETENV (ORACLE_SID = "myora")

USERID system PASSWORD mypwd

LOGALLSUPCOLS

EXTTRAIL /backup/ggs12/dirdat/aa

TABLE myschema.mytable;
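When many tables are involved, a parameter file like ext1 can be generated rather than typed by hand. The following is a minimal sketch, not the author's method: the function name render_extract_prm and the variables OGG_USER and OGG_PASSWORD are hypothetical, and the emitted lines simply mirror the ext1 file shown above.

```shell
#!/bin/bash
# Hypothetical generator: print an extract parameter file to stdout.
# Arguments: group name, trail prefix, then one or more schema.table names.
# Reads ORACLE_HOME, ORACLE_SID, OGG_USER and OGG_PASSWORD (assumed variables).
render_extract_prm() {
    local group=$1 trail=$2 table
    shift 2
    echo "EXTRACT ${group}"
    echo "SETENV (ORACLE_HOME = \"${ORACLE_HOME}\")"
    echo "SETENV (ORACLE_SID = \"${ORACLE_SID}\")"
    echo "USERID ${OGG_USER} PASSWORD ${OGG_PASSWORD}"
    echo "LOGALLSUPCOLS"
    echo "EXTTRAIL ${trail}"
    # One TABLE statement per table to be captured
    for table in "$@"; do
        echo "TABLE ${table};"
    done
}
```

For example, `render_extract_prm ext1 /backup/ggs12/dirdat/aa myschema.mytable > dirprm/ext1.prm` would write a file equivalent to the one above.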

pump1:

EXTRACT pump1

USERID system PASSWORD mypassword

RMTHOST 10.10.10.2, MGRPORT 7809

RMTTRAIL /data1/ggs/dirdat/aa

TABLE myschema.mytable;

- target -

mgr:

PORT 7809

DYNAMICPORTLIST 7810-7820

--AUTOSTART ER *

--AUTORESTART ER *, RETRIES 3, WAITMINUTES 3

STARTUPVALIDATIONDELAY 5

PURGEOLDEXTRACTS /data1/ggs/dirdat/*, USECHECKPOINTS, MINKEEPHOURS 2

initrep1:

REPLICAT initrep1

TARGETDB [email protected]:3306, USERID root, PASSWORD mypassword

MAP myschema.mytable, TARGET mydb.mytable, COLMAP(USEDEFAULTS, target_cola = source_cola, target_colb = source_colb);

rep1:

REPLICAT rep1

TARGETDB [email protected]:3306, USERID root, PASSWORD mypassword

MAP myschema.mytable, TARGET mydb.mytable, COLMAP(USEDEFAULTS, target_cola = source_cola, target_colb = source_colb);

5. Create the processes

After creating the configuration files, run the following commands to create the processes (each process reads its configuration file automatically).

- source -

ggsci > add extract initext1, sourceistable

ggsci > add extract ext1, tranlog, begin now

ggsci > add exttrail /backup/ggs12/dirdat/aa, extract ext1

ggsci > add ext pump1, exttrailsource /backup/ggs12/dirdat/aa

ggsci > add rmttrail /data1/ggs/dirdat/aa, ext pump1

- target -

ggsci > add replicat initrep1, specialrun

ggsci > add rep rep1, exttrail /data1/ggs/dirdat/aa, checkpointtable mydb.ggs_checkpoint

6. Start Sync

source:

ggsci > start ext1

ggsci > start pump1

target:

Add HANDLECOLLISIONS to the rep1 configuration file.

source:

ggsci > start initext1

target:

ggsci > view report initrep1

Confirm that initrep1 has finished.

ggsci > start rep1

ggsci > info rep1

Remove the HANDLECOLLISIONS setting from the rep1 configuration file.

ggsci > send replicat rep1, nohandlecollisions

ggsci > start rep rep1

7. Notes

(1) OGG does not recognize composite unique keys, so for a composite key you need to specify KEYCOLS explicitly; otherwise all columns are used as the key.
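As an illustration of note (1), the sketch below builds a MAP statement with an explicit KEYCOLS clause from a list of key columns. The function name build_map_with_keycols and the column names col_a and col_b are hypothetical; the same KEYCOLS clause would normally also be given on the source-side TABLE statement so that both sides agree on the key.

```shell
#!/bin/bash
# Hypothetical helper: print a replicat MAP statement with KEYCOLS.
# Arguments: source table, target table, then the key column names.
build_map_with_keycols() {
    local source=$1 target=$2 cols="" col
    shift 2
    # Join the remaining arguments with ", "
    for col in "$@"; do
        cols="${cols:+${cols}, }${col}"
    done
    echo "MAP ${source}, TARGET ${target}, KEYCOLS (${cols});"
}
```

For example, `build_map_with_keycols myschema.mytable mydb.mytable col_a col_b` prints `MAP myschema.mytable, TARGET mydb.mytable, KEYCOLS (col_a, col_b);`.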

(2) Steps for changing columns on a source or target table:

Stop the ext \ pump \ rep processes

Modify the columns in the source and target databases

Start the ext \ pump \ rep processes
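The stop and start steps can be scripted so they always cover the same processes. This is a minimal sketch using the process names from this example (ext1, pump1, rep1); the function name ggsci_commands is hypothetical. It only prints GGSCI commands, which can then be pasted into ggsci or piped into it on the appropriate host.

```shell
#!/bin/bash
# Hypothetical helper: print the GGSCI commands for one action
# ("stop" or "start") on one side ("source" or "target").
ggsci_commands() {
    local action=$1 side=$2
    case "$side" in
        source) echo "${action} extract ext1"
                echo "${action} extract pump1" ;;
        target) echo "${action} replicat rep1" ;;
        *)      echo "unknown side: ${side}" >&2
                return 1 ;;
    esac
}
```

For example, on the source host `ggsci_commands stop source | ./ggsci` would stop ext1 and pump1 before the column change, and the same call with `start` would bring them back afterwards.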

To prevent accidental changes by DBAs or operations staff, create a DDL trigger in Oracle that rejects changes to the synchronized tables and reminds the user that they are GGS tables:

create or replace trigger tri_ddl_ggstab_permission

before drop or truncate or alter on database

begin

if ORA_DICT_OBJ_NAME in ('TABNAME1','TABNAME2') then

raise_application_error(-20001,'GGS table, Contact DBA.');

end if;

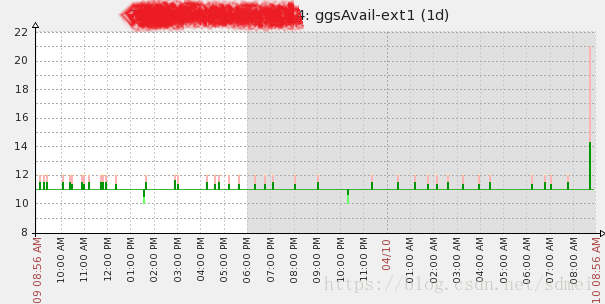

end;(3) Everything is inseparable from surveillance, ggs monitoring heartbeat table by creating real-time synchronization monitor the situation

Description:

Create a heartbeat table on both the source and the target;

on the source, a scheduled job updates the heartbeat table automatically;

on the target, periodically check the difference between the heartbeat table's timestamps and the current time;

on the target table, now() - update_time shows whether GGS is still synchronizing;

on the target table, auto_time - update_time shows the GGS replication delay.
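The two metrics described above are plain differences between timestamps. The sketch below computes them from epoch seconds; the function name heartbeat_metrics is hypothetical, and it assumes the three timestamps have already been converted to epoch seconds (for example with UNIX_TIMESTAMP() on the target).

```shell
#!/bin/bash
# Hypothetical helper: compute both heartbeat metrics.
# Arguments (epoch seconds): current time, update_time, auto_time.
# Prints "<age> <delay>":
#   age   = now - update_time        (is GGS still synchronizing?)
#   delay = auto_time - update_time  (how long did replication take?)
heartbeat_metrics() {
    local now=$1 update_time=$2 auto_time=$3
    local age=$(( now - update_time ))
    local delay=$(( auto_time - update_time ))
    echo "${age} ${delay}"
}
```

With the source job updating the heartbeat every 10 seconds, an age that keeps growing well beyond 10 suggests that synchronization has stopped, while the delay shows how far the target lags behind when it is running.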

source:

create table ggs_monitor(ggs_process varchar2(100), update_time date) tablespace lbdata;

alter table ggs_monitor add constraint pk_ggsmonitor primary key(ggs_process);

insert into ggs_monitor(ggs_process,update_time) values ('ext1',sysdate);

begin

dbms_scheduler.create_job(job_name => 'job_ggs_monitor',

job_type => 'PLSQL_BLOCK',

job_action => 'begin update ggs_monitor set update_time=sysdate; commit; end;',

start_date => sysdate,

enabled => true,

repeat_interval => 'Freq=Secondly;Interval=10');

end;

target:

create table ggs_monitor (

ggs_process varchar(100) COLLATE utf8_bin NOT NULL,

update_time datetime DEFAULT NULL,

auto_time datetime DEFAULT CURRENT_TIMESTAMP ON UPDATE CURRENT_TIMESTAMP,

primary key(ggs_process)

);

GGS configuration notes:

ext1: TABLE system.ggs_monitor, WHERE (ggs_process = 'ext1');

Zabbix:

UserParameter=ggsAvail[*],/etc/zabbix/script/ggsAvail.sh $1

UserParameter=ggsDelay[*],/etc/zabbix/script/ggsDelay.sh $1

ggsAvail.sh

#!/bin/bash

if [ $# -ne 1 ]; then

echo "Usage:$0 extname"

exit

fi

extname=$1

rootPath=/etc/zabbix/script

tmpLog=$rootPath/tmpGgsAvail${extname}.log

mysql -u root -pmypwd <<EOF > ${tmpLog} 2>/dev/null

select concat('RESULTLINE#',timestampdiff(second,update_time,now()),'#') message from dbadmin.ggs_monitor where ggs_process='${extname}';

EOF

sed -i '/RESULTLINE/!d' ${tmpLog}

resultLine=`cat ${tmpLog} | wc -l`

if [ $resultLine -ne 1 ]; then

echo 3600

exit

fi

echo `cat ${tmpLog} | cut -d "#" -f 2`

exit
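The parsing part of this script (keep only the RESULTLINE row, fall back to 3600 when there is not exactly one) can be isolated into a function that works on standard input without a temporary file. This is a sketch of the same logic, not a replacement for the script above; the function name parse_resultline is hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: read mysql client output on stdin and print the
# value between the '#' marks of the single RESULTLINE row.
# Prints 3600 when there is not exactly one such row.
parse_resultline() {
    local lines count
    lines=$(grep 'RESULTLINE#' || true)
    count=$(printf '%s' "$lines" | grep -c 'RESULTLINE#' || true)
    if [ "$count" -ne 1 ]; then
        echo 3600
        return
    fi
    printf '%s\n' "$lines" | cut -d '#' -f 2
}
```

It would be used as `mysql ... <<EOF | parse_resultline`, with the same query as in the script above.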

ggsDelay.sh

#!/bin/bash

if [ $# -ne 1 ]; then

echo "Usage:$0 extname"

exit

fi

extname=$1

rootPath=/etc/zabbix/script

tmpLog=$rootPath/tmpGgsDelay${extname}.log

mysql -u root -pmypwd <<EOF > ${tmpLog} 2>/dev/null

select concat('RESULTLINE#',timestampdiff(second,update_time,auto_time),'#') message from dbadmin.ggs_monitor where ggs_process='${extname}';

EOF

sed -i '/RESULTLINE/!d' ${tmpLog}

resultLine=`cat ${tmpLog} | wc -l`

if [ $resultLine -ne 1 ]; then

echo 3600

exit

fi

echo `cat ${tmpLog} | cut -d "#" -f 2`

exit

Zabbix monitoring results