These are notes on the key points of the first part, "Neural Networks and Deep Learning", of the DeepLearning.ai course series taught by Andrew Ng (Wu Enda) on Coursera.

The notes do not record everything in the lecture videos. For content omitted here, please refer to Coursera or NetEase Cloud Classroom. It is also strongly recommended that you watch Andrew Ng's video lectures before reading these notes.

1

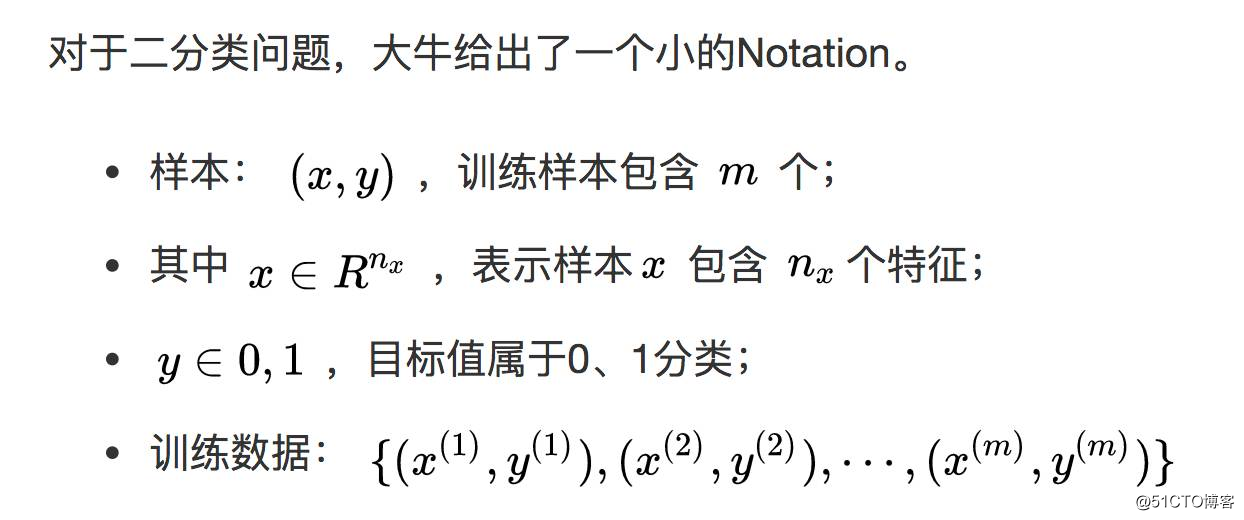

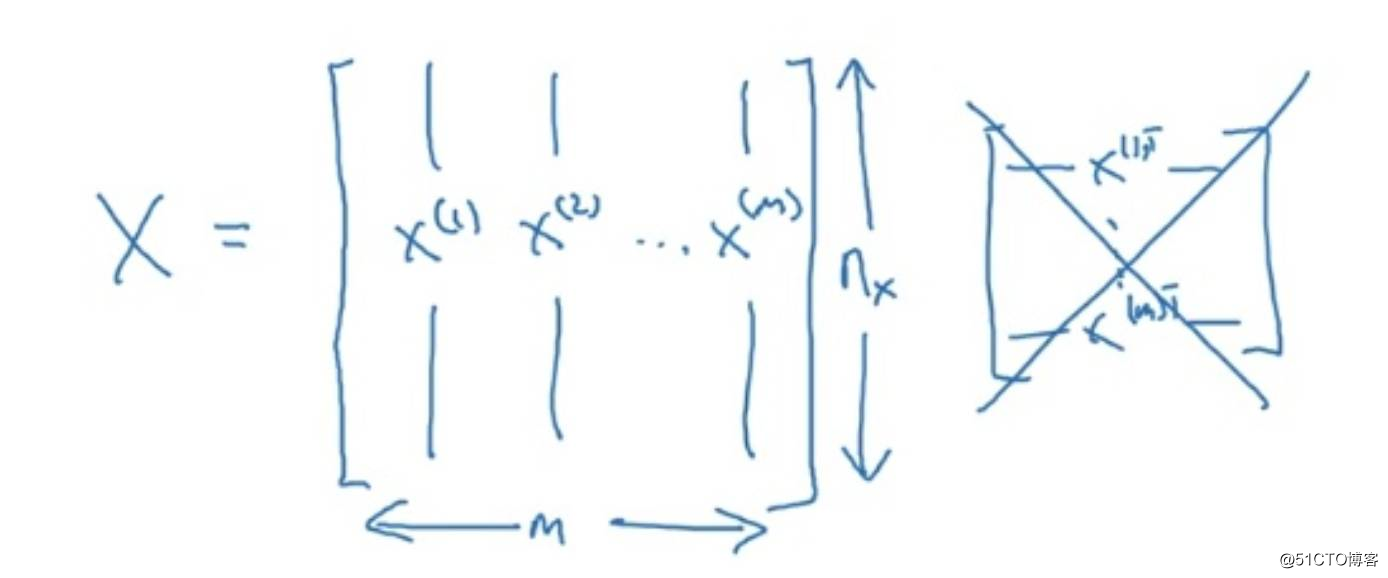

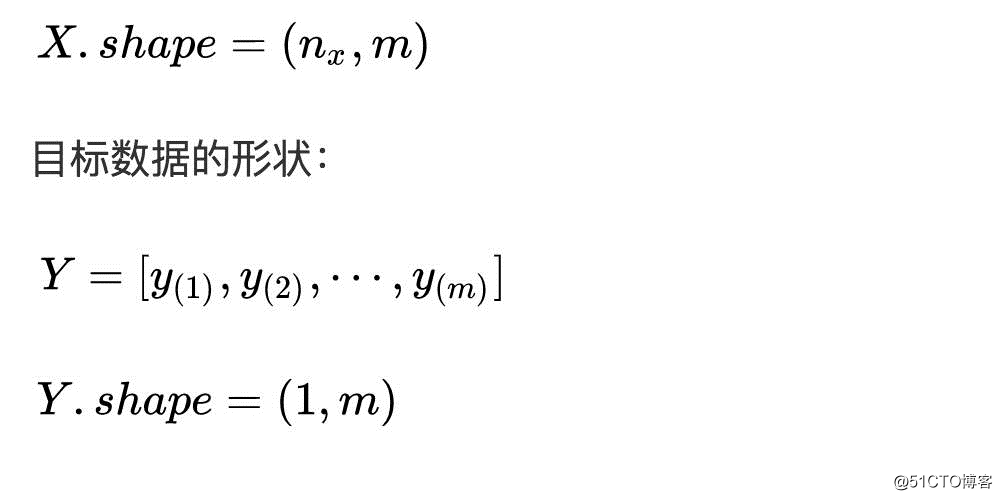

Binary classification problem

The shape of the target data:

2

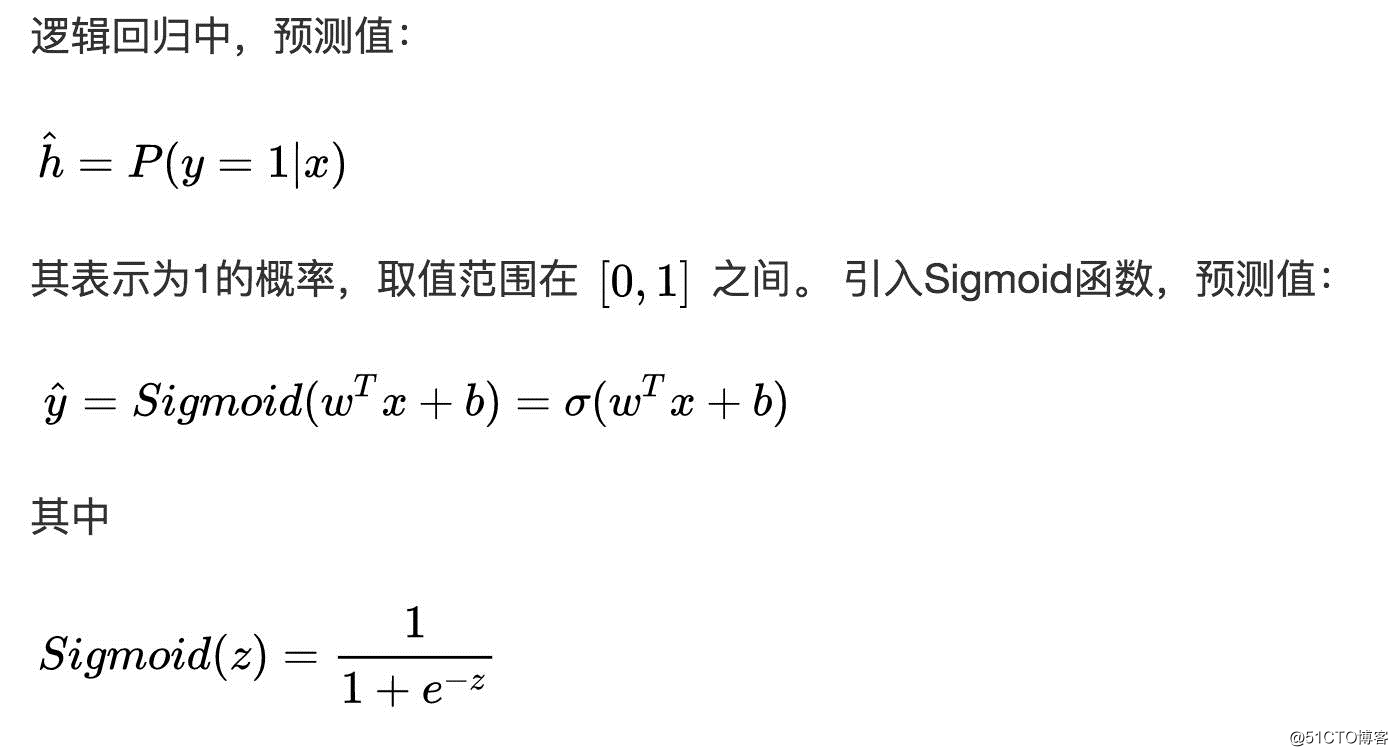

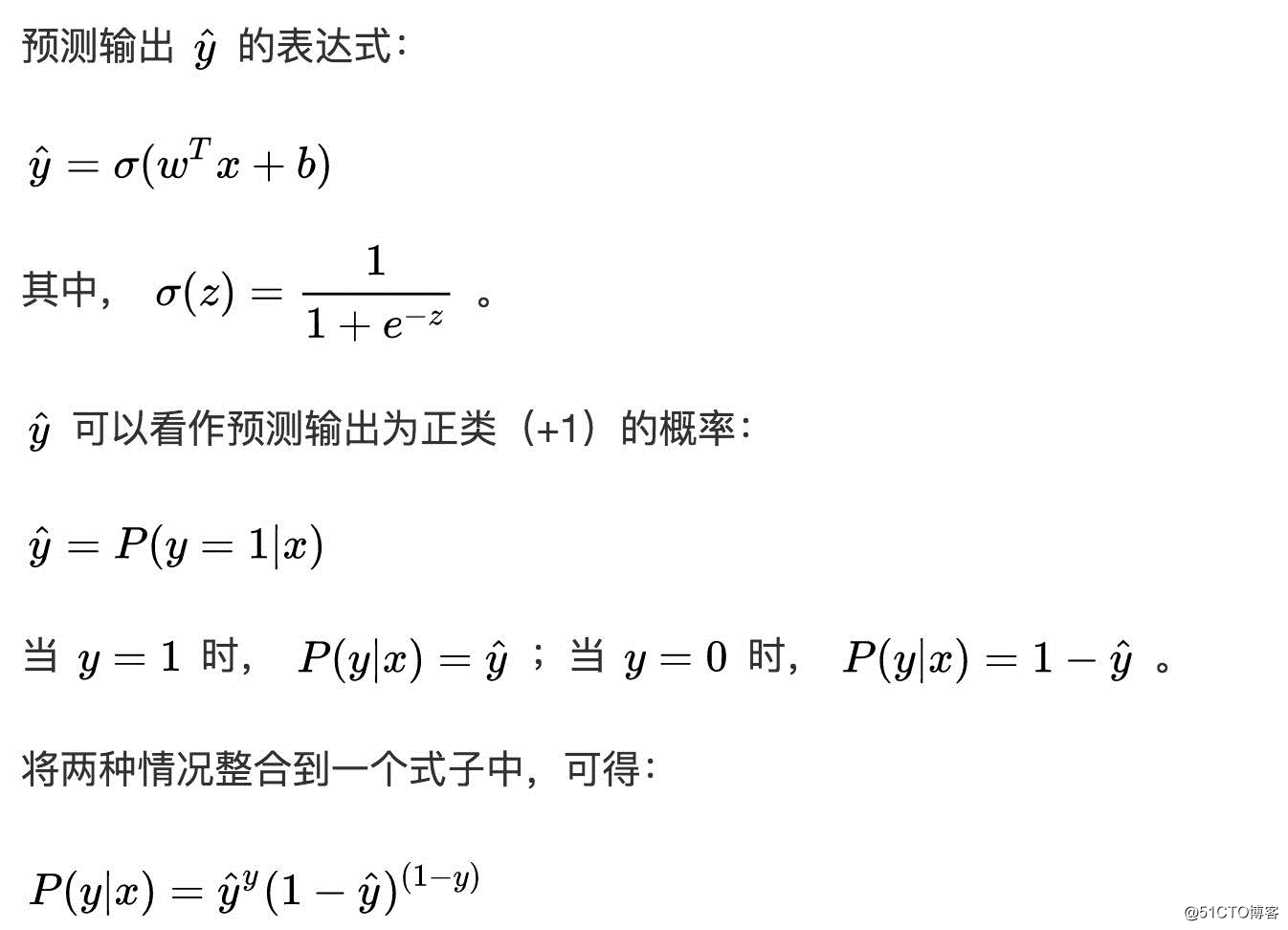

Logistic regression

Note: The first derivative of the sigmoid function can be expressed in terms of the function itself: $\sigma'(z) = \sigma(z)\,(1 - \sigma(z))$.
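The note on the derivative can be checked numerically. The sketch below assumes the function in question is the sigmoid used by logistic regression; the helper names `sigmoid` and `sigmoid_derivative` are chosen for this example only.

```python
import numpy as np

def sigmoid(z):
    """Logistic sigmoid: 1 / (1 + exp(-z))."""
    return 1.0 / (1.0 + np.exp(-z))

def sigmoid_derivative(z):
    """Derivative written in terms of the sigmoid itself: s * (1 - s)."""
    s = sigmoid(z)
    return s * (1.0 - s)

# Compare the closed form with a central finite difference.
z = np.linspace(-5.0, 5.0, 11)
h = 1e-5
numeric = (sigmoid(z + h) - sigmoid(z - h)) / (2 * h)
print(np.allclose(numeric, sigmoid_derivative(z), atol=1e-8))
```

Because the derivative reuses the value already computed in the forward pass, no extra exponential has to be evaluated when computing gradients.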

3

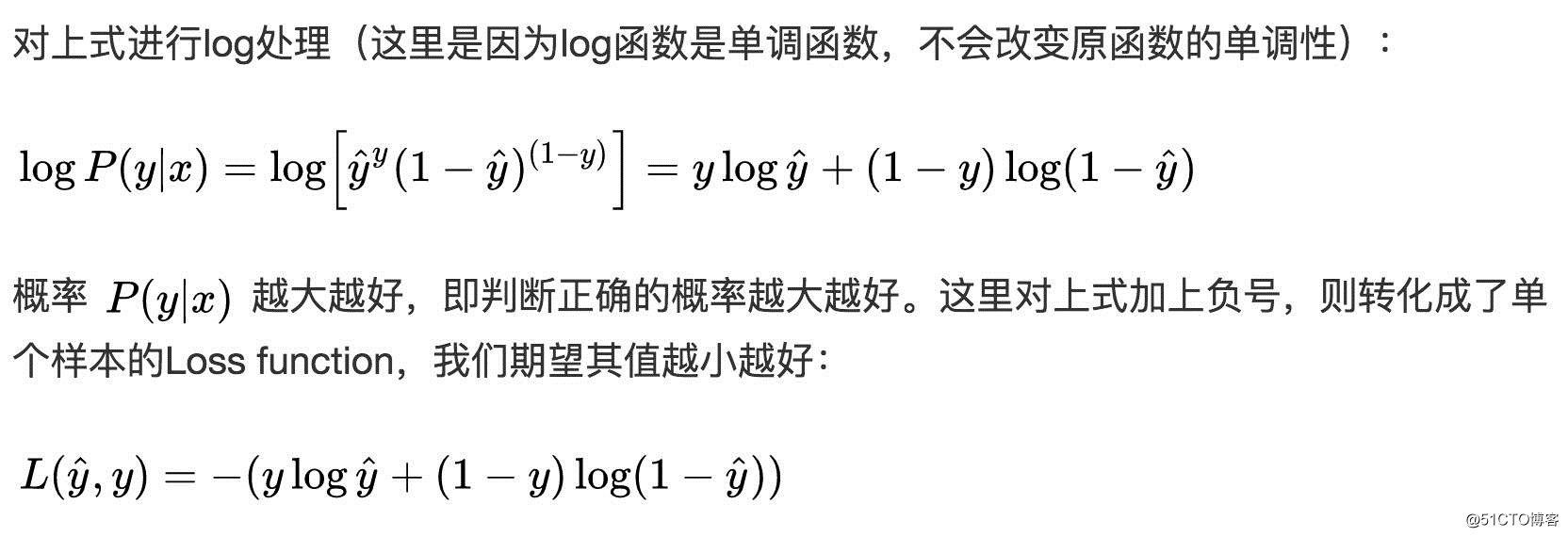

Logistic regression loss function

Loss function

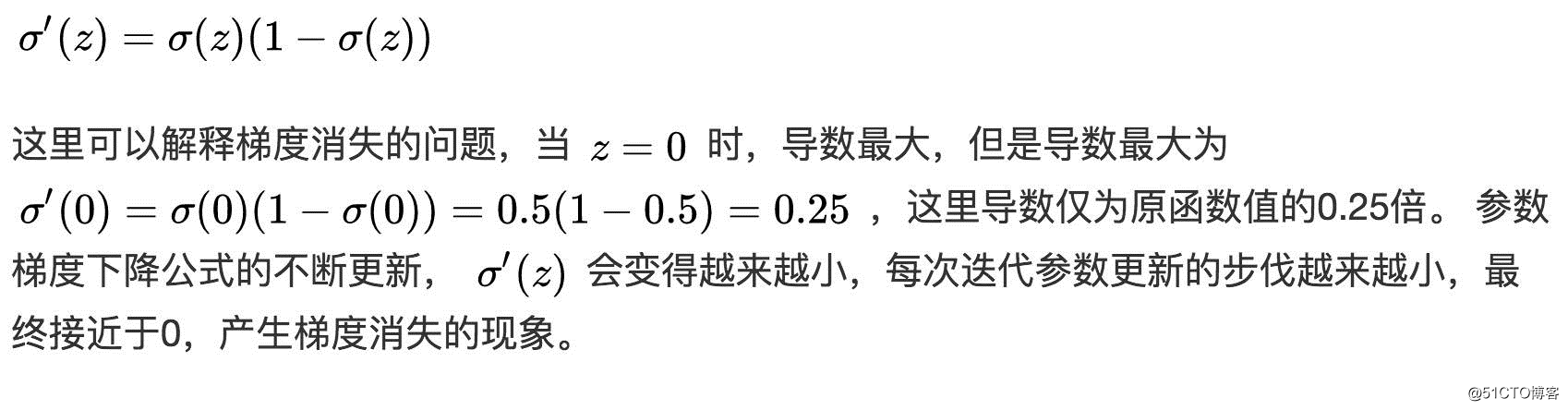

In general, squared error is commonly used as the loss function: $L(\hat{y}, y) = \frac{1}{2}(\hat{y} - y)^2$

However, for logistic regression, squared error is generally not used as the loss function. This is because the squared error loss above is generally non-convex in the parameters, so gradient descent can easily end up at a local optimum instead of the global optimum. We therefore choose a convex function.
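The convexity argument can be illustrated on a deliberately tiny case. The sketch below assumes a single training example with x = 1, y = 1 and the bias fixed at 0, so the prediction is simply sigmoid(w); this setup is hypothetical and chosen only to keep the curves one-dimensional. It estimates the curvature of both losses by second differences.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

# One example (x = 1, y = 1), bias fixed to 0, so y_hat = sigmoid(w).
w = np.linspace(-5.0, 5.0, 201)
step = w[1] - w[0]
y_hat = sigmoid(w)

squared_error = 0.5 * (y_hat - 1.0) ** 2
cross_entropy = -np.log(y_hat)  # logistic loss with y = 1

# Second differences approximate the second derivative along w.
curvature_sq = np.diff(squared_error, n=2) / step**2
curvature_ce = np.diff(cross_entropy, n=2) / step**2

print(curvature_sq.min() < 0)  # negative curvature somewhere: not convex
print(curvature_ce.min() > 0)  # positive curvature on the whole grid
```

On this grid the squared error curve bends downward for strongly negative w, while the logistic loss keeps positive curvature everywhere, which is the property gradient descent benefits from.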

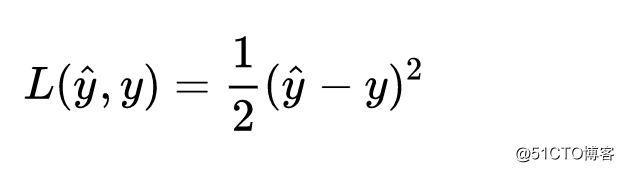

Loss Function of Logistic Regression:

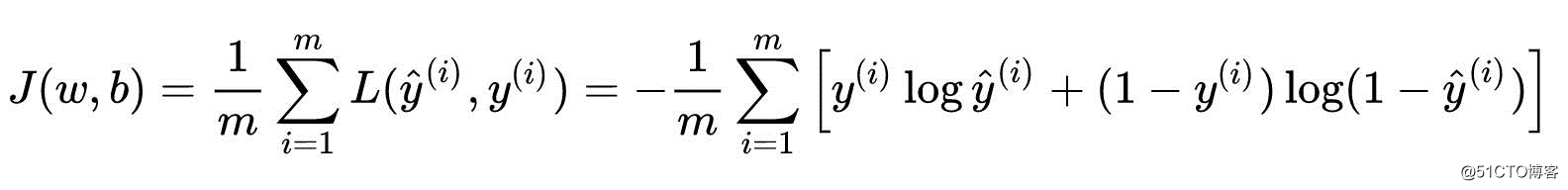

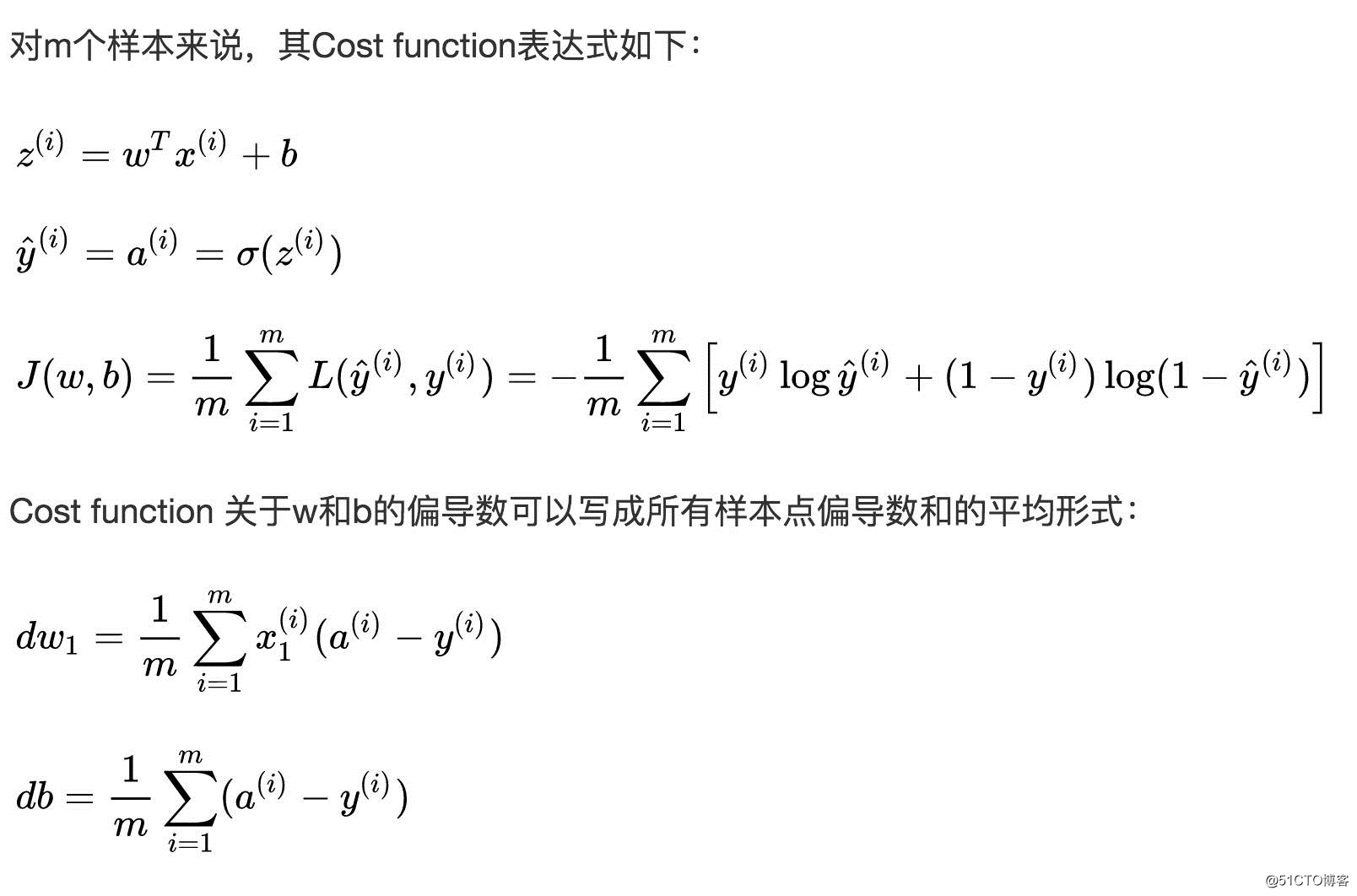

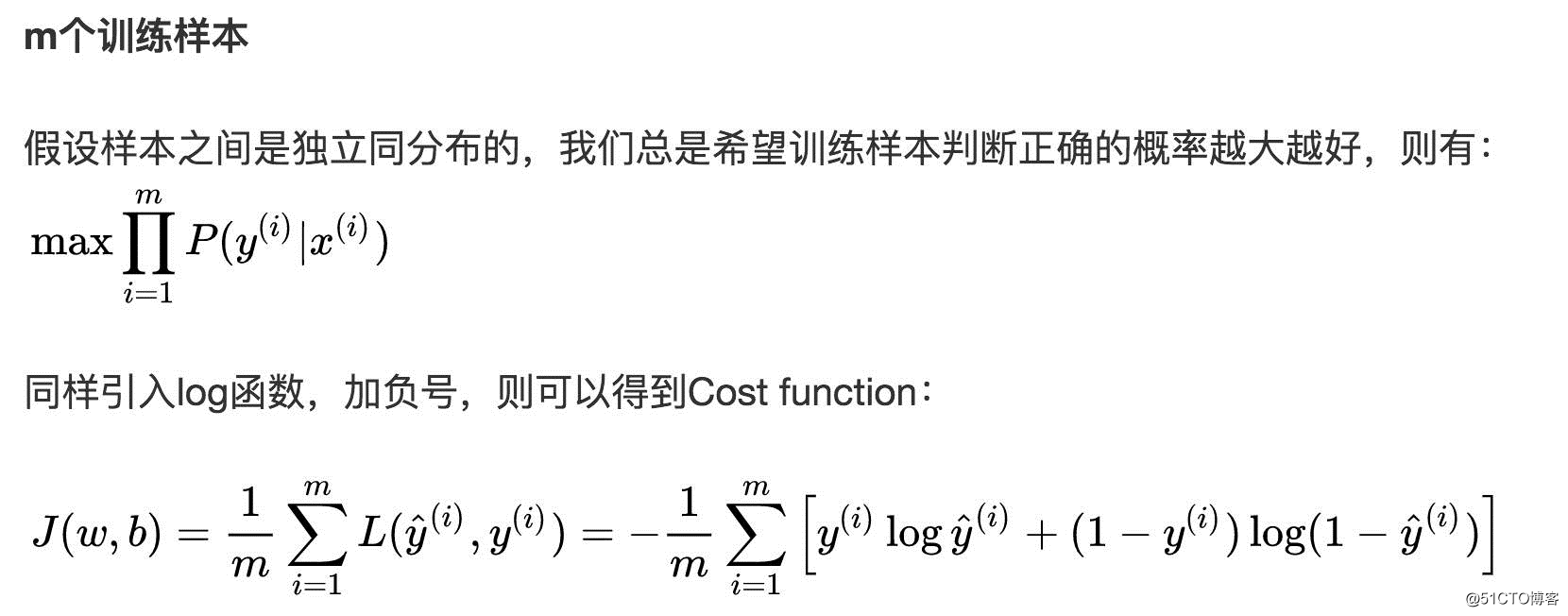

Cost function

The cost function of the training set is the average of the loss function over all training examples.

- The cost function is a function of the parameters w and b that are to be determined;

- Our goal is to iteratively find the values of w and b that minimize the cost function, bringing it as close to 0 as possible.
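The relationship between the per-example loss and the cost can be sketched in NumPy. This is a minimal illustration, assuming the usual layout in which X has shape (n_x, m), Y has shape (1, m) and w has shape (n_x, 1); the function name `cost` and the toy data are made up for the example.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def cost(w, b, X, Y):
    """Average logistic loss over the m columns (examples) of X."""
    m = X.shape[1]
    A = sigmoid(np.dot(w.T, X) + b)                      # predictions, shape (1, m)
    losses = -(Y * np.log(A) + (1 - Y) * np.log(1 - A))  # loss of each example
    return float(np.sum(losses) / m)

# Toy data: 2 features, 3 examples.
X = np.array([[1.0, 2.0, 3.0],
              [0.0, 1.0, 0.0]])
Y = np.array([[0.0, 1.0, 1.0]])

# With w = 0 and b = 0 every prediction is 0.5, so the cost is ln 2 (about 0.6931).
print(cost(np.zeros((2, 1)), 0.0, X, Y))
```

Starting from this value, a successful run of gradient descent should drive the printed cost downward.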

4

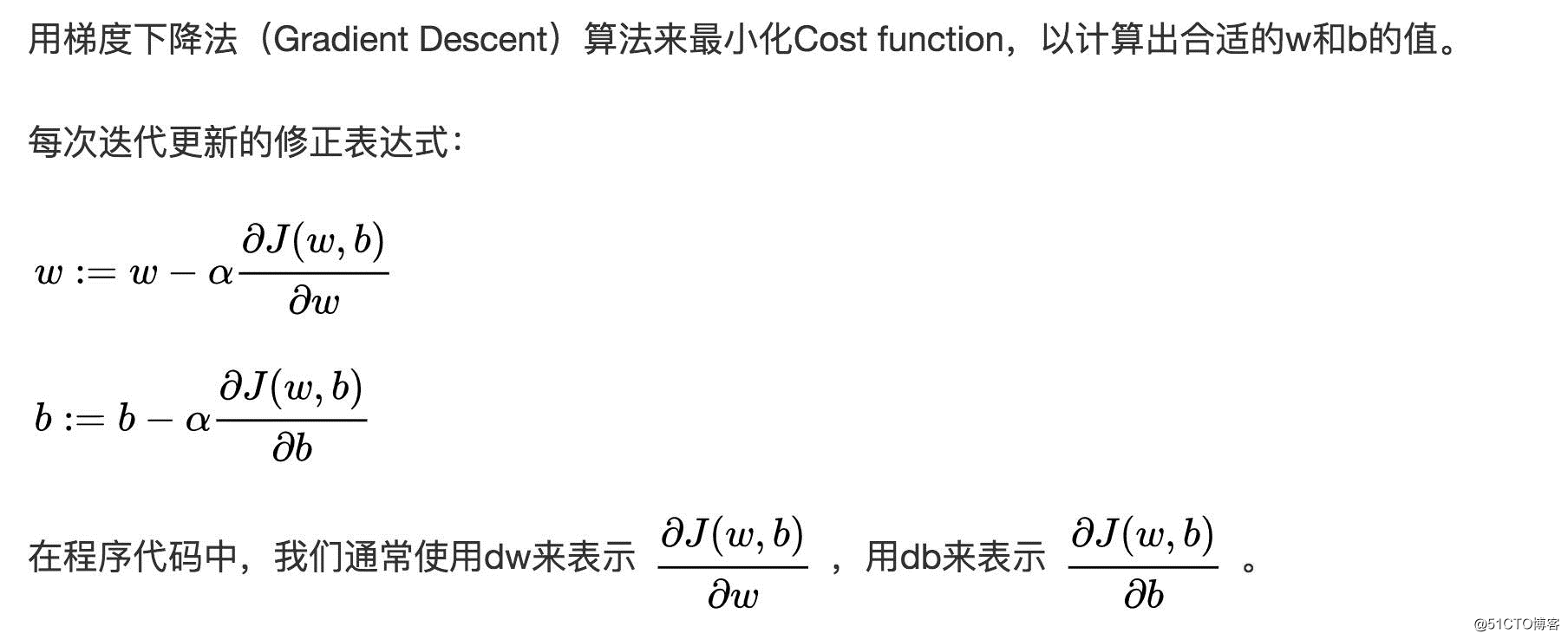

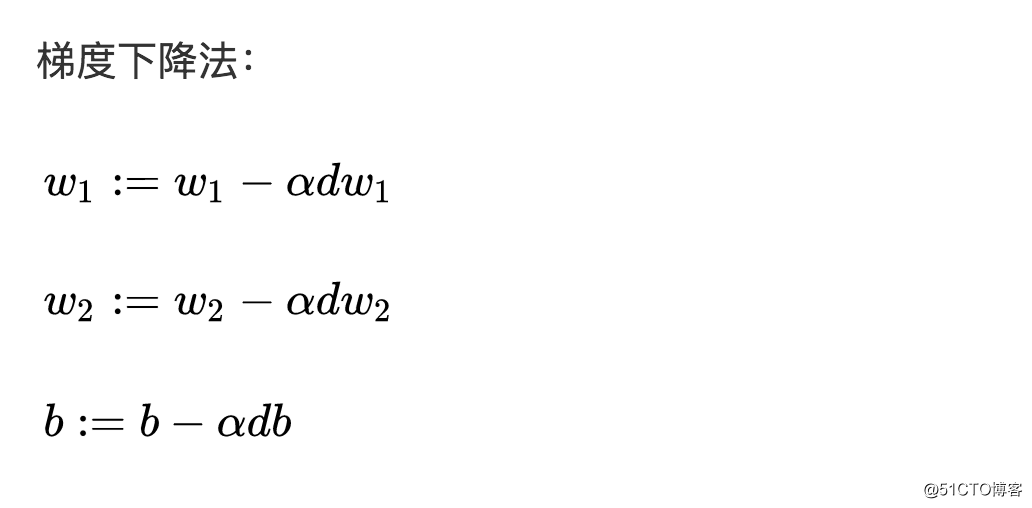

Gradient descent

5

Gradient descent method in logistic regression

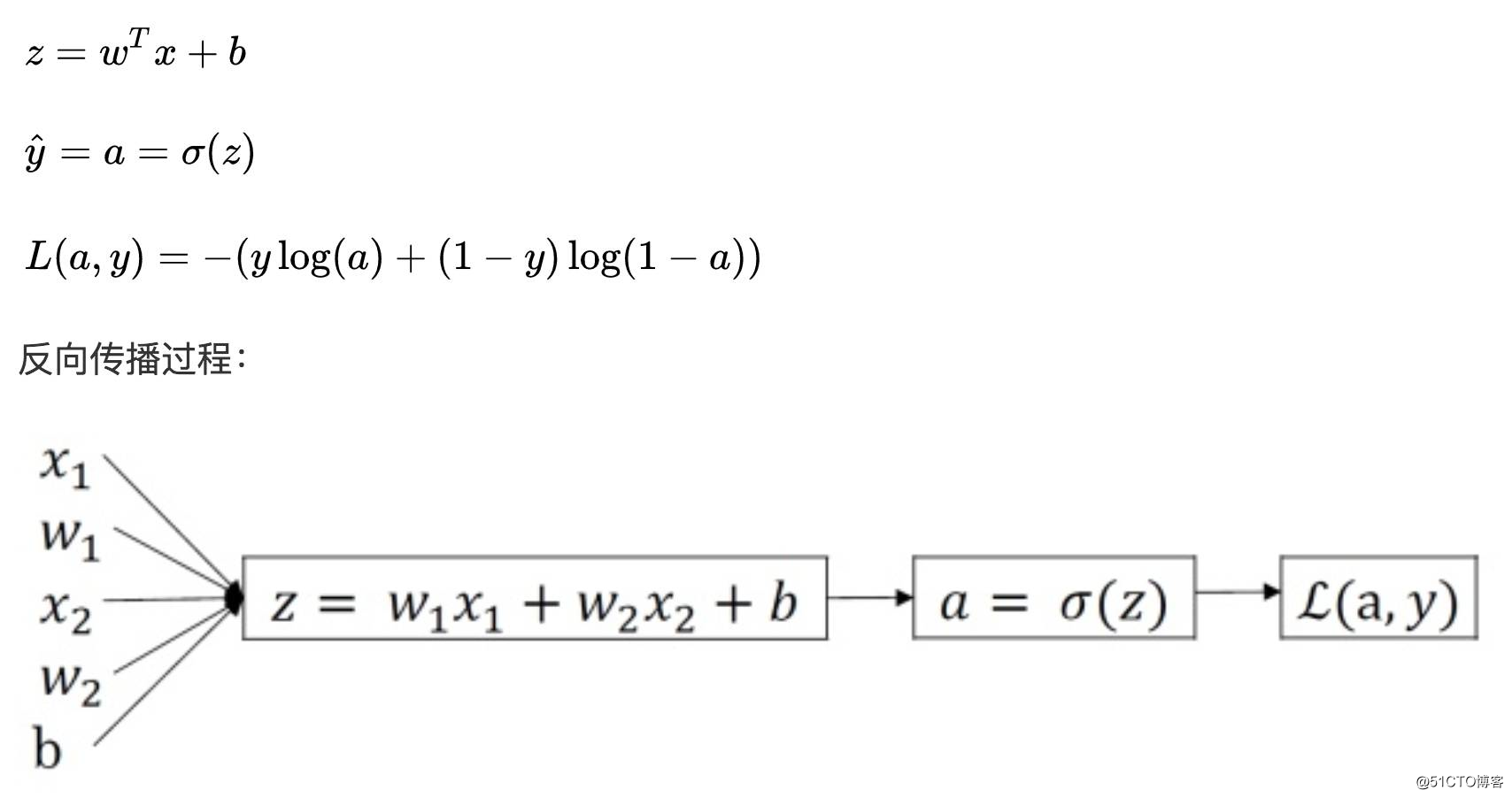

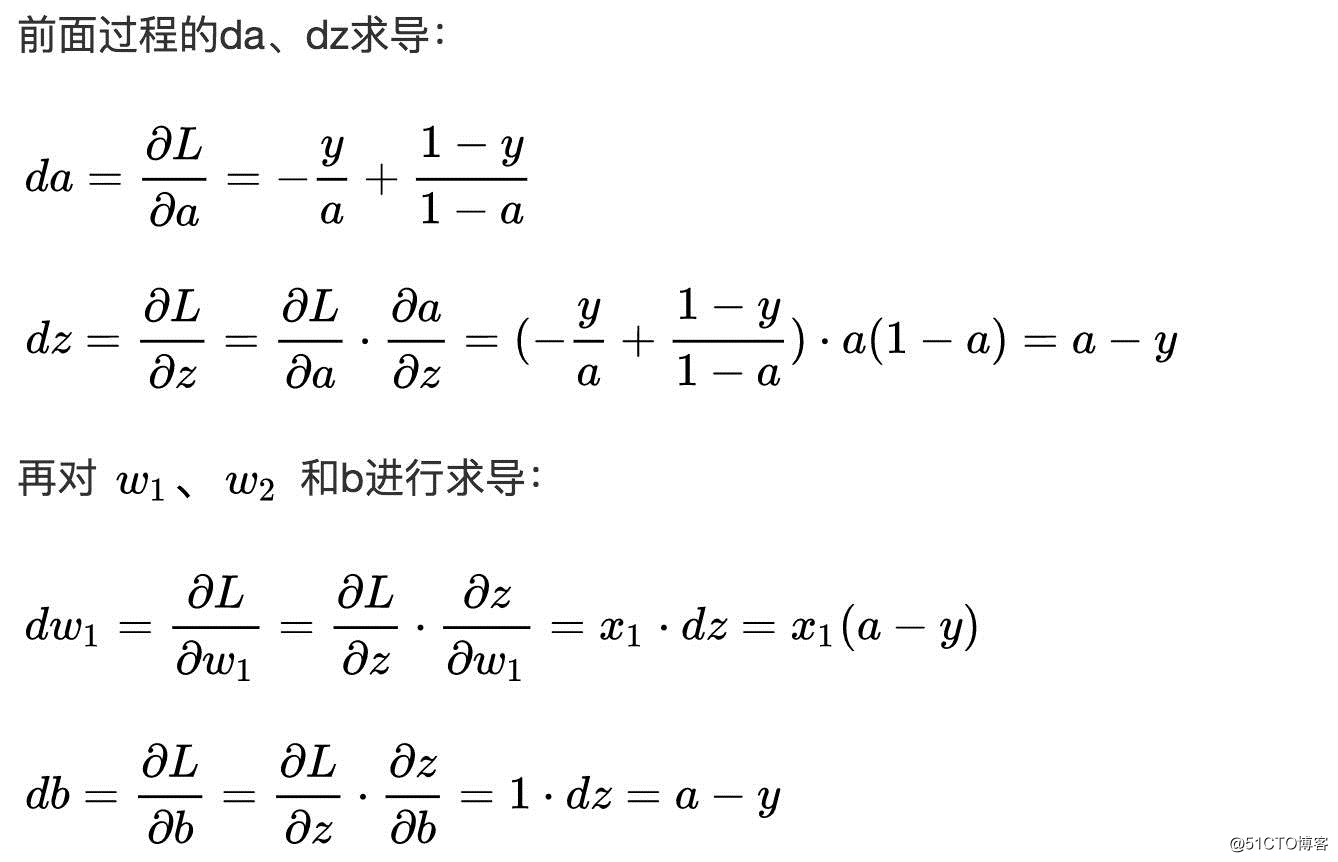

For a single sample, the expression for the logistic regression loss function is:
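For reference, the single-sample forward pass, loss and derivatives can be written out as follows. This is the usual textbook form, with the symbols $a$ and $dz$ chosen to match the variable names in the Python statements later in these notes; it is not a transcription of the original slide.

$$
\begin{aligned}
z &= w^{T}x + b, \qquad \hat{y} = a = \sigma(z) = \frac{1}{1 + e^{-z}} \\
L(a, y) &= -\bigl(y \log a + (1 - y)\log(1 - a)\bigr) \\
da &= \frac{\partial L}{\partial a} = -\frac{y}{a} + \frac{1 - y}{1 - a} \\
dz &= \frac{\partial L}{\partial z} = da \cdot a(1 - a) = a - y \\
dw &= x \, dz, \qquad db = dz
\end{aligned}
$$

The step from $da$ to $dz$ uses the chain rule together with $\sigma'(z) = a(1 - a)$.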

6

Gradient descent of m samples
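Gradient descent over m samples can be sketched with explicit loops: accumulate the loss and the derivatives of every sample, then divide by m. This is a minimal illustration rather than the course's own code; the function name `gradients_with_loops` and the shape conventions (X of shape (n_x, m), Y of shape (1, m), w of shape (n_x, 1)) are assumptions made for the example.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def gradients_with_loops(w, b, X, Y):
    """Return (cost, dw, db) averaged over the m samples, using explicit loops."""
    n_x, m = X.shape
    J = 0.0
    dw = np.zeros((n_x, 1))
    db = 0.0
    for i in range(m):                       # loop over samples
        x_i = X[:, i].reshape(n_x, 1)
        y_i = Y[0, i]
        z_i = (w.T @ x_i).item() + b
        a_i = sigmoid(z_i)
        J += -(y_i * np.log(a_i) + (1 - y_i) * np.log(1 - a_i))
        dz_i = a_i - y_i
        for j in range(n_x):                 # loop over features
            dw[j, 0] += x_i[j, 0] * dz_i
        db += dz_i
    return J / m, dw / m, db / m

# One gradient descent step on random toy data (alpha is the learning rate).
rng = np.random.default_rng(0)
X = rng.standard_normal((3, 8))
Y = (rng.random((1, 8)) > 0.5).astype(float)
w, b, alpha = np.zeros((3, 1)), 0.0, 0.1
J, dw, db = gradients_with_loops(w, b, X, Y)
w, b = w - alpha * dw, b - alpha * db
```

The two nested loops are the part that becomes slow on large data sets.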

7

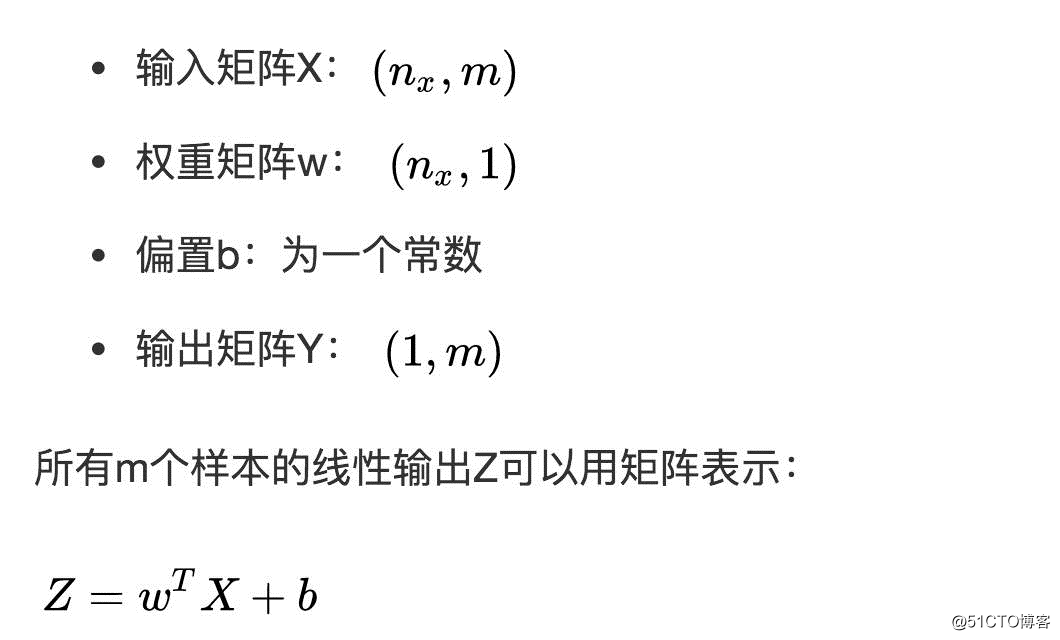

Vectorization

Deep learning algorithms usually work with a lot of data. When programming, we should use as few explicit loops as possible. Using matrix operations in Python instead avoids for loops and makes the program run faster.
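As a small illustration of the idea, the sketch below computes the same dot product once with a for loop and once with `np.dot`, and times both. The array size and variable names are arbitrary choices for this example, and the measured times depend on the machine.

```python
import time
import numpy as np

rng = np.random.default_rng(0)
w = rng.standard_normal(100_000)
x = rng.standard_normal(100_000)

# Explicit loop: one multiply-add per Python iteration.
start = time.perf_counter()
z_loop = 0.0
for i in range(w.shape[0]):
    z_loop += w[i] * x[i]
loop_seconds = time.perf_counter() - start

# Vectorized: a single call into NumPy's compiled routine.
start = time.perf_counter()
z_vec = np.dot(w, x)
vec_seconds = time.perf_counter() - start

print(np.isclose(z_loop, z_vec))  # both approaches give the same value
print(f"loop: {loop_seconds:.4f}s, vectorized: {vec_seconds:.6f}s")
```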

Logistic regression vectorization

python code:

db = 1/m*np.sum(dZ)

Flow of a single gradient descent iteration:

Z = np.dot(w.T,X) + b

A = sigmoid(Z)

dZ = A-Y

dw = 1/m*np.dot(X,dZ.T)

db = 1/m*np.sum(dZ)

w = w - alpha*dw

b = b - alpha*db

8

Python notation

Although Python (NumPy) has a broadcasting mechanism, to make sure the matrix operations in a program are correct you can use the reshape() function to explicitly set the dimensions of the matrices involved. This is a good habit.
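A short example of why explicit shapes matter: when a one-dimensional array of shape (5,) is added to a (5, 1) column, broadcasting silently produces a (5, 5) matrix instead of raising an error. The variable names below are illustrative only.

```python
import numpy as np

a = np.arange(5.0)       # shape (5,): a one-dimensional array
col = np.ones((5, 1))    # shape (5, 1): a column vector

surprise = a + col                 # broadcasting expands both operands
intended = a.reshape(5, 1) + col   # shapes match, element-wise addition

print(surprise.shape, intended.shape)  # (5, 5) (5, 1)
```

Reshaping `a` before the addition makes the intended element-wise operation explicit.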

If a vector is defined with the following statement, the resulting a has shape (5,). It is neither a row vector nor a column vector, and is called a rank-1 array. Transposing a gives back a itself, which can cause problems in calculations.

a = np.random.randn(5)

If you need to define a (5, 1) or (1, 5) vector, use the following standard statements:

a = np.random.randn(5,1)

b = np.random.randn(1,5)

You can use an assert statement to check the dimensions of a vector or array. assert evaluates the enclosed expression, here whether the shape of a is (5, 1); if it is not, the program stops at that point. Using assert statements is also a good habit that helps catch incorrect statements early.

assert(a.shape == (5,1))

You can use the reshape function to set the array to the required dimensions:

a = a.reshape((5,1))

9

Explanation of logistic regression cost function

The origin of the cost function

Zhihu column: https://zhuanlan.zhihu.com/p/29688927
