About the Flink on Yarn trilogy

This article is the first in the "Flink on Yarn Trilogy". The series consists of the following three parts:

- Preparation: prepare all the hardware and software resources needed before setting up the Flink on Yarn environment;

- Deployment and settings: deploy CDH and Flink, then configure them;

- Flink in practice: submit Flink tasks in the Yarn environment.

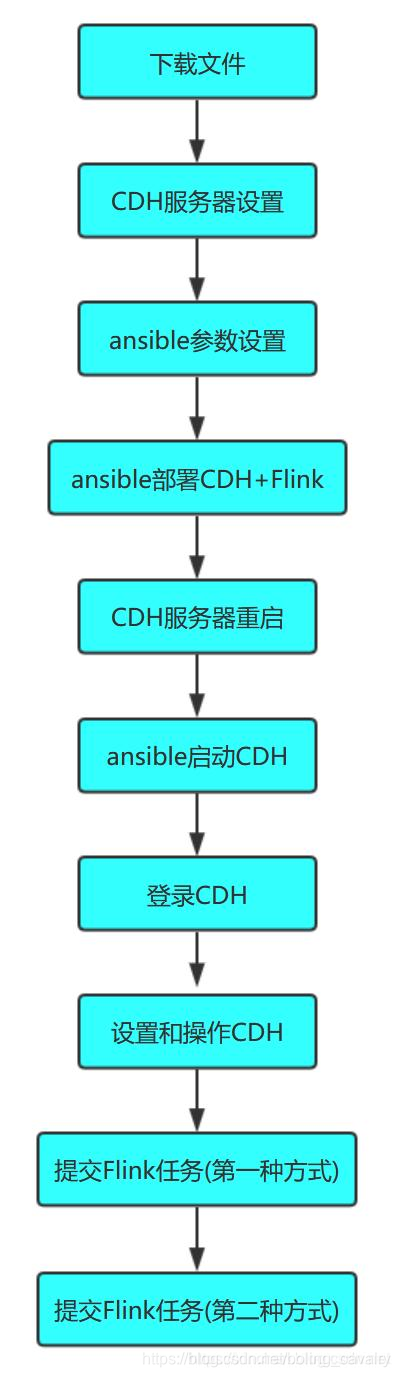

The actual combat content of the entire trilogy is shown in the figure below:

Let's start with the most basic preparations.

Links to the full series

- "Flink on Yarn Trilogy Part One: Preparation"

- "Flink on Yarn Trilogy Part Two: Deployment and Setup"

- "Flink on Yarn Trilogy Part Three: Submit Flink Tasks"

About Flink on Yarn

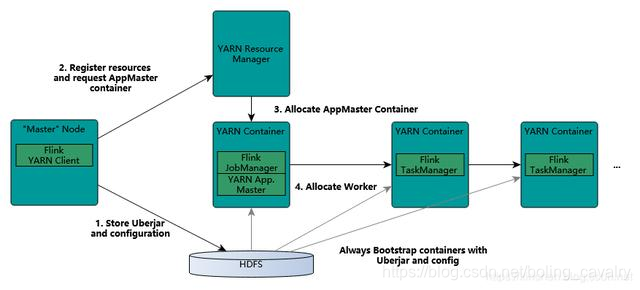

In addition to the common standalone mode, Flink also supports submitting tasks to the Yarn environment for execution. The computing resources required by the tasks are allocated by Yarn Remource Manager, as shown in the following figure (from Flink's official website):

Therefore, a Yarn environment needs to be built to deploy Yarn through CDH , HDFS and other services are common methods, and then use this method to deploy;

Deployment method

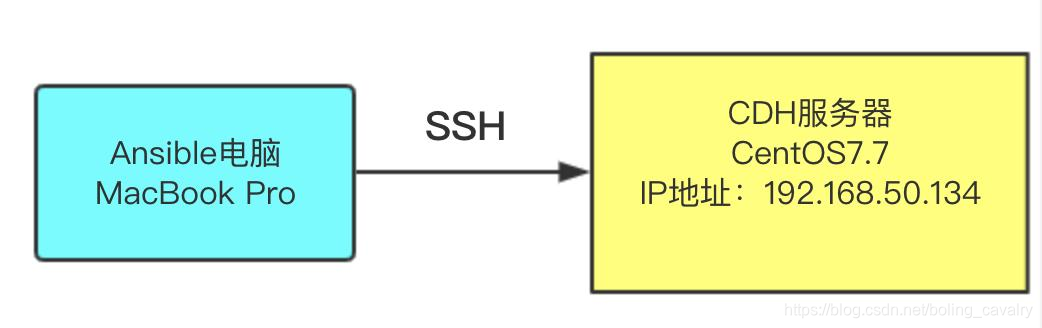

Ansible is a commonly used operation and maintenance tool, which can greatly simplify the entire deployment process. Next, ansible will be used to complete the deployment. If you do n’t know enough about ansible, please refer to "Ansible2.4 Installation and Experience" . The deployment operation is as shown in the following figure. It shows that the script is run on a computer with ansible installed, and ansible is remotely connected to a CentOS7.7 server to complete the deployment:

Hardware preparation

- A computer that can run ansible. I used a MacBook Pro here and also verified with CentOS; the deployment succeeded on both;

- A CentOS 7.7 computer to run Yarn and Flink (the "CDH server" in this article refers to this computer). To keep things simple, CDH, Yarn, HDFS, and Flink are all deployed on this one machine. It needs at least a dual-core CPU and no less than 16 GB of memory. If you want to deploy CDH across multiple computers, it is recommended to modify the ansible scripts to deploy to each of them separately; the script address is given later.
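The CPU and memory requirement above can be checked on the CDH server before going further. The following is only a minimal sketch: the function names are made up for illustration, and the 15 GiB default threshold is an assumption, chosen because Linux usually reports slightly less than the installed 16 GB in `/proc/meminfo`.

```python
import os


def mem_total_gib(meminfo_text: str) -> float:
    """Return the MemTotal value from /proc/meminfo-style text, in GiB."""
    for line in meminfo_text.splitlines():
        if line.startswith("MemTotal:"):
            kib = int(line.split()[1])  # the kernel reports this value in kB
            return kib / (1024 * 1024)
    raise ValueError("MemTotal not found")


def meets_requirements(cpu_count: int, mem_gib: float,
                       min_cpus: int = 2, min_mem_gib: float = 15.0) -> bool:
    """Check the 'dual-core, 16 GB' rule with a small tolerance (assumption)."""
    return cpu_count >= min_cpus and mem_gib >= min_mem_gib


# Only runs when executed directly on a Linux machine.
if __name__ == "__main__" and os.path.exists("/proc/meminfo"):
    with open("/proc/meminfo") as f:
        mem = mem_total_gib(f.read())
    cpus = os.cpu_count() or 0
    print(f"cpus={cpus} mem={mem:.1f} GiB ok={meets_requirements(cpus, mem)}")
```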

Software version

- Ansible computer operating system: macOS Catalina 10.15 (also tested successfully on CentOS)

- CDH server operating system: CentOS Linux release 7.7.1908

- cm version: 6.3.1

- parcel version: 5.16.2

- flink version: 1.7.2

Note: the Flink package used here is built for Hadoop 2.6, so parcel version 5.16.2 was chosen because it corresponds to Hadoop 2.6.

CDH server settings

You need to log in to the CDH server to perform the following settings:

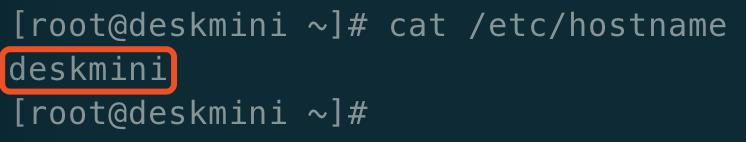

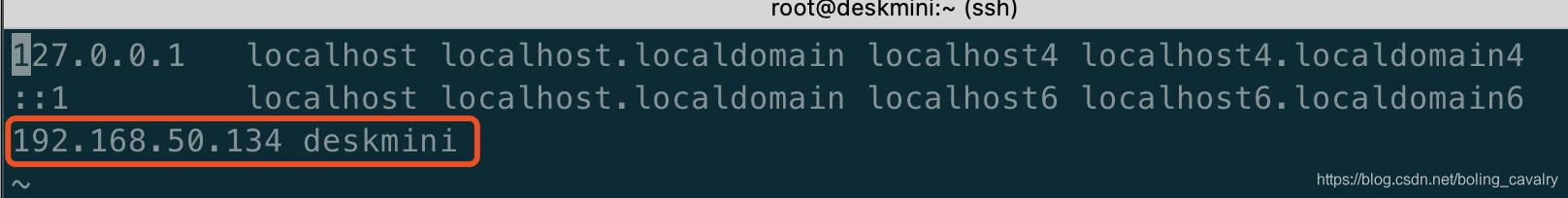

- Check if the / etc / hostname file is correct, as shown below:

- Modify the / etc / hosts file, configure your own IP address and hostname, as shown in the red box below ( it turns out that this step is very important , if you do not do it , it may cause you to be stuck in the "allocation" stage during deployment, see the agent log Show that the progress of agent download parcel has been zero percent):
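Since a missing /etc/hosts entry is easy to overlook, the check can be automated. The sketch below is illustrative only: `hosts_maps` is a hypothetical helper, and the IP address and the name `deskmini` are borrowed from the ansible inventory example later in this article, so the real hostname of your server is an assumption you need to replace.

```python
def hosts_maps(hosts_text: str, ip: str, hostname: str) -> bool:
    """Return True if a non-comment line of hosts_text maps ip to hostname."""
    for raw in hosts_text.splitlines():
        fields = raw.split("#", 1)[0].split()  # drop comments, split on whitespace
        if len(fields) >= 2 and fields[0] == ip and hostname in fields[1:]:
            return True
    return False


# Example with in-memory content; on the server, read /etc/hosts instead.
example = "127.0.0.1 localhost\n192.168.50.134 deskmini\n"
print(hosts_maps(example, "192.168.50.134", "deskmini"))
```

On the CDH server itself, the same function can be fed the contents of /etc/hosts together with the name stored in /etc/hostname.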

Download file (ansible computer)

For this walkthrough, 13 files need to be prepared, as shown in the following table (how to obtain each file is given later):

| No. | File name | Description |
|---|---|---|
| 1 | jdk-8u191-linux-x64.tar.gz | JDK installation package for Linux |
| 2 | mysql-connector-java-5.1.34.jar | JDBC driver for MySQL |
| 3 | cloudera-manager-server-6.3.1-1466458.el7.x86_64.rpm | cm server installation package |
| 4 | cloudera-manager-daemons-6.3.1-1466458.el7.x86_64.rpm | cm daemons installation package |
| 5 | cloudera-manager-agent-6.3.1-1466458.el7.x86_64.rpm | cm agent installation package |
| 6 | CDH-5.16.2-1.cdh5.16.2.p0.8-el7.parcel | Offline installation package for the CDH applications |
| 7 | CDH-5.16.2-1.cdh5.16.2.p0.8-el7.parcel.sha | Checksum file for the CDH offline installation package |
| 8 | flink-1.7.2-bin-hadoop26-scala_2.11.tgz | Flink installation package |
| 9 | hosts | Remote host configuration used by ansible; it records the information of the CDH server |
| 10 | ansible.cfg | Configuration used by ansible |
| 11 | cm6-cdh5-flink1.7-single-install.yml | Ansible script used when deploying CDH |
| 12 | cdh-single-start.yml | Ansible script used when starting CDH for the first time |
| 13 | var.yml | Variables used by the scripts (CDH package name, Flink file name, etc.) are set here for easy maintenance |

The following is the download address of each file:

- jdk-8u191-linux-x64.tar.gz: available from Oracle's official website. In addition, I packaged jdk-8u191-linux-x64.tar.gz and mysql-connector-java-5.1.34.jar together and uploaded them to CSDN, so you can download both at once: https://download.csdn.net/download/boling_cavalry/12098987

- mysql-connector-java-5.1.34.jar: available from the Maven Central repository, or from the same CSDN package: https://download.csdn.net/download/boling_cavalry/12098987

- cloudera-manager-server-6.3.1-1466458.el7.x86_64.rpm: https://archive.cloudera.com/cm6/6.3.1/redhat7/yum/RPMS/x86_64/cloudera-manager-server-6.3.1-1466458.el7.x86_64.rpm

- cloudera-manager-daemons-6.3.1-1466458.el7.x86_64.rpm: https://archive.cloudera.com/cm6/6.3.1/redhat7/yum/RPMS/x86_64/cloudera-manager-daemons-6.3.1-1466458.el7.x86_64.rpm

- cloudera-manager-agent-6.3.1-1466458.el7.x86_64.rpm: https://archive.cloudera.com/cm6/6.3.1/redhat7/yum/RPMS/x86_64/cloudera-manager-agent-6.3.1-1466458.el7.x86_64.rpm

- CDH-5.16.2-1.cdh5.16.2.p0.8-el7.parcel: https://archive.cloudera.com/cdh5/parcels/5.16.2/CDH-5.16.2-1.cdh5.16.2.p0.8-el7.parcel

- CDH-5.16.2-1.cdh5.16.2.p0.8-el7.parcel.sha: https://archive.cloudera.com/cdh5/parcels/5.16.2/CDH-5.16.2-1.cdh5.16.2.p0.8-el7.parcel.sha1 (after downloading, change the extension from .sha1 to .sha)

- flink-1.7.2-bin-hadoop26-scala_2.11.tgz: http://ftp.jaist.ac.jp/pub/apache/flink/flink-1.7.2/flink-1.7.2-bin-hadoop26-scala_2.11.tgz

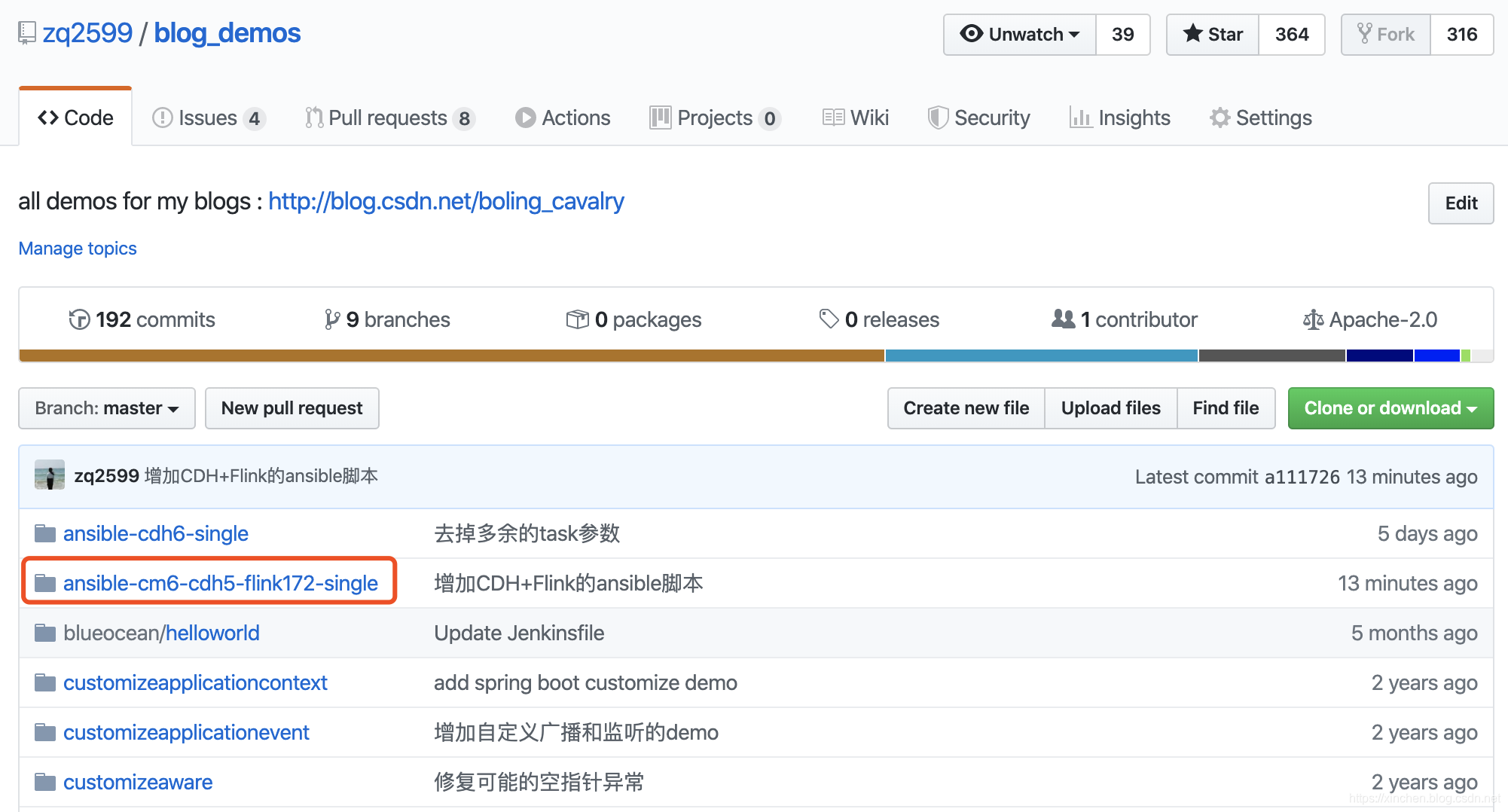

- hosts, ansible.cfg, cm6-cdh5-flink1.7-single-install.yml, cdh-single-start.yml, var.yml: these five files are stored in my GitHub repository, the address is: https: / /github.com/zq2599/blog_demos, there are multiple folders, the above files are in the folder named ansible-cm6-cdh5-flink172-single , as shown in the red box in the following figure:
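The parcel is a large download, so it can be worth comparing it against the .sha file before deploying. The sketch below assumes that the .sha file holds the parcel's SHA-1 digest as hex text (which is what the `.sha1` extension on the download suggests); `sha1_of` and `parcel_matches` are hypothetical helper names, not part of any CDH tooling.

```python
import hashlib
from pathlib import Path


def sha1_of(path, chunk_size: int = 1 << 20) -> str:
    """Compute the SHA-1 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha1()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def parcel_matches(parcel_path, sha_path) -> bool:
    """Compare a parcel's SHA-1 with the first token stored in the .sha file."""
    tokens = Path(sha_path).read_text().split()
    return bool(tokens) and sha1_of(parcel_path) == tokens[0].lower()
```

For example, `parcel_matches("CDH-5.16.2-1.cdh5.16.2.p0.8-el7.parcel", "CDH-5.16.2-1.cdh5.16.2.p0.8-el7.parcel.sha")` would be run in the folder holding both files; a `False` result suggests an incomplete or corrupted download.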

File placement (ansible computer)

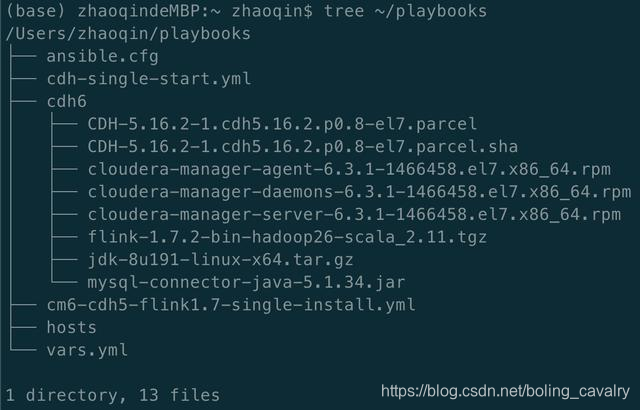

If you have downloaded the above 13 files, please place them according to the following locations so that the deployment can be successfully completed:

-

Create a new folder named playbooks under the home directory : mkdir ~ / playbooks

-

Put these five files in the playbooks folder: hosts, ansible.cfg, cm6-cdh5-flink1.7-single-install.yml, cdh-single-start.yml, vars.yml

-

Create a new subfolder named cdh6 in the playbooks folder;

-

Put these eight files into the cdh6 folder (that is, the remaining eight): jdk-8u191-linux-x64.tar.gz, mysql-connector-java-5.1.34.jar, cloudera-manager-server-6.3. 1-1466458.el7.x86_64.rpm, cloudera-manager-daemons-6.3.1-1466458.el7.x86_64.rpm, cloudera-manager-agent-6.3.1-1466458.el7.x86_64.rpm, CDH-5.16. 2-1.cdh5.16.2.p0.8-el7.parcel, CDH-5.16.2-1.cdh5.16.2.p0.8-el7.parcel.sha, flink-1.7.2-bin-hadoop26-scala_2. 11.tgz

-

After the placement, the directory and files are as shown in the figure below. Remind again: the folder playbooks must be placed in the home directory (ie: ~ / ):
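The layout described above can be verified with a short script before running any playbook. This is a minimal sketch, not part of the author's repository: `missing_files` is a hypothetical helper, and the variables file is listed under the name used in the placement step, so adjust it if the file in the repository is named differently.

```python
from pathlib import Path

# Files expected directly under ~/playbooks (names taken from the article).
PLAYBOOK_FILES = [
    "hosts",
    "ansible.cfg",
    "cm6-cdh5-flink1.7-single-install.yml",
    "cdh-single-start.yml",
    "vars.yml",  # assumption: adjust if the repository uses another name
]

# Files expected under ~/playbooks/cdh6.
CDH6_FILES = [
    "jdk-8u191-linux-x64.tar.gz",
    "mysql-connector-java-5.1.34.jar",
    "cloudera-manager-server-6.3.1-1466458.el7.x86_64.rpm",
    "cloudera-manager-daemons-6.3.1-1466458.el7.x86_64.rpm",
    "cloudera-manager-agent-6.3.1-1466458.el7.x86_64.rpm",
    "CDH-5.16.2-1.cdh5.16.2.p0.8-el7.parcel",
    "CDH-5.16.2-1.cdh5.16.2.p0.8-el7.parcel.sha",
    "flink-1.7.2-bin-hadoop26-scala_2.11.tgz",
]


def missing_files(base) -> list:
    """Return the relative paths of expected files that are absent under base."""
    base = Path(base)
    expected = [base / name for name in PLAYBOOK_FILES]
    expected += [base / "cdh6" / name for name in CDH6_FILES]
    return [p.relative_to(base).as_posix() for p in expected if not p.is_file()]


if __name__ == "__main__":
    for name in missing_files(Path.home() / "playbooks"):
        print("missing:", name)
```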

ansible parameter setting (ansible computer)

Setting the ansible parameters is simple: configure the access parameters of the CDH server (IP address, login account, password, etc.) by editing the ~/playbooks/hosts file. As shown below, you need to modify the ansible_host, ansible_port, ansible_user, and ansible_password values of the deskmini entry:

    [cdh_group]
    deskmini ansible_host=192.168.50.134 ansible_port=22 ansible_user=root ansible_password=888888
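A typo in the hosts file only shows up later as a connection failure, so a quick sanity check can help. The sketch below is a simplified parser written for illustration: it handles only plain `key=value` pairs without quoting, which is far less than ansible's real inventory parser, and `parse_inventory` and `missing_vars` are hypothetical names.

```python
REQUIRED = ("ansible_host", "ansible_port", "ansible_user", "ansible_password")


def parse_inventory(text: str) -> dict:
    """Parse a simple INI-style inventory into {group: {alias: {var: value}}}."""
    groups, current = {}, "ungrouped"
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith(("#", ";")):
            continue  # skip blank lines and comments
        if line.startswith("[") and line.endswith("]"):
            current = line[1:-1]
            groups.setdefault(current, {})
            continue
        alias, *pairs = line.split()
        host_vars = dict(p.split("=", 1) for p in pairs if "=" in p)
        groups.setdefault(current, {})[alias] = host_vars
    return groups


def missing_vars(host_vars: dict) -> list:
    """Return the required connection variables that are absent or empty."""
    return [key for key in REQUIRED if not host_vars.get(key)]


inventory = parse_inventory(
    "[cdh_group]\n"
    "deskmini ansible_host=192.168.50.134 ansible_port=22 "
    "ansible_user=root ansible_password=888888\n"
)
print(missing_vars(inventory["cdh_group"]["deskmini"]))
```

Once the file looks right, running `ansible -i hosts cdh_group -m ping` from the playbooks folder is one way to confirm that ansible can actually reach the CDH server.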

At this point, all preparations are complete. In the next article we will carry out these operations:

- Deploy CDH and Flink

- Start CDH

- Configure CDH and install Yarn, HDFS, etc. online

- Adjust Yarn parameters so that Flink tasks can be submitted successfully