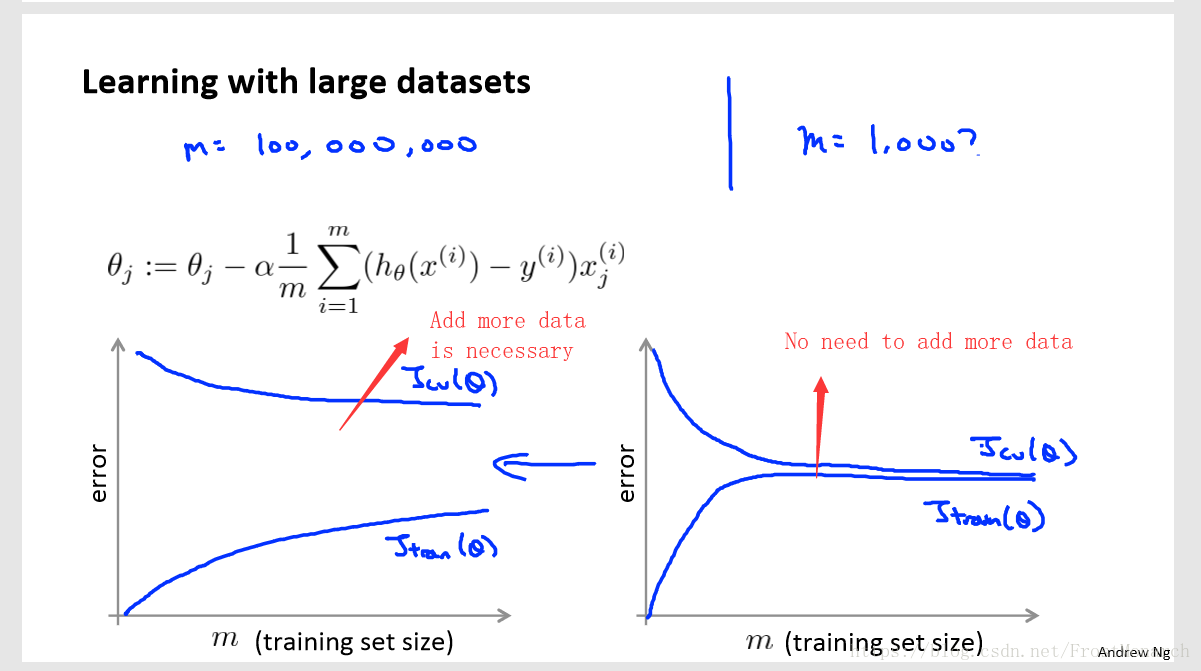

When working with large-scale data sets, computation has to be efficient. Before switching to a more computationally expensive method, it's better to run a sanity check first to see whether the extra data and computation are actually needed.
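The text doesn't spell out what the sanity check is. One common choice, assumed here, is a learning curve: train on growing subsets and compare training and validation error. The sketch below uses synthetic linear data and a hypothetical helper named `learning_curve`; none of these names come from the original notes.

```python
import numpy as np

def learning_curve(X, y, X_val, y_val, sizes):
    """Fit least squares on growing subsets; return (train_errors, val_errors)."""
    train_err, val_err = [], []
    for m in sizes:
        Xm, ym = X[:m], y[:m]
        # Closed-form least-squares fit on the first m examples only
        theta, *_ = np.linalg.lstsq(Xm, ym, rcond=None)
        train_err.append(float(np.mean((Xm @ theta - ym) ** 2) / 2))
        val_err.append(float(np.mean((X_val @ theta - y_val) ** 2) / 2))
    return train_err, val_err

# Synthetic data (an assumption for illustration): bias column plus 3 features
rng = np.random.default_rng(0)
X = np.c_[np.ones(2000), rng.normal(size=(2000, 3))]
y = X @ np.array([1.0, 2.0, -1.0, 0.5]) + rng.normal(scale=0.1, size=2000)

sizes = [10, 100, 1000]
train_err, val_err = learning_curve(X[:1500], y[:1500], X[1500:], y[1500:], sizes)
for m, tr, va in zip(sizes, train_err, val_err):
    print(f"m={m:5d}  train={tr:.4f}  val={va:.4f}")
```

If the validation error is still well above the training error at the largest subset size, more data is likely to help. If the two curves have already converged, a larger data set probably won't improve things much.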

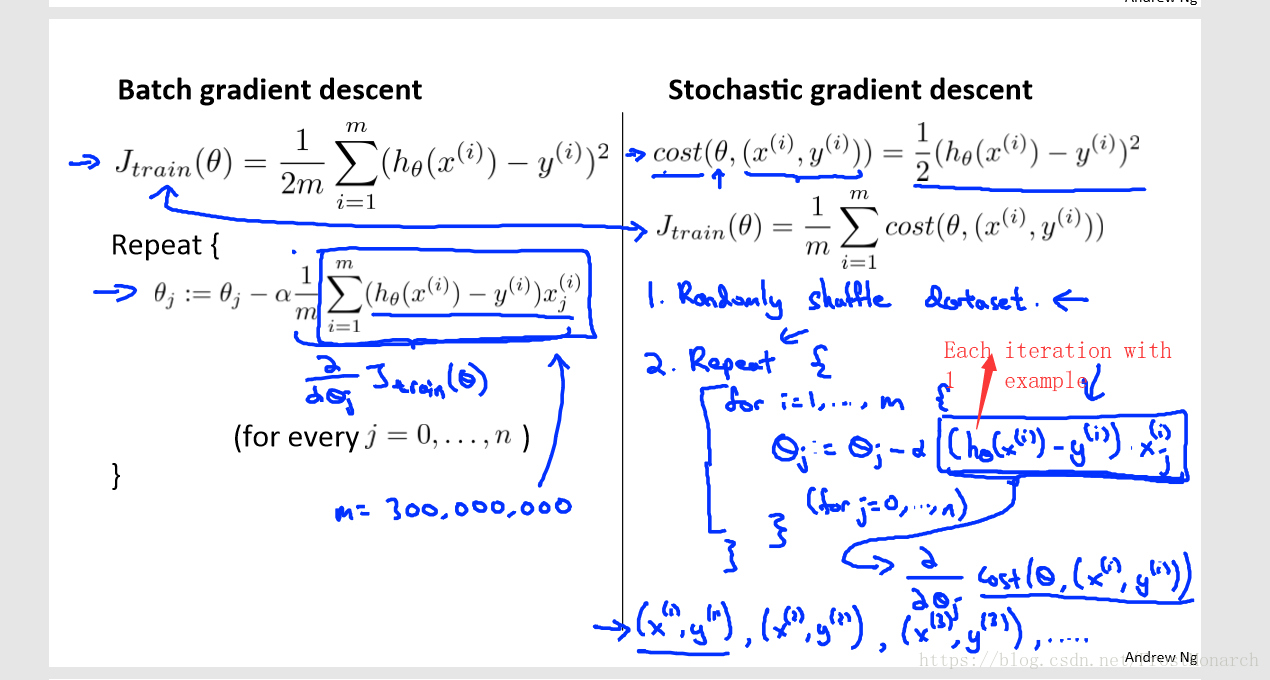

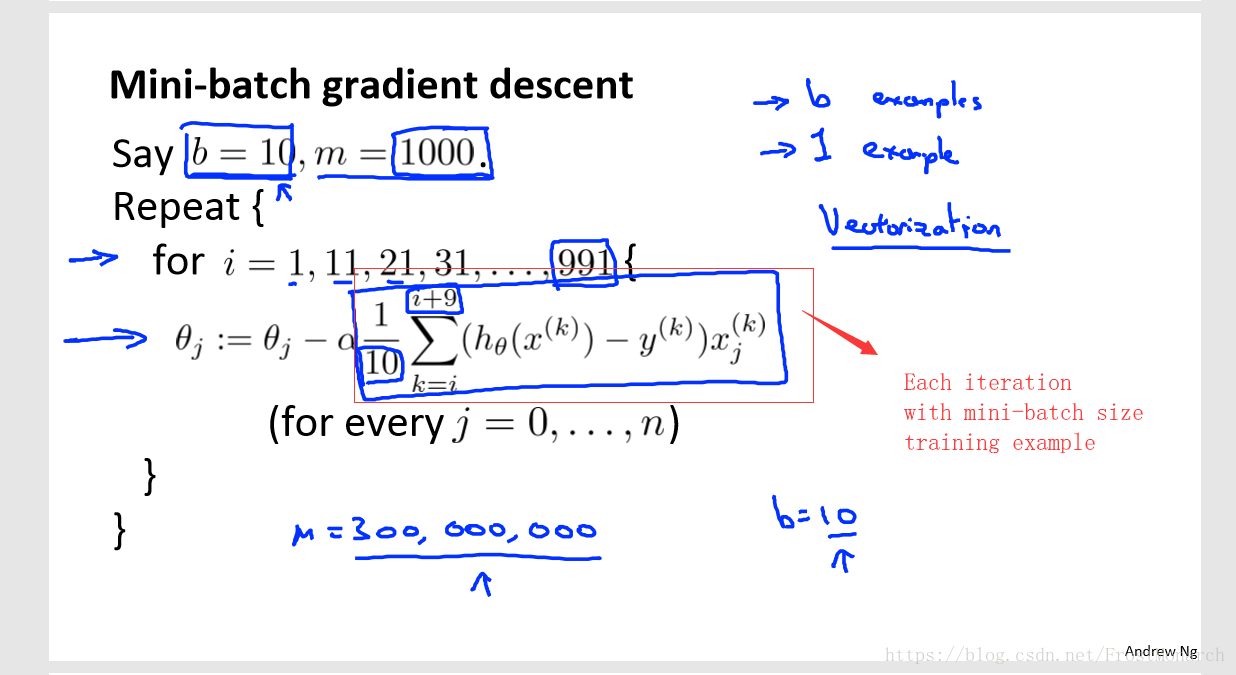

There are several ways to improve on plain gradient descent for large data sets.

(1) Stochastic gradient descent

(2) Mini-batch gradient descent

(3) Synthesizing large-scale data
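The first two variants differ only in how many examples go into each parameter update. Here is a minimal sketch for linear regression, assuming a squared-error cost; the function name `minibatch_gd`, the learning rate, and the synthetic data are illustrative choices, not taken from the notes. Setting `batch_size=1` gives stochastic gradient descent.

```python
import numpy as np

def minibatch_gd(X, y, batch_size=1, lr=0.01, epochs=20, seed=0):
    """Mini-batch gradient descent for linear regression.

    batch_size=1 is stochastic gradient descent;
    batch_size=len(X) would be ordinary batch gradient descent.
    """
    rng = np.random.default_rng(seed)
    m, n = X.shape
    theta = np.zeros(n)
    for _ in range(epochs):
        order = rng.permutation(m)  # reshuffle the examples every epoch
        for start in range(0, m, batch_size):
            idx = order[start:start + batch_size]
            error = X[idx] @ theta - y[idx]
            grad = X[idx].T @ error / len(idx)  # gradient averaged over the batch
            theta -= lr * grad
    return theta

# Synthetic data (an assumption for illustration)
rng = np.random.default_rng(1)
X = np.c_[np.ones(1000), rng.normal(size=(1000, 2))]
true_theta = np.array([0.5, 2.0, -1.0])
y = X @ true_theta + rng.normal(scale=0.05, size=1000)

theta_sgd = minibatch_gd(X, y, batch_size=1)   # stochastic
theta_mb = minibatch_gd(X, y, batch_size=10)   # mini-batch
print(theta_sgd, theta_mb)
```

Both runs update the parameters many times per pass over the data, instead of once per pass as batch gradient descent does. The mini-batch version can also use vectorized operations over each batch.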

As we know, machine learning benefits a lot from large-scale data. There are two ways to synthesize data.

First, we can add distortion to an existing example to create a new one. (Remember that purely random noise usually doesn't help; the distortion should look like variation you'd expect in real data.)

Second, we can combine several elements together. For example, in text detection we can download fonts from the Internet and then put the characters on different backgrounds to create new data.
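Both ideas can be sketched with plain NumPy arrays. The example below is only an illustration under simple assumptions: the "glyph" is a hand-made binary mask standing in for a rendered font character, the background is a random light texture, and the distortion is a small pixel shift. The helpers `composite` and `distort` are hypothetical names.

```python
import numpy as np

def composite(glyph_mask, background, rng):
    """Paste a binary glyph mask onto a random crop of a background image."""
    h, w = glyph_mask.shape
    top = rng.integers(0, background.shape[0] - h + 1)
    left = rng.integers(0, background.shape[1] - w + 1)
    patch = background[top:top + h, left:left + w].copy()
    patch[glyph_mask > 0] = 0.0  # draw the glyph in black
    return patch

def distort(image, rng, max_shift=2):
    """Shift the image by a few pixels (np.roll wraps around at the edges)."""
    dy, dx = rng.integers(-max_shift, max_shift + 1, size=2)
    return np.roll(image, shift=(int(dy), int(dx)), axis=(0, 1))

rng = np.random.default_rng(0)

# Stand-in for a rendered character: a vertical bar in a 16x16 mask
glyph = np.zeros((16, 16), dtype=np.uint8)
glyph[3:13, 7:9] = 1

# Stand-in for a background photo: light pixel values in [0.5, 1.0)
background = rng.uniform(0.5, 1.0, size=(64, 64))

# Each new example = same glyph, different background crop, small shift
samples = [distort(composite(glyph, background, rng), rng) for _ in range(5)]
print(len(samples), samples[0].shape)
```

In a real pipeline the mask would come from rendering actual fonts and the distortion would be chosen to mimic what shows up in the test data, such as blur or warping.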