Contents

3. Verify that the deployment succeeded

4 Consume messages with the consumer

4. Build a Kafka management platform

1. Deploy Zookeeper

1 Pull the Zookeeper image

docker pull wurstmeister/zookeeper

2 Run Zookeeper

docker run --restart=always --name zookeeper \
  --log-driver json-file \
  --log-opt max-size=100m \
  --log-opt max-file=2 \
  -p 2181:2181 \
  -v /etc/localtime:/etc/localtime \
  -d wurstmeister/zookeeper

2. Deploy Kafka

1 Pull the Kafka image

docker pull wurstmeister/kafka

2 Run Kafka

docker run --restart=always --name kafka \
  --log-driver json-file \
  --log-opt max-size=100m \
  --log-opt max-file=2 \
  -p 9092:9092 \
  -e KAFKA_BROKER_ID=0 \
  -e KAFKA_ZOOKEEPER_CONNECT=192.168.8.102:2181 \
  -e KAFKA_ADVERTISED_LISTENERS=PLAINTEXT://192.168.8.102:9092 \
  -e KAFKA_LISTENERS=PLAINTEXT://0.0.0.0:9092 \
  -v /etc/localtime:/etc/localtime \
  -d wurstmeister/kafka

Parameter description:

-e KAFKA_BROKER_ID=0 In a Kafka cluster, each broker has a BROKER_ID that distinguishes it from the others.

-e KAFKA_ZOOKEEPER_CONNECT=172.16.0.13:2181/kafka The Zookeeper address and the path under which Kafka's data is managed, here 172.16.0.13:2181/kafka. (The run command above uses 192.168.8.102:2181 with no path; substitute your own host.)

-e KAFKA_ADVERTISED_LISTENERS=PLAINTEXT://172.16.0.13:9092 The address and port that Kafka registers in Zookeeper and hands out to clients. For remote access this must be changed to the externally reachable IP; otherwise clients such as Java programs will fail to connect.

-e KAFKA_LISTENERS=PLAINTEXT://0.0.0.0:9092 The address and port that Kafka listens on.

-v /etc/localtime:/etc/localtime Synchronizes the container's time with the time of the host virtual machine.
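The difference between the two listener settings can be sketched in plain Java. The class below is a hypothetical helper, not part of Kafka: it only splits a `PROTOCOL://host:port` string and flags hosts that a remote client could not dial, on the assumption that `0.0.0.0` is acceptable for binding but not for the advertised address.

```java
/** Hypothetical helper (not part of Kafka): parses "PROTOCOL://host:port" listener strings. */
class ListenerAddress {
    final String protocol;
    final String host;
    final int port;

    ListenerAddress(String protocol, String host, int port) {
        this.protocol = protocol;
        this.host = host;
        this.port = port;
    }

    /** Splits a listener such as "PLAINTEXT://192.168.8.102:9092" into its parts. */
    static ListenerAddress parse(String listener) {
        int schemeEnd = listener.indexOf("://");
        int portStart = listener.lastIndexOf(':');
        if (schemeEnd <= 0 || portStart <= schemeEnd + 2) {
            throw new IllegalArgumentException("Expected PROTOCOL://host:port but got: " + listener);
        }
        String protocol = listener.substring(0, schemeEnd);
        String host = listener.substring(schemeEnd + 3, portStart);
        int port = Integer.parseInt(listener.substring(portStart + 1));
        return new ListenerAddress(protocol, host, port);
    }

    /** 0.0.0.0 (or an empty host) means "bind everywhere"; a client cannot connect to it. */
    boolean usableAsAdvertised() {
        return !host.isEmpty() && !host.equals("0.0.0.0");
    }

    public static void main(String[] args) {
        ListenerAddress bind = parse("PLAINTEXT://0.0.0.0:9092");
        ListenerAddress advertised = parse("PLAINTEXT://192.168.8.102:9092");
        System.out.println(bind.host + " usable as advertised: " + bind.usableAsAdvertised());
        System.out.println(advertised.host + " usable as advertised: " + advertised.usableAsAdvertised());
    }
}
```

A check like this could run before starting the container to catch an advertised address that was left at the bind-all value.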

3. Verifique se a implantação foi bem-sucedida

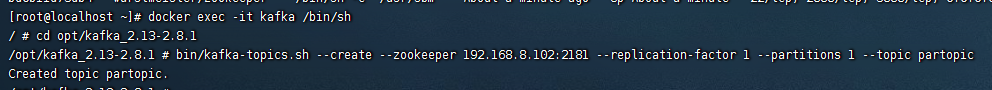

1 Entre no contêiner kafka

docker exec -it kafka /bin/sh

2 Create a topic for the producer

cd /opt/kafka_2.13-2.8.1

bin/kafka-topics.sh --create --zookeeper 192.168.8.102:2181 --replication-factor 1 --partitions 1 --topic partopic

3 Send messages with the producer

bin/kafka-console-producer.sh --broker-list 192.168.8.102:9092 --topic partopic

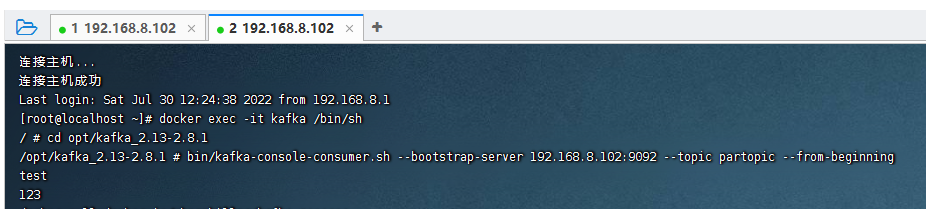

4 Consume messages with the consumer

- Open a new ssh window

- Enter the container and change to the Kafka directory as in the previous steps

bin/kafka-console-consumer.sh --bootstrap-server 192.168.8.102:9092 --topic partopic --from-beginning
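The `--from-beginning` flag is what lets this consumer see messages that were sent before it started: a console consumer with no committed offset otherwise starts at the end of the partition and only receives new messages. The toy model below illustrates that choice of starting offset. It is not Kafka client code; the class and method names are made up, and a partition is represented as a plain list.

```java
import java.util.List;

/** Toy model only: a partition is a list and an offset is an index. Not Kafka client code. */
class OffsetResetDemo {

    /** Returns the already-stored records a consumer with no committed offset would read. */
    static List<String> initialRead(List<String> partitionLog, boolean fromBeginning) {
        // From the beginning: start at offset 0. Otherwise: start at the end of the log.
        int start = fromBeginning ? 0 : partitionLog.size();
        return partitionLog.subList(start, partitionLog.size());
    }

    public static void main(String[] args) {
        List<String> partitionLog = List.of("m1", "m2", "m3");
        System.out.println("with --from-beginning: " + initialRead(partitionLog, true));
        System.out.println("without it:            " + initialRead(partitionLog, false));
    }
}
```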

4. Build a Kafka management platform

docker search kafdrop

docker run -d --rm -p 9000:9000 \
  -e JVM_OPTS="-Xms32M -Xmx64M" \
  -e KAFKA_BROKERCONNECT=<host:port,host:port> \
  -e SERVER_SERVLET_CONTEXTPATH="/" \
  obsidiandynamics/kafdrop

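<host:port,host:port> is the externally reachable address of the cluster. Separate multiple brokers with commas, for example xxx.xxx.xxx.xxx:9092,yyy.yyy.yyy.yyy:9092, and do not keep the angle brackets.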
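As a rough illustration of that format, the following sketch validates a broker list before it is passed to the container. `BrokerList` is a hypothetical helper written for this example, not something Kafdrop provides; it rejects leftover angle brackets and entries without a numeric port.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical helper: validates a comma-separated "host:port,host:port" broker list. */
class BrokerList {

    static List<String> parse(String value) {
        List<String> brokers = new ArrayList<>();
        for (String entry : value.split(",")) {
            String broker = entry.trim();
            int colon = broker.lastIndexOf(':');
            // Reject entries with no host, no port separator, or leftover placeholder brackets.
            if (colon <= 0 || broker.contains("<") || broker.contains(">")) {
                throw new IllegalArgumentException("Not a host:port entry: " + entry);
            }
            // parseInt throws NumberFormatException (an IllegalArgumentException) on a bad port.
            int port = Integer.parseInt(broker.substring(colon + 1));
            if (port < 1 || port > 65535) {
                throw new IllegalArgumentException("Port out of range: " + entry);
            }
            brokers.add(broker);
        }
        return brokers;
    }

    public static void main(String[] args) {
        System.out.println(parse("192.168.58.130:9092, 192.168.58.131:9092"));
    }
}
```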

The command above came from a Baidu search.

Below is what I tried myself.

docker run -d --name kafdrop -p 9001:9001 \
  -e JVM_OPTS="-Xms32M -Xmx64M -Dserver.port=9001" \
  -e KAFKA_BROKERCONNECT=192.168.58.130:9092 \
  -e SERVER_SERVLET_CONTEXTPATH="/" \
  obsidiandynamics/kafdrop

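I changed the port because something else I had started in Docker was already using port 9000. Kafdrop is really just a Spring Boot project launched with a jar command, so its port can be overridden with -Dserver.port in JVM_OPTS.

Access address: Kafdrop: Broker List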

5. Integrate Kafka with Spring Boot

1. Add the dependency

<dependency>
    <groupId>org.springframework.kafka</groupId>
    <artifactId>spring-kafka</artifactId>
</dependency>

2. Modify the configuration

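spring:
  kafka:
    bootstrap-servers: 192.168.58.130:9092 # IP address and port of the Kafka broker deployed on Linux
    producer:
      # Number of times a message is resent after an error.
      retries: 1
      # When several messages go to the same partition, the producer puts them in one batch. This sets the memory a batch may use, in bytes.
      batch-size: 16384
      # Size of the producer's memory buffer, in bytes.
      buffer-memory: 33554432
      # Key serializer
      key-serializer: org.apache.kafka.common.serialization.StringSerializer
      # Value serializer
      value-serializer: org.apache.kafka.common.serialization.StringSerializer
      # acks=0: the producer does not wait for any response from the server before treating the write as successful.
      # acks=1: the producer receives a success response as soon as the partition leader has received the message.
      # acks=all: the producer receives a success response only after all replicating nodes have received the message.
      acks: 1
    consumer:
      # Auto-commit interval (only used when enable-auto-commit is true). In Spring Boot 2.x the value is a Duration and must follow its format, e.g. 1S, 1M, 2H, 5D.
      auto-commit-interval: 1S
      # What the consumer does when it reads a partition that has no committed offset or whose offset is invalid:
      # latest (default): start from the newest records (those produced after the consumer started)
      # earliest: start from the beginning of the partition
      auto-offset-reset: earliest
      # Whether to commit offsets automatically. The default is true; to avoid duplicates and data loss, set it to false and commit offsets manually.
      enable-auto-commit: false
      # Key deserializer
      key-deserializer: org.apache.kafka.common.serialization.StringDeserializer
      # Value deserializer
      value-deserializer: org.apache.kafka.common.serialization.StringDeserializer
    listener:
      # Number of threads to run in the listener container.
      concurrency: 5
      # The listener is responsible for acking; each acknowledge() call commits immediately.
      ack-mode: manual_immediate
      missing-topics-fatal: false

In this test, the Linux address is 192.168.58.130:

spring.kafka.bootstrap-servers = 192.168.58.130:9092

advertised.listeners = 192.168.58.130:9092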
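For readers more familiar with the plain Kafka client, the sketch below shows roughly how the Spring settings above correspond to standard Kafka client property names. It only builds `java.util.Properties` objects and needs no Kafka dependency; the class and method names are hypothetical, and handing the result to a real producer or consumer would require the kafka-clients library.

```java
import java.util.Properties;

/** Sketch: the spring.kafka.* settings expressed as plain Kafka client property names. */
class KafkaClientProps {

    static Properties producerProps(String bootstrapServers) {
        Properties p = new Properties();
        p.setProperty("bootstrap.servers", bootstrapServers);
        p.setProperty("retries", "1");               // spring.kafka.producer.retries
        p.setProperty("batch.size", "16384");        // spring.kafka.producer.batch-size (bytes)
        p.setProperty("buffer.memory", "33554432");  // spring.kafka.producer.buffer-memory (bytes)
        p.setProperty("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        p.setProperty("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        p.setProperty("acks", "1");                  // wait for the partition leader only
        return p;
    }

    static Properties consumerProps(String bootstrapServers) {
        Properties p = new Properties();
        p.setProperty("bootstrap.servers", bootstrapServers);
        p.setProperty("auto.commit.interval.ms", "1000"); // auto-commit-interval: 1S
        p.setProperty("auto.offset.reset", "earliest");
        p.setProperty("enable.auto.commit", "false");     // offsets are committed manually
        p.setProperty("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
        p.setProperty("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
        return p;
    }

    public static void main(String[] args) {
        producerProps("192.168.58.130:9092").forEach((k, v) -> System.out.println(k + " = " + v));
        consumerProps("192.168.58.130:9092").forEach((k, v) -> System.out.println(k + " = " + v));
    }
}
```

The listener settings (`concurrency`, `ack-mode`, `missing-topics-fatal`) have no counterpart here because they configure Spring's listener container rather than the Kafka client.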

3. Producer

import com.alibaba.fastjson.JSON;
import lombok.extern.slf4j.Slf4j;
import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.kafka.core.KafkaTemplate;
import org.springframework.kafka.support.SendResult;
import org.springframework.stereotype.Component;
import org.springframework.util.concurrent.ListenableFuture;
import org.springframework.util.concurrent.ListenableFutureCallback;

/**
 * Event producer
 */
@Slf4j
@Component
public class KafkaProducer {

    // Typed to match the String key and value serializers in the configuration
    @Autowired
    public KafkaTemplate<String, String> kafkaTemplate;

    /** Topic */
    public static final String TOPIC_TEST = "Test";

    /** Consumer group */
    public static final String TOPIC_GROUP = "test-consumer-group";

    public void send(Object obj) {
        String obj2String = JSON.toJSONString(obj);
        log.info("Preparing to send message: {}", obj2String);

        // Send the JSON string: a non-String object would not fit the configured StringSerializer.
        // With spring-kafka 2.x, send() returns a ListenableFuture.
        ListenableFuture<SendResult<String, String>> future = kafkaTemplate.send(TOPIC_TEST, obj2String);

        // Callback
        future.addCallback(new ListenableFutureCallback<SendResult<String, String>>() {
            @Override
            public void onFailure(Throwable ex) {
                // Handle a failed send
                log.error("{} - producer failed to send message: {}", TOPIC_TEST, ex.getMessage());
            }

            @Override
            public void onSuccess(SendResult<String, String> result) {
                // Handle a successful send
                log.info("{} - producer sent message successfully: {}", TOPIC_TEST, result);
            }
        });
    }
}

4. Consumers

import org.apache.kafka.clients.consumer.ConsumerRecord;
import org.slf4j.Logger;
import org.slf4j.LoggerFactory;
import org.springframework.kafka.annotation.KafkaListener;
import org.springframework.kafka.support.Acknowledgment;
import org.springframework.kafka.support.KafkaHeaders;
import org.springframework.messaging.handler.annotation.Header;
import org.springframework.stereotype.Component;

import java.util.Optional;

/**
 * Event consumer
 */
@Component
public class KafkaConsumer {

    // Log under this class, not the Kafka client class that shares its simple name
    private static final Logger logger = LoggerFactory.getLogger(KafkaConsumer.class);

    @KafkaListener(topics = KafkaProducer.TOPIC_TEST, groupId = KafkaProducer.TOPIC_GROUP)
    public void topicTest(ConsumerRecord<?, ?> record, Acknowledgment ack, @Header(KafkaHeaders.RECEIVED_TOPIC) String topic) {
        Optional<?> message = Optional.ofNullable(record.value());
        if (message.isPresent()) {
            Object msg = message.get();
            logger.info("topic_test consumed: Topic: {}, Message: {}", topic, msg);
            // Commit the offset manually (ack-mode: manual_immediate)
            ack.acknowledge();
        }
    }
}

5. Test sending a message

// Inside a @SpringBootTest class that has an @Autowired KafkaProducer kafkaProducer field
@Test
void kafkaTest() {
    kafkaProducer.send("Hello Kafka");
}

6. Test receiving the message
