Problem statement

When you use a Hugging Face model on a server and pass the model name directly, as in AutoTokenizer.from_pretrained("model_name"), the call may fail for network reasons with an error such as: Failed to connect to huggingface.co port 443 after 75018 ms: Operation timed out

The fix is to download the model to the server first, note the model's local path model_dir, and then load it with AutoTokenizer.from_pretrained(model_dir).
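Loading from a local path can be sketched as follows. This is a minimal sketch, assuming the transformers package is installed and that model_dir already holds the downloaded files; check_model_dir and load_local_tokenizer are hypothetical helper names introduced here for illustration. Passing local_files_only=True asks transformers to use only the files on disk.

```python
from pathlib import Path


def check_model_dir(model_dir: str) -> Path:
    """Return model_dir as a Path, or raise if it is missing or empty."""
    path = Path(model_dir).expanduser()
    if not path.is_dir():
        raise FileNotFoundError(f"model directory not found: {path}")
    if not any(path.iterdir()):
        raise FileNotFoundError(f"model directory is empty: {path}")
    return path


def load_local_tokenizer(model_dir: str):
    """Load a tokenizer from local files only (hypothetical helper)."""
    # Imported lazily so the path check works even without transformers installed.
    from transformers import AutoTokenizer

    path = check_model_dir(model_dir)
    # local_files_only=True: do not try to reach huggingface.co.
    return AutoTokenizer.from_pretrained(str(path), local_files_only=True)
```

Calling load_local_tokenizer("/path/to/model_dir") (a placeholder path) then returns the tokenizer without a network request. Some models may need extra arguments to from_pretrained.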

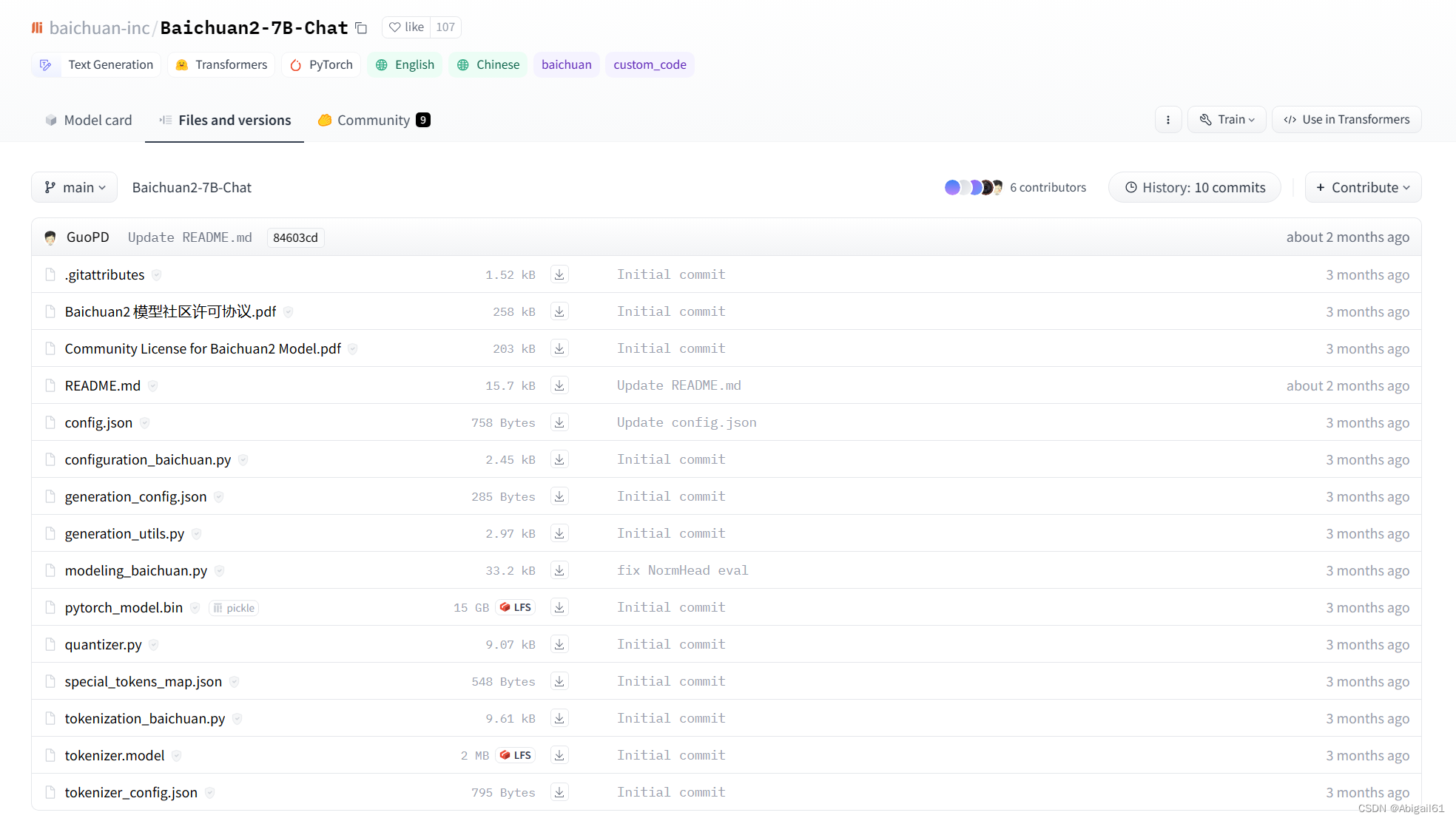

Download method 1: Download the files manually from the Hugging Face website

Download the files one by one from the official Hugging Face website. With this method the model is first downloaded to your local machine and then uploaded to the server. Transferring everything twice is tedious, so this method is not recommended.

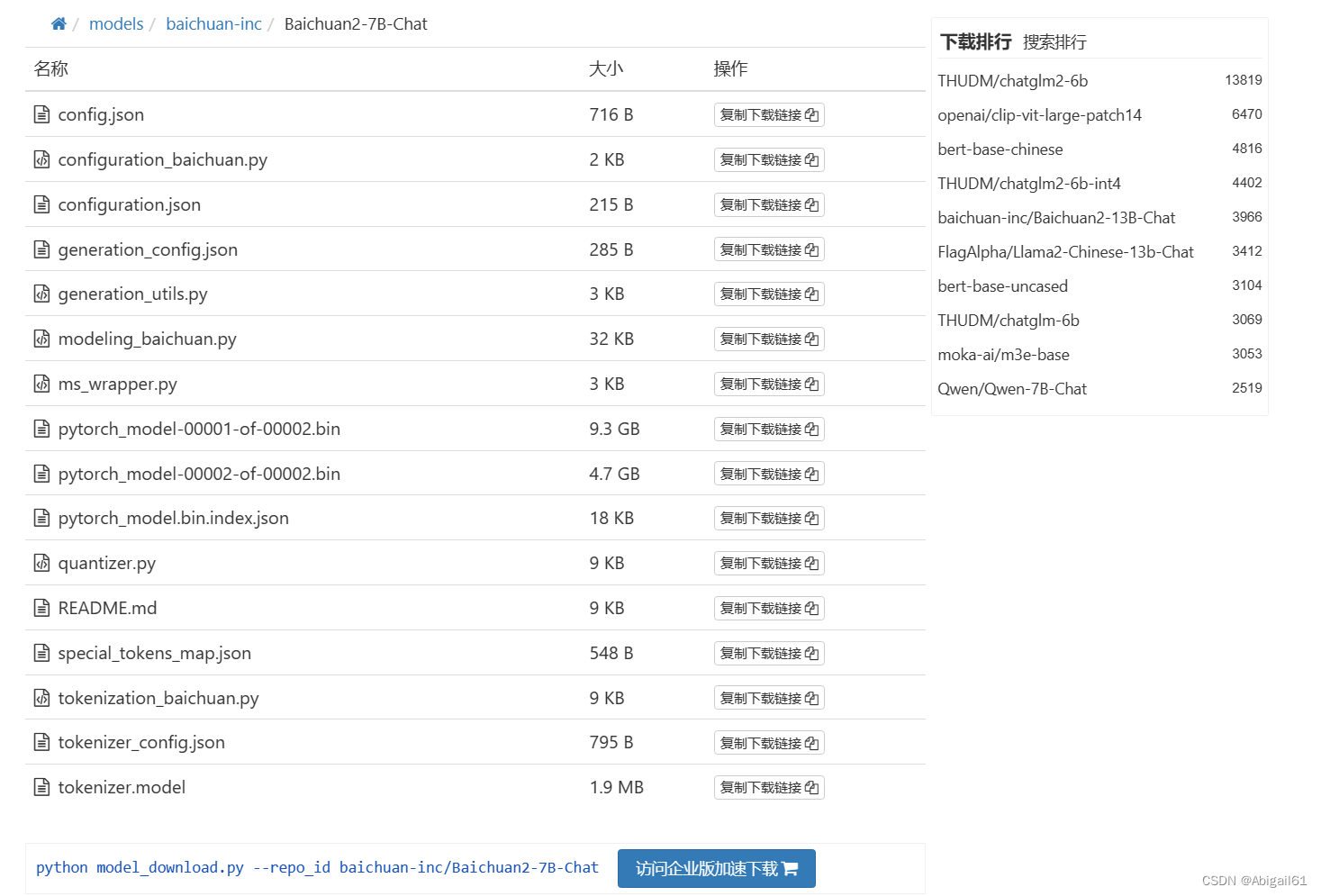

Download method 2: Use the downloader script

Go to the Hugging Face mirror website and download the model_download.py script shown in the picture into the model directory on the server.

Then run the code:

pip install huggingface_hub

python model_download.py --repo_id (model ID)

If you don’t know the model ID, search for the model name in the site's search bar, for example baichuan2-7B-Chat.

As shown in the figure, the site then gives the corresponding download command: python model_download.py --repo_id baichuan-inc/Baichuan2-7B-Chat

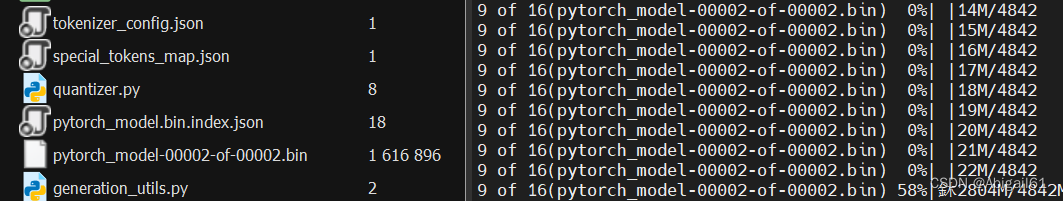

This downloads the Hugging Face model directly onto the server and shows a progress bar. The speed is about 2 MB/s.
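The same kind of download can also be sketched directly in Python with the huggingface_hub package installed above. This is a minimal sketch and not the contents of model_download.py. It assumes the snapshot_download function from huggingface_hub and the HF_ENDPOINT environment variable, which huggingface_hub reads to decide which site to contact. The names local_dir_for and download_model, the models folder, and the endpoint argument are hypothetical and chosen for illustration. Supply the mirror's address yourself.

```python
import os
from pathlib import Path
from typing import Optional


def local_dir_for(repo_id: str, root: str = "models") -> Path:
    """Map a repo ID like 'owner/name' to a local folder under root."""
    owner, _, name = repo_id.partition("/")
    if not owner or not name:
        raise ValueError(f"expected 'owner/name', got {repo_id!r}")
    return Path(root) / f"{owner}--{name}"


def download_model(repo_id: str, root: str = "models",
                   endpoint: Optional[str] = None) -> str:
    """Download every file of repo_id and return the local folder path."""
    if endpoint:
        # Assumption: HF_ENDPOINT must be set before huggingface_hub is
        # first imported, because the library reads it at import time.
        os.environ["HF_ENDPOINT"] = endpoint
    from huggingface_hub import snapshot_download

    target = local_dir_for(repo_id, root)
    target.mkdir(parents=True, exist_ok=True)
    # snapshot_download fetches the whole repository into local_dir.
    return snapshot_download(repo_id=repo_id, local_dir=str(target))
```

For example, download_model("baichuan-inc/Baichuan2-7B-Chat") would place the files under models/baichuan-inc--Baichuan2-7B-Chat. The returned path can be used as model_dir.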

Tips

Downloading a model usually takes a long time. Remember to run the download inside a tmux window, so that it is not interrupted if your computer goes to sleep and the connection drops.

It doesn’t matter if you forgot to open a tmux window. Press ctrl-z to pause the task, then open tmux and re-run the download command python model_download.py --repo_id (model ID). The download then continues.
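Re-running by hand after each interruption can also be automated with a small retry loop. This is a minimal sketch, assuming the download continues where it stopped when it is started again, as described above. retry is a hypothetical helper introduced here, not part of model_download.py or huggingface_hub. It does not replace tmux: the loop itself still has to keep running on the server.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def retry(task: Callable[[], T], attempts: int = 5,
          wait_seconds: float = 30.0) -> T:
    """Call task() until it succeeds; re-raise the error of the last attempt."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(1, attempts + 1):
        try:
            return task()
        except Exception as exc:
            if attempt == attempts:
                raise  # out of attempts: surface the last error
            print(f"attempt {attempt} failed ({exc}); retrying in {wait_seconds}s")
            time.sleep(wait_seconds)
    raise AssertionError("unreachable")
```

Here task is any zero-argument callable that starts the download, for instance one that launches the download command with subprocess.run(..., check=True).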

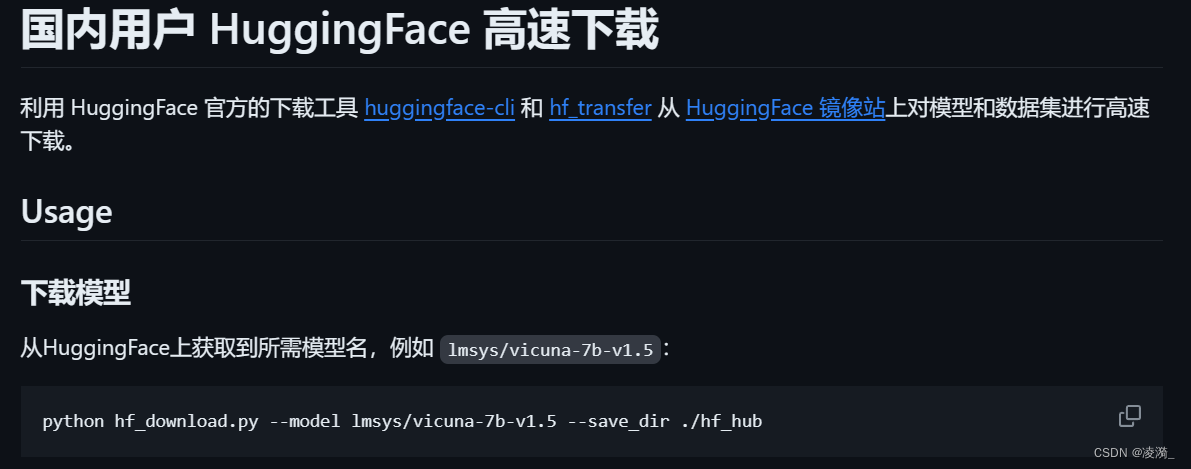

Download method 3: Use a GitHub script

With this project, you can download and load a model using only its name.

GitHub project link: https://github.com/LetheSec/HuggingFace-Download-Accelerator