Use Python to merge and deduplicate files on Hadoop

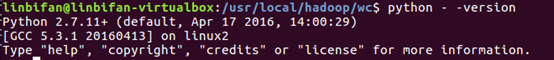

1. Check the Python environment
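The scripts in this walkthrough start with `#!/usr/bin/env python3` and use `print(..., end=' ')`, so they need Python 3. A minimal check, assuming it is run with the same `python3` interpreter the shebang will pick up, might look like this (`check_python` is a name chosen only for illustration):

```python
import sys

def check_python(min_version=(3, 0)):
    """Return True if the running interpreter is at least min_version."""
    return sys.version_info >= min_version

if __name__ == "__main__":
    # Show which interpreter is in use and whether it is new enough.
    print(sys.executable)
    print(sys.version)
    print("OK" if check_python() else "Python 3 is required")
```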

2. Write the mapper and reducer scripts

Work in the /usr/local/hadoop/wc folder (create it if it does not exist).

gedit mapper.py

mapper.py

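    #!/usr/bin/env python3
    # encoding=utf-8
    import sys

    # Maps each key to the sorted list of distinct values seen for it.
    lines = {}

    for line in sys.stdin:
        line = line.strip()
        if not line:
            continue  # skip blank lines, which cannot be split into two fields
        key, value = line.split()
        if key not in lines:
            lines[key] = []
        if value not in lines[key]:
            lines[key].append(value)
            lines[key] = sorted(lines[key])

    # Emit one line per key: the key followed by its values, space-separated.
    for key, value in lines.items():
        print(key, end=' ')
        for i in value:
            print(i, end=' ')
        print()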
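To see what the mapper emits without starting Hadoop, its logic can be wrapped in a function and fed a few lines by hand. The sketch below assumes the same two-column, whitespace-separated input as mapper.py; `map_lines` is a hypothetical helper name and `sample` is made-up test data.

```python
def map_lines(lines):
    """Group distinct values by key and return one output line per key."""
    grouped = {}
    for line in lines:
        line = line.strip()
        if not line:
            continue  # ignore blank lines
        key, value = line.split()
        values = grouped.setdefault(key, [])
        if value not in values:
            values.append(value)
    # Same layout as mapper.py, apart from the trailing space:
    # the key, then its sorted values, separated by spaces.
    return [' '.join([key] + sorted(values)) for key, values in grouped.items()]

sample = ["20150101 x", "20150101 x", "20150101 y", "20150102 y"]
for out in map_lines(sample):
    print(out)
# 20150101 x y
# 20150102 y
```

Note that a key with several values produces a single line carrying all of them, so whatever consumes this output has to read every field after the key, not only the first one.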

gedit reducer.py

reducer.py

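    #!/usr/bin/env python3
    # encoding=utf-8
    import sys

    # key is the current key; values holds the values already printed for it.
    key, values = None, []

    for line in sys.stdin:
        line = line.strip().split()
        if not line:
            continue  # skip blank lines
        if line[0] != key:
            # A new key starts: reset the list of values already seen.
            key = line[0]
            values = []
        # A mapper line may carry several values for one key, so check each.
        for value in line[1:]:
            if value not in values:
                print('%s\t%s' % (key, value))
                values.append(value)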
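The reducer resets `values` only when the key changes, so it depends on lines with the same key arriving next to each other, which Hadoop's sort step between map and reduce provides. The sketch below imitates that step locally with `sorted` and `itertools.groupby`. It is an alternative formulation for illustration, not the script above; `reduce_lines` is a hypothetical name and `mapper_output` is made-up data.

```python
from itertools import groupby

def reduce_lines(mapper_lines):
    """Sort mapper output, then emit each distinct (key, value) pair once."""
    rows = [line.split() for line in sorted(mapper_lines) if line.strip()]
    result = []
    # groupby only merges adjacent rows, hence the sort above.
    for key, group in groupby(rows, key=lambda row: row[0]):
        seen = []
        for row in group:
            for value in row[1:]:
                if value not in seen:
                    seen.append(value)
                    result.append('%s\t%s' % (key, value))
    return result

mapper_output = ["20150101 y", "20150102 y", "20150101 x", "20150102 y"]
for out in reduce_lines(mapper_output):
    print(out)
```

Without the sort, the two `20150101` lines would be separated by a `20150102` line and the grouping would treat them as two different runs.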

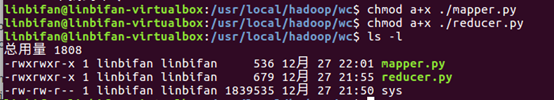

3. Set and check execute permissions

chmod a+x ./mapper.py

chmod a+x ./reducer.py
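The same permission change and check can also be done from Python, which may be convenient inside a setup script. This is a minimal sketch using the standard `os` and `stat` modules; `make_executable` is a hypothetical helper, not something the tutorial's scripts define.

```python
import os
import stat

def make_executable(path):
    """Add execute permission for user, group and others (like chmod a+x)."""
    mode = os.stat(path).st_mode
    os.chmod(path, mode | stat.S_IXUSR | stat.S_IXGRP | stat.S_IXOTH)
    # Return the permission string in the form `ls -l` shows, e.g. -rwxr-xr-x.
    return stat.filemode(os.stat(path).st_mode)
```

Calling `print(make_executable("./mapper.py"))` in the wc folder would set the bit and print the resulting permission string for that script.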

4. Run on Hadoop to merge and deduplicate the files

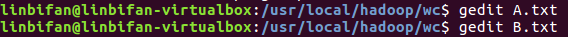

Create the input files A.txt and B.txt

gedit A.txt

    20150101 x
    20150102 y
    20150103 x
    20150104 y
    20150105 z
    20150106 x

gedit B.txt

    20150101 y
    20150102 y
    20150103 x
    20150104 z
    20150105 y
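For reference, the result the job should produce can be worked out locally by taking the union of the two files' lines. This is a plain-Python sketch using a set, not the MapReduce code; the two strings simply repeat the sample data above, and `merge_dedup` is a hypothetical helper name.

```python
a_txt = """20150101 x
20150102 y
20150103 x
20150104 y
20150105 z
20150106 x
"""

b_txt = """20150101 y
20150102 y
20150103 x
20150104 z
20150105 y
"""

def merge_dedup(*texts):
    """Return the sorted, distinct (key, value) pairs found in all texts."""
    pairs = set()
    for text in texts:
        for line in text.splitlines():
            if line.strip():
                pairs.add(tuple(line.split()))
    return sorted(pairs)

for key, value in merge_dedup(a_txt, b_txt):
    print(key, value)
# 20150101 x
# 20150101 y
# 20150102 y
# 20150103 x
# 20150104 y
# 20150104 z
# 20150105 y
# 20150105 z
# 20150106 x
```

The eleven input lines shrink to nine because `20150102 y` and `20150103 x` appear in both files.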

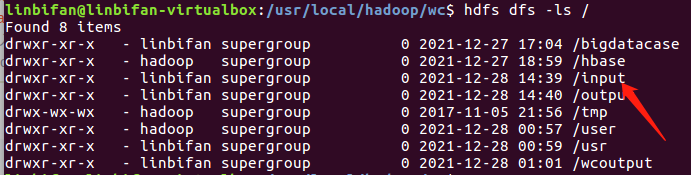

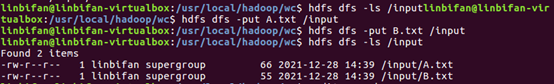

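Run the Python code on Hadoop

If the hdfs command is not found, add the Hadoop bin directory to PATH:

export PATH=$PATH:/usr/local/hadoop/bin

If the /input folder does not exist in HDFS, create it (for example with hdfs dfs -mkdir /input) and upload A.txt and B.txt to it (hdfs dfs -put) before running the job.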

    export STREAM=$HADOOP_HOME/share/hadoop/tools/lib/hadoop-streaming-*.jar

    hadoop jar $STREAM \
    -file /usr/local/hadoop/wc/mapper.py \
    -mapper /usr/local/hadoop/wc/mapper.py \
    -file /usr/local/hadoop/wc/reducer.py \
    -reducer /usr/local/hadoop/wc/reducer.py \
    -input /input/* \
    -output /output